The AI Jobs Debate: Jensen Huang Is Right, And Also Missing the Point

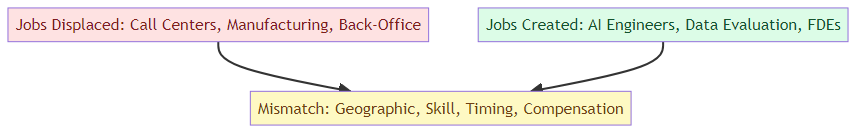

Jensen Huang says AI is creating an enormous number of jobs. He's not wrong. But the jobs being created are different from the jobs being displaced, and the transition is far messier than the 'AI creates jobs' framing suggests. Here's what the AI employment debate actually looks like from inside the industry.