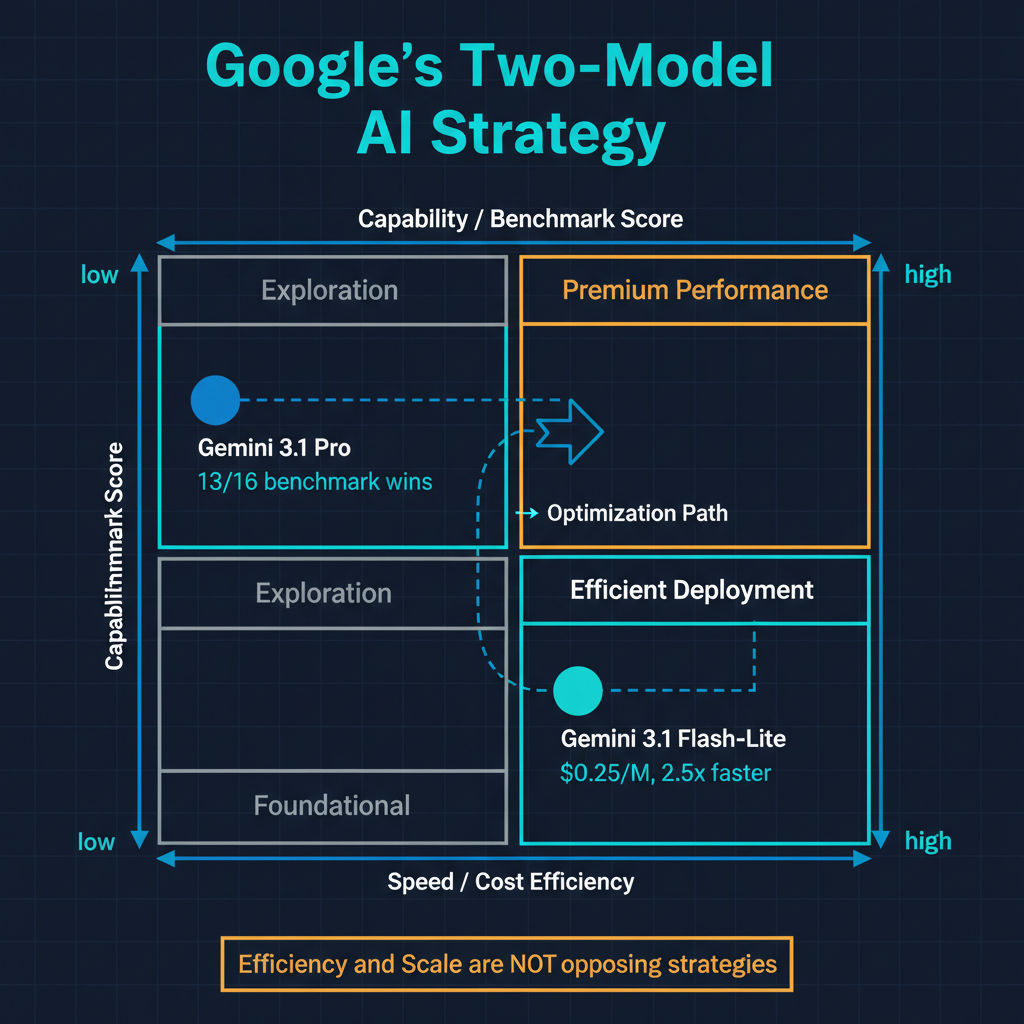

Google's latest model releases tell two stories simultaneously, and both of them matter. Gemini 3.1 Flash-Lite arrives with 2.5x faster response times, 45% faster output generation, and a price point of $0.25 per million input tokens -- making it one of the cheapest capable models available from any major provider. At the same time, Gemini 3.1 Pro now dominates 13 of 16 major benchmarks, establishing itself as a genuine frontier model competitor. Google is not choosing between efficiency and capability. It is pursuing both, and the strategic logic behind that choice reveals something important about where the AI industry is heading.

The Economics of Flash-Lite

At $0.25 per million input tokens, Gemini 3.1 Flash-Lite is priced to change the economics of AI deployment at scale. To put that number in perspective: processing a million tokens at this price costs roughly what a cup of coffee costs. For applications that handle high volumes of relatively straightforward tasks -- classification, extraction, summarization, routing -- this pricing makes AI inference a negligible line item rather than a significant cost driver.

The 2.5x latency improvement is equally significant, though for different reasons. In production systems, latency is often the binding constraint rather than cost. A model that costs twice as much but responds in half the time is frequently the better choice for user-facing applications, because user experience degrades nonlinearly with response time. Users who wait three seconds are mildly impatient. Users who wait eight seconds abandon the task. Flash-Lite's combination of low cost and low latency makes it viable for applications where both constraints matter simultaneously -- and that covers a large portion of production AI use cases.

I have been watching the inference cost curve closely, and the trend is striking. Two years ago, processing a million tokens through a capable model cost roughly $30-60 on the input side. Today, Flash-Lite offers comparable task performance for many use cases at less than 1% of that cost. This is not an incremental improvement. It is a structural shift that opens entirely new categories of AI applications that were economically infeasible at previous price points.

What Flash-Lite Is and Is Not

It is important to be precise about what Flash-Lite optimizes for. This is not a model designed for open-ended reasoning or tasks requiring deep analytical judgment. It is optimized for tasks where the reasoning requirements are moderate but the volume and latency requirements are high: classification, entity extraction, structured data transformation, intent routing, content moderation, and simple question answering from provided context.

The model makes deliberate tradeoffs to achieve its speed and cost profile. It has fewer parameters and a narrower capability envelope than Pro or Ultra-class models. This is not a limitation -- it is the point. Flash-Lite represents a different engineering philosophy: given a defined capability target, minimize the resources required to hit it. The historical parallel is the progression from mainframes to microprocessors. Microprocessors were worse at many tasks, but they were good enough at enough tasks, cheap enough, and fast enough to create entirely new computing paradigms. Flash-Lite is playing the same role -- not replacing frontier models, but enabling applications that frontier models are too expensive and too slow to serve.

Gemini 3.1 Pro: The Benchmark Sweep

While Flash-Lite addresses the efficiency frontier, Gemini 3.1 Pro's performance on 13 of 16 major benchmarks establishes Google's position at the capability frontier. This is notable because the benchmark landscape has become increasingly competitive. Anthropic's Claude Opus, OpenAI's GPT-5.4, and various open-source models have all been posting strong results. For any single model to lead on the majority of established benchmarks simultaneously is a significant achievement.

The specific benchmarks where Pro leads are worth examining. They span mathematical reasoning, code generation, multilingual understanding, scientific knowledge, and long-context comprehension. This breadth suggests that Pro's improvements are not the result of narrow optimization on specific benchmark tasks but reflect genuine advances in the model's general reasoning capabilities.

Google's advantage in training data and infrastructure should not be underestimated here. Google has access to the largest index of human knowledge ever assembled, combined with custom TPU hardware designed specifically for large-scale model training. The scale advantages that once seemed like they might be offset by architectural innovations from smaller labs have, at least for now, reasserted themselves.

The Two-Model Strategy

The simultaneous release of Flash-Lite and Pro is not coincidental -- it reflects a deliberate product strategy that I think other providers will increasingly adopt. The logic is straightforward: a single model cannot be optimal for all use cases, and trying to build one creates unnecessary tradeoffs.

In practice, most production AI systems should be running multiple models. The routing logic is simple in principle: tasks that require deep reasoning, complex analysis, or creative generation go to a frontier model like Pro. Tasks that require fast, cheap, reliable execution of well-defined operations go to an efficiency model like Flash-Lite. The savings from routing high-volume, low-complexity tasks to Flash-Lite can easily fund the higher per-query cost of using Pro for the tasks that actually need it.

I have implemented this pattern in several systems, and the economics are compelling. In a typical enterprise application, roughly 60-70% of queries are routine enough for an efficiency model, and 30-40% genuinely benefit from frontier capability. Routing the routine queries to a model priced at $0.25 per million tokens instead of $15-30 per million tokens reduces total inference costs by 40-50% without any degradation in output quality for the queries that matter most.

The challenge is building the routing logic correctly. A misrouted complex query that gets sent to Flash-Lite will produce a noticeably worse result. A misrouted simple query that gets sent to Pro wastes money and adds latency. The routing layer itself becomes a critical component that needs its own evaluation and optimization pipeline. This is an emerging area of AI engineering that does not get enough attention.

Efficiency as a Strategic Moat

Google's investment in efficiency-class models reveals a strategic insight that I think is underappreciated: in a market where frontier capability is increasingly commoditized, efficiency becomes a differentiator. When multiple providers offer models within a few percentage points of each other on benchmarks, the model that delivers comparable performance at lower cost and lower latency wins the volume game.

The volume game matters because AI inference is becoming infrastructure -- something that runs continuously in the background rather than being invoked occasionally for high-value tasks. Google's TPU infrastructure, purpose-built for AI workloads, gives them a structural cost advantage in this race. This dynamic also has implications for the open-source ecosystem, which may find its strongest competitive position not in matching frontier capability but in delivering efficiency-class performance at even lower marginal costs on self-hosted hardware.

What This Means for Practitioners

For AI practitioners and teams building production systems, the Gemini 3.1 release reinforces several practical recommendations.

First, model selection should be a portfolio decision, not a vendor loyalty decision. The right model for classification is not the right model for analysis, and treating all queries the same wastes resources.

Second, inference cost and latency should be treated as first-class engineering requirements, not afterthoughts. The difference between $0.25 and $15 per million tokens is the difference between AI being a line item and AI being a budget category.

Third, efficiency-class models are now good enough for a majority of production tasks. The instinct to use the most powerful available model for everything is understandable but increasingly indefensible from an engineering perspective.

The AI industry spent its first phase proving that large language models could do remarkable things. The next phase is about making those remarkable things affordable, fast, and reliable enough to run at infrastructure scale. Google's dual bet on Flash-Lite and Pro suggests they understand this transition better than most. The question for the rest of the industry is how quickly they follow.