If you are building AI products in 2026, the regulatory environment you operate in depends on where your customers are, where your company is incorporated, and which AI capabilities you deploy. There is no unified global framework and no single set of rules. Instead, AI companies must simultaneously navigate a patchwork of overlapping, sometimes contradictory, and rapidly evolving regulations.

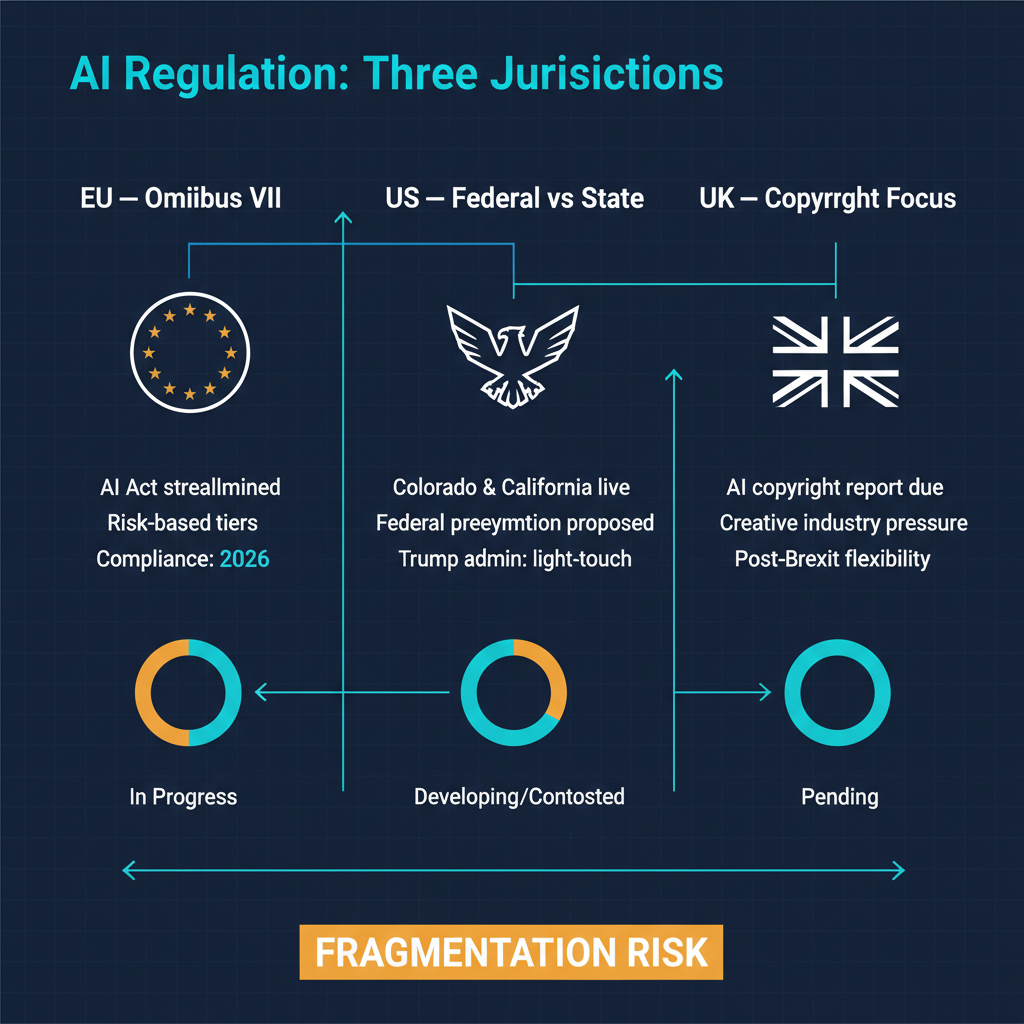

This month has brought several developments that sharpen the picture — and the challenges — of global AI regulation. The EU Council agreed its position on Omnibus VII, a simplification package for the AI Act. In the United States, Colorado's AI Act and California's AI Transparency Act have both taken effect. The Trump administration has signaled its intent to preempt state AI laws with a federal executive order. And the United Kingdom is due to publish its long-awaited AI copyright reports by March 18.

Each of these developments deserves attention. Together, they illustrate a regulatory landscape that is becoming more complex, not less.

The EU AI Act: Simplification or Retreat?

The EU AI Act, which entered into force in August 2024 with a phased implementation timeline, is the most comprehensive AI regulation in the world. It classifies AI systems by risk level — unacceptable, high, limited, and minimal — and imposes corresponding obligations on developers and deployers.
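For engineering teams, the four tiers are easiest to reason about as an ordered scale. Here is a minimal Python sketch of that idea. The `RiskTier` enum, the one-line summaries, and the `strictest_tier` helper are my own illustrative names and simplifications, not terms or tests taken from the Act, and treating a product according to its riskiest capability is an assumption made for the example.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """The AI Act's four risk levels, ordered from lightest to heaviest."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Simplified one-line summaries for illustration only, not legal text.
SUMMARY = {
    RiskTier.MINIMAL: "no tier-specific obligations",
    RiskTier.LIMITED: "transparency obligations",
    RiskTier.HIGH: "the most significant compliance requirements",
    RiskTier.UNACCEPTABLE: "prohibited",
}


def strictest_tier(capability_tiers):
    """Assumption: a product is treated according to its riskiest capability."""
    return max(capability_tiers)


# Hypothetical product with two capabilities already assigned to tiers.
product_tier = strictest_tier([RiskTier.LIMITED, RiskTier.HIGH])
print(product_tier.name, "->", SUMMARY[product_tier])
```

The hard part in practice is not the scale itself but deciding which tier a given capability belongs in, which is exactly where the missing guidance hurts.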

The challenge has been implementation. The European Commission missed its February deadline for publishing guidance on what constitutes a "high-risk" AI system — a classification that triggers the most significant compliance requirements. Without clear guidance, companies building AI systems for use in the EU have been operating in a gray zone, uncertain whether their products fall within scope and what specific obligations apply.

The Omnibus VII package, on which the EU Council agreed its position this month, is explicitly designed to address this problem. Framed as a simplification initiative, Omnibus VII aims to reduce compliance burden, streamline reporting requirements, and provide clearer criteria for risk classification. The stated goal is to make the AI Act more workable for companies — particularly SMEs — without weakening its core protections.

I am cautiously supportive of this direction but want to flag a concern. There is a meaningful difference between simplifying regulation and diluting it. The AI Act's strength is its risk-based approach and its willingness to impose real obligations on high-risk AI systems. If Omnibus VII simplifies the compliance process while maintaining the substantive requirements, that is good governance. If it narrows the definition of "high-risk" in ways that exempt AI systems that pose genuine risks, it is a retreat dressed up as streamlining.

The final text, which will emerge from trilogue negotiations between the Council, Commission, and Parliament, will determine which of these outcomes prevails. AI companies operating in the EU should be tracking this closely.

The United States: State Laws Fill the Federal Vacuum

The United States has no comprehensive federal AI regulation. This is not an oversight — it reflects a deliberate policy choice by both the previous and current administrations, which have favored sector-specific guidance and voluntary commitments over horizontal AI legislation.

In the absence of federal action, states have stepped in. Two state-level AI laws that took effect recently illustrate the range of approaches.

Colorado's AI Act focuses on algorithmic discrimination. It requires deployers of high-risk AI systems — defined as systems that make or substantially contribute to consequential decisions about consumers in areas like employment, housing, insurance, and credit — to conduct impact assessments, disclose AI use to affected consumers, and implement risk management practices. The law places obligations on both developers and deployers, creating a shared responsibility framework.

California's AI Transparency Act takes a different approach, focusing on disclosure. It requires operators of generative AI systems to label AI-generated content, provide mechanisms for users to report concerns, and maintain records of AI system capabilities and limitations. The emphasis is on transparency rather than risk management — ensuring that people know when they are interacting with AI and have recourse if something goes wrong.

These two laws are not contradictory, but they are different in philosophy and scope. A company that complies with Colorado's risk assessment requirements is not automatically compliant with California's transparency requirements, and vice versa. Multiply this by the growing number of states considering their own AI legislation, and the compliance landscape for AI companies operating nationally becomes genuinely complex.
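To make the "not automatically compliant" point concrete, here is a rough Python sketch that treats each state's requirements as a set and computes what is still missing. The obligation labels are hypothetical tags paraphrased from my descriptions above, not statutory language, and `compliance_gaps` is an illustrative helper rather than a real compliance tool.

```python
from __future__ import annotations

# Hypothetical obligation labels paraphrased from the descriptions above;
# they are illustrative tags, not statutory language.
OBLIGATIONS = {
    "colorado": {
        "impact_assessment",
        "consumer_disclosure_of_ai_use",
        "risk_management_practices",
    },
    "california": {
        "label_ai_generated_content",
        "user_reporting_mechanism",
        "capability_and_limitation_records",
    },
}


def compliance_gaps(implemented: set[str], jurisdictions: list[str]) -> dict[str, set[str]]:
    """Return, per jurisdiction, the obligations not yet covered."""
    return {j: OBLIGATIONS[j] - implemented for j in jurisdictions}


# A company that has done everything on the Colorado list and nothing else.
implemented = set(OBLIGATIONS["colorado"])
gaps = compliance_gaps(implemented, ["colorado", "california"])

for jurisdiction, missing in gaps.items():
    print(jurisdiction, sorted(missing))
```

In this toy model the Colorado gap is empty while every California item is still outstanding, because the two lists share no entries. Real obligations overlap in messier ways, but the shape of the problem is the same: each new state adds another set to reconcile.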

The Federal Preemption Question

The Trump administration has signaled its intent to address this fragmentation through an executive order that would preempt state-level AI regulation in favor of a unified federal framework. The administration's stated rationale is that a patchwork of state laws creates uncertainty and compliance costs that hinder American AI innovation and competitiveness.

There is merit to the efficiency argument. A single federal standard is genuinely easier for companies to comply with than fifty potentially different state standards. The fragmentation concern is real and shared by companies across the AI industry.

But preemption raises its own concerns. The first is the mechanism. Preempting state law by executive order, rather than by federal legislation, raises significant legal questions. State laws enacted by state legislatures have democratic legitimacy, and overriding them by executive action, without Congress passing a replacement federal law, creates a governance gap: the states' AI laws would be preempted, but no federal law would take their place.

Second, the substance of the federal framework matters as much as its existence. If federal preemption replaces state laws with a robust federal standard that addresses algorithmic discrimination, transparency, and accountability, that is a net improvement. If it replaces them with voluntary guidelines or a light-touch approach that lacks enforcement mechanisms, it effectively deregulates AI at the moment when the technology's societal impact is accelerating.

I think the right answer is federal legislation, meaning actual law passed by Congress, that establishes baseline requirements for AI systems while allowing states to go further in specific areas where local conditions warrant it. In other words, a federal floor rather than a ceiling. This is the approach the US has used successfully in environmental regulation and consumer protection. But getting AI legislation through Congress requires bipartisan agreement on a technically complex topic, and there is no indication that such agreement is imminent.

The UK Copyright Question

The United Kingdom's approach to AI regulation has been markedly different from both the EU and the US. Rather than comprehensive legislation, the UK has pursued a sector-specific, principles-based approach through existing regulatory bodies. The Information Commissioner's Office handles AI and data protection. The Competition and Markets Authority addresses AI competition concerns. The Financial Conduct Authority oversees AI in financial services.

The most consequential UK AI policy question right now is copyright. The UK government is due to publish reports by March 18 that will address the legal status of AI training on copyrighted material. This is not an academic exercise. The outcome will determine whether AI companies can train models on copyrighted works under existing copyright exceptions (UK law relies on fair dealing and a narrow text and data mining exception rather than US-style fair use), whether they need explicit licenses from copyright holders, and what compensation mechanisms (if any) apply.

The copyright question is globally significant because it affects every foundation model company. If the UK adopts a strict licensing requirement, it creates a precedent that other jurisdictions may follow. If it adopts a permissive approach, it provides legal cover for the current training practices of most AI companies. The creative industries — music, publishing, visual arts, journalism — are watching this closely, as the outcome will determine whether they receive any compensation for the use of their work in training AI systems.

The Fragmentation Problem

Standing back from the specific developments, the pattern that concerns me most is fragmentation. The EU has comprehensive horizontal regulation with implementation challenges. The US has no federal framework and growing state-level fragmentation. The UK is pursuing a sector-specific approach. China has its own regulatory framework focused on content control and algorithmic management. India, Japan, South Korea, Brazil, and others are all developing their own approaches.

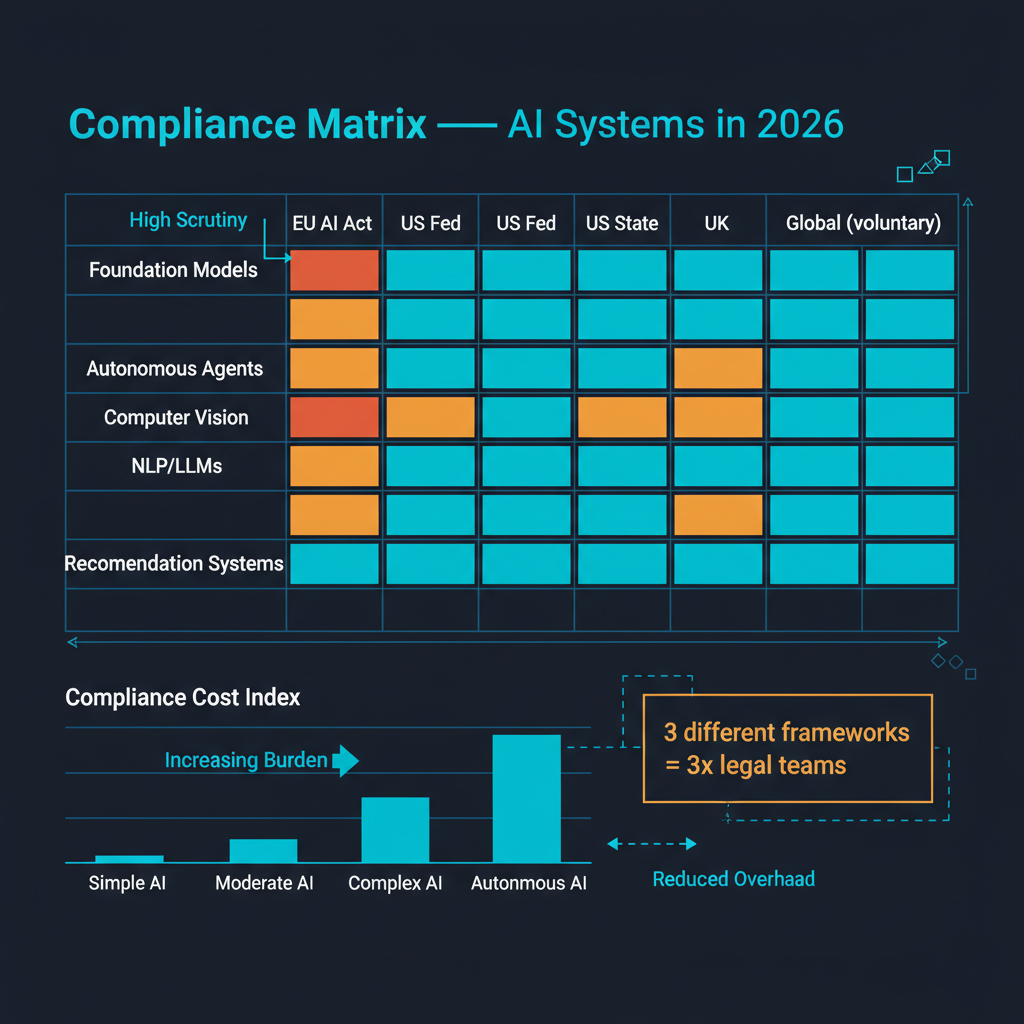

For AI companies operating globally — which includes most significant AI companies — this means simultaneous compliance with multiple, sometimes contradictory, regulatory frameworks. The EU AI Act's risk classification system does not map cleanly onto Colorado's definition of high-risk AI systems. California's transparency requirements differ from the EU's. The UK copyright position may conflict with the EU's approach under the Copyright Directive.

This fragmentation is not merely a compliance cost. It shapes what AI products get built and for whom. If compliance requirements are sufficiently different across jurisdictions, companies will build different products for different markets, or avoid certain markets altogether. The highest-compliance markets may get the safest AI products. They may also get fewer AI products, deployed more slowly, by fewer companies.

Where I Come Down

I believe AI regulation is necessary, appropriate, and overdue. The technology is powerful enough to cause real harm when deployed without adequate safeguards, and voluntary commitments from AI companies are insufficient as the sole governance mechanism.

But the current trajectory — regulatory fragmentation across jurisdictions, with each major economy pursuing its own approach — is producing a compliance landscape that is complex for large companies and potentially prohibitive for smaller ones. The AI companies with the resources to maintain compliance teams in every jurisdiction will navigate this. The startups that cannot will either limit their geographic reach or take compliance risks.

The solution is not less regulation. It is more coordination. Mutual recognition agreements between regulatory regimes, harmonized risk classification frameworks, and international standards for AI transparency and accountability would preserve the substantive protections that regulators are rightly pursuing while reducing the fragmentation that undermines innovation. Whether the political will exists for such coordination is another question entirely.