Navigating the AI Regulation Maze: A Global Governance Overview for 2025

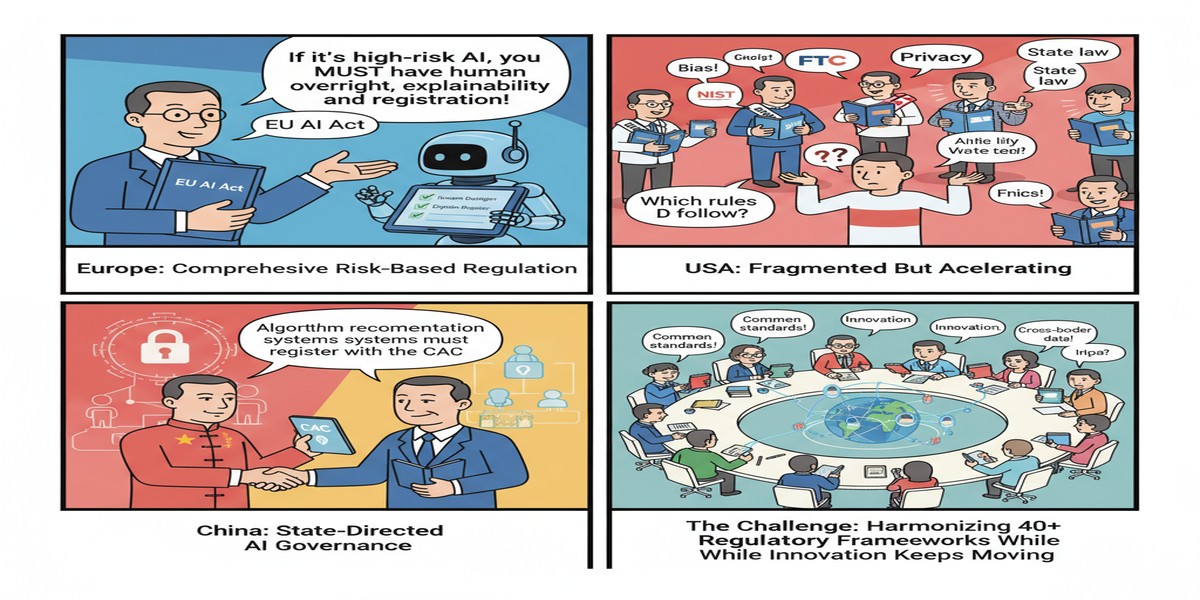

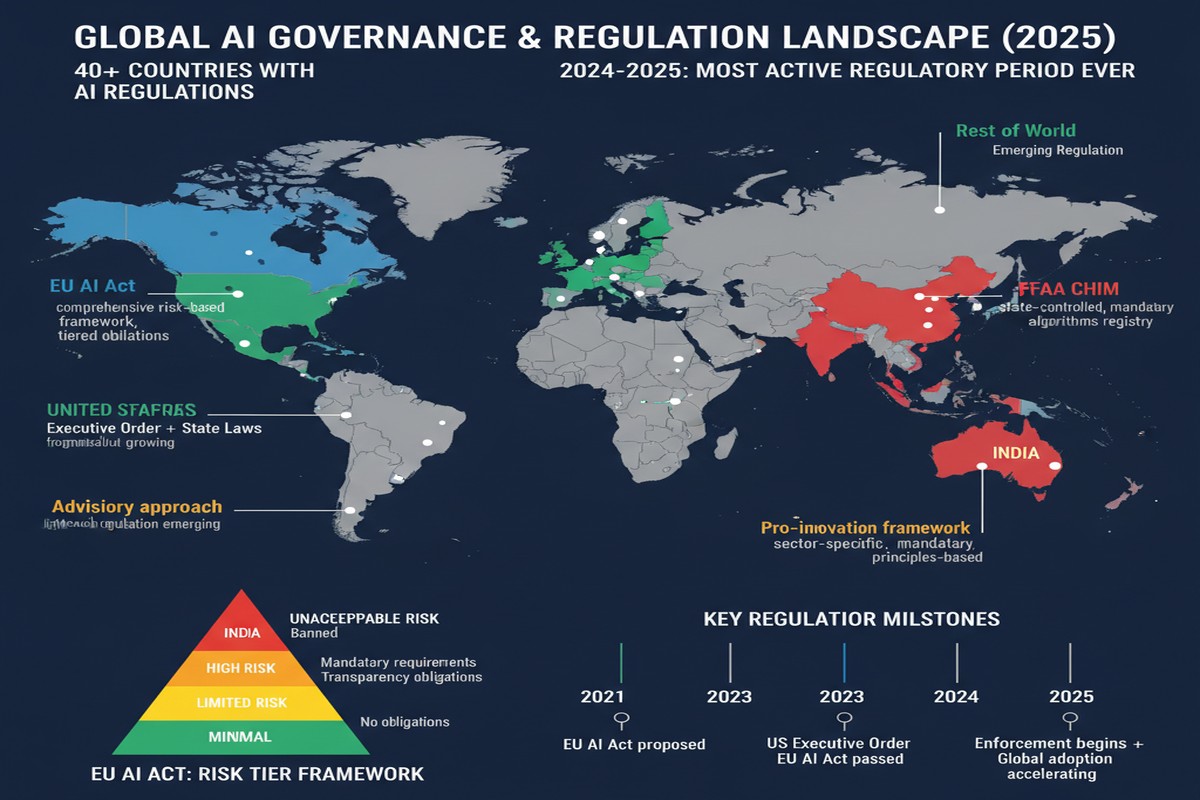

If you're building AI systems in 2025, you are operating in a regulatory landscape that didn't exist three years ago and is changing faster than most compliance teams can track. The EU AI Act's first obligations already apply. India's DPDP Act has teeth. The US has executive orders, state legislation, and proposed federal frameworks. China has generative AI regulations. Each jurisdiction has its own risk categories, compliance requirements, and enforcement mechanisms.

"Global AI Governance Overview: Understanding Regulatory Requirements Across Global Jurisdictions" (arXiv: 2512.02046, Dec 2025) provides the most comprehensive cross-jurisdictional analysis of AI governance frameworks published to date. For AI practitioners and organizations deploying AI systems, this is required reading.

The Regulatory Landscape: Five Major Frameworks

```mermaid
graph TD
    subgraph EU["EU - Risk-Based Approach"]
        A["EU AI Act<br>In force Aug 2024"]
        A --> A1["Prohibited AI: certain biometric surveillance, social scoring"]
        A --> A2["High-Risk: education, employment, healthcare, law enforcement"]
        A --> A3["GPAI Models: transparency + copyright compliance"]
    end
    subgraph US["US - Sectoral + Executive"]
        B["Executive Order 14110<br>Oct 2023, revoked Jan 2025"]
        B --> B1["NIST AI RMF adoption"]
        B --> B2["Sector-specific guidance"]
        B --> B3["State legislation patchwork"]
    end
    subgraph CN["China - Content + Security Focus"]
        C["Generative AI Measures<br>Aug 2023"]
        C --> C1["Content safety review"]
        C --> C2["Algorithm registration"]
        C --> C3["Security assessment for frontier models"]
    end
    subgraph IN["India - Data Privacy Focus"]
        D["DPDP Act 2023<br>Rules 2025"]
        D --> D1["Consent for personal data in AI"]
        D --> D2["Data localization provisions"]
        D --> D3["Significant data fiduciaries"]
    end
    subgraph UK["UK - Principles-Based"]
        E["AI Security Institute (formerly AI Safety Institute)<br>Sector-specific guidance"]
        E --> E1["Foundation model evaluation"]
        E --> E2["Voluntary safety commitments"]
    end
```

The EU AI Act: The World's Most Comprehensive Framework

The EU AI Act entered into force in August 2024, with provisions applying in stages through August 2027; most obligations apply from August 2026. As of August 2025, approximately 28.3% of provisions are active, including:

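General-Purpose AI (GPAI) Model Obligations: The most relevant provisions for frontier AI developers, applying since August 2025. All GPAI providers must maintain technical documentation (covering training methodology, data sources, evaluation, and known or estimated energy consumption), publish a summary of training content, and adopt a copyright policy. A model trained with more than 10^25 FLOPs of cumulative compute is presumed to pose "systemic risk" and faces additional obligations:

- Model evaluation, including adversarial testing

- Assessment and mitigation of systemic risks

- Cybersecurity protection

- Serious-incident reporting to the AI Office and, where appropriate, national authorities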
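The 10^25 FLOP threshold is easier to reason about with a rough compute estimate. The sketch below assumes the common "6 × parameters × training tokens" rule of thumb for dense transformer training; that heuristic is not part of the Act or the paper, and the model sizes are hypothetical. The Act also lets the Commission designate models as systemic-risk on other criteria, so an estimate below the line is not a safe harbor by itself.

```python
# Presumption threshold for "systemic risk" GPAI models (cumulative training compute).
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough dense-transformer estimate: about 6 FLOPs per parameter per training token.

    Assumption for illustration only; real compute accounting (fine-tuning,
    mixture-of-experts, synthetic data generation) can differ substantially.
    """
    return 6.0 * n_params * n_tokens


def presumed_systemic_risk(n_params: float, n_tokens: float) -> bool:
    """True if the estimated compute exceeds the 10^25 FLOP presumption threshold."""
    return estimate_training_flops(n_params, n_tokens) > SYSTEMIC_RISK_FLOP_THRESHOLD


if __name__ == "__main__":
    # Hypothetical models: (parameters, training tokens)
    examples = {
        "7B params, 2T tokens": (7e9, 2e12),
        "70B params, 15T tokens": (70e9, 15e12),
        "400B params, 15T tokens": (400e9, 15e12),
    }
    for name, (n, d) in examples.items():
        flops = estimate_training_flops(n, d)
        print(f"{name}: {flops:.2e} FLOPs, presumed systemic risk: {presumed_systemic_risk(n, d)}")
```

Under this heuristic, a 70B-parameter model trained on 15T tokens lands near 6.3 × 10^24 FLOPs, below the presumption threshold, while 400B parameters on the same data comes out around 3.6 × 10^25.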

The GPAI Code of Practice, a voluntary framework drafted by independent experts through a multi-stakeholder process, was published in July 2025 and gives providers one way to demonstrate compliance with these obligations. Major labs including Google, Anthropic, and Mistral have signed it.

High-Risk AI Systems: The category most likely to catch enterprise AI deployments. High-risk systems include AI used in:

- Employment (CV screening, performance monitoring)

- Education (student assessment, admissions)

- Healthcare (medical devices, clinical decision support)

- Law enforcement (risk assessment, biometric identification)

- Critical infrastructure (power grid management, water systems)

High-risk systems require conformity assessments, registration in the EU AI database, human oversight provisions, and ongoing monitoring.
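The tiering can be sketched as a lookup, assuming a hypothetical labeling scheme in which each system is tagged with a use case and a deployment domain. This is a deliberate oversimplification: real classification turns on the Act's annexes and exemptions and needs legal review, and only the domains listed in this post are encoded.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    STANDARD = "standard"


# Hypothetical labels; real classification requires legal review of the Act's annexes.
PROHIBITED_USES = {"social_scoring"}
HIGH_RISK_DOMAINS = {
    "employment",               # e.g. CV screening, performance monitoring
    "education",                # e.g. student assessment, admissions
    "healthcare",               # e.g. medical devices, clinical decision support
    "law_enforcement",          # e.g. risk assessment, biometric identification
    "critical_infrastructure",  # e.g. power grid management, water systems
}


def classify(use_case: str, domain: str) -> RiskTier:
    """Check prohibited uses first, then high-risk domains; everything else is standard."""
    if use_case in PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if domain in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH_RISK
    return RiskTier.STANDARD


def obligations(tier: RiskTier) -> list[str]:
    """Obligations named in this post for each tier (not an exhaustive legal list)."""
    if tier is RiskTier.PROHIBITED:
        raise ValueError("prohibited use case: do not deploy")
    if tier is RiskTier.HIGH_RISK:
        return ["conformity assessment", "EU database registration",
                "human oversight", "ongoing monitoring"]
    return ["transparency requirements", "basic documentation"]
```

Checking prohibited uses before domains matters: a social-scoring system deployed in, say, education should be blocked, not merely classified as high-risk.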

The Accountability Paradox: This is one of the paper's most important observations. Layered regulation means a single violation can simultaneously implicate GDPR (data privacy), the AI Act (AI-specific rules), and product liability law, each enforced by different authorities. This fragmentation creates legal uncertainty about which authority has jurisdiction in cross-cutting cases.

The US Approach: Fragmented but Real

The US doesn't have a comprehensive federal AI law (as of this writing). What exists:

- NIST AI Risk Management Framework: Voluntary but increasingly referenced by regulators as the standard for responsible AI deployment

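- Executive Order 14110 (Biden, Oct 2023): Required developers of the most capable dual-use foundation models to report safety evaluation results to the federal government and directed agencies to expand immigration pathways for AI talent; it was revoked in January 2025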

- State legislation: California SB-1047 (vetoed), Colorado's AI Act, Texas AI framework — a patchwork creating significant compliance complexity for national deployments

- Sectoral regulation: FDA guidance for AI medical devices, CFPB guidance on AI in consumer financial services, EEOC guidance on AI in hiring

The analysis notes that the US approach is "sectoral and principles-based" — meaning enforcement happens through existing sector regulators applying AI-specific guidance, not through a dedicated AI regulator. This creates faster iteration but less legal certainty.

China: Mandatory Registration and Content Safety

China's regulatory approach focuses on:

- Algorithm recommendation regulation (2022): Requires registration of recommendation algorithms

- Deep synthesis regulation (2022): Covers deepfakes and synthetic media

- Generative AI measures (2023): Content safety review, training data requirements, security assessments for frontier models

The paper notes that China's requirements are most restrictive for content-facing applications targeting Chinese users — less so for enterprise/infrastructure AI. But the frontier model security assessment requirement is significant: models considered to pose "significant security risks" require government approval before public deployment.

India's DPDP Act: Data Sovereignty Meets AI

India's Digital Personal Data Protection Act (2023) with its 2025 implementing rules has direct implications for AI:

- Consent requirements: Processing personal data (including for AI training) requires valid consent from data principals, unless the processing falls under one of the Act's specified "legitimate uses"

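- Significant Data Fiduciary obligations: Organizations designated as Significant Data Fiduciaries (based on factors such as the volume and sensitivity of the data they process) have enhanced obligations, including data protection impact assessments and periodic audits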

- Cross-border transfer: Restrictions on transferring Indian user data to certain jurisdictions — directly affecting cloud AI services

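For AI companies serving Indian users, DPDP compliance can mean data localization (keeping Indian user data in India) or privacy-preserving approaches such as federated learning that process data without centralizing it, depending on how transfer restrictions are notified and whether the company is designated a Significant Data Fiduciary.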
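For teams assembling training data that includes Indian users' personal data, the consent requirement translates naturally into a filter at ingestion time. A minimal sketch, assuming a hypothetical record schema that stores the purposes each data principal consented to and whether consent was later withdrawn. The Act's legitimate-use grounds are not modeled, and the restricted-destination set is passed in because it depends on government notification.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """Hypothetical training-data record; the field names are illustrative."""
    principal_id: str
    text: str
    consented_purposes: set[str] = field(default_factory=set)
    consent_withdrawn: bool = False


def eligible_for_training(rec: Record, purpose: str = "model_training") -> bool:
    """Keep a record only if consent covers this purpose and has not been withdrawn.
    Other lawful grounds (the Act's "legitimate uses") are not modeled here."""
    return not rec.consent_withdrawn and purpose in rec.consented_purposes


def filter_training_set(records: list[Record], purpose: str = "model_training") -> list[Record]:
    """Drop every record that is not eligible for the given purpose."""
    return [r for r in records if eligible_for_training(r, purpose)]


def transfer_allowed(destination: str, restricted: set[str]) -> bool:
    """Cross-border check against a caller-supplied restricted-destination set,
    since which countries (if any) are restricted depends on government notification."""
    return destination not in restricted
```

Binding consent to a specific purpose, rather than storing a single yes/no flag, is the design choice that matters here: consent given for analytics does not carry over to model training.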

The Compliance Architecture: What Organizations Actually Need

Based on the paper's analysis, organizations deploying AI across jurisdictions need:

```mermaid
flowchart TD
    A[AI System] --> B{Risk Classification}
    B -->|Prohibited use case| C["Don't deploy"]
    B -->|High-risk EU| D[Conformity Assessment<br>Technical Documentation<br>Human Oversight<br>Registration]
    B -->|GPAI Systemic| E[GPAI Obligations<br>Safety Documentation<br>Cybersecurity<br>Energy Reporting]
    B -->|Standard risk| F[Transparency Requirements<br>Basic Documentation]
    D --> G[EU Compliance]
    E --> G
    F --> G
    G --> H{US Deployment?}
    H -->|Yes| I[NIST RMF alignment<br>Sector-specific guidance]
    H -->|No| J[EU-only compliance]
    I --> K{India deployment?}
    K -->|Yes| L[DPDP consent framework<br>Data localization assessment]
    style C fill:#ef4444,color:#fff
    style G fill:#059669,color:#fff
```
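The same routing can be expressed as a small function, which makes it testable and easy to extend. This sketch copies the obligation bundles from the diagram's node labels; the risk-class keys and two-letter region codes are hypothetical conventions. One deliberate difference: the diagram only asks about India after the US branch, whereas this version checks each region independently, and every non-prohibited class gets an EU baseline.

```python
# Obligation bundles copied from the flowchart's node labels.
EU_OBLIGATIONS = {
    "high_risk": ["conformity assessment", "technical documentation",
                  "human oversight", "registration"],
    "gpai_systemic": ["GPAI obligations", "safety documentation",
                      "cybersecurity", "energy reporting"],
    "standard": ["transparency requirements", "basic documentation"],
}

# Hypothetical region codes mapped to the extra obligations in the diagram.
REGIONAL_OBLIGATIONS = {
    "US": ["NIST AI RMF alignment", "sector-specific guidance"],
    "IN": ["DPDP consent framework", "data localization assessment"],
}


def compliance_plan(risk_class: str, regions: set[str]) -> list[str]:
    """Return the EU baseline for the risk class plus extras for each deployment region."""
    if risk_class == "prohibited":
        raise ValueError("prohibited use case: do not deploy")
    if risk_class not in EU_OBLIGATIONS:
        raise ValueError(f"unknown risk class: {risk_class}")
    plan = list(EU_OBLIGATIONS[risk_class])
    for region in sorted(regions):  # sorted for a deterministic order
        plan.extend(REGIONAL_OBLIGATIONS.get(region, []))
    return plan
```

Regions without an entry simply add nothing, so new jurisdictions can be added as data rather than as new branches.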

Documentation: Every framework covered here expects technical documentation of AI systems. The depth varies (the EU mandates extensive documentation for high-risk systems; in the US, NIST AI RMF alignment is voluntary but increasingly expected), but the common core is the same: what the system does, what data it was trained on, how it is evaluated, and what risks it poses.
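One way to make that common core concrete is a structured record that reports which sections are still blank. The class and field names below are hypothetical and cover only the four questions above, not any jurisdiction's full documentation template.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SystemDocumentation:
    """Hypothetical record for the common documentation core: what the system does,
    what data trained it, how it is evaluated, and what risks it poses."""
    system_name: str
    intended_purpose: str = ""
    training_data_sources: list[str] = field(default_factory=list)
    evaluation_methodology: str = ""
    known_risks: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of fields that are still empty, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]
```

Running `missing_fields()` in CI, and failing the build when it is non-empty, is one cheap way to keep documentation from lagging behind the model.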

Human oversight: High-risk AI systems under the EU AI Act require meaningful human oversight — not just a nominal "human in the loop" but genuine capacity for humans to override, correct, and shut down the system.
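In code, meaningful oversight means the human path has real authority over the output and an off switch. A minimal sketch, assuming hypothetical `model` and `reviewer` callables: the reviewer sees each proposal and may replace it, every decision is logged with both the proposal and the final outcome, and `halt()` blocks all further automated decisions. Whether a particular design satisfies the Act is a legal question this sketch does not answer.

```python
from typing import Callable, Optional

# Hypothetical signatures: a model maps a case to a proposed decision; a reviewer
# sees the case and the proposal and returns a correction, or None to accept it.
Model = Callable[[str], str]
Reviewer = Callable[[str, str], Optional[str]]


class OverseenSystem:
    """Wraps a model so a human reviewer can override outputs or halt the system."""

    def __init__(self, model: Model, reviewer: Reviewer) -> None:
        self._model = model
        self._reviewer = reviewer
        self._halted = False
        self.log: list[tuple[str, str, str]] = []  # (case, proposal, final decision)

    def halt(self) -> None:
        """Stop switch: every later call to decide() raises instead of deciding."""
        self._halted = True

    def decide(self, case: str) -> str:
        if self._halted:
            raise RuntimeError("system halted by human operator")
        proposal = self._model(case)
        correction = self._reviewer(case, proposal)
        final = proposal if correction is None else correction
        self.log.append((case, proposal, final))
        return final
```

Logging the proposal alongside the final decision also shows, after the fact, how often humans actually intervened, which is one way to tell genuine oversight from a nominal one.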

Incident reporting: Multiple jurisdictions are moving toward mandatory AI incident reporting, following the model of security breach disclosure requirements.
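Borrowing from breach-disclosure practice, an incident record mainly needs to capture what happened, when it was detected, and when the report is due. In this sketch the class and fields are hypothetical, and the reporting window is a parameter rather than a constant, because deadlines differ by jurisdiction and incident type.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class IncidentReport:
    """Hypothetical incident record modeled loosely on breach-disclosure practice."""
    system_name: str
    description: str
    detected_at: datetime
    reporting_window: timedelta  # set per jurisdiction and incident type; not a legal value

    @property
    def report_due(self) -> datetime:
        """Deadline for notifying the relevant authority."""
        return self.detected_at + self.reporting_window

    def is_overdue(self, now: datetime) -> bool:
        return now > self.report_due
```

Making the record immutable (`frozen=True`) keeps the detection time from being quietly edited after the fact.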

Why This Matters for AI Development Teams

Regulatory compliance is no longer a legal department problem — it's an engineering problem. The documentation requirements, audit trails, and oversight mechanisms required by the EU AI Act need to be built into AI systems from the start, not retrofitted.
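Audit trails are a good example of a requirement that is cheap to build in early and painful to retrofit. The sketch below shows one common technique, not anything a regulation prescribes: each log entry is chained to the SHA-256 hash of the previous one, so editing any past entry makes `verify()` fail. The event fields are hypothetical, and the chain only detects edits if the latest hash is also stored somewhere the editor cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained log: altering any past entry breaks verification."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(event: dict, prev_hash: str) -> str:
        # sort_keys makes the serialization, and therefore the hash, deterministic.
        payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        stored = dict(event)  # shallow copy so later caller edits don't alias the log
        h = self._digest(stored, prev_hash)
        self.entries.append({"event": stored, "prev": prev_hash, "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute every hash from the start of the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry["event"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True
```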

The "technical documentation" requirements are particularly demanding: training data provenance, evaluation methodology, performance characterization across demographic groups, known limitations and failure modes. Few AI development teams maintain documentation at this level today.
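Performance characterization across demographic groups starts with something as simple as disaggregating a metric. A minimal sketch computing accuracy per group and the largest gap between groups; the group labels are hypothetical, and accuracy is only one of several metrics a real evaluation would report.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each group label."""
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("y_true, y_pred and groups must have the same length")
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)
```

An aggregate accuracy can look acceptable while one group fares much worse; reporting the per-group figures and the gap makes that visible in the documentation rather than hiding it in an average.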

My Take

The global AI regulation landscape is maturing faster than the AI industry's institutional readiness to comply. Most AI teams I interact with are aware that regulation exists but haven't mapped their specific systems to specific regulatory requirements.

The EU AI Act's GPAI provisions deserve more attention than they're getting in technical circles. Any model trained at frontier scale and placed on the EU market now carries explicit regulatory obligations: transparency, safety testing, and cooperation with regulators. These aren't soft guidelines; they're legal requirements with enforcement mechanisms.

My concern is the fragmentation between jurisdictions. A global AI system faces EU requirements, US sectoral guidance, India's DPDP, China's content rules — simultaneously and sometimes inconsistently. The compliance burden is highest for smaller organizations that lack dedicated regulatory affairs teams.

The paper's observation about the "accountability paradox" is astute and underappreciated: when an AI system causes harm in a cross-cutting way (violating data privacy, using a flawed model, causing physical harm), multiple regulatory frameworks apply simultaneously with different enforcement authorities. Legal uncertainty in this space is a feature, not a bug — it's forcing organizations to take a conservative approach to high-risk AI deployments.

For AI practitioners in India specifically: the DPDP Act's implications for AI training data and consent are only beginning to be operationalized. This is going to be a significant compliance challenge for domestic AI companies over the next 18-24 months.

Regulation is not the enemy of innovation. Clear rules create clear playing fields. The sooner the field develops best practices for AI regulatory compliance, the better for everyone.

Paper: "Global AI Governance Overview: Understanding Regulatory Requirements Across Global Jurisdictions", arXiv: 2512.02046, Dec 2025.

Explore more from Dr. Jyothi