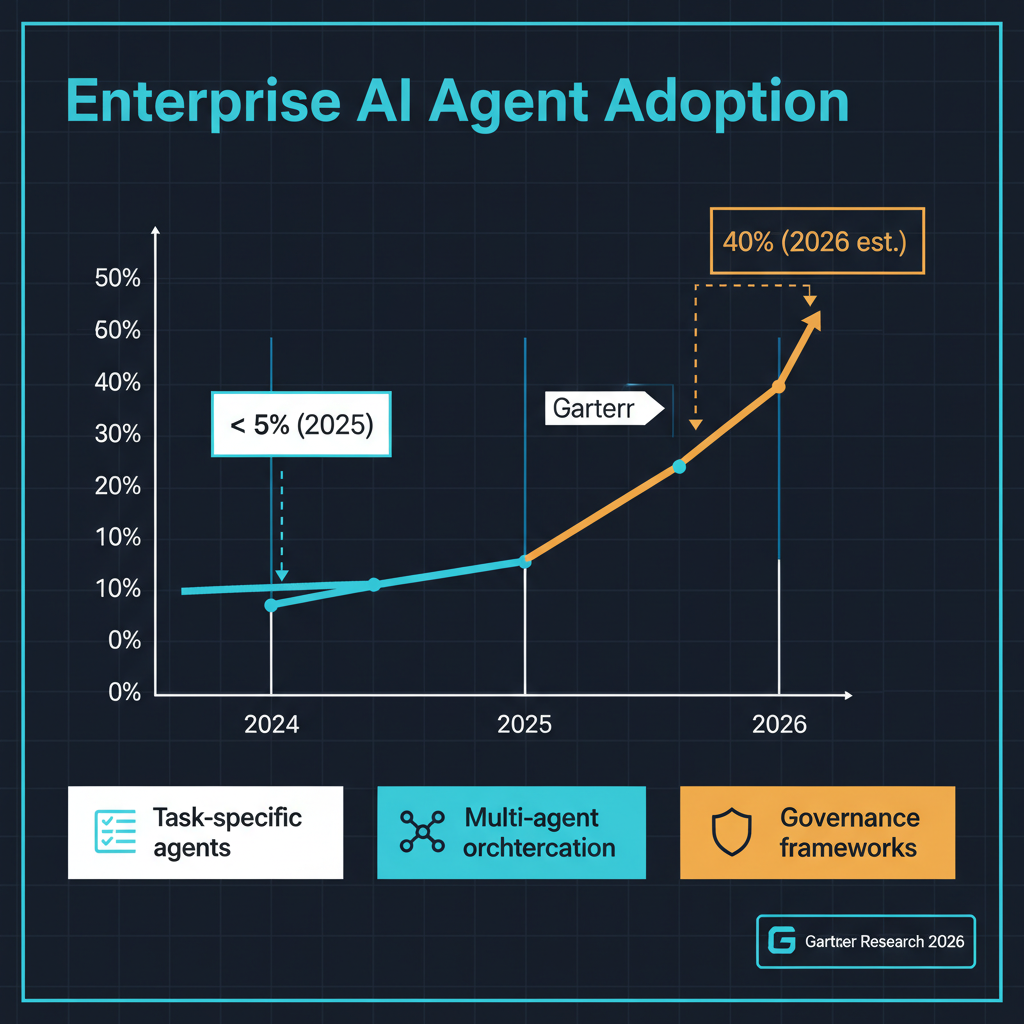

Gartner's latest prediction that 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026 -- up from less than 5% in 2025 -- is the kind of forecast that sounds hyperbolic until you look at what is actually shipping. Multi-agent systems are moving from research demonstrations and startup pitch decks into production deployments at companies that do not typically chase hype cycles. Galileo just launched Agent Control, an open-source governance framework for autonomous AI systems. NVIDIA released its Physical AI Data Factory Blueprint for training agents that operate in the real world. The infrastructure for agentic AI is being built, and the pace suggests that Gartner's prediction may actually be conservative.

From Generative to Agentic: What Changed

The generative AI era that defined 2023-2025 was fundamentally about producing content: text, images, code, summaries. Users provided a prompt, the model generated output, and a human decided what to do with it. The interaction pattern was call-and-response, and the human remained firmly in the loop at every step.

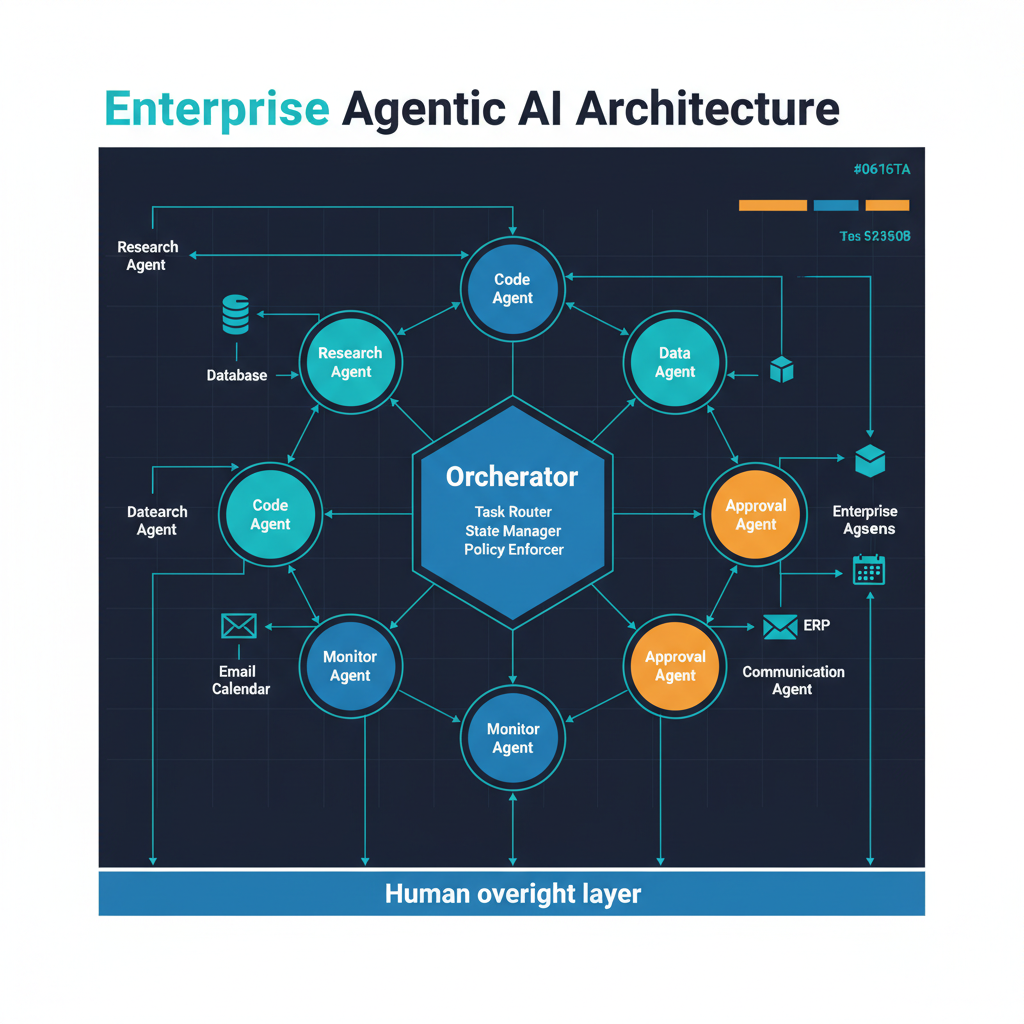

Agentic AI represents a structural shift in that interaction pattern. An agent does not just generate a response -- it pursues a goal. It breaks complex objectives into subtasks, selects and uses tools, evaluates intermediate results, adjusts its approach when something does not work, and continues until the goal is achieved or it determines that human input is needed. The human is no longer in the loop at every step. They are on the loop -- setting objectives, reviewing results, and intervening when necessary, but not directing every action.
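The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the names `run_agent`, `StepResult`, and `AgentRun` are hypothetical, the plan is passed in as a fixed list of subtasks (a real agent would have a model produce and revise it), and "adjusting the approach" is reduced to a simple retry.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class StepResult:
    ok: bool      # did the tool call achieve its subtask?
    output: str   # whatever the tool returned, kept for the log


@dataclass
class AgentRun:
    goal: str
    status: str = "running"  # ends as "done" or "needs_human"
    log: List[str] = field(default_factory=list)


# Hypothetical tool signature: takes one string argument, returns a StepResult.
Tool = Callable[[str], StepResult]


def run_agent(
    goal: str,
    plan: List[Tuple[str, str]],
    tools: Dict[str, Tool],
    max_retries: int = 1,
) -> AgentRun:
    """Work through the subtasks in order; escalate to a human when stuck."""
    run = AgentRun(goal=goal)
    for tool_name, arg in plan:
        tool = tools.get(tool_name)
        if tool is None:
            # The agent has no way to do this subtask, so it asks for help.
            run.log.append(f"{tool_name}: no such tool")
            run.status = "needs_human"
            return run
        for attempt in range(max_retries + 1):
            result = tool(arg)
            run.log.append(
                f"{tool_name}({arg}) attempt {attempt + 1}: {result.output}"
            )
            if result.ok:
                break  # intermediate result is acceptable, move on
        else:
            # Every attempt failed: stop acting and hand over to a human.
            run.status = "needs_human"
            return run
    run.status = "done"
    return run
```

The `needs_human` status is the on-the-loop handoff: the person is not consulted at each step, only when the agent runs out of options.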

This shift from in-the-loop to on-the-loop is what makes agentic AI both more powerful and more consequential than the generative systems that preceded it. A chatbot that generates a wrong answer creates a minor inconvenience. An agent that takes a wrong action can create real-world consequences that are difficult or impossible to reverse.

Why 40% Is Plausible

The 40% figure seems aggressive until you consider what counts as "incorporating task-specific AI agents." Gartner is not predicting that 40% of enterprise applications will be fully autonomous. It is predicting that 40% will include agent capabilities -- meaning the ability to perform multi-step tasks with some degree of autonomy within defined boundaries.

This includes customer service applications that can resolve issues end-to-end rather than just suggesting responses. It includes development tools that can write, test, and deploy code changes without human intervention at each step. It includes business process automation that can handle exceptions and edge cases rather than falling back to a human queue. It includes data analysis tools that can formulate hypotheses, query databases, run statistical tests, and present findings without step-by-step human guidance.

Many of these capabilities are already shipping or in late-stage development at major enterprise software vendors. Salesforce's Agentforce, ServiceNow's agent capabilities, Microsoft's Copilot Studio agents, and similar products are being deployed at scale. When the major enterprise platforms all add agent capabilities to their existing installed base, reaching 40% of enterprise applications is a matter of software updates, not net-new adoption decisions.

Multi-Agent Systems: Beyond the Single Agent

The more interesting development is not individual agents but multi-agent systems -- architectures where multiple specialized agents collaborate to accomplish goals that no single agent could handle alone. Multi-agent architectures address a fundamental limitation of single-agent systems: the tension between capability breadth and reliability. A system of specialized agents, each operating within a narrow domain, can be more reliable in aggregate because each component can be independently tested and improved.

I have been building multi-agent systems in my own work, and the architectural patterns are still maturing. The hardest problems are at the boundaries between agents: how they communicate context, how failures propagate, how conflicting actions are resolved, and how the overall system maintains coherence when individual agents have partial views of the world state. These are distributed systems problems, and the AI industry is rediscovering lessons that the distributed computing community learned over decades.
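Two of those boundary problems, failure propagation and conflicting actions, can be made concrete with a small sketch. Everything here is an assumption for illustration: the `Proposal` type, the `collect` and `resolve` functions, and the rule that the highest-priority proposal wins per resource are hypothetical choices, not an established protocol.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Proposal:
    agent: str      # who wants to act
    resource: str   # what they want to act on
    action: str     # what they want to do
    priority: int   # higher wins when two agents target one resource


# Hypothetical agent signature: reads a context dict, may propose one action.
Agent = Callable[[dict], Optional[Proposal]]


def collect(
    agents: Dict[str, Agent], context: dict
) -> Tuple[List[Proposal], Dict[str, str]]:
    """Ask every agent for a proposal, isolating failures per agent."""
    proposals: List[Proposal] = []
    failures: Dict[str, str] = {}
    for name, agent in agents.items():
        try:
            # Each agent gets a shallow copy, so replacing top-level keys
            # in its view does not leak into the other agents' views.
            proposal = agent(dict(context))
        except Exception as exc:
            # One agent crashing is recorded, not propagated to the rest.
            failures[name] = repr(exc)
            continue
        if proposal is not None:
            proposals.append(proposal)
    return proposals, failures


def resolve(proposals: List[Proposal]) -> Dict[str, Proposal]:
    """Keep one proposal per resource: highest priority, first seen on ties."""
    winners: Dict[str, Proposal] = {}
    for p in proposals:
        current = winners.get(p.resource)
        if current is None or p.priority > current.priority:
            winners[p.resource] = p
    return winners
```

A fixed priority order is the crudest possible arbitration. It is shown only because it makes the conflict explicit and testable; real systems may need locking, negotiation, or a human tiebreak.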

Galileo's Agent Control: Governance as Infrastructure

Galileo's launch of Agent Control as an open-source framework for agent governance addresses what I consider the most critical gap in the current agentic AI ecosystem: the absence of standardized tools for monitoring, constraining, and auditing autonomous AI systems.

Agent Control provides primitives for defining what agents are and are not allowed to do, monitoring agent behavior in real time, detecting anomalous actions, enforcing approval gates for high-risk operations, and maintaining audit trails of every action an agent takes. These are not nice-to-have features -- they are prerequisites for deploying agentic AI in any context where mistakes have real consequences, which is to say, virtually every enterprise context.
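To make those primitives concrete, here is a generic sketch of an allow-list, an approval gate, and an audit trail wrapped around an agent's actions. This is not Agent Control's API, which is not shown in this article: `Policy`, `Governor`, and `execute` are hypothetical names, and the design is only one plausible shape for such a layer.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class Policy:
    allowed: Set[str]                                        # actions the agent may take
    needs_approval: Set[str] = field(default_factory=set)    # high-risk subset


@dataclass
class Governor:
    policy: Policy
    # Hypothetical approval hook: returns True if a reviewer signs off.
    approver: Callable[[str, Dict[str, Any]], bool]
    audit: List[Dict[str, Any]] = field(default_factory=list)

    def execute(
        self,
        agent: str,
        action: str,
        params: Dict[str, Any],
        fn: Callable[..., Any],
    ) -> Any:
        """Run fn(**params) only if policy and approval both permit it."""
        if action not in self.policy.allowed:
            decision = "denied"
        elif action in self.policy.needs_approval and not self.approver(
            action, params
        ):
            decision = "rejected"
        else:
            decision = "allowed"

        # Every attempt is recorded, including the ones that are blocked.
        self.audit.append(
            {
                "time": time.time(),
                "agent": agent,
                "action": action,
                "params": dict(params),
                "decision": decision,
            }
        )
        if decision != "allowed":
            raise PermissionError(f"{action} {decision} for agent {agent}")
        return fn(**params)
```

The point of the sketch is the ordering: the audit entry is written before the action runs or is refused, so the trail covers blocked attempts as well as successful ones.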

The decision to release Agent Control as open source is strategically sound. Agent governance cannot be a proprietary capability controlled by a single vendor, because agents will operate across multiple platforms, models, and environments. An open standard for agent governance creates a common language and common tooling that the entire ecosystem can build on. It is the same logic that drove the adoption of open observability standards like OpenTelemetry for distributed systems.

For practitioners, I strongly recommend evaluating Agent Control or similar governance frameworks before deploying agents in production. The temptation to move fast -- to get an impressive demo into production as quickly as possible -- is understandable but dangerous. An agent without governance is a liability. An agent with robust governance is an asset.

NVIDIA's Physical AI Data Factory Blueprint

NVIDIA's Physical AI Data Factory Blueprint addresses a different dimension of the agentic transition: agents that operate in the physical world. Training agents for robotics, navigation, or industrial control requires massive amounts of simulation data. The Blueprint provides a reference architecture for generating synthetic training data using NVIDIA's Omniverse platform -- realistic physics simulation, sensor modeling, environment randomization, and the compute infrastructure to run millions of simulation episodes in parallel.
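Environment randomization is the easiest of those pieces to illustrate without a simulator. The sketch below uses only the Python standard library and does not touch Omniverse or any NVIDIA API. The `EpisodeConfig` fields and their ranges are made-up placeholders; a real pipeline would choose the parameters and ranges from the target hardware and environment.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EpisodeConfig:
    friction: float           # surface friction coefficient (placeholder range)
    light_intensity: float    # relative scene brightness (placeholder range)
    sensor_noise_std: float   # std. dev. of noise added to sensor readings


def sample_episodes(n: int, seed: int) -> List[EpisodeConfig]:
    """Draw n randomized episode configurations, reproducibly for a given seed."""
    rng = random.Random(seed)  # private generator, so runs can be replayed
    return [
        EpisodeConfig(
            friction=rng.uniform(0.2, 1.0),
            light_intensity=rng.uniform(0.3, 1.5),
            sensor_noise_std=rng.uniform(0.0, 0.05),
        )
        for _ in range(n)
    ]
```

Each configuration would parameterize one simulated episode. Varying conditions this way is meant to keep the trained agent from overfitting to a single idealized environment, and the seed lets any episode be regenerated when a failure needs to be reproduced.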

This release makes physical AI agent development accessible to organizations without in-house simulation capability. Factory automation, warehouse robotics, and autonomous vehicles all require agents that operate in unstructured physical environments, and the gap between digital and physical agent capabilities has been one of the most persistent bottlenecks in applied AI. The Blueprint's focus on high-fidelity simulation is an attempt to narrow the sim-to-real transfer gap that has historically limited the effectiveness of simulation-trained agents.

The Risks of the Agentic Transition

Agentic systems are fundamentally more difficult to make safe than generative systems, for a simple reason: they act. An agent that takes an incorrect action may have already created consequences by the time the error is detected. The failure modes of multi-agent systems are particularly concerning -- emergent behaviors arising from agent interactions are difficult to predict and diagnose. The governance gap is real: most organizations deploying agents today do not have adequate monitoring or audit infrastructure, and the speed of adoption is outpacing governance development.

Where This Goes

The agentic transition is not a future event -- it is happening now, and the pace is accelerating. The 40% prediction for enterprise applications by the end of 2026 is a waypoint, not a destination. The question is not whether agentic AI will become pervasive but whether the governance infrastructure will mature fast enough to make that pervasiveness safe and beneficial.

My view is cautiously optimistic. The technical capability for useful agents exists. The governance tooling is emerging. The enterprise demand is real and growing. The organizations that approach agentic AI with the same engineering discipline they apply to any other critical system -- rigorous testing, monitoring, constraint, and incremental deployment -- will capture genuine value. The organizations that deploy agents without that discipline will generate cautionary tales.

The transition from generative to agentic AI is the most significant architectural shift in the AI industry since the transformer. Getting it right matters.