Three years ago, the most sophisticated AI coding tool was GitHub Copilot, which completed lines and blocks of code as you typed. It was impressive and useful — a genuine productivity boost for developers — but it was fundamentally a smarter autocomplete. The human wrote the code. The AI suggested completions.

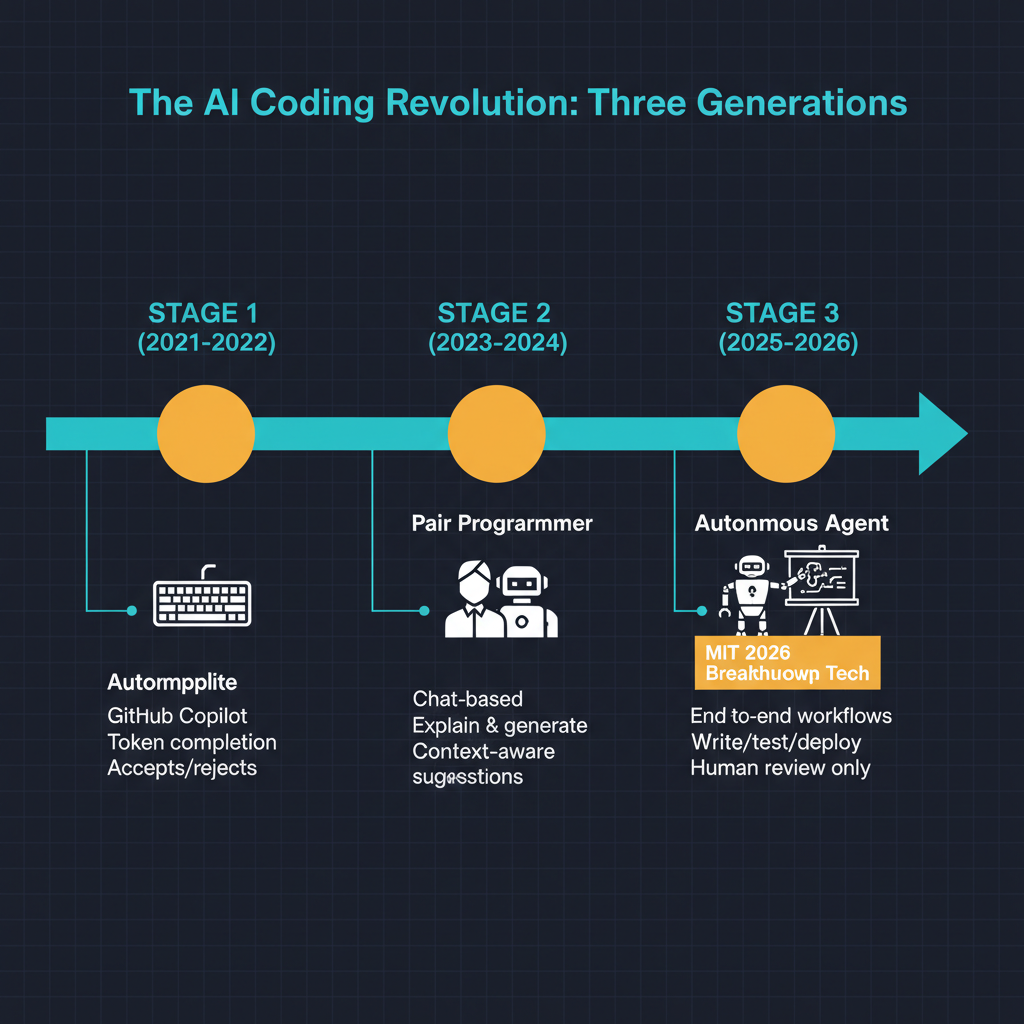

Today, OpenAI's Codex manages entire development workflows autonomously. Claude Code operates as a command-line agent that reads codebases, writes implementations, runs tests, debugs failures, and iterates until the task is complete. Cursor has redefined the editor experience by embedding AI deeply into the development workflow. MIT Technology Review named generative coding one of the ten breakthrough technologies of 2026.

The trajectory from autocomplete to autonomous agent happened in roughly thirty months. That is fast even by AI standards, and the implications for the software engineering profession are worth examining with more nuance than the typical "will AI replace developers" headline provides.

The Three Generations

The evolution of AI coding tools can be understood in three distinct generations, each representing a qualitative shift in the human-AI interaction model.

Generation one: autocomplete. GitHub Copilot, launched as a technical preview in 2021 and widely adopted in 2022-2023, represented the first generation. The interaction model was straightforward: the developer writes code in their editor, and the AI suggests completions based on the context of the current file and the broader training corpus. The human drives. The AI assists at the keystroke level. Published productivity studies reported speed improvements in the range of 25-55% for routine coding tasks, with the largest gains in boilerplate-heavy work like writing tests, implementing standard patterns, and working with unfamiliar APIs.

The limitations of generation one were clear. Copilot could complete the function you were writing, but it could not reason about the broader codebase. It could not understand your architecture, identify bugs across files, or suggest refactoring strategies. It operated at the level of individual code snippets, not at the level of software engineering.

Generation two: pair programming. Cursor, Claude in integrated editor environments, and the evolution of Copilot with chat capabilities represented the second generation. Here, the interaction model shifted from autocomplete to conversation. The developer describes what they want in natural language, and the AI generates multi-file implementations, explains existing code, identifies bugs, suggests architectural improvements, and engages in back-and-forth dialogue about design decisions.

This generation was qualitatively different because it operated at the level of intent rather than syntax. Instead of completing the line you were typing, it could understand "add authentication to this API endpoint" and generate the middleware, update the routes, add the database migration, and write the tests. The human remained in the loop — reviewing, approving, and directing — but the granularity of direction shifted from individual lines to features and components.

Generation three: autonomous agents. Claude Code, OpenAI Codex, and similar tools represent the current frontier. The interaction model has shifted again: the developer describes a task, and the AI agent independently reads the relevant codebase, formulates an implementation plan, writes code across multiple files, runs the test suite, diagnoses failures, iterates on the implementation, and delivers a working result.

The critical difference from generation two is the autonomy of the iteration loop. In pair programming mode, the human reviews each AI output and provides feedback. In agent mode, the AI runs its own review-and-iterate cycle, only coming back to the human when the task is complete or when it encounters a decision that requires human judgment. The human has shifted from driver to supervisor.
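To make the iteration loop concrete, here is a minimal sketch of the control flow described above. It is not how Claude Code, Codex, or any specific product is implemented; the names `run_agent_loop`, `propose_patch`, and `apply_and_test` are hypothetical, and the "model" and "test suite" are stand-in stubs so the example runs on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TestResult:
    passed: bool
    failure_log: str = ""


@dataclass
class AgentOutcome:
    status: str  # "done" or "needs_human"
    attempts: int
    history: List[str] = field(default_factory=list)


def run_agent_loop(
    task: str,
    propose_patch: Callable[[str, str], str],      # hypothetical: (task, feedback) -> patch
    apply_and_test: Callable[[str], TestResult],   # hypothetical: patch -> test result
    max_attempts: int = 5,
) -> AgentOutcome:
    """Propose, test, and retry until the tests pass or the attempt budget runs out."""
    history: List[str] = []
    feedback = ""  # empty on the first attempt; afterwards holds the last failure log
    for attempt in range(1, max_attempts + 1):
        patch = propose_patch(task, feedback)
        result = apply_and_test(patch)
        history.append(patch)
        if result.passed:
            return AgentOutcome("done", attempt, history)
        feedback = result.failure_log  # the agent diagnoses from this on the next pass
    # Budget exhausted: hand control back to the human supervisor.
    return AgentOutcome("needs_human", max_attempts, history)


# Stubs standing in for a model and a test suite (assumptions for illustration).
def fake_propose(task: str, feedback: str) -> str:
    return "fixed" if feedback else "buggy"


def fake_test(patch: str) -> TestResult:
    return TestResult(passed=(patch == "fixed"), failure_log="AssertionError in test_login")


outcome = run_agent_loop("add login endpoint", fake_propose, fake_test)
print(outcome.status, outcome.attempts)  # prints: done 2
```

The part that matters is the `needs_human` exit: the loop is autonomous only within a budget, and the supervisor role begins where that budget ends.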

What Autonomous Coding Actually Looks Like

I have been using Claude Code extensively in my own work, and I want to give an honest picture of what the experience is like in practice.

For well-defined tasks with clear specifications — implement this API endpoint with these inputs and outputs, write tests for this module, refactor this function to handle these edge cases — autonomous coding agents are remarkably capable. They read the existing codebase, understand the patterns and conventions in use, generate implementations that are consistent with the project's style, and run the tests to verify correctness. For these tasks, the agent genuinely functions like a junior developer who can execute independently on well-scoped work.

For ambiguous tasks that require architectural judgment — design the data model for this new feature, decide how to handle concurrent access to shared resources, evaluate whether this should be a microservice or part of the monolith — the agents are less reliable. They can generate reasonable approaches, but they lack the broader context about organizational constraints, performance requirements, and future roadmap that inform architectural decisions. This is where human engineering judgment remains essential.

The most productive workflow I have found is what I think of as the "team lead" model. I make the architectural decisions, break work into well-scoped tasks, and delegate implementation to the agent. I review the output at the feature level rather than the line level. This mirrors how a senior engineer works with a team of junior developers — setting direction, delegating execution, reviewing results.

The Productivity Question

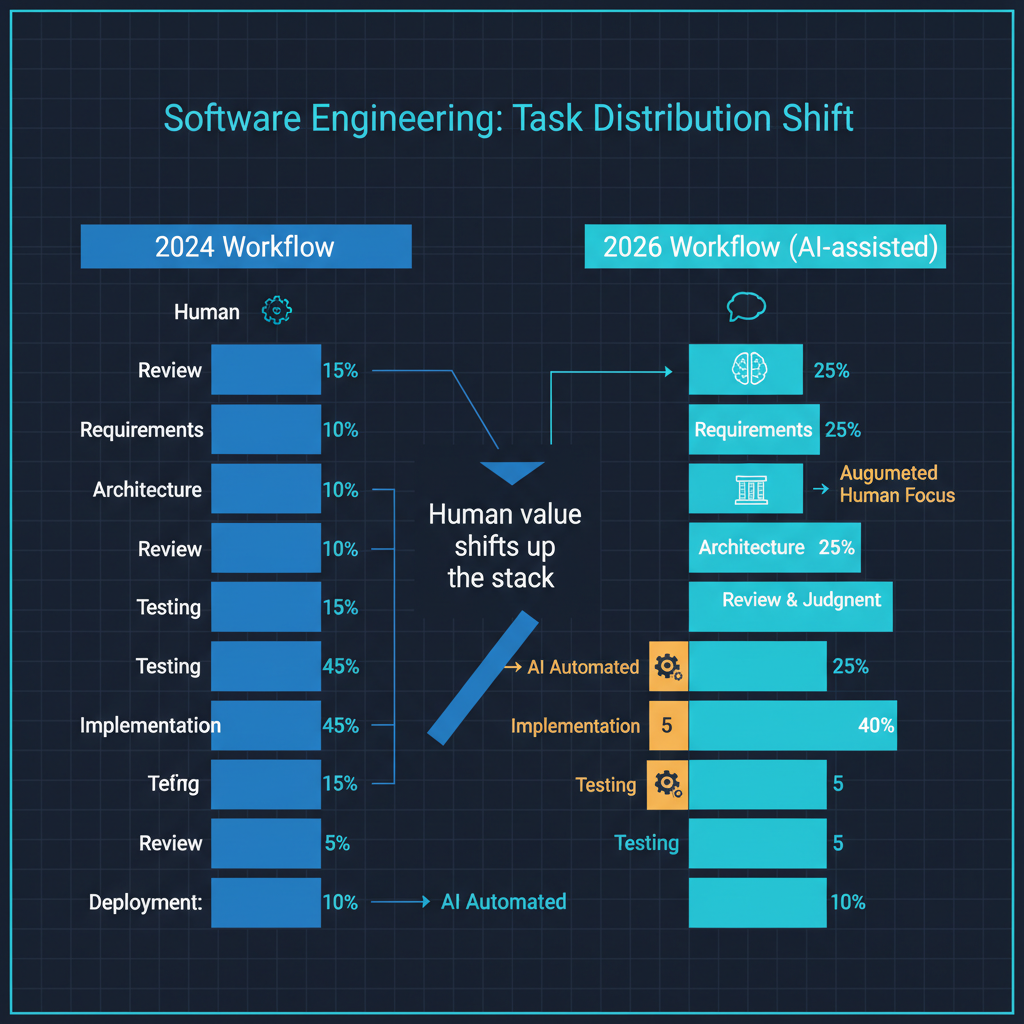

The productivity gains from generation three tools are substantial but uneven. In my experience, the multiplication factor depends heavily on the type of work.

For greenfield implementation of well-understood patterns (building CRUD APIs, implementing standard data pipelines, setting up infrastructure configurations), the productivity gain can be fivefold to tenfold. Tasks that would take hours of typing, looking up documentation, and debugging syntax errors are completed in minutes.

For debugging complex issues in existing systems (race conditions, performance problems, subtle logic errors), the productivity gain is more modest, perhaps twofold to threefold. The agent can quickly search the codebase, form hypotheses, and test fixes, but complex bugs often require an understanding of runtime behavior, deployment environment, and user interaction patterns that the agent does not have full visibility into.

For design and architecture work, the productivity gain is minimal. The agent can generate options and articulate tradeoffs, but the judgment required to make good architectural decisions comes from experience with the specific system, understanding of organizational constraints, and intuition about future requirements. These are not capabilities that current AI systems reliably possess.
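One way to see why the overall gain is uneven is to blend the per-category multipliers by how much time each category used to take. The sketch below does that arithmetic. The time shares (40% implementation, 30% debugging, 30% design) are purely illustrative assumptions, not measurements, and the multipliers are the low end of the rough ranges given above, with design treated as 1x.

```python
from typing import Dict, Tuple


def blended_speedup(work_mix: Dict[str, Tuple[float, float]]) -> float:
    """Overall speedup for a mix of work.

    work_mix maps a category name to (share_of_time_before, speedup_factor).
    The new total time is the sum of share / speedup, so the overall
    speedup is the reciprocal of that sum.
    """
    total_share = sum(share for share, _ in work_mix.values())
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("time shares must sum to 1.0")
    remaining_time = sum(share / speedup for share, speedup in work_mix.values())
    return 1.0 / remaining_time


# Hypothetical week for one developer (shares are assumptions for illustration).
mix = {
    "greenfield implementation": (0.4, 5.0),
    "debugging": (0.3, 2.0),
    "design and architecture": (0.3, 1.0),
}
print(f"{blended_speedup(mix):.2f}x")  # prints: 1.89x
```

Under these assumptions, the work that barely speeds up dominates the result: a 5x gain on 40% of the work yields less than a 2x gain overall.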

What This Means for the Profession

The question everyone asks is whether AI coding agents will replace software developers. I think this framing misses what is actually happening.

The role of the software developer is being redefined, not eliminated. The work that is being automated — translating well-specified requirements into code, writing boilerplate, implementing standard patterns — was always the least creative and least valuable part of software engineering. The work that remains — understanding user needs, making architectural decisions, managing technical debt, evaluating tradeoffs, and ensuring system reliability — is the most valuable part.

What I expect to see is a compression of the skill distribution. Tasks that previously required a mid-level developer can increasingly be accomplished by a junior developer with an AI agent. Tasks that required a senior developer can be accomplished by a mid-level developer with the right tools. The bar for entry into productive coding is lower, but the bar for genuine software engineering judgment remains high.

The developers who will thrive are those who can work effectively at the "team lead" level — who understand systems deeply enough to break problems into well-scoped tasks, evaluate the quality of AI-generated solutions, and make the architectural and design decisions that agents cannot. The developers who may struggle are those whose primary value has been the ability to translate specifications into working code quickly and accurately, because that is precisely what AI agents do best.

The Open Questions

Several important questions remain unresolved as AI coding agents mature.

Code quality and technical debt. AI-generated code tends to be functional but not always well-architected. Over time, an agent that generates working solutions without deep understanding of the system's design principles can introduce subtle architectural drift. How teams maintain code quality when an increasing proportion of code is AI-generated is an unsolved problem.

Security implications. Agents that can read, write, and execute code in your development environment have significant access to sensitive systems. The security model for AI coding agents — what they can access, what they can execute, how their actions are audited — is still evolving.
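As a rough illustration of what "what they can execute, how their actions are audited" might mean in practice, here is a minimal sketch of a command allowlist with an audit trail. It does not reflect the permission system of any real agent; `CommandPolicy` and its fields are hypothetical, and the check assumes commands are later executed as an argument list without a shell, since a shell would reinterpret operators such as `&&`.

```python
import shlex
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class CommandPolicy:
    # Each entry is an argv prefix the agent may run, e.g. ("git", "diff").
    allowed_prefixes: Tuple[Tuple[str, ...], ...]
    # Every decision is recorded as (command, allowed) for later review.
    audit_log: List[Tuple[str, bool]] = field(default_factory=list)

    def is_allowed(self, command: str) -> bool:
        try:
            argv = shlex.split(command)
        except ValueError:
            argv = []  # unparseable input (e.g. unbalanced quotes) is denied
        allowed = any(
            tuple(argv[: len(prefix)]) == prefix for prefix in self.allowed_prefixes
        )
        self.audit_log.append((command, allowed))
        return allowed


policy = CommandPolicy(
    allowed_prefixes=(("pytest",), ("git", "diff"), ("git", "status"))
)
for cmd in ["pytest -q", "git diff --stat", "git push origin main", "rm -rf build"]:
    print(cmd, "->", "allow" if policy.is_allowed(cmd) else "deny")
```

A prefix allowlist like this is deliberately coarse. It says nothing about file or network access, which is part of why the security model for these tools remains an open question.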

Liability and responsibility. When an AI agent introduces a bug that causes a production outage, who is responsible? The developer who delegated the task? The company that built the agent? The established frameworks for software engineering liability do not cleanly accommodate autonomous AI contributors.

Learning and skill development. If junior developers learn to code by writing code, and AI agents increasingly write the code, how do junior developers develop the deep understanding that makes senior engineers valuable? The risk is that we optimize for short-term productivity while undermining the pipeline that produces the experienced engineers who can supervise the agents.

Where This Goes

MIT Technology Review's designation of generative coding as a 2026 breakthrough technology is apt, but the breakthrough is less the technology itself than the speed of the transition from tool to collaborator to agent. The coding profession is being reshaped in real time, and the pace of change shows no sign of slowing.

My honest assessment: the developers who embrace these tools as force multipliers, who learn to work effectively at a higher level of abstraction, and who invest in the judgment and design skills that agents lack will find that their careers are enhanced, not threatened. The transition is uncomfortable — any fundamental change in how work gets done is uncomfortable — but the direction is clear. The AI coding agent is not replacing the developer. It is redefining what it means to develop software.