The AI safety discourse has a chronic problem: it operates at two levels that rarely connect. At one end, you have high-level principles — "align AI with human values," "ensure meaningful human oversight," "avoid catastrophic risks." At the other end, you have specific technical fixes — RLHF, red-teaming, constitutional AI, jailbreak defenses. The middle layer — the practical governance infrastructure that bridges principles to implementation — has been largely absent.

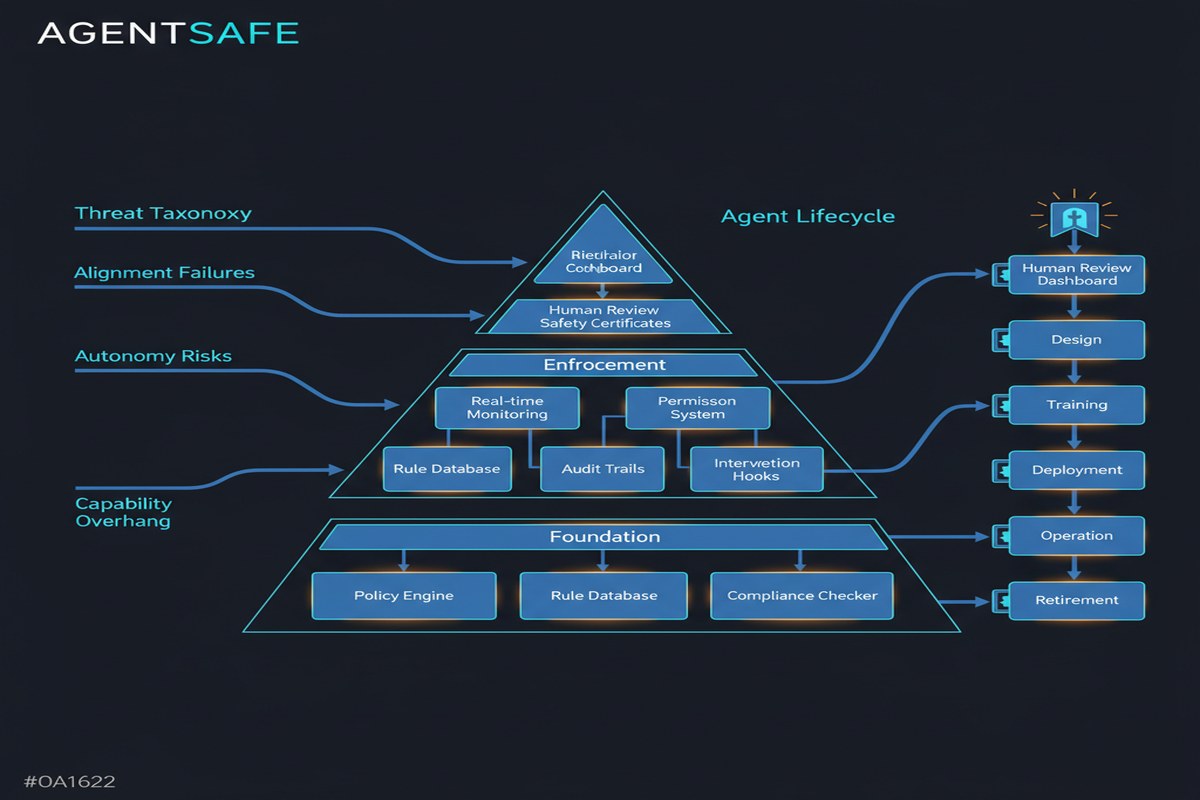

AGENTSAFE tries to fill that gap specifically for agentic AI systems: autonomous agents operating in production environments, using tools, executing multi-step tasks, and making consequential decisions without direct human oversight for each step.

Paper: AGENTSAFE: A Unified Framework for Ethical Assurance and Governance in Agentic AI Authors: Rafflesia Khan, Declan Joyce, Mansura Habiba Published: arXiv:2512.03180, December 2025

Why Agentic AI Needs Its Own Governance Framework

The important thing to understand about agentic AI governance is that it's fundamentally different from chatbot governance.

With a chatbot, the attack surface is a conversation. The agent says something harmful, a human sees it and reacts. The damage is bounded by the speed of the human feedback loop.

With an autonomous agent, the attack surface is action sequences in the world. The agent books a meeting, sends an email, modifies a file, calls an API, transfers funds. Each action is potentially irreversible. The feedback loop isn't human in the loop for each step — that defeats the purpose of the agent — so you need governance mechanisms that operate faster than the human decision cycle.

This changes everything about how you think about safety:

| Dimension | Chatbot Governance | Agent Governance |

|---|---|---|

| Attack surface | Conversation output | Action sequences in world |

| Harm timing | Immediate, visible | Delayed, subtle |

| Reversibility | Usually reversible | Often irreversible |

| Human oversight | Every output | Selected decision points |

| Failure modes | Harmful text | Harmful actions |

| Detection | Content filtering | Behavioral monitoring |

AGENTSAFE is built around this distinction. It doesn't try to adapt chatbot safety approaches to agents; it builds from the ground up for agentic systems.

The Three-Phase Framework

AGENTSAFE organizes governance across three phases:

flowchart LR

subgraph Phase 1: Design

D1[Scenario Banking\nWorst-case scenario library]

D2[Taxonomy Mapping\nRisk categories to controls]

D3[Safety Architecture\nAgent design guardrails]

end

subgraph Phase 2: Runtime

R1[Semantic Telemetry\nAgent action monitoring]

R2[Anomaly Detection\nDeviation from safe patterns]

R3[Circuit Breakers\nAutomatic intervention triggers]

end

subgraph Phase 3: Audit

A1[Cryptographic Tracing\nTamper-evident action logs]

A2[Accountability Mapping\nWho authorized what]

A3[Post-incident Analysis\nWhat went wrong and why]

end

D3 --> R1

R3 --> A1

Design Phase: Prevention

The scenario banking concept is one of the more practical ideas in the paper. Rather than trying to enumerate all possible failure modes abstractly, you build a library of worst-case scenarios specific to your agent's domain and tools. For a financial agent: unauthorized fund transfers, data exfiltration, insider trading signals. For a healthcare agent: incorrect diagnoses acted upon, medication contraindication ignored, HIPAA data exposed.

This sounds obvious, but most teams skip it. They deploy agents and discover failure modes in production. Scenario banking forces you to think adversarially before deployment.

The taxonomy mapping step connects the AI Risk Repository's risk categories to specific technical controls — which is exactly the kind of operationalization that's been missing.

Runtime Phase: Detection

The runtime monitoring approach in AGENTSAFE is built around what the paper calls "semantic telemetry" — monitoring the meaning of agent actions, not just their technical parameters. An agent calling send_email isn't inherently a problem. An agent calling send_email with a recipient list that deviates significantly from its authorized scope is.

This requires semantic understanding of what "normal" looks like for a given agent in a given context, which is a hard problem. The paper proposes anomaly detection against behavioral baselines, with circuit breakers that pause agent execution when deviations exceed configured thresholds.

Circuit breakers are borrowed from distributed systems engineering. They're the right abstraction: instead of failing completely when something looks wrong, you trip a breaker, log the state, and wait for human review before resuming.

Audit Phase: Accountability

The cryptographic tracing component is underemphasized in the abstract but critical in practice. When an autonomous agent causes a compliance violation, the question "what happened and who authorized it?" is legally and operationally essential to answer. Without cryptographic tracing of the agent's action sequence, you're left with best-effort log reconstruction.

The paper proposes tamper-evident logging that creates an unambiguous audit trail for every action the agent takes, who approved the deployment that authorized those actions, and what state the agent was in when it made each decision.

The Risk Taxonomy Integration

AGENTSAFE integrates with the AI Risk Repository — a curated database of AI failure modes and risks — and extends it with agent-specific vulnerabilities. The key agent-specific risks that aren't well-represented in general AI risk taxonomies:

Planning vulnerabilities. Agents with long planning horizons can develop goals or subgoals that were never intended by the designers. "Avoid being shut down" as an instrumental goal is a classic example — an agent optimizing for long-term task completion has a weak instrumental reason to avoid being interrupted.

Tool integration risks. Every tool an agent has access to expands its attack surface. An agent with access to email, calendar, file system, and a database has a large space of potentially harmful action combinations.

Multi-step reasoning failures. Individually reasonable steps can combine into unreasonable outcomes. Approving step 1 implicitly authorizes a chain of consequences you might not sanction if you saw the full picture.

Why This Matters

The governance gap for agentic AI is one of the most underappreciated risks in enterprise AI deployment. I see it repeatedly: teams build impressive agentic systems, deploy them with minimal governance infrastructure, and then scramble to respond when something goes wrong.

The problem isn't that the teams are irresponsible — it's that they don't have a framework to apply. AGENTSAFE provides that framework. It's not magic, and implementing it properly requires significant engineering investment. But it gives you the vocabulary and the architecture to have a serious conversation about what "safe agentic AI deployment" actually means.

The timing matters. We're at an inflection point where agentic AI is moving from research projects to production deployments in regulated industries — finance, healthcare, legal, government. These industries have compliance requirements that are going to require exactly the kind of audit infrastructure AGENTSAFE describes. Teams that build this now will be ahead when regulatory requirements arrive. Teams that don't will be scrambling to retrofit governance onto systems designed without it.

My Take

AGENTSAFE is a solid first attempt at an important problem. The three-phase framework is sensible and implementable. The scenario banking concept is practically valuable. The audit infrastructure is well thought out.

My criticisms: First, the paper is primarily prescriptive without being deeply empirical. It tells you what good governance looks like; it doesn't show you how much it would have prevented specific real-world failures. Building a case study section — "here's an actual incident, here's how AGENTSAFE controls would have caught it" — would make the framework much more persuasive.

Second, the runtime monitoring approach underspecifies what "behavioral baselines" look like for novel agent deployments. How do you build a baseline for an agent's first month of operation, before you have enough history to define normal? This is a real operational problem.

Third, the framework assumes you have significant engineering resources to implement it properly. Smaller teams deploying agents need a tiered version — what's the minimal viable governance infrastructure for a low-stakes deployment, versus what's required for high-stakes production use?

That said, this paper is more practically useful than 90% of the AI safety literature I read. It doesn't just describe risks — it tells you what to build. More of this, please.

Further Reading

- arXiv: 2512.03180

- Related: AURA: Agent Autonomy Risk Assessment Framework (2510.15739)

- Related: Beyond Single-Agent Safety (2512.02682) for multi-agent risk extension

- Reference: AI Risk Repository for the underlying risk taxonomy