Paper: Agentic Reasoning for Large Language Models arXiv: 2601.12538 | January 2026 Authors: Tianxin Wei et al. (29 authors)

The AI agent literature has a serious organization problem. Over the past two years, the term "agent" has been applied to everything from simple ReAct prompting loops to fully autonomous multi-model systems with persistent memory, tool use, and planning over multi-hour horizons. The resulting conceptual blur makes it genuinely hard to reason about what class of problem you're solving, what capability you need, and what prior work is relevant.

This paper — 29 authors, submitted January 2026 — is the most serious attempt I've seen to impose taxonomic order on the agentic reasoning landscape. It proposes a three-dimensional framework for categorizing agent capabilities, and more importantly, it uses that framework to surface where the genuine research gaps are.

If you are building, deploying, or evaluating AI agent systems in 2026, this paper should be on your reading list.

The Three-Dimensional Framework

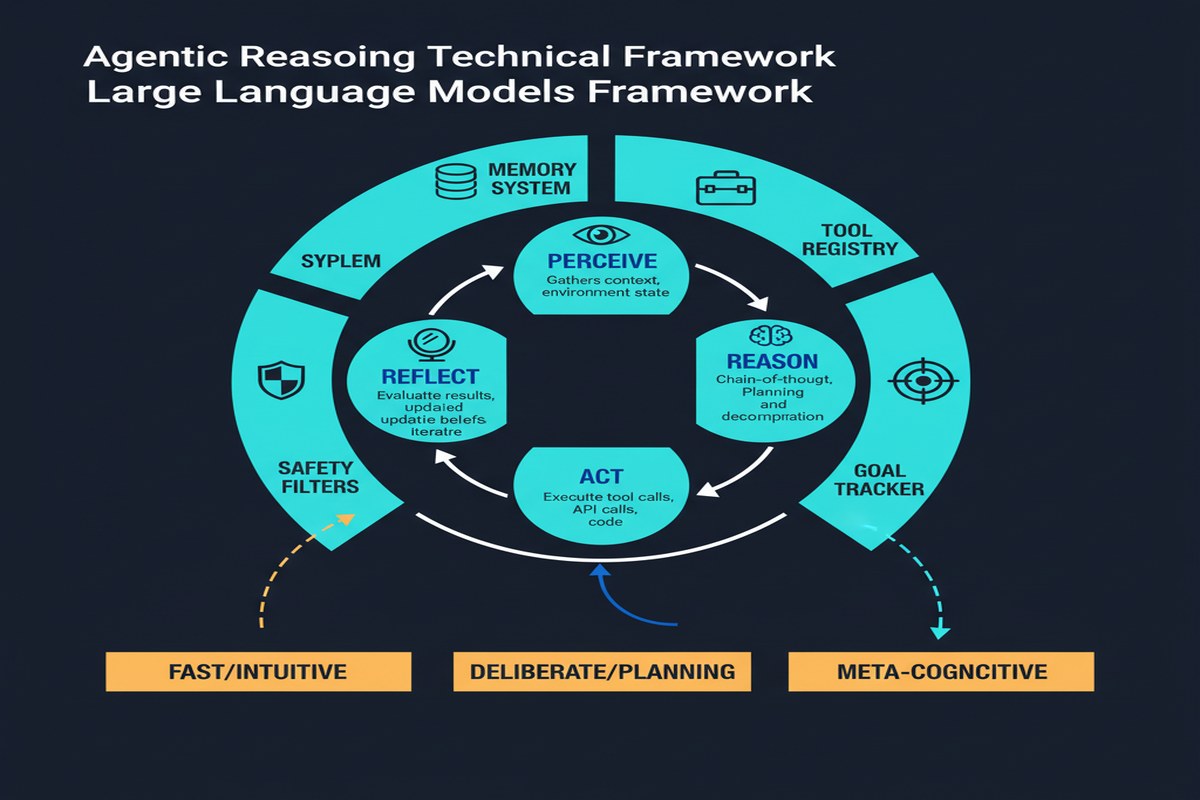

The paper organizes agentic reasoning along three complementary dimensions, which it calls foundational, self-evolving, and collective.

Dimension 1: Foundational Agentic Reasoning

This is the base layer — the capabilities that a single LLM agent needs to act autonomously in the world. The paper breaks this into four subcapabilities:

- Planning: The ability to decompose a goal into a sequence of subgoals and order them appropriately. This includes both hierarchical planning (decomposing into subtasks) and reactive planning (adapting when earlier steps fail).

- Tool Use: The ability to select, invoke, and interpret external tools — APIs, code executors, search engines, databases. Not just knowing tools exist, but knowing when to use each and how to recover from tool failures.

- Memory Management: Maintaining task-relevant context across time. This includes working memory (within a context window), episodic memory (retrieving relevant past interactions), and semantic memory (organized knowledge about the domain).

- Self-Critique: Evaluating the quality of one's own outputs and reasoning. This is a foundational requirement for iterative improvement and is notoriously hard to calibrate — models that are wrong tend to be confidently wrong.

mindmap

root((Agentic Reasoning))

Foundational

Planning

Hierarchical decomposition

Reactive replanning

Tool Use

Selection

Invocation

Recovery

Memory

Working memory

Episodic retrieval

Semantic knowledge

Self-Critique

Output evaluation

Reasoning verification

Self-Evolving

In-context Learning

Few-shot adaptation

Instruction following

Fine-tuning Loops

RLHF for agents

Distillation from traces

Meta-Learning

Learning to learn

Strategy adaptation

Collective

Role Assignment

Task decomposition

Agent specialization

Communication

Information sharing

Conflict resolution

Emergence

Collective capabilities

Swarm behaviors

Dimension 2: Self-Evolving Agentic Reasoning

Single-inference agents are limited by what they know at deployment time. Self-evolving agents update their behavior based on new information and experience. The paper identifies three mechanisms:

- In-context learning: Adapting behavior based on examples or instructions provided at inference time. This is the most widely deployed form of agent adaptation — ReAct-style agents that observe action results and adjust subsequent actions are exhibiting in-context self-evolution.

- Fine-tuning loops: Using agent interaction traces to update the underlying model weights. This requires generating trajectories, filtering for successful ones, and training on them — a more expensive but potentially more powerful adaptation mechanism.

- Meta-learning: Learning to adapt more efficiently. The most ambitious form — training agents that can learn new tasks with minimal examples by learning the structure of task families.

Dimension 3: Collective Multi-Agent Reasoning

When the task is too complex for a single agent, or when specialization is valuable, multi-agent systems distribute reasoning across multiple coordinated agents. The paper identifies role assignment, communication protocols, and emergent collective behaviors as the key research questions.

This dimension is the least mature. Most deployed multi-agent systems are relatively simple pipelines: a planner agent decomposes tasks, specialist agents execute subtasks, a reviewer agent checks results. The paper points to more interesting dynamics — agents negotiating about task interpretation, agents with conflicting information reaching consensus, emergent problem-solving strategies that no individual agent was explicitly designed to exhibit — as the research frontier.

The Gaps the Framework Exposes

The framework is most valuable for what it reveals about where we are not. The paper is explicit about four major gaps:

Gap 1: Long-horizon planning under uncertainty. Current agents handle planning well for 3-10 step tasks in well-defined environments. They degrade significantly for tasks that require 50+ steps, that involve significant uncertainty about intermediate outcomes, or where early decisions have non-obvious downstream consequences. This is the gap between "agent demos" and "agent systems that work in production."

Gap 2: Robust memory architectures. Most deployed agents have ad-hoc memory — some combination of context window stuffing, retrieval-augmented lookups, and external database calls. The paper notes the absence of principled architectures for when to store what, how to retrieve efficiently under time pressure, and how to handle contradictions between memory contents.

Gap 3: Calibrated self-critique. Agents that can accurately assess whether their outputs are correct are dramatically more useful than agents that can only generate outputs. The self-critique capability is a prerequisite for reliable autonomous operation — an agent that can't tell when it's wrong needs constant human supervision. Current self-critique mechanisms are inconsistent and frequently overconfident.

Gap 4: Multi-agent alignment. When multiple agents work together, ensuring that their collective behavior is aligned with the user's actual goals — not just their stated goals — is a hard unsolved problem. Individual agent alignment is hard enough; multi-agent collective alignment adds additional complexity.

Why This Matters

The framework provides a common language. When you're building an agentic system, the three dimensions tell you where to focus: are you primarily working on foundational capabilities? Building a self-updating system? Coordinating multiple specialists? The taxonomy makes conversations crisper and makes it easier to evaluate whether existing work is directly relevant.

The gaps are specific and actionable. The paper doesn't just say "more research is needed." It identifies specific failure modes — long-horizon planning degradation, inconsistent self-critique, memory architecture poverty — that practitioners can observe in their systems and researchers can target.

Self-evolving agents are underinvested. The paper's coverage of Dimension 2 highlights how little systematic work has been done on agents that improve themselves through deployment experience. Most agent systems are static at inference time — they don't learn from successful or failed runs except through human-mediated fine-tuning cycles. The potential for automated, continuous improvement from interaction data is substantial and underexplored.

My Take

I build and deploy agentic systems, and I've spent years frustrated by the lack of common conceptual vocabulary in this space. The paper fills a real gap.

The three-dimensional framework is good, but I'd push back on one implicit assumption: the paper treats the three dimensions as relatively separable. In practice, they're deeply entangled. An agent with poor self-critique capabilities (Dimension 1 gap) will generate low-quality fine-tuning data that corrupts its self-evolution (Dimension 2) and produces noise in multi-agent coordination (Dimension 3). The failure modes cascade.

This matters for system design. If you're building an agentic system and your self-critique mechanism is weak, you can't naively layer in self-evolving fine-tuning loops on top — you'll be training on garbage traces and making the system worse. The foundation has to be solid before the superstructure can be built.

The multi-agent section is the most interesting to me precisely because it's the least developed. Current multi-agent systems are mostly sequential pipelines — step 1 goes to agent A, step 2 goes to agent B, and so on. The genuinely interesting multi-agent behaviors — emergent problem solving, graceful degradation when an agent fails, productive disagreement between agents with different specializations — are barely explored in production systems.

My prediction: the biggest commercial value in agentic AI over the next two years will come from teams that seriously invest in Dimension 1 fundamentals — particularly planning robustness and calibrated self-critique — rather than teams that race to add more agents to their systems. More agents doesn't help if each individual agent is unreliable.

This paper is worth your time if you care about building agents that work in the real world, not just in demos.

arXiv:2601.12538 — read the full paper at arxiv.org/abs/2601.12538

Explore more from Dr. Jyothi