Paper: Autonomous Agents for Scientific Discovery: Orchestrating Scientists, Language, Code, and Physics arXiv: 2510.09901 | October 2025 Authors: Zhou et al. (17 authors)

AI for science has been a recurring promise in the community for years. We have had individual demonstrations — AlphaFold for protein structure, generative models for molecule design, LLMs for literature review — but the integration has been fragile and narrow. Each demo operates in its isolated domain, doesn't talk to the other pieces, and requires a human scientist to synthesize results and decide what to do next.

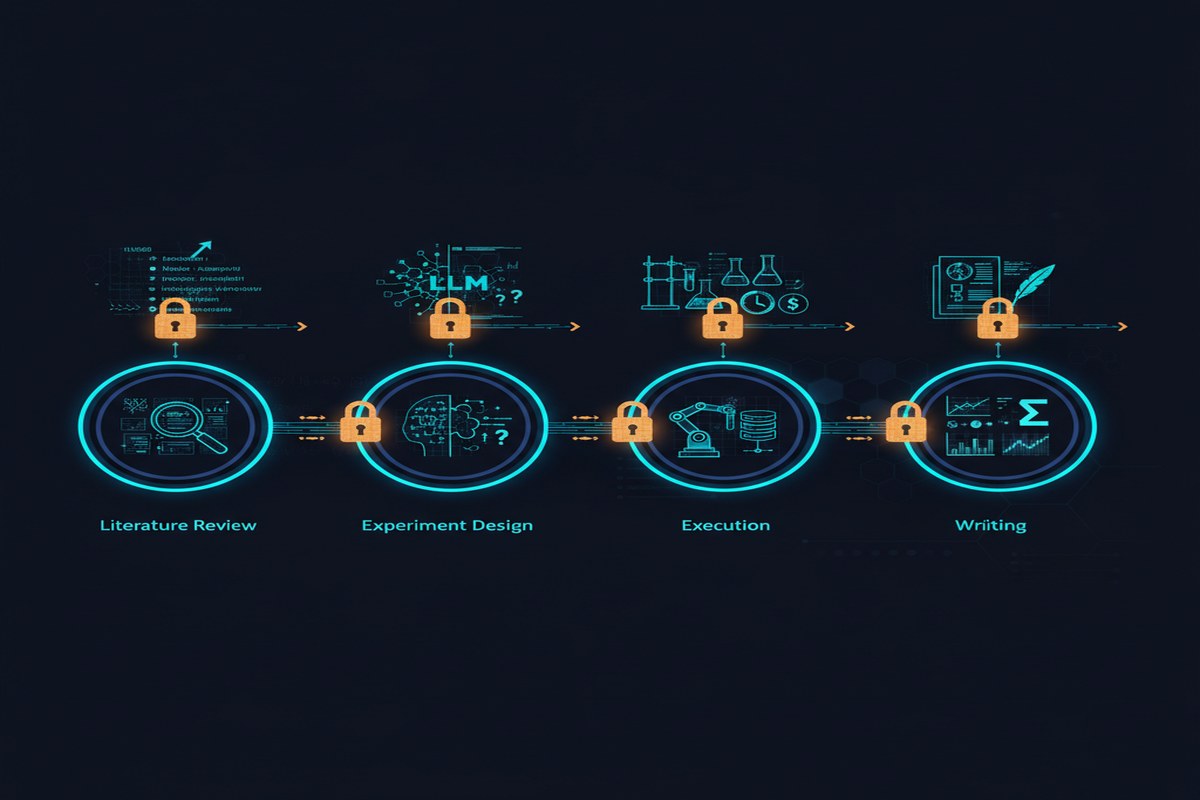

This paper asks a harder question: what would it look like to build LLM-based agents that orchestrate the full scientific discovery lifecycle? Not one step of the process, but all of them — hypothesis discovery, experimental design, execution, result analysis, and iterative refinement?

The paper's answer is a conceptual framework that is more concrete and more useful than most "AI for science" vision papers. It maps the problem domain, identifies the distinct roles that language agents play at different stages, and characterizes the constraints that make scientific agentic systems different from general-purpose LLM agents.

This is required reading for anyone building AI systems that interact with scientific workflows.

The Discovery Lifecycle: Four Stages, Four Agent Roles

The paper organizes the scientific discovery lifecycle into four stages and maps LLM agent capabilities to each:

Stage 1: Hypothesis Discovery

The hardest problem in science is often knowing what to look for. Hypothesis generation requires synthesizing existing literature, identifying gaps, and formulating testable claims. LLM agents provide value here through systematic literature review at scale, pattern recognition across domains (identifying analogies from adjacent fields), and constraint-guided hypothesis generation (proposing hypotheses consistent with known physical laws).

The paper correctly notes that this stage requires not just retrieval but reasoning about uncertainty — the agent needs to distinguish between "this hasn't been tried" (gap) and "this was tried and didn't work" (dead end), and to weight hypotheses by their probability of yielding novel, publishable results.

Stage 2: Experimental Design

Given a hypothesis, how do you design an experiment to test it? This stage requires translating abstract claims into concrete experimental protocols, specifying controls, estimating required sample sizes, and anticipating confounds.

LLM agents are useful here for protocol synthesis (leveraging knowledge of established experimental methods), constraint checking (does this protocol violate known physical constraints or safety requirements?), and resource optimization (can this experiment be designed to produce maximum information per unit cost?).

Stage 3: Execution and Instrumentation

This is where AI for science has historically been weakest. Running experiments requires interaction with physical instruments, laboratory automation systems, and simulation environments. The paper maps this as the "Physics" dimension of the framework title — the agent must interface not just with language and code but with physical reality.

The most interesting contribution here is the distinction between automated laboratories (robotic systems that can execute agent-specified protocols without human intervention) and hybrid workflows (agent-augmented human-executed experiments). The paper argues both are important and characterizes when each is appropriate.

Stage 4: Result Analysis and Iteration

After experiments produce data, the agent must analyze results, identify whether the hypothesis is supported, characterize unexpected findings, and propose refinements or follow-up experiments. This is the stage where current LLM capabilities are strongest — it's closest to standard data analysis and reasoning tasks.

flowchart TD

H[Hypothesis Discovery] -->|"Literature synthesis\nGap identification\nHypothesis generation"| D

D[Experimental Design] -->|"Protocol synthesis\nConstraint checking\nResource optimization"| E

E[Execution & Instrumentation] -->|"Automated lab control\nSimulation execution\nData collection"| A

A[Result Analysis] -->|"Statistical analysis\nPattern identification\nAnomaly detection"| R

R[Refinement & Iteration] -->|"Hypothesis update\nProtocol refinement\nNew hypotheses"| H

S[Human Scientist] -->|"Oversight\nDomain judgment\nEthics/safety review"| H

S --> D

S --> E

S --> A

S --> R

subgraph Agent Types

LA[Language Agent\nLiterature, reasoning]

CA[Code Agent\nAnalysis, simulation]

PA[Physical Agent\nLab automation]

end

LA --> H

LA --> D

CA --> E

CA --> A

PA --> E

The Orchestration Challenge

The paper's title includes "orchestrating" — and this is where the contribution gets most technically interesting. Scientific discovery isn't a linear pipeline; it's a loop with multiple feedback channels. Experimental results can invalidate hypotheses (sending the system back to Stage 1), expose design flaws (sending it back to Stage 2), or reveal instrumentation problems (sending it back to Stage 3).

Orchestrating agents across this non-linear process requires several capabilities that current LLM agents handle poorly:

Long-horizon memory: Scientific projects span months. An agent system needs to maintain coherent context about a project's history — prior hypotheses, failed experiments, promising leads — across sessions. Current LLM context windows, even at 1M tokens, don't solve this; the agent needs structured memory systems, not just longer contexts.

Uncertainty quantification: Scientific reasoning requires explicit reasoning about confidence. "This experiment might work" is not the same as "this experiment has a 70% probability of producing a statistically significant result given our prior." The paper argues that agents in scientific settings need calibrated uncertainty, not just point estimates.

Multi-agent coordination: Real research teams are multi-person. A full scientific agent system will involve multiple specialized agents — literature agents, experimental design agents, analysis agents — that need to coordinate without a central planner becoming a bottleneck.

Why This Matters

Scientific discovery is the highest-value application of AI capabilities. Not content generation, not customer service chatbots — discovery. If LLM-based agents can genuinely accelerate the rate at which humans generate new scientific knowledge, the compounding impact is civilization-scale.

The paper is important because it's honest about what this requires. Not just a better language model. Not just a longer context window. A carefully engineered system that handles the full lifecycle, integrates with physical infrastructure, maintains long-horizon memory, and supports human oversight at the right checkpoints.

The AI for science field has too many papers that demonstrate individual capabilities in isolation. This paper is notable for insisting on the integration problem — the hardest part is not any individual capability but the orchestration of multiple capabilities across the full workflow.

My Take

I've spent time thinking carefully about where AI can have asymmetric impact, and scientific discovery is at the top of my list. The bottleneck in most research fields is not intelligence — it's throughput. Highly trained scientists spend enormous fractions of their time on tasks that don't require their expertise: searching literature, running routine analyses, writing boilerplate experimental protocols. Agents that can handle these efficiently create leverage that multiplies human scientific capacity.

What I find valuable about this paper is that it doesn't pretend the current capability level is sufficient for autonomous science. It's honest about the gap between current LLM agent capabilities and what's required for genuine end-to-end scientific discovery. The framework is aspirational but grounded — it identifies specific, solvable engineering problems (long-horizon memory, uncertainty quantification, physical world integration) rather than waving hands at AGI.

The piece I think the paper underemphasizes is the epistemics problem. Scientific knowledge isn't uniformly reliable. Papers have methodological errors. Results don't replicate. Literature review by an LLM that treats every published paper as equally credible will produce agents that are confidently wrong in ways that are very hard to catch. Building agents that reason appropriately about source quality, replication status, and methodological rigor is harder than building agents that can read abstracts. I'd want to see more work on this layer.

I'm also watching the physical world integration piece closely. The automated laboratory infrastructure required for Stage 3 autonomy exists in only a handful of well-funded labs. Democratizing scientific agent systems will require this infrastructure to become more accessible — either through cloud robotics platforms, shared automated laboratory networks, or simulation environments that are validated against physical reality. This is an infrastructure problem that the AI community alone can't solve, but AI researchers should be pushing for it.

The trajectory this paper describes is the one I believe in. The question is timeline, and on that the paper is appropriately cautious.

arXiv:2510.09901 — read the full paper at arxiv.org/abs/2510.09901

Explore more from Dr. Jyothi