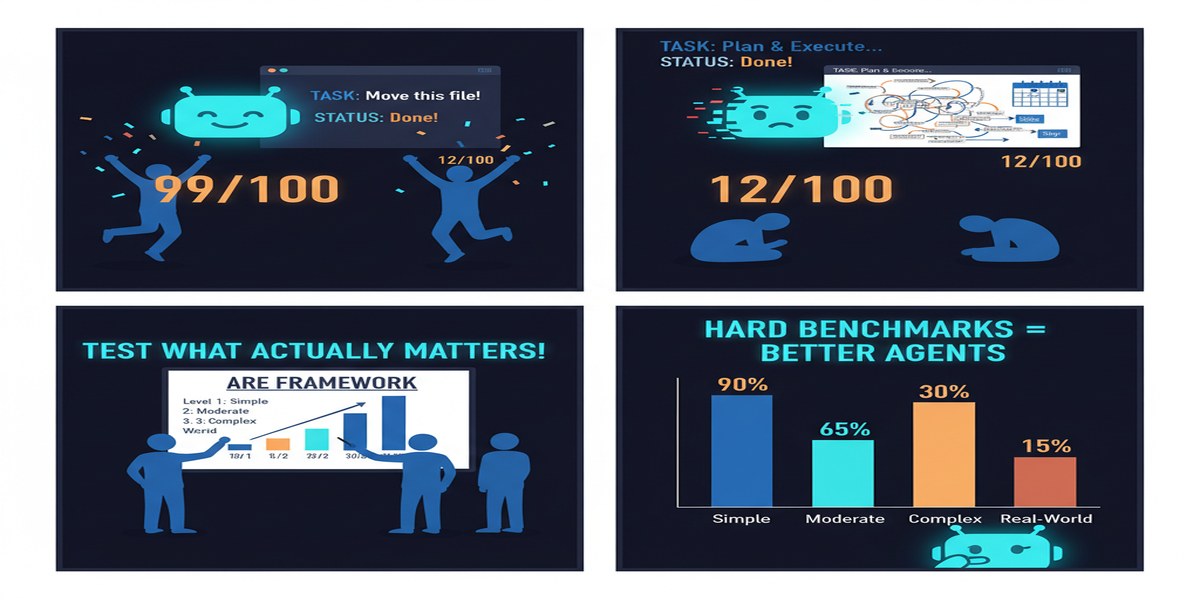

Agent benchmarking is caught in a paradox. The benchmarks that are realistic enough to be meaningful are expensive to scale. The benchmarks that are cheap to scale aren't realistic enough to be meaningful. WebArena and its descendants are valuable but limited to web-based tasks. Code benchmarks miss the messy complexity of real-world tasks. Task-specific benchmarks don't generalize. Synthetic benchmarks are gameable.

Meta's September 2025 paper introducing ARE — Agent Research Environments — is the most ambitious attempt I've seen to build evaluation infrastructure that is simultaneously scalable, diverse, and realistic. Whether it succeeds fully is a separate question from whether the attempt is worthwhile. It is.

Paper: ARE: Scaling Up Agent Environments and Evaluations Authors: Romain Froger, Pierre Andrews, Matteo Bettini, et al. (24 authors, Meta) Published: arXiv:2509.17158, September 2025 (revised December 2025)

What ARE Actually Is

ARE is not just a benchmark — it's a research platform for building and running agent evaluations. The distinction matters. A benchmark is a fixed test set. A platform is infrastructure that lets you create new test environments, integrate them with existing systems, and run evaluations reproducibly.

The platform provides:

- Environment abstractions for modeling arbitrary task environments (web interfaces, file systems, code environments, databases, APIs)

- Synthetic and real application integration — the ability to run agents against real applications or controlled simulations of them

- Agentic orchestration infrastructure — built-in support for multi-step agent evaluation

- Verification layers — the key innovation, which I'll discuss in detail

On top of this platform, the Meta team built Gaia2, a benchmark targeting complex tasks with three specific properties that distinguish it from existing benchmarks:

Ambiguity. Real-world tasks are often underspecified. Users don't tell you every constraint and assumption. Gaia2 tasks have deliberate ambiguity that requires agents to make reasonable inferences and handle edge cases gracefully.

Dynamic adaptation. Tasks in Gaia2 require agents to adapt mid-execution when conditions change. This tests robustness to partial failures and unexpected information.

Collaboration. Some Gaia2 tasks require multiple agents to coordinate to achieve the goal. This introduces the multi-agent coordination challenges into evaluation in a structured way.

The Reasoning-Efficiency Tradeoff

The finding that "stronger reasoning often comes at the cost of efficiency" is Gaia2's most important empirical result — and the one with the most direct practical implications.

What they mean by this: agents that use extended reasoning chains (chain-of-thought, tree-of-thought, reflection loops) perform better on complex tasks but consume dramatically more tokens and time than agents that operate more directly. The tradeoff is real and significant:

xychart-beta

title "Reasoning Depth vs. Efficiency Tradeoff"

x-axis ["Direct\nResponse", "Basic\nCoT", "Extended\nThinking", "Deep\nReflection"]

y-axis "Relative Score" 0 --> 100

bar [45, 62, 78, 88]

line [95, 60, 35, 15]

(Note: chart illustrates the qualitative tradeoff pattern — bars show task performance, line shows efficiency. Not exact Gaia2 numbers.)

The implication is that you can't optimize for both simultaneously. Agents that maximize task completion will be slow and expensive. Agents that maximize efficiency will leave task quality on the table.

This matters for deployment architecture. For high-stakes, low-frequency tasks (a complex legal document review, a major code refactoring decision), you want maximum reasoning depth. For high-frequency, latency-sensitive tasks (customer service, real-time recommendations), you want efficient, direct responses. You need different agent configurations for different task types, even if the underlying model is the same.

The Verification Layer Innovation

One of ARE's most important technical contributions is its verification architecture. Many agentic evaluations use LLM-as-judge approaches: a separate language model evaluates whether the agent's output is correct. This is convenient but introduces the judge's own failure modes.

ARE's verification layer is more sophisticated:

Deterministic verification for structured outputs. For tasks with ground-truth answers (database queries, code execution results, factual lookups), verifiers check correctness directly without involving another LLM.

Rubric-based verification for open-ended outputs. For tasks where "correct" is a range rather than a point, verifiers apply structured rubrics with multiple criteria. The rubrics are defined by humans, not learned by a model.

Composite verification for multi-step tasks. For long-horizon tasks, verification checks intermediate steps, not just final outputs. An agent that reaches the right answer through a wrong process gets a lower score than one that gets there correctly.

This makes Gaia2 results more trustworthy than evaluations that rely entirely on LLM-as-judge, and it establishes a model for how future agent evaluations should be structured.

Why This Matters

The evaluation problem is arguably the most important unsolved problem in agentic AI right now. You can't improve what you can't measure. And the current state of agent measurement is inadequate for production use cases.

Most practitioners use a combination of:

- Automated metrics on narrow tasks (pass/fail test cases)

- Human evaluation on sampled outputs (expensive and slow)

- Deployment monitoring (real users as evaluators, ethically questionable)

None of these scale well to complex multi-step agentic tasks. ARE's platform approach is the right architecture for solving this: build composable, reusable evaluation infrastructure that lets you define new task environments without rebuilding the evaluation pipeline from scratch.

The Gaia2 benchmark itself is valuable, but the platform is more valuable. If the community adopts ARE as evaluation infrastructure, we'll get much faster iteration on agent capabilities because the evaluation feedback loop will be faster and more reliable.

The Ambiguity Issue (And Why It's Important)

I want to highlight the ambiguity dimension of Gaia2 specifically, because it's the most underappreciated property.

Most agent benchmarks are precisely specified. The task description is clear, the success criterion is well-defined, the evaluation is objective. This is good for research reproducibility but bad for measuring real-world capability.

Real tasks are ambiguous. "Clean up this codebase" could mean a hundred different things. "Research our competitors" has no clear stopping criterion. "Write a proposal for this project" requires inferring unstated preferences and constraints.

Agents that perform well on precisely-specified benchmarks often fail on ambiguous real-world tasks because they were implicitly trained to find the "right answer" when there isn't one — to execute confidently on a specification rather than to reason under uncertainty about what the specification should be.

Gaia2's deliberate ambiguity is measuring a different capability: not "can the agent execute a well-defined task?" but "can the agent reason about what a well-defined task would even look like and then execute it?" This is closer to what enterprise users actually need.

My Take

ARE and Gaia2 are genuine contributions. The platform infrastructure is well-designed, the benchmark properties (ambiguity, dynamic adaptation, collaboration) are the right things to measure, and the verification architecture is more principled than most alternatives.

My criticisms: First, Meta built this — which means the incentives to make it a genuine research platform versus a showcase for Meta's own models need to be watched. The paper is careful to present results in ways that don't look like marketing, but the long-term governance of an evaluation platform created by a major AI lab is legitimately tricky.

Second, the reasoning-efficiency finding needs more investigation. The paper reports the tradeoff but doesn't explain it mechanistically. Is it fundamental to how reasoning models work? Is it an artifact of current training methods? Could future models achieve high reasoning depth without the efficiency penalty? These are important questions for the field.

Third, 24 authors from one organization makes this inherently a proprietary research output. The platform and benchmark need to be genuinely open and independently validatable before the research community can rely on them. The current arXiv preprint doesn't give enough detail about reproducibility.

But the direction is right. We need scalable, realistic agent evaluation infrastructure, and ARE is the most serious attempt at building it. If the platform lives up to its promise, it will accelerate progress across the entire agentic AI field.

Further Reading

- arXiv: 2509.17158

- Related: Towards a Science of Scaling Agent Systems (2512.08296) for complementary scaling analysis

- Related: SWE-Bench Pro (2509.16941) for domain-specific (code) evaluation

- Related: Evaluation and Benchmarking of LLM Agents survey (2507.21504)