Paper: Beyond a Million Tokens: Benchmarking and Enhancing Long-Term Memory in LLMs arXiv: 2510.27246 | October 2025 Authors: Multiple institutions

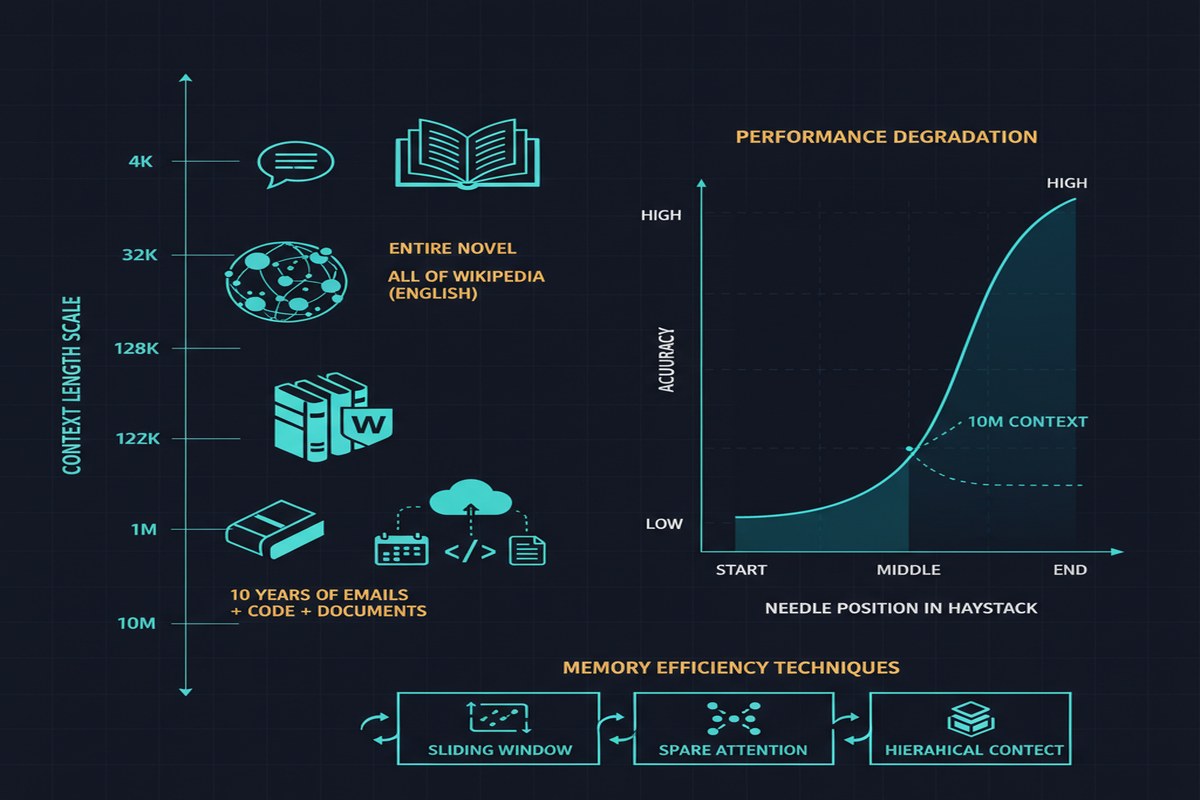

We are in the middle of a marketing arms race on context window size. Claude 3 offered 200K tokens. GPT-4-Turbo hit 128K. Gemini 2.0 pushed to 1M. Then Llama 4 landed with a 10M token context window, and the press releases were rapturous.

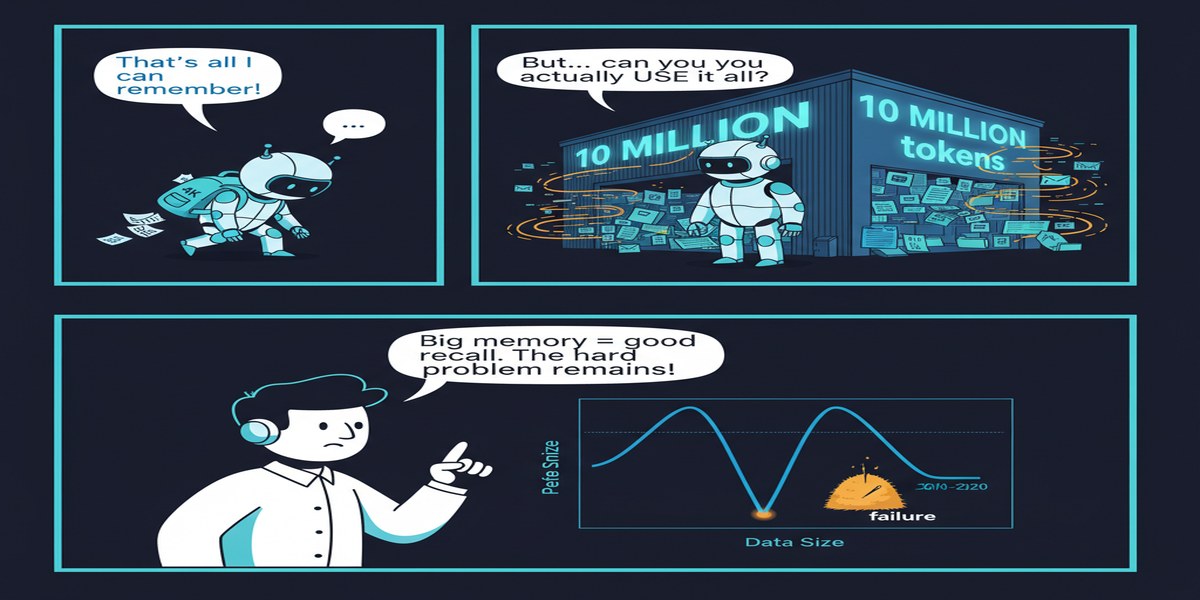

Here is the question nobody was asking loudly enough: can any of these models actually use that context?

This paper attempts to answer that question rigorously. It builds a benchmark specifically designed for long-context evaluation up to 10M tokens, introduces tasks that are genuinely hard at scale — not just needle-in-a-haystack retrieval — and documents the uncomfortable reality of how current models perform when you put them at extreme context lengths.

The Gap Between Context Windows and Context Utilization

Let me frame the problem clearly. Having a 1M-token context window means the model can accept 1M tokens of input. It does not mean the model reasons effectively over that input.

The empirical record shows consistent performance degradation as context length increases:

- Relevant information in the middle of a long context is retrieved less reliably than information at the edges ("lost in the middle")

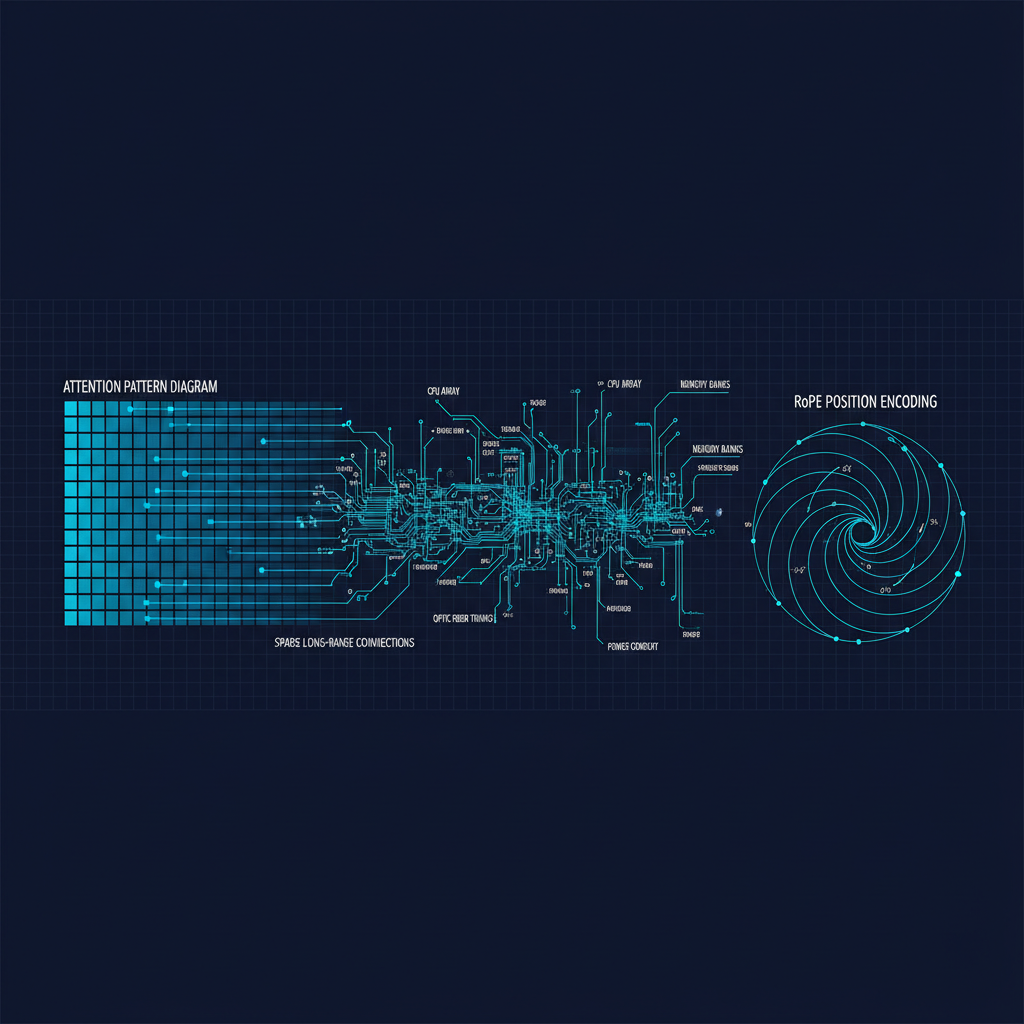

- Attention weights become increasingly diluted across long sequences

- Long-range dependencies — connecting a fact mentioned early in a document to a conclusion needed late — are harder to maintain

- Instruction following degrades when instructions appear far from the relevant context

These problems have been documented extensively in contexts up to 128K tokens. This paper extends the analysis to 10M, where the failures are more severe and more varied.

What the Benchmark Introduces

Most existing long-context benchmarks are essentially needle-in-a-haystack tests: hide a specific fact somewhere in a long document, ask the model to retrieve it. These tests are useful but not sufficient. Real-world long-context tasks require more than retrieval — they require reasoning over distributed information.

This paper introduces three genuinely harder task categories:

flowchart LR

A[10M Token Benchmark Suite] --> B[Classic Retrieval]

A --> C[Contradiction Resolution]

A --> D[Event Ordering]

A --> E[Instruction Following at Scale]

B --> B1["Find specific fact buried in sequence"]

C --> C1["Identify when earlier statement contradicts later one"]

D --> D1["Order events across a 10M token narrative"]

E --> E1["Follow early instructions when later context conflicts"]

style C fill:#ff6b6b,color:#fff

style D fill:#ff6b6b,color:#fff

style E fill:#ff6b6b,color:#fff

Contradiction Resolution: Given a document where earlier and later passages conflict, identify the contradiction. This requires the model to maintain a mental model of what was asserted early in the sequence and flag when later content violates it. At 10M tokens, this tests whether attention can bridge across tens of thousands of intervening tokens.

Event Ordering: Given a narrative with events spread across 10M tokens, reconstruct the temporal ordering. This is a basic task for humans reading a document over time — we naturally track sequence. LLMs doing it in a single forward pass must maintain temporal state across extreme distances in the token stream.

Long-Range Instruction Following: Instructions given at position 100 in a 10M-token sequence should still be followed when the model generates a response at position 10M. If later context explicitly contradicts those instructions, the model should recognize and navigate the conflict.

Results: The Brutal Truth

Current frontier models — including Claude, GPT-4o, and Gemini — show significant performance degradation on all three hard tasks as context length increases from 128K toward 10M tokens.

The specific degradation patterns are instructive:

- Contradiction resolution degrades fastest, suggesting that maintaining a coherent "world model" across very long contexts is the hardest cognitive challenge

- Event ordering is more robust but still degrades, especially when events are spread beyond 500K tokens apart

- Retrieval remains the most robust capability but also shows degradation past 2M tokens

Llama 4, designed for 10M contexts, shows better relative performance than models not designed for that scale — but still exhibits meaningful degradation on the harder tasks.

The paper also benchmarks several enhancement techniques:

- Hierarchical summarization: periodically compress earlier context into a summary, reducing effective context length

- Retrieval augmentation: index the long document externally, retrieve relevant chunks per query

- Memory-augmented architectures: external memory banks that persist state across chunks

No single technique fully closes the gap, but hierarchical summarization combined with selective retrieval produces the most consistent improvements.

Why This Matters

1. The 10M context window is partly marketing. That's a strong statement, but the evidence supports it. Raw context window size means little if performance on hard tasks degrades past 1M tokens. Until models are demonstrated to perform reliably on contradiction resolution and event ordering at 10M scales, the 10M context claim should be understood as "accepts 10M tokens" not "reasons effectively over 10M tokens."

2. New benchmarks shape model development. Once this benchmark exists and is widely adopted, model developers will optimize for it. The history of MMLU, HumanEval, and SWE-bench shows that benchmarks become training targets. Expect 2026 model releases to be evaluated on 10M-token tasks — which should improve real long-context capability.

3. The enhancement techniques point to a hybrid future. The finding that hierarchical summarization + selective retrieval outperforms raw long-context inference suggests the winning architecture for long documents may not be a single huge context window, but a hybrid: a medium-context model (128K-512K) with intelligent retrieval and compression of longer inputs. This aligns with how Recursive Language Models (see my post on RLMs) approach the problem.

4. Agentic applications need this research. Long-context reasoning is not academic. Agentic systems that operate on enterprise codebases, legal documents, or long-running task histories face exactly these challenges. We need models that maintain coherent state over millions of tokens to build reliable autonomous agents.

My Take

I appreciate this paper for its honesty. It does not celebrate the existence of 10M-token context windows — it interrogates whether they work. That kind of critical benchmarking is exactly what the field needs, and I wish more papers were written in this spirit.

My view on the findings: the degradation on contradiction resolution is the most troubling result. Detecting when you've contradicted yourself is a fundamental reasoning requirement. If models can't do this reliably at 1M+ tokens, then trust in model-generated long-context summaries and analyses should be limited. I have seen enterprise teams discover this the hard way — Claude or GPT-4 producing confident summaries of long documents that silently contradict the source material.

The practical upshot is that we should not design production systems around the assumption that a model with a large context window will reliably use all of it. Design for the effective context limit, not the stated limit. Use retrieval augmentation, chunking, and hierarchical compression for inputs beyond 128K-256K tokens. The native long-context models will improve — this benchmark will help them get there — but right now, the effective reliable limit is shorter than the marketed limit.

The researchers did the unglamorous work of building proper evaluation infrastructure before the hype got too far ahead of the reality. More papers should do this.

arXiv:2510.27246 — read the full paper at arxiv.org/abs/2510.27246