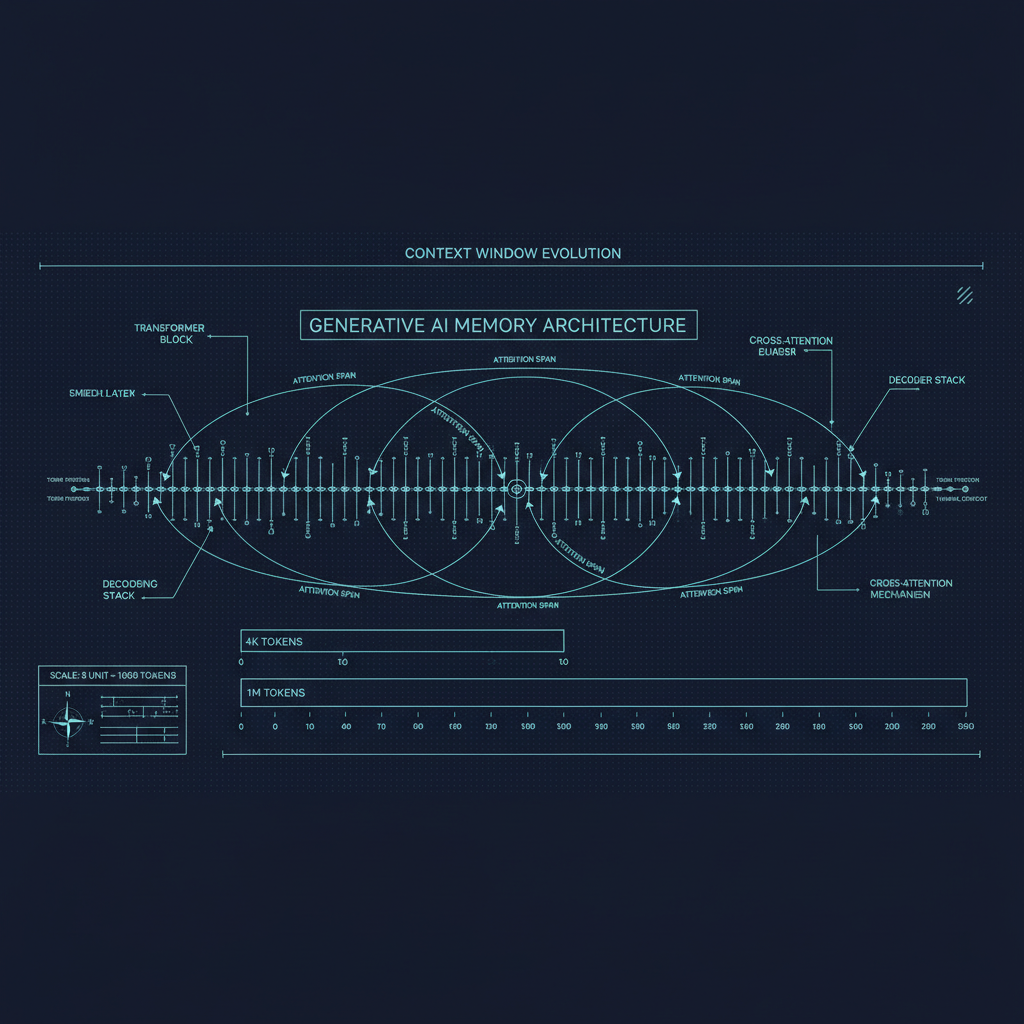

The context window arms race of the last two years has been remarkable. From GPT-3's 2,048 tokens to Claude's 200K, Gemini 1.5's 1 million, and experimental systems pushing further still, the numbers have grown at a pace that would have sounded implausible in 2022. But a growing body of research has forced a more uncomfortable question than "how large can context windows get?": namely, "do models actually use long contexts well?"

The answer turns out to be significantly more nuanced than the marketing suggests. Models with nominally large context windows exhibit systematic patterns of what they attend to, what they remember, and where they fail. Understanding these patterns is essential for anyone building applications that depend on long-context reasoning — and for anyone trying to understand what "long-context capability" actually means.

The Lost in the Middle Problem

The most clearly documented failure mode for long-context models is what Liu et al. (2023) called "Lost in the Middle": models are substantially better at retrieving and using information from the beginning and end of long contexts than from the middle.

The paper's experimental design is elegant. The model receives a question together with a set of documents, only one of which contains the answer; the rest are distractors. The position of the answer-bearing document is varied systematically, and accuracy (how often the model answers correctly using that document) is plotted as a function of its position.

The resulting U-shaped performance curve — high at position 0 (beginning of context), declining sharply through the middle, and partially recovering at the end — was consistent across multiple models and context lengths. For some models and conditions, accuracy dropped by 20-30 percentage points between the best and worst positions.

Why does this happen? The causes are still debated, but a common explanation runs as follows. Attention weights are higher for keys whose dot product with the current query is large, and positional encodings such as RoPE tend to favor nearby tokens. Under the causal attention mask, the earliest tokens are visible to every later position, so they are attended to more often and may develop stronger representations across layers. The end of the context benefits from recency: the final tokens sit closest to the position where the answer is generated. The middle has neither advantage.
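To make the positional part of this explanation concrete, here is a toy calculation in Python; it is not a model of any real transformer. It assumes every content score is equal, so only position matters. With the causal mask alone, summing the attention each key position receives over all queries shows the earliest positions collecting the most. Adding a hypothetical `distance_penalty` that lowers logits linearly with distance (an ALiBi-style stand-in for positional decay, not how RoPE actually works) makes the final query, the one at the generation step, favor the most recent tokens.

```python
import math


def softmax(logits):
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(n, distance_penalty=0.0):
    """Return an n-row attention matrix under a causal mask.

    Row i is query i's distribution over keys 0..i. All content scores are
    equal (zero), so the only signal is the optional linear distance penalty.
    """
    rows = []
    for i in range(n):
        logits = [-distance_penalty * (i - j) for j in range(i + 1)]
        rows.append(softmax(logits))
    return rows


def attention_received(rows):
    # Total attention each key position receives, summed over all queries.
    received = [0.0] * len(rows)
    for row in rows:
        for j, weight in enumerate(row):
            received[j] += weight
    return received


if __name__ == "__main__":
    n = 8
    flat = causal_attention(n)
    print("received, mask only:", [round(x, 2) for x in attention_received(flat)])
    decayed = causal_attention(n, distance_penalty=0.5)
    print("final query, with decay:", [round(w, 2) for w in decayed[-1]])
```

With the mask alone and eight positions, position 0 receives 1 + 1/2 + ... + 1/8 (about 2.72) units of attention in total while the last position receives only 1/8; with the penalty, the final query's weights rise steadily toward the end of the sequence.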

This does not appear to be a flaw in any specific model; it seems to follow from how autoregressive transformer attention behaves over long sequences. The open question is how far training has mitigated it, and whether that mitigation is sufficient for the applications being built.

Needle in a Haystack Evaluation

The "needle in a haystack" evaluation has become a standard test of long-context recall. A short, specific piece of information (the "needle") is inserted into a long block of filler text (the "haystack"), and the model is asked to retrieve it. Two variables are swept: depth (where in the haystack the needle sits) and context length (how long the haystack is).
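A minimal sketch of how such a test grid can be generated, in Python. It measures haystack length in sentences rather than tokens and scores by a case-insensitive substring match; both are simplifying assumptions (real harnesses count tokens with the target model's tokenizer and often use stricter or model-graded scoring). The function names are hypothetical, and the call to the model itself is left out.

```python
from itertools import product


def build_haystack(filler, needle, n_sentences, depth):
    """Place `needle` at fractional `depth` (0.0 = start, 1.0 = end) inside a
    haystack of `n_sentences` filler sentences, cycling the filler if needed."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    haystack = [filler[i % len(filler)] for i in range(n_sentences)]
    index = round(depth * n_sentences)
    return " ".join(haystack[:index] + [needle] + haystack[index:])


def evaluation_grid(lengths, depths):
    # Every (context length, depth) combination to evaluate.
    return list(product(lengths, depths))


def needle_found(response, expected):
    # Crude scoring: did the expected fact appear in the response?
    return expected.lower() in response.lower()


if __name__ == "__main__":
    filler = ["The sky was grey.", "Trains ran on time.", "Markets were quiet."]
    needle = "The secret code is 7319."
    for n, depth in evaluation_grid([10, 100], [0.0, 0.5, 1.0]):
        prompt = build_haystack(filler, needle, n, depth)
        prompt += "\n\nWhat is the secret code?"
        # Send `prompt` to the model under test, then score the reply with
        # needle_found(reply, "7319") and record the result by (n, depth).
```

Plotting the recorded hit rate as a depth-by-length heatmap gives the familiar needle-in-a-haystack chart.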

Published needle-in-a-haystack results for major models, a mix of vendor-reported and independent evaluations, show:

Claude 3 and Claude 3.5 demonstrate near-perfect recall across the full 200K context window, with consistent performance at all depths and lengths; Anthropic reported recall above 99% for Claude 3 Opus. This suggests substantial engineering investment specifically in long-context training.

Gemini 1.5 Pro also shows strong and consistent performance across its 1M-token context window on single-needle retrieval, as reported in Google's Gemini 1.5 technical report.

GPT-4 Turbo (with 128K context) shows some performance degradation in the middle of very long contexts, though much improved from earlier versions.

The caveat to all of these results is that single-needle retrieval is a much simpler task than real long-context use cases. Retrieving one fact from a long document is different from synthesizing multiple pieces of information distributed throughout a document, tracking an argument across many pages, or answering questions that require understanding how different parts of a document relate to each other.

When evaluations move to more complex tasks — multi-hop reasoning across a long document, identifying inconsistencies distributed through a text, or tracking narrative threads through a novel — performance gaps are much more pronounced and the "lost in the middle" problem re-emerges even in models that pass simple needle-in-a-haystack tests.

Attention Patterns and What They Reveal

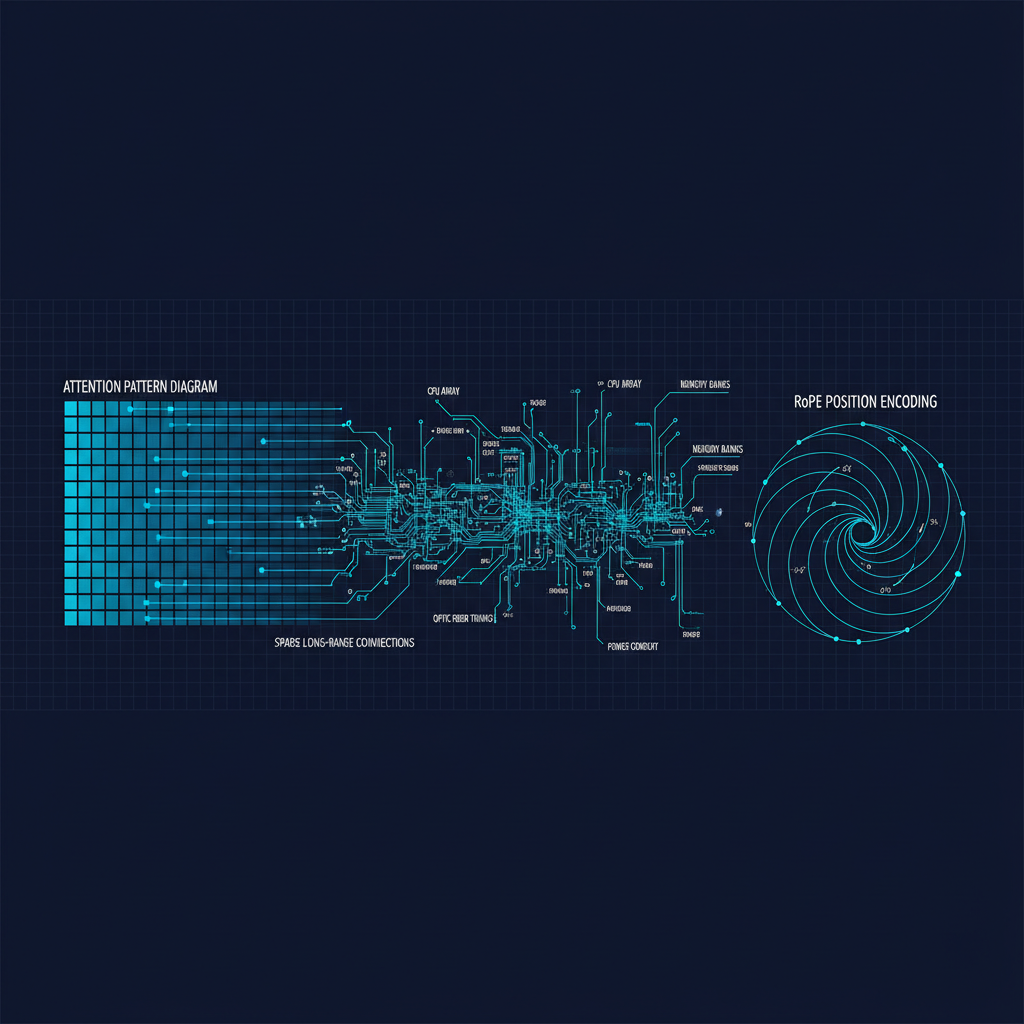

Mechanistic analysis of attention patterns in long-context models reveals consistent structure. Models do not attend uniformly across long contexts. They exhibit "attention sinks" at special tokens (particularly the first token and separator tokens), a recency bias toward nearby tokens, and content-dependent spikes where tokens relevant to the current query draw strong attention.

The "attention sink" phenomenon, described by Xiao et al. (2023) in their work on StreamingLLM, shows that many attention heads in trained models assign disproportionate weight to the very first token in a sequence, regardless of its content. Because softmax forces each head's attention weights to sum to one, a head with nothing particularly relevant to attend to must still place that probability mass somewhere; the first token, which every later position can see, appears to have learned to absorb it. One practical consequence shown in StreamingLLM: a sliding-window KV cache that evicts the first few tokens degrades sharply, while keeping a handful of initial "sink" tokens alongside the recent window restores stable generation.
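The cache policy StreamingLLM builds on this can be sketched in a few lines of Python: keep the first few tokens as attention sinks plus a rolling window of the most recent tokens, evicting everything in between. The function names and default sizes below are illustrative assumptions (the paper reports that around four sink tokens suffice), and the sketch tracks only which positions are kept, not the cached tensors or how positions are re-indexed inside the cache.

```python
def streaming_cache_positions(seq_len, n_sink=4, window=1020):
    """Token positions a StreamingLLM-style KV cache retains.

    Keeps the first `n_sink` tokens (the attention sinks) plus the most recent
    `window` tokens; everything in between has been evicted.
    """
    if seq_len <= n_sink + window:
        return list(range(seq_len))  # nothing needs evicting yet
    sinks = list(range(n_sink))
    recent = list(range(seq_len - window, seq_len))
    return sinks + recent


def naive_window_positions(seq_len, window=1024):
    # Plain sliding window for comparison: the sink tokens get evicted.
    return list(range(max(0, seq_len - window), seq_len))
```

With `seq_len=5000`, the streaming policy keeps positions 0-3 plus 3980-4999 (1,024 entries, the same budget as the naive window), while the naive window drops position 0 entirely.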

Understanding these patterns has practical implications, though they should be drawn carefully. The attention sink absorbs attention regardless of what the first token says, so it does not by itself make an instruction at position 0 more influential; the stronger reason to put critical instructions early is the primacy effect seen in the lost-in-the-middle results. Recency bias means that the most recently provided context tends to have the strongest immediate influence. Structuring prompts to take advantage of these biases, with critical instructions at the beginning and the most relevant documents immediately before the query, can meaningfully improve performance without any model changes.
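As a sketch of that layout in Python: instructions go first, retrieved documents are sorted so relevance increases toward the end, and the query comes last, directly after the most relevant document. The relevance scores are assumed to come from your retriever, and the `[Document i]` labels and `Question:` prefix are arbitrary choices, not a format any model requires.

```python
def build_prompt(instructions, scored_docs, query):
    """Assemble a long-context prompt.

    scored_docs: list of (relevance, text) pairs, higher relevance = better.
    Documents are sorted ascending, so the most relevant one sits immediately
    before the query and benefits from recency.
    """
    ordered = sorted(scored_docs, key=lambda pair: pair[0])
    parts = [instructions.strip()]
    for i, (_, text) in enumerate(ordered, start=1):
        parts.append(f"[Document {i}]\n{text.strip()}")
    parts.append(f"Question: {query.strip()}")
    return "\n\n".join(parts)
```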

Positional Encoding and Length Generalization

A fundamental challenge for long-context models is that position encodings learned on sequences up to length N tend to degrade when applied to longer sequences. The model never saw positions N+1, N+2, and beyond during training; how should it behave there?

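Absolute positional encodings generalize poorly. Learned absolute embeddings, as in GPT-2 and BERT, simply have no entry for positions past the training maximum; the sinusoidal encodings of the original Transformer can be computed for any position, but models trained with them still extrapolate badly. Either way, positions beyond the training length are out of distribution and the model's behavior is unpredictable.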

RoPE (Rotary Position Embedding), now standard in Llama, Mistral, and many other models, encodes relative position directly and is easier to extend, but applied unmodified it still degrades sharply beyond the training length. The YaRN approach to extending RoPE showed that by adjusting the frequency parameters used in RoPE, you can extend a model's effective context length well beyond its training length with relatively little additional fine-tuning. Llama 3.1's 128K context window was likewise built by extending a model originally pretrained at shorter contexts.

LongRoPE took this further, searching for non-uniform rescaling factors for different RoPE dimensions (and interpolating the earliest token positions less) to extend context to 2M tokens for certain model families.
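A minimal Python sketch shows what "adjusting the frequency parameters" means mechanically. RoPE rotates each pair of query and key dimensions by an angle proportional to position, so their dot product depends only on relative position. Dividing positions by a uniform factor is plain linear position interpolation (a simpler precursor to YaRN, not YaRN itself, which rescales frequencies non-uniformly and also adjusts attention temperature); passing a different factor per dimension pair mimics the kind of non-uniform rescaling LongRoPE searches for, though the search itself is not shown. The base of 10000 follows the original RoPE formulation; specific models use other bases.

```python
import math


def rope(vec, pos, scales=None, base=10000.0):
    """Apply rotary position embedding to `vec` at position `pos`.

    Dimension pair i is rotated by (pos / scales[i]) * base**(-2i/d).
    scales=None is plain RoPE; a uniform list such as [4.0] * (d // 2) is
    linear position interpolation; a non-uniform list rescales each
    frequency differently.
    """
    d = len(vec)
    if d % 2:
        raise ValueError("RoPE needs an even dimension")
    if scales is None:
        scales = [1.0] * (d // 2)
    out = []
    for i in range(d // 2):
        theta = base ** (-2 * i / d)
        angle = (pos / scales[i]) * theta
        c, s = math.cos(angle), math.sin(angle)
        x, y = vec[2 * i], vec[2 * i + 1]
        out += [x * c - y * s, x * s + y * c]
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    q, k = [0.3, -1.2, 0.8, 0.5], [1.0, 0.4, -0.7, 0.2]
    # Same relative offset (7) at different absolute positions: same score.
    print(dot(rope(q, 10), rope(k, 3)), dot(rope(q, 107), rope(k, 100)))
    # With a 4x interpolation factor, position 4096 is rotated like position 1024.
    print(rope(q, 4096, scales=[4.0, 4.0]) == rope(q, 1024))
```

The second check is the core idea of interpolation: positions beyond the trained range are mapped back onto angles the model has already seen, at the cost of finer positional resolution.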

The fundamental issue is that length generalization is a form of out-of-distribution generalization, which neural networks handle imperfectly. Training explicitly at long contexts — as Anthropic and Google appear to do for their flagship models — is the most reliable approach, but it is computationally expensive.

The KV Cache Bottleneck

Processing long contexts is not just a model capability question; it is a systems engineering challenge. The key-value (KV) cache stores the attention keys and values for all previous tokens, allowing efficient incremental generation. Its size grows linearly with context length, with the number of layers, and with the number and dimension of the key-value heads.

For a large model (say, 70B parameters with 80 layers and 64 attention heads of dimension 128) using standard multi-head attention, the float16 KV cache for a single 128K-token sequence comes to roughly 340GB. Serving this efficiently requires careful memory management, multiple high-memory accelerators, and often compression strategies.

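Multi-query attention (MQA) and grouped-query attention (GQA) address this by sharing key and value projections across multiple query heads, which shrinks the KV cache by the ratio of query heads to key-value heads. GQA typically gives reductions of around 4-8x with modest quality impact (Llama 2 70B, for example, shares 8 key-value heads among its 64 query heads); MQA, with a single key-value head, saves more memory but tends to cost more quality. Most modern long-context models use GQA.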
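The arithmetic behind the 70B example is simple enough to check in a few lines of Python. The configuration assumes a head dimension of 128, and 8 key-value heads for the GQA case; the function counts only the cache itself, not model weights or activations.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   bytes_per_elem=2, batch_size=1):
    # Factor 2: every layer stores one key and one value vector per KV head
    # per token.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem * batch_size


GIB = 2 ** 30

if __name__ == "__main__":
    ctx = 128 * 1024
    mha = kv_cache_bytes(n_layers=80, n_kv_heads=64, head_dim=128, seq_len=ctx)
    gqa = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128, seq_len=ctx)
    print(f"MHA: {mha / GIB:.0f} GiB, GQA: {gqa / GIB:.0f} GiB")
```

This gives 320 GiB (about 344GB) for full multi-head attention and 40 GiB with 8 shared key-value heads, per sequence, in float16. Setting `bytes_per_elem=1` models an INT8 cache and halves both figures.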

KV cache quantization, which compresses the cached keys and values from float16 to INT8 or INT4, can further reduce memory requirements by roughly 2x or 4x respectively (plus a small overhead for scale factors). Research has shown that an INT8 KV cache is feasible with minimal quality loss, and that INT4 can be acceptable for many applications with careful implementation.
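The simplest version of this idea is symmetric absmax quantization with one scale factor per vector, sketched below in Python. Real KV-cache quantizers differ in granularity (per token, per channel, or per group) and often in rounding details; this sketch only shows the core round trip and its error bound of half a quantization step.

```python
def quantize_int8(values):
    """Symmetric absmax quantization of one vector to integers in [-127, 127].

    Returns the integer codes and the float scale needed to reconstruct them.
    """
    scale = max(abs(v) for v in values) / 127
    if scale == 0.0:
        scale = 1.0  # all-zero vector: any scale reconstructs it exactly
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    return [c * scale for c in codes]


if __name__ == "__main__":
    key = [0.51, -1.27, 0.03, 0.88]
    codes, scale = quantize_int8(key)
    restored = dequantize_int8(codes, scale)
    # Each element is off by at most scale / 2 (half a quantization step).
    print(codes, max(abs(a - b) for a, b in zip(key, restored)))
```

Each cached element then takes one byte instead of two, plus one stored scale per vector.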

PagedAttention (used in vLLM) applies virtual-memory paging to KV cache management: the cache is stored in fixed-size blocks that need not be contiguous, which nearly eliminates fragmentation and enables higher concurrency when serving long-context requests.
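A toy Python model of the bookkeeping involved: each sequence owns a block table mapping its logical blocks to physical blocks drawn from a shared free pool, so its cache can be scattered anywhere in memory and at most one partially filled block per sequence is wasted. The class and method names are hypothetical and bear no relation to vLLM's actual internals; real implementations also handle copy-on-write sharing, preemption, and GPU memory, none of which appear here.

```python
class PagedKVAllocator:
    """Toy block-table allocator in the spirit of PagedAttention."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free = list(range(num_blocks))  # shared pool of physical blocks
        self.tables = {}   # seq_id -> list of physical block ids, in order
        self.lengths = {}  # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve a slot for one more token; return (block_id, offset)."""
        table = self.tables.setdefault(seq_id, [])
        n = self.lengths.get(seq_id, 0)
        if n % self.block_size == 0:  # last block is full, or none exists yet
            if not self.free:
                raise MemoryError("no free KV blocks")
            table.append(self.free.pop())
        self.lengths[seq_id] = n + 1
        return table[n // self.block_size], n % self.block_size

    def release(self, seq_id):
        # A finished sequence returns all of its blocks to the pool.
        self.free.extend(self.tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)
```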

What This Means for Applications

For practitioners building on long-context models, several practical implications follow from these findings.

Needle-in-a-haystack performance does not guarantee task performance. Evaluate your specific task on your specific document distribution at your expected context lengths. Do not assume that a model that passes simple retrieval tests will handle complex multi-hop reasoning over the same length of text.

Document ordering matters. Based on the lost-in-the-middle research, placing the most important information at the beginning or end of a long context tends to improve performance. If you have a large set of retrieved documents to provide, put the most relevant ones first and last.
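Given documents already sorted by relevance, one way to put the strongest ones at both edges is to deal them alternately to the front and the back, which pushes the weakest toward the middle. A minimal sketch in Python, assuming the ranking comes from your retriever:

```python
def edges_first(ranked_docs):
    """Reorder docs (most relevant first) so the top-ranked documents sit at
    the start and end of the context and the weakest land in the middle."""
    front, back = [], []
    for i, doc in enumerate(ranked_docs):
        # Even ranks fill from the front, odd ranks from the back.
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]


if __name__ == "__main__":
    print(edges_first(["d1", "d2", "d3", "d4", "d5"]))
```

For five documents ranked d1 to d5 this yields d1, d3, d5, d4, d2: the top two at the edges and the weakest in the center.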

Chunking and structure help. Explicitly breaking long documents into labeled sections, providing a table of contents, or summarizing key points before presenting the full document can help models navigate long contexts more effectively.
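One way to apply this, sketched in Python: number the sections, list them in a table of contents up front, and repeat the number in each section header so the model (and your follow-up questions) can refer to "Section 3" unambiguously. The plain-text heading format is an arbitrary choice.

```python
def structure_document(title, sections):
    """sections: list of (heading, body) pairs. Returns the document with a
    numbered table of contents followed by labeled sections."""
    lines = [title, "", "Contents:"]
    for i, (heading, _) in enumerate(sections, start=1):
        lines.append(f"  {i}. {heading}")
    for i, (heading, body) in enumerate(sections, start=1):
        lines += ["", f"Section {i}: {heading}", body.strip()]
    return "\n".join(lines)
```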

For reliability, shorter is better when sufficient. If you can answer a user's question with 20K tokens of context rather than 200K, the shorter context will generally be more reliable. Long context capability is valuable when the task genuinely requires it; it should not be used by default when shorter context would work.
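A minimal sketch of "shorter when sufficient" in Python: greedily keep the most relevant chunks that fit a token budget. The whitespace word count used as `approx_tokens` is a stand-in assumption; in practice you would count with the target model's tokenizer, and the relevance scores are assumed to come from your retriever.

```python
def select_within_budget(chunks, budget_tokens, count_tokens):
    """chunks: list of (relevance, text). Greedily keep the highest-relevance
    chunks whose combined token count stays within budget_tokens."""
    chosen, used = [], 0
    for _, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = count_tokens(text)
        if used + cost <= budget_tokens:
            chosen.append(text)
            used += cost
    return chosen, used


def approx_tokens(text):
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())
```

A chunk that does not fit is skipped rather than ending the loop, so a smaller, less relevant chunk can still use the remaining budget.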

Monitor costs carefully. Long-context requests are substantially more expensive than standard requests, both in API costs and in processing time. Ensure that your application's context length is justified by task requirements.

The Open Research Questions

The most important open questions in long-context modeling are not architectural but cognitive: what does it mean for a model to "understand" a long document, and how do we evaluate that?

Simple retrieval tests show whether a model can find information. Synthesis tasks (summarizing a long document) test whether it can compress information. But many high-value long-context applications require something more complex: tracking an evolving argument, understanding how different parts of a text are in tension with each other, or maintaining coherent understanding of a complex system described across many pages.

We lack good evaluation frameworks for these higher-order long-context tasks, which makes it hard to measure progress or identify which models are genuinely better rather than just better at the specific benchmarks we happen to use. Developing such frameworks is important work.

The direction the field is moving — toward hybrid architectures that combine attention-based context processing with persistent memory systems — suggests that the context window as currently implemented may not be the final answer to the long-context problem. But as an available capability today, it has already unlocked applications that were not possible before, and understanding how to use it well is immediately valuable.