The original GPT-3 had a context window of 2,048 tokens — roughly 1,500 words. At the time, this felt expansive. A full article, a code file, a short story: it all fit. But as language models became more capable and users pushed them toward harder tasks, the limitations became apparent. You could not fit a whole codebase. You could not provide an entire book. You could not have a very long conversation without losing the beginning.

Within about four years, Claude's 200K context window could fit a full novel. Gemini 1.5 Pro hit one million tokens, and Google later expanded it to two million. These are not incremental improvements. They represent a qualitative change in what language models can do and how we should deploy them. Understanding how this happened, and what it really means, requires looking at both the technical architecture and the practical reality.

Why Context Windows Were Small to Begin With

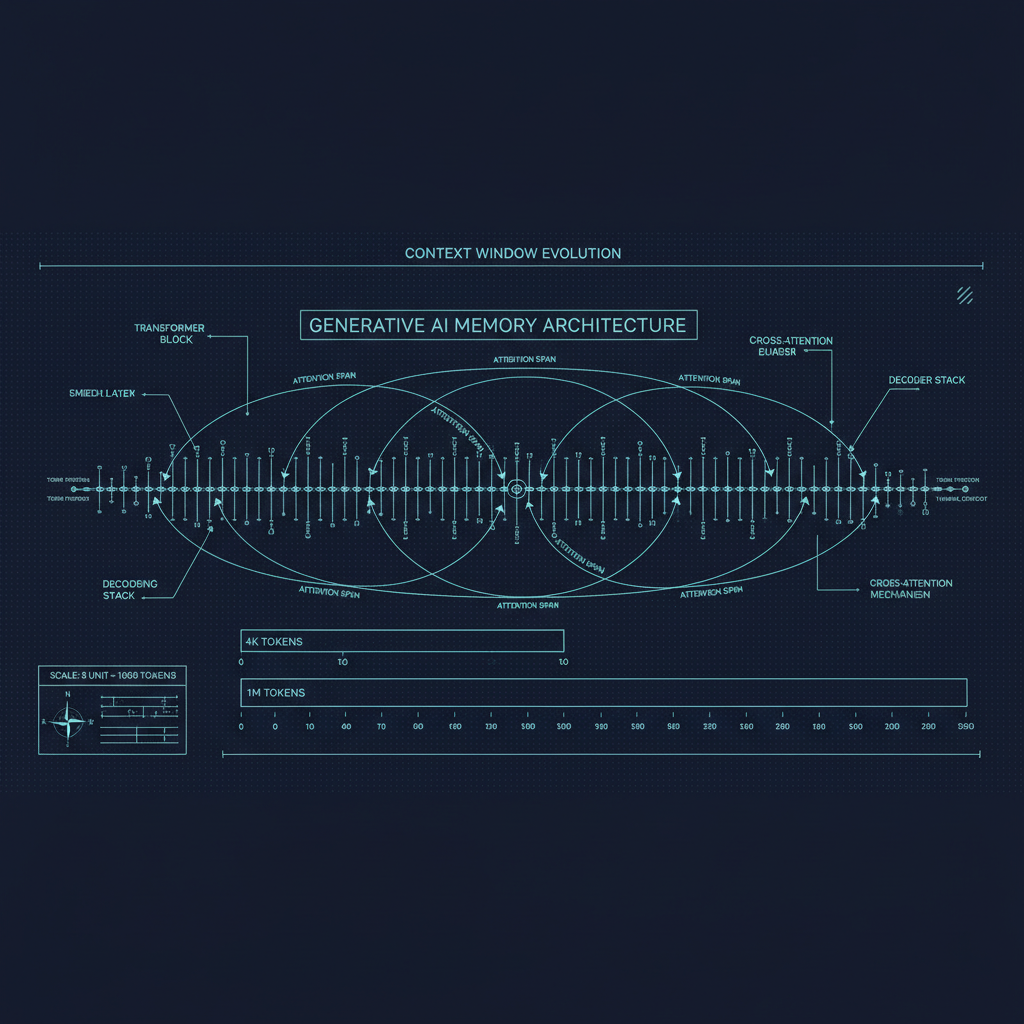

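The core issue is the attention mechanism in transformers. Standard self-attention is quadratic in the sequence length: every token attends to every other token, so doubling the context quadruples the number of attention scores to compute and, if they are materialized, the memory needed to hold them. A second cost grows linearly. During generation the model caches a key vector and a value vector for every previous token in every layer and head. For a 7B-class model with 32 layers and 32 attention heads, this key-value (KV) cache alone reaches tens of gigabytes at a 128K-token context, and every newly generated token must attend over all of it, so the attention work per token grows in proportion to the context length.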
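The difference between the quadratic score matrix and the linear KV cache is easiest to see with numbers. The Python sketch below assumes a hypothetical 7B-class configuration (32 layers, 32 heads, head dimension 128, 16-bit values); the helper names are illustrative and not taken from any library.

```python
# Back-of-the-envelope memory estimates for standard multi-head attention.
# Assumed (hypothetical) config: 32 layers, 32 heads, head_dim 128, fp16 values.

BYTES_FP16 = 2
GIB = 1024 ** 3

def score_matrix_bytes(seq_len: int, bytes_per_elem: int = BYTES_FP16) -> int:
    """Size of ONE head's n x n attention score matrix if fully materialized."""
    return seq_len * seq_len * bytes_per_elem

def kv_cache_bytes(seq_len: int, n_layers: int = 32, n_kv_heads: int = 32,
                   head_dim: int = 128, bytes_per_elem: int = BYTES_FP16) -> int:
    """Keys plus values (the factor of 2), stored for every token, layer, and head."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem

for n in (4_096, 8_192, 131_072):
    print(f"{n:>7} tokens: score matrix per head = {score_matrix_bytes(n) / GIB:7.3f} GiB, "
          f"KV cache = {kv_cache_bytes(n) / GIB:6.2f} GiB")
```

Doubling the context from 4,096 to 8,192 tokens quadruples one head's score matrix (from about 0.031 GiB to 0.125 GiB) but only doubles the KV cache (from 2 GiB to 4 GiB). At 131,072 tokens, a single fully materialized score matrix would take 32 GiB per head per layer, while the KV cache for the whole model reaches 64 GiB.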

The original BERT and GPT designs accepted this constraint and simply capped context length. This was reasonable when models were smaller and context demands were modest. But as models scaled up and users demanded more, the quadratic scaling became an engineering ceiling that required either clever architecture choices or brute-force hardware spending to overcome.

The Technical Path to Long Context

Several innovations combined to make long context practical.

Sparse attention patterns. Instead of every token attending to every other token, sparse attention schemes have each token attend only to nearby tokens and a small set of globally relevant tokens. The Longformer and BigBird papers demonstrated this approach for BERT-scale models. It reduces attention complexity from O(n²) to O(n), though with approximations that can miss some cross-document connections.
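A minimal sketch of a Longformer-style pattern (a symmetric sliding window plus a few global tokens) shows why the number of attended pairs grows linearly. BigBird's additional random connections are omitted, the function names are hypothetical, and the dense boolean mask is built only for illustration; real implementations never materialize it.

```python
def longformer_style_mask(n: int, window: int, global_idx: set[int]) -> list[list[bool]]:
    """mask[i][j] is True if token i may attend to token j.

    Local: j lies within window // 2 positions on either side of i.
    Global: tokens in global_idx attend to everything and are attended to by everything.
    """
    half = window // 2
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(max(0, i - half), min(n, i + half + 1)):
            mask[i][j] = True
    for g in global_idx:
        for k in range(n):
            mask[g][k] = True  # global token sees every position
            mask[k][g] = True  # every position sees the global token
    return mask

def attended_pairs(mask: list[list[bool]]) -> int:
    """Number of (query, key) pairs that actually get a score."""
    return sum(row.count(True) for row in mask)

for n in (1024, 2048):
    sparse = attended_pairs(longformer_style_mask(n, window=64, global_idx={0}))
    print(f"n={n}: sparse pairs={sparse}, full pairs={n * n}")
# n=1024: 67486 vs 1048576; n=2048: 136094 vs 4194304
```

Doubling the sequence roughly doubles the sparse count while full attention quadruples; the cost of that saving is that two distant, non-global tokens never interact directly within a single layer.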

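Flash Attention. Dao et al.'s FlashAttention (2022) and its successors did not change the algorithmic complexity of attention; the number of floating-point operations is still quadratic. What they changed is how the computation is scheduled on the GPU. By tiling the computation so that blocks of queries, keys, and values stay in fast on-chip SRAM, and by computing the softmax incrementally, FlashAttention never writes the full attention matrix to slower high-bandwidth memory (HBM). This makes attention memory linear rather than quadratic in sequence length and sharply reduces memory traffic, which in turn cuts wall-clock time. The result was longer contexts on existing hardware without any change to the model itself. FlashAttention-2 and FlashAttention-3 pushed this further, and FlashAttention-style kernels are now widely used in frontier model training.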
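The incremental softmax is what makes tiling possible: keys and values can be processed one block at a time while keeping only a running maximum, a running denominator, and a running output. The pure-Python sketch below shows that recurrence for a single query and checks it against ordinary attention. It illustrates only the math; the real kernels also tile over queries and fuse everything into one GPU kernel, and the function names here are hypothetical.

```python
import math
import random

def scores_for(q, keys):
    """Scaled dot-product scores q . k / sqrt(d) for a list of keys."""
    scale = 1.0 / math.sqrt(len(q))
    return [scale * sum(a * b for a, b in zip(q, k)) for k in keys]

def naive_attention(q, keys, values):
    """Reference: compute all scores at once, softmax, weighted sum of values."""
    s = scores_for(q, keys)
    m = max(s)
    w = [math.exp(x - m) for x in s]
    total = sum(w)
    return [sum(wi * v[c] for wi, v in zip(w, values)) / total for c in range(len(values[0]))]

def tiled_attention(q, keys, values, block_size=4):
    """Online softmax: the full score vector is never stored."""
    m = -math.inf                    # running max of scores seen so far
    l = 0.0                          # running softmax denominator
    acc = [0.0] * len(values[0])     # running unnormalized output
    for start in range(0, len(keys), block_size):
        s_blk = scores_for(q, keys[start:start + block_size])
        v_blk = values[start:start + block_size]
        m_new = max(m, max(s_blk))
        correction = math.exp(m - m_new)   # rescale old state to the new max (0.0 on first block)
        p_blk = [math.exp(x - m_new) for x in s_blk]
        l = l * correction + sum(p_blk)
        acc = [a * correction + sum(p * v[c] for p, v in zip(p_blk, v_blk))
               for c, a in enumerate(acc)]
        m = m_new
    return [a / l for a in acc]

random.seed(0)
q = [random.gauss(0.0, 1.0) for _ in range(8)]
keys = [[random.gauss(0.0, 1.0) for _ in range(8)] for _ in range(10)]
values = [[random.gauss(0.0, 1.0) for _ in range(3)] for _ in range(10)]
assert all(abs(a - b) < 1e-9 for a, b in
           zip(naive_attention(q, keys, values), tiled_attention(q, keys, values)))
```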

Positional encoding innovations. Standard positional encodings, such as the sinusoidal encodings in the original transformer or learned absolute positions, do not generalize well to sequences longer than those the model was trained on. Rotary Position Embedding (RoPE), introduced by Su et al. and adopted by almost every modern open model family including Llama, Mistral, and DeepSeek, encodes position by rotating query and key vectors so that the attention score between two tokens depends only on their relative offset. RoPE by itself still degrades past its training length, but its structure is easy to rescale: subsequent work on YaRN, LongRoPE, and related methods showed how to extend RoPE-trained models to much longer contexts than their training length, sometimes without any additional training.
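A minimal pure-Python sketch of RoPE makes the relative-position property checkable: rotating consecutive pairs of coordinates by position-dependent angles leaves the query-key dot product dependent only on the offset between positions. The `scale` parameter implements linear position interpolation, the simplest rescaling scheme in this family; YaRN and LongRoPE use more elaborate, frequency-dependent rescaling that is not shown. Names and the example vectors are hypothetical.

```python
import math

def rope(x, pos, base=10000.0, scale=1.0):
    """Rotate each pair (x[i], x[i+1]) by angle (pos / scale) * base ** (-i / d).

    scale > 1 is linear position interpolation: positions are compressed so a
    longer sequence maps into the range of angles seen during training.
    """
    d = len(x)
    assert d % 2 == 0, "RoPE needs an even dimension"
    p = pos / scale
    out = []
    for i in range(0, d, 2):                  # i = 2k for pair index k
        theta = p * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        out += [x[i] * c - x[i + 1] * s, x[i] * s + x[i + 1] * c]
    return out

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

q = [0.3, -1.2, 0.8, 0.5]
k = [1.1, 0.4, -0.7, 0.2]
# Same relative offset (3) at very different absolute positions -> same score.
s_near = dot(rope(q, 5), rope(k, 2))
s_far = dot(rope(q, 1005), rope(k, 1002))
print(abs(s_near - s_far) < 1e-9)                   # True
# With scale=2, position 8,000 is rotated exactly like position 4,000.
print(rope(q, 8000, scale=2.0) == rope(q, 4000))    # True
```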

Sliding window and grouped-query attention. Mistral 7B combined sliding window attention (each token attends only to the N most recent tokens) with grouped-query attention (several query heads share one key-value head, shrinking the KV cache). It demonstrated that this combination gives competitive performance at much longer effective contexts with substantially reduced memory cost. The effective context exceeds N because each layer looks back N tokens, so information can propagate further than N tokens across a stack of layers.
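To see how much each technique saves, the sketch below reuses the usual KV-cache formula with figures matching Mistral 7B's reported configuration (32 layers, 32 query heads, 8 key-value heads, head dimension 128, 4,096-token window) and assumes 16-bit values at a 128K-token context. With a sliding window, only the last N tokens' keys and values need to be kept, for example in a rolling buffer. Function names are illustrative.

```python
GIB = 1024 ** 3

def kv_cache_bytes(seq_len, n_layers=32, n_kv_heads=32, head_dim=128,
                   bytes_per_elem=2, window=None):
    """KV cache size; with a sliding window only the last `window` tokens are cached."""
    cached_tokens = seq_len if window is None else min(seq_len, window)
    return 2 * n_layers * n_kv_heads * head_dim * cached_tokens * bytes_per_elem

def kv_head_for(query_head: int, n_q_heads: int = 32, n_kv_heads: int = 8) -> int:
    """Under GQA, each consecutive group of query heads reads the same K/V head."""
    group_size = n_q_heads // n_kv_heads
    return query_head // group_size

n = 131_072
mha = kv_cache_bytes(n, n_kv_heads=32)                    # one K/V head per query head
gqa = kv_cache_bytes(n, n_kv_heads=8)                     # 4 query heads share each K/V head
gqa_swa = kv_cache_bytes(n, n_kv_heads=8, window=4_096)   # plus a 4,096-token rolling buffer
for name, b in [("MHA", mha), ("GQA", gqa), ("GQA + SWA", gqa_swa)]:
    print(f"{name:10} {b / GIB:6.2f} GiB")
# MHA 64.00 GiB, GQA 16.00 GiB, GQA + SWA 0.50 GiB
```

GQA cuts the cache by the ratio of query heads to key-value heads (4x here), and the window caps it at a fixed size regardless of sequence length; the trade-off is that tokens outside the window are reachable only indirectly through earlier layers.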

Better training data and curricula. Long-context capability is not just about architecture; it requires training on data that actually demands long-range reasoning. Models trained predominantly on short sequences do not effectively use long contexts even when the architecture supports them. Google's Gemini training reportedly included significant amounts of long-document data, which was essential to making the 1M context window genuinely useful rather than technically possible but practically unreliable.

Gemini 1.5 and the Practical Reality

Gemini 1.5 Pro's 1M-token context window was the most dramatic demonstration of long-context capability to date. Google's technical report and launch materials described experiments in which the model was given a 44-minute silent Buster Keaton film (as sampled video frames) and asked detailed questions about specific scenes; given more than 100,000 lines of code and asked to explain it and suggest modifications; and given a grammar book and dictionary for Kalamang, a language with fewer than 200 speakers, and asked to translate new sentences between Kalamang and English.

The results were genuinely impressive, but also revealing. Performance on "needle in a haystack" tests — where a specific piece of information is hidden at different positions in a long document — showed that the model maintained strong retrieval across the full context window. This was not obvious; early long-context models often struggled to access information near the middle of very long contexts (a phenomenon called the "lost in the middle" problem, documented by Liu et al.).
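A needle-in-a-haystack test is straightforward to set up: place a known fact at several relative depths in filler text, ask for it, and record whether the answer comes back. In the sketch below, all names are hypothetical, and `forgetful_stub` is a toy stand-in for a real model call that only "sees" the first and last 30% of its prompt, crudely mimicking the lost-in-the-middle failure; swap in an actual API call to test a real model.

```python
from typing import Callable

def build_haystack(filler: list[str], needle: str, depth: float) -> str:
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) among filler sentences."""
    idx = round(depth * len(filler))
    return " ".join(filler[:idx] + [needle] + filler[idx:])

def needle_sweep(ask: Callable[[str], str], filler, needle, question, answer,
                 depths=(0.0, 0.25, 0.5, 0.75, 1.0)) -> dict:
    """Return {depth: bool}, True when the reply contains the expected answer."""
    results = {}
    for depth in depths:
        prompt = build_haystack(filler, needle, depth) + "\n\nQuestion: " + question
        results[depth] = answer.lower() in ask(prompt).lower()
    return results

def forgetful_stub(prompt: str) -> str:
    """Toy 'model' that only sees the first and last 30% of its input."""
    cut = int(len(prompt) * 0.3)
    visible = prompt[:cut] + prompt[-cut:]
    return "7421" if "7421" in visible else "unknown"

filler = ["The grass is green and the sky is blue."] * 100
results = needle_sweep(forgetful_stub, filler, "The secret code is 7421.",
                       "What is the secret code?", "7421")
print(results)  # {0.0: True, 0.25: True, 0.5: False, 0.75: True, 1.0: True}
```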

However, the Gemini 1.5 results also made clear that long context is not a free lunch. Processing a 1M-token context is expensive in time, in money, and in the hardware required. At launch, Gemini 1.5 Pro came with a standard 128K-token window, and the 1M-token window was available only in a limited preview for selected developers and enterprise customers. Long-context capability is most valuable when you can actually afford to use it.

What Changes When Context Windows Are Long

The most interesting question is not whether long context works, but what it enables that shorter contexts cannot.

Whole-codebase reasoning. A developer can now paste an entire small or medium-sized codebase, or a large slice of a bigger one, into a single context window and ask the model to explain its architecture, identify security vulnerabilities, or suggest refactoring strategies. This is qualitatively different from asking about individual files: the model can trace dependencies, understand module interactions, and identify patterns that span the whole codebase.
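In practice, "pasting a codebase" usually means concatenating files with clear path markers and stopping before the window is full. The sketch below does that with `pathlib`. The `===== path =====` header format, the suffix filter, and the roughly 4-characters-per-token estimate are all assumptions for illustration; accurate counts require the target model's own tokenizer.

```python
from pathlib import Path

def approx_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token); use the model's tokenizer for real counts.
    return (len(text) + 3) // 4

def pack_codebase(root: str, suffixes=(".py", ".md", ".toml"), budget_tokens=200_000):
    """Concatenate matching files under `root`, each preceded by a path header.

    Files that would push the total past `budget_tokens` are skipped.
    Returns (prompt_text, included_paths, skipped_paths).
    """
    parts, included, skipped = [], [], []
    used = 0
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        rel = path.relative_to(root).as_posix()
        text = path.read_text(encoding="utf-8", errors="replace")
        chunk = f"===== {rel} =====\n{text}\n"
        cost = approx_tokens(chunk)
        if used + cost > budget_tokens:
            skipped.append(rel)
            continue
        parts.append(chunk)
        included.append(rel)
        used += cost
    return "".join(parts), included, skipped
```

Keeping the list of skipped files matters: if the model is asked about architecture, it should be told which parts of the repository it did not see.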

Document-level tasks at scale. Legal document review, scientific literature synthesis, due diligence analysis — these tasks have traditionally required summarization as an intermediate step, with the attendant information loss. Long-context models can in principle work with the full primary sources.

Long conversations. A 200K context window means a conversation can continue for days or weeks without losing earlier context, as long as the application resends the accumulated history with each request and that history fits in the window. For customer service, tutoring, and therapy applications, this matters enormously.
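When the history eventually outgrows the window, the application has to decide what to drop. A common simple policy, sketched below under stated assumptions, keeps the system message and as many of the most recent turns as fit. The `{"role": ..., "content": ...}` message shape is a widely used chat convention assumed here, and the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer.

```python
def approx_tokens(text: str) -> int:
    # Rough heuristic: ~4 characters per token. Use the model's tokenizer in practice.
    return (len(text) + 3) // 4

def fit_history(messages: list[dict], budget_tokens: int) -> list[dict]:
    """Keep a leading system message plus the newest turns that fit in the budget.

    messages: [{"role": "system" | "user" | "assistant", "content": str}, ...]
    The oldest non-system turns are dropped first.
    """
    system = messages[:1] if messages and messages[0]["role"] == "system" else []
    rest = messages[len(system):]
    used = sum(approx_tokens(m["content"]) for m in system)
    kept = []
    for msg in reversed(rest):           # walk from newest to oldest
        cost = approx_tokens(msg["content"])
        if used + cost > budget_tokens:
            break
        kept.append(msg)
        used += cost
    return system + list(reversed(kept))  # restore chronological order
```

Dropping whole turns from the front is the bluntest option; a larger window mainly postpones the point at which this trimming, or a summarization step, becomes necessary.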

In-context learning with many examples. Few-shot prompting with hundreds or thousands of examples becomes possible. Research has shown that in-context learning can match fine-tuning in some settings when given enough examples, and long contexts dramatically expand this possibility.
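Many-shot prompting is mostly a packing problem: format demonstrations consistently and add as many as the budget allows before the actual query. The sketch below assumes a hypothetical `Input:`/`Output:` template and the same rough 4-characters-per-token estimate; both are illustrative choices, not a prescribed format.

```python
def build_many_shot_prompt(examples, query, budget_tokens, instructions=""):
    """Add (input, output) demonstrations in order until the token budget is hit,
    then append the query. Returns (prompt, number_of_shots_used)."""
    def approx_tokens(text: str) -> int:
        return (len(text) + 3) // 4       # rough heuristic, not a real tokenizer

    header = instructions + "\n\n" if instructions else ""
    tail = f"Input: {query}\nOutput:"
    used = approx_tokens(header) + approx_tokens(tail)
    shots = []
    for x, y in examples:
        shot = f"Input: {x}\nOutput: {y}\n\n"
        cost = approx_tokens(shot)
        if used + cost > budget_tokens:
            break
        shots.append(shot)
        used += cost
    return header + "".join(shots) + tail, len(shots)

examples = [(f"review {i}", "positive" if i % 2 else "negative") for i in range(10_000)]
_, k_small = build_many_shot_prompt(examples, "great film", budget_tokens=2_000)
_, k_large = build_many_shot_prompt(examples, "great film", budget_tokens=200_000)
print(k_small, k_large)
```

With a few thousand tokens only a couple of hundred short demonstrations fit; a 200K budget holds all 10,000 in this toy setup, which is the regime the many-shot results refer to.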

The Retrieval Augmented Alternative

It is worth noting that the long-context revolution did not kill retrieval-augmented generation (RAG). RAG remains highly relevant even as context windows expand, for several reasons.

First, real-world knowledge bases are often larger than any practical context window. A company's full documentation, all its customer service history, or its entire product catalog may run to billions of tokens. RAG with vector search retrieves the relevant subset.
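The retrieval step itself reduces to nearest-neighbor search over embeddings. The sketch below is a brute-force cosine-similarity version; the document IDs and three-dimensional vectors are made up for illustration, whereas real systems get embeddings from an embedding model and use approximate nearest-neighbor indexes to search billions of tokens' worth of chunks.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def top_k(query_vec, index, k=5):
    """index: list of (doc_id, embedding). Brute-force O(N) scan, highest similarity first."""
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]

# Toy, hand-written embeddings (hypothetical).
index = [
    ("refunds",  [0.9, 0.1, 0.0]),
    ("shipping", [0.1, 0.9, 0.0]),
    ("returns",  [0.8, 0.2, 0.1]),
    ("careers",  [0.0, 0.1, 0.9]),
]
print(top_k([1.0, 0.0, 0.0], index, k=2))  # ['refunds', 'returns']
```

Only the retrieved chunks are then placed in the model's context, which is what keeps the prompt small however large the knowledge base grows.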

Second, RAG is cheaper. Retrieving 5 relevant documents and processing them in a 20K context is far less expensive than processing a 500K token context, even if the latter is architecturally possible.
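Under per-token billing the comparison is simple arithmetic. The sketch assumes a flat, hypothetical price of $3 per million input tokens and ignores output tokens and caching; real prices vary by model and provider, and some providers have charged a higher per-token rate for very long prompts, which would widen the gap.

```python
def input_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Input-token cost under flat per-token pricing."""
    return tokens / 1_000_000 * usd_per_million_tokens

PRICE = 3.00  # hypothetical USD per million input tokens
rag_cost = input_cost_usd(20_000, PRICE)     # five retrieved documents in a 20K context
long_cost = input_cost_usd(500_000, PRICE)   # a 500K-token context
print(f"RAG: ${rag_cost:.2f}/query, long context: ${long_cost:.2f}/query "
      f"({long_cost / rag_cost:.0f}x)")
```

At 10,000 queries a day that works out to about $600 versus $15,000 under these assumptions, before counting the extra latency of processing 500K input tokens on every request.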

Third, RAG is more interpretable. When a model answers based on retrieved documents, you can show users exactly which sources were consulted. This is valuable for trust and auditing in enterprise settings.

The practical picture is that long-context models and RAG are complementary, not competitive. A sensible architecture for many production applications combines both: RAG for broad knowledge retrieval, long context for deep reasoning on the retrieved material.

Where We Are Headed

The trajectory of context windows points toward models that can in principle ingest and reason over the full corpus of human knowledge in a single query. Whether this is achievable in practice, and whether it is the right architectural direction, are open questions.

The alternative vision is that context windows will plateau and the field will move toward persistent memory architectures: systems that do not need to fit everything in a single context but can selectively store and retrieve information across sessions. This is arguably closer to how human cognition works, and several research groups are exploring architectures that combine attention-based context processing with external memory systems.

What seems clear is that the 2K- and 4K-token context windows of earlier models limited applications in ways we are only now fully appreciating. Removing that constraint has already unlocked genuinely new capabilities, and the full impact on what language models can do is still being explored.