Every team building a production application on large language models faces the same decision at some point: should we fine-tune a model for our use case, or can we get what we need through better prompting? This question is often framed as a binary, but the reality is more of a spectrum with several dimensions. Getting this decision right can mean the difference between a project that ships on time and one that gets stuck in an expensive training loop chasing performance gains that prompting could have delivered in a day.

I have seen smart teams make expensive mistakes in both directions — investing months in fine-tuning infrastructure when a well-crafted system prompt would have worked, and shipping mediocre products because they did not invest in the domain-specific training that their use case required. Here is how I think about this decision systematically.

What Prompting Can and Cannot Do

Modern language models like GPT-4o, Claude 3.5 Sonnet, Llama 3.1 405B, and their contemporaries are extraordinarily capable at following natural language instructions. They have been trained via RLHF to be responsive to detailed prompts, and the gap between a naive prompt and a carefully engineered prompt is enormous in practice.

Prompting excels at adapting the model's behavior along dimensions it was already trained to understand: tone, format, level of detail, domain focus, persona, output structure. If you want the model to always respond in JSON, adopt a specific writing style, refuse certain kinds of requests, or focus on a particular area of knowledge, a well-crafted system prompt often accomplishes this reliably.

The research literature on prompt engineering is substantial. Techniques like few-shot examples, chain-of-thought instructions, role specification, and explicit output format constraints can dramatically improve performance on specific tasks. The DSPY framework and similar approaches have even begun to automate prompt optimization, treating the prompt itself as something that can be searched over and optimized.

However, prompting has genuine limits. The most important one is that prompting cannot give the model knowledge or capabilities it does not have. If the model has not seen enough examples of a specific domain during training — specialized legal terminology, obscure scientific notation, proprietary internal processes — prompting cannot conjure competence from nothing. You can tell a model to act like an expert in 18th-century Portuguese maritime law, but if it lacks the underlying knowledge, the result will be confidently incorrect.

The second limit is token efficiency. Every few-shot example you add to a prompt costs tokens, which costs money and latency. If you need 50 examples to demonstrate the behavior you want, your prompt is consuming thousands of tokens per query. Fine-tuning that behavior in might cost more upfront but be dramatically cheaper per query at scale.

The third limit is behavioral consistency. Prompting is fragile to prompt perturbations in ways that fine-tuned models are not. Users who modify the system prompt (or whose inputs interact with the system prompt in unexpected ways) can shift the model's behavior. Fine-tuned models tend to be more robust to context variations because the behavior is encoded in the weights, not in the context window.

What Fine-Tuning Actually Does

Fine-tuning a pre-trained language model means continuing training on a dataset specific to your task, updating the model's weights. There are several regimes, and they have different cost-benefit profiles.

Full fine-tuning updates all model parameters on your training data. This provides the most flexibility and potentially the best performance, but requires the most compute and storage. For large models (70B+), full fine-tuning requires significant GPU infrastructure and is economically viable mainly for organizations with substantial training budgets.

Parameter-efficient fine-tuning (PEFT) updates only a small fraction of the model's parameters, dramatically reducing compute and memory requirements. LoRA (Low-Rank Adaptation) is the dominant PEFT method and works by injecting trainable low-rank matrices into the attention layers while keeping the base model frozen. QLoRA extends this with quantization to reduce memory requirements further. A well-tuned LoRA adapter for a 7B model can be trained in a few hours on a single consumer GPU, and the resulting adapters are only a few hundred MB rather than the full model size. This has made fine-tuning accessible to small teams in ways that were not possible two years ago.

Instruction fine-tuning specifically trains the model on (instruction, output) pairs to improve instruction following on specific task types. This is closer to what the SFT step in RLHF does — you are training the model to be better at a specific kind of task, not teaching it new world knowledge. This is effective for tasks with consistent structure: document summarization, classification, extraction, translation in specialized domains.

RLHF fine-tuning for specific alignment goals is in principle possible but requires significant infrastructure. Most teams do not pursue this outside of large ML organizations.

The Decision Framework

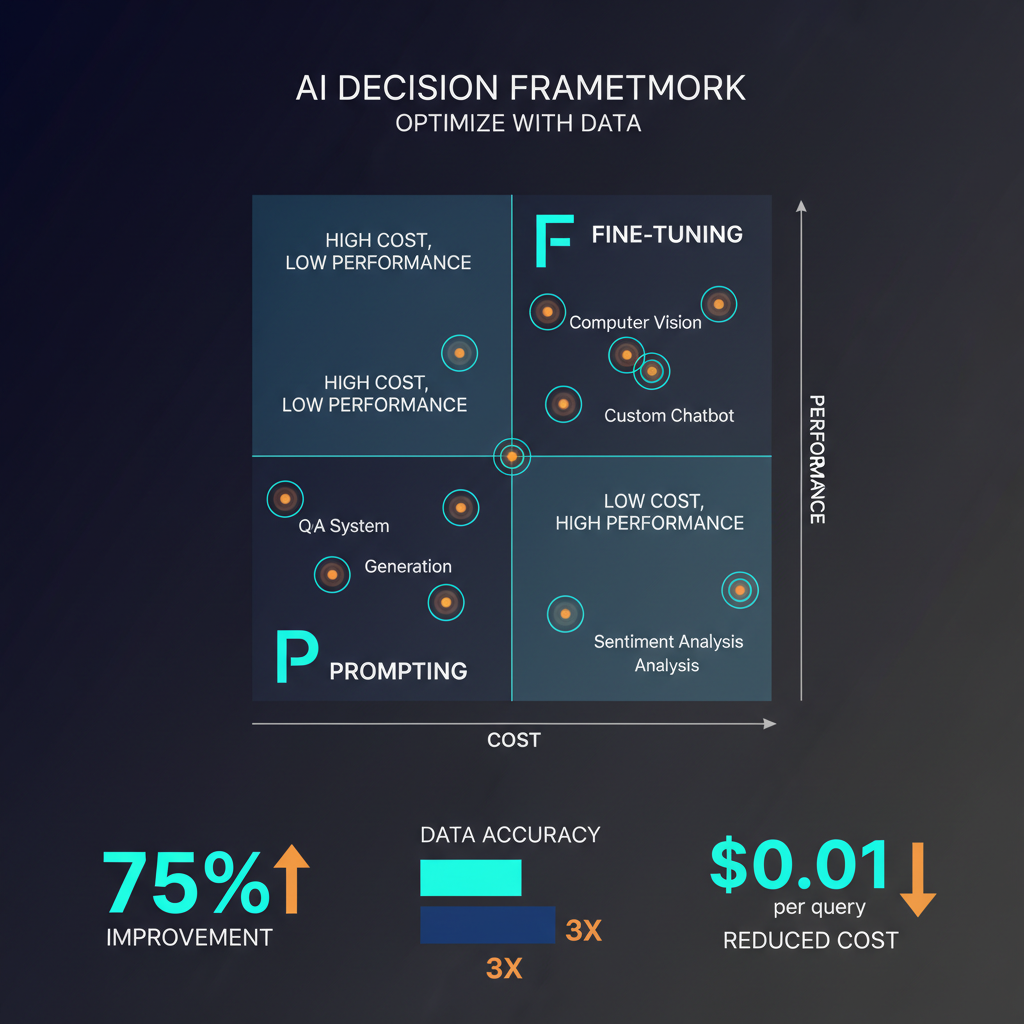

I use a rough decision tree that has served me well:

First question: Is the behavior you need achievable through prompting alone? Test this before investing in fine-tuning. Write the best prompt you can, include relevant few-shot examples, and evaluate on your actual use cases. If performance meets your requirements, you are done. The cost of good prompt engineering is measured in hours; the cost of a fine-tuning run is measured in days to weeks.

Second question: Is the performance gap between prompting and your requirements about knowledge or behavior? If it is about knowledge — the model lacks domain-specific information — fine-tuning may not be the right solution. Fine-tuning on small domain-specific datasets is effective for teaching patterns and style, but RAG (retrieval-augmented generation) is generally more effective for injecting specific factual knowledge. Fine-tuning on a small set of proprietary documents often degrades general capabilities while failing to reliably install the specific facts you wanted.

If the gap is about behavior — format consistency, tone, task-specific reasoning patterns, or following your specific rubric for quality — fine-tuning is likely the right tool. You are teaching the model to do a task in a specific way, not teaching it facts.

Third question: What is your volume and cost sensitivity? Fine-tuning is a fixed cost amortized over many queries. If you are running millions of queries per day, the per-query token savings from fine-tuning (because you no longer need long system prompts or many few-shot examples) can justify significant upfront investment. If you are in an early-stage product with uncertain volume, prompting first is almost always better.

Fourth question: Can you use a smaller model after fine-tuning? This is often the most compelling economic argument for fine-tuning. A fine-tuned 8B model running specialized inference can often match a general-purpose 70B model on a specific task, at a fraction of the cost. Distilling a specific capability from a large frontier model into a smaller specialized model through fine-tuning is a legitimate and powerful strategy.

Practical Considerations

The fine-tuning landscape has specific gotchas that catch teams off guard.

Catastrophic forgetting is real. Fine-tuning a model on a narrow task can degrade its performance on tasks it was previously good at. If your application requires both specialized behavior and general capability, you need to be careful about how you construct your training data and may need to include regularization on the base distribution. LoRA is more resistant to this problem than full fine-tuning because most of the base model weights are unchanged.

Training data quality dominates quantity. A hundred high-quality, carefully verified training examples will outperform a thousand automatically collected examples with noise. Before investing in fine-tuning infrastructure, invest in data curation. Every mislabeled or low-quality example in your training data degrades the result.

Evaluation must be built before training. You need a held-out test set that accurately reflects your production distribution before you run a single training epoch. Without this, you cannot tell if fine-tuning is working, and you will waste compute.

Model selection matters before fine-tuning matters. If you are fine-tuning a model that does not have the base capability you need, fine-tuning will not save you. Evaluate multiple base models before committing to a fine-tuning strategy.

The Current State of Play

The arrival of efficient open models — Llama 3.1 8B and 70B, Mistral 7B and Mixtral, Qwen 2.5, DeepSeek-V3 — has democratized fine-tuning in a way that was not possible when the only capable models were GPT-4 (which you cannot fine-tune with full weight updates) or models that required specialized hardware to run at all. Today, a team with a single high-end GPU and careful data collection can produce a fine-tuned model for specific domains that outperforms general-purpose frontier models on their specific task.

At the same time, the frontier general-purpose models have become capable enough that for many tasks, the prompting baseline is genuinely competitive with what specialized fine-tuning can achieve. The bar for when fine-tuning is worth the investment has risen.

The right mental model is that prompting and fine-tuning are tools, not philosophies. Use the one that gets you to your quality requirements at acceptable cost, and do not be ideologically committed to either.