There is a moment in the GPT-4o demo from May 2024 that captures something important about where AI is heading. A researcher holds up a piece of paper with a math problem written on it. GPT-4o sees the image, reads the problem, works through the solution, and explains it — in a natural speaking voice, with appropriate emotional cadence, responding to the researcher's follow-up questions in real time. The latency is low enough that it feels like a conversation, not a query-response system.

This was not magic, but it was remarkable: a single model that could see, hear, speak, and reason, operating across modalities in a way that earlier AI systems achieved only through fragile pipelines of specialized components. Understanding how we got here, what is technically happening inside these systems, and where the real frontier challenges lie requires stepping back from the demos and into the architecture.

How Multimodal Models Work

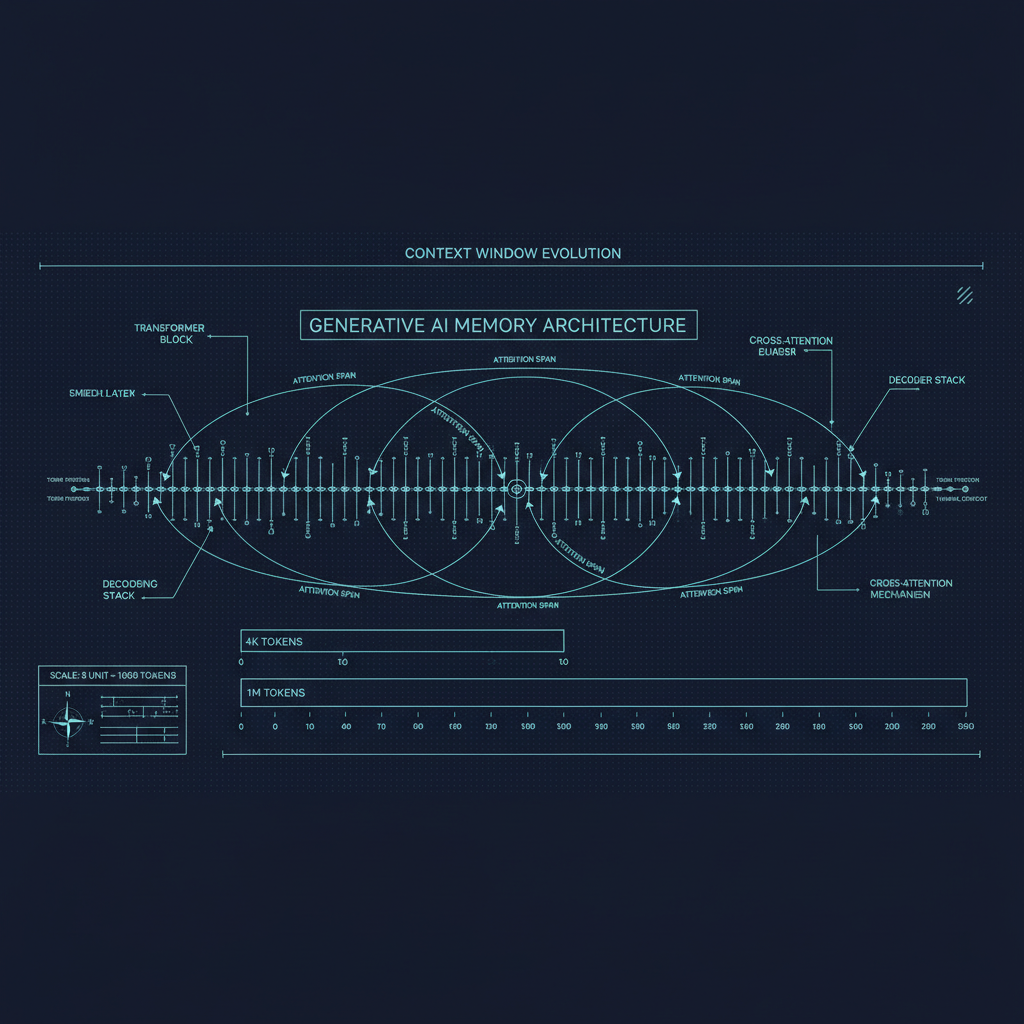

The dominant paradigm for multimodal language models pairs modality-specific encoders with a language model backbone. Images, audio, and video are encoded into token-like representations that can be fed into the same transformer architecture that processes text. The language model then reasons over a mixed sequence of text tokens and encoded visual or audio tokens.
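The following minimal NumPy sketch shows what a "mixed sequence" means in practice. It assumes a hypothetical placeholder token id (`IMAGE_TOKEN_ID`) that marks where an image appears in the prompt, similar in spirit to the `<image>` placeholder used in LLaVA-style prompts. The function name and shapes are illustrative and do not come from any particular library's API.

```python
import numpy as np

# Hypothetical id reserved to mark where an image sits in the prompt.
IMAGE_TOKEN_ID = -1

def build_mixed_sequence(token_ids, text_embedding_table, image_embeddings):
    """Replace the single image placeholder with a block of image embeddings.

    token_ids: list of ints containing IMAGE_TOKEN_ID exactly once.
    text_embedding_table: (vocab_size, d_model) array of text token embeddings.
    image_embeddings: (num_image_tokens, d_model) array from a vision encoder.
    Returns a (sequence_length, d_model) array for the transformer to consume.
    """
    pos = token_ids.index(IMAGE_TOKEN_ID)
    before = np.asarray(token_ids[:pos], dtype=np.int64)
    after = np.asarray(token_ids[pos + 1:], dtype=np.int64)
    # Text tokens are looked up in the embedding table; image tokens arrive
    # already embedded, so the transformer sees one homogeneous sequence.
    return np.concatenate(
        [text_embedding_table[before], image_embeddings, text_embedding_table[after]],
        axis=0,
    )
```

From this point on, the transformer does not distinguish "text" rows from "image" rows. Every position takes part in the same attention layers.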

For vision, the standard approach draws on CLIP (Radford et al., 2021), which trained a visual encoder to align image representations with text descriptions. OpenAI has not published GPT-4V's architecture. In open models such as LLaVA, a visual encoder (often a variant of ViT, the Vision Transformer) converts an image into a grid of patch embeddings. A trainable linear layer or small MLP then projects these embeddings into the language model's token-embedding space, and the projected vectors are inserted into the input sequence alongside the text tokens. Some architectures, such as Flamingo, take a different route: they feed visual features to the language model through added cross-attention layers instead of placing them in the sequence.
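The patch-and-project step fits in a few lines of NumPy. This is a toy under stated assumptions. `patchify` and `encode_and_project` are hypothetical names, random matrices would stand in for learned weights, and the transformer layers that a real ViT applies on top of the patch embeddings are omitted.

```python
import numpy as np

def patchify(image, patch_size):
    """Split an (H, W, C) image into non-overlapping flattened patches.

    Returns (num_patches, patch_size * patch_size * C), row-major over the patch grid.
    """
    h, w, c = image.shape
    assert h % patch_size == 0 and w % patch_size == 0, "pad or resize first"
    gh, gw = h // patch_size, w // patch_size
    patches = image.reshape(gh, patch_size, gw, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)  # (gh, gw, patch, patch, C)
    return patches.reshape(gh * gw, patch_size * patch_size * c)

def encode_and_project(image, patch_size, w_embed, w_proj):
    """Toy stand-in for a ViT encoder followed by a linear projector.

    w_embed: (patch*patch*C, d_vision) patch-embedding matrix. A real ViT would
             run transformer layers over these embeddings; that is omitted here.
    w_proj:  (d_vision, d_text) trainable projection into the LM's embedding space.
    Returns (num_patches, d_text): one "visual token" per image patch.
    """
    patch_embeddings = patchify(image, patch_size) @ w_embed
    return patch_embeddings @ w_proj
```

With 14-pixel patches, a 224×224 image becomes a 16×16 grid, or 256 visual tokens. At 336×336 the grid is 24×24, or 576 tokens, which shows how quickly higher input resolution inflates the sequence the language model must process.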

This is conceptually simple but practically demanding. The model needs to learn that the pixel patches corresponding to a cat are "the same kind of thing" as the text token for "cat," and it needs to do this across the enormous diversity of visual content — photographs, diagrams, charts, handwritten text, medical images, and more. The CLIP pre-training approach provides a strong starting point, but significant additional training is needed to make the model effective at vision-language reasoning rather than just recognition.
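CLIP's alignment comes from a contrastive objective. Within a batch of matched image-caption pairs, each image embedding should score highest against its own caption, and each caption against its own image. Below is a minimal NumPy sketch of that symmetric loss. It fixes the temperature at 0.07, whereas CLIP learns the temperature starting from that value, and the function names are illustrative.

```python
import numpy as np

def _log_softmax(x, axis):
    # Numerically stable log-softmax along one axis.
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def clip_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric InfoNCE loss over a batch of N matched (image, text) pairs.

    Row i of image_emb and row i of text_emb describe the same example;
    every other pairing in the batch is treated as a negative.
    """
    img = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    txt = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature  # (N, N) scaled cosine similarities
    idx = np.arange(len(logits))
    loss_i2t = -_log_softmax(logits, axis=1)[idx, idx].mean()  # image -> its caption
    loss_t2i = -_log_softmax(logits, axis=0)[idx, idx].mean()  # caption -> its image
    return (loss_i2t + loss_t2i) / 2
```

Minimizing this loss pulls the embedding of a cat photo toward the embedding of "a photo of a cat" and away from the other captions in the batch. This is the "same kind of thing" relationship described above, learned statistically rather than hand-coded.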

For audio, a similar approach applies. The Whisper model (Radford et al., 2022) demonstrated that an encoder-decoder transformer trained on 680,000 hours of weakly supervised audio-transcript pairs could develop excellent speech recognition capabilities. Models like GPT-4o go further. Rather than transcribing audio to text and then processing the text, they encode audio features directly and process them alongside text. This lets the model pick up prosody, emotion, and non-speech sounds that would be lost in pure transcription.
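The Whisper paper describes its input as an 80-channel log-magnitude Mel spectrogram, computed on 25-millisecond windows with a 10-millisecond stride over 16 kHz audio. The sketch below uses NumPy to compute features of that general shape. It is a simplified approximation: it applies no padding and none of Whisper's own normalization, and all names are illustrative.

```python
import numpy as np

SAMPLE_RATE = 16_000
WIN = 400  # 25 ms window at 16 kHz
HOP = 160  # 10 ms stride at 16 kHz

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sr):
    """Triangular filters evenly spaced on the mel scale: (n_mels, n_fft // 2 + 1)."""
    bin_freqs = np.linspace(0.0, sr / 2, n_fft // 2 + 1)
    edges = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2), n_mels + 2))
    fb = np.zeros((n_mels, bin_freqs.size))
    for i in range(n_mels):
        left, center, right = edges[i], edges[i + 1], edges[i + 2]
        rising = (bin_freqs - left) / (center - left)
        falling = (right - bin_freqs) / (right - center)
        fb[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def log_mel_spectrogram(audio, n_mels=80):
    """Frame 16 kHz mono audio, take power spectra, and pool them into log-mel bands.

    Assumes len(audio) >= WIN. Returns (num_frames, n_mels) with
    num_frames = 1 + (len(audio) - WIN) // HOP.
    """
    n_frames = 1 + (len(audio) - WIN) // HOP
    starts = np.arange(n_frames) * HOP
    frames = audio[starts[:, None] + np.arange(WIN)] * np.hanning(WIN)
    power = np.abs(np.fft.rfft(frames, n=WIN, axis=1)) ** 2  # (n_frames, WIN // 2 + 1)
    mel = power @ mel_filterbank(n_mels, WIN, SAMPLE_RATE).T
    return np.log10(np.maximum(mel, 1e-10))  # floor avoids log(0)
```

On a 30-second clip (480,000 samples), this framing yields 2,998 frames. Whisper's reference code pads each 30-second chunk so that it keeps exactly 3,000. A model that consumes frames like these, rather than a transcript, still has access to pitch, timing, and loudness.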

GPT-4o and the Native Multimodal Approach

GPT-4o represented a specific architectural claim: that truly native multimodal training — training a single model end-to-end on text, audio, and images together, rather than stitching together specialized models — produces qualitatively better performance, particularly on tasks that require integrating information across modalities.

The evidence for this claim comes from tasks where cross-modal reasoning matters. Describing the mood of a piece of music while looking at an image, answering questions about the audio track of a video, or understanding the relationship between a diagram and its caption — these require the model to reason across modalities in integrated ways that pipeline approaches handle awkwardly. A model that processes audio tokens and image tokens in the same attention computation can in principle learn richer cross-modal associations.
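The sketch below shows mechanically what "the same attention computation" means. It concatenates tokens from several modalities and runs one head of scaled dot-product self-attention over them. It illustrates the idea under simplifying assumptions: random weights, no causal mask, a single head, and a hypothetical dict-of-segments interface. It is not GPT-4o's architecture, which OpenAI has not published.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def joint_self_attention(segments, w_q, w_k, w_v):
    """Single-head self-attention over tokens from several modalities at once.

    segments: dict mapping a modality name to an (n_tokens, d_model) array.
    Returns the attended outputs, the full attention matrix, and the
    (start, end) row range each modality occupies in that matrix.
    """
    names = list(segments)
    x = np.concatenate([segments[n] for n in names], axis=0)
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    attn = softmax(q @ k.T / np.sqrt(k.shape[1]), axis=-1)  # rows sum to 1
    spans, start = {}, 0
    for n in names:
        spans[n] = (start, start + len(segments[n]))
        start += len(segments[n])
    return attn @ v, attn, spans
```

If `spans["audio"]` is `(a0, a1)` and `spans["image"]` is `(i0, i1)`, the sub-block `attn[a0:a1, i0:i1]` holds how strongly each audio token attends to each image token. A pipeline converts each modality to text with a separate model before the language model sees it, so it has no such direct audio-to-image weights.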

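The real-time conversation capability of GPT-4o also benefited from this native architecture. Earlier systems chained separate speech recognition, language model, and text-to-speech components. They accumulated latency at each step and lost information between steps; the TTS system, for example, could not convey what the language model knew about the emotional context. OpenAI reported that GPT-4o responds to audio in 320 milliseconds on average. ChatGPT's earlier pipelined Voice Mode averaged 2.8 seconds with GPT-3.5 and 5.4 seconds with GPT-4. Because one model both hears the input and produces the spoken output, information such as tone of voice can, in principle, carry through end to end.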

Gemini's Multimodal Architecture

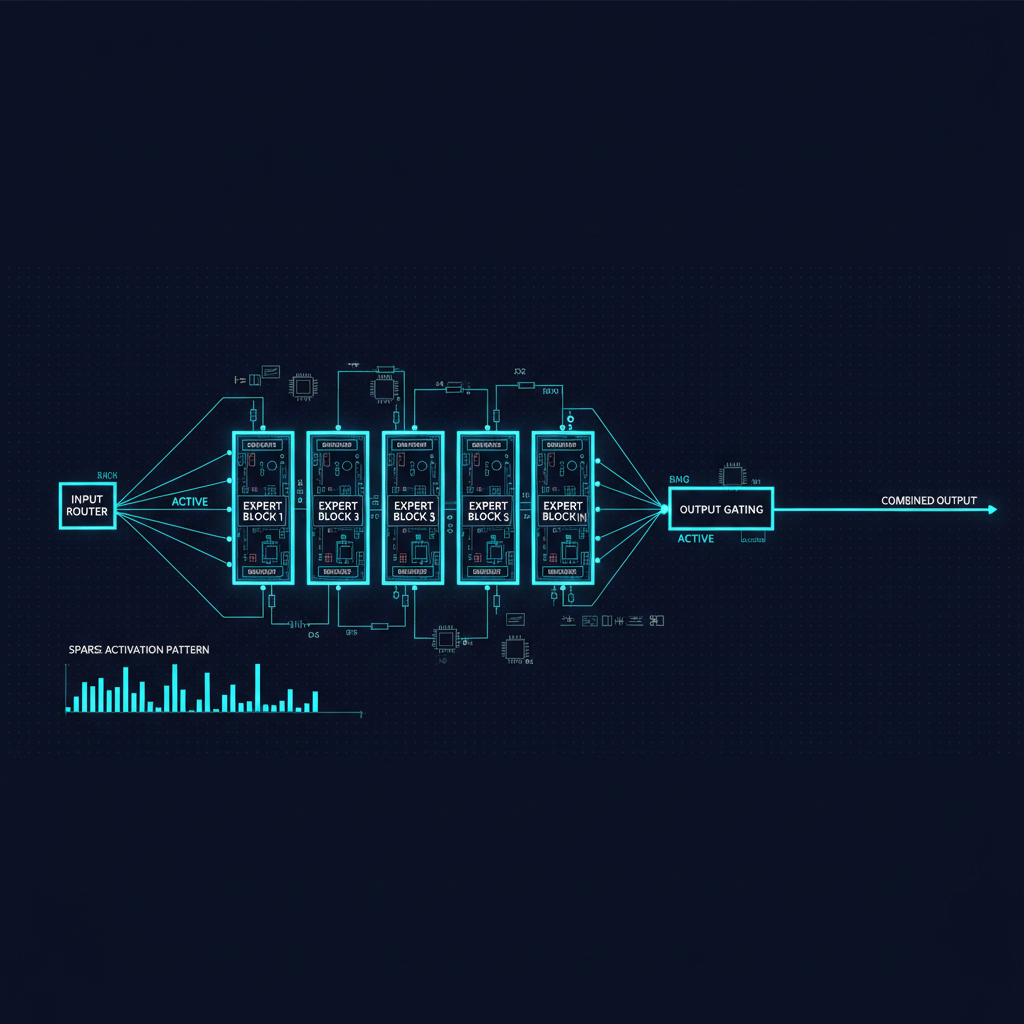

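Google's Gemini family took a somewhat different approach. Gemini was designed from the beginning as a natively multimodal model, trained on data that interleaved text, images, audio, and video. Gemini 1.5 Pro is built on a sparse mixture-of-experts (MoE) Transformer, in which a learned router sends each token to a small subset of expert sub-networks. Google has not said whether its experts specialize by modality. The design adds capacity without a proportional increase in per-token compute, and in typical MoE Transformers the experts replace only the feed-forward layers, so all tokens still share common reasoning machinery in the attention layers.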
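Google has not published Gemini's routing details, so what follows is only a generic sketch of top-k expert routing as commonly described in MoE work. A linear router scores every expert for every token. Each token is then processed by its `k` best-scoring experts, and their outputs are mixed using the router probabilities renormalized over those `k`. The function name, the toy linear experts, and `k=2` are assumptions for illustration.

```python
import numpy as np

def moe_layer(x, w_router, experts, k=2):
    """Sparse mixture-of-experts layer with top-k routing.

    x: (n_tokens, d_model); w_router: (d_model, n_experts);
    experts: list of callables mapping (m, d_model) -> (m, d_model).
    Returns the mixed outputs and the (n_tokens, k) ids of the chosen experts.
    """
    logits = x @ w_router
    top_k = np.argsort(logits, axis=1)[:, -k:]  # k highest-scoring expert ids per token
    chosen = np.take_along_axis(logits, top_k, axis=1)
    gates = np.exp(chosen - chosen.max(axis=1, keepdims=True))
    gates /= gates.sum(axis=1, keepdims=True)  # softmax over the chosen k only
    out = np.zeros_like(x)
    for e, expert in enumerate(experts):
        token_idx, slot = np.nonzero(top_k == e)  # tokens routed to expert e
        if token_idx.size:
            # Only the routed tokens pay for this expert's computation.
            out[token_idx] += gates[token_idx, slot][:, None] * expert(x[token_idx])
    return out, top_k
```

Production MoE systems add load-balancing losses and per-expert capacity limits, which this sketch leaves out.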

Gemini's strongest relative advantage over competing systems has arguably been video understanding. Processing video requires handling temporal coherence. A model must understand not just what is in each frame but how content changes over time, what actions are being performed, and what causal relationships hold across the sequence. At the time of release, Gemini's long-video understanding was ahead of the competition: it processed multi-hour videos in ways that earlier systems struggled with.
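Long video also runs into a token budget. Each sampled frame costs visual tokens, so a system has to decide which frames to encode. A common baseline is uniform sampling, sketched below. The frame-rate cap, the budget, and `tokens_per_frame` are hypothetical parameters, not Gemini's actual settings.

```python
def sample_frame_indices(num_frames, fps, token_budget, tokens_per_frame, max_fps=1.0):
    """Pick evenly spaced frame indices so that a video fits in a token budget.

    Samples at most max_fps frames per second of video and never more frames
    than token_budget // tokens_per_frame. Returns sorted, unique indices.
    """
    duration_s = num_frames / fps
    by_rate = int(duration_s * max_fps)
    by_budget = token_budget // tokens_per_frame
    n = max(1, min(num_frames, by_rate, by_budget))
    step = num_frames / n  # >= 1, so consecutive picks never collide
    # Take the frame at the centre of each of n equal-length segments.
    return [int(i * step + step / 2) for i in range(n)]
```

Consider a one-hour video at 30 fps with a hypothetical 100,000-token budget and 250 tokens per frame. `sample_frame_indices(108_000, 30, 100_000, 250)` keeps only 400 frames, one every 9 seconds. Any event shorter than that gap can fall entirely between samples, which is one concrete reason temporal reasoning over long video is hard.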

Vision Language Models in the Open Ecosystem

The open-source ecosystem has also produced strong multimodal models. LLaVA (Large Language and Vision Assistant) and its successors demonstrated that competitive vision-language models could be built by combining open visual encoders with open language models and training the components that connect them. LLaVA-1.6, released under the name LLaVA-NeXT, reported performance competitive with commercial models on many benchmarks.

InternVL, Qwen-VL, and MiniCPM-V have pushed this further. MiniCPM-V in particular has shown that remarkably capable vision-language models can run on end-user devices such as phones. These models gained efficiency through optimized visual encoders and through token compression schemes that reduce the number of visual tokens fed to the language model. Together, these improvements have made multimodal capability accessible in resource-constrained settings.
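One widely used compression trick folds each 2×2 block of neighbouring patch tokens into a single wider token, a space-to-depth or "pixel shuffle" step. InternVL 1.5, for example, describes an operation of this kind that cuts visual tokens to a quarter. Below is a minimal NumPy sketch with illustrative names. It only rearranges values; a learned projector would normally follow it. Other models, such as MiniCPM-V, compress with a learned cross-attention resampler instead.

```python
import numpy as np

def merge_patch_tokens(tokens, grid_h, grid_w, factor=2):
    """Space-to-depth merge of neighbouring patch tokens.

    Folds each factor x factor block of tokens into one token with
    factor**2 times the channels.
    tokens: (grid_h * grid_w, d), row-major over the patch grid.
    Returns ((grid_h // factor) * (grid_w // factor), d * factor**2).
    """
    d = tokens.shape[1]
    assert grid_h % factor == 0 and grid_w % factor == 0
    t = tokens.reshape(grid_h // factor, factor, grid_w // factor, factor, d)
    t = t.transpose(0, 2, 1, 3, 4)  # gather each block's tokens together
    return t.reshape((grid_h // factor) * (grid_w // factor), factor * factor * d)
```

A 448×448 image with 14-pixel patches gives a 32×32 grid of 1,024 tokens; after a 2×2 merge the language model sees 256. No information is discarded at this step. The saving comes from the language model attending over a quarter as many positions.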

Where Multimodal Models Still Struggle

Despite the impressive demos, multimodal models have characteristic failure modes that practitioners need to understand.

Spatial reasoning. Asking a model to count objects in an image, identify left-right relationships, or understand 3D structure from 2D images is surprisingly difficult. Models trained on web images and their text descriptions have a lot of information about what things look like, but visual grounding — tying specific text references to specific image regions — remains imperfect. Models often describe what they expect to see rather than what is actually present.
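For models that can output bounding boxes, grounding is commonly scored by intersection-over-union (IoU) between the predicted box for a phrase and a reference box. A prediction usually counts as a hit when IoU is at least 0.5. Below is a minimal sketch with illustrative function names, with boxes given as `(x0, y0, x1, y1)`.

```python
def box_iou(a, b):
    """Intersection-over-union of two axis-aligned boxes (x0, y0, x1, y1)."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)  # zero if boxes don't overlap
    area = lambda r: (r[2] - r[0]) * (r[3] - r[1])
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0

def grounding_accuracy(predicted, reference, threshold=0.5):
    """Fraction of referring expressions whose predicted box overlaps the
    reference box with IoU >= threshold (0.5 is a common convention)."""
    hits = sum(box_iou(p, r) >= threshold for p, r in zip(predicted, reference))
    return hits / len(reference)
```

A check like this catches the failure described above. A model that fluently names the expected object but places its box on the wrong region scores a miss, even though its text answer sounds right.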

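Fine-grained visual understanding. Distinguishing between similar species of birds, reading small text in an image, or identifying subtle defects in manufacturing photographs strains current models. These tasks require high spatial resolution. Yet many vision encoders operate at a fixed input size of a few hundred pixels per side (CLIP's ViT-L/14, for example, at 224 or 336 pixels), so fine detail in a large photo can be lost when it is resized. They also require specialized training data that general web-crawled multimodal datasets rarely provide in quantity.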

Temporal reasoning in video. Understanding cause and effect across time, tracking specific objects through occlusion and camera motion, and reasoning about events that happen off-screen are challenging. Models can describe what they see in video frames, but reasoning about the temporal structure of events requires capabilities that are still developing.

Consistent multimodal reasoning. Models sometimes answer visual questions and text questions correctly when asked separately, yet fail when the same reasoning must integrate both. Their account of a scene also does not always stay consistent as additional context is added.

Audio understanding beyond speech. While speech recognition has become excellent, understanding non-speech audio — music, environmental sounds, emotional cues in voice beyond semantics — is much harder. Models trained primarily on transcription data have strong speech-to-text capabilities but weaker holistic audio understanding.

The Practical Takeaways

For practitioners building applications on multimodal models, several practical lessons apply.

First, image quality matters more than you might expect. Models process images as discrete patches; very small text, subtle visual distinctions, or poor image quality can cause significant performance degradation. When preparing images for model input, consider resolution, contrast, and whether the information you need the model to understand is visually clear.
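Before sending an image, it helps to check how much it will shrink on its way into the encoder. If it shrinks too much, you can split it into encoder-sized tiles. The sketch below assumes a hypothetical 336-pixel encoder input, a common CLIP resolution, and its function names are illustrative. Whether a given API accepts multiple crops, and how it bills them, varies by provider.

```python
import math

def tile_boxes(width, height, tile=336, overlap=0):
    """Cover a width x height image with tile x tile crop boxes (x0, y0, x1, y1).

    Tiles advance by tile - overlap pixels; the last row and column are shifted
    back inside the image so every box has full size.
    """
    assert width >= tile and height >= tile, "image smaller than one tile"
    assert 0 <= overlap < tile
    step = tile - overlap

    def starts(length):
        n = max(1, math.ceil((length - tile) / step) + 1)
        return [min(i * step, length - tile) for i in range(n)]

    return [(x, y, x + tile, y + tile) for y in starts(height) for x in starts(width)]

def downscale_factor(width, height, target=336):
    """How much an image shrinks if its longer side is resized to `target` pixels."""
    return max(width, height) / target
```

For a 4032×3024 photo, `downscale_factor(4032, 3024)` returns 12.0, so text 24 pixels tall in the original ends up about 2 pixels tall, far too small to read. Tiling avoids that loss at the cost of more visual tokens. LLaVA-NeXT's "AnyRes" scheme, for instance, encodes a grid of crops plus a downscaled overview of the whole image.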

Second, explicit verbal descriptions often improve performance on vision tasks. When asking a model about an image, describe the relevant aspects of the image in the text prompt ("in the chart showing quarterly revenue..."); this can improve accuracy on questions about those aspects. The effect is counterintuitive if you assume the model perfectly "sees" everything in the image. It is consistent with how these models work, though: text tokens and visual tokens share one attention context, so explicit text can steer attention toward the relevant content.
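The sketch below shows how that advice might look in a request payload. It assumes the OpenAI Chat Completions message format: a `content` list of `text` and `image_url` parts, with the image passed as a base64 data URL. The helper name and the `focus_hint` parameter are hypothetical. Other providers use different schemas, and the sketch only builds the message; it does not call any API.

```python
import base64

def build_vision_message(question, image_bytes, focus_hint=None, mime="image/png"):
    """Build a user message in the OpenAI Chat Completions format.

    focus_hint (hypothetical parameter): optional text naming the part of the
    image the question is about; it is placed on the line before the question.
    """
    text = f"{focus_hint}\n{question}" if focus_hint else question
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": text},
            {"type": "image_url", "image_url": {"url": data_url}},
        ],
    }
```

For a dashboard screenshot, a hint such as "The top-right panel is a bar chart of quarterly revenue." ahead of "Which quarter had the highest revenue?" tells the model where to look. Compare accuracy with and without the hint on your own examples before adopting it.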

Third, do not assume cross-modal capabilities generalize from demos. The impressive showcased capabilities often represent cherry-picked examples or carefully constructed prompts. Systematic evaluation on your specific use case is essential before deploying multimodal systems in production.
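A minimal evaluation harness makes "systematic evaluation" concrete. Run the model over a labeled sample from your own use case and report accuracy per category, so that weaknesses such as counting or small-text reading show up separately instead of being averaged away. `ask_model` is a hypothetical callable wrapping whatever model you use. Exact-match scoring is an assumption that suits short answers only.

```python
from collections import defaultdict

def evaluate(ask_model, examples):
    """Score a model on labeled examples, with accuracy broken down by category.

    ask_model: hypothetical callable (image_path, question) -> answer string.
    examples: iterable of dicts with keys "image", "question", "answer", "category".
    Uses case-insensitive exact match after stripping whitespace.
    """
    correct, total = defaultdict(int), defaultdict(int)
    for ex in examples:
        prediction = ask_model(ex["image"], ex["question"])
        hit = prediction.strip().lower() == ex["answer"].strip().lower()
        correct[ex["category"]] += hit
        total[ex["category"]] += 1
    return {cat: correct[cat] / total[cat] for cat in total}
```

Even a few dozen examples per category, drawn from real inputs rather than demo-quality images, will usually reveal more about production readiness than a showcase video.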

The arc of multimodal AI over the last three years has been genuinely astonishing. The remaining challenges are real, but they are the kind of challenges that yield to sustained engineering and research effort. A world where AI can see, hear, and reason about the full richness of human communication is no longer a speculative future — it is the present we are learning to work with.