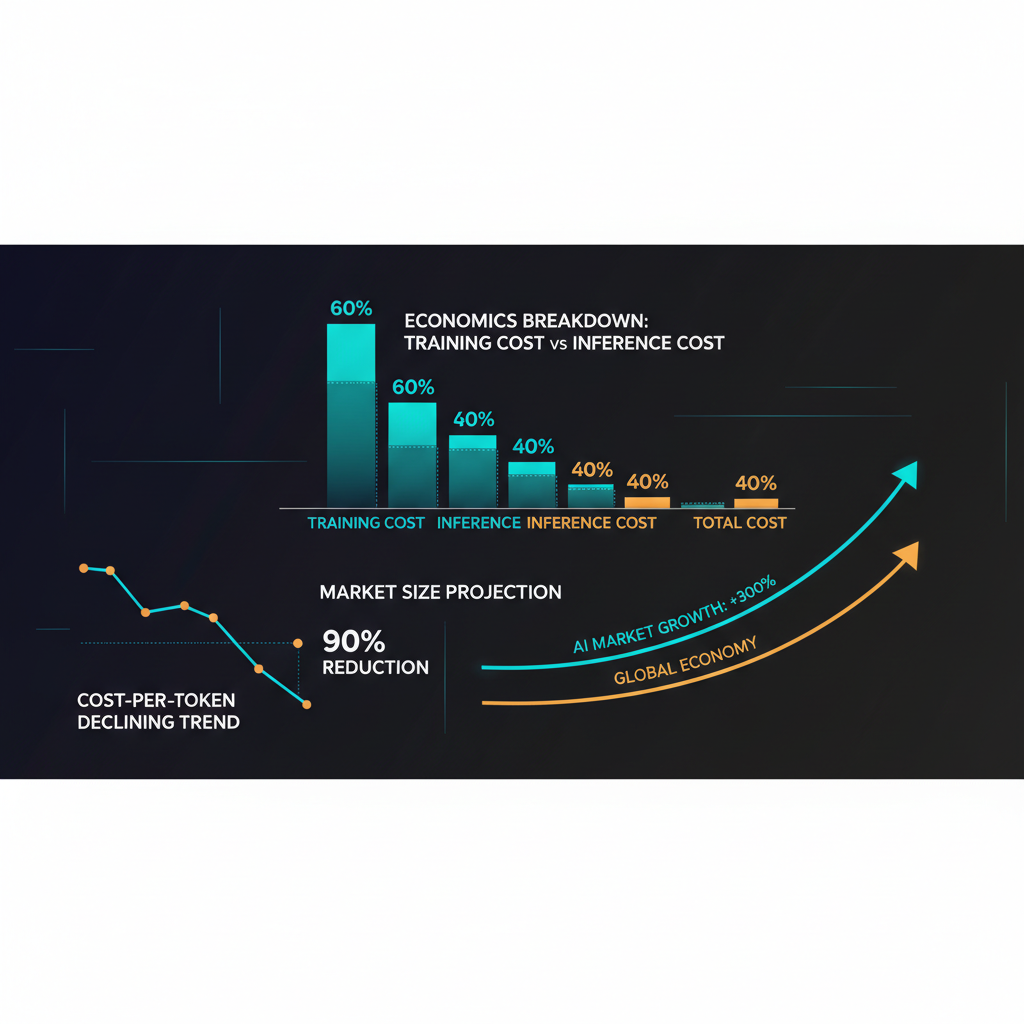

The economics of foundation models are simultaneously staggering and rapidly deflating — a combination that produces genuine confusion about the AI industry's financial structure. Training costs for frontier models are in the hundreds of millions of dollars and trending upward. Inference costs for those same models have fallen by roughly 100x in two years. These two trends coexist and each tells an important part of the story.

I want to cut through the handwaving in both directions — the "AI is too expensive to matter" camp and the "costs always fall, don't worry" camp — and give you a grounded view of where the economics actually stand and what they imply for the industry's structure.

Training Costs: The Numbers and the Trends

Training a frontier LLM is one of the most capital-intensive activities in the private sector. Publicly available estimates for recent training runs:

- GPT-4: estimated $50–100 million (training compute only, before research, infrastructure, and labor costs)

- Claude 2/3 series: not publicly disclosed, but likely in a similar range to GPT-4-class models

- Llama 3 405B: Meta has not disclosed costs, but analysts estimate $30–60 million for the training run

- Gemini Ultra: again, not disclosed, but estimated at $100–200 million for training

These numbers represent direct compute costs — the GPU-hours × cost per GPU-hour calculation. They do not include the research headcount (frontier AI researchers command $500K–$2M+ total compensation annually), infrastructure engineering, data acquisition and processing, safety and evaluation costs, or the multiple failed training runs that precede a successful one. The total economic cost of a frontier model is typically 3–5x the raw compute cost.
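The "GPU-hours × cost per GPU-hour" calculation can be made concrete. The sketch below estimates GPU-hours using the common approximation for dense transformers of about 6 FLOPs per parameter per training token (for mixture-of-experts models, N would be the active parameters). Every input is an assumption: the model size, token count, per-GPU peak throughput (a hypothetical round 1e15 FLOP/s), utilization, and hourly price are illustrative values, not figures for any real training run. The 3–5x multiplier is the rule of thumb quoted above.

```python
def training_compute_cost(
    params: float,              # model parameters N (active parameters for MoE)
    tokens: float,              # training tokens D
    peak_flops: float,          # per-GPU peak FLOP/s (hypothetical value below)
    utilization: float,         # fraction of peak actually achieved (MFU), 0..1
    price_per_gpu_hour: float,  # hypothetical $/GPU-hour
) -> tuple[float, float]:
    """Return (gpu_hours, dollars) for the raw compute of one training run."""
    total_flops = 6.0 * params * tokens  # ~6 FLOPs per parameter per token (forward + backward)
    effective_flops_per_sec = peak_flops * utilization
    gpu_hours = total_flops / effective_flops_per_sec / 3600.0
    return gpu_hours, gpu_hours * price_per_gpu_hour


def total_economic_cost(compute_cost: float, multiplier: float = 4.0) -> float:
    """Apply a 3-5x multiplier for research staff, data, evaluation, and failed runs."""
    return compute_cost * multiplier


if __name__ == "__main__":
    # Hypothetical 400B-parameter dense model trained on 15T tokens at 40% utilization.
    hours, dollars = training_compute_cost(4e11, 1.5e13, 1e15, 0.4, 2.0)
    print(f"{hours / 1e6:.1f}M GPU-hours, ${dollars / 1e6:.0f}M raw compute")
    for m in (3.0, 5.0):
        print(f"total at {m:.0f}x: ${total_economic_cost(dollars, m) / 1e6:.0f}M")
```

Utilization is the most sensitive input here: halving it doubles the GPU-hours and the bill, which is one reason failed or inefficient runs matter so much to the total.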

The headline compute cost trend is upward: GPT-3 was trained for an estimated $5–10 million, and GPT-4 represents a 5–20x increase on those estimates (roughly 10x at the midpoints). The scaling hypothesis, that capability reliably improves with compute, has driven an arms race in which each generation of frontier models trains on more compute than the last. GPT-5-class models are expected to cost $1 billion or more in direct compute.

However, this trend coexists with dramatic efficiency improvements. The computational cost to reach a given capability level (measured by, say, MMLU score or HumanEval pass rate) has fallen steeply, driven by architectural innovations (mixture-of-experts, improved attention mechanisms, better tokenization) and improvements in training methodology. A model that cost $100M to train in 2023 would cost perhaps $20–30M to train today with current methods for the same level of performance. If anything, that estimate is conservative: researchers like Epoch AI find AI training efficiency improving at roughly 3–4x per year, which, compounded over two years, would imply a 9–16x reduction.
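A minimal sketch of the compounding behind that comparison, assuming a constant annual efficiency gain (real algorithmic progress arrives in uneven jumps, and the 2-year horizon is itself an approximation of "2023 to today"):

```python
def cost_at_constant_capability(initial_cost: float, annual_gain: float, years: float) -> float:
    """Cost to reach the same capability after `years` of compounding efficiency gains."""
    return initial_cost / (annual_gain ** years)


if __name__ == "__main__":
    for gain in (3.0, 4.0):
        cost = cost_at_constant_capability(100e6, gain, 2)
        print(f"{gain:.0f}x/year over 2 years: ${cost / 1e6:.2f}M")
```

The same function shows how sensitive the projection is to the horizon: because the gain is exponential in `years`, an extra six months at 3x per year cuts the cost by a further factor of about 1.7.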

Inference Economics: The Deflationary Force

If training economics are inflationary, inference economics are intensely deflationary. The cost of running an LLM — processing input tokens and generating output tokens — has fallen dramatically as model serving infrastructure has matured, competition among providers has intensified, and hardware efficiency has improved.

Specific data points: the cost to process 1 million input tokens with a GPT-3.5-class model fell from roughly $2.00 in 2023 to under $0.10 by late 2025. Even for frontier models, the price decline has been steep: GPT-4-class input pricing dropped from $30 per million tokens (GPT-4, March 2023) to $10 (GPT-4 Turbo, November 2023) to $5 (GPT-4o, May 2024), a 6x reduction in about 14 months as competition and efficiency improvements compounded.
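Two price points imply a constant annual rate of decline, which makes trends over different time spans comparable. A small sketch; the 2.5-year span for the GPT-3.5-class figures is an assumption about the exact dates, and because the end price is quoted as "under $0.10", the computed rate is a lower bound:

```python
def annual_decline_factor(start_price: float, end_price: float, years: float) -> float:
    """Constant per-year price-reduction factor implied by two price points."""
    return (start_price / end_price) ** (1.0 / years)


if __name__ == "__main__":
    # GPT-3.5-class input pricing from the text; the 2.5-year span is assumed.
    print(f"GPT-3.5 class: {annual_decline_factor(2.00, 0.10, 2.5):.1f}x per year")
    # GPT-4-class input pricing: $30 -> $5 over roughly 14 months.
    print(f"GPT-4 class:   {annual_decline_factor(30.0, 5.0, 14 / 12):.1f}x per year")
```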

The drivers of this deflation are multiple:

Hardware efficiency improvements. Nvidia's successive GPU generations (A100 → H100 → H200 → Blackwell architecture) have delivered substantial improvements in tokens per second per dollar. The H100 delivered roughly 2–3x the inference efficiency of the A100 for LLM workloads. Custom silicon from Google (TPU v5), AWS (Trainium/Inferentia), and Meta (MTIA) has further expanded the efficiency frontier.

Model distillation and quantization. The ability to distill a large frontier model's capabilities into a much smaller model, and then quantize that model to run at reduced numerical precision, has dramatically expanded the range of hardware that can serve capable models efficiently. Capability levels that required datacenter-scale GPU clusters two years ago are now within reach of models that run on a single powerful GPU or even on-device hardware.
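Why quantization changes which hardware can serve a model comes down to arithmetic on weight storage. The sketch below shows that arithmetic plus the simplest quantization scheme, symmetric per-tensor int8. It is an illustration under stated assumptions, not how any particular serving stack works: production schemes typically use per-channel or per-group scales, and the 70B-parameter size below is hypothetical. The memory figure counts weights only, ignoring the KV cache and activations.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate weight storage only (ignores KV cache, activations, overhead)."""
    return num_params * bits_per_param / 8 / 1e9


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by q * scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    # Hypothetical 70B-parameter model at different precisions.
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gb(70e9, bits):.0f} GB")
    q, s = quantize_int8([0.5, -1.0, 0.25, 0.01])
    print(q, [round(w, 4) for w in dequantize(q, s)])
```

At 16 bits the hypothetical model's weights need more memory than a single 80 GB accelerator provides; at 4 bits they fit comfortably, which is the shift the paragraph above describes. The rounding error per weight is at most half of `scale`.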

Competition among providers. The inference API market is now genuinely competitive. OpenAI, Anthropic, Google, Mistral, Cohere, and numerous specialized inference providers (Fireworks AI, Together AI, Groq) are competing on price and performance. Groq's inference hardware, purpose-built for LLM inference with Language Processing Units (LPUs), has demonstrated extremely high throughput at competitive cost, forcing price responses across the market.

Batching and caching optimizations. Infrastructure innovations like continuous batching (adding and removing requests from a running batch at each decoding step), KV-cache optimization, prompt caching (reusing computation for repeated prompt prefixes), and speculative decoding have improved the effective throughput of inference infrastructure without hardware changes.
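Prompt caching pays off because many requests share a long common prefix, such as a system prompt or a reference document. The toy model below is a hypothetical illustration, not any provider's or serving engine's implementation. It only records which token prefixes have already been processed and counts how much prefill work each new request still needs. Real servers cache the attention key/value tensors themselves, typically in fixed-size token blocks, and evict entries under memory pressure. This toy stores every prefix, which is quadratic in prompt length.

```python
class ToyPrefixCache:
    """Toy model of prefix caching: counts prefill tokens a request must compute."""

    def __init__(self) -> None:
        self._seen: set[tuple[int, ...]] = set()

    def prefill_tokens_needed(self, tokens: list[int]) -> int:
        # Longest prefix of this request that an earlier request already computed.
        hit = 0
        for i in range(len(tokens), 0, -1):
            if tuple(tokens[:i]) in self._seen:
                hit = i
                break
        # "Compute" the uncached suffix and remember every newly processed prefix.
        for i in range(hit + 1, len(tokens) + 1):
            self._seen.add(tuple(tokens[:i]))
        return len(tokens) - hit


if __name__ == "__main__":
    system_prompt = list(range(1000))  # hypothetical shared system-prompt token IDs
    cache = ToyPrefixCache()
    print(cache.prefill_tokens_needed(system_prompt + [5001, 5002]))  # prints 1002
    print(cache.prefill_tokens_needed(system_prompt + [6001]))        # prints 1
```

The second request shares the 1,000-token prefix, so only its final token needs fresh computation. This is why providers can offer discounted pricing on cached input tokens.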

The Unit Economics of AI Products

For companies building products on top of foundation model APIs, the inference cost trajectory is broadly favorable. Consider a customer support application processing 1 million interactions per month at an average of 2,000 tokens per interaction, or 2 billion tokens per month. Two years ago, that workload would have cost $10,000–$50,000 per month in API costs alone; today, using optimized model selection and caching, it can run for $1,000–$5,000.
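The customer-support example can be worked backward to see what per-token prices those monthly bills imply. A sketch, assuming a single blended $/1M-token price that averages input and output tokens. The function names are illustrative.

```python
def monthly_api_cost(interactions: int, tokens_per_interaction: int,
                     price_per_million: float) -> float:
    """Monthly API spend at one blended $/1M-token price (input and output mixed)."""
    total_tokens = interactions * tokens_per_interaction
    return total_tokens / 1_000_000 * price_per_million


def implied_blended_price(monthly_cost: float, interactions: int,
                          tokens_per_interaction: int) -> float:
    """Invert the calculation: what $/1M tokens does a monthly bill imply?"""
    millions_of_tokens = interactions * tokens_per_interaction / 1_000_000
    return monthly_cost / millions_of_tokens


def cost_per_interaction(tokens_per_interaction: int, price_per_million: float) -> float:
    """The figure to compare against revenue (or value) per interaction."""
    return tokens_per_interaction / 1_000_000 * price_per_million


if __name__ == "__main__":
    # Monthly bills quoted in the text for 1M interactions x 2,000 tokens.
    for label, bill in [("then, low", 10_000), ("then, high", 50_000),
                        ("now, low", 1_000), ("now, high", 5_000)]:
        price = implied_blended_price(bill, 1_000_000, 2_000)
        print(f"{label}: ${price:.2f}/1M tokens, "
              f"${cost_per_interaction(2_000, price):.4f} per interaction")
```

The per-interaction figure (a fraction of a cent at today's implied prices) is the one to set against revenue per interaction. That comparison is what separates the high-value professional use cases from high-volume consumer ones.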

But "broadly favorable" doesn't mean "economically solved." The challenge is that inference cost at scale is still meaningful relative to the revenue per interaction for many use cases. The math works well for high-value professional applications (legal document analysis, financial research synthesis) where the per-interaction value is high. It works less well for consumer applications with high interaction volume and modest per-user revenue.

The other cost dynamic that frequently surprises teams building AI products: output tokens are typically priced at 3–5x input tokens, because generation is more expensive to serve than input processing. Input tokens are processed in parallel in a single prefill pass, while output tokens are generated one at a time, each requiring its own forward pass through the model. Applications that require long-form generated content at scale therefore face a different cost structure than applications that primarily use the model for classification or short answers. Understanding your token budget and optimizing prompt design to minimize wasted output tokens is both an engineering discipline and a meaningful cost lever.
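A quick way to see the asymmetry is to price two request shapes under separate input and output rates. The $3 / $15 per million tokens below are hypothetical values chosen to give a 5x ratio. They are not any specific provider's price list, and the token counts are illustrative.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price: float, output_price: float) -> float:
    """Per-request cost with separate $/1M-token input and output prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


IN_PRICE, OUT_PRICE = 3.00, 15.00  # hypothetical prices, 5x output/input ratio

if __name__ == "__main__":
    classify = request_cost(1_000, 5, IN_PRICE, OUT_PRICE)     # long prompt, one-word label
    long_form = request_cost(500, 2_000, IN_PRICE, OUT_PRICE)  # short prompt, long draft
    output_share = (2_000 * OUT_PRICE / 1_000_000) / long_form
    print(f"classification: ${classify:.6f}  long-form: ${long_form:.4f}")
    print(f"output share of long-form cost: {output_share:.0%}")
```

Despite using fewer total tokens than some classification prompts, the long-form request costs roughly 10x more, and nearly all of that comes from output. Trimming verbose output is usually the bigger lever for generation-heavy workloads.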

The Infrastructure Capital Stack

Above the API layer, enterprises building serious AI capability face significant infrastructure investment. The components:

GPU compute: Reserved or on-demand GPU infrastructure for fine-tuning, batch inference, and model evaluation. The market has evolved significantly: Nvidia H100 spot instance prices that exceeded $8 per GPU-hour in 2024 have moderated somewhat as supply caught up with demand, though they remain elevated. The major clouds have also improved their GPU availability: AWS, Azure, and Google Cloud all offer H100-class hardware with reasonable availability for committed customers.
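The reserved-versus-on-demand choice reduces to a utilization threshold. A reservation is paid for every hour, while on-demand capacity is paid only for hours actually used. A sketch with hypothetical prices ($2.50 reserved, $4.00 on-demand per GPU-hour), ignoring spot interruptions, minimum commitment terms, and availability risk:

```python
def breakeven_utilization(reserved_hourly: float, on_demand_hourly: float) -> float:
    """Fraction of hours a GPU must be busy for a reservation to beat on-demand."""
    return reserved_hourly / on_demand_hourly


def cheaper_option(expected_utilization: float, reserved_hourly: float,
                   on_demand_hourly: float) -> str:
    """Pick the cheaper pricing model for an expected utilization (0..1)."""
    if expected_utilization >= breakeven_utilization(reserved_hourly, on_demand_hourly):
        return "reserved"
    return "on-demand"


if __name__ == "__main__":
    reserved, on_demand = 2.50, 4.00  # hypothetical $/GPU-hour
    print(f"break-even utilization: {breakeven_utilization(reserved, on_demand):.1%}")
    for u in (0.3, 0.8):
        print(f"{u:.0%} busy -> {cheaper_option(u, reserved, on_demand)}")
```

Bursty workloads such as occasional fine-tuning runs tend to fall below the threshold; steady batch inference or evaluation pipelines tend to clear it.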

Vector databases and retrieval infrastructure: For RAG-based applications, a vector database (Pinecone, Weaviate, or pgvector on Postgres for smaller deployments) is a core infrastructure component. At scale, this becomes a meaningful cost and engineering investment. Storing and searching billions of document embeddings efficiently is a non-trivial distributed systems problem.
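Two numbers make "non-trivial at scale" concrete: raw storage, and the cost of exact search. The sketch below computes raw float32 storage for an embedding collection and implements exact (brute-force) cosine top-k search, whose cost grows linearly with the number of stored vectors. The 1,536-dimension figure is a hypothetical choice. Production vector databases avoid brute force with approximate nearest-neighbor indexes (pgvector, for example, offers HNSW and IVFFlat), and add index overhead and replicas on top of raw storage.

```python
import heapq
import math


def embedding_storage_tb(num_vectors: int, dims: int, bytes_per_value: int = 4) -> float:
    """Raw float storage in TB, before index overhead or replication."""
    return num_vectors * dims * bytes_per_value / 1e12


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query: list[float], corpus: dict[str, list[float]], k: int) -> list[str]:
    """Exact search: scores every document, O(N * dims) work per query."""
    return heapq.nlargest(k, corpus, key=lambda doc_id: cosine(query, corpus[doc_id]))


if __name__ == "__main__":
    print(f"{embedding_storage_tb(1_000_000_000, 1536):.2f} TB for 1B x 1536-dim float32")
    docs = {"refunds": [0.9, 0.1, 0.0], "shipping": [0.1, 0.9, 0.1], "returns": [0.8, 0.3, 0.1]}
    print(top_k([1.0, 0.2, 0.0], docs, 2))
```

At a billion vectors, brute-force scoring touches every stored float for every query, which is why approximate indexes and sharding become unavoidable.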

LLM observability and evaluation infrastructure: Production AI systems require continuous monitoring of outputs, latency, cost, and quality metrics. This infrastructure — whether built internally or purchased from vendors like Arize, Langfuse, or Braintrust — is an operational necessity that adds cost but is essential for catching model regressions and quality drift.
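At its core, this kind of monitoring means recording a few fields per request and aggregating them. The sketch below is a hypothetical minimal tracker, not the API of Arize, Langfuse, Braintrust, or any other vendor. It logs latency, token counts, cost, and a pass/fail result from some quality check (assumed to exist), then reports p95 latency using the nearest-rank method, total cost, and pass rate.

```python
import math
from dataclasses import dataclass, field


@dataclass
class RequestRecord:
    latency_s: float
    input_tokens: int
    output_tokens: int
    cost_usd: float
    passed_eval: bool  # result of a hypothetical per-request quality check


@dataclass
class LLMMetrics:
    records: list[RequestRecord] = field(default_factory=list)

    def log(self, record: RequestRecord) -> None:
        self.records.append(record)

    def p95_latency(self) -> float:
        # Nearest-rank percentile; integer math avoids float rounding in the rank.
        lat = sorted(r.latency_s for r in self.records)
        rank = max(1, math.ceil(95 * len(lat) / 100))
        return lat[rank - 1]

    def total_cost(self) -> float:
        return sum(r.cost_usd for r in self.records)

    def pass_rate(self) -> float:
        return sum(r.passed_eval for r in self.records) / len(self.records)


if __name__ == "__main__":
    m = LLMMetrics()
    for i in range(1, 21):
        m.log(RequestRecord(latency_s=float(i), input_tokens=800, output_tokens=200,
                            cost_usd=0.002, passed_eval=(i % 10 != 0)))
    print(m.p95_latency(), round(m.total_cost(), 4), m.pass_rate())
```

Catching quality drift in practice means comparing these aggregates across time windows or model versions, for example alerting when the pass rate drops after a provider updates a model.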

Data pipeline infrastructure: The preprocessing, chunking, embedding, and ingestion pipeline for RAG systems is often the unglamorous engineering work that determines whether a RAG application actually works well in production. Teams frequently underestimate this investment.
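Chunking is a representative piece of that pipeline. A minimal sketch of fixed-size chunking with overlap, so that a sentence falling on a boundary still appears intact in at least one chunk. This is an assumption-laden simplification: it splits on whitespace words rather than model tokens, and the default sizes are hypothetical. Production pipelines often chunk by tokens or by document structure (headings, paragraphs, tables).

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping windows of `chunk_size` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

Chunk size and overlap trade retrieval precision against context completeness and embedding cost. They are usually tuned against a retrieval evaluation set rather than fixed up front.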

Nvidia's Dominance and Its Challengers

No discussion of LLM economics is complete without addressing Nvidia, which has achieved a market position in AI training hardware that is remarkable in its degree of lock-in. CUDA, Nvidia's parallel computing platform, has accumulated nearly two decades of software ecosystem development since its release in 2007. The research community's code, the ML frameworks (PyTorch, JAX), and the operational tooling of production AI are all deeply optimized for Nvidia hardware.

The challengers — Google's TPUs (available via Google Cloud, outstanding performance for Google's own workloads), Graphcore's IPUs, Cerebras's Wafer-Scale Engine, Intel Gaudi, and AMD's Instinct MI-series — have made progress but face the CUDA moat problem. Switching from Nvidia to alternative hardware requires software porting work, performance tuning, and operational expertise that most organizations don't want to invest. AMD's MI300X has shown competitive performance on some benchmarks and is gaining traction among large cloud and hyperscale customers willing to invest in the software porting effort, but Nvidia retains its dominant position.

The implication: Nvidia's pricing power remains substantial, and the AI industry's compute costs are materially determined by Nvidia's capital expenditure and pricing decisions. This is a structural dependency that the industry is aware of and working to reduce — but slowly.

The Long-Term Economic View

The long-term economics of foundation models are genuinely uncertain in ways that I don't think the industry is being fully honest about. The key questions:

Does the scaling law continue to hold? If capability reliably improves with compute and the scaling trend continues, the next generation of frontier models will cost $1B+ to train. Can commercial applications generate a return on investment at that scale? The assumption embedded in current hyperscaler AI investment (Microsoft has committed $80B to AI infrastructure in 2025, and Google is investing $75B) is that the capability improvements justify the cost. This assumption is doing significant work.

When does inference become truly commoditized? If inference costs fall another 100x over the next three years (plausible given current trends), what happens to the revenue model of companies whose primary value proposition is API access to capable models? The answer depends on whether capability continues to advance faster than commoditization, maintaining a frontier premium, or whether inference quality converges enough that the cheapest provider wins.

What is the actual value being created? The economic justification for AI investment ultimately requires that the productivity gains and new capabilities enabled by AI generate more value than the training and infrastructure costs. The evidence that this holds is strong in certain domains (code generation, document processing, customer service automation) and weaker in others (creative applications, complex reasoning tasks). Investment in AI infrastructure is running ahead of measured economic value creation, which is normal for infrastructure investment during a growth phase but creates vulnerability if the value creation doesn't materialize at the projected scale.

My view: the foundation model economics work if you take the long view and if you believe (as I do) that the capability progress of the past three years will continue at a meaningful rate. The organizations best positioned are those with durable competitive advantages — proprietary data, distribution, domain expertise — that don't depend on having the cheapest compute. The organizations at risk are those whose entire value proposition is API arbitrage on model capabilities that are becoming commoditized.

Explore more from Dr. Jyothi