Model Merging for LLMs: The Inconvenient Truth From a Large-Scale Study

Model merging is one of the most intellectually satisfying ideas in deep learning: instead of training separate models for each task, or doing expensive multitask training, you simply average the weights of fine-tuned models together. The combined model supposedly inherits the capabilities of all its constituent models. It's elegant, it's cheap, and it seems to work... on vision models.

For LLMs? The story is messier. "A Systematic Study of Model Merging Techniques in Large Language Models" (arXiv: 2511.21437, Nov 2025) runs the largest rigorous evaluation of model merging on LLMs to date — and the results are sobering.

What They Tested

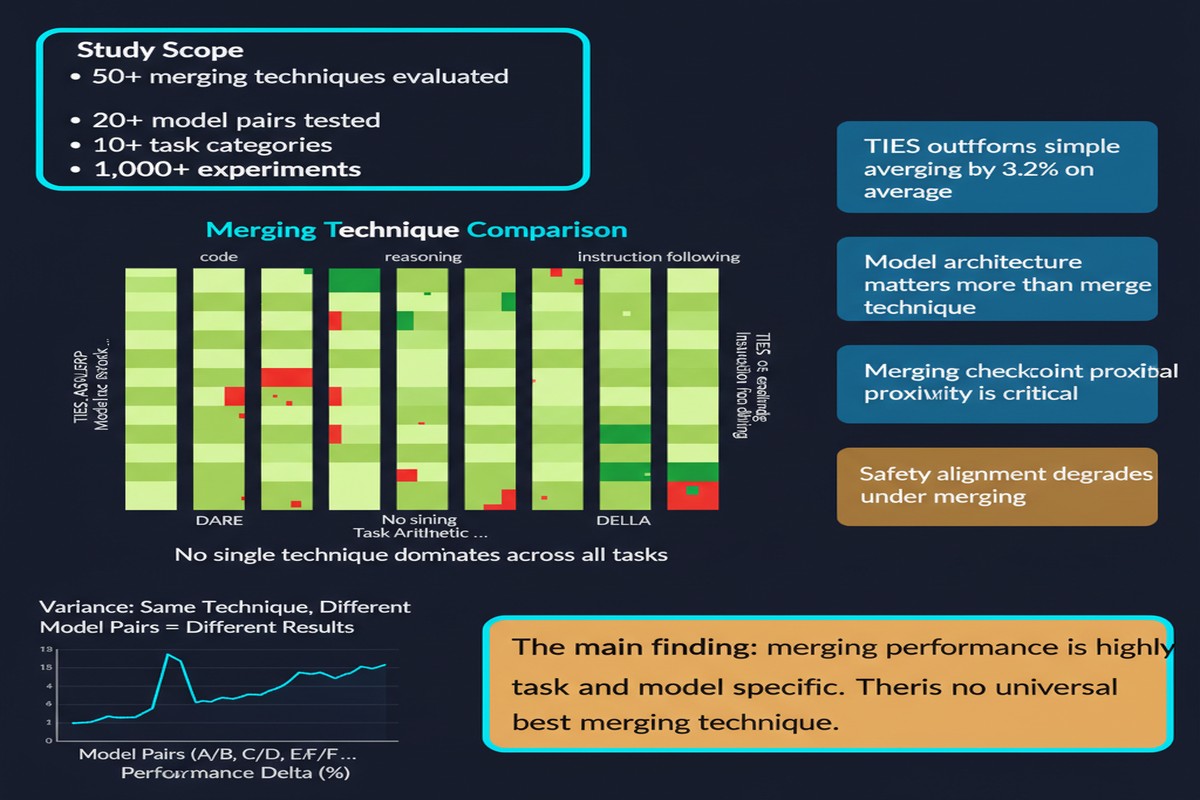

The study evaluates six state-of-the-art merging methods:

- Simple Averaging — just average the weights

- Task Arithmetic — add task-specific weight deltas to the base model

- TIES-Merging — prune conflicting parameters before merging

- DARE — randomly prune and rescale task vectors

- DELLA — magnitude-based pruning with learned scaling

- Breadcrumbs — prune by magnitude and sparsity

Evaluated across:

- 4 base LLMs (spanning different architectures and sizes)

- 12 fine-tuned checkpoints per base model (specialized for different domains)

- 16 standard LLM benchmarks (reasoning, coding, math, instruction following)

This is the scale and rigor that the field has been missing. Previous positive results for model merging came from small-scale experiments on vision models or selective cherry-picked LLM settings.

The Core Finding: Task Arithmetic Works, Everything Else Doesn't

graph TD

subgraph Merging Methods Performance vs Baseline

A[Task Arithmetic] -->|+Performance| B[Reliable Gains]

C[Simple Averaging] -->|Variable| D[Sometimes works]

E[TIES-Merging] -->|-Performance| F[Significant Drops]

G[DARE] -->|-Performance| F

H[DELLA] -->|-Performance| F

I[Breadcrumbs] -->|-Performance| F

end

style B fill:#059669,color:#fff

style D fill:#d97706,color:#fff

style F fill:#dc2626,color:#fff

Task Arithmetic (Ilharco et al., 2023) is the only method that reliably yields performance gains on LLMs across the evaluation suite. The others — TIES, DARE, DELLA, Breadcrumbs — typically result in significant performance drops compared to the individual fine-tuned models and often compared to the base model itself.

This is a damning result. TIES, DARE, and their variants were specifically designed to handle parameter interference — the problem where weights trained for different tasks conflict when merged. They work beautifully on vision models. On LLMs, they don't.

Why Don't Interference-Aware Methods Work on LLMs?

The paper's analysis points to structural differences between vision and language models:

Scale changes interference geometry. Vision models that have been merged successfully are typically smaller (ViT-B/16, ResNet-50). Modern LLMs have billions of parameters with complex interdependencies. The interference patterns that TIES and DARE are designed to handle look fundamentally different at this scale.

LLM fine-tuning creates dense, not sparse, weight changes. Instruction tuning and RLHF modify a broader set of parameters than task-specific vision fine-tuning. When you try to prune "conflicting" parameters in LLMs, you're often removing weights that encode important general capabilities, not just task-specific ones.

The distribution of weight deltas matters. Vision model task vectors tend to be relatively sparse — only a fraction of weights change significantly during fine-tuning. LLM task vectors, especially after instruction tuning, are denser. Methods designed for sparse task vectors break down in this regime.

flowchart LR

Base[Base LLM] --> FT1[Fine-tune: Coding]

Base --> FT2[Fine-tune: Math]

Base --> FT3[Fine-tune: Safety]

FT1 --> TA[Task Arithmetic:\nBase + delta1 + delta2 + delta3]

FT2 --> TA

FT3 --> TA

TA --> Result[Merged LLM\nwith combined capabilities]

style TA fill:#2563eb,color:#fff

style Result fill:#059669,color:#fff

Task Arithmetic avoids the pruning and rescaling steps that break other methods. It simply computes the weight delta for each fine-tuned model relative to the base and adds them together. This naive approach, paradoxically, is more robust.

Implications for the Field

This paper should change how researchers and practitioners think about model merging for LLMs:

Stop chasing complexity. The field has produced increasingly sophisticated interference-handling methods for model merging. The complexity isn't helping. Task Arithmetic — a simple vector addition — outperforms them on LLMs. This suggests the theoretical frameworks underlying TIES, DARE, etc. don't transfer from vision to language.

Merging is a research gap, not a solved problem. The community has been overclaiming based on vision model results. LLM-specific merging methods need to be developed from the ground up, with LLM interference geometry as the starting point.

Practical applications are narrower than advertised. Model merging for LLMs is real and useful — particularly for combining models fine-tuned on complementary tasks (e.g., coding + math) using Task Arithmetic. But it's not a general solution for composing arbitrary capabilities.

Where Model Merging Still Works

Despite the critical tone, model merging is valuable in specific LLM contexts:

Same-family, complementary tasks: Task Arithmetic reliably helps when merging models fine-tuned on complementary domains (not competing ones) from the same base model.

Checkpoint merging during pretraining (a different paper — see arXiv: 2505.12082): Merging intermediate checkpoints during pretraining, not fine-tuned checkpoints, is a different regime that shows consistent improvements for both dense and MoE models.

Alignment hedging: The "Mix Data or Merge Models?" line of research shows that merging models fine-tuned for helpfulness, harmlessness, and honesty separately can match or exceed multi-objective training — a useful alignment strategy.

Why This Matters

Model merging has been positioned as a democratizing technology — a way for teams without massive compute to combine specialized models into powerful general ones. The vision is compelling: open-source model ecosystems where you can mix and match fine-tuned adapters like Lego blocks.

This paper says that vision, for LLMs, requires different building blocks than we thought. The fine-print on model merging papers that only benchmark on vision tasks needs to be read more carefully.

My Take

I appreciate the honesty of this paper. The field has needed this kind of systematic negative result to calibrate expectations.

My read: model merging for LLMs is genuinely promising but the theoretical foundation isn't there yet. We need merging methods designed specifically for the geometry of LLM weight spaces — which are different from vision models in important ways we don't fully understand.

Task Arithmetic working while interference-aware methods fail is actually a useful signal. It suggests that the interference problem for LLMs is either structured differently than assumed, or that brute-force parameter pruning is too destructive for the dense weight landscapes of modern LLMs.

The right research direction: study the geometry of LLM weight deltas empirically, build interference models specific to instruction-tuned LLMs, then design merging algorithms to match. Until then, use Task Arithmetic and manage your expectations.

Model merging is not dead. It just needs LLM-native methods.

Paper: "A Systematic Study of Model Merging Techniques in Large Language Models", arXiv: 2511.21437, Nov 2025.