SuperBPE: Why Every LLM Tokenizer Is Getting Word Boundaries Wrong

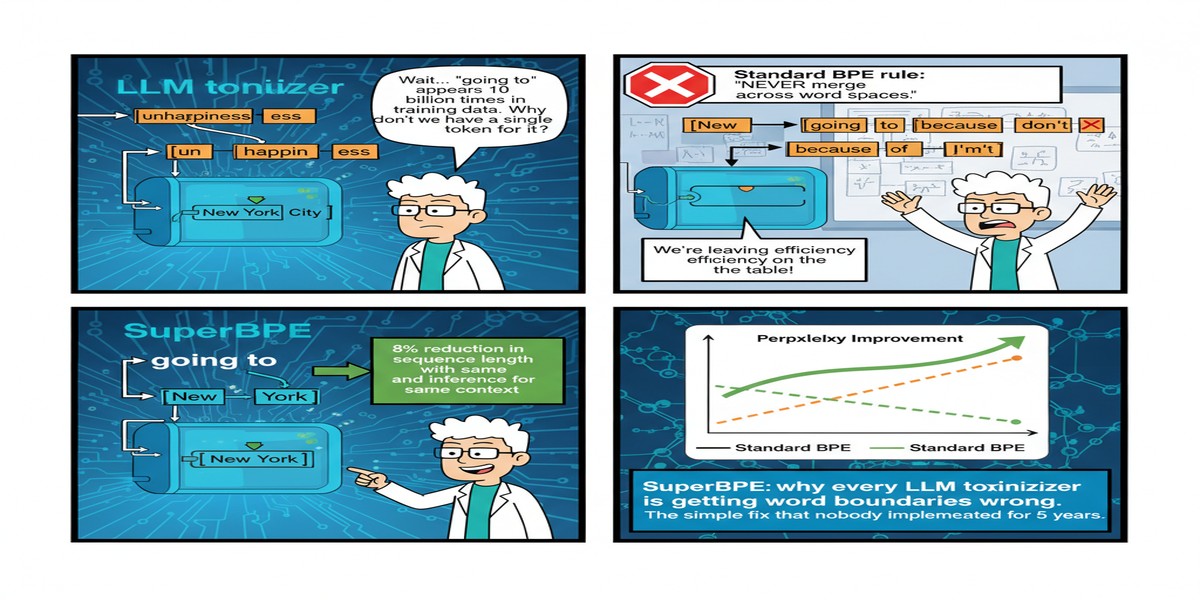

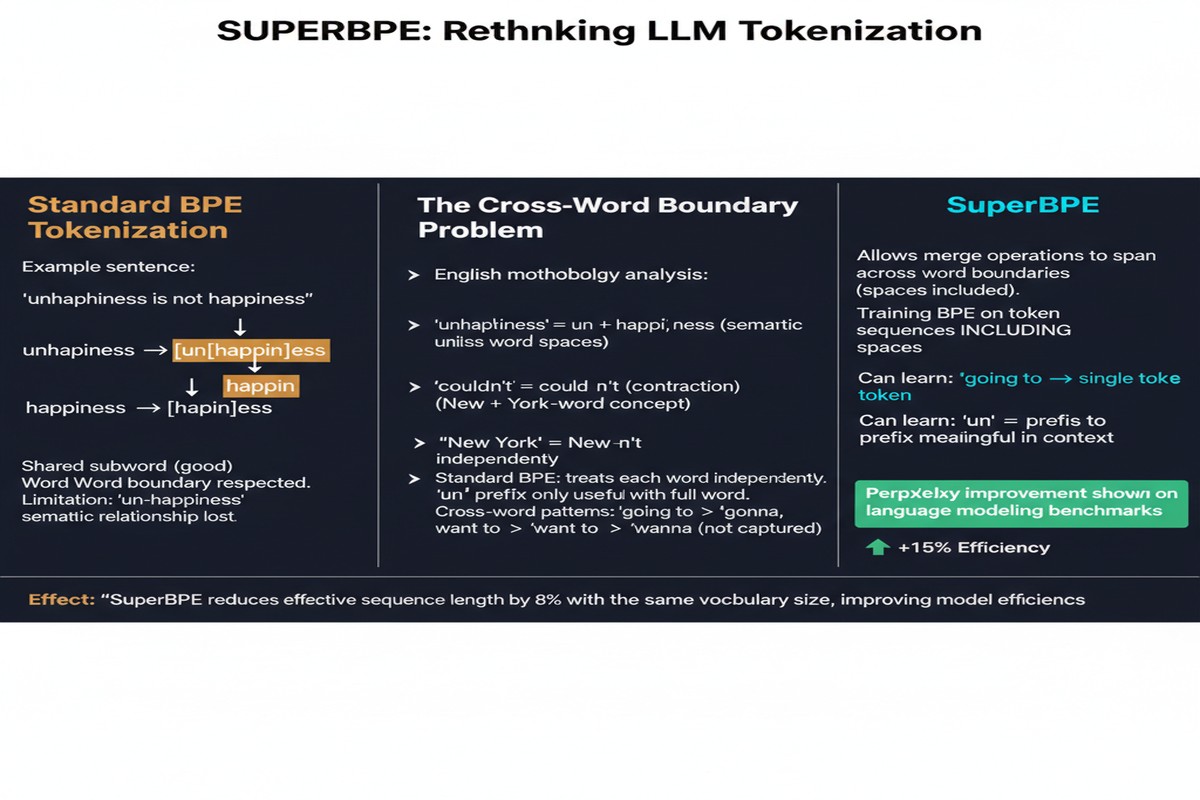

There's a quiet assumption baked into virtually every language model you've ever used: tokens shouldn't cross word boundaries. GPT, LLaMA, Qwen, Mistral — they all use variants of Byte Pair Encoding (BPE) that respect whitespace as a hard constraint. A token can be a subword fragment like ##ing or a full word like transformer, but it can never be something like transformer-based or in the.

SuperBPE (arxiv: 2503.13423), published at COLM 2025, says this assumption is wrong — and proves it with numbers that should make the NLP community uncomfortable.

What SuperBPE Actually Does

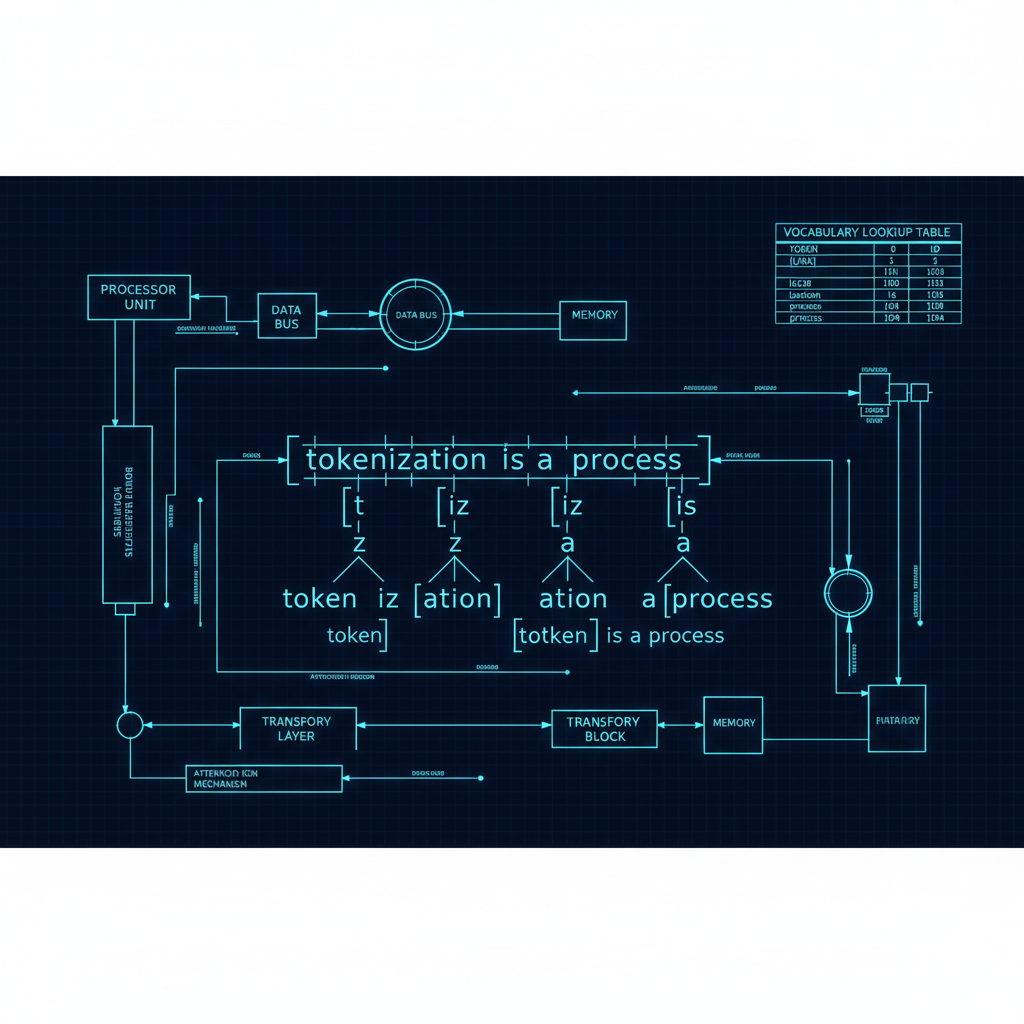

Standard BPE works by greedily merging the most frequent byte or character pair across a corpus, iteratively building up a vocabulary of subword tokens. The critical constraint is that merges never cross whitespace. This "pretokenization" step — splitting text by spaces before BPE runs — is treated as gospel.

SuperBPE questions that gospel. It operates in two passes:

flowchart TD

A[Raw Text Corpus] --> B[Pass 1: Standard BPE\nLearn subword vocabulary\nwithin word boundaries]

B --> C[Subword Tokenizer V1]

C --> D[Pass 2: SuperBPE\nLearn superword merges\nacross whitespace boundaries]

D --> E[Final Superword Vocabulary]

E --> F[Tokenized Text with\nCross-Word Tokens]

style B fill:#2563eb,color:#fff

style D fill:#7c3aed,color:#fff

style F fill:#059669,color:#fff

Pass 1 runs standard BPE to learn conventional subword tokens. This gives you a baseline tokenizer.

Pass 2 runs a second BPE phase on top, but now treats the subword-tokenized text as the input. Crucially, this second pass is allowed to merge across what were previously whitespace boundaries, creating "superwords" — multi-word tokens for common phrases like in the, of the, New York, or domain-specific compound expressions.

The curriculum design matters here. By first learning subwords properly, then layering superwords on top, SuperBPE avoids the instability of trying to learn everything cross-boundary at once.

The Numbers Are Hard to Ignore

SuperBPE's results at a vocabulary size of 200K:

- 33% fewer tokens to encode the same text compared to standard BPE

- +4.0% absolute improvement on average across 30 downstream tasks

- +8.2% improvement on MMLU alone — the standard LLM knowledge benchmark

- 27% less compute at inference time due to shorter sequences

That last point is underappreciated. Inference costs scale with sequence length. If you can encode the same text in a third fewer tokens, you get a third reduction in KV-cache memory, attention computation, and decoding steps. This is essentially free inference acceleration without changing the model architecture.

The MMLU improvement of 8.2 points is genuinely surprising. MMLU tests factual knowledge and reasoning across 57 subjects. The fact that a better tokenizer moves the needle this much suggests that tokenization-induced fragmentation of common phrases and compound concepts was actively hurting the model's ability to represent knowledge.

Why Word Boundaries Were Sacred in the First Place

The word-boundary constraint wasn't arbitrary. It emerged from practical concerns:

Computational tractability: Pre-tokenizing by whitespace dramatically reduces the BPE search space. Without it, every possible sequence of characters becomes a candidate merge target.

Linguistic intuition: Words are meaningful units. It seemed natural that tokens should align with or subdivide them.

Historical inertia: GPT-2's tokenizer made this choice in 2019, and every major model since has followed the pattern.

SuperBPE's two-pass curriculum sidesteps the computational tractability problem entirely. By doing standard BPE first, then superword merges, the search space for the second pass is already constrained to subword units — manageable and fast.

The Deeper Insight: Per-Token Difficulty Uniformity

The paper's analysis reveals something philosophically interesting: SuperBPE produces tokenizations where per-token "difficulty" is more uniform. Standard BPE creates tokens of wildly varying complexity — some tokens are trivial single characters, others are dense technical subwords. This variance creates uneven gradients during training.

SuperBPE's cross-word tokens tend to capture semantically coherent multi-word expressions: fixed phrases, proper nouns, common collocations. These are single concepts masquerading as multiple words. By tokenizing them as single units, the model can treat them appropriately rather than reconstructing their meaning from fragmented parts.

graph LR

subgraph Standard BPE

A1[New] --- A2[York] --- A3[is] --- A4[crowded]

end

subgraph SuperBPE

B1[New York] --- B2[is] --- B3[crowded]

end

style A1 fill:#ef4444,color:#fff

style A2 fill:#ef4444,color:#fff

style B1 fill:#059669,color:#fff

New York as two tokens forces the model to learn their relationship through co-occurrence. New York as one superword token encodes their unity directly in the vocabulary.

Why This Matters

Tokenization is the first transformation applied to every input and the last applied to every output. It shapes what a model can and cannot represent efficiently. Yet for most of LLM history, tokenization has been treated as a solved problem — a preprocessing detail, not a research frontier.

SuperBPE shows there's significant headroom left. If a simple two-pass modification to BPE yields +4% across 30 benchmarks, what else is the field leaving on the table?

The implications extend beyond English. Agglutinative languages (Turkish, Finnish, Hungarian) and morphologically rich languages have always struggled with BPE. Superword tokenization — if extended to understand morphological and cross-word patterns in these languages — could provide substantially larger gains than English models see.

My Take

This paper is overdue. The word-boundary constraint was always a convenience assumption, not a linguistic truth. The fact that SuperBPE yields a clean 33% token reduction and meaningful benchmark improvements should make every major AI lab reconsider their tokenization strategy.

I do have one concern: vocabulary bloat. At 200K vocabulary size, adding cross-word superwords could create sparse coverage issues for rare or domain-specific text that doesn't match common phrase patterns. The paper doesn't deeply analyze out-of-distribution behavior on specialized corpora.

But the core result stands. If you're training a new model from scratch, there's now a compelling argument to use SuperBPE over vanilla BPE. The question is whether existing labs will retrofit their tokenizers mid-training-run — and the answer is almost certainly no, because vocabulary changes require full retraining.

The next step should be integrating SuperBPE principles into multilingual tokenizers and studying how superword tokens interact with retrieval, generation diversity, and fine-tuning stability. There's a rich research agenda here that the NLP community should chase aggressively.

Tokenization is core infrastructure. Time to start treating it like it matters.

Paper: "SuperBPE: Space Travel for Language Models", COLM 2025. arXiv: 2503.13423.