Ask GPT-4 how many r's are in the word "strawberry" and there is a reasonable chance it will get it wrong. This is not a reasoning failure or a knowledge gap — the model knows what the letter r looks like in other contexts. It is a tokenization failure: the model does not "see" the individual characters of words unless it has been specifically trained to process them that way.

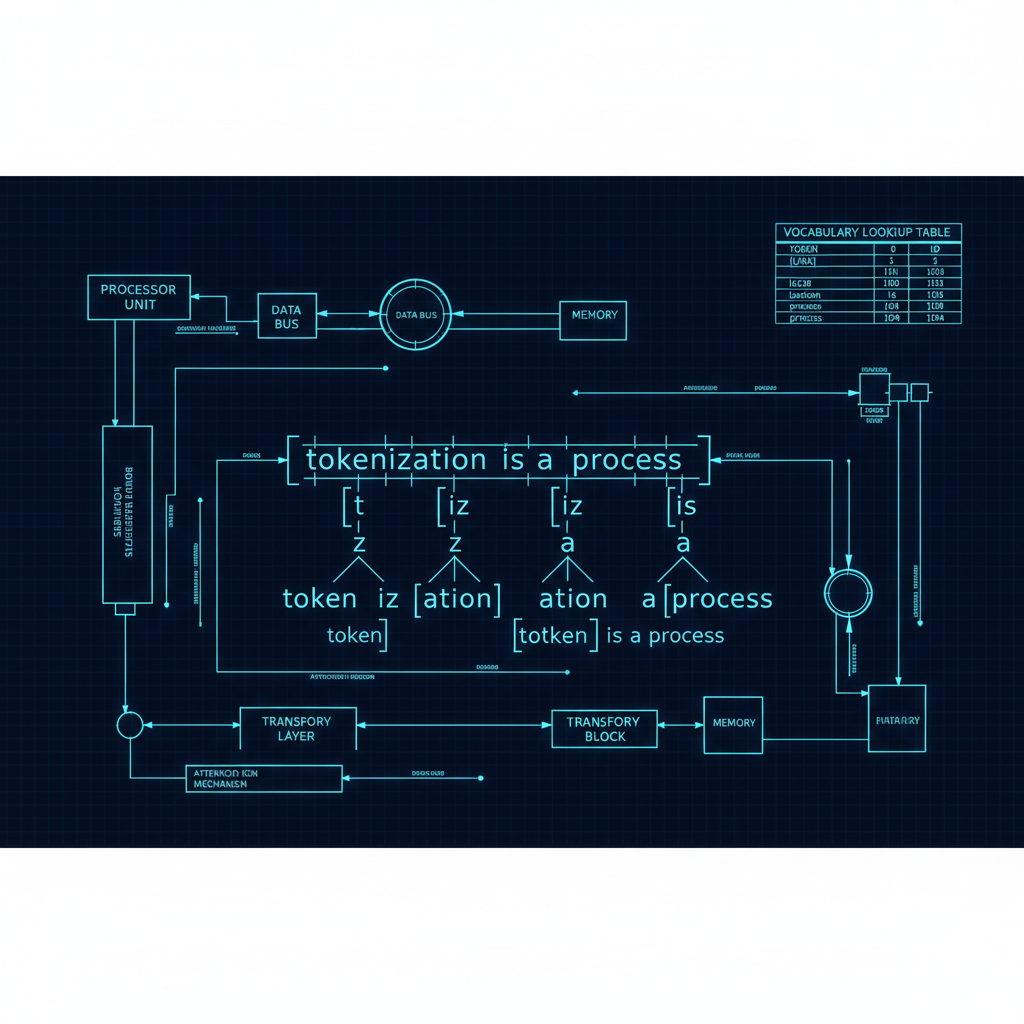

This example is a window into one of the most underappreciated aspects of how language models work: the tokenizer. Before a model processes a single piece of text, a tokenizer converts it into a sequence of integer IDs representing subword units. The choices made in tokenizer design — what units to use, what the vocabulary size is, how to handle unusual inputs — shape what models can and cannot do in ways that persist through training and cannot be fixed through prompting alone.

What Tokenization Is and Why It Exists

The earliest neural language models operated on individual characters or words. Characters are appealing because the vocabulary is small (for English, the letters plus digits, punctuation, and whitespace) and the representation is unambiguous. Words are appealing because they align with meaningful semantic units. Both have serious problems at scale.

Character-level models face very long sequence lengths (even a short paragraph runs to hundreds of characters), which strains attention-based architectures whose cost grows quadratically with sequence length. Word-level models face the open-vocabulary problem: any word not seen in training must be mapped to a generic out-of-vocabulary token, and the vocabulary of natural language is effectively unbounded (proper nouns, technical terms, new coinages, rare words).

Subword tokenization splits words into common subword units — "tokenize" might become ["token", "ize"], and "unbelievable" might become ["un", "believ", "able"]. This balances the competing demands: sequences are not as long as character-level, and the vocabulary covers the vast majority of text without out-of-vocabulary issues.
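To make the splitting concrete, here is a minimal sketch of one simple segmentation strategy: greedy longest-match against a fixed vocabulary. The vocabulary `TOY_VOCAB` and the function `segment` are hypothetical and chosen only to reproduce the two examples above. Real tokenizers learn their vocabulary from data and may use a different matching rule.

```python
def segment(word: str, vocab: set[str]) -> list[str]:
    """Split `word` into vocabulary pieces, always taking the longest match."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest candidate first and shrink until one is in the vocabulary.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # Nothing matched: fall back to a single character so no input is rejected.
            pieces.append(word[start])
            start += 1
    return pieces


# Hypothetical toy vocabulary, not taken from any real tokenizer.
TOY_VOCAB = {"token", "ize", "un", "believ", "able"}

print(segment("tokenize", TOY_VOCAB))      # ['token', 'ize']
print(segment("unbelievable", TOY_VOCAB))  # ['un', 'believ', 'able']
```

The single-character fallback is what removes the out-of-vocabulary problem: an unfamiliar word costs more pieces, but it is never unrepresentable.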

Byte Pair Encoding: The Dominant Approach

Byte Pair Encoding (BPE), introduced for NLP by Sennrich et al. (2016) and then adopted by GPT-2, GPT-3, GPT-4, and most subsequent models, is the dominant subword tokenization method. The algorithm starts with a character-level vocabulary and iteratively merges the most frequent pair of adjacent tokens into a new combined token, until the desired vocabulary size is reached.
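The merge loop is short enough to sketch in full. The version below is a toy illustration that assumes the corpus is a plain list of words and ignores details production tokenizers care about (pre-tokenization rules, byte-level input, tie-breaking conventions). The name `train_bpe` is made up for this example.

```python
from collections import Counter


def train_bpe(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` BPE merges from a list of words."""
    # Each distinct word becomes a tuple of symbols (single characters at first).
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count every adjacent symbol pair, weighted by word frequency.
        pair_counts = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # every word is already a single symbol
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite the corpus with the winning pair fused into one symbol.
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges


print(train_bpe(["abab", "abab", "ab"], num_merges=5))
```

The returned merge list, applied in order, is the tokenizer: encoding new text means replaying the same merges on its characters.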

The result is a vocabulary where common words are represented as single tokens, common prefixes and suffixes are their own tokens, and rare words are split into recognizable subword pieces. The vocabulary size is a key hyperparameter: GPT-4 uses a vocabulary of roughly 100,000 tokens, while GPT-2 and GPT-3 used roughly 50,000. Larger vocabularies reduce average sequence length (more words become single tokens) but increase the number of embeddings the model must learn.

OpenAI's GPT tokenizers (distributed in the tiktoken library) apply BPE at the byte level, which handles any Unicode text without out-of-vocabulary issues: any byte sequence can be represented, even if specific characters are rare or novel. This "byte-level BPE" approach is used by GPT-2 through GPT-4 and their successors.
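The coverage guarantee comes from the starting alphabet rather than from the merges. As a small sketch using only Python's standard library (the helper names are illustrative and not part of any tokenizer API), every string maps to a sequence of values between 0 and 255, and that sequence decodes back to the original text:

```python
def to_byte_ids(text: str) -> list[int]:
    """Encode text as UTF-8 and return the raw byte values (0-255)."""
    return list(text.encode("utf-8"))


def from_byte_ids(ids: list[int]) -> str:
    """Invert to_byte_ids."""
    return bytes(ids).decode("utf-8")


sample = "naïve 🍓"
ids = to_byte_ids(sample)
print(ids)
print(from_byte_ids(ids) == sample)  # True
```

A byte-level BPE vocabulary starts from these 256 values and adds merged tokens on top, so the worst case for an unusual character is a few extra tokens, never an unknown-token placeholder.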

Other model families, including the original Llama and Mistral models, use SentencePiece, a tokenization library whose subword models operate on Unicode characters rather than raw bytes (typically with a byte fallback for unseen characters) but otherwise have similar properties.

How Tokenization Creates Model Failure Modes

The strawberry example reveals a general pattern: current tokenization schemes obscure character-level structure in ways that create systematic model weaknesses.

Spelling and character counting. When "strawberry" is tokenized as ["straw", "berry"] or ["st", "raw", "berry"], the model sees a sequence of subword token IDs, not individual characters. Counting the occurrences of a specific letter requires knowing how each token is spelled, and that information is not part of the input the model receives. The model has to learn, through training, to decompose tokens into their character constituents, and this learning is imperfect and inconsistent.
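A toy illustration of what the model is actually given: the IDs below are invented for this example, and the split into "straw" and "berry" is one of the possibilities mentioned above rather than the output of a specific tokenizer.

```python
# Hypothetical vocabulary: piece -> integer ID.
TOY_VOCAB = {"straw": 0, "berry": 1}
ID_TO_PIECE = {token_id: piece for piece, token_id in TOY_VOCAB.items()}


def count_letter(token_ids: list[int], letter: str) -> int:
    """Count a letter by looking up the spelling behind each token ID."""
    return sum(ID_TO_PIECE[token_id].count(letter) for token_id in token_ids)


token_ids = [TOY_VOCAB["straw"], TOY_VOCAB["berry"]]
print(token_ids)                     # [0, 1]
print(count_letter(token_ids, "r"))  # 3
```

The count is trivial here only because the code has the `ID_TO_PIECE` table. A model receives just `[0, 1]` and has to have absorbed the equivalent of that table from its training data.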

Number representation. Numbers present similar challenges. "1234567" might be tokenized as a single token or as ["1", "234", "567"] or as something else entirely, depending on the tokenizer and training distribution. Arithmetic on tokenized numbers requires the model to understand the positional significance of digits that may be arbitrarily grouped across token boundaries. The systematic failure of early models on multi-digit arithmetic is substantially a tokenization problem, not just a reasoning problem.
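To see why arbitrary grouping hurts, compare two hypothetical chunking rules for digit strings. Neither is claimed to match a particular tokenizer; they only show how place value can end up misaligned with token boundaries.

```python
def chunk_left(digits: str, size: int = 3) -> list[str]:
    """Group digits from the left, as a left-to-right tokenizer might."""
    return [digits[i:i + size] for i in range(0, len(digits), size)]


def chunk_right(digits: str, size: int = 3) -> list[str]:
    """Group digits from the right, so chunks align with thousands."""
    head = len(digits) % size
    chunks = [digits[:head]] if head else []
    chunks += [digits[i:i + size] for i in range(head, len(digits), size)]
    return chunks


print(chunk_left("1234567"))   # ['123', '456', '7']
print(chunk_right("1234567"))  # ['1', '234', '567']
print(chunk_left("234567"))    # ['234', '567']
```

With left-to-right grouping, prepending a single digit changes every chunk, so "1234567" and "234567" share no tokens even though they agree in their last six digits. Right-aligned grouping keeps the same digits in the same chunks.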

Multilingual inequality. Tokenizers trained on predominantly English text are inefficient for other languages. A word in Chinese, Arabic, or a low-resource African language may require many more tokens to represent than an equivalent English expression. This means that for the same context window, models can process far less content in non-English languages, and the effective training exposure to non-English text in terms of semantic units is lower than raw token counts suggest.

Research has documented that this multilingual tokenization inequality systematically disadvantages users of languages that are poorly represented in training data. Multilingual models and tokenizers designed with language balance in mind (like those used in mBERT or BLOOM) partially address this, but the problem persists in most frontier models.
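A crude way to see where the gap starts is to count UTF-8 bytes, which are the base units of a byte-level tokenizer before any merges are applied. This is only a proxy, under the assumption that scripts with few learned merges stay close to their byte count. Actual token counts depend on the specific tokenizer and should be measured with it.

```python
def utf8_bytes(text: str) -> int:
    """Number of bytes needed to store `text` in UTF-8."""
    return len(text.encode("utf-8"))


# The same greeting in four languages (illustrative sample only).
greetings = {
    "English": "hello",
    "Chinese": "你好",
    "Arabic": "مرحبا",
    "Hindi": "नमस्ते",
}

for language, word in greetings.items():
    print(f"{language}: {len(word)} code points, {utf8_bytes(word)} bytes")
```

ASCII text costs one byte per character, while most other scripts cost two or three, so a tokenizer that has learned few merges for a script starts from a longer sequence for the same content.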

Code and specialized syntax. How efficiently code tokenizes varies by programming language and by formatting. The same Python program indented with two spaces, four spaces, or tabs can produce different token sequences even though its behavior is identical. Mathematical notation, LaTeX, and other structured formats may be tokenized in ways that obscure their structure.

Alternative Tokenization Approaches

Several research directions explore alternatives to standard BPE that might address these limitations.

Character-level models with efficient attention. With sufficient attention efficiency improvements (FlashAttention, linear attention), character-level models become computationally feasible for shorter texts. MegaByte (Yu et al., 2023) proposed a hierarchical approach: group the byte sequence into fixed-size patches, model the contents of each patch with a small local model, and model the sequence of patches with a larger global model. This retains character-level access while controlling sequence length.
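The patching step itself is simple. The sketch below shows only that step, not MegaByte's actual implementation: the function name, the zero padding value, and the patch size are assumptions made for illustration.

```python
def patchify(data: bytes, patch_size: int, pad: int = 0) -> list[list[int]]:
    """Split a byte string into fixed-size patches, padding the last one."""
    ids = list(data)
    remainder = len(ids) % patch_size
    if remainder:
        ids += [pad] * (patch_size - remainder)
    return [ids[i:i + patch_size] for i in range(0, len(ids), patch_size)]


patches = patchify("strawberry".encode("utf-8"), patch_size=4)
print(len(patches))  # 3
print(patches[0])    # [115, 116, 114, 97]
```

The larger model then attends over 3 patch positions instead of 10 byte positions, while every individual byte is still visible inside its patch.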

Byte-level approaches without tokenization. ByT5 and similar models work directly at the byte level, eliminating the tokenizer entirely. This provides perfect coverage of any text and equal treatment of all languages, but requires the model to learn linguistic structure from scratch at a very low level. Sequence lengths are dramatically longer, which increases compute requirements.

Word-level tokenization with better OOV handling. Some research has revisited word-level tokenization with explicit mechanisms for handling unknown words — morphological decomposition, character fallback, or cached rare word embeddings — to avoid the information loss of aggressive subword splitting.

Morphologically-informed tokenization. Rather than BPE (which is frequency-based and does not respect morphological boundaries), linguistically motivated tokenization that splits words along morpheme boundaries could provide more principled representations. This is particularly relevant for morphologically rich languages where BPE behavior is less predictable.

Recent Improvements and Workarounds

The field has not stood still on tokenization issues.

OpenAI's models appear to have improved their character-level awareness over time, possibly through training data that includes explicit character-level tasks. GPT-4o is noticeably better at character counting and spelling tasks than GPT-3.5, which suggests that training data curation, and perhaps auxiliary training objectives, can teach models to reason about character-level structure despite tokenization.

Larger context windows partially mitigate tokenization inefficiency for non-English languages — with more tokens available, the practical impact of needing more tokens per semantic unit decreases.

Some recent models have experimented with dynamic tokenization — adapting the tokenization strategy based on the input domain — though this adds complexity and is not yet mainstream.

Instruction-following training has helped models learn to be explicit about tokenization uncertainty. Modern models are more likely to hedge on spelling tasks ("let me check each character...") rather than confidently confabulating.

Practical Implications for Practitioners

For developers building applications on top of language models, tokenization has several practical implications.

Cost and context length. Token counts determine both API costs and whether your content fits in a context window. Non-English text, code with significant whitespace, and heavily symbolic text (URLs, mathematical notation) may use tokens less efficiently than plain English prose.
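A budgeting helper along these lines can make the check explicit. Everything in this sketch is hypothetical: the function names, the price, the context window size, and especially `rough_count`, which assumes about four bytes per token as a stand-in. In a real application the counter should be the tokenizer of the model you are calling.

```python
from typing import Callable


def estimate(
    text: str,
    count_tokens: Callable[[str], int],
    context_window: int,
    price_per_1k_tokens: float,
) -> dict:
    """Report token count, whether the text fits, and the input cost."""
    n_tokens = count_tokens(text)
    return {
        "tokens": n_tokens,
        "fits": n_tokens <= context_window,
        "cost": n_tokens / 1000 * price_per_1k_tokens,
    }


def rough_count(text: str) -> int:
    # Placeholder heuristic (assumption): about 4 UTF-8 bytes per token.
    return max(1, len(text.encode("utf-8")) // 4)


report = estimate("a" * 4000, rough_count, context_window=8192, price_per_1k_tokens=0.01)
print(report)
```

Passing the counter in as a parameter keeps the budgeting logic independent of any one tokenizer, which matters when the same application serves several models or languages.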

Input preprocessing. For tasks involving character-level reasoning (spelling, abbreviation expansion, anagram detection), explicitly breaking text into individual characters in the prompt — or providing character-by-character versions — can substantially improve performance.
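One way to do this preprocessing is shown below. The prompt wording and both function names are illustrative assumptions, not a tested recipe, and how much this helps will vary by model.

```python
def spell_out(word: str, sep: str = " ") -> str:
    """Return the word with a separator between every character."""
    return sep.join(word)


def build_counting_prompt(word: str, letter: str) -> str:
    """Build a prompt that shows the model the word one character at a time."""
    return (
        f'The word "{word}" spelled letter by letter is: {spell_out(word)}\n'
        f'How many times does the letter "{letter}" appear?'
    )


print(spell_out("strawberry"))  # s t r a w b e r r y
print(build_counting_prompt("strawberry", "r"))
```

Separating the characters tends to push the tokenizer toward one token per letter, which puts the spelling directly in the model's input instead of leaving it implicit in a subword token.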

Number handling. For arithmetic tasks, explicitly providing structured format guidance ("calculate step by step, writing each digit separately") helps the model navigate token boundary issues. For applications requiring precise arithmetic, calling a calculator tool rather than relying on in-context math is almost always better.
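As a sketch of what such a calculator tool might look like, the function below evaluates basic arithmetic by walking Python's syntax tree, accepting only numbers and the four basic operators. The name `calculate` and the set of supported operators are choices made for this example. Wiring it into a model's tool-calling interface is outside its scope.

```python
import ast
import operator

# Operators the tool is willing to evaluate; everything else is rejected.
_OPERATORS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression: str):
    """Evaluate +, -, *, / on numeric literals without using eval()."""

    def evaluate(node):
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPERATORS:
            return _OPERATORS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPERATORS:
            return _OPERATORS[type(node.op)](evaluate(node.operand))
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


print(calculate("1234567 * 89"))  # 109876463
```

Because the arithmetic runs on integers rather than on tokens, the result does not depend on how the digits happened to be grouped in the prompt.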

Multilingual applications. When serving non-English users, budget more tokens per semantic unit than you would for English, and evaluate performance explicitly in the target language rather than assuming that English benchmark performance transfers proportionally.

The Long View

Tokenization is one of those foundational choices in deep learning that has enormous downstream consequences but does not get the research attention its impact deserves. The entire edifice of language model capability is built on top of these token sequences, and the structure of those sequences shapes what the models can easily learn to do.

It is plausible that future generations of language models will use fundamentally different input representations — perhaps operating at the character or byte level with efficient enough attention mechanisms, perhaps using learned tokenizers that adapt dynamically to their inputs. Until then, understanding the tokenizer is essential for understanding both the strengths and the systematic limitations of the models built on top of it.

The strawberry problem is small. The principle it reveals is not.