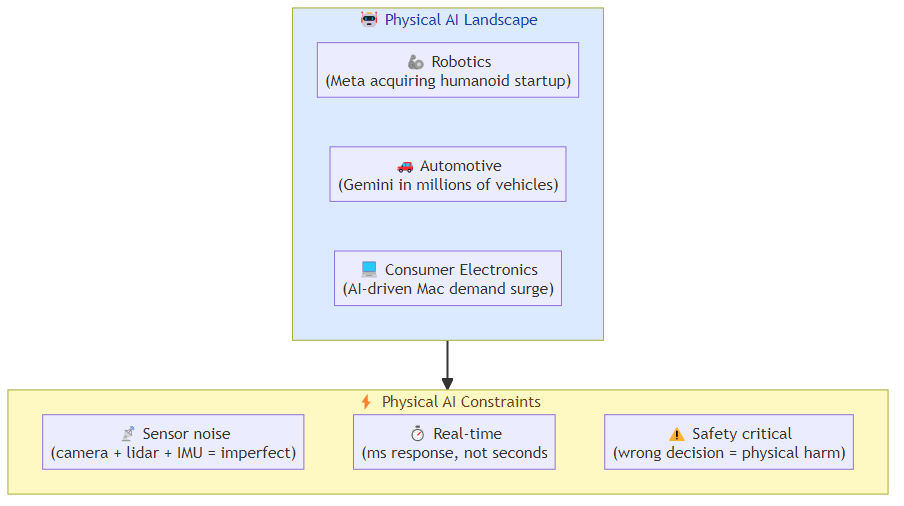

The conversation about AI agents has been almost entirely about software: LLM-based systems that reason, plan, and act in digital environments. But a parallel track is developing where AI agents operate in the physical world — robots that navigate environments, vehicles that make driving decisions, manufacturing systems that adapt in real time.

The evidence is accumulating. Meta acquired a robotics startup to advance its humanoid AI ambitions. Google's Gemini is expanding into millions of vehicles. Apple saw surprising demand for Macs driven by AI capabilities. These aren't just product announcements — they're signals that the AI industry is preparing for a future where agentic AI isn't confined to software.

Why Physical AI Is Different

Software AI agents operate in an environment they can fully model: text, code, structured data. The physical world introduces constraints that software agents don't face:

Sensor limitations: a robot's perception of the world is mediated by sensors that have noise, latency, and blind spots. An LLM that reasons about a scene from a perfect textual description behaves differently than one that reasons from noisy camera feeds and imprecise sensor data.
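One common way to cope with noisy readings before any reasoning happens is to smooth them. The sketch below uses an exponential moving average; the readings, the `alpha` value, and the function name are illustrative assumptions, not taken from any particular robot stack.

```python
def exponential_moving_average(readings, alpha=0.3):
    """Smooth noisy readings; alpha is an assumed smoothing factor in (0, 1]."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    estimate = None
    for reading in readings:
        # Seed with the first reading, then blend each new reading into the estimate.
        if estimate is None:
            estimate = reading
        else:
            estimate = alpha * reading + (1 - alpha) * estimate
        smoothed.append(estimate)
    return smoothed


# Hypothetical range-sensor readings in metres, with one spurious spike.
noisy_distances = [2.0, 2.1, 1.9, 5.0, 2.0, 2.1]
filtered = exponential_moving_average(noisy_distances)
```

The spike is damped rather than removed, and the smoothing itself adds lag, which is one reason perception quality and response time end up being traded against each other.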

Actuator constraints: software agents can take any action that the system permits. Physical agents can only take actions that their mechanical embodiment allows — and mechanical systems have inertia, wear, and failure modes that software doesn't.
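A minimal sketch of what an actuator constraint looks like in code: whatever the planner requests is clamped to what the hardware can deliver. The limits below (a top speed and a maximum change per control tick) are hypothetical numbers chosen for illustration.

```python
def limit_command(target, current, max_value, max_step):
    """Clamp a requested setpoint to assumed hardware limits.

    max_value bounds the absolute setpoint; max_step bounds the change per
    control tick, a crude stand-in for inertia and slew-rate limits.
    """
    bounded = max(-max_value, min(max_value, target))
    step = max(-max_step, min(max_step, bounded - current))
    return current + step


# The planner asks for 3.0 rad/s, but this hypothetical joint tops out at
# 1.5 rad/s and can only change by 0.2 rad/s per tick.
velocity = 0.0
for _ in range(3):
    velocity = limit_command(3.0, velocity, max_value=1.5, max_step=0.2)
```

After three ticks the joint is still far from the requested speed, which is the point: the agent's plan has to account for what the body can do, not just what the policy wants.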

Real-time requirements: software agents can often take seconds or minutes to respond. Physical agents in dynamic environments often need to respond in milliseconds — a robot navigating a crowded warehouse or a vehicle responding to a sudden obstacle can't wait for a language model to finish reasoning.
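To make the latency gap concrete, here is a small worked calculation of how far a robot travels before it can even begin to react. All of the figures are assumptions picked for illustration, not measurements.

```python
def distance_during_latency(speed_m_per_s, latency_s):
    """Distance covered while the agent is still waiting on a decision."""
    return speed_m_per_s * latency_s


# Assumed figures for illustration only.
robot_speed = 2.0        # m/s, roughly a brisk walking pace
reflex_latency = 0.02    # 20 ms local control loop
model_latency = 2.0      # 2 s for a large language model to respond

blind_reflex = distance_during_latency(robot_speed, reflex_latency)
blind_model = distance_during_latency(robot_speed, model_latency)
```

Under these assumptions the local loop is blind for about 4 cm, while waiting on the model means 4 m of travel with no new decision. That gap is why slow deliberation is usually kept out of the inner control loop.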

Safety criticality: a software agent that makes a wrong decision typically causes data problems or user frustration. A physical agent that makes a wrong decision can cause physical harm. This changes everything about how you evaluate, test, and deploy these systems.

The Hardware Bet

Meta's acquisition of a robotics company for humanoid AI is a specific signal about where the industry is heading. Humanoid robots are a particularly interesting target because they represent the physical embodiment that can most easily replace human labor in environments designed for human bodies.

The technical challenges are significant. Humanoid robots require:

- Fine motor control for manipulation tasks

- Balance and locomotion in unstructured environments

- Natural language understanding for human-robot interaction

- Navigation in spaces designed for human movement

- Physical reasoning about object interactions

These are all active research problems, but the integration challenge is what makes humanoid robotics specifically hard. A system that can do all of these things individually needs to do them simultaneously, in real time, while handling the unexpected.

The Automotive AI Frontier

Google's Gemini expansion into vehicles represents a different physical AI application: an AI assistant that operates while the user is busy with a primary task (driving) and can spare only intermittent attention for it. This is an interesting middle ground between pure software agents and fully autonomous systems.

The use case is becoming clear: drivers who need to interact with complex systems (navigation, climate, communication, entertainment) while keeping their attention on the road. AI assistants that can handle these interactions through natural language — "find a charging station within 50 miles that has a coffee shop nearby" — reduce the distraction burden of vehicle interaction.

The long-term play is likely autonomous driving, where the AI assistant isn't just helping with infotainment but coordinating the vehicle's operational decisions. Google's automotive partnerships are building toward a future where the AI assistant is a co-pilot, not just a voice interface.

Consumer Electronics as Physical AI

Apple's surprise at AI-driven Mac demand is interesting because it suggests a different physical AI angle: AI capabilities in personal devices that change hardware purchasing decisions. The "AI Mac" is becoming a product category, not just a feature.

This creates a new dimension for physical AI: devices that are specified, in part, by their AI capabilities. The laptop that can run local LLMs for privacy-sensitive tasks, the phone that can do on-device inference for low-latency AI responses, the desktop that can serve as an AI workstation: all are physical AI deployments that change how people think about their devices.

The implication for AI builders: the distribution of AI capability is shifting from cloud-only to cloud plus edge. Edge deployment (on-device inference) has different characteristics from cloud deployment, typically lower latency, stronger privacy, and lower marginal cost per query, and it is becoming a primary deployment target for physical AI applications.
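A cloud plus edge split usually implies some routing policy. The sketch below shows one simple possibility; the `Request` fields, the 300 ms round-trip figure, and the rule ordering are all hypothetical choices, not a description of any vendor's system.

```python
from dataclasses import dataclass


@dataclass
class Request:
    privacy_sensitive: bool
    latency_budget_ms: int
    needs_large_model: bool


def choose_target(request, cloud_round_trip_ms=300):
    """Return "edge" or "cloud" under a simple, assumed policy."""
    if request.privacy_sensitive:
        return "edge"   # keep sensitive data on the device
    if request.latency_budget_ms < cloud_round_trip_ms:
        return "edge"   # the network round trip alone would miss the budget
    if request.needs_large_model:
        return "cloud"  # capacity the device is assumed not to have
    return "edge"       # default to the local path


voice_command = Request(privacy_sensitive=False, latency_budget_ms=100, needs_large_model=False)
trip_planning = Request(privacy_sensitive=False, latency_budget_ms=5000, needs_large_model=True)
```

Here `choose_target(voice_command)` stays on the device because of its tight latency budget, while `choose_target(trip_planning)` goes to the cloud.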

The Agentic AI Convergence

What's happening across these domains — robotics, automotive, consumer electronics — is a convergence toward the same architectural pattern: physical AI agents that combine sensing, reasoning, and actuation in real time.

The agentic AI frameworks I've written about for software agents (multi-agent orchestration, tool use, structured output, human-in-the-loop) have physical counterparts:

- Multi-agent coordination for multi-robot systems

- Tool use for robot-environment interaction

- Structured output for sensor data interpretation

- Human-in-the-loop for safety-critical decisions

The software AI agent patterns are portable to physical agents, but the physical context introduces constraints that require adaptation. A software agent that times out on a tool call can retry. A physical agent that times out on a navigation decision may crash.
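The retry-versus-fallback difference can be sketched as a decision call with a deadline. This is an illustration using Python threads, not how a hard real-time controller would be built; `SAFE_STOP`, the planners, and the 100 ms deadline are hypothetical.

```python
import concurrent.futures
import time

SAFE_STOP = {"action": "stop"}  # hypothetical minimal-risk command


def decide_with_deadline(executor, planner, observation, deadline_s):
    """Return the planner's command, or SAFE_STOP if it misses the deadline."""
    future = executor.submit(planner, observation)
    try:
        return future.result(timeout=deadline_s)
    except concurrent.futures.TimeoutError:
        # No retry: the world has moved on, so fall back to a safe action.
        future.cancel()  # only takes effect if the call has not started yet
        return SAFE_STOP


def fast_planner(observation):
    return {"action": "turn_left"}


def slow_planner(observation):
    time.sleep(0.5)  # stands in for a slow model call
    return {"action": "turn_left"}


with concurrent.futures.ThreadPoolExecutor(max_workers=2) as pool:
    on_time = decide_with_deadline(pool, fast_planner, {"obstacle": True}, 0.1)
    too_late = decide_with_deadline(pool, slow_planner, {"obstacle": True}, 0.1)
```

The fast planner's answer is used as-is; the slow planner's answer arrives after the deadline and is discarded in favour of the safe stop.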

What This Means for AI Builders

The emergence of physical AI creates two distinct opportunity areas:

The AI infrastructure for physical agents: the software layer that enables physical AI — sensor processing, real-time inference optimization, actuator control interfaces, safety monitoring — is a less developed area than pure software AI infrastructure. Companies building this layer are positioned similarly to where software AI infrastructure companies were 3-4 years ago.

The application integration layer: connecting AI agents (software or physical) to physical world systems. Most physical systems — factory equipment, vehicles, building systems — were not designed to be AI-controlled. The integration layer that makes them controllable by AI agents is a significant engineering opportunity.

The Bottom Line

The agentic AI revolution isn't just happening in software. The physical world is becoming an AI deployment target, with robotics, automotive, and consumer electronics all moving toward AI-integrated physical systems.

The architectural patterns for software agentic AI provide a foundation, but physical deployment requires adaptation for sensor noise, actuator constraints, real-time requirements, and safety criticality. The teams that build expertise in physical AI — the combination of AI capability with mechanical systems, real-time constraints, and safety requirements — will be operating in a space with less competition and more durable moats than pure software AI.

The next phase of the AI revolution has a physical dimension. The companies that understand both the software and physical aspects of AI agents will define what comes next.

Related posts: Multi-Agent Orchestration at Scale — the orchestration patterns that extend to physical agents. AI Agent Commerce — AI agents operating in the physical world of transactions and logistics.