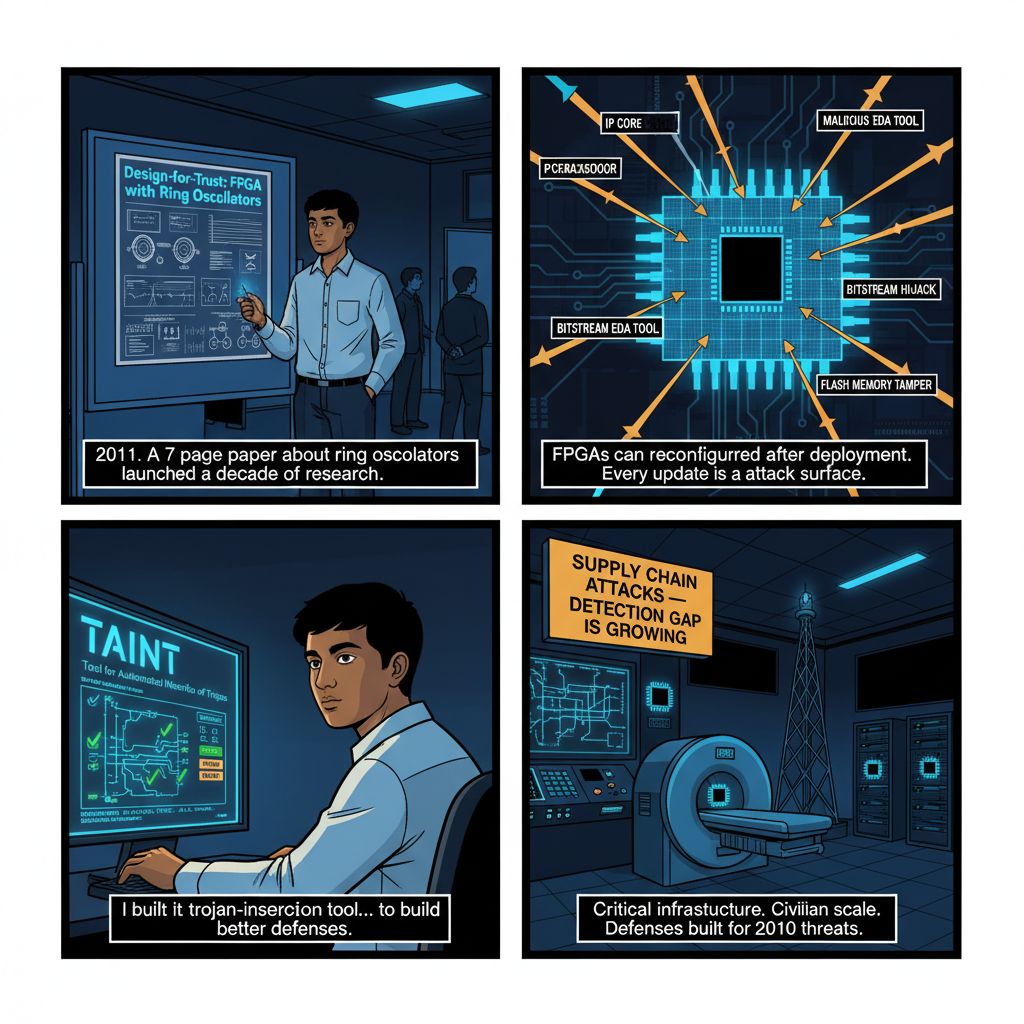

The paper that launched my research career, back in 2011, was a 7-page conference submission about ring oscillators.

The idea was elegant in its simplicity: FPGAs contain up to millions of look-up tables (LUTs), each with a measurable propagation delay. Those delays are determined by the nanoscale physics of the specific device, by the particular way the transistors on that chip were formed during fabrication. Design a circuit that uses those delay characteristics to generate a unique fingerprint, and you have a hardware root of trust that doesn't depend on software, on keys stored in potentially compromised memory, or on any assumption about the integrity of the supply chain.

The paper — "Design and Analysis of Ring Oscillator Based Design-for-Trust Technique," published at the IEEE VLSI Test Symposium — went on to accumulate 109 citations. More importantly to me at the time, it established a research direction that I would spend the next six years pursuing: how do you verify that a programmable hardware device hasn't been compromised, in a world where you can't fully trust the supply chain that produced it?

That question has only become more urgent. And the answer, which I'll give you now before walking through why: we don't have a complete one, and the window to develop adequate defenses is narrowing faster than most security teams realize.

What Makes FPGAs Different

Before getting into supply chain attacks specifically, it's worth understanding why FPGAs occupy a unique and particularly dangerous position in the hardware security landscape.

An FPGA — Field-Programmable Gate Array — is fundamentally a blank slate. It's an array of configurable logic blocks, interconnects, and I/O elements that can be programmed and reprogrammed to implement almost any digital circuit. That flexibility is the point. FPGAs let engineers deploy hardware functionality without the cost and lead time of custom ASIC fabrication. They're used for signal processing in telecommunications equipment, image processing in medical scanners, cryptographic acceleration in network security appliances, control logic in industrial systems, and increasingly as the fabric of reconfigurable data center infrastructure.

That same flexibility is the core of the security problem.

An ASIC (Application-Specific Integrated Circuit) is fabricated once, and its logic is fixed in silicon. A supply chain attack on an ASIC requires compromising the design or fabrication process and inserting malicious logic into the chip before it's manufactured. That's a real threat, but the resources and access it requires limit who can mount it.

An FPGA can be reconfigured after deployment. The configuration data — the "bitstream" that defines what circuit the FPGA implements — can be updated in the field. This is used legitimately all the time: firmware updates, bug fixes, feature additions without hardware replacement. But it means a compromised FPGA can be a completely different device from the one that was tested and certified, and the reconfiguration attack can happen months or years after deployment.

Consider what that means for supply chain security. An attacker who gains access to the configuration infrastructure for FPGAs deployed in a network security appliance doesn't need to touch the hardware. They push a malicious bitstream, and the physical device that was certified as secure is now running attacker-controlled logic. The silicon hasn't changed. The configuration has.

The Supply Chain Attack Surface: Where the Threats Actually Are

The popular mental model of an FPGA supply chain attack involves a state actor compromising a chip factory and inserting malicious logic before fabrication. This is a real threat category. It's also not where most of the practical risk lives.

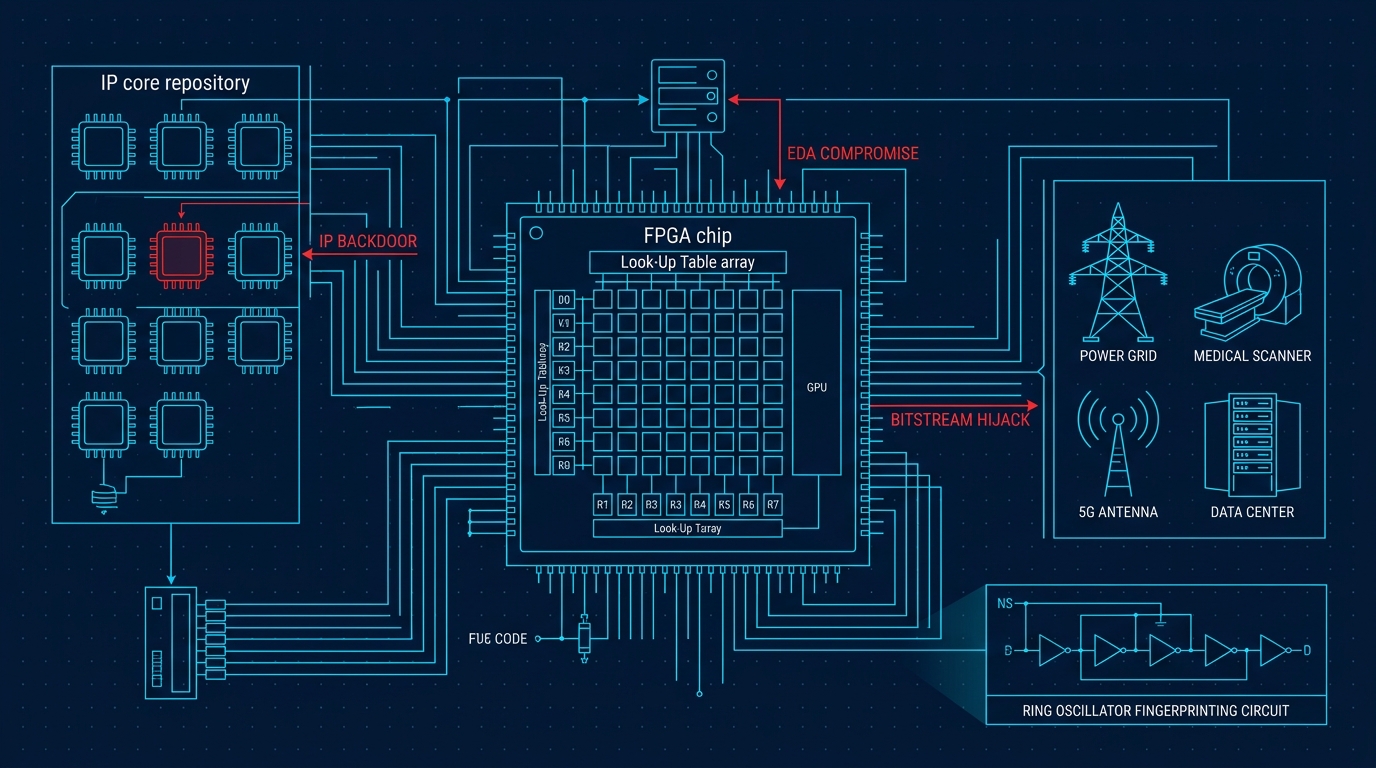

The actual FPGA supply chain has multiple attack surfaces, and several of them are more accessible than chip-fab compromise:

IP core supply chain. FPGAs are programmed using hardware description languages (VHDL, Verilog) and often incorporate third-party intellectual property (IP) cores — pre-designed blocks for common functions like PCIe interfaces, DDR memory controllers, or cryptographic primitives. These IP cores come from a fragmented ecosystem of commercial vendors, open-source repositories, and design service companies. A malicious IP core inserted into a design can introduce backdoors, covert channels, or subtle functionality degradation that survives the entire downstream design and verification process.

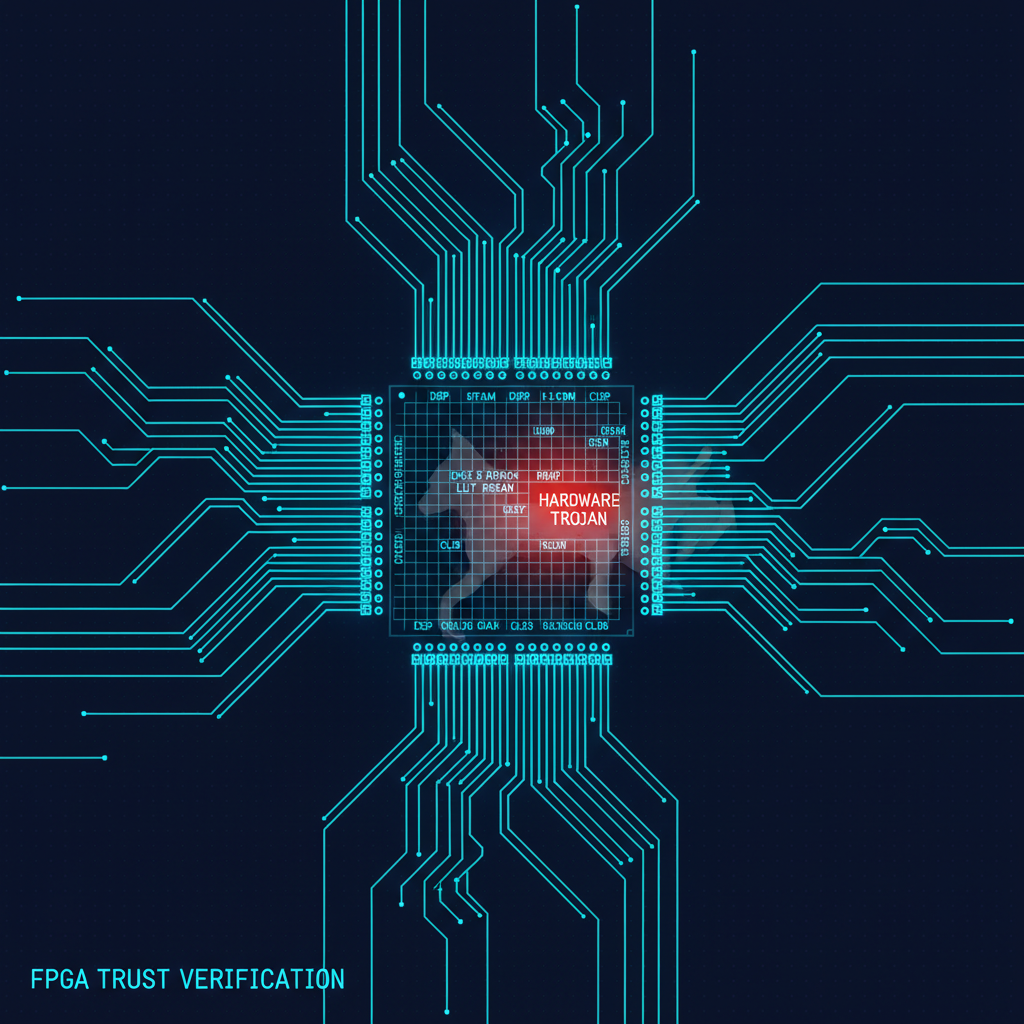

During my Ph.D. research at NYU Tandon, I built a tool called TAINT (Tool for Automated INsertion of Trojans) specifically to study this attack surface. The goal wasn't to make trojans easier to insert. It was to generate a realistic, diverse set of trojan-infected designs that could be used to develop and benchmark detection methods. What TAINT demonstrated, when we published it at IEEE ICCD in 2017, was how astonishingly easy it is to insert trojans that evade existing verification flows. The trojans that TAINT generated, conditional logic that activates only under rare trigger conditions, passed standard functional simulation with high coverage. The detection problem is harder than most design teams appreciate.
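To make the rare-trigger pattern concrete, here is a minimal behavioral sketch in Python. It is not TAINT output and not HDL; the adder, the trigger constants, and the test plan are all hypothetical, chosen only to show how conditional logic can sit behind a thorough-looking functional test.

```python
MASK32 = 0xFFFFFFFF
TRIGGER_A = 0xDEADBEEF  # hypothetical trigger operands, chosen for illustration
TRIGGER_B = 0x0BADF00D

def clean_adder(a, b):
    """Reference model: a 32-bit wrapping adder."""
    return (a + b) & MASK32

def trojaned_adder(a, b):
    """Same adder, plus a payload that fires on one operand pair out of 2**64."""
    result = (a + b) & MASK32
    if a == TRIGGER_A and b == TRIGGER_B:
        result ^= 0x1  # payload: corrupt the low bit
    return result

def directed_tests():
    """A plausible test plan: corner cases plus an exhaustive small-operand sweep."""
    corners = [0, 1, 0x7FFFFFFF, 0x80000000, MASK32]
    for a in corners:
        for b in corners:
            yield a, b
    for a in range(256):
        for b in range(256):
            yield a, b

def passes_functional_test(dut):
    """True when the device under test matches the reference on every test vector."""
    return all(dut(a, b) == clean_adder(a, b) for a, b in directed_tests())

trojan_passes = passes_functional_test(trojaned_adder)
trojan_fires = trojaned_adder(TRIGGER_A, TRIGGER_B) != clean_adder(TRIGGER_A, TRIGGER_B)
```

The trojaned model passes every one of the 65,561 test vectors and still misbehaves on the trigger pair, which is the essence of why functional simulation alone gives weak assurance against this class of insertion.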

EDA tool supply chain. Electronic Design Automation tools — the software used to synthesize, place and route, and simulate FPGA designs — are complex applications with deep access to design files and build environments. A compromised EDA tool could silently modify designs during synthesis or place-and-route without leaving traces in the source code. This is the supply chain equivalent of a compiler that inserts backdoors into every binary it generates. It's a conceptually understood threat that has received relatively little practical attention in the FPGA security community.

Bitstream distribution infrastructure. Most FPGA deployments include remote update capabilities. The infrastructure used to deliver bitstream updates — update servers, signing infrastructure, distribution networks — represents an attack surface that, if compromised, provides access to every FPGA device in a deployed fleet. The security of this infrastructure is often an afterthought, designed by teams focused on the hardware and software functionality rather than security.

Configuration memory integrity. Most SRAM-based FPGAs read their configuration from external flash memory at startup. An attacker with physical access to a deployed device can modify that flash memory to load a malicious bitstream. This is an obvious attack, but the exposure is surprisingly common in practice: industrial FPGA deployments often lack physical security controls adequate to stop an adversary with even brief physical access to the equipment.

What I Built: Fingerprinting and the Trust Zone

My research at NYU Tandon developed two primary technical approaches to FPGA supply chain security. Both have seen commercial deployment. Neither is a complete solution. Understanding what they can and can't do is important for anyone designing security architecture that relies on them.

FPGA Fingerprinting. The ring oscillator work from 2011 grew into a comprehensive fingerprinting methodology that I continued developing through my Ph.D. The core insight, which I published with collaborators at IEEE ICCD in 2017, is that every FPGA has a unique physical identity derived from manufacturing process variation — differences in transistor dimensions, dopant concentrations, and gate oxide thickness that occur at the nanoscale level and cannot be reproduced, even by the manufacturer, even for a supposedly identical device from the same production run.

Circuits that measure these variations — Physical Unclonable Functions (PUFs) — generate device-unique signatures. Our fingerprinting work extended PUF technology in several specific directions: improving the stability of signatures across temperature and voltage variation, developing techniques for authenticating FPGA identity without requiring secure key storage (the keys are derived from physics, not stored anywhere that can be compromised), and building enrollment protocols that could establish trusted device identities before deployment.
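The enroll-then-authenticate idea can be sketched with a simulated ring-oscillator PUF. This is a toy model under stated assumptions, not the methodology from the papers: the Gaussian frequency spread, the noise level, the pairwise-comparison bit extraction, and the 25% acceptance threshold are all illustrative choices, and practical schemes add error correction and a challenge-response protocol that are not modeled here.

```python
import random

def make_device(seed, num_oscillators=512):
    """Model one chip: every ring oscillator gets a fixed, chip-specific frequency (MHz)."""
    rng = random.Random(seed)
    return [100.0 + rng.gauss(0.0, 1.0) for _ in range(num_oscillators)]

def read_fingerprint(device, noise_sigma=0.05, rng=None):
    """Measure with noise, then compare disjoint oscillator pairs: one bit per pair."""
    rng = rng or random.Random()
    measured = [f + rng.gauss(0.0, noise_sigma) for f in device]
    return [int(measured[i] > measured[i + 1]) for i in range(0, len(measured) - 1, 2)]

def hamming_fraction(a, b):
    """Fraction of bit positions where two fingerprints disagree."""
    return sum(x != y for x, y in zip(a, b)) / len(a)

def authenticate(enrolled, response, threshold=0.25):
    """Accept when the response is close enough to the enrolled fingerprint."""
    return hamming_fraction(enrolled, response) <= threshold

# Enrollment happens once, before deployment.
genuine = make_device(seed=1)
enrolled = read_fingerprint(genuine, rng=random.Random(10))

# Later: the genuine chip re-measures under noise; a substitute chip tries to pass.
substitute = make_device(seed=2)
genuine_ok = authenticate(enrolled, read_fingerprint(genuine, rng=random.Random(11)))
substitute_ok = authenticate(enrolled, read_fingerprint(substitute, rng=random.Random(12)))
```

In this model the genuine device re-authenticates despite measurement noise, while a second device from the same "process" disagrees on roughly half its bits and is rejected. The threshold is the design knob: too tight and temperature or voltage drift causes false rejections, too loose and distinct devices start to collide.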

What this enables: a deployed FPGA can prove its physical identity cryptographically, without relying on any stored secret that an attacker could extract. You can verify that the device you enrolled pre-deployment is the same physical device responding post-deployment. This shuts down the physical device substitution attack.

What this doesn't enable: it doesn't verify what that physical device is doing. An FPGA with a valid physical identity can still be running a malicious bitstream. Fingerprinting addresses device authentication, not bitstream integrity — a different, and in some ways harder, problem.

FPGA Trust Zone. The Trust Zone work, published at IEEE ICCD 2016, addressed a different aspect of the problem: how do you incorporate security monitoring directly into an FPGA design so that integrity violations during operation can be detected?

The architecture divides FPGA resources into trust zones with different security properties. Security-critical functions are isolated in higher-trust zones with integrity monitoring circuits. Anomaly detectors watch for deviations from expected behavior — unusual switching activity, unexpected data patterns, timing anomalies that might indicate malicious logic activation. The monitoring circuits are designed to be resilient to tampering by the logic they're monitoring.

This is fundamentally a detect-and-respond approach rather than a prevent approach. It accepts that trojans may be present and focuses on catching them when they activate. The limitation is activation rate: a sophisticated trojan designed to trigger only under conditions that don't overlap with the monitoring window can potentially evade detection. The work demonstrated that statistical monitoring across operational time can dramatically reduce the viable trigger space for an attacker, but it doesn't reduce it to zero.
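One way to picture statistical monitoring across operational time is a cumulative-sum (CUSUM) detector over per-window switching-activity counts. The sketch below is a stand-in I am using for illustration, not the Trust Zone monitoring circuit itself; the toggle counts, the slack, and the alarm limit are hypothetical values.

```python
from statistics import mean, stdev

class CusumMonitor:
    """One-sided CUSUM: accumulates small upward deviations until they cross a limit."""

    def __init__(self, baseline, slack_sigmas=0.5, alarm_sigmas=5.0):
        self.mu = mean(baseline)
        sigma = stdev(baseline)
        self.slack = slack_sigmas * sigma   # deviations below this are ignored
        self.limit = alarm_sigmas * sigma   # accumulated excess that raises an alarm
        self.score = 0.0

    def update(self, toggles):
        """Feed one window's toggle count; return True when the alarm fires."""
        self.score = max(0.0, self.score + (toggles - self.mu - self.slack))
        return self.score > self.limit

# Baseline toggle counts per window, recorded from known-good operation (hypothetical).
baseline = [100, 102, 98, 101, 99, 100, 103, 97]

monitor = CusumMonitor(baseline)
normal_alarms = [monitor.update(x) for x in [100, 101, 99, 102, 98]]

# Extra logic switching adds a small, persistent offset of two standard deviations.
suspect_alarms = [monitor.update(x) for x in [104, 104, 104, 104]]
```

Each suspect window is only two standard deviations above the mean, so a per-sample threshold set at four sigma would never fire, yet the accumulated score crosses the limit on the fourth window. That is the sense in which monitoring over time shrinks the space an attacker can hide in: a payload has to stay both small and brief to avoid accumulating evidence.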

The patent portfolio that emerged from this research, which includes work on hardware trust verification, power management for AI hardware, and security for trusted AI processing, reflects how far these techniques apply beyond FPGA research.

Why Critical Infrastructure Is the Priority Target Now

For most of the time I spent on FPGA security research, the primary concern was military and intelligence systems. FPGAs in that context were highly specialized, procured under strict controls, and operated in environments with some physical security. The threat model was primarily nation-state actors with significant resources.

That threat model is now incomplete in three important ways.

The deployment scale has changed. FPGAs are no longer primarily a defense procurement concern. They're deployed at scale in cloud data centers as SmartNICs and DPUs handling network processing. They're the reconfigurable acceleration layer in 5G base stations. They're in the medical devices doing real-time signal processing in ICUs. They're in the industrial control systems managing power grid substations and water treatment facilities. The attack surface is now civilian critical infrastructure at massive scale.

The attack economics have changed. A nation-state attack on a military FPGA requires significant resources and accepts high detection risk. A supply chain attack on open-source FPGA IP cores — of which there are thousands, maintained by small teams with limited security resources — can potentially affect thousands of downstream designs at marginal cost. The attacker ROI calculation for FPGA supply chain attacks on civilian infrastructure has become much more favorable.

The detection gap has grown. The security verification practices for FPGAs in critical infrastructure — where they exist at all — were designed for the threat model of 2010. Functional testing against specification. Certification against standards that don't include supply chain threat scenarios. Procurement processes that treat the FPGA bitstream as an implementation detail rather than a security asset.

I worked through this reality in practical terms when I was involved in hardware security consulting after my NYU research. The gap between what the academic security community understood about FPGA supply chain attacks and what the practitioners responsible for deploying FPGAs in critical systems knew was striking. Not because practitioners were negligent — because the threat model had evolved faster than the practitioner training and tooling had.

Detection Methods That Actually Work (and Their Limits)

Let me be direct about the current state of FPGA hardware trojan detection methods, because this area is surrounded by optimism that occasionally overshoots the evidence.

Side-channel analysis exploits the measurable physical effects of hardware trojan presence — power consumption, electromagnetic emissions, thermal signatures. Our research at NYU Tandon contributed to this body of work. The method's strength is that it's sensitive to malicious logic that produces no functional anomalies — logic that hides by activating only under rare trigger conditions still affects power consumption characteristics. The limitation is that establishing what "normal" looks like requires reference devices, and in a supply chain you don't fully trust, those reference devices may themselves be compromised.

Formal verification can prove properties about a design with mathematical rigor. If you can express the security property you care about — "this design contains no logic that activates only when a 32-bit counter reaches value X" — formal verification can tell you whether the property holds. The practical limitation is that the property space of possible trojans is enormous, and formal verification doesn't scale to checking against an unbounded set of possible trojan structures.

Functional testing with high coverage remains the primary practical method in most deployment environments, and it has a fundamental structural limitation: sophisticated trojans are designed specifically to evade testing. The rare-trigger-condition design pattern means that even extremely high test coverage — 99.9% of possible input states — can completely miss a trojan designed to activate in the remaining 0.1%.
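A back-of-the-envelope calculation shows how lopsided the odds are. The sketch assumes uniformly random, independent test vectors and a trojan that fires only on an exact match of `trigger_bits` input bits; both are simplifying assumptions, and the function names are mine.

```python
import math

def detection_probability(trigger_bits, num_vectors):
    """Chance that at least one of num_vectors uniform random vectors hits the trigger."""
    p_single = 2.0 ** -trigger_bits
    # 1 - (1 - p)^N, computed with log1p/expm1 so tiny probabilities do not round to zero.
    return -math.expm1(num_vectors * math.log1p(-p_single))

def vectors_for_confidence(trigger_bits, confidence):
    """Random vectors needed to hit the trigger with the given probability."""
    p_single = 2.0 ** -trigger_bits
    return math.ceil(math.log1p(-confidence) / math.log1p(-p_single))

p_million = detection_probability(32, 1_000_000)
n_half = vectors_for_confidence(32, 0.5)
```

Under these assumptions, a million random vectors have roughly a 0.02% chance of firing a 32-bit trigger, and about three billion are needed for even odds. Widening the trigger by one bit doubles the attacker's advantage at almost no area cost.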

Runtime monitoring — what the FPGA Trust Zone work implements — provides detection coverage for the operational phase rather than just at test time. It's the most practically promising direction for deployed systems, because it doesn't require solving the impossible problem of testing against all possible trojan structures. The limitation is that effective monitoring requires knowing what anomalies to look for, and adaptive trojans can potentially learn monitoring patterns.

The honest synthesis: no single technique provides adequate coverage. A defense-in-depth approach — multiple detection methods at different points in the supply chain, combined with strong configuration integrity controls and runtime monitoring — is the right architecture. The individual techniques are partial answers to a fundamentally adversarial problem.

What Needs to Change

The FPGA security community has been producing research results for fifteen years. The practitioner community deploying FPGAs in critical infrastructure has adopted a fraction of those results. Closing that gap is the important work now.

Three specific changes would make the most difference:

Mandatory bitstream signing for critical infrastructure. Every FPGA bitstream loaded into critical infrastructure should be cryptographically signed, and the signature should be verified before loading. This is technically feasible and toolchain support exists, yet it is not routinely required. Mandatory bitstream integrity verification doesn't prevent supply chain attacks at the design phase, but it dramatically raises the cost of post-deployment bitstream replacement attacks.
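The verify-before-load gate is simple to express. The sketch below uses HMAC-SHA256 from the Python standard library as a stand-in for a real signature scheme; that is a deliberate simplification, since HMAC is symmetric and a production flow would more likely use an asymmetric signature so that deployed devices hold only a public key. The key, the bitstream bytes, and the `loader` callback are hypothetical.

```python
import hashlib
import hmac

def tag_bitstream(bitstream: bytes, key: bytes) -> bytes:
    """Produce an authentication tag at build time (stand-in for signing)."""
    return hmac.new(key, bitstream, hashlib.sha256).digest()

def load_if_authentic(bitstream: bytes, tag: bytes, key: bytes, loader) -> bool:
    """Call loader(bitstream) only when the tag verifies; report what happened."""
    expected = tag_bitstream(bitstream, key)
    if not hmac.compare_digest(expected, tag):  # constant-time comparison
        return False
    loader(bitstream)
    return True

loaded = []                                  # stands in for the configuration port
key = b"demo-key-not-for-production"
good = b"\x00\x01example-bitstream"
tag = tag_bitstream(good, key)

accepted = load_if_authentic(good, tag, key, loaded.append)
rejected = load_if_authentic(good + b"\xff", tag, key, loaded.append)
```

The important property is the ordering: the check sits in front of the configuration step, so a modified image never reaches the fabric. Where the verification key lives, and who can update it, then becomes the part of the system that deserves the scrutiny.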

IP core provenance tracking. Designs that incorporate third-party IP cores should be required to document the provenance of those cores and the verification steps applied to them. In practice, IP cores are often treated like software libraries — pulled from repositories without security review. Hardware IP cores are not like software libraries. A malicious software library can often be detected through behavioral analysis or sandboxing. Malicious hardware IP, once synthesized into a bitstream and loaded onto physical hardware, is orders of magnitude harder to detect.
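Provenance tracking can start as something no more exotic than a lockfile for hardware IP. The sketch below assumes a hypothetical manifest format (the `origin`, `sha256`, and `reviewed_by` fields are my invention, not an existing standard) and checks each core in a design against it.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Content digest used to pin a core to the exact version that was reviewed."""
    return hashlib.sha256(data).hexdigest()

def audit_cores(cores, manifest):
    """Return a list of provenance problems; an empty list means every core checks out."""
    problems = []
    for name, source in cores.items():
        entry = manifest.get(name)
        if entry is None:
            problems.append(f"{name}: no provenance record")
        elif entry["sha256"] != sha256_hex(source):
            problems.append(f"{name}: contents differ from reviewed version")
        elif not entry.get("reviewed_by"):
            problems.append(f"{name}: no security review recorded")
    return problems

# Hypothetical design: three cores, only one of which is fully accounted for.
uart_src = b"module uart(/* ... */); endmodule"
aes_src = b"module aes(/* ... */); endmodule"
manifest = {
    "uart": {"origin": "vendor-a", "sha256": sha256_hex(uart_src), "reviewed_by": "hw-sec"},
    "aes": {"origin": "public-repo", "sha256": sha256_hex(aes_src), "reviewed_by": ""},
}
cores = {"uart": uart_src, "aes": aes_src, "dma": b"module dma(); endmodule"}

problems = audit_cores(cores, manifest)
```

A check like this says nothing about whether a reviewed core is actually clean. What it does provide is a build-time gate that fails when an unreviewed or silently modified core enters the design, which is the minimum a provenance requirement would need.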

Threat model updates for critical infrastructure certification. The standards used to certify FPGAs for critical infrastructure deployment — IEC 62443 for industrial control systems, FDA guidance for medical devices, various DoD and intelligence community standards — need to be updated to explicitly address supply chain attack scenarios. Current certification processes often don't require that supply chain integrity be addressed, which means deployers face no compliance incentive to implement available defenses.

The research exists. The tools exist. The gap is governance, incentives, and awareness — the usual gap between what the security community knows is necessary and what the deployment community is required to do.

Where This Goes Next

I've spent time since my NYU research applying hardware security principles to AI systems — including the work at Pathtronic on AI chip security and the patent work on security for trusted AI hardware processing. The intersection of FPGA security and AI acceleration is a genuinely new and important frontier, because FPGAs are increasingly used as the reconfigurable substrate for AI inference in edge and cloud environments.

An FPGA running AI inference is an FPGA with all the supply chain vulnerabilities I've described, plus the specific concern that a compromised bitstream could subtly manipulate the AI computation itself — biasing outputs in specific contexts, leaking model weights through covert channels, or degrading accuracy in ways that are invisible without carefully constructed test cases. This is not a hypothetical concern. It is an engineering reality that the hardware security community needs to engage with seriously as AI inference infrastructure scales.

For hardware-level attacks on AI accelerators beyond supply chain compromise, see my analysis of side-channel attacks on ML accelerators — which addresses a complementary attack surface where the threat actor doesn't need supply chain access, only physical or co-tenancy proximity to the device.

The supply chain attack frontier in hardware security has moved faster than our defenses. FPGA security is a microcosm of that dynamic — a field where the attacks are well-understood, the defensive techniques exist, and the deployment gap remains large. Closing it is the work in front of us.

I spent a decade building tools to detect FPGA hardware trojans. I remain convinced that the detection problem is solvable — not with a single technique, but with defense-in-depth architectures that accept the adversarial reality and layer multiple imperfect defenses appropriately. What I'm less certain about is whether the governance structures that would drive adoption of those defenses will move fast enough to matter. That's a question the broader security community needs to take seriously before the cost of inaction becomes concrete.