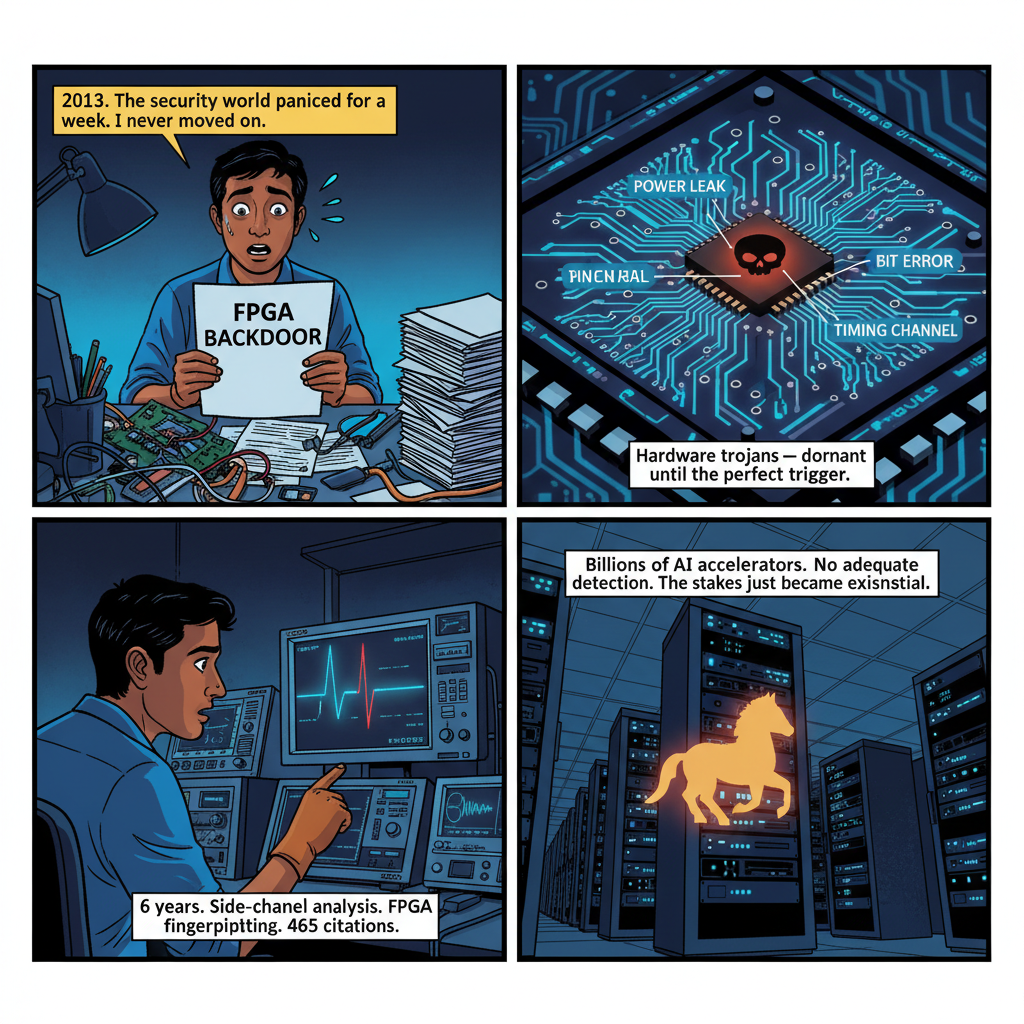

In 2013, a research team published a paper that made the hardware security world briefly panic. They claimed to have found a backdoor in a widely used military-grade FPGA: a hardware trojan allegedly capable of allowing remote access to classified systems. The research was contested, the manufacturer pushed back hard, and the mainstream news cycle moved on within weeks.

I was in the middle of my Ph.D. at NYU Tandon School of Engineering at the time. I didn't move on.

What struck me wasn't the specific claim. It was the terrifying realization that even if that particular backdoor had been misidentified, the possibility was real. We had reached a point in global chip manufacturing where hardware trojans (malicious circuits embedded in silicon during design or fabrication) were a credible threat that was hard to detect. And most of the security industry was still treating them as an academic curiosity.

I spent the next six years working to change that. Here's what I learned — and why it matters more now than it did when I started.

What a Hardware Trojan Actually Is (Not What Most Security Teams Think)

The popular mental model of a hardware trojan is a physical bug planted by a spy during manufacturing. That image — a state actor inserting a rogue chip on a factory floor somewhere in Asia — is vivid and mostly wrong.

Real hardware trojans are subtle. They're additional logic circuits inserted into a chip's design, activated only under rare trigger conditions, and designed to be invisible to standard testing. A trojan might:

- Leak secret keys by encoding them in power consumption patterns

- Degrade cryptographic operations by introducing precisely calibrated bit errors

- Create a covert timing channel that allows communication even in air-gapped systems

- Lie dormant for millions of clock cycles until a specific trigger sequence activates it

- Manipulate random number generators to weaken security without producing detectable functional failures

The trigger condition is the key to understanding why hardware trojan detection is so difficult. A sophisticated trojan might activate only when a 32-bit counter reaches a specific value, a condition that occurs only during a particular type of operation at a particular system state. Standard functional testing, which covers tens of thousands of test vectors, is unlikely to exercise that trigger state even once, and normal operation might not reach it in the chip's entire deployed lifetime.
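To put rough numbers on the coverage problem, here is a back-of-the-envelope sketch in Python. It assumes a deliberately simplified model that is not taken from any specific chip: the trigger is one particular 32-bit value, and each test vector is an independent, uniformly random guess at it. Real test patterns are structured rather than random, and a counter-based trigger depends on internal state rather than inputs alone, so treat the result as an order-of-magnitude illustration. The function name `hit_probability` is made up for this example.

```python
import math

def hit_probability(trigger_bits: int, num_vectors: int) -> float:
    """Chance that at least one of num_vectors uniformly random inputs
    matches one specific trigger value that is trigger_bits wide."""
    p_single = 2.0 ** -trigger_bits
    # 1 - (1 - p)^n, computed with log1p/expm1 so tiny p does not round away
    return -math.expm1(num_vectors * math.log1p(-p_single))

# Assumed test-suite sizes, chosen only to show the scale of the problem
for n in (10_000, 1_000_000, 1_000_000_000):
    print(f"{n:>13,} vectors: P(trigger hit) = {hit_probability(32, n):.6f}")
```

Under these assumptions, tens of thousands of vectors give a hit probability of a few in a million, and even a billion random vectors leave the trigger more likely missed than hit.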

This is why detection requires fundamentally different thinking than software security. In software, you can audit source code. You can run fuzzing. You can decompile and trace execution paths. In hardware, once a chip is fabricated, you're working with physical silicon that cannot be easily inspected without destroying it — and even destructive analysis (depackaging, delayering, electron microscopy) only tells you what you're looking at if you know what you're looking for.

The Detection Problem: Six Years in the Weeds

My research at NYU Tandon focused on two complementary approaches to hardware trojan detection: side-channel analysis and FPGA fingerprinting.

Side-channel analysis exploits the fact that hardware trojans consume power, emit electromagnetic radiation, and generate heat, even when dormant. The challenge is that these signals are extraordinarily noisy and the trojan's contribution is tiny: a chip running a legitimate cryptographic operation produces almost the same power signature as a chip running that same operation with an embedded trojan. Separating signal from noise requires statistical techniques borrowed from signal processing, combined with machine learning classifiers trained on known-clean reference chips.
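A minimal sketch of the golden-reference idea follows. It is not the method from my research, just one standard statistical building block. It compares power traces from a trusted chip and a suspect chip point by point using Welch's t-statistic. Everything here is an assumption for illustration: the traces are synthetic Gaussian noise, the extra draw at time indices 20 to 22 is far larger than a real dormant trojan would produce, and the 4.5 cutoff is borrowed from common practice in side-channel leakage assessment. The names `welch_t`, `suspicious_samples`, and `simulate_traces` are hypothetical.

```python
import math
import random
import statistics

def welch_t(a, b):
    """Welch's t-statistic for two independent samples with unequal variances."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.fmean(a) - statistics.fmean(b)) / math.sqrt(va / len(a) + vb / len(b))

def suspicious_samples(reference, suspect, threshold=4.5):
    """Return time indices where the suspect traces differ from the reference traces."""
    flagged = []
    for t in range(len(reference[0])):
        ref_col = [trace[t] for trace in reference]
        sus_col = [trace[t] for trace in suspect]
        if abs(welch_t(sus_col, ref_col)) > threshold:
            flagged.append(t)
    return flagged

def simulate_traces(rng, n_traces, n_points, extra_power=None):
    """Synthetic traces: unit Gaussian noise plus optional extra draw at given indices."""
    extra_power = extra_power or {}
    return [[rng.gauss(0.0, 1.0) + extra_power.get(t, 0.0) for t in range(n_points)]
            for _ in range(n_traces)]

rng = random.Random(0)
reference = simulate_traces(rng, 200, 50)  # stand-in for a known-clean chip
suspect = simulate_traces(rng, 200, 50, extra_power={20: 1.0, 21: 1.0, 22: 1.0})
flagged = suspicious_samples(reference, suspect)
print("time indices that differ from the reference:", flagged)
```

The design point is that averaging over many traces shrinks the noise roughly with the square root of the trace count, so a smaller trojan footprint needs proportionally more measurements to stand out.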

The fundamental limitation: you need clean reference chips to establish a baseline. In a world where you cannot trust the supply chain, where exactly do those reference chips come from?

This is the question that defined years of my research and generated some of our most interesting results. We developed approaches to establish relative baselines — comparing chips from different lots, using statistical outlier detection across populations, building ensembles of classifiers that could flag suspicious deviations without requiring a ground-truth clean sample. The work contributed to patents in hardware trust verification and results that have been cited hundreds of times in the academic literature.
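The population idea can be sketched without any trusted sample at all. The example below is an illustrative assumption, not the approach from our papers or patents: it scores each chip against the population median using the median absolute deviation (MAD), a robust alternative to mean and standard deviation that a single bad chip cannot drag around. The 0.6745 scaling and the 3.5 cutoff are a widely used rule of thumb for this "modified z-score". The chip names and milliwatt values are invented.

```python
import statistics

def population_outliers(measurements, cutoff=3.5):
    """Flag chips whose measurement sits far from the population median.

    measurements maps a chip identifier to one scalar side-channel feature,
    for example mean power in milliwatts during a fixed workload.
    """
    values = list(measurements.values())
    center = statistics.median(values)
    mad = statistics.median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread at all, so nothing can be called an outlier
    return sorted(chip for chip, v in measurements.items()
                  if 0.6745 * abs(v - center) / mad > cutoff)

# Hypothetical mean power (mW) for chips drawn from three lots
chips = {
    "lot1-a": 100.2, "lot1-b": 99.8, "lot1-c": 100.5,
    "lot2-a": 99.6, "lot2-b": 100.1, "lot2-c": 103.9,
    "lot3-a": 100.0, "lot3-b": 99.9,
}
print(population_outliers(chips))
```

With these invented numbers only `lot2-c` is flagged. The obvious limitation is that a trojan present in every chip of the population shifts the median itself and produces no outlier, which is why this kind of baseline complements other techniques rather than replacing them.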

But I never lost sight of what the numbers meant: every technique we developed was a partial answer to an adversarial problem where the adversary had perfect information about our detection methods. This is the nature of hardware security research. You're not solving the problem — you're raising the cost for the attacker.

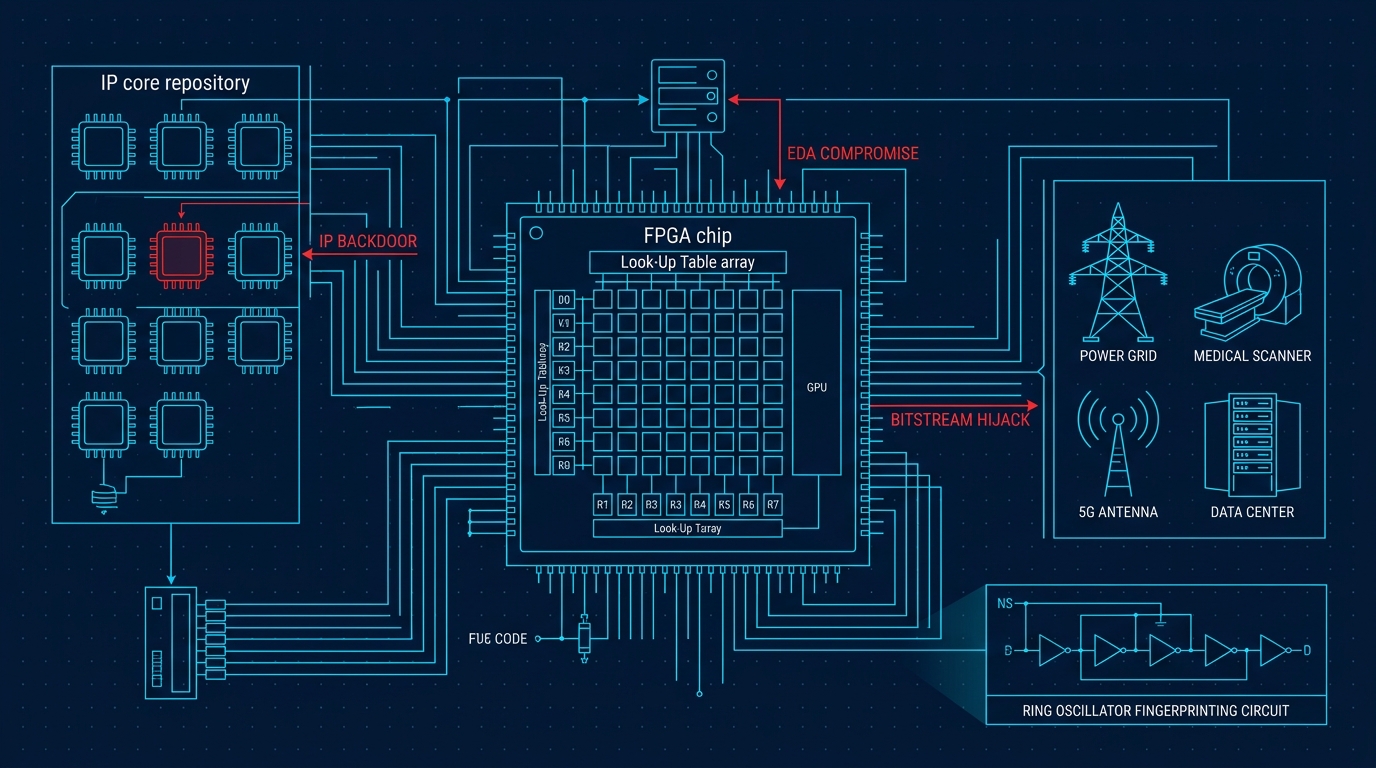

FPGA fingerprinting attacks a different problem: how do you verify that an FPGA hasn't been tampered with after deployment? FPGAs are reprogrammable by nature — their flexibility is the point. But that same flexibility means a compromised device can have its configuration overwritten, and a trojan can be inserted not at the fab but in the field.

The answer lies in physical unclonable functions (PUFs), circuits that exploit nanoscale manufacturing variations to generate device-unique signatures. No two FPGAs, even identical models from the same wafer, have exactly the same PUF response. These signatures are unpredictable, impractical to clone because they depend on the physical device itself, and, once error correction absorbs bit-level noise, stable across temperature cycling and device aging.

We developed fingerprinting techniques that could authenticate FPGA identity without requiring secure key storage: the keys are derived from physics, not stored in potentially compromised memory. This research became foundational to hardware attestation approaches used in high-assurance applications. The patent portfolio we built around these techniques reflects a genuine engineering contribution, not just academic novelty.
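The core comparison behind PUF-based identification can be sketched in a few lines. This is a simulation under stated assumptions, not our fingerprinting technique: random bits stand in for silicon variation, each readout flips bits independently at an assumed 5% rate, and a device is accepted when the fractional Hamming distance to its enrolled response is below an assumed 0.25 threshold. A real design that derives keys would add error correction with helper data, and this sketch leaves that out. All function names are hypothetical.

```python
import random

def hamming_fraction(a, b):
    """Fraction of bit positions where two equal-length responses differ."""
    return sum(x != y for x, y in zip(a, b)) / len(a)

def read_puf(true_response, bit_error_rate, rng):
    """Simulate one noisy readout: each bit flips independently with the given probability."""
    return [bit ^ int(rng.random() < bit_error_rate) for bit in true_response]

def authenticate(enrolled, fresh, threshold=0.25):
    """Accept the device if the fresh readout is close enough to the enrolled one."""
    return hamming_fraction(enrolled, fresh) < threshold

rng = random.Random(42)
n_bits = 128
device_a = [rng.getrandbits(1) for _ in range(n_bits)]  # stand-in for silicon variation
device_b = [rng.getrandbits(1) for _ in range(n_bits)]  # a different physical device

enrolled_a = read_puf(device_a, 0.0, rng)  # enrollment, assumed noise-free here
genuine = read_puf(device_a, 0.05, rng)    # later readout of the same device
impostor = read_puf(device_b, 0.05, rng)   # readout of another device

print("genuine accepted: ", authenticate(enrolled_a, genuine))
print("impostor accepted:", authenticate(enrolled_a, impostor))
```

The threshold works because readouts of the same device differ in a small fraction of bits, while readouts of two different devices differ in roughly half of them. The gap between those two distributions is what makes the fingerprint usable.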

Teaching Advanced Hardware Design and Reconfigurable Computing at NYU sharpened my understanding in ways that purely research-focused work doesn't. When you have to explain why side-channel leakage exists to a room full of skeptical graduate students, you develop precision about your own assumptions. Side-channel leakage on ML accelerators — power, electromagnetic, and timing emissions that can expose model weights — is the attack surface most closely related to the hardware trojan problem, since both exploit physical properties of the hardware rather than software bugs. Several of those students went on to work in hardware security at semiconductor companies and defense contractors. The ecosystem matters.

Why the Threat Model Just Changed: AI Accelerators

For most of my research career, the hardware security community was focused on a relatively contained threat surface: high-value military and intelligence systems using specialized ASICs and FPGAs from a limited set of suppliers under stringent procurement controls.

That threat model is now dangerously incomplete.

Consider what has happened to the AI hardware supply chain in the last four years:

The scale has exploded. Major GPU manufacturers ship millions of AI accelerators annually. Cloud hyperscalers have developed custom silicon — tensor processing units, training accelerators, neural engines — that runs in data centers processing billions of queries per day. AI hardware is no longer a niche defense procurement problem. It's infrastructure.

The supply chain complexity has grown proportionally. Leading-edge chip fabrication is concentrated at a small number of foundries. Design IP flows through dozens of third-party EDA vendors, IP licensors, and design service companies before a single transistor is fabricated. Each handoff in that chain is a potential trojan insertion point — and the chain is longer and less auditable than it was a decade ago.

The attack surface is now consequential at a civilizational scale. A hardware trojan in an AI accelerator deployed at cloud scale could:

- Leak model weights through covert power or timing channels (IP theft affecting billions in R&D investment)

- Manipulate floating-point operations to subtly bias inference outputs in specific contexts (model poisoning at the hardware layer, invisible to software-level monitoring)

- Create exploitable vulnerabilities in the secure enclaves used for confidential AI computing

- Establish persistent access to cloud infrastructure through hardware-level backdoors that survive software patching, OS reinstalls, and hypervisor updates

I want to be precise about what I am and am not claiming here. I have no evidence that any commercially available AI accelerator contains hardware trojans. I am not making that claim. What I am claiming is that the traditional reassurance — "a trojan would be too hard to insert in a chip this complex" — is no longer adequate justification for complacency. Chip complexity cuts both ways: it creates more places to hide malicious logic, not fewer.

The practical detection tools from my research — the TAINT methodology for hardware trojan detection and the PUF-based device authentication work — are the state of the art in finding these threats. And my patent portfolio reflects the work on hardware trust verification that emerged from this research.

The Detection Gap in Modern AI Systems

The standard industry response to hardware trojan concerns is functional testing: run the chip through millions of test vectors at the fab, certify that it behaves correctly according to specification, ship it. This approach has two structural problems in the AI compute context.

First, AI accelerators run workloads that are numerically noisy by design. They perform floating-point matrix multiplication at massive scale, often in reduced precision, where small rounding errors are expected and tolerated. A trojan that introduces errors in a specific type of tensor operation at a rate below the numerical noise floor of the computation might be completely undetectable through functional testing while still being exploitable by an adversary who knows precisely where to look and what to trigger.
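A small numerical sketch makes "below the noise floor" concrete. It models no real accelerator or real trojan. It assumes products of random values stored in IEEE 754 half precision, and a hypothetical tamper that flips the least significant mantissa bit of one product in every thousand (`tamper_every`). It then compares the average injected error per product with the average rounding error that half precision introduces anyway. The helper names are made up for this example.

```python
import random
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def flip_lsb_fp16(x: float) -> float:
    """Flip the least significant mantissa bit of a half-precision value."""
    (bits,) = struct.unpack("<H", struct.pack("<e", x))
    return struct.unpack("<e", struct.pack("<H", bits ^ 1))[0]

rng = random.Random(1)
n = 10_000
tamper_every = 1_000  # hypothetical trojan rate: one product in a thousand

products = [rng.uniform(-1, 1) * rng.uniform(-1, 1) for _ in range(n)]
stored = [to_fp16(p) for p in products]  # what honest fp16 hardware would hold
tampered = [flip_lsb_fp16(s) if i % tamper_every == 0 else s
            for i, s in enumerate(stored)]

rounding_noise = sum(abs(s - p) for s, p in zip(stored, products)) / n
injected_error = sum(abs(t - s) for t, s in zip(tampered, stored)) / n

print(f"mean fp16 rounding error per product: {rounding_noise:.3e}")
print(f"mean injected error per product:      {injected_error:.3e}")
```

In this toy setup the injected error averages out well below the rounding error the format already produces, so a test that checks outputs against a numerical tolerance has no reason to fail. The sketch does not show that such a perturbation is exploitable, only that it can hide.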

Second, the test coverage problem is dramatically worse for neural network workloads. Traditional chip testing uses structured test patterns designed to achieve high fault coverage for manufacturing defects — a well-understood problem with established metrics (stuck-at coverage, path delay coverage, etc.). Neural network inference doesn't follow those patterns. A trojan triggered by a specific activation pattern in a specific layer of a specific model architecture might never be activated by any standard test vector. You would need to test the chip running the actual workload at the actual scale to have any hope of triggering it.

This is a research gap that I believe is dramatically underinvestigated relative to its importance. The hardware security community has excellent methods for analyzing traditional digital logic. Extending those methods to the distributed, probabilistic, workload-dependent world of AI compute is genuinely hard. The tooling doesn't fully exist. The benchmarks don't fully exist. And the incentives for semiconductor companies to invest in this research are complicated by the commercial implications of disclosing vulnerability surfaces.

What Organizations Should Actually Do

I'll be direct: most organizations cannot implement state-of-the-art hardware trojan detection internally. That's not pessimism — it's resource realism. Side-channel measurement at the chip level requires specialized equipment, controlled environments, and statistical expertise that exists in a small number of academic labs and government research centers.

What organizations can do is adopt a hardware trust posture that's calibrated to their actual risk profile.

For critical infrastructure and high-assurance systems:

- Demand supply chain transparency from hardware vendors. This means Bill of Materials attestation, trusted foundry certification documentation, and third-party security audits — not a statement of assurance in a press release.

- Implement side-channel monitoring at the system level. Even without chip-level analysis, anomalous power consumption patterns at the server or rack level can flag behavior worth investigating. Published research on side-channel attacks against ML accelerators gives the technical background on what this attack surface looks like in practice.

- Use hardware attestation — TPM-based or PUF-based — to verify device identity and detect configuration tampering. FPGA-based systems especially should have attestation designed into their architecture from the ground up, not bolted on afterward.

- Design systems with the assumption that hardware components may be compromised. Limit the blast radius: minimize what any single hardware component can access, log and monitor cross-boundary data flows, and build detection at the system level rather than relying entirely on hardware-level guarantees.
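As one illustration of the system-level monitoring item above, here is a minimal sketch of a rolling-baseline check on power readings. The data source is an assumption: a list of wattage samples such as a rack power distribution unit might report. The window size, warm-up length, and z-score threshold are arbitrary illustrative values, and `power_anomalies` is a hypothetical name. A check this coarse catches only gross deviations. It is a tripwire that prompts investigation and not a trojan detector.

```python
from collections import deque
import statistics

def power_anomalies(readings_w, window=30, warmup=10, z_threshold=4.0):
    """Return indices of readings that deviate sharply from the recent baseline."""
    baseline = deque(maxlen=window)
    flagged = []
    for i, watts in enumerate(readings_w):
        if len(baseline) >= warmup:
            recent = list(baseline)
            mean = statistics.fmean(recent)
            spread = statistics.stdev(recent)
            if spread > 0 and abs(watts - mean) / spread > z_threshold:
                flagged.append(i)
                continue  # keep anomalous samples out of the baseline
        baseline.append(watts)
    return flagged

# Hypothetical rack readings in watts: a repeating 400-404 W load pattern with one burst
readings = [400 + (i % 5) for i in range(60)]
readings[45] = 430
print(power_anomalies(readings))
```

With this synthetic series the function flags only the burst at index 45. Keeping flagged samples out of the baseline is a deliberate choice so that a sustained anomaly cannot quietly become the new normal.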

For cloud and enterprise AI:

- Ask your cloud providers harder questions about hardware supply chain practices. This is a legitimate procurement and security question, not a paranoid one. Major providers with serious security programs should have substantive answers.

- Consider confidential computing — hardware-based trusted execution environments — for your most sensitive AI workloads. These approaches don't eliminate the hardware trojan risk (TEEs are implemented in hardware), but they raise the bar for several attack classes significantly.

- Think carefully about where you run inference for your highest-sensitivity models. The threat profile of a commodity cloud GPU is different from a GPU in a physically secured, access-controlled facility with known procurement history.

For the AI research and development community:

- Take hardware security seriously as a research area that intersects with your work. Formal verification methods, adversarial ML, privacy-preserving computation — all of these interface with hardware trust in ways the field hasn't fully worked through.

- Engage with hardware security researchers. Six years of dissertation-level work on side-channel detection, PUF design, and supply chain verification produces transferable knowledge. That knowledge needs to be applied to AI hardware contexts. The people who can do that translation are not numerous.

The Geopolitical Dimension

It's impossible to write about hardware trojans in 2026 without acknowledging the geopolitical environment that has made this topic newly urgent across the entire industry.

AI chip export controls — restrictions on exporting advanced AI semiconductors across certain jurisdictions, and the countermoves around rare earth minerals and packaging technology — have created a hardware security environment that didn't exist five years ago. Nations are explicitly treating AI hardware as strategic military and economic infrastructure, not just commercial technology.

In that context, hardware trojan research is not merely academic. It's part of a larger question about whose chips you trust to run your most critical AI systems, and how you verify that trust in the absence of full supply chain visibility.

My honest answer, after years of working on this: verification is hard, and the current state of practice is not adequate for the threat environment we're entering. We have good techniques. We don't have comprehensive solutions. And we're deploying AI hardware at a rate that outpaces the development of hardware trust verification methods by a significant margin.

That gap has to close. The research community, the semiconductor industry, and the policy community all have roles to play in closing it.

Six Years Later: The Lens That Changed Everything

When I finished my Ph.D. and moved from the chip labs at NYU Tandon to building AI systems in Bengaluru, I didn't leave hardware security behind. It came with me as a lens for everything I built afterward.

When I think about the AI systems I've been part of building — medical imaging platforms processing scans across hundreds of hospitals, multi-agent systems handling sensitive financial data, network security tools operating at wire speed — I ask myself what it would take to compromise them not at the software layer, not at the model layer, but at the silicon layer.

The answer remains uncomfortable, and I've stopped pretending otherwise.

We're deploying AI at a scale and speed that has outrun our ability to verify the trustworthiness of the hardware it runs on. The threat isn't hypothetical in the way it was in 2013. The infrastructure is real. The stakes are real. The adversaries are patient in ways that quarterly security reviews are not designed to accommodate.

This is not an argument to slow down AI deployment. The benefits are real too, and I've seen them directly in medical imaging contexts where AI tools are changing clinical outcomes for patients who previously had no access to specialist diagnostics.

It is an argument to be honest about what we know and don't know about the hardware substrate those benefits depend on. It is an argument to fund hardware security research proportionally to the importance of hardware in the AI stack. And it is an argument to ask harder questions of the supply chains that deliver the chips our most critical systems run on.

The adversaries who understand hardware trojan insertion think in years and decades. Their patience is a capability. Our defenses need to match that timescale — not react to it after the fact.

I'll keep working on it from both sides of the problem: the research side and the deployment side. The gap between them is where the interesting work lives.

Dr. Vinayaka Jyothi is a hardware security researcher, AI engineer, and entrepreneur. He holds a Ph.D. in Electrical Engineering from NYU Tandon School of Engineering, where he also served as Adjunct Professor teaching Advanced Hardware Design and Reconfigurable Computing. He holds eleven patents in hardware trust and AI systems, with 502 citations and an h-index of 12. He is Head of AI at Snow Mountain AI, building multi-agent orchestration platforms and financial AI systems, and the founder of Ai-Bharata, a medical imaging AI company serving 270+ healthcare institutions across India.