There is a particular kind of corporate vulnerability that emerges when a company's greatest historical strength becomes the source of its most visible weakness. Apple is living through that moment right now. The company that defined the modern era of consumer technology — that made hardware, software, and services integration its core identity — is struggling with the one technology layer that has come to define the current era: artificial intelligence.

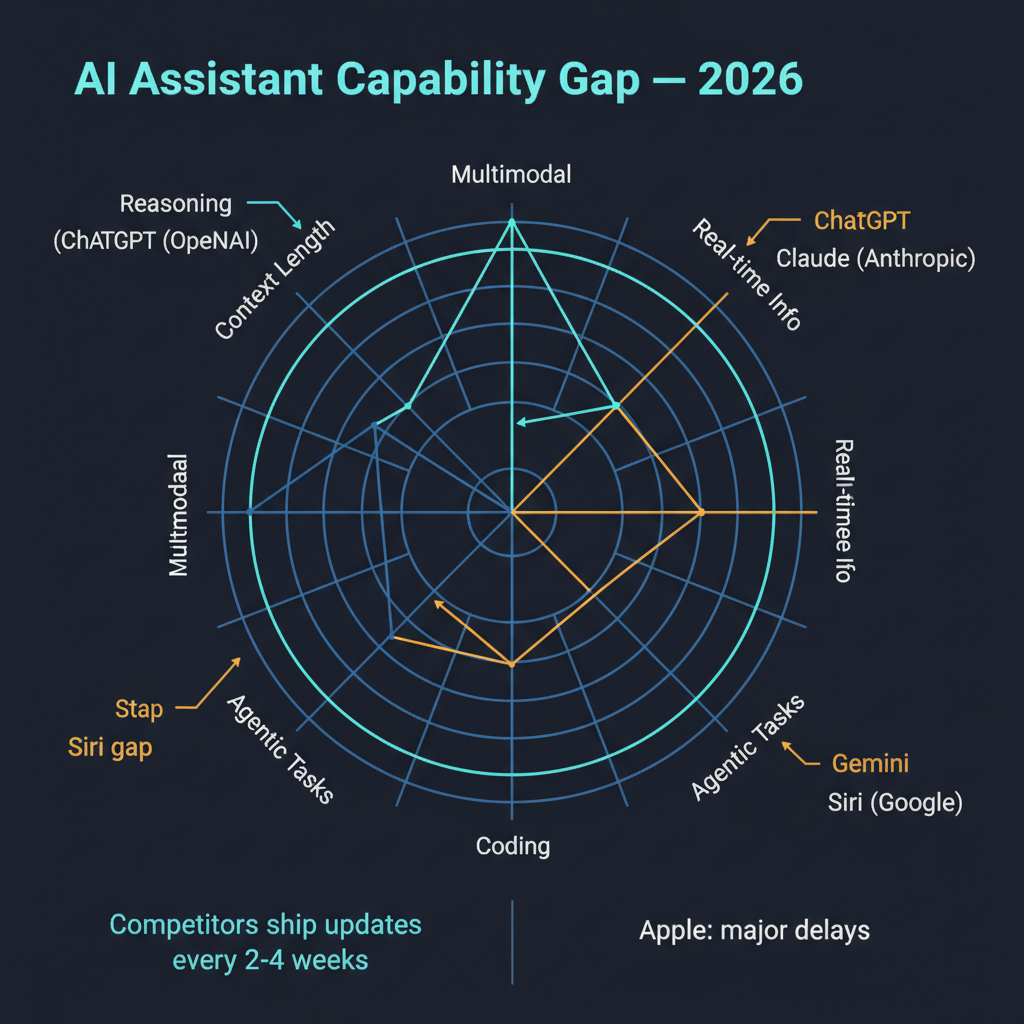

Reports continue to surface that Apple's AI difficulties with Siri are not merely an embarrassment but are actively affecting hardware product development and launch schedules. Features that were supposed to ship with recent devices have been delayed or quietly descoped. The AI capabilities that Apple has managed to deliver have been met with reviews ranging from lukewarm to openly critical. And all of this is happening while OpenAI, Google, Anthropic, and Meta ship meaningful AI capability updates on a cadence of every two to three weeks.

The gap is no longer a perception problem. It is a product problem.

The Cadence Gap

To appreciate the severity of Apple's position, consider the competitive landscape in terms of shipping velocity. OpenAI has been releasing significant model updates, API features, and product improvements at a pace that would have seemed impossible two years ago. Anthropic's Claude has seen multiple major capability upgrades in the past quarter alone. Google's Gemini is being woven into every Google product with aggressive iteration cycles. Meta's Llama releases have established a rhythm that keeps the open-source ecosystem in constant forward motion.

These organizations have built their development processes around rapid iteration on AI capabilities. They ship, collect feedback, improve, and ship again in cycles measured in weeks. This cadence is not incidental — it reflects a fundamental organizational orientation toward AI as the primary product.

Apple's release cadence for AI features, by contrast, remains tied to its traditional hardware launch cycle. Major software features arrive with new iPhone, iPad, and Mac releases. Updates between launches tend to be incremental. This cadence made perfect sense when the primary innovation axis was hardware design and software polish. It is structurally inadequate for a domain where the state of the art advances monthly.

I want to be fair to Apple here — shipping AI features to over a billion devices with Apple's quality expectations is a different engineering challenge than running a cloud API. But the market does not grade on a curve. Users compare Siri's capabilities to what they can do with ChatGPT, Gemini, or Claude, and the comparison is not favorable.

Why Apple's AI Problem Is Structural

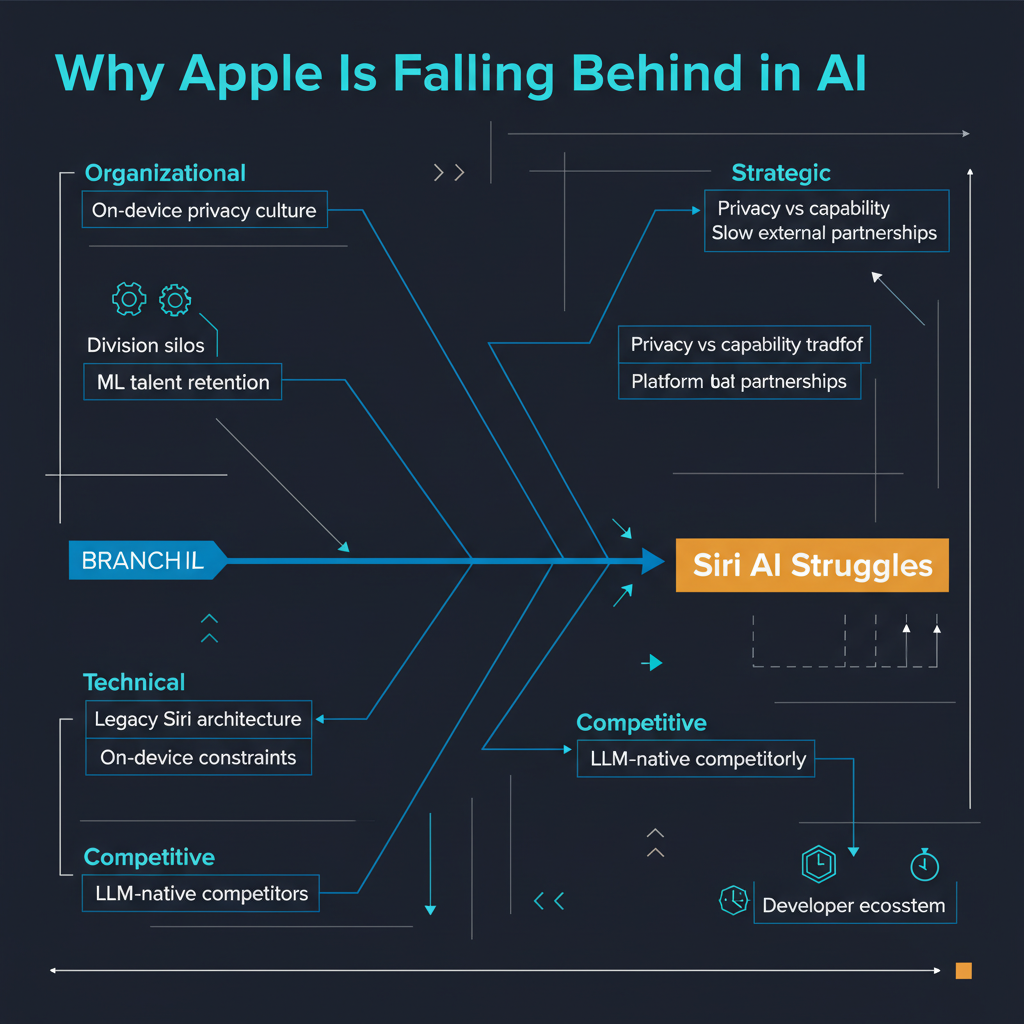

Apple's AI difficulties are not simply a matter of insufficient investment or engineering talent. The company has invested billions in AI research and has thousands of machine learning engineers. The problem is structural, rooted in design decisions and organizational priorities that made perfect sense before the current AI wave and now create compounding friction.

The first structural issue is Apple's commitment to on-device processing. Privacy is a genuine differentiator for Apple, and running AI models locally on device hardware is a legitimate way to protect user data. But on-device inference imposes severe constraints on model size and capability, because the model's weights have to fit in the memory of a phone or laptop. The most capable language models today are widely reported to run to hundreds of billions of parameters and require server-grade accelerators to run. Apple's latest chips are impressive, but they cannot match the inference capability of a cloud data center running clusters of H100s or TPUs.
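
To make the size constraint concrete, here is a rough back-of-the-envelope sketch. It assumes weight memory is simply parameter count times bits per parameter, and it ignores activations, caches, and runtime overhead. The two model sizes are illustrative round numbers I picked for the comparison, not figures for any Apple or competitor model.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed just to hold model weights, in GiB."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / (1024 ** 3)

# Illustrative sizes only (assumptions, not figures for any specific product).
small_on_device = weight_memory_gib(3e9, 4)    # 3B parameters, 4-bit quantized
large_server = weight_memory_gib(200e9, 16)    # 200B parameters, 16-bit

print(f"3B @ 4-bit:    {small_on_device:.1f} GiB")
print(f"200B @ 16-bit: {large_server:.1f} GiB")
```

Under these assumptions the small model needs about 1.4 GiB for its weights, which a phone can plausibly spare, while the large one needs roughly 370 GiB, which is far beyond any consumer device and has to be spread across several data-center accelerators.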

Apple has attempted to bridge this gap with Apple Intelligence, routing some requests to cloud-based processing through what it calls Private Cloud Compute. But this hybrid approach adds latency, complexity, and a category of failure modes that purely cloud-based services avoid. It is an engineering compromise born of two competing priorities, privacy and capability, that are genuinely difficult to reconcile.

The second structural issue is Siri's architectural heritage. Siri was built as a command-and-control system, not as a conversational AI. Its underlying architecture was designed to parse specific intents, map them to predefined actions, and execute those actions reliably. This architecture is excellent for "set a timer for 10 minutes" and fundamentally inadequate for "help me plan a dinner party for eight people with these dietary restrictions and this budget." Rebuilding Siri's architecture to support genuinely conversational, context-aware interaction is reportedly what has been causing delays — it is not a feature addition but a ground-up reconstruction.
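
A toy sketch shows why this style of system hits a wall. It is not Siri's actual implementation, which is not public. The patterns, handler names, and `handle` function below are all hypothetical, chosen only to illustrate the parse-intent, map-to-action, execute pattern described above.

```python
import re
from typing import Callable, Optional

# Hypothetical handlers standing in for predefined device actions.
def set_timer(minutes: str) -> str:
    return f"Timer set for {minutes} minutes"

def call_contact(name: str) -> str:
    return f"Calling {name}"

# Each intent is a fixed pattern mapped to exactly one action.
INTENTS: list[tuple[re.Pattern, Callable[..., str]]] = [
    (re.compile(r"^set a timer for (\d+) minutes?$", re.IGNORECASE), set_timer),
    (re.compile(r"^call (\w+)$", re.IGNORECASE), call_contact),
]

def handle(utterance: str) -> Optional[str]:
    """Return the action result, or None if no predefined intent matches."""
    text = utterance.strip()
    for pattern, action in INTENTS:
        match = pattern.match(text)
        if match:
            return action(*match.groups())
    return None

print(handle("set a timer for 10 minutes"))
print(handle("help me plan a dinner party for eight people"))
```

The timer request matches a pattern and runs reliably. The dinner party request matches nothing and returns `None`, and no amount of extra patterns fixes that, because the request is open-ended rather than a slot to fill. That is the sense in which the upgrade is a reconstruction and not a feature addition.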

The third issue is organizational. Apple is organized by function: hardware, software, services, and AI teams operate as distinct organizations under senior vice presidents. That creates coordination challenges for a technology that needs to be deeply integrated across all of them. Companies like Google and OpenAI have organized themselves around AI as the central product axis. Apple's AI efforts must instead cut across functional groups whose planning is anchored to the hardware product roadmap.

The Hardware Ripple Effect

What makes the current moment particularly concerning for Apple is that AI shortcomings are beginning to affect hardware decisions. There are credible reports that features planned for upcoming devices have been delayed because the AI capabilities they depend on are not ready. When your AI platform cannot deliver the features that your hardware team has designed around, the entire product development timeline suffers.

This is a reversal of Apple's traditional dynamic. Historically, Apple's hardware capabilities enabled software features: the Neural Engine built into Apple's chips enabled on-device ML features that software teams could build on. Now, the software and AI layer is becoming the constraint that hardware launches must work around. That inversion is deeply uncomfortable for a company whose identity is built on hardware excellence enabling seamless user experiences.

The business implications extend beyond individual product launches. Apple's services revenue — which has become a critical growth driver as hardware sales mature — depends partly on AI-powered features that drive user engagement. If Siri and Apple Intelligence cannot match the capabilities that users experience through third-party apps powered by OpenAI or Google, Apple risks ceding the most valuable layer of the user experience to competitors whose AI runs on Apple hardware but whose revenue and data accrue elsewhere.

What Apple Could Do

I do not think Apple's position is irrecoverable, but the path forward requires decisions that cut against the company's institutional instincts.

The most impactful move would be to decouple AI feature releases from hardware launch cycles. Ship Siri improvements continuously, even if they are incremental. The current approach of bundling AI features into annual OS releases means that improvements developed in January do not reach users until September. In a domain moving this fast, that delay is competitive suicide.

Second, Apple needs to make a clearer decision about the privacy-capability tradeoff. The current hybrid approach of on-device processing with cloud fallback satisfies neither priority fully. A more explicit tiered model — where users can choose between a private, on-device AI mode and a more capable cloud mode with transparent data handling — might serve users better than a compromise that underdelivers on both dimensions.
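
As a minimal sketch of what an explicit tiered model could look like, consider the routing rule below. Everything here is hypothetical: the `Mode` names, the `Route` fields, and the `needs_large_model` flag are my own illustration of the idea, not a description of how Apple Intelligence or Private Cloud Compute works.

```python
from dataclasses import dataclass
from enum import Enum

class Mode(Enum):
    PRIVATE = "on_device"   # user chose: nothing leaves the device
    CAPABLE = "cloud"       # user chose: cloud allowed when it helps

@dataclass
class Route:
    backend: str
    leaves_device: bool
    note: str

def route_request(mode: Mode, needs_large_model: bool) -> Route:
    """Pick a backend from the user's explicit mode, never overriding it silently."""
    if mode is Mode.PRIVATE:
        note = "answer may be limited" if needs_large_model else "handled locally"
        return Route("on_device", False, note)
    if needs_large_model:
        return Route("cloud", True, "sent to cloud with user consent")
    return Route("on_device", False, "handled locally")

print(route_request(Mode.PRIVATE, needs_large_model=True))
print(route_request(Mode.CAPABLE, needs_large_model=True))
```

The point of the design is that the tradeoff is visible. In private mode a hard request stays local and the user is told the answer may be limited. In capable mode the same request goes to the cloud, and the user knows it did.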

Third, Apple should consider deeper partnerships with frontier AI labs rather than trying to build everything internally. The partnership with OpenAI for some Siri features was a start, but it felt tentative and organizationally uncomfortable. Apple's core competency is integrating technology into polished consumer experiences, not necessarily training frontier models. There is no shame in recognizing that and acting accordingly.

The Larger Lesson

Apple's AI struggles illustrate a broader truth about technological transitions: the companies that dominated the previous era do not automatically lead the next one. IBM dominated mainframes but struggled with PCs. Microsoft dominated PCs but initially missed mobile. Apple dominated mobile but is struggling with AI. The pattern is not inevitable — Microsoft's successful pivot to cloud computing shows that large companies can navigate transitions — but it requires a willingness to reorganize around the new axis of competition rather than trying to fit the new technology into the old organizational and product structure.

Apple has the resources, the talent, and the user base to compete seriously in AI. What it needs is the organizational willingness to treat AI as the primary product challenge of this era rather than a feature to be layered onto existing products. The clock is ticking, and with each two-to-three-week update cycle from competitors, the gap gets a little harder to close.