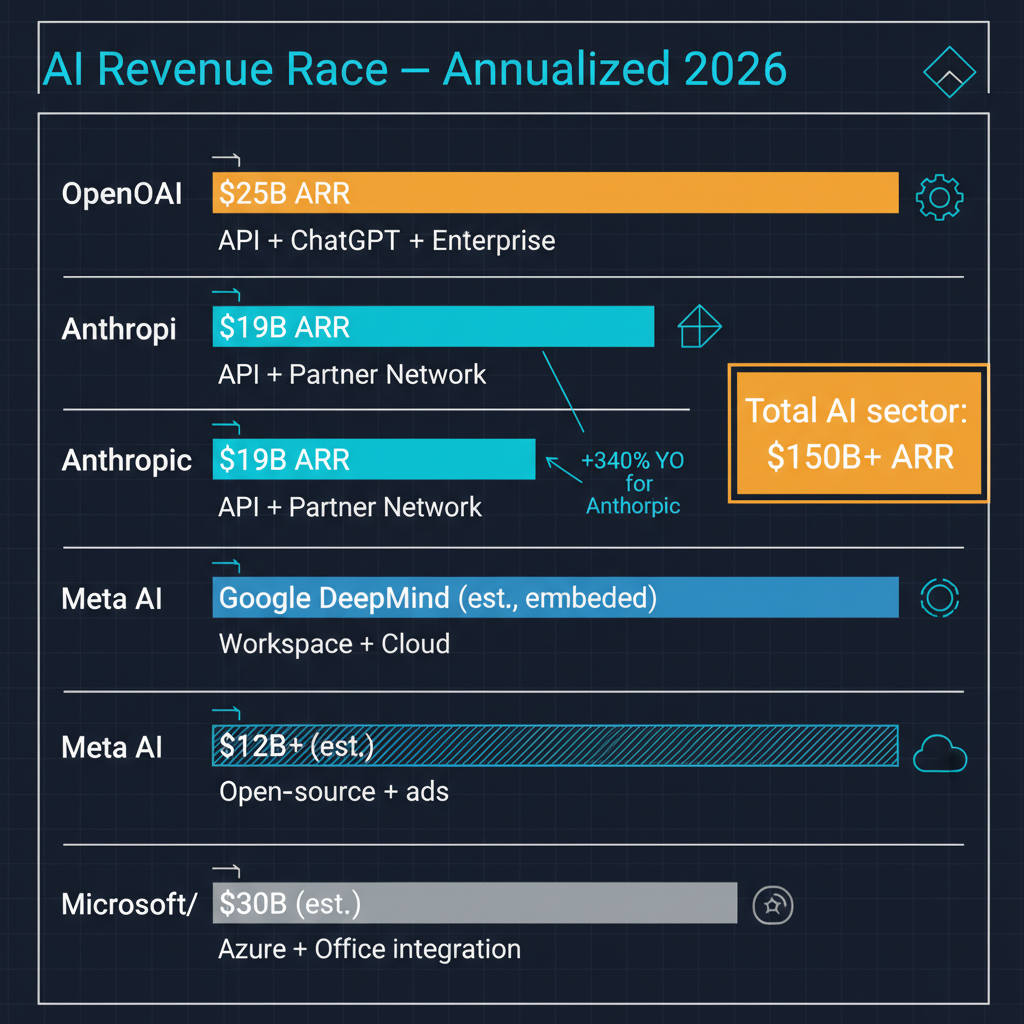

The numbers are staggering by any measure. OpenAI has reportedly surpassed $25 billion in annualized revenue and is actively planning an IPO for late 2026. Anthropic is approaching $19 billion annualized, a figure that would have seemed absurd even 18 months ago for a company that did not exist five years prior. These are not projections or aspirational targets — they represent actual money flowing into AI companies at a rate that has no precedent in the technology industry.

But raw revenue figures, impressive as they are, tell an incomplete story. The more interesting questions are about sustainability, competitive dynamics, and whether the current revenue trajectories reflect durable business models or a gold rush that will consolidate dramatically. Having followed this space closely, I think both are true at once: which one you see depends on which segment of the market you examine.

The Revenue Landscape

Let me lay out the competitive map as it stands in March 2026. OpenAI leads in consumer revenue, driven primarily by ChatGPT's subscription tiers and the enterprise version of the product. The ChatGPT Plus and Pro subscriptions represent a consumer willingness to pay for AI capability that has few parallels in software history. The API business adds substantial revenue from developers and enterprises building on OpenAI's models.

Anthropic's revenue growth has been driven more heavily by the enterprise and API segments. Claude has found particular traction in professional services, legal, healthcare, and financial services — domains where the company's emphasis on safety, accuracy, and long-context capability resonates with buyers who care deeply about reliability. The consumer product has grown, but Anthropic's revenue mix skews more toward business customers than OpenAI's.

Google is the wildcard that gets insufficient attention. Gemini has been integrated into Google Workspace, Search, Android, and the broader Google ecosystem at a scale that dwarfs any standalone AI product. Google does not break out AI-specific revenue in the way that OpenAI and Anthropic can, which makes direct comparison difficult. But by user count and daily active usage, Google's AI features likely touch more people than ChatGPT and Claude combined. The monetization happens through existing subscription tiers, advertising, and cloud services rather than through a separate AI product line.

Meta occupies a different strategic position entirely. The Llama model family generates no direct revenue — it is open source. But Meta's strategy is to commoditize the model layer, driving down the cost of AI capability for everyone while capturing value through its advertising and social media platforms, which increasingly rely on AI for content recommendation, ad targeting, and creator tools. Meta's AI investment is enormous, but it is designed to create value in Meta's existing business rather than in a standalone AI product.

The Sustainability Question

The question I keep returning to is whether current revenue levels reflect sustainable business models or an early-market phenomenon where customers are experimenting at prices they will not accept long-term.

There are reasons for optimism. Enterprise AI spending is increasingly moving from experimental budgets to line-of-business budgets — a shift that indicates organizations are finding genuine productivity value rather than running pilots for innovation theater. The consumer willingness to pay $20 to $200 per month for AI assistants has proven more durable than many skeptics expected. And the API business is benefiting from a growing ecosystem of AI-powered applications that create recurring demand for inference compute.

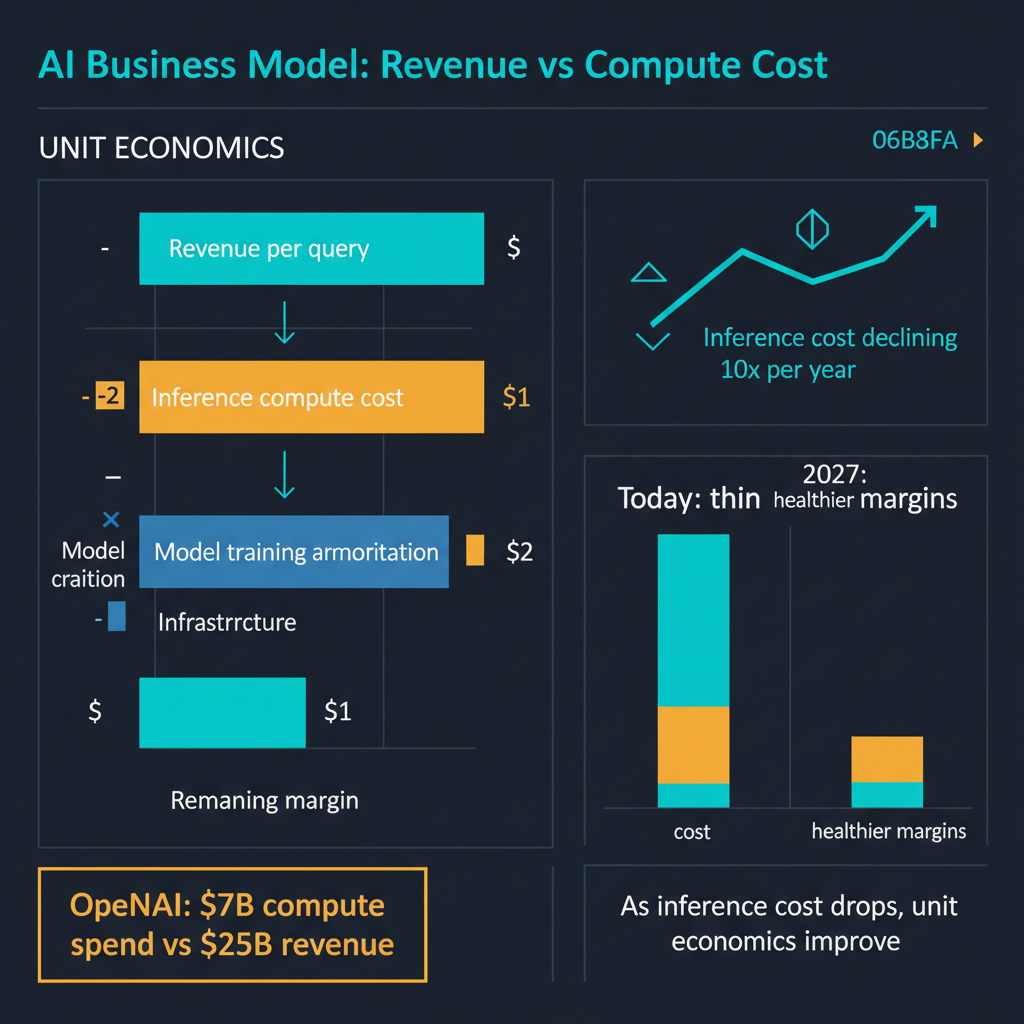

But there are also structural concerns. The cost of training and serving frontier models is enormous and growing. OpenAI's reported operating losses remain significant despite the revenue numbers, because the compute costs of running inference at scale are punishing. Anthropic faces similar economics. Both companies are in a position where they must continue raising prices, growing volume, or reducing costs simply to reach profitability — let alone generate returns that justify their valuations.
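
To make those three levers concrete, here is a minimal sketch of the unit economics in Python. Every number is hypothetical and chosen only to keep the arithmetic readable; none is a reported figure for OpenAI or Anthropic. The function name `operating_margin` and the single fixed-cost term (standing in for training and R&D spend) are my own simplifying assumptions.

```python
def operating_margin(price_per_unit, units, cost_per_unit, fixed_costs):
    """Operating margin (profit / revenue) for a toy unit-economics model."""
    revenue = price_per_unit * units
    profit = revenue - cost_per_unit * units - fixed_costs
    return profit / revenue


# Hypothetical baseline: illustrative values only, not reported numbers.
baseline = dict(price_per_unit=10.0, units=1_000, cost_per_unit=7.0, fixed_costs=5_000.0)

# Each scenario pulls exactly one lever and leaves the others at baseline.
scenarios = {
    "baseline": baseline,
    "raise price": {**baseline, "price_per_unit": 12.0},
    "grow volume": {**baseline, "units": 2_000},
    "cut serving cost": {**baseline, "cost_per_unit": 4.0},
}

for name, params in scenarios.items():
    print(f"{name}: margin = {operating_margin(**params):.1%}")
```

In this toy model the baseline loses money, and each lever pulled alone only gets the business to break-even or a modest positive margin. That is the shape of the problem as I read it: a real company in this position probably needs several levers moving at once.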

The pricing pressure from open-source models adds another dimension of complexity. Meta's Llama, Mistral's models, and the broader open-source ecosystem provide capable alternatives at dramatically lower cost for many use cases. Not all use cases — frontier reasoning capability still requires frontier closed models — but enough use cases that the price ceiling for AI API calls is being compressed from below.

The IPO Question

OpenAI's reported IPO plans for late 2026 introduce a fascinating set of incentives and constraints. Going public would provide liquidity for early investors and employees, validate the company's valuation, and provide a currency for acquisitions. But it would also impose public market disciplines — quarterly earnings expectations, margin scrutiny, and the kind of short-term pressure that can conflict with the massive, long-horizon R&D investments that frontier AI development requires.

The transition from a nonprofit-controlled structure to a fully for-profit public company is itself a significant governance event. OpenAI's original mission — ensuring that artificial general intelligence benefits all of humanity — sits uneasily with the obligations of a public company to maximize shareholder value. How OpenAI navigates this tension will be one of the most watched corporate governance stories of the decade.

Anthropic has not announced IPO plans but will face similar questions eventually. The company's emphasis on AI safety as a core differentiator works well with enterprise customers but could become complicated in a public market context where investors may pressure the company to prioritize growth over caution.

The Google Factor

I want to spend a moment on Google because I think its competitive position is systematically underrated in most AI industry analysis. Google has three structural advantages that are difficult to replicate.

First, distribution. Google Search, Gmail, Google Docs, YouTube, Android, and Chrome collectively reach billions of daily active users. Embedding AI capabilities into these existing products means Google does not need users to adopt a new product or form a new habit — the AI comes to where they already are.

Second, data. Google arguably has the largest and most diverse dataset of human queries, documents, and interactions of any organization on earth. This data advantage compounds over time and is genuinely difficult for competitors to match.

Third, infrastructure. Google's custom TPU silicon and global data center network give it a cost structure for training and inference that is competitive with or superior to any other organization's. Google can afford to experiment aggressively because the marginal cost of serving AI features through existing products is lower than the cost of building a standalone AI business from scratch.

The risk for Google is execution and organizational focus. Google has historically struggled to ship consumer products with the polish and conviction of Apple or the speed of startups. But the stakes of the current AI transition seem to have concentrated the company's attention in a way that previous technology shifts did not.

Competitive Dynamics Going Forward

The competitive landscape is evolving toward what I think will be a tiered structure. At the frontier, meaning the most capable models for the most demanding tasks, OpenAI, Anthropic, and Google will compete intensely, each maintaining distinct positioning: OpenAI as the consumer and developer platform leader, Anthropic as the enterprise and safety-focused alternative, and Google as the integrated ecosystem play.

Below the frontier, open-source models will increasingly commoditize standard AI capabilities. For tasks that do not require the absolute best model — and that is a large and growing category — Llama, Mistral, and their successors will provide sufficient capability at dramatically lower cost. This commoditization will compress margins for the frontier labs on their lower-tier offerings and force them to continuously demonstrate that frontier capability is worth the premium.

The enterprise segment will likely consolidate around a small number of providers, with purchasing decisions driven by integration with existing infrastructure, compliance and security requirements, and the specific capability profiles of different model families. This is a market that rewards scale, trust, and ecosystem rather than pure model performance.

What I Am Watching

Three things will determine how this competitive landscape resolves over the next 12 to 18 months. First, whether OpenAI can achieve profitability, or at least a clear path to it, before or through an IPO. The revenue numbers are impressive, but revenue without profits is a familiar and sometimes tragic story in technology. Second, whether Anthropic's enterprise-focused strategy can sustain its growth trajectory as competitors aggressively target the same customer base. Third, whether Google's AI integration into existing products translates into meaningful revenue growth or gets lost in the noise of a business with more than $300 billion in annual revenue.

The AI revenue race is real and accelerating. But the ultimate winners will not be determined by who generates the most revenue in 2026 — they will be determined by who builds a sustainable business model that funds continued research while delivering enough value to customers that switching costs make the relationship durable. That is a harder and more interesting competition than a simple revenue comparison suggests.