Something fundamental shifted in how we interact with AI over the past year, and it happened so gradually that most people did not notice. We stopped going to AI. AI came to us.

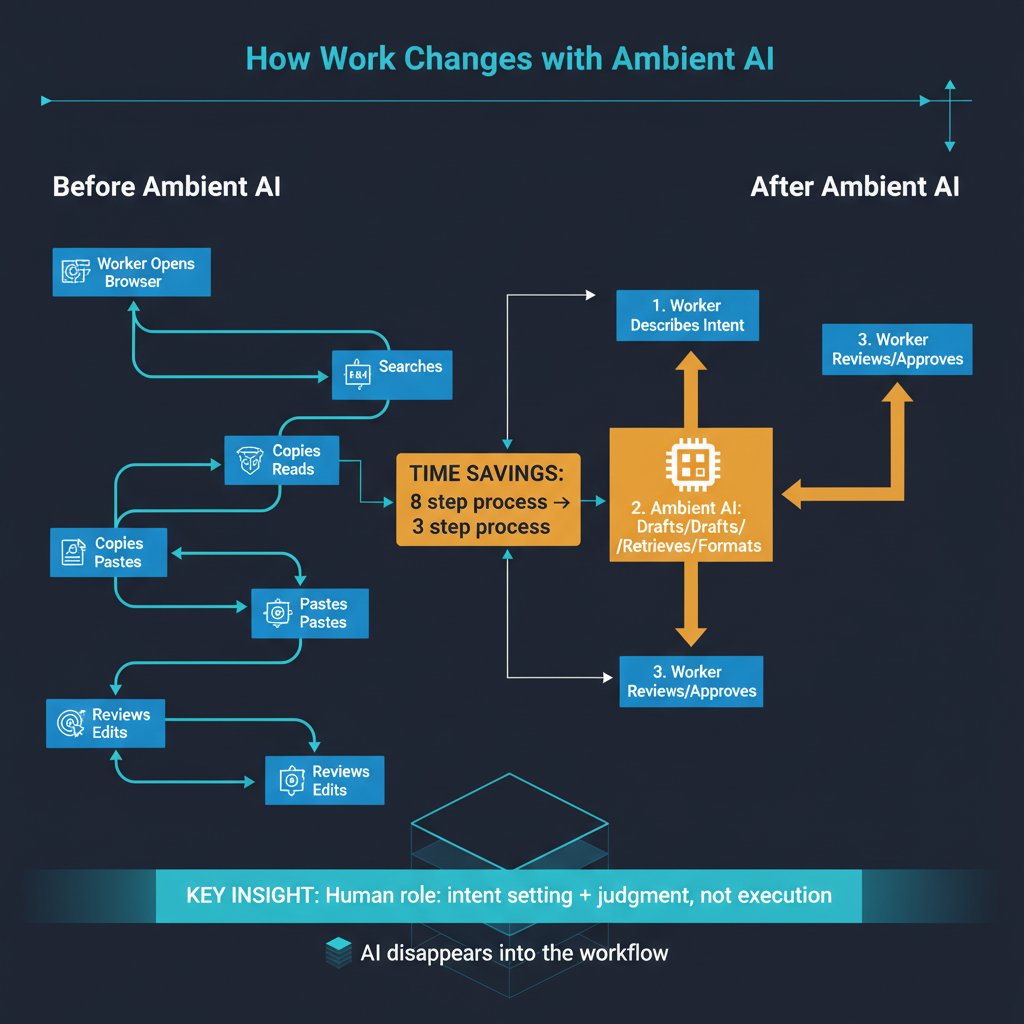

In 2023 and much of 2024, using AI meant opening a dedicated application — ChatGPT, Claude, Gemini — typing a prompt, and reading a response. It was a conscious, deliberate act. You interrupted your workflow, context-switched to an AI tool, formulated your request, waited for a response, and then carried the result back to whatever you were actually working on. The AI was powerful but separate, a specialist you consulted rather than a capability woven into your work.

By early 2026, that model feels increasingly quaint. AI has become an ambient layer — present in the tools where work already happens, activated without switching applications, operating with contextual awareness that makes explicit prompting less necessary. This is not a minor UX improvement. It represents a fundamental change in the human-AI interaction paradigm, and its implications for productivity, skill development, and organizational dynamics are profound.

The Disappearing Interface

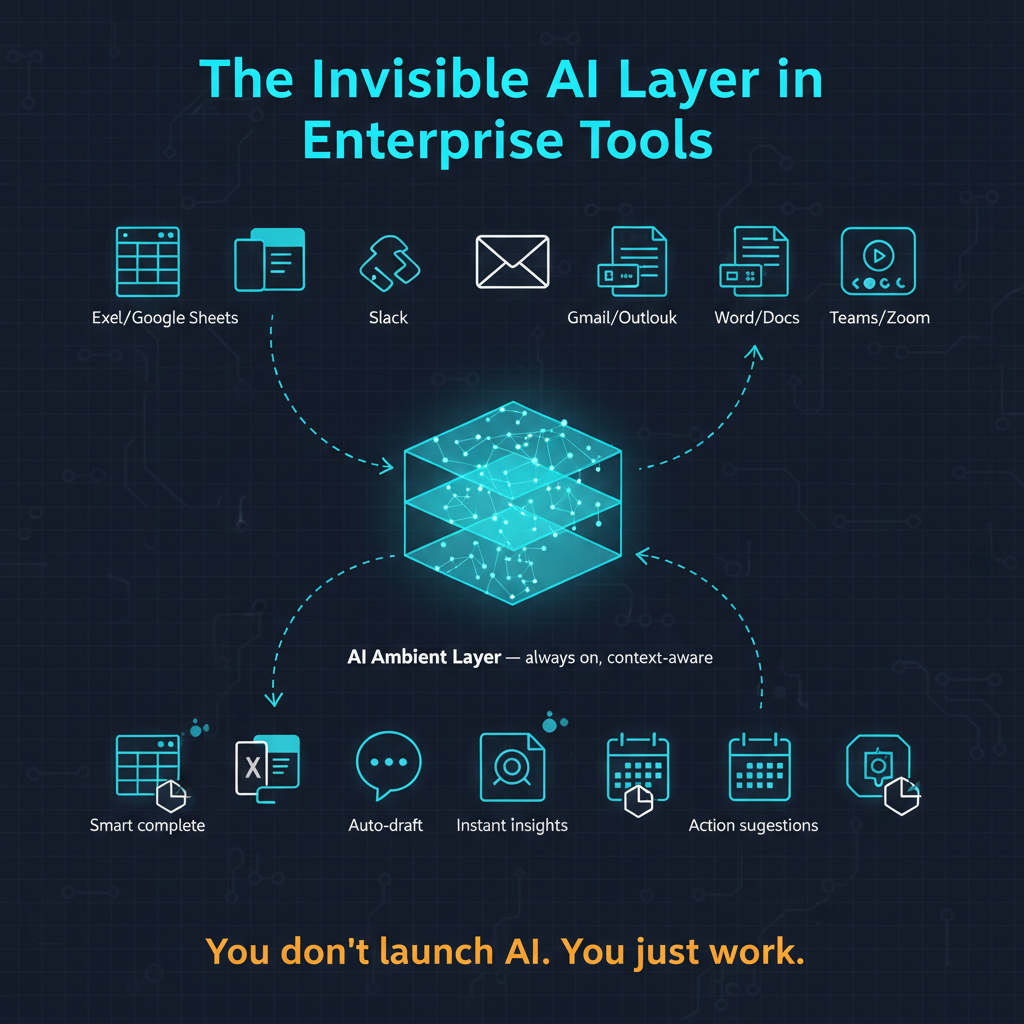

The most visible manifestation of this shift is the integration of AI capabilities directly into productivity tools. Microsoft Copilot is embedded in Word, Excel, PowerPoint, Outlook, and Teams. Google's Gemini is woven into Docs, Sheets, Gmail, and Meet. Notion, Slack, Figma, Adobe Creative Cloud, and dozens of other tools have integrated AI features that activate in context rather than requiring users to leave their workflow.

Consider what this means in practice. A financial analyst working in Excel does not need to copy data into ChatGPT, ask for analysis, and paste the results back. The AI is there, in Excel, already aware of the data in the spreadsheet, capable of generating formulas, creating visualizations, and identifying patterns without any context-switching. A writer in Google Docs does not need to open a separate tab to ask for help with a paragraph — the AI is present in the document, aware of what has been written, and can suggest continuations, revisions, or restructuring in place.

This integration solves what I consider the single largest friction point in AI adoption: the context-switch tax. Every time a user has to leave their primary tool to consult an AI, they pay a cognitive cost — reformulating their problem in a way the AI can understand, losing the flow state of their primary task, and then translating the AI's response back into their working context. Ambient AI eliminates this tax almost entirely.

Memory and Contextual Awareness

The second dimension of the ambient AI shift is memory. Anthropic's introduction of memory features in Claude — where the system retains context across conversations and builds a persistent understanding of the user's preferences, projects, and working patterns — represents a qualitative change in what AI assistance feels like.

Without memory, every AI interaction starts from zero. The user must re-establish context, re-explain preferences, and re-orient the AI to their specific situation. This is tolerable for occasional use but becomes a significant productivity drag for heavy users who interact with AI dozens of times per day. With memory, the AI accumulates context over time, much like a human colleague who learns your preferences, understands your projects, and can anticipate your needs.

The practical effect is striking. An AI that remembers your writing style does not need to be told to match it. An AI that knows your project history can make connections between current work and past decisions without being prompted. An AI that has learned your organization's terminology, processes, and priorities can provide more relevant assistance with less explicit instruction.

This is not just a convenience feature — it changes the economics of AI interaction. When every interaction requires full context specification, the cost-benefit calculation for using AI includes significant setup time. When the AI carries context forward, the marginal cost of each interaction drops dramatically, making it rational to involve AI in smaller, more frequent decisions rather than reserving it for major tasks.
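
To make that cost-benefit shift concrete, here is a minimal sketch in Python. Every number in it is hypothetical, chosen only for illustration, and the function name `worth_delegating` is my own invention rather than anything from a real tool. It treats an interaction as worthwhile when the time spent re-establishing context plus the time spent reviewing the AI's output is less than the time to do the task by hand.

```python
def worth_delegating(manual_minutes: float, ai_minutes: float, setup_minutes: float) -> bool:
    """Return True when handing the task to AI saves time overall."""
    return ai_minutes + setup_minutes < manual_minutes


# Hypothetical figures, in minutes, chosen only to illustrate the argument.
AI_MINUTES = 1.0            # assumed time to review and accept the AI's output
SETUP_WITHOUT_MEMORY = 5.0  # assumed time to re-explain context from scratch
SETUP_WITH_MEMORY = 0.5     # assumed time when context is carried forward

# Assumed time to complete each task by hand.
tasks = {
    "reword a sentence": 2.0,
    "draft a status update": 10.0,
    "build a summary report": 45.0,
}

for name, manual in tasks.items():
    without_memory = worth_delegating(manual, AI_MINUTES, SETUP_WITHOUT_MEMORY)
    with_memory = worth_delegating(manual, AI_MINUTES, SETUP_WITH_MEMORY)
    print(f"{name}: without memory={without_memory}, with memory={with_memory}")
```

Under these assumed numbers, the two larger tasks are worth delegating either way, while the two-minute task only becomes worth delegating once setup time falls. That is the sense in which carried-forward context makes smaller, more frequent interactions rational.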

What Disappears When the Interface Disappears

The transition to ambient AI creates both gains and losses that are worth examining honestly.

The gains are primarily in productivity and accessibility. When AI is embedded in familiar tools, the adoption barrier drops to nearly zero. Users do not need to learn a new application or develop prompting skills. The AI meets them in their existing workflow with their existing data, reducing the gap between AI capability and AI utilization.

But there are losses worth acknowledging. When AI operates invisibly within tools, users may lose awareness of when they are interacting with AI and when they are not. This blurring has implications for trust calibration — if you do not know that a suggested formula in Excel was generated by AI rather than looked up from a template library, you may not apply the appropriate level of scrutiny to verify its correctness.

There is also a skill development concern. When AI handles routine cognitive tasks invisibly, users may not develop or maintain the underlying skills those tasks require. A junior analyst who never manually builds a financial model because the AI in the spreadsheet generates one automatically may not develop the deep understanding of financial modeling that would allow them to catch errors in the AI's output. The AI becomes a crutch before the user has developed the muscles it is supposed to augment.

These are not reasons to resist the ambient AI transition — the productivity benefits are too significant — but they are reasons to be thoughtful about how it is implemented and how organizations manage the transition.

The Enterprise Implications

For enterprises, the ambient AI layer creates strategic considerations that go beyond individual productivity.

Data governance becomes more complex when AI is embedded in every tool. Every AI-powered feature in Excel, Slack, or Google Docs is potentially processing sensitive corporate data. The governance frameworks that organizations built for standalone AI applications — where data flows were relatively contained and auditable — need to be extended to cover AI features that are activated implicitly across dozens of tools.

Vendor lock-in dynamics shift as well. When AI was a separate tool, switching costs were relatively low — you could move from ChatGPT to Claude without changing your underlying workflow. When AI is deeply embedded in your productivity suite, switching means changing not just an AI provider but your entire tool ecosystem. This increases the strategic importance of the AI capabilities built into the productivity platforms that organizations have already standardized on.

Organizational learning must also adapt. Training programs designed to teach employees how to use a standalone AI tool need to be replaced with guidance on how to work effectively with AI-augmented versions of tools they already use. This is a subtler and more distributed training challenge: it touches every role and every tool rather than being confined to a specific AI application.

The Competitive Landscape for Ambient AI

The race to be the ambient AI layer is fundamentally different from the race to build the best standalone AI model. Microsoft has an enormous advantage through its Microsoft 365 (formerly Office 365) and Teams installed base. Google has a comparable advantage through Workspace and its consumer products. Apple has the device-level integration advantage through iOS, macOS, and Siri, though, as I have discussed elsewhere, Apple's AI execution has not matched its distribution advantage.

For the pure-play AI companies — OpenAI, Anthropic, and others — the ambient AI shift poses a strategic challenge. If AI capabilities are increasingly consumed through productivity platforms rather than standalone applications, the value capture shifts toward the platform owners. OpenAI's partnership with Microsoft and Anthropic's partnerships with Amazon and Google reflect awareness of this dynamic, but the long-term balance of value between model providers and platform owners remains unresolved.

Where This Goes

I believe the ambient AI layer will be the dominant interaction paradigm for the foreseeable future. Standalone AI applications will not disappear; there will always be use cases that benefit from a dedicated AI interface with full attention and customization. But for the vast majority of daily knowledge work, AI will be experienced as a capability of the tools people already use rather than as a separate tool they must learn and adopt.

The implications of this shift are still unfolding. Productivity gains will accumulate gradually rather than arriving as a single dramatic transformation. Skill development patterns will evolve as the boundary between human and AI contribution becomes harder to identify. Organizational structures will adapt as AI augmentation changes which tasks require human attention and which can be handled by the ambient layer.

The most important thing to understand about the ambient AI era is that it makes AI adoption a default rather than a choice. When AI is embedded in every tool, the question is no longer whether employees will use AI but whether organizations are prepared for the reality that their employees already do.