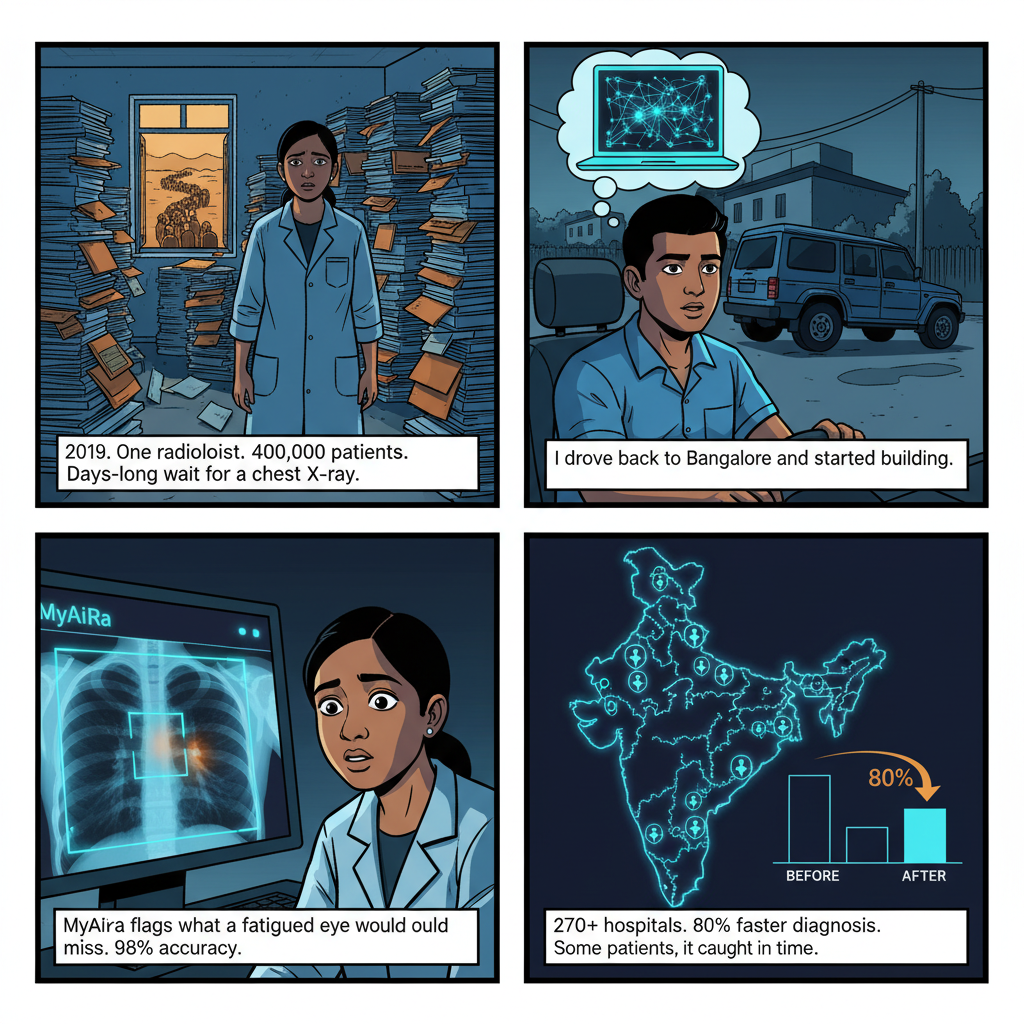

In 2019, I visited a district hospital in northern Karnataka that had exactly one radiologist serving a catchment area of roughly 400,000 people.

I watched her work for two hours. She was reading chest X-rays at a pace that would concern any Western radiology board — not because she was cutting corners, but because the backlog was physically present. A stack of films. Another stack. She was managing a waiting time measured in days for a modality that, in most clinical contexts, should produce a same-day result.

I had come from a Ph.D. in hardware security at NYU Tandon, had spent six years thinking about circuits and silicon and the mathematics of physical systems. I had published research on machine learning and signal processing. I had, in the abstract, always believed that AI could close diagnostic gaps in resource-constrained healthcare settings.

Sitting in that radiology room, watching a single physician try to carry an impossible workload, the abstract became specific. I drove back to Bangalore and started building Ai-Bharata.

Five years later, Ai-Bharata's Medishare platform runs in 270+ medical institutions across India. Our MyAiRa diagnostic AI achieves 98% accuracy on chest X-ray interpretation and has reduced average diagnostic turnaround time by 80% at the institutions where it's fully deployed. We won the Government of Karnataka's ELEVATE Idea2PoC competition, were runners-up at AWS ML Elevate, and took first at the MedTech Open Challenge.

None of that tells you what actually happened on the way there. Here's what I learned — the parts that don't make it into pitch decks or award citations.

The Diagnostic Gap Is Real, and It's Not Closing Fast Enough

India has approximately 1.3 radiologists per 100,000 people. The United States has roughly 11. That gap is not new information — healthcare policy researchers have been documenting it for decades. What's changed is that AI offers, for the first time, a technically credible path to filling part of that gap without simply training more specialists and waiting 15 years for them to graduate.

But "technically credible" is doing a lot of work in that sentence.

The challenge of medical AI deployment in India is not the AI. By 2019, deep learning models for chest X-ray interpretation were good enough to justify clinical piloting. Work built on the NIH Chest X-ray dataset, including CheXNet and dozens of follow-on models, had reported performance comparable to radiologist consensus on multiple findings. The computer vision problem was, at the level needed for a clinically useful assistive tool, largely solved.

The challenge was everything else. Infrastructure. Trust. Data. Workflow. Regulation. And the quietly important problem of what happens when an AI system is confident and wrong, and the physician who should catch that error is seeing 300 patients per day.

I'll work through each of these, because if you're building medical AI for deployment in India or any similar emerging market context, the failure modes here will define whether your product works in the real world.

The Infrastructure Problem Nobody Tells You About

When people talk about AI healthcare infrastructure in India, they tend to focus on compute. Can hospitals run your model? Do they have GPUs?

That's the wrong question. In five years of deployment across 270+ institutions, the compute problem was usually solvable. The harder infrastructure problems were:

Connectivity variability. India's digital infrastructure story is genuinely impressive: the Jio-driven connectivity revolution changed the economics of rural internet access dramatically. But averages conceal enormous variance. A private-sector hospital in Bengaluru has fiber connectivity and reliable power. A government district hospital in a tier-3 town in Karnataka may have 4G internet that works well on average but drops out unpredictably, variable generator coverage during power cuts, and radiology equipment that is connected to the hospital LAN but not always to the internet.

We built Medishare with a hybrid offline-first architecture specifically because of this. The model inference runs locally on a device installed at the facility. Results sync to the cloud when connectivity is available. Reports can be read and flagged locally even when the connection is down. This sounds obvious in retrospect, but it required a fundamental architectural decision made early that changed everything about how we built the system.
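The offline-first pattern described above can be sketched as a local "outbox": every result is written to local storage first and uploaded only when the link is up. This is a minimal illustration under stated assumptions, not Medishare's actual implementation. The table layout, the function names, and the `upload` callback are all hypothetical.

```python
import json
import sqlite3
from typing import Callable


def open_outbox(path: str = ":memory:") -> sqlite3.Connection:
    """Create (or open) a local queue of results waiting to be synced."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS outbox ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "payload TEXT NOT NULL, "
        "synced INTEGER NOT NULL DEFAULT 0)"
    )
    conn.commit()
    return conn


def record_result(conn: sqlite3.Connection, result: dict) -> int:
    """Persist a result locally first, so it stays readable while offline."""
    cur = conn.execute(
        "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(result),)
    )
    conn.commit()
    return cur.lastrowid


def pending_count(conn: sqlite3.Connection) -> int:
    """Number of results that have not reached the cloud yet."""
    row = conn.execute("SELECT COUNT(*) FROM outbox WHERE synced = 0").fetchone()
    return row[0]


def sync_pending(conn: sqlite3.Connection, upload: Callable[[dict], None]) -> int:
    """Upload unsynced results in order; stop at the first network failure."""
    rows = conn.execute(
        "SELECT id, payload FROM outbox WHERE synced = 0 ORDER BY id"
    ).fetchall()
    sent = 0
    for row_id, payload in rows:
        try:
            upload(json.loads(payload))
        except OSError:
            break  # link is down; leave the rest queued for the next attempt
        conn.execute("UPDATE outbox SET synced = 1 WHERE id = ?", (row_id,))
        conn.commit()
        sent += 1
    return sent
```

The design choice worth noting is that the local write never depends on the network: a dropped connection only delays the sync and does not block reading or flagging reports on site.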

Integration with existing equipment. The DICOM standard exists precisely to enable interoperability in medical imaging. In practice, legacy equipment in Indian hospitals varies enormously in DICOM compliance. We encountered DR (digital radiography) machines that produced nominally compliant DICOM files that broke our parser. We saw CR systems (computed radiography, the older technology where X-ray plates are scanned rather than captured digitally) that produced images at resolutions and orientations our preprocessing pipeline didn't handle correctly. And we found equipment that produced technically valid DICOM but with metadata fields populated incorrectly, causing our triage logic to misclassify scans.

Every new institutional deployment began with an equipment audit. This took time. It was not glamorous. It was absolutely necessary.
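As an illustration of what one step of such an audit might check, the sketch below validates a handful of header fields before a scan is allowed into the pipeline. It assumes the header has already been read into a plain dictionary keyed by standard DICOM attribute keywords (`Modality`, `PhotometricInterpretation`, `Rows`, `Columns`, `ViewPosition`). The function name, the accepted view set, and the resolution threshold are hypothetical choices and are not taken from Medishare.

```python
from typing import Any

REQUIRED = ("Modality", "PhotometricInterpretation", "Rows", "Columns")
PLAIN_FILM_MODALITIES = {"CR", "DX"}          # computed / digital radiography
GRAYSCALE = {"MONOCHROME1", "MONOCHROME2"}    # MONOCHROME1 is inverted grayscale
CHEST_VIEWS = {"PA", "AP", "LL", "RL"}        # assumed subset of view positions


def audit_header(header: dict[str, Any], min_side: int = 1024) -> list[str]:
    """Return a list of human-readable problems; an empty list means 'usable'."""
    problems = []
    for key in REQUIRED:
        if header.get(key) in (None, ""):
            problems.append(f"missing {key}")

    modality = header.get("Modality")
    if modality and modality not in PLAIN_FILM_MODALITIES:
        problems.append(f"unexpected Modality {modality!r}")

    photometric = header.get("PhotometricInterpretation")
    if photometric and photometric not in GRAYSCALE:
        problems.append(f"unexpected PhotometricInterpretation {photometric!r}")

    rows, cols = header.get("Rows"), header.get("Columns")
    if isinstance(rows, int) and isinstance(cols, int) and min(rows, cols) < min_side:
        problems.append(f"low resolution {rows}x{cols}")

    # A blank or mis-populated view field is valid DICOM but useless for triage.
    view = str(header.get("ViewPosition") or "").strip().upper()
    if view not in CHEST_VIEWS:
        problems.append(f"unusable ViewPosition {view!r}")
    return problems
```

Running a check like this over a sample of studies from each machine gives a per-device list of problems before go-live, which is cheaper than discovering them through misclassified scans.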

Power reliability and hardware lifecycle. We deployed edge hardware at many sites — small form-factor computers running local inference. In environments with frequent power fluctuations, hardware fails faster than expected. We learned to design for hardware turnover as a normal operational event, not an exception. Provisioning new hardware needed to take less than an hour from unboxing to functional deployment. We eventually achieved that, but it required treating the deployment and provisioning workflow as a product engineering problem, not an IT operations problem.

The Data Problem Is Not What You Think

The standard discourse about AI in Indian healthcare focuses on the diversity challenge: India's population is genetically diverse, and models trained primarily on Western datasets may underperform on Indian patients for certain conditions. This is real and it matters, particularly for conditions with population-specific prevalence patterns.

But after five years of deployment, the more persistent data problem was annotation quality, not population diversity.

Medical AI requires labeled training data. For chest X-ray interpretation, that means radiologist readings — either prospective labels applied to new images by clinicians working with the research team, or retrospective labels derived from clinical radiology reports. Both approaches have serious problems at scale in the Indian context.

Prospective labeling is slow and expensive. Radiologists — the scarce resource whose scarcity motivated the entire project — are being asked to spend time annotating training data instead of reading clinical films. The incentive structures don't naturally align.

Retrospective labeling from clinical reports has a more subtle problem: the reports themselves are highly variable. Indian radiology reporting has no universal format. Reports range from detailed structured assessments to terse telegraphic impressions written under the same time pressure we were trying to relieve. Extracting consistent, granular labels from those reports requires significant natural language processing work — and the NLP models themselves need to be validated, which requires ground truth, which requires labeling, which is the original problem.

We developed a semi-automated annotation pipeline that used a combination of text classification, weak supervision techniques, and targeted radiologist review to scale our labeling process. The open-source MedicalAI framework we eventually published — which has been downloaded more than 45,000 times on PyPI — embeds some of this thinking, but the full internal annotation infrastructure was considerably more complex.
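To make the idea concrete, here is a deliberately small sketch of weak supervision over report text: a few rule-based labeling functions vote, agreement produces an automatic label, and silence or disagreement routes the report to a radiologist. It uses a simple majority vote rather than a learned label model. The rules, the names, and the single "consolidation" target are hypothetical and far simpler than the internal pipeline described above.

```python
import re
from collections import Counter

POSITIVE, NEGATIVE, ABSTAIN = 1, 0, -1

MENTION = re.compile(r"\bconsolidation\b", re.IGNORECASE)
NEGATED = re.compile(r"\bno (?:evidence of |obvious )?consolidation\b", re.IGNORECASE)
CLEAR = re.compile(r"\blungs? (?:are |is )?clear\b", re.IGNORECASE)


def lf_affirmed_mention(report: str) -> int:
    """Vote positive when the finding is mentioned and not explicitly negated."""
    if MENTION.search(report) and not NEGATED.search(report):
        return POSITIVE
    return ABSTAIN


def lf_explicit_negation(report: str) -> int:
    """Vote negative on phrases such as 'no evidence of consolidation'."""
    return NEGATIVE if NEGATED.search(report) else ABSTAIN


def lf_clear_lungs(report: str) -> int:
    """Vote negative when the report states that the lungs are clear."""
    return NEGATIVE if CLEAR.search(report) else ABSTAIN


LABELING_FUNCTIONS = (lf_affirmed_mention, lf_explicit_negation, lf_clear_lungs)


def weak_label(report: str) -> tuple[int, str]:
    """Return (label, route): 'auto' if the rules agree, else 'review'."""
    votes = [v for v in (lf(report) for lf in LABELING_FUNCTIONS) if v != ABSTAIN]
    if not votes:
        return ABSTAIN, "review"      # no rule fired: needs a radiologist
    counts = Counter(votes)
    if counts[POSITIVE] == counts[NEGATIVE]:
        return ABSTAIN, "review"      # rules disagree: needs a radiologist
    return counts.most_common(1)[0][0], "auto"
```

The point of the routing is that scarce radiologist time goes only to reports the rules cannot settle, and those reviewed cases double as ground truth for checking the rules themselves.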

The lesson: when you're building AI for a data-scarce clinical environment, treat data infrastructure as a co-equal product problem with the model itself. You'll be tempted to treat it as an operations problem. That temptation will cost you.

Trust Is the Mechanism Through Which Everything Else Fails or Succeeds

I want to be precise here because "physician trust" is often discussed in a way that makes it sound like a marketing problem — convince physicians the AI is good, and adoption follows.

That's not the right framing. Physician trust in AI diagnostic tools is a calibration problem, not a persuasion problem. The question is not "do physicians believe in AI?" The question is "do physicians correctly understand when to rely on the AI, when to be skeptical of it, and when to override it — and does the interface design support that calibration?"

Overconfidence in AI outputs is a failure mode. Blanket skepticism that causes physicians to systematically ignore the AI signal is equally a failure mode, just a different one. The goal is appropriate reliance, and appropriate reliance is built through experience with the system's actual performance, not through training sessions or promotional materials.

We learned this the hard way. In early deployments, we saw both failure modes. At some institutions, physicians were treating MyAiRa's outputs as ground truth without applying clinical judgment — which was not how the system was designed or presented, but which emerged from workflow pressures and the natural human tendency to anchor on confident outputs. At other institutions, particularly where senior physicians had strong priors about AI limitations, the system was being routinely ignored even in cases where its signal was clear and would have saved time.

The interface redesign that most improved appropriate reliance wasn't a cosmetic UI change. It was a change in how we presented model uncertainty. Showing a probability estimate alongside a finding flag, rather than a binary positive/negative, gave physicians calibration information they could integrate with their clinical judgment. A chest X-ray flagged as "91% probability of consolidation" is a different input to clinical reasoning than "consolidation: positive."
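A displayed probability only helps a physician calibrate if the number itself is calibrated, meaning that findings shown at "90%" turn out to be present about 90% of the time. The sketch below pairs a display helper with a binned expected calibration error (ECE) check. It is an illustration under assumptions: the function names and the five-bin default are hypothetical, and nothing here is taken from MyAiRa.

```python
def describe_finding(name: str, probability: float) -> str:
    """Render a finding as a probability statement instead of a binary flag."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return f"{probability:.0%} probability of {name}"


def reliability_table(probs, outcomes, n_bins=5):
    """Group predictions into equal-width bins.

    Returns one (count, mean predicted probability, observed frequency)
    tuple per non-empty bin.
    """
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    table = []
    for members in bins:
        if members:
            n = len(members)
            mean_p = sum(p for p, _ in members) / n
            observed = sum(y for _, y in members) / n
            table.append((n, mean_p, observed))
    return table


def expected_calibration_error(probs, outcomes, n_bins=5):
    """Weighted average gap between stated confidence and observed frequency."""
    total = len(probs)
    return sum(
        n / total * abs(mean_p - observed)
        for n, mean_p, observed in reliability_table(probs, outcomes, n_bins)
    )
```

An ECE near zero on confirmed outcomes suggests the displayed percentages can be read at face value. A large value means the interface is showing numbers that physicians should not anchor on.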

This sounds obvious. It wasn't obvious to us at the start, because we were thinking about accuracy metrics, not about the physician cognition that sits between our output and the clinical outcome.

The Regulatory Landscape: Navigating CDSCO for AI Medical Devices

Medical AI in India is regulated as a medical device under the Central Drugs Standard Control Organisation (CDSCO). The regulatory pathway has evolved significantly since 2019 — when we started Ai-Bharata, the framework was genuinely ambiguous about how AI-based diagnostic software should be classified and approved. The Medical Devices Rules 2017 provided a foundation, but specific guidance for software-as-medical-device was limited.

We invested early in regulatory relationship-building that we didn't know we'd need. We documented our model development, validation methodology, and clinical evidence in a way that went well beyond what was required by the letter of regulation at the time. This felt like overhead. It became foundational when the regulatory environment tightened and institutions began asking harder questions about the basis for clinical claims.

Several lessons from navigating this environment:

Be conservative about clinical claims. Every claim you make about diagnostic performance creates a regulatory and liability surface. "98% accuracy" is a number that requires careful definition — accuracy on what task? Validated on which population? Under what conditions of acquisition? Being precise about these qualifications is not just regulatory hygiene. It's scientifically honest, and physicians will eventually notice if your real-world performance doesn't match your headline claim.
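A small worked example shows why a bare accuracy figure needs qualification. The counts below are hypothetical (1,000 scans, 20 of which truly contain the finding) and are not Ai-Bharata validation data. Two very different models reach the same headline accuracy.

```python
def confusion_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Accuracy, sensitivity, specificity and PPV from confusion-matrix counts."""
    nan = float("nan")
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        "specificity": tn / (tn + fp) if (tn + fp) else nan,
        "ppv": tp / (tp + fp) if (tp + fp) else nan,
    }


# Hypothetical model A: calls every scan negative.
always_negative = confusion_metrics(tp=0, fp=0, tn=980, fn=20)

# Hypothetical model B: finds 18 of the 20 true cases, with 18 false alarms.
useful_model = confusion_metrics(tp=18, fp=18, tn=962, fn=2)

for name, metrics in (("always negative", always_negative), ("useful", useful_model)):
    print(name, {k: round(v, 3) for k, v in metrics.items()})
```

Both models score 0.98 accuracy at this 2% prevalence, yet the first has zero sensitivity and the second catches 90% of true cases. That is why the task, the population, and the prevalence have to accompany any headline number.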

Institutional ethics committee approval is non-negotiable. Deploying a diagnostic AI system at a new institution in India requires ethics committee review, and rightly so. We encountered significant variation in how rigorously this was applied: some institutions had well-established IRB-equivalent processes, while others had minimal infrastructure for this kind of review. We built support for that review process into our institutional deployment workflow because it was the right thing to do, not only because it was required.

Data localization matters more than you expect. Patient imaging data in India is subject to evolving data localization requirements. Our hybrid architecture — local inference, cloud sync — was a technical response to connectivity variability, but it also provided natural alignment with data governance requirements. The patient's diagnostic images never needed to leave the institution's network. Only de-identified metadata and analysis results moved to our systems. This architecture decision, made for operational reasons, also turned out to be the right privacy design.
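One way to enforce "only de-identified metadata and results leave the site" is an allowlist: the sync payload is built from named fields, so anything not listed (patient details, pixel data) cannot leak by accident. The sketch below is an assumption-laden illustration. The field names, the `site_key`, and the keyed-hash study reference are hypothetical, and a keyed hash is pseudonymization, which may or may not satisfy a given regulatory definition of de-identification.

```python
import hashlib
import hmac

# Only these result fields are ever copied into an outbound payload.
SYNC_FIELDS = ("finding", "probability", "model_version")


def build_sync_payload(local_record: dict, site_key: bytes) -> dict:
    """Build the cloud-bound payload from an on-site record by allowlist."""
    payload = {name: local_record[name] for name in SYNC_FIELDS if name in local_record}
    # Replace the study identifier with a keyed one-way reference so results
    # can be matched across syncs without exposing the original identifier.
    digest = hmac.new(site_key, local_record["study_uid"].encode("utf-8"), hashlib.sha256)
    payload["study_ref"] = digest.hexdigest()
    return payload
```

An allowlist fails safe: when a new field is added to the local record, it stays on site until someone deliberately adds it to `SYNC_FIELDS`.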

What 270+ Deployments Actually Taught Me About Scale

The difference between making AI work in one hospital and making it work in 270 is not linear. It is not about running 270 copies of the same deployment playbook. It is about discovering all the ways that "this hospital" differs from "the abstracted hospital in your design assumptions."

Hospitals vary in:

- The age and model of their radiology equipment

- The mix of conditions they predominantly see (a hospital near a mining region sees different pneumoconioses than one near agricultural land)

- The technical capacity of their radiology staff to work with software systems

- Their reporting workflows and the languages in which reports are written

- The clinical hierarchy and who has the authority to adopt a new tool

- The regulatory environment of their state (some states have additional requirements beyond central CDSCO regulations)

Scaling through this variation required building institutional knowledge into our deployment process. Every 50-institution increment revealed new edge cases. The edge cases we encountered at hospitals 201-270 were different from the edge cases at hospitals 1-50 — not because hospitals become more exotic as you scale, but because the easy cases cluster early and the harder institutional configurations come later.

The most important scaling lesson: invest in customer success infrastructure before you think you need it. We scaled faster than we scaled our ability to support the institutions we had deployed at. The clinical and technical support demands of 270+ institutions require real infrastructure — not just a support email. Institutions that felt unsupported reverted to pre-AI workflows, which wasted everyone's time and damaged our credibility.

The Clinical Outcome That Changed How I Think About All of This

I want to describe a specific case, appropriately de-identified, because it captures something that metrics don't.

Eighteen months after deployment at a district hospital in Maharashtra, I received a message from the radiologist there who had been working with Medishare since our go-live. She described a case where MyAiRa had flagged a subtle finding in a chest X-ray that she had been prepared to read as normal — the queue was long, the X-ray looked clean at first scan, and a tired radiologist makes different errors than a rested one. The AI's flag prompted her to look again. She found early-stage consolidation consistent with atypical pneumonia. The patient was retained, treated appropriately, and discharged well.

She wrote: "I would have sent that patient home."

I have thought about that message many times since. The metric we cite is "80% reduction in diagnostic turnaround time." That metric is real and it matters enormously for throughput at overburdened institutions. But the metric that doesn't get cited is the number of diagnoses that were caught because an algorithm flagged something a fatigued human was prepared to miss. That number is harder to measure. It is not zero. And I think it is larger than most people assume.

This is why I keep building in this space, even when the deployment challenges are genuinely exhausting. The technology is not magic. The infrastructure problems are real. The trust and calibration problems require sustained effort. But the gap it can close — the gap between the care that is available and the care that is needed — is large enough to justify that effort.

What I Would Tell Anyone Starting This Work Today

The landscape for medical AI deployment in India has changed substantially since 2019. The regulatory environment is clearer. The venture ecosystem is more developed. The quality of public medical imaging datasets has improved, including datasets with genuine Indian population representation. The base technology is more mature.

None of that changes the fundamental challenges. Here is what I'd tell a founding team starting today:

Solve the offline-first problem before you touch the model. Your AI can be perfect and completely useless if it doesn't run where your users are. Design your architecture for the infrastructure realities of your lowest-connectivity deployment target, not your most favorable one.

Build data infrastructure as a product, not an afterthought. Your labeling pipeline, your data quality processes, your ability to measure distribution shift when new institutions come online — these are product problems. Treat them as such from day one.
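One simple way to measure distribution shift when a new institution comes online is to compare the model's output scores there against a baseline from sites where the model was validated. The sketch below uses the Population Stability Index (PSI) for that comparison. It is a sketch under assumptions: PSI is swapped in here as a generic drift statistic, not a method the article attributes to Ai-Bharata, and the function name, bin count, and floor value are hypothetical.

```python
import math


def population_stability_index(baseline, current, n_bins=10, floor=1e-6):
    """PSI between two samples of scores in [0, 1].

    PSI = sum over bins of (current_frac - baseline_frac)
          * ln(current_frac / baseline_frac).
    Empty bins are floored so the logarithm stays defined.
    """
    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            counts[min(int(v * n_bins), n_bins - 1)] += 1
        return [max(c / len(values), floor) for c in counts]

    base_frac = bin_fractions(baseline)
    curr_frac = bin_fractions(current)
    return sum(
        (c - b) * math.log(c / b) for b, c in zip(base_frac, curr_frac)
    )


# Hypothetical scores: a validated baseline and a new site that skews high.
baseline_scores = [i / 100 for i in range(100)]
new_site_scores = [min(s + 0.3, 0.99) for s in baseline_scores]
drift = population_stability_index(baseline_scores, new_site_scores)
```

A commonly quoted rule of thumb treats PSI below about 0.1 as stable and above about 0.25 as a shift worth investigating. Those thresholds are a heuristic from other domains and would need checking against real imaging data before being relied on.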

Find the clinical champion before you start the deployment. Every successful hospital deployment we had shared one characteristic: a physician who genuinely understood and believed in the technology, had the credibility to influence their colleagues, and was willing to invest time in proper deployment. Without that person, deployments struggled regardless of technical quality.

Design for appropriate reliance, not maximum reliance. The goal is not to maximize physician deference to AI outputs. The goal is to help physicians use AI as one input among several, calibrated to their understanding of its real-world performance. Interface design that supports calibration is more valuable than another percentage point of model accuracy.

Start regulatory documentation from day one. The CDSCO framework for AI medical devices will continue to evolve. The institutions you want to deploy at will ask harder questions over time. Documentation that tracks your validation methodology, your model versioning, and your clinical evidence is not bureaucratic overhead — it is the foundation of defensible clinical claims.

Measure outcomes, not just outputs. Diagnostic accuracy on a validation set is necessary but not sufficient. Build mechanisms to understand what happens downstream of your AI's recommendations. Are physicians treating patients differently? Are clinical outcomes changing? Are the patients who most needed earlier diagnosis actually getting it? These questions are hard to answer. They are the right questions.

Where This Goes Next

I left Ai-Bharata's day-to-day operations in 2024 to focus on broader AI infrastructure work at Snow Mountain AI, building multi-agent orchestration platforms and financial AI systems that demand much of the same rigor: different domain, similar discipline. But the healthcare AI deployment problem in India is nowhere near solved.

270 institutions sounds like a lot until you understand that India has approximately 25,000 hospitals with more than 30 beds; 270 is roughly 1% of that number. AI-assisted coverage of the diagnostic gap is perhaps 1% of where it needs to be. The models continue to improve. The infrastructure continues to build out. The regulatory environment is maturing into something that can support responsible scale.

The research foundations that underpin this work — neural architecture for medical imaging, uncertainty quantification, distribution shift detection — remain active areas where academic work and deployment reality are productively in tension. I maintain connections to that research community because deployment experience without research engagement eventually produces systems that are locally optimized and globally brittle.

The most honest thing I can say about five years of medical AI deployment in India is this: the technology worked better than I feared and worse than I hoped, for reasons that had almost nothing to do with the AI. The institutional, infrastructural, and human factors that determine whether a diagnostic AI system actually changes patient care are harder problems than building the model. They require different skills, different patience, and a different theory of change than most people bring to this work.

The opportunity is enormous. The work is hard. Both things are true.

Dr. Vinayaka Jyothi is a hardware security researcher, AI engineer, and entrepreneur. He was Founder and CTO of Ai-Bharata, a medical imaging AI company that deployed across 270+ healthcare institutions in India, and is currently Head of AI at Snow Mountain AI. He holds a Ph.D. in Electrical Engineering from NYU Tandon School of Engineering. His research has 502 citations and an h-index of 12, and he holds eleven patents in hardware trust and AI systems. The open-source MedicalAI framework he developed has been downloaded over 45,000 times on PyPI.