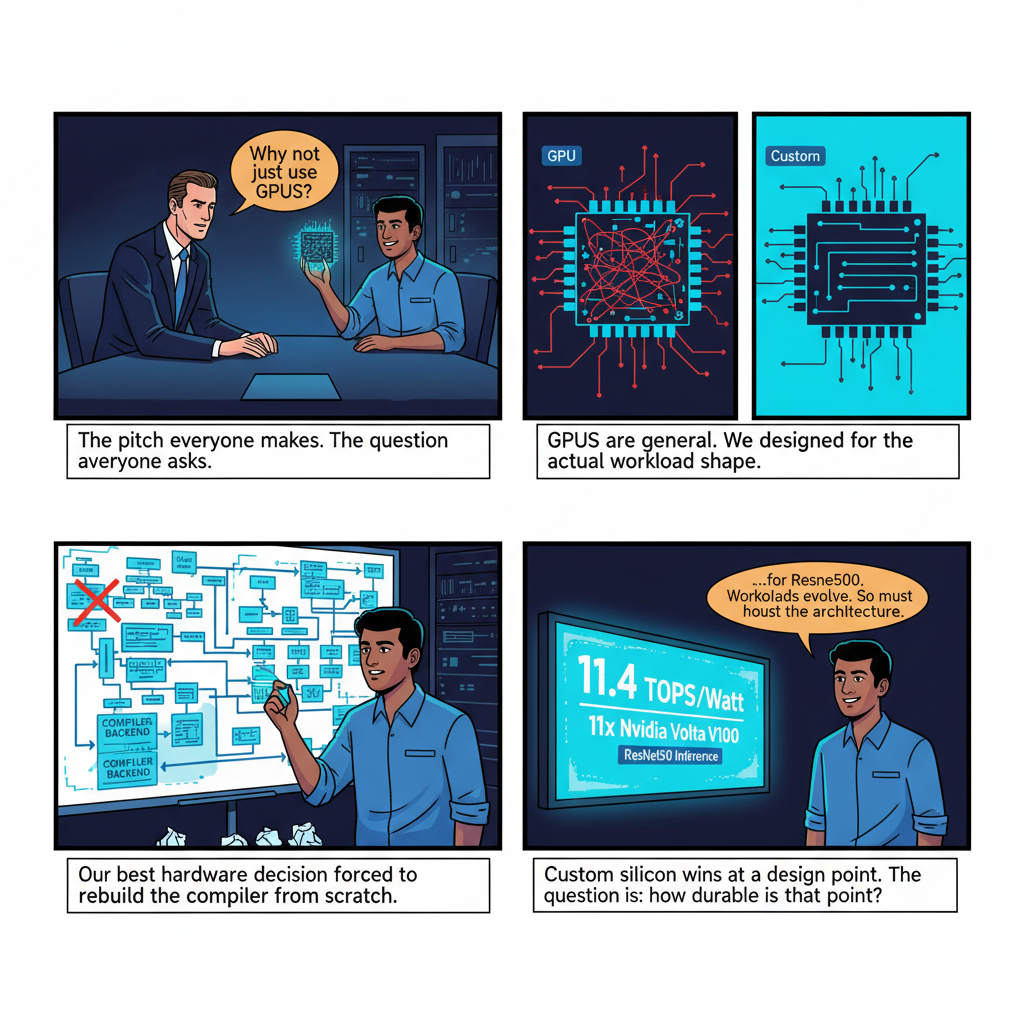

There's a pitch that every AI hardware startup eventually makes, and it goes something like this: "GPUs are general-purpose hardware retrofitted for matrix multiplication. We've designed something purpose-built for AI. We're 10x faster and 5x more power-efficient."

I've given versions of that pitch. I've sat across the table from people who gave it to me. And I can tell you that the pitch, while often technically accurate, usually obscures the two or three genuinely hard problems that will determine whether your chip ever sees production.

I co-founded Pathtronic Inc. in 2017 and served as its CTO until 2019. We designed an AI accelerator that achieved 11.4 TOPS/Watt and 2,519 frames per second on ResNet50, delivering 11x better power efficiency and 20x better performance-per-area than the NVIDIA Volta V100 at the time. Those numbers are real. The patents that came out of that work are filed. But what I remember most clearly from those two years isn't a TOPS figure or a frame rate. It's the specific afternoon when we realized that our most elegant architectural decision, the one we were proudest of, was going to require us to rewrite the compiler backend from scratch.

That's what custom silicon actually looks like. Let me tell you what I learned.

Why Anyone Builds Custom AI Silicon in the First Place

The question I got asked most often when fundraising for Pathtronic was some variant of: "Why not just use GPUs?" It's a reasonable question. NVIDIA had a decade-plus head start, a mature software ecosystem, and economies of scale that made their hardware accessible even at research budgets.

The answer isn't that GPUs are bad at AI. They're remarkably good at it — CUDA's success as an ecosystem is a genuine engineering achievement, and NVIDIA's investment in the deep learning toolchain from 2012 onward was prescient in ways that the company itself probably didn't fully anticipate. The answer is that GPUs are optimized for throughput on dense matrix operations, and modern AI workloads have very different shapes than the workloads GPUs were tuned for.

Consider what happens when you run ResNet50 on a GPU. The convolution layers, where GPUs excel, account for roughly 90% of the FLOPs. But those FLOPs come from layers with very different shapes. The early layers have large spatial dimensions and small channel counts. The later layers invert this. Optimizing GPU utilization across that variance requires careful batching, careful kernel selection, and a memory bandwidth budget that scales linearly with model size. The GPU's answer to memory bandwidth constraints is to buy more memory bandwidth: bigger HBM stacks, wider buses, higher clock speeds. This works, but it's expensive in both silicon area and power.
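A rough way to see that variance is to count multiply-accumulates, weights, and output activations for one 3x3 convolution from each ResNet-50 stage. The sketch below assumes the standard published ResNet-50 shapes (the text above does not list them), ignores batch size, bias, and the 1x1 convolutions, and uses a hypothetical `Conv` helper that is purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conv:
    """One square convolution layer (hypothetical helper, batch size 1)."""
    name: str
    out_hw: int  # output height and width (assumed equal)
    c_in: int    # input channels
    c_out: int   # output channels
    k: int       # kernel height and width

    @property
    def macs(self) -> int:
        # One MAC per output element, per input channel, per kernel tap.
        return self.out_hw * self.out_hw * self.c_out * self.c_in * self.k * self.k

    @property
    def weight_count(self) -> int:
        return self.c_out * self.c_in * self.k * self.k

    @property
    def output_count(self) -> int:
        return self.out_hw * self.out_hw * self.c_out


# Assumed shapes: the 3x3 convolution inside a bottleneck block of each stage.
LAYERS = [
    Conv("conv2_x", out_hw=56, c_in=64, c_out=64, k=3),
    Conv("conv3_x", out_hw=28, c_in=128, c_out=128, k=3),
    Conv("conv4_x", out_hw=14, c_in=256, c_out=256, k=3),
    Conv("conv5_x", out_hw=7, c_in=512, c_out=512, k=3),
]

for layer in LAYERS:
    print(
        f"{layer.name:<8} {layer.macs / 1e6:7.1f} M MACs "
        f"{layer.weight_count:>9,} weights {layer.output_count:>8,} outputs"
    )
```

With these shapes the four layers perform the same number of multiply-accumulates, but the weight count grows 64x from the first stage to the last while the output activation count shrinks 8x. A kernel and memory strategy tuned for one end of the network fits the other end poorly.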

The architectural insight that motivated Pathtronic was simpler than it sounds: most of the data movement in a neural network inference workload is predictable at compile time. You know the model. You know the weights. You know the activation tensor shapes at every layer. A purpose-built accelerator can be designed around that predictability in ways that a general-purpose GPU cannot.

What that means in practice: you can eliminate most of the memory hierarchy complexity that makes GPUs expensive to build and power-hungry to run. You can replace SRAM-heavy register files with a streaming dataflow architecture that keeps arithmetic units fed without the overhead of a conventional memory subsystem. You can make explicit what the GPU runtime makes implicit — data placement, computation scheduling, synchronization — and trade the flexibility of a general scheduler for the efficiency of a static one.
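As a toy illustration of what "static" means here, the sketch below fixes every layer's start cycle and buffer assignment before anything runs. It is not Pathtronic's scheduler: the layer list, the `macs_per_cycle` figure, and the two ping-pong buffers `buf_a` and `buf_b` are all assumptions made up for the example, and it only handles a linear chain of layers.

```python
from math import ceil


def static_schedule(layers, macs_per_cycle):
    """Compute a fixed schedule for a linear chain of layers.

    layers: list of (name, mac_count) tuples, known ahead of time.
    Returns one entry per layer with its start/end cycle and the
    ping-pong buffer it reads from and writes to.
    """
    schedule = []
    cycle = 0
    for index, (name, macs) in enumerate(layers):
        duration = ceil(macs / macs_per_cycle)
        even = index % 2 == 0
        schedule.append({
            "layer": name,
            "start": cycle,
            "end": cycle + duration,
            # Each layer reads what the previous layer wrote, so the two
            # buffers simply alternate roles from layer to layer.
            "reads": "buf_a" if even else "buf_b",
            "writes": "buf_b" if even else "buf_a",
        })
        cycle += duration
    return schedule


# Hypothetical MAC counts, chosen only to make the arithmetic easy to follow.
example_layers = [("layer0", 1000), ("layer1", 5000), ("layer2", 300)]
schedule = static_schedule(example_layers, macs_per_cycle=256)
for entry in schedule:
    print(entry)
```

Nothing in this plan depends on runtime state, so no hardware scheduler or cache is needed to discover it. The cost is that any change to the network means recomputing the plan.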

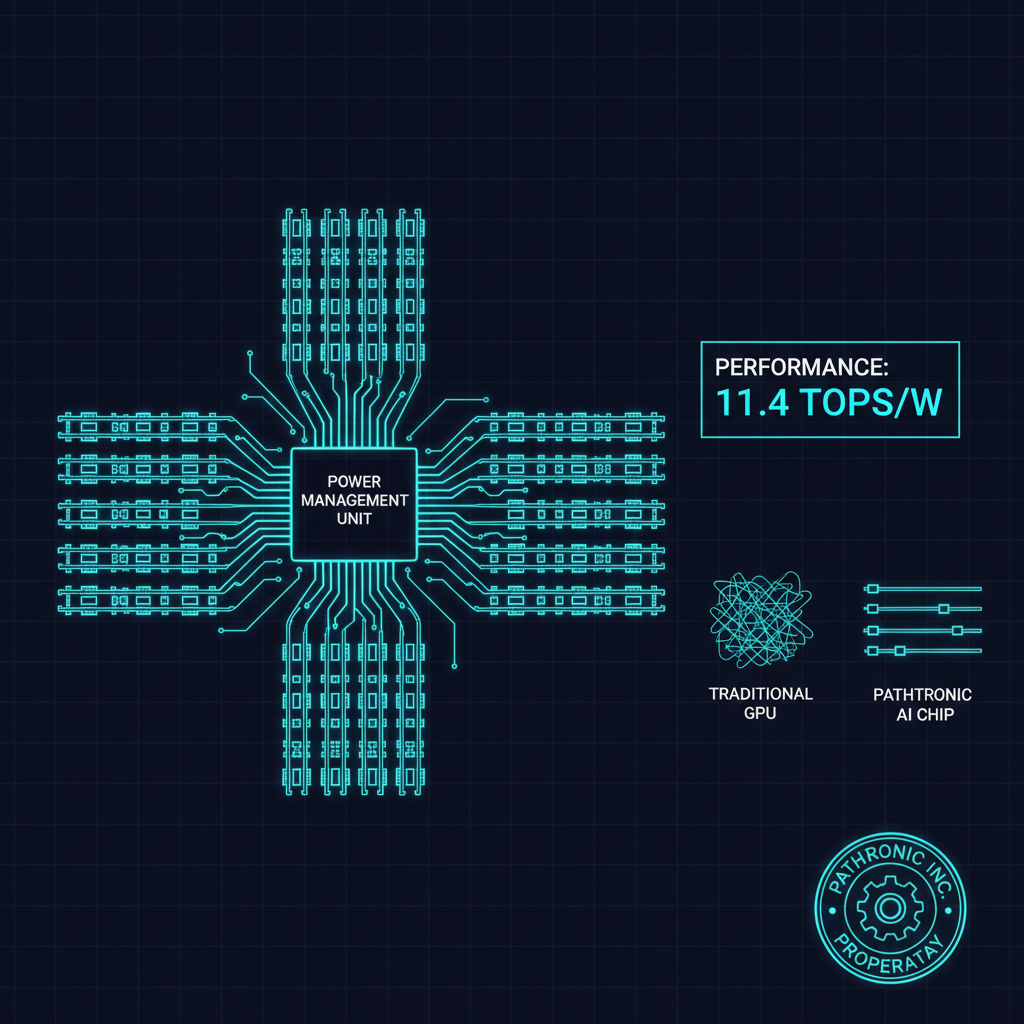

The virtual multilane architecture we developed at Pathtronic was our implementation of this insight. Rather than treating the accelerator as a bank of compute units sharing a unified memory pool, we designed a system where computation was organized into parallel lanes, each with its own dedicated data path, operating on pre-staged data with minimal coordination overhead between lanes. Power management became a function of lane utilization rather than global DRAM traffic. The result was the power efficiency profile that became our headline metric.

This sounds clean when described in retrospect. Living through the design process was considerably messier.

The Hardware-Software Co-Design Problem Nobody Talks About

Here's what the academic literature on custom AI accelerators usually underemphasizes: the chip is only as good as the compiler that targets it.

This sounds obvious. Every hardware architect knows it in the abstract. But the implications are deceptive, because the compiler problem for a custom AI accelerator is qualitatively different from the compiler problem for a conventional processor.

When you design a conventional processor, you're designing a machine that executes sequential instructions. Your compiler — even a sophisticated optimizing compiler — is fundamentally mapping a sequential computation onto a sequential machine. The optimization space is constrained: instruction scheduling, register allocation, loop transformations. Hard problems, but well-understood ones with decades of research and tooling behind them.

When you design a custom AI accelerator with a novel dataflow architecture, you're designing a machine that executes spatially mapped computations. The "compiler" has to solve a fundamentally different problem: given a computational graph representing a neural network, partition it across your accelerator's spatial resources in a way that maximizes data reuse, minimizes off-chip memory accesses, and respects the synchronization constraints of your lane architecture. This is not a constrained version of the classical compiler problem. It's closer to a scheduling problem for which finding the optimal solution is NP-hard.
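Because the exact problem is intractable, practical mappers lean on heuristics. The sketch below shows one textbook heuristic, longest-processing-time-first, applied to a stripped-down version of the problem: independent work tiles with known costs assigned to identical lanes so that the busiest lane finishes as early as possible. It is a stand-in, not the Pathtronic compiler; the tile names and costs are invented, and it ignores data reuse, off-chip traffic, and synchronization entirely.

```python
import heapq


def lpt_assign(tile_costs, num_lanes):
    """Greedy longest-processing-time-first assignment of tiles to lanes.

    tile_costs: dict mapping tile name -> estimated cost (e.g. cycles).
    Returns (assignment, loads), both keyed by lane index.
    """
    # Min-heap of (current load, lane index): the least-loaded lane pops first.
    heap = [(0, lane) for lane in range(num_lanes)]
    heapq.heapify(heap)
    assignment = {lane: [] for lane in range(num_lanes)}

    # Place the most expensive tiles first; ties are broken by name so the
    # result is deterministic.
    for tile, cost in sorted(tile_costs.items(), key=lambda kv: (-kv[1], kv[0])):
        load, lane = heapq.heappop(heap)
        assignment[lane].append(tile)
        heapq.heappush(heap, (load + cost, lane))

    loads = {
        lane: sum(tile_costs[t] for t in tiles)
        for lane, tiles in assignment.items()
    }
    return assignment, loads


# Hypothetical tile costs.
tiles = {"t0": 7, "t1": 5, "t2": 4, "t3": 4, "t4": 3, "t5": 3, "t6": 2}
assignment, loads = lpt_assign(tiles, num_lanes=3)
makespan = max(loads.values())
print(assignment, loads, makespan)
```

Even this simplified load-balancing problem is NP-hard to solve optimally in general. The real mapping problem adds data placement and inter-lane synchronization on top of it.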

At Pathtronic, our first compiler approach was hand-coded: we manually mapped the reference networks we were benchmarking against to our hardware. This gave us our best numbers — the 11.4 TOPS/Watt figure came from a carefully hand-optimized ResNet50 mapping that we spent weeks tuning. It also meant that every time we wanted to run a different network, someone had to spend weeks re-doing the work.

The pivot to an automated compiler backend was technically necessary and practically painful. We had to formalize the abstract machine model for our architecture — precisely specify what operations were atomic, what data placement assumptions the hardware made, what the cost functions were for different mapping decisions — before we could build a compiler that reasoned about it correctly. The formalization process revealed three architectural assumptions we had baked in implicitly that turned out to be incorrect under certain network shapes. We changed the hardware. The hardware changes required re-validating our timing closure analysis. Two weeks of work turned into six.

This is the central tension of hardware-software co-design: the hardware and software need to be designed together, which means they need to be designed iteratively, which means neither is ever stable while the other is changing. Managing that instability is the actual skill. The patents that came out of Pathtronic — including our flexible hardware processing framework — reflect not just the final architecture but the process of converging on an architecture that could be targeted efficiently by a compiler that could actually be built.

What the Industry Gets Wrong About Power Efficiency

Our 11x power efficiency advantage over the Volta V100 is the kind of number that makes a good slide. It's also the kind of number that invites skepticism, and that skepticism is sometimes well-founded.

Here's what the number actually means: at the specific workload (ResNet50 inference, batch size optimized for our architecture), our chip delivered 11.4 TOPS per watt compared to the V100's approximately 1 TOPS per watt under comparable measurement conditions. That's a real measurement of real hardware running a real workload. It's not a theoretical efficiency limit or a cherry-picked benchmark.

What it doesn't mean: that our chip was 11x more power-efficient at all workloads, or that it would remain 11x more efficient as model architectures evolved, or that the efficiency advantage would be preserved across the full stack including memory, cooling, and system-level power draw.

This is the power efficiency conversation that rarely gets had honestly in the AI hardware space. Custom silicon achieves its efficiency advantage by specializing. Specialization means your efficiency is a function of how closely the workload matches the design point. As the workload drifts, efficiency degrades. The question is how gracefully it degrades and across what range of workload variation.

The virtual multilane power management architecture we built was explicitly designed to address this: lanes could be powered down independently when not utilized, and the power gating was fine-grained enough that a network with irregular layer shapes could still run efficiently even if it couldn't fully utilize all lanes simultaneously. We validated this across several network families. The efficiency story held reasonably well across the convolution-heavy models that dominated in 2017-2019.
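A back-of-the-envelope model shows why per-lane gating matters when a network cannot fill every lane. Every number below is invented for illustration (lane throughput, active and idle lane power, shared overhead) and none of it is Pathtronic data. The model also assumes a gated lane draws no power at all, which real power gating only approximates.

```python
def tops_per_watt(active_lanes, total_lanes, gated,
                  lane_tops=1.0,       # assumed throughput per active lane
                  lane_active_w=0.10,  # assumed power of an active lane
                  lane_idle_w=0.04,    # assumed power of an idle, ungated lane
                  shared_w=0.40):      # assumed fixed overhead (control, I/O)
    """Effective TOPS/W for a multilane chip at partial utilization."""
    if not 0 < active_lanes <= total_lanes:
        raise ValueError("active_lanes must be in 1..total_lanes")
    idle_lanes = total_lanes - active_lanes
    # With gating, idle lanes are modeled as drawing nothing.
    idle_power = 0.0 if gated else idle_lanes * lane_idle_w
    power = active_lanes * lane_active_w + idle_power + shared_w
    return active_lanes * lane_tops / power


for active in (16, 8, 4):
    with_gating = tops_per_watt(active, 16, gated=True)
    without_gating = tops_per_watt(active, 16, gated=False)
    print(f"{active:2d}/16 lanes: gated {with_gating:.2f}, ungated {without_gating:.2f} TOPS/W")
```

In this toy model, efficiency still falls as utilization drops because the shared overhead is spread over less work, but gating keeps the decline much shallower than leaving idle lanes powered.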

What I didn't fully appreciate until later was how rapidly the relevant workload distribution was about to shift. Transformer architectures, which were emerging in NLP during our development period, have a fundamentally different compute profile from convolutional networks: attention mechanisms dominate, and attention scales quadratically with sequence length in a way that creates memory access patterns quite different from convolution. An accelerator designed around the assumption that convolution was the dominant operation — which was a reasonable assumption in 2017 — needed rethinking for transformer-centric workloads by 2020.
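The quadratic term is easy to see by counting multiply-accumulates in one self-attention layer. The sketch below uses the usual textbook accounting and ignores softmax, normalization, and the feed-forward block. The model width of 768 and the 12 heads are assumed example values, not figures from the Pathtronic work.

```python
def attention_macs(seq_len, d_model):
    """MACs for Q @ K^T plus scores @ V, summed over all heads."""
    # Each of the two products costs seq_len * seq_len * d_model MACs.
    return 2 * seq_len * seq_len * d_model


def projection_macs(seq_len, d_model):
    """MACs for the Q, K, V and output projections (four d x d matmuls)."""
    return 4 * seq_len * d_model * d_model


def score_elements(seq_len, num_heads):
    """Size of the intermediate attention score matrices across heads."""
    return num_heads * seq_len * seq_len


D_MODEL, HEADS = 768, 12  # assumed example sizes
for n in (512, 2048, 8192):
    print(
        f"n={n:5d}  attention={attention_macs(n, D_MODEL) / 1e9:8.2f} GMACs  "
        f"projections={projection_macs(n, D_MODEL) / 1e9:8.2f} GMACs  "
        f"scores={score_elements(n, HEADS) / 1e6:8.1f} M elements"
    )
```

Quadrupling the sequence length multiplies the attention work and the score-matrix size by 16 while the projections grow only 4x. Under this accounting the attention term overtakes the projections once the sequence length exceeds twice the model width, and the intermediate it produces has no counterpart in a convolution's fixed weight tensor.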

This isn't a failure of Pathtronic's design. It's an illustration of the irreducible uncertainty in custom silicon: you're making a 3-5 year bet on a workload distribution that is itself evolving rapidly. NVIDIA's GPU advantage is partly that they're less exposed to this uncertainty — their architecture is flexible enough to run both convolution-dominated and attention-dominated workloads acceptably well. Custom silicon wins on efficiency at a specific design point and loses some of that advantage as the design point moves.

The honest version of the custom silicon pitch isn't "we're always more efficient." It's "we're more efficient at this workload distribution, and here's our argument for why that distribution is stable enough to build a business on."

What Neuromorphic Computing Gets Right (and Why We Explored It)

One of the more interesting detours during Pathtronic's development was our exploration of neuromorphic computing — specifically, whether spiking neural network architectures could achieve better power efficiency than our dataflow accelerator for certain classes of inference problems.

Neuromorphic computing is based on a biological insight: real neurons don't compute continuously. They spike — produce brief electrical pulses — when their input crosses a threshold, and are otherwise quiescent. The power consumption of a spiking system scales with the spike rate, not with the total number of neurons. For sparse, event-driven workloads, this can deliver significant efficiency gains over conventional accelerators that perform dense matrix multiplication regardless of input sparsity.
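A minimal discrete-time leaky integrate-and-fire neuron makes the "work scales with spikes" point concrete. This is the generic textbook model, not the patented neuron model mentioned in this article. The weights, leak factor, threshold, and input spike train are arbitrary illustrative values.

```python
def lif_run(input_spikes, weights, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron.

    input_spikes: one list per timestep holding the indices of the inputs
                  that spiked at that step (empty list = silence).
    Returns (output spike times, number of synaptic additions performed).
    """
    potential = 0.0
    out_spikes = []
    synaptic_ops = 0
    for t, active_inputs in enumerate(input_spikes):
        potential *= leak                   # passive decay every step
        for i in active_inputs:             # work happens only for inputs that fired
            potential += weights[i]
            synaptic_ops += 1
        if potential >= threshold:          # fire and reset
            out_spikes.append(t)
            potential = 0.0
    return out_spikes, synaptic_ops


weights = [0.6, 0.5, 0.3, 0.2]
input_spikes = [[0], [], [1, 2], [], [], [3], [0, 1]]

out_spikes, synaptic_ops = lif_run(input_spikes, weights)
dense_ops = len(input_spikes) * len(weights)  # a dense layer touches every weight every step
print(out_spikes, synaptic_ops, dense_ops)
```

In this example the neuron performs 6 synaptic additions where a dense evaluation over the same window would perform 28 multiply-accumulates. The advantage disappears as the input becomes less sparse.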

I filed a patent on a bio-plausible spiking neuron model for neuromorphic computing during this period — work that grew directly out of our Pathtronic research. The fundamental insight was that the biological plausibility wasn't the point; the engineering tractability was. We weren't trying to simulate biology. We were asking whether the computational structure of spiking networks — sparse, event-driven, temporally coded — could be implemented efficiently in silicon for specific AI inference tasks.

The honest answer from our investigation: yes, for a specific and currently narrow class of workloads. Spiking networks perform well on time-series classification, event-camera data processing, and certain kinds of edge inference where the input signal is inherently sparse. They struggle with the dense image classification benchmarks that dominated hardware comparisons in our era. Converting a trained convolutional network to a spiking representation without significant accuracy loss was an unsolved problem then, and remains partially unsolved now.

The broader lesson: the power efficiency frontier in AI hardware is not a single Pareto frontier. There are several distinct design points (dataflow accelerators, neuromorphic processors, in-memory computing, analog compute), each with a different efficiency profile across different workload families. The field is still early enough that it's not clear which of these will dominate at scale, and it's quite possible that different application domains will settle on different architectures.

What I'd Tell Someone Starting an AI Hardware Startup Today

Building Pathtronic was one of the most technically demanding things I've worked on. It was also genuinely exciting in a way that software-only AI work hasn't quite matched — there's something about having your abstraction layer terminate in actual transistors that changes how you think about computing. Here's what I'd tell a founder or CTO starting an AI chip company today:

Your benchmark isn't your product. ResNet50 on ImageNet is the AI hardware equivalent of running Dhrystone on a processor — it's useful for comparison, but no one is deploying ResNet50 on ImageNet in production applications. Define the actual workload distribution you're optimizing for as precisely as you can, design to that distribution, and be honest about where your efficiency degrades outside of it.

Invest in the compiler early. The temptation is to get the hardware right first and build the compiler later. Resist it. The hardware-software co-design process means that your compiler architecture needs to be at least partially specified before you can make good hardware decisions. Build a minimum viable compiler that can target your architecture in the first six months. The things you learn from actually using it will change your hardware design.

Power efficiency is a system property, not a chip property. Your chip might be 10x more power-efficient than a GPU at the compute level. But efficiency measured at the system level, once you include memory, interconnects, cooling, and voltage regulation, looks different from chip-level efficiency. Model the full system from the start. A chip that's 10x more efficient at compute but requires custom memory solutions can easily lose that efficiency advantage at the system level.

Plan for workload evolution. The model architectures that are popular when you start your design will not be the model architectures that matter when you ship. Build in flexibility mechanisms — even at the cost of some peak efficiency — that let you address workload shifts without a full redesign. The flexible hardware processing framework we built at Pathtronic was partly a response to learning this lesson the hard way.

The ecosystem matters as much as the hardware. NVIDIA's moat is not primarily the GPU. It's CUDA — a software ecosystem that has accumulated fifteen years of developer familiarity, library support, and framework optimization. Custom silicon that requires developers to rewrite their training and inference pipelines faces a real adoption barrier regardless of performance metrics. Think hard about your software story from day one.
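To put rough numbers on the earlier point about system-level power: the sketch below compares a baseline system against one whose compute power drops by a given factor while everything else stays the same or grows. The wattages are invented round numbers, not measurements of any real GPU or accelerator, and `system_efficiency_gain` is a hypothetical helper.

```python
def system_efficiency_gain(compute_w, other_w, compute_gain, other_scale=1.0):
    """System-level power reduction for the same delivered work.

    compute_w:    baseline power spent in the compute chip
    other_w:      baseline power for memory, interconnect, cooling, regulation
    compute_gain: how many times more efficient the new chip is at compute
    other_scale:  multiplier on the non-compute power in the new system
    """
    baseline_total = compute_w + other_w
    new_total = compute_w / compute_gain + other_w * other_scale
    return baseline_total / new_total


# Assumed baseline: 200 W of compute, 150 W of everything else.
same_platform = system_efficiency_gain(200, 150, compute_gain=10)
custom_memory = system_efficiency_gain(200, 150, compute_gain=10, other_scale=1.2)
print(f"10x chip, same platform:         {same_platform:.2f}x at the system level")
print(f"10x chip, 20% costlier platform: {custom_memory:.2f}x at the system level")
```

Under these assumptions a 10x chip-level gain shrinks to roughly 2x at the wall, and to less than that if the custom part needs a more power-hungry memory subsystem. This is the same logic as Amdahl's law, applied to watts instead of runtime.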

Where AI Chip Design Is Heading

The era of "GPUs for everything" in AI is already ending, and I say this as someone who genuinely respects what NVIDIA built. The workload diversity of AI is now too large for any single architecture to dominate efficiently. Edge AI, cloud training, cloud inference, embedded systems, scientific AI — these have genuinely different requirements, and the ecosystem is already fragmenting into specialized solutions.

What this means for custom silicon startups: the opportunity is real, but the thesis needs to be more specific than "we're better than GPUs." The question is: better at what, for whom, and why is that advantage durable as the workload landscape evolves?

My time at Pathtronic left me with a conviction that hardware-software co-design — not just hardware design — is the core competency that matters. The chips that win won't just be more efficient at today's workloads. They'll be designed with enough insight into the software stack to adapt efficiently as the workloads change. That requires hardware architects who think like software engineers and software engineers who understand the constraints of silicon — a rare combination that remains the most valuable thing to find when building an AI hardware team.

The Pathtronic numbers — 11.4 TOPS/Watt, 2,519 frames per second — are a snapshot of what was possible in 2019 with a small team, a clear architectural insight, and two years of very focused engineering. The ventures I've built since have all been shaped by what that experience taught me about the relationship between hardware constraints and software design. It's a relationship that only gets more important as AI systems grow more capable and more power-hungry.

The GPU crowd will tell you that general-purpose hardware always wins in the long run. The custom silicon crowd will tell you that purpose-built always wins on efficiency. Both are right about different things. The interesting engineering happens in the space between those positions — and that space is where I've found the most valuable problems to work on.

One dimension of that space that's becoming impossible to ignore: hardware security in custom AI silicon. As AI accelerators become high-value targets in critical infrastructure, the security properties of custom silicon — whether the design is resilient against supply chain compromise, whether it supports hardware attestation, whether it has side-channel attack mitigations — become a first-class engineering constraint alongside power efficiency and silicon area. The teams that figure out how to make custom silicon that's both efficient and defensible will have a structural advantage as security requirements mature in the AI hardware market.