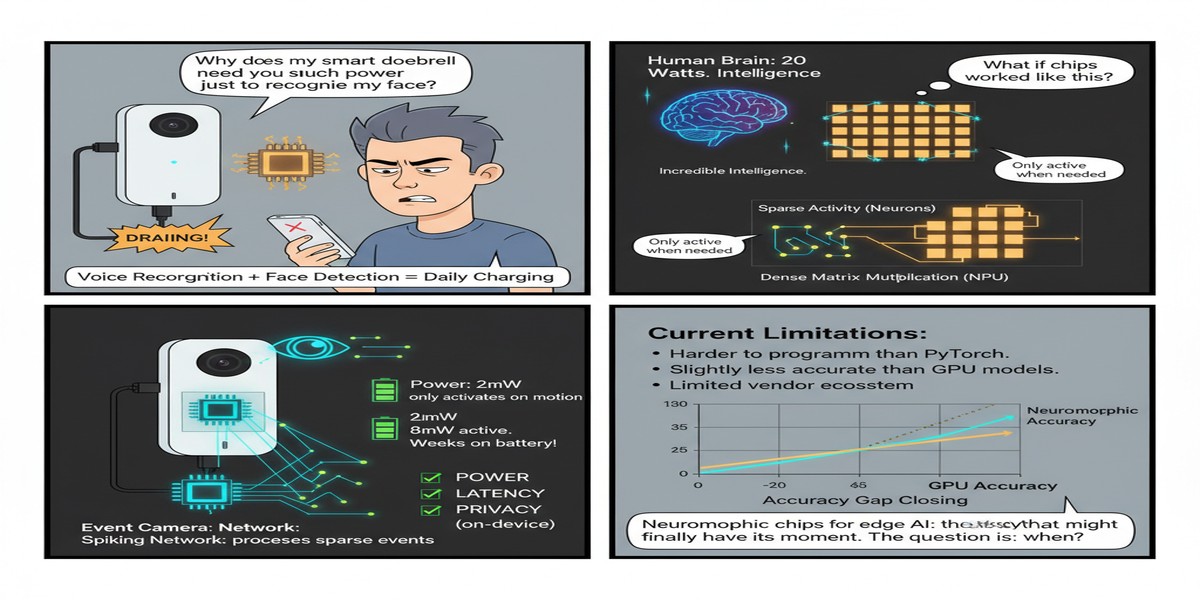

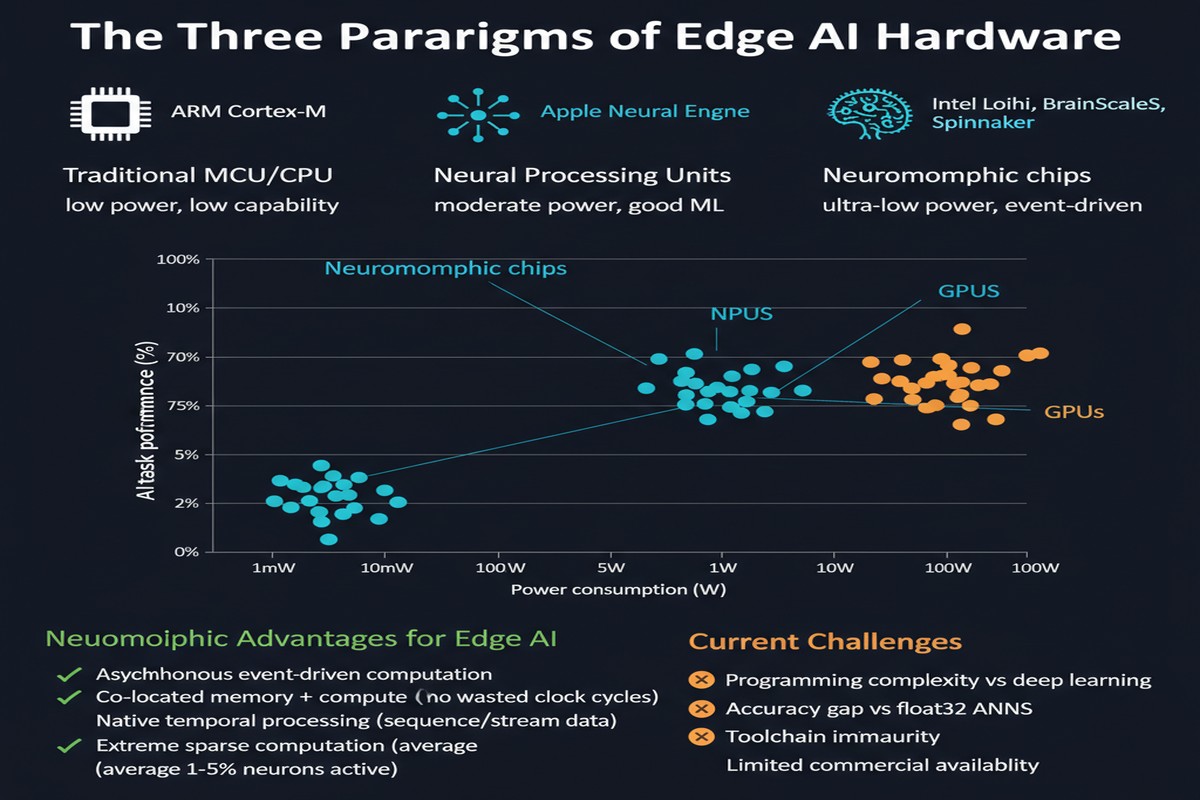

Neuromorphic computing has been the "technology of the future" for about thirty years. The idea is compelling: instead of simulating neurons in software running on von Neumann hardware, build chips that are neural networks — with spiking dynamics, sparse event-driven computation, and energy consumption that scales with activity rather than clock cycles.

The human brain runs on roughly 20 watts. Our best AI systems draw kilowatts for workloads of comparable scale. There's clearly something the brain is doing right, and neuromorphic computing tries to learn from it.

But decade after decade, the promise hasn't translated to production deployment. Neuromorphic chips have been too specialized, too hard to program, and — critically — too divorced from the continuous-valued mathematics of modern deep learning. Spiking neural networks (SNNs), the computational model for neuromorphic hardware, have lagged behind deep neural networks on benchmark accuracy.

Energy-Efficient Neuromorphic Computing for Edge AI: A Framework with Adaptive Spiking Neural Networks and Hardware-Aware Optimization (arXiv:2602.02439) is a February 2026 paper that tries to close this gap. It proposes NeuEdge — a framework that combines adaptive SNNs with hardware-aware optimization for edge deployment. And while I'm not going to claim this paper proves neuromorphic computing has "arrived," it represents meaningful progress on the key challenges.

Why Now? The Edge AI Imperative

The timing of this work is not coincidental. Edge AI inference is undergoing a phase transition in 2025-2026. The demands are clear:

- Ultra-low power: IoT devices, wearables, and embedded sensors run on batteries or energy harvesting — milliwatts to microwatts, not watts

- Real-time inference: Sensor data needs to be processed locally without cloud round-trips — millisecond latency

- Sparse, event-driven data: Many edge sensors (cameras, microphones, LiDAR, touch sensors) produce sparse, temporally structured data — not dense, static tensors

These requirements align almost perfectly with what neuromorphic hardware does well. Spiking neural networks process events (spikes) rather than dense activations, consuming energy only when neurons fire. For sparse input data (a camera where most pixels don't change frame-to-frame, for example), this translates to dramatic energy reduction.
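
To make the "energy only when neurons fire" point concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) layer that counts synaptic operations. It is my own illustration, not code from the paper: the layer sizes, leak factor, threshold, and input rates are all assumptions chosen for readability.

```python
import random

def lif_step(v, active_inputs, weights, leak=0.9, threshold=1.0):
    """Advance a layer of leaky integrate-and-fire neurons by one time step.

    `active_inputs` lists the indices of inputs that spiked. Only those
    weight rows are read, so work scales with activity, not layer size.
    """
    n_out = len(v)
    new_v, spikes_out = [], []
    for j in range(n_out):
        current = sum(weights[i][j] for i in active_inputs)
        vj = leak * v[j] + current            # leaky integration
        fired = vj >= threshold
        spikes_out.append(fired)
        new_v.append(0.0 if fired else vj)    # reset after a spike
    synaptic_ops = len(active_inputs) * n_out
    return new_v, spikes_out, synaptic_ops

def count_ops(input_rate, n_in=128, n_out=32, steps=20, seed=0):
    """Total synaptic operations for random input spiking at `input_rate`."""
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, 0.2) for _ in range(n_out)] for _ in range(n_in)]
    v = [0.0] * n_out
    total = 0
    for _ in range(steps):
        active = [i for i in range(n_in) if rng.random() < input_rate]
        v, _, ops = lif_step(v, active, weights)
        total += ops
    return total

sparse_ops = count_ops(input_rate=0.02)   # assumed: mostly static scene
busy_ops = count_ops(input_rate=0.50)     # assumed: busy scene
dense_ops = 20 * 128 * 32                 # a dense layer touches every weight each step
print(sparse_ops, busy_ops, dense_ops)
```

The operation count tracks input activity, which is the property event-driven hardware turns into energy savings. A dense layer pays the full `dense_ops` cost regardless of how little the input changed.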

The question NeuEdge is trying to answer: can you build a framework that makes SNNs competitive with DNNs on accuracy while exploiting neuromorphic hardware's efficiency advantages?

How NeuEdge Works

NeuEdge has three key components:

Adaptive Spiking Neural Networks

Traditional SNNs use rate coding — a neuron's firing rate represents the value it encodes. This requires many time steps (typically 4-16) to encode a single value, adding latency and partially negating the efficiency advantage.

NeuEdge uses a temporal coding scheme that blends rate and spike-timing patterns. In this hybrid approach:

- High-confidence, high-magnitude values use rate coding (multiple spikes, unambiguous)

- Low-magnitude or uncertain values use spike-timing patterns (single spike at a specific time step, more efficient)

The result: reduced total spike activity while preserving accuracy. Fewer spikes translate directly to less energy on neuromorphic hardware, since computation is event-driven.
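
A toy version of the hybrid scheme shows where the spike savings come from. This is a sketch under my own assumptions, not the paper's encoder: values are normalized to [0, 1], the switch between coding paths is a fixed `magnitude_cutoff` (the paper also describes a confidence criterion, which I don't model here), and the timing path is a simple latency code where larger values spike earlier.

```python
def rate_code(value, steps):
    """Rate code: spike count proportional to value, spread evenly over the window."""
    n_spikes = round(value * steps)
    train = [0] * steps
    for k in range(n_spikes):
        train[(k * steps) // n_spikes] = 1
    return train

def timing_code(value, steps):
    """Latency code: a single spike, earlier for larger values; none for zero."""
    train = [0] * steps
    if value > 0:
        t = min(steps - 1, int((1.0 - value) * steps))
        train[t] = 1
    return train

def hybrid_code(value, steps, magnitude_cutoff=0.5):
    """Hypothetical hybrid: rate code above the cutoff, latency code below it."""
    if value >= magnitude_cutoff:
        return rate_code(value, steps)
    return timing_code(value, steps)

values = [0.05, 0.1, 0.2, 0.3, 0.45, 0.6, 0.9]   # illustrative activations
steps = 8
rate_total = sum(sum(rate_code(v, steps)) for v in values)
hybrid_total = sum(sum(hybrid_code(v, steps)) for v in values)
print(f"rate-only spikes: {rate_total}, hybrid spikes: {hybrid_total}")
```

Mid-range values are where the hybrid wins: a value of 0.45 costs several spikes under rate coding but only one under the latency path. Very small values go the other way, since rate coding rounds them down to zero spikes while the latency path still spends one to keep the information.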

Hardware-Aware Optimization

NeuEdge includes a compilation and optimization layer that profiles the target neuromorphic hardware and adapts the SNN architecture accordingly. This includes:

- Timestep optimization: Finding the minimum number of time steps that achieves target accuracy for a given model and dataset

- Neuron model selection: Choosing between Leaky Integrate-and-Fire (LIF), Adaptive LIF, or other neuron models based on hardware support and efficiency

- Sparsity exploitation: Configuring the network to maximize zero activations, directly reducing compute work on neuromorphic hardware
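
The timestep search is the easiest of these to sketch. The snippet below is illustrative only: `evaluate` stands in for running the SNN on a validation set at a given number of time steps, and `toy_evaluate` is a made-up accuracy curve, not a measurement from the paper.

```python
def min_timesteps(evaluate, target_accuracy, candidates=(1, 2, 4, 8, 16)):
    """Return the smallest candidate T whose accuracy meets the target, else None."""
    for t in sorted(candidates):
        if evaluate(t) >= target_accuracy:
            return t
    return None

def toy_evaluate(t):
    """Hypothetical accuracy curve that saturates near 97% as T grows."""
    return 0.97 * (1.0 - 0.5 ** t)

best_t = min_timesteps(toy_evaluate, target_accuracy=0.95)
unreachable = min_timesteps(toy_evaluate, target_accuracy=0.99)
print(best_t, unreachable)
```

A linear scan is enough when there are only a handful of candidates. Returning `None` for an unreachable target matters in practice, because it signals that the model or coding scheme needs to change rather than the timestep budget.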

Deployment-Time Adaptation

A key innovation in NeuEdge is that the SNN continues to adapt at deployment time, not just during training. The network monitors its own spike patterns and adjusts threshold parameters to maintain accuracy as the input distribution shifts, a neuromorphic analog of test-time adaptation.
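
One plausible form of this feedback is a homeostatic rule that steers each neuron's firing rate back toward a target. The sketch below is my assumption about the general shape of such a rule, not the paper's update: the proportional `gain`, the clamping bounds, and the closed-form `firing_rate` (inputs uniform on [0, input_scale]) are all hypothetical.

```python
def adapt_threshold(threshold, observed_rate, target_rate, gain=0.5, lo=0.05, hi=10.0):
    """Raise the threshold when firing too often, lower it when firing too rarely."""
    threshold += gain * (observed_rate - target_rate)
    return max(lo, min(hi, threshold))

def firing_rate(threshold, input_scale):
    """Fraction of inputs, uniform on [0, input_scale], that reach the threshold."""
    return max(0.0, 1.0 - threshold / input_scale)

target = 0.2
threshold = 0.8                               # tuned for input_scale = 1.0
rate_before = firing_rate(threshold, 2.0)     # input amplitude doubles after deployment
for _ in range(50):                           # one update per monitoring window
    observed = firing_rate(threshold, 2.0)
    threshold = adapt_threshold(threshold, observed, target)
rate_after = firing_rate(threshold, 2.0)
print(f"rate before: {rate_before:.2f}, after: {rate_after:.2f}, threshold: {threshold:.2f}")
```

Holding the firing rate steady is a proxy for holding accuracy steady, not a guarantee of it. On a real device the observed rate would come from spike counters over a window, so it would be noisy and a smaller gain would likely be needed.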

graph TD

subgraph NeuEdge["NeuEdge Framework"]

A["Input Data\n(event/sensor stream)"] --> B["Adaptive SNN\nTemporal Coding"]

B --> C{"Activity\nLevel?"}

C -->|High activity| D["Rate Coding Path\nMultiple spikes"]

C -->|Low activity| E["Timing Coding Path\nSingle spike at Δt"]

D --> F["Neuromorphic\nHardware Execution"]

E --> F

F --> G["Hardware-Aware\nOptimizer"]

G -->|Tune thresholds| B

F --> H["Output\nInference Result"]

end

style B fill:#00d4ff,color:#000

style G fill:#76b900,color:#fff

The Performance Numbers

For edge deployment on tasks like keyword spotting (Google Speech Commands) and gesture recognition, the paper reports:

- Energy reduction: 2-4× lower energy consumption compared to conventional DNN inference on equivalent embedded hardware

- Accuracy: Within 1-2% of comparable DNN models on classification benchmarks

- Latency: Competitive with or faster than DNN inference for event-driven input data
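
A common first-order way to reason about energy figures like these is to count operations. The sketch below is my own estimate, not the paper's methodology. It assumes the widely quoted 45 nm figures of about 4.6 pJ per 32-bit multiply-accumulate and 0.9 pJ per accumulate, and it ignores memory traffic and static power, which often dominate on real hardware.

```python
def snn_vs_dnn_energy_ratio(timesteps, spike_rate, e_mac_pj=4.6, e_ac_pj=0.9):
    """Estimate E_DNN / E_SNN for one layer with the same connectivity.

    DNN: every synapse performs one multiply-accumulate per inference.
    SNN: every synapse performs one accumulate per input spike, over
    `timesteps` steps with an average per-step `spike_rate`.
    """
    dnn_energy_per_synapse = e_mac_pj
    snn_energy_per_synapse = timesteps * spike_rate * e_ac_pj
    return dnn_energy_per_synapse / snn_energy_per_synapse

for timesteps, spike_rate in [(4, 0.3), (8, 0.2), (16, 0.2)]:
    ratio = snn_vs_dnn_energy_ratio(timesteps, spike_rate)
    print(f"T={timesteps:2d}, spike rate={spike_rate:.1f}: about {ratio:.1f}x")
```

Under these assumptions the advantage is the per-operation saving divided by the average number of spikes per synapse, so single-digit ratios are what you would expect unless spike rates or timestep counts drop sharply.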

The 2-4× energy reduction is meaningful but not the orders-of-magnitude advantage that neuromorphic computing has sometimes promised. Understanding why requires understanding the reality of current neuromorphic chips.

The Honest State of Neuromorphic Hardware

Let me be direct about where neuromorphic hardware stands in 2026, because the paper doesn't fully engage with the deployment realities.

Existing neuromorphic platforms:

- Intel Loihi 2: 1 million neurons, 120 million synapses per chip, excellent for sparse event-driven inference. Limited toolchain, not widely deployed.

- IBM NorthPole: Takes a different approach. It is dense and inference-optimized, not strictly neuromorphic but inspired by brain architecture. 12 nm process, 256 cores, remarkable energy efficiency for on-chip DNN inference.

- BrainScaleS (EU Human Brain Project): Analog neuromorphic hardware, inherits all the analog computing challenges (noise, temperature sensitivity, calibration).

The programming problem: None of these chips has a mature, user-friendly ML framework. You can't write your PyTorch model and compile it to Loihi 2. The toolchains are specialized, require hardware expertise, and have poor interoperability with standard ML pipelines. NeuEdge tries to address this with its hardware-aware compilation layer, but the gap between "research framework" and "production toolchain" remains large.

The model accuracy gap: SNNs still underperform DNNs on most standard benchmarks, sometimes by significant margins on complex tasks. The gap has been narrowing — the hybrid temporal coding in NeuEdge is an example of meaningful progress — but it's not closed.

xychart-beta

title "SNN vs DNN Accuracy Gap on Edge Tasks (approximate)"

x-axis ["Keyword Spotting", "Gesture Recognition", "CIFAR-10 Classification", "ImageNet Top-1"]

y-axis "Accuracy (%)" 60 --> 100

line [97.2, 95.8, 88.4, 67.3]

line [96.1, 94.2, 86.1, 63.8]

Note: Top line = DNN baseline, bottom line = best SNN (approximate values)

Why This Matters

Despite the limitations, I want to argue that neuromorphic computing matters more than the ML community currently acknowledges.

The data type alignment argument: Edge AI sensors are increasingly event-based. Dynamic Vision Sensors (DVS cameras) output asynchronous spike events, not frame-based images. IMU sensors produce continuous streams of timestamped samples. For these sensors, neuromorphic hardware is not merely an interesting research direction. It is the natural compute substrate, because it consumes events in the form the sensor already produces them.

The energy physics argument: The brain's 20W power consumption for trillion-synapse compute is not magic — it's the result of sparse, event-driven computation exploiting spike-based encoding. The physics argument for neuromorphic computing's efficiency advantage is sound. The engineering is hard, but the physics is right.

The deployment scale argument: Billions of edge devices are being deployed annually. If each one runs AI inference at even 100 mW, every billion devices draws about 100 MW, and a fleet in the tens of billions reaches gigawatts of aggregate power. A 4× efficiency improvement across that fleet is not marginal: it saves on the order of a large power plant's output.
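
The fleet-scale arithmetic is easy to check directly. In this back-of-envelope script the device counts are my assumptions; only the 100 mW per device and the 4× improvement come from the argument above.

```python
def fleet_power_watts(devices, watts_per_device):
    """Aggregate continuous power draw of a fleet of identical devices."""
    return devices * watts_per_device

EFFICIENCY_GAIN = 4.0      # the upper end of the reported 2-4x range
WATTS_PER_DEVICE = 0.1     # 100 mW of inference power per device

for devices in (1e9, 1e10, 5e10):   # assumed fleet sizes
    baseline = fleet_power_watts(devices, WATTS_PER_DEVICE)
    saved = baseline * (1.0 - 1.0 / EFFICIENCY_GAIN)
    print(f"{devices:.0e} devices: {baseline / 1e6:,.0f} MW baseline, "
          f"{saved / 1e6:,.0f} MW saved at 4x")
```

One billion devices at 100 mW comes to 100 MW, so the aggregate lands in the gigawatt range only once the fleet reaches tens of billions of always-on devices.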

My Take

I've been watching neuromorphic computing for over a decade. Every few years, I see a paper that represents genuine progress followed by a longer period of silence. NeuEdge is genuine progress — the hybrid temporal coding and hardware-aware optimization are meaningful contributions.

But I want to state my honest assessment: neuromorphic computing will not replace GPU-based AI for datacenter inference in the next 5-10 years. The accuracy gap, the toolchain immaturity, and the model-hardware mismatch for large transformer models are too fundamental.

Where neuromorphic will matter, and sooner than most people think, is edge AI at ultra-low power budgets. Hearing aids, AR glasses, medical implants, industrial IoT sensors, and smart grid nodes all operate at milliwatt power budgets where the energy physics of neuromorphic computing becomes decisive.

NeuEdge is targeting exactly this regime. The 2-4× energy savings in the paper are for general embedded hardware — on purpose-built neuromorphic silicon, the advantage would likely be larger. The framework's adaptation to deployment-time distribution shifts is particularly useful for edge scenarios where input statistics change as the device is deployed in new environments.

I'm cautiously optimistic. Not "neuromorphic computing has arrived" optimistic, but "neuromorphic computing has found its right use case and is making steady progress" optimistic. Edge AI is the killer app for brain-inspired chips. NeuEdge is one step toward making that vision deployable.

The brain consumes 20 watts and processes the world in real time. We should take that seriously.

References

- (2026). Energy-Efficient Neuromorphic Computing for Edge AI: A Framework with Adaptive Spiking Neural Networks and Hardware-Aware Optimization. arXiv:2602.02439.

- Intel Research. (2024). Loihi 2: A New Generation of Neuromorphic Processor. Intel Technical Report.