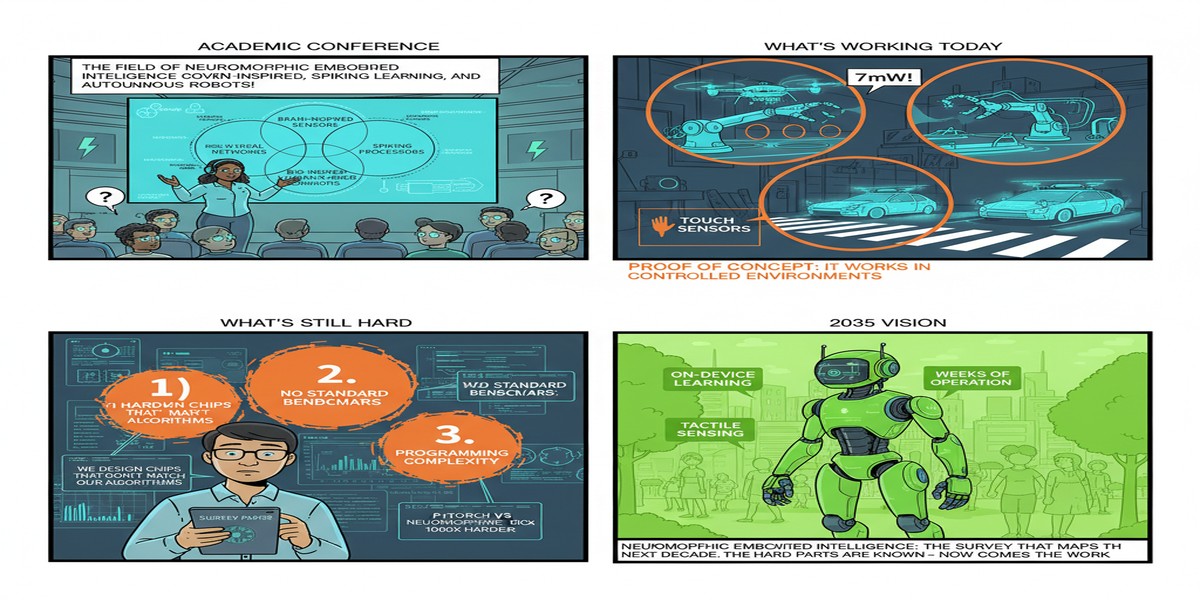

The best survey papers do three things well: they map what has been accomplished, they identify where the field is confused or overconfident, and they articulate what specifically needs to happen next. Most survey papers in fast-moving fields do the first adequately and the other two poorly. A July 2025 survey posted to arXiv (2507.18139) on neuromorphic computing for embodied intelligence in autonomous systems is an exception. It is technically rigorous, admirably honest about current limitations, and offers a research roadmap specific enough to be actionable.

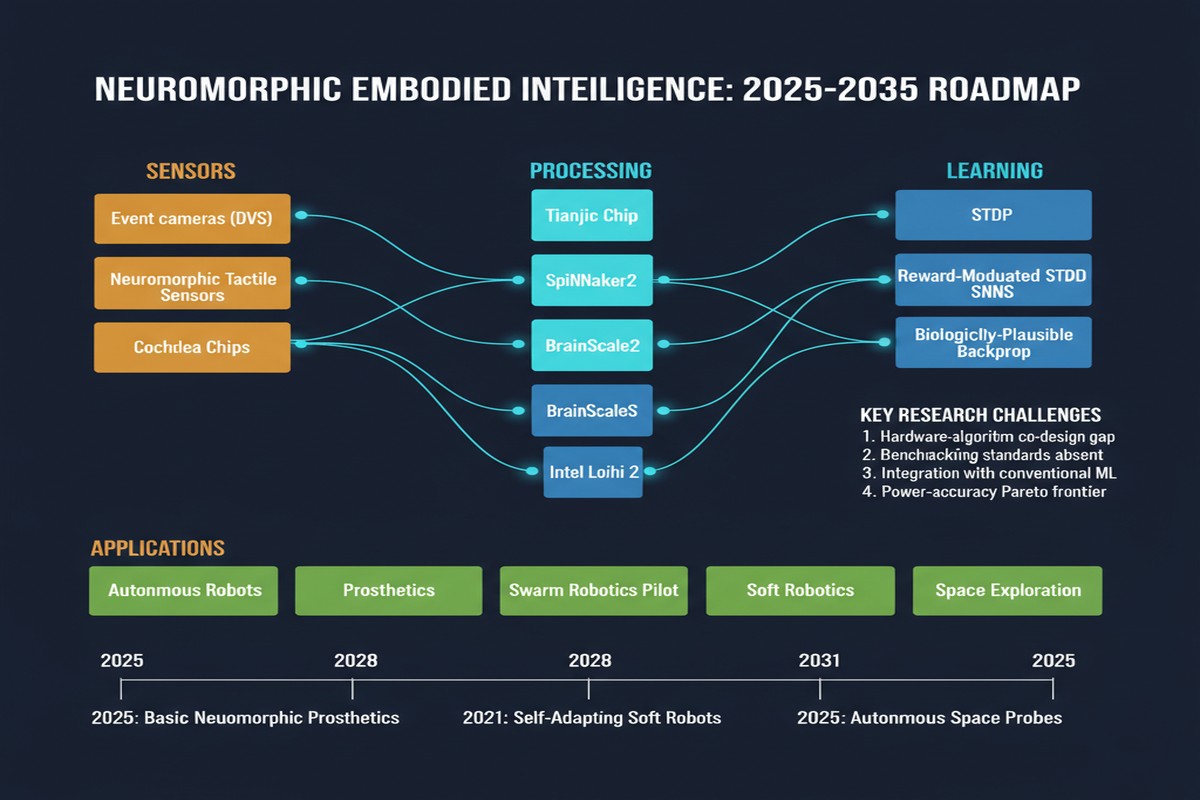

The survey covers the intersection of neuromorphic hardware and embodied AI — systems that must perceive, decide, and act in physical environments. The target applications are robotics (manipulation, navigation), unmanned aerial vehicles (quadrotors, fixed-wing, VTOL), and self-driving vehicles. These three application domains share a common challenge: they need perception and control that is fast (response times in milliseconds), energy-efficient (battery-constrained deployment), robust (real-world sensor noise and environmental variation), and adaptive (operating environments change).

This is exactly the set of requirements that neuromorphic computing was designed for. The survey's central contribution is a systematic assessment of how well current neuromorphic hardware and algorithms actually deliver on these promises — and the answer is: partially, with significant gaps that the field needs to address honestly.

The Embodied Intelligence Requirements

Before mapping what neuromorphic computing offers, the survey establishes what embodied autonomous systems actually need:

Perception: Real-time object detection, semantic segmentation, depth estimation, optical flow, and event-based sensing from dynamic vision sensors (DVS cameras that output spike streams rather than frames). Latency requirements are 1-50ms depending on system speed.

State estimation: Sensor fusion from IMU, GPS, LIDAR, camera, and proprioceptive sensors. Kalman filtering and similar algorithms run at 100-1000Hz on constrained hardware.

Decision making: Path planning, obstacle avoidance, mission planning. These operate at lower frequencies (1-100Hz) but require significant compute for complex scenarios.

Low-level control: Actuator control at 100-1000Hz. Latency requirements are extremely tight — control loop delays above a few milliseconds degrade stability for fast systems.

```mermaid
graph TD
    subgraph "Embodied AI Compute Stack"
        A[Sensors\nDVS / IMU / LIDAR / Camera] --> B[Perception\n1-50ms latency]
        B --> C[State Estimation\n1-10ms latency]
        C --> D[Decision Making\n10-100ms latency]
        D --> E[Low-Level Control\n0.5-10ms latency]
        E --> F[Actuators\nMotors / Servos]
    end
    subgraph "Neuromorphic Hardware Fit"
        G[Excellent: DVS processing, IMU integration] --> A
        H[Good: State estimation, fast control] --> C
        I[Developing: Complex planning] --> D
        J[Excellent: Fast reactive control] --> E
    end
```
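To make the latency ranges in the compute-stack diagram concrete, here is a minimal Python sketch that sums them into an end-to-end budget. It assumes, purely for illustration, that the stages run strictly in series. Real systems run them at different rates and in parallel, so treat the totals as rough bounds. The `reactive_path` helper is a hypothetical name for a reflex loop that skips the planner; neither it nor the serial assumption comes from the survey.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    min_ms: float
    max_ms: float


# Latency ranges copied from the compute-stack diagram.
STACK = [
    Stage("perception", 1.0, 50.0),
    Stage("state_estimation", 1.0, 10.0),
    Stage("decision_making", 10.0, 100.0),
    Stage("low_level_control", 0.5, 10.0),
]


def end_to_end_ms(stages):
    """Best- and worst-case latency if every stage runs in sequence (an assumption)."""
    return sum(s.min_ms for s in stages), sum(s.max_ms for s in stages)


def reactive_path(stages):
    """Hypothetical reflex path: the same stack with the planner bypassed."""
    return [s for s in stages if s.name != "decision_making"]


if __name__ == "__main__":
    print("full stack (min, max) ms:", end_to_end_ms(STACK))
    print("reactive path (min, max) ms:", end_to_end_ms(reactive_path(STACK)))
```

Under these assumptions the planner dominates the worst case, which is consistent with the point that neuromorphic hardware fits best at the reactive, perception-to-action layers.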

What Neuromorphic Hardware Currently Delivers

The survey provides a systematic tabulation of current neuromorphic hardware capabilities against embodied intelligence requirements. Key findings:

Event-based sensing is mature. Dynamic vision sensors (DVS cameras) produce spike streams that are natively compatible with spiking neural network processing. The latency advantage is real: a DVS camera responding to a moving object fires within 10-100 microseconds; a standard camera waits for the next frame (typically 10-33ms at 30-100fps). For fast-moving systems (drones, racing robots, fast industrial manipulators), this latency difference is significant.
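The arithmetic behind that latency comparison is easy to check. The sketch below converts a frame rate into the wait for the next frame and then into distance travelled during that wait. The 10 m/s platform speed is my own illustrative assumption, not a figure from the survey, and the DVS row uses the upper end of the 10-100 microsecond range quoted above.

```python
def frame_period_ms(fps: float) -> float:
    """Worst-case wait for the next frame of a frame-based camera, in ms."""
    return 1000.0 / fps


def travel_mm(speed_m_s: float, latency_ms: float) -> float:
    """Distance covered at constant speed during the latency, in mm.

    (m/s) * (ms) = 1e-3 m = mm, so no extra scaling factor is needed.
    """
    return speed_m_s * latency_ms


SPEED_M_S = 10.0  # assumed platform speed (illustrative only)

for label, latency_ms in [
    ("frame camera @ 30 fps", frame_period_ms(30)),
    ("frame camera @ 100 fps", frame_period_ms(100)),
    ("DVS, 100 us event latency", 0.1),
]:
    distance = travel_mm(SPEED_M_S, latency_ms)
    print(f"{label}: {latency_ms:.2f} ms -> {distance:.0f} mm travelled")
```

At the assumed speed, a 30 fps camera leaves the vehicle blind for roughly a third of a metre between frames, while the event latency corresponds to about a millimetre.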

Neuromorphic state estimation is demonstrated. The drone autopilot paper covered in this series is one example; several robotics navigation papers have demonstrated neuromorphic IMU fusion and SLAM. Reported power efficiency is 10-100x better than CPU-based alternatives at equivalent accuracy.

Neuromorphic planning is immature. Complex path planning and mission planning require the kind of sequential, symbolic reasoning that spiking networks do not implement naturally. The survey is honest that this is a structural gap rather than a temporary limitation. The paper explicitly states that higher-level cognitive functions (long-horizon planning, abstract reasoning, language understanding) remain the domain of conventional computing. Neuromorphic integration is most compelling at the reactive, perception-to-action layers.

Cross-layer optimization is underdeveloped. Hardware designers optimize neuromorphic chips for spike efficiency without knowing what the software algorithms need. Software researchers develop SNN algorithms without knowing how to map them efficiently to existing hardware. The survey identifies this disconnect as a major bottleneck and calls for hardware-algorithm co-design.

```mermaid
graph LR
    subgraph "Neuromorphic Maturity Scale"
        A[DVS Processing\nMature, demonstrated] --> B[High]
        C[State Estimation\nDemonstrated, improving] --> D[Medium-High]
        E[Reactive Control\nDemonstrated in robots/drones] --> F[Medium]
        G[Perception\nActive research, progressing] --> H[Medium-Low]
        I[Complex Planning\nImmature, fundamental gaps] --> J[Low]
    end
```

The Cross-Layer Optimization Gap

The survey's most actionable contribution is its detailed analysis of the cross-layer optimization gap. Neuromorphic computing offers three distinct optimization opportunities:

- Algorithm level — designing SNN architectures that naturally exploit sparse, event-driven computation

- Hardware level — designing neuromorphic chips that efficiently implement the chosen SNN algorithms

- System level — co-designing the hardware-algorithm stack for the target application's specific latency, power, and accuracy requirements

Current research predominantly optimizes one level at a time. Algorithm papers propose new SNN architectures and evaluate them in simulation. Hardware papers design new neuromorphic chips and evaluate them on synthetic benchmarks. System papers run existing algorithms on existing hardware and measure the resulting performance.

The result: algorithms designed for simulation do not map efficiently to available hardware. Hardware designed for general neuromorphic workloads does not meet the specific requirements of autonomous systems applications. And system integrators are left bridging the gap with software hacks that undo the efficiency advantages.

The survey calls for a different research culture: start from the application requirements, derive the algorithm structure those requirements demand, and co-design the hardware to efficiently implement those algorithms. This is how NVIDIA tuned its GPUs and CUDA libraries for transformer workloads, how Apple designed the Neural Engine for mobile vision, and how Google designed TPUs for TensorFlow computation patterns. Neuromorphic computing has not yet had its application-driven co-design moment.

The Adaptation Gap

A second gap the survey identifies is adaptation — the ability to update synaptic weights during deployment as the environment changes.

Conventional deep learning separates training (expensive, offline) from inference (cheap, online). Neuromorphic computing's biological inspiration promises online learning — updating during deployment using STDP and related local learning rules. In practice, deployed neuromorphic systems do not update weights online. They run inference from fixed trained weights, exactly like conventional neural network deployment.

The gap exists because:

- Online learning algorithms for SNNs (STDP, SADP, local BPTT variants) are not yet mature enough for reliable real-world performance

- Neuromorphic hardware does not implement online learning pathways efficiently

- The interaction between online learning and system stability in autonomous systems is poorly understood

The survey calls out this gap explicitly: neuromorphic adaptation in deployed autonomous systems remains a research problem, not an engineering solution. I appreciate this honesty. Too many neuromorphic computing papers claim biological plausibility as an advantage without confronting the fact that they don't actually use the biological learning mechanisms that motivated the hardware design.
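For readers who have not seen a local learning rule written down, here is a minimal sketch of the textbook pair-based STDP update: a synapse is strengthened when the presynaptic spike precedes the postsynaptic spike and weakened when the order is reversed, with an exponential dependence on the timing difference. The amplitudes, time constants, and weight bounds are illustrative assumptions of mine, not values from the survey or from any particular chip, and this is not the method of any paper discussed here.

```python
import math


def stdp_dw(t_pre_ms, t_post_ms,
            a_plus=0.01, a_minus=0.012,
            tau_plus_ms=20.0, tau_minus_ms=20.0):
    """Pair-based STDP weight change for one pre/post spike pair.

    All parameter defaults are illustrative assumptions.
    """
    dt = t_post_ms - t_pre_ms
    if dt > 0:
        # Pre fired before post: potentiation, decaying with the gap.
        return a_plus * math.exp(-dt / tau_plus_ms)
    if dt < 0:
        # Post fired before pre: depression, decaying with the gap.
        return -a_minus * math.exp(dt / tau_minus_ms)
    return 0.0


def apply_stdp(w, t_pre_ms, t_post_ms, w_min=0.0, w_max=1.0):
    """Apply one update and clip the weight to [w_min, w_max]."""
    return min(w_max, max(w_min, w + stdp_dw(t_pre_ms, t_post_ms)))


if __name__ == "__main__":
    w = 0.5
    w = apply_stdp(w, t_pre_ms=10.0, t_post_ms=15.0)  # causal pair: w increases
    w = apply_stdp(w, t_pre_ms=30.0, t_post_ms=22.0)  # anti-causal pair: w decreases
    print(f"weight after two spike pairs: {w:.4f}")
```

The rule itself is a few lines. The open questions listed above are about whether updates like this stay reliable and stable when they run unsupervised on a flying or driving system.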

Why This Matters

The embodied intelligence application domain is where neuromorphic computing's advantages are most compelling and where its current limitations are most visible. This survey is valuable precisely because it refuses to paper over the limitations.

The autonomous systems market is large and growing: drones, delivery robots, autonomous vehicles, industrial automation, surgical robots. All of these applications have strict power budgets, real-time requirements, and deployment environments that change over time. Neuromorphic computing is the most plausible long-term hardware architecture for these systems. The question is whether the field can solve the remaining algorithmic and hardware challenges before conventional deep learning on GPU-based accelerators (NVIDIA's approach) or custom edge AI chips (Apple, Qualcomm, Google) captures the market.

The timeline matters. Neuromorphic hardware is currently 5-10 years behind conventional AI accelerators in software ecosystem maturity, hardware availability, and deployment tooling. The survey's roadmap suggests this gap can be closed, but only if the research community stops optimizing algorithms and hardware in isolation and starts co-designing for applications.

My Take

I read this survey as both a technical document and a diagnostic for the field's culture. The technical content is excellent — the most thorough systematic treatment of neuromorphic embodied intelligence I have encountered. The diagnostic is sobering.

The neuromorphic computing community has a maturity problem: the gap between algorithm demonstrations and deployed systems is larger than it should be at this stage of the technology's development. The DVS camera community built real applications. The Loihi chip community has demonstrated real power savings. But the integration — complete autonomous systems where neuromorphic hardware delivers clear advantages over conventional alternatives across the full stack — remains elusive.

The survey's call for cross-layer co-design is the right prescription. The embodied intelligence application domain is specific enough to drive concrete co-design decisions: you know the sensor types (DVS, IMU, LIDAR), the latency requirements (1-10ms for control), the power budgets (1-5W for a drone), and the accuracy requirements (95%+ for obstacle avoidance). Design the hardware and algorithm together for this operating point, and you will produce something that conventional approaches cannot match.
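One way to read "design for this operating point" is as a set of hard constraints that every candidate hardware-algorithm stack is checked against. The sketch below encodes the drone figures from the paragraph above (10 ms control latency, 5 W, 95% accuracy) and reports which constraints a candidate violates. The candidate stacks and their numbers are made-up placeholders chosen only to exercise each constraint; they are not measurements of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    max_latency_ms: float
    max_power_w: float
    min_accuracy: float


@dataclass(frozen=True)
class Candidate:
    name: str
    latency_ms: float
    power_w: float
    accuracy: float


def violations(c: Candidate, op: OperatingPoint) -> list:
    """Return the names of the constraints this candidate fails."""
    failed = []
    if c.latency_ms > op.max_latency_ms:
        failed.append("latency")
    if c.power_w > op.max_power_w:
        failed.append("power")
    if c.accuracy < op.min_accuracy:
        failed.append("accuracy")
    return failed


# Upper ends of the drone ranges quoted in the text.
DRONE = OperatingPoint(max_latency_ms=10.0, max_power_w=5.0, min_accuracy=0.95)

# Placeholder candidates with invented numbers (NOT real measurements).
CANDIDATES = [
    Candidate("stack_a", latency_ms=4.0, power_w=1.5, accuracy=0.93),
    Candidate("stack_b", latency_ms=8.0, power_w=12.0, accuracy=0.97),
    Candidate("stack_c", latency_ms=3.0, power_w=2.0, accuracy=0.96),
]

if __name__ == "__main__":
    for c in CANDIDATES:
        failed = violations(c, DRONE)
        print(c.name, "OK" if not failed else "fails: " + ", ".join(failed))
```

The point of writing the constraints down first is that both the algorithm designer and the hardware designer are then optimizing against the same target, which is the co-design discipline the survey argues for.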

What I want to see in the next generation of neuromorphic embodied intelligence research: a complete autonomous system — drone or mobile robot — where every layer from sensor to actuator is neuromorphic, the system learns and adapts during deployment using online STDP-based learning, and the power consumption is measured end-to-end rather than just for the inference network. That result does not yet exist. The Delft drone autopilot paper covered in this series is the closest thing we have. More papers in that spirit — real hardware, real measurements, honest comparisons — will move the field faster than any number of algorithm papers evaluated in simulation.

Neuromorphic Computing for Embodied Intelligence in Autonomous Systems: Current Trends, Challenges, and Future Directions — arXiv:2507.18139, July 2025.