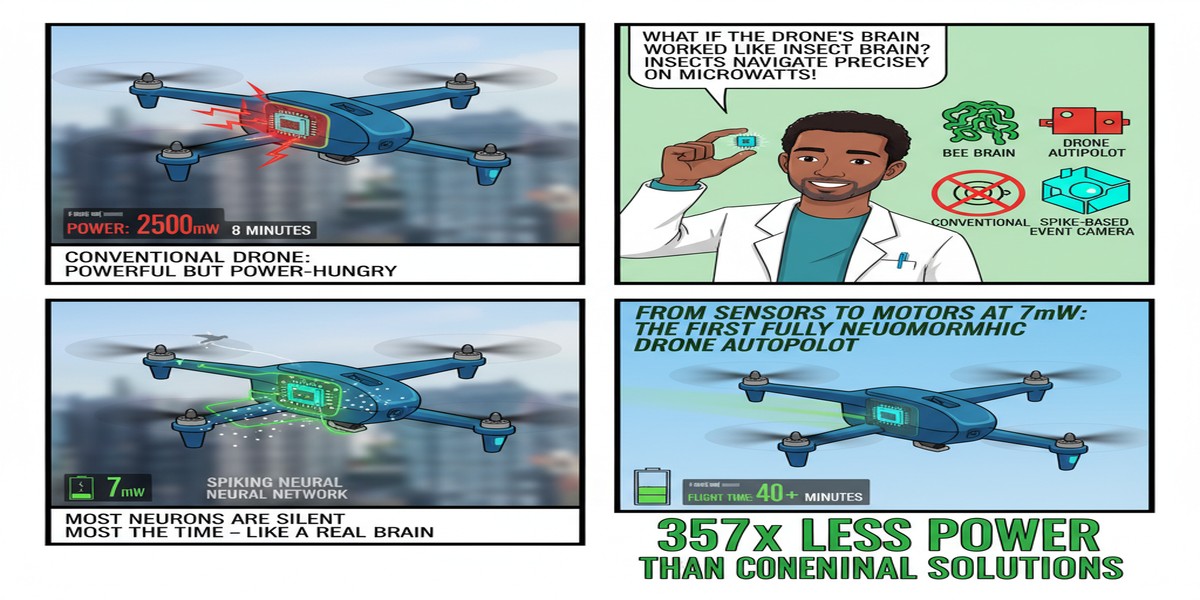

Neuromorphic computing has accumulated an impressive list of theoretical advantages: energy efficiency, event-driven processing, temporal information encoding, and hardware compatibility with spike-timing-dependent plasticity. What it has not accumulated, until recently, is a convincing list of deployed systems that demonstrate these advantages in real-world control applications.

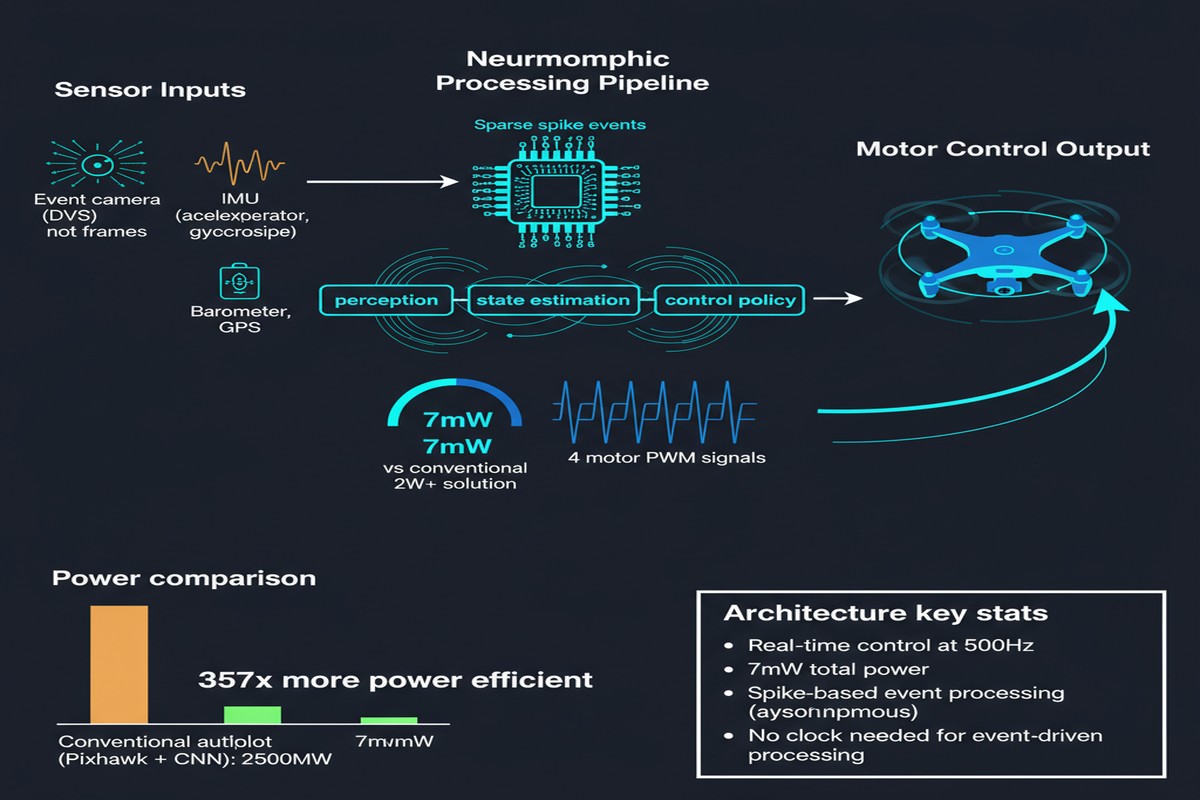

A paper from Delft University of Technology (arXiv:2411.13945, November 2024, with an IEEE publication in March 2025) changes that. The researchers present the first fully neuromorphic end-to-end control system for a quadrotor drone: a spiking neural network that maps raw sensor input directly to motor commands, running on the tiny Crazyflie platform.

The performance numbers are striking: the neuromorphic autopilot tracks attitude commands with an average error of 3.0 degrees at 500Hz, consuming only 7mW for network inference. For comparison, the same task on an NVIDIA Jetson Nano requires 1-2W and runs at only 14-25Hz. That is roughly a 100-300x reduction in power consumption and a 20-35x increase in update frequency.
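The ratios follow directly from the quoted figures. A quick check, where the constants are simply the numbers above, gives about 143-286x for power and 20-36x for update rate, which the text rounds to 100-300x and 20-35x:

```python
# Headline figures as quoted: 7 mW SNN vs. 1-2 W Jetson Nano; 500 Hz vs. 14-25 Hz.
SNN_POWER_W = 0.007
JETSON_POWER_W = (1.0, 2.0)
SNN_RATE_HZ = 500
JETSON_RATE_HZ = (14, 25)

# How many times more power the Jetson draws, and how many times faster the SNN updates.
power_ratios = [p / SNN_POWER_W for p in JETSON_POWER_W]
rate_ratios = [SNN_RATE_HZ / r for r in JETSON_RATE_HZ]

print(f"power reduction: {min(power_ratios):.0f}x to {max(power_ratios):.0f}x")
print(f"update-rate gain: {min(rate_ratios):.0f}x to {max(rate_ratios):.0f}x")
```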

This is not a research toy. The Crazyflie is a standard autonomous drone platform used in research labs worldwide. An autopilot system that works on a real drone with real sensor noise, real aerodynamic disturbances, and real mechanical constraints is a meaningful result.

The Control Stack

A drone autopilot needs to solve two coupled problems:

Attitude estimation: determining the current orientation (roll, pitch, yaw) from raw IMU sensor readings (accelerometers, gyroscopes). This is a state estimation problem with noisy inputs that must be solved in real time.

Attitude control: computing motor commands that move the drone from its current orientation to the commanded target orientation. This is a control problem that requires a model of the drone's aerodynamic response and compensation for disturbances.

Traditional autopilots separate these into two modules: an estimator (typically a Kalman filter) and a controller (typically a PID controller). The Delft paper replaces both with a single spiking neural network that maps raw sensor readings to motor commands end to end.

```mermaid
flowchart TD
    subgraph trad["Traditional Autopilot"]
        A["IMU: Accelerometer<br/>Gyroscope"] --> B["Kalman Filter<br/>Attitude Estimation"]
        B --> C["PID Controller"]
        D["Target Attitude"] --> C
        C --> E["Motor Commands"]
    end
    subgraph snn["Neuromorphic Autopilot (SNN)"]
        F["IMU Spikes<br/>Rate-coded sensor inputs"] --> G["Modular SNN<br/>Estimation Sub-network"]
        G --> H["SNN<br/>Control Sub-network"]
        I["Target Attitude Spikes"] --> H
        H --> J["Motor Command Spikes<br/>→ PWM conversion"]
    end
    style B fill:#aaa,stroke:#666
    style C fill:#aaa,stroke:#666
    style G fill:#aaf,stroke:#00a
    style H fill:#aaf,stroke:#00a
```
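For comparison, the conventional split can be sketched for a single axis: a scalar Kalman filter that integrates the gyro rate and corrects its drift with the accelerometer tilt, feeding a PID controller. This is a minimal sketch under hypothetical assumptions (a unit-inertia rigid body, made-up noise levels, gains and disturbance torque, and a 500 Hz loop); it is not the Crazyflie firmware or the baseline used in the paper.

```python
import random

DT = 0.002  # 2 ms step, i.e. a 500 Hz control loop (assumed)


class AngleKalman:
    """Scalar Kalman filter for one tilt angle: the gyro drives the prediction,
    the accelerometer-derived tilt corrects the drift."""

    def __init__(self, q: float, r: float):
        self.angle = 0.0  # estimated angle [rad]
        self.p = 1.0      # variance of the estimate
        self.q = q        # process-noise variance added per step
        self.r = r        # variance of the accelerometer-derived angle

    def step(self, gyro_rate: float, accel_angle: float) -> float:
        self.angle += gyro_rate * DT                  # predict by integrating the rate
        self.p += self.q
        k = self.p / (self.p + self.r)                # Kalman gain
        self.angle += k * (accel_angle - self.angle)  # correct with the measurement
        self.p *= 1.0 - k
        return self.angle


class PID:
    """PID on angle error; the D term uses the measured rate instead of
    differentiating a noisy estimate."""

    def __init__(self, kp: float, ki: float, kd: float):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0

    def step(self, target: float, estimate: float, rate: float) -> float:
        error = target - estimate
        self.integral += error * DT
        return self.kp * error + self.ki * self.integral - self.kd * rate


def simulate(target: float = 0.2, seconds: float = 4.0, seed: int = 0):
    rng = random.Random(seed)
    theta, omega = 0.0, 0.0   # true angle [rad] and rate [rad/s]
    disturbance = 0.5         # hypothetical constant torque, e.g. an off-center payload
    kf = AngleKalman(q=1e-6, r=0.02 ** 2)
    pid = PID(kp=30.0, ki=40.0, kd=10.0)  # hypothetical gains
    estimate = 0.0
    for _ in range(round(seconds / DT)):
        gyro = omega + rng.gauss(0.0, 0.01)         # noisy gyro reading
        accel_angle = theta + rng.gauss(0.0, 0.02)  # noisy tilt from the gravity vector
        estimate = kf.step(gyro, accel_angle)
        torque = pid.step(target, estimate, gyro)
        omega += (torque + disturbance) * DT        # unit inertia, semi-implicit Euler
        theta += omega * DT
    return theta, estimate


theta, estimate = simulate()
print(f"true {theta:.3f} rad, estimated {estimate:.3f} rad, target 0.200 rad")
```

The integral term is what removes the steady offset (about `disturbance / kp`) that the constant disturbance would leave with a PD law alone.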

The SNN is modular: separately trained estimation and control sub-networks are merged for deployment. This modular training approach is important because it allows each sub-network to be trained with appropriate supervision signals — the estimation network is trained to produce accurate attitude estimates, and the control network is trained to command appropriate motor responses. End-to-end joint training is difficult because the gradient signal from motor commands is noisy when the estimation is poor early in training; the modular approach sidesteps this.

Training uses imitation learning: a dataset of sensor-motor pairs is collected from the standard flight controller operating the drone, and the SNN learns to replicate the expert's behavior. This is similar to behavioral cloning in robotics — learning to copy the expert's policy rather than training from reward.
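Behavioral cloning itself reduces to supervised regression on logged state-action pairs. The sketch below uses a non-spiking linear policy fitted by stochastic gradient descent in place of an SNN, and a hypothetical PD law (gains 30 and 10) standing in for the expert flight controller. In the modular scheme it loosely corresponds to training the control sub-network; the estimation sub-network would be fitted the same way against logged attitude estimates.

```python
import random


def expert_controller(target: float, angle: float, rate: float,
                      kp: float = 30.0, kd: float = 10.0) -> float:
    """Hypothetical expert: a PD attitude law standing in for the stock flight stack."""
    return kp * (target - angle) - kd * rate


def collect_dataset(n: int, seed: int = 0) -> list[tuple[list[float], float]]:
    """Log n (state, expert action) pairs, with state = [target, angle, rate]."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = [rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5), rng.uniform(-2.0, 2.0)]
        data.append((x, expert_controller(*x)))
    return data


def behavior_clone(data, lr: float = 0.01, epochs: int = 50) -> list[float]:
    """Fit a linear policy u = w . x to the expert's actions by SGD on squared error."""
    w = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, u_expert in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - u_expert
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]  # gradient step
    return w


w = behavior_clone(collect_dataset(500))
print([round(wi, 2) for wi in w])  # -> [30.0, -30.0, -10.0]
```

Because the expert here is itself linear and noise-free, the clone recovers its gains exactly; a real flight log adds sensor noise and nonlinear dynamics, which is where the residual tracking gap comes from.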

Spike Encoding of Sensor Data and Motor Commands

A key technical challenge is converting continuous IMU sensor readings and motor commands into spike trains — the communication format of spiking networks.

For sensor inputs, the paper uses rate coding: each sensor value is mapped to a spike frequency. Higher accelerometer readings → higher spike rate from the corresponding input neurons. This is not the most information-theoretically efficient encoding, but it's simple, robust, and works well in practice.
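A minimal rate encoder can be sketched as follows. It is deterministic rather than Poisson, uses a hypothetical on/off neuron pair for signed values, assumes a 500 Hz simulation step, and borrows the illustrative gains from this section's diagram (10 Hz per m/s² for the accelerometer, 100 Hz per rad/s for the gyroscope); none of these choices is taken from the paper.

```python
def rate_encode(value: float, gain_hz: float, dt: float = 0.002,
                steps: int = 500) -> tuple[list[int], list[int]]:
    """Rate-code one sensor value into (on, off) spike trains.

    The firing rate is gain_hz * |value|; positive values spike on the `on`
    neuron and negative values on the `off` neuron (hypothetical scheme).
    """
    rate_hz = gain_hz * abs(value)
    if rate_hz * dt > 1.0:
        raise ValueError("rate exceeds one spike per time step")
    acc = 0.5  # start half-way so the spike count rounds instead of truncating
    train = []
    for _ in range(steps):
        acc += rate_hz * dt  # expected number of spikes accumulated this step
        if acc >= 1.0:
            train.append(1)
            acc -= 1.0
        else:
            train.append(0)
    silent = [0] * steps
    return (train, silent) if value >= 0 else (silent, train)


# One second (500 steps at 500 Hz) of input for the diagram's example values.
acc_on, _ = rate_encode(9.8, gain_hz=10.0)
_, gyro_off = rate_encode(-0.1, gain_hz=100.0)
print(sum(acc_on), sum(gyro_off))  # -> 98 10
```

A Poisson encoder would draw each spike with probability `rate_hz * dt` instead; the deterministic version keeps the spike count exact, at the cost of perfectly regular timing.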

For motor outputs, the spike pattern from the output neurons is decoded into a PWM duty cycle, the continuous signal that drives the motors. The decoding uses leaky integration: output spikes accumulate in a trace that decays over a short time window, and the trace is converted to a PWM value. This acts as a natural low-pass filter that keeps high-frequency noise in the spike output from causing motor chatter.
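The decoding step can be sketched as an exponentially decaying trace of a motor neuron's spikes, mapped linearly onto a pulse-width range. The 10 ms time constant and the 1200-1800 μs range are borrowed from this section's diagram; the linear mapping and the trace normalization are assumptions for illustration.

```python
import math


def decode_pwm(spikes: list[int], dt: float = 0.002, tau: float = 0.010,
               pwm_min_us: float = 1200.0, pwm_max_us: float = 1800.0) -> list[float]:
    """Leaky-integrate a motor neuron's spike train and map it to PWM pulse widths.

    The trace is an exponential moving average of the per-step spike indicator,
    so it estimates the fraction of time steps that contain a spike (0..1).
    """
    decay = math.exp(-dt / tau)  # fraction of the trace that survives one step
    trace = 0.0
    pulses = []
    for s in spikes:
        trace = decay * trace + (1.0 - decay) * s
        pulses.append(pwm_min_us + (pwm_max_us - pwm_min_us) * trace)
    return pulses


# A neuron firing on every other 2 ms step (250 Hz) should settle near mid-range.
pwm = decode_pwm([1, 0] * 250)
steady = pwm[-100:]
print(f"mean {sum(steady) / len(steady):.1f} us, ripple {max(steady) - min(steady):.1f} us")
```

Lengthening `tau` shrinks the ripple at the cost of more lag, which is the low-pass trade-off described above.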

```mermaid
graph LR
    subgraph enc["Sensor Encoding (Rate Coding)"]
        A["Accelerometer: 9.8 m/s²"] --> B["Spike Rate: 98 Hz"]
        C["Gyroscope: 0.1 rad/s"] --> D["Spike Rate: 10 Hz"]
    end
    subgraph proc["SNN Processing (500 Hz)"]
        E["Input Layer<br/>6 neurons per IMU axis"] --> F["Hidden Layers<br/>LIF neurons"]
        F --> G["Output Layer<br/>4 motor neurons"]
    end
    subgraph dec["Motor Decoding (Leaky Integration)"]
        H["Output Spike Train"] --> I["Leaky Integrator<br/>10ms window"]
        I --> J["PWM Signal: 1200-1800μs"]
        J --> K["Motor ESC"]
    end
```
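The hidden layers use leaky integrate-and-fire (LIF) neurons. One way to simulate such a layer in software at 500 Hz is sketched below; the 20 ms time constant, unit threshold, hard reset, and weights are hypothetical choices, not the paper's parameters.

```python
import math


class LIFLayer:
    """A layer of leaky integrate-and-fire neurons stepped at a fixed dt (sketch)."""

    def __init__(self, weights: list[list[float]], tau: float = 0.020,
                 threshold: float = 1.0, dt: float = 0.002):
        self.weights = weights            # weights[i][j]: input j -> neuron i
        self.decay = math.exp(-dt / tau)  # fraction of membrane potential kept per step
        self.threshold = threshold
        self.v = [0.0] * len(weights)     # membrane potentials

    def step(self, in_spikes: list[int]) -> list[int]:
        out = []
        for i, row in enumerate(self.weights):
            current = sum(w * s for w, s in zip(row, in_spikes))
            self.v[i] = self.decay * self.v[i] + current  # leak, then integrate
            if self.v[i] >= self.threshold:
                out.append(1)
                self.v[i] = 0.0                           # hard reset after a spike
            else:
                out.append(0)
        return out


# Two neurons driven by one input that spikes on every step for 1 s (500 steps).
layer = LIFLayer([[0.3], [0.05]])
counts = [0, 0]
for _ in range(500):
    out = layer.step([1])
    counts = [c + o for c, o in zip(counts, out)]
print(counts)  # -> [125, 0]
```

The first neuron crosses threshold every fourth step (125 Hz). The second leaks charge as fast as it receives it and settles at about 0.53, below threshold, so it never fires; this thresholding is what makes the network's activity sparse.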

The network runs on a microcontroller (STM32) as a software SNN simulation rather than on a dedicated neuromorphic chip. This is an important detail: the paper demonstrates the algorithm without requiring specialized neuromorphic hardware, and the 7mW power figure reflects the software simulation on the microcontroller. On dedicated neuromorphic hardware (Loihi 2, BrainChip Akida), power consumption would likely be substantially lower.

Results on Real Hardware

The critical metric is real-flight performance, and the paper delivers this:

- Average attitude tracking error: 3.0 degrees vs. 2.7 degrees for the standard PID-Kalman flight stack. The neuromorphic system's average error is about 11% higher, which is acceptable for many applications.

- Update frequency: 500Hz vs. 14-25Hz for an NVIDIA Jetson Nano implementation. This is a 20-35x increase in control-loop frequency, which improves the controller's ability to react to fast disturbances.

- Power: 7mW for SNN inference vs. 1-2W for Jetson Nano. Even accounting for the Jetson's much higher computational capability, the 100-300x power difference is enormous for a battery-powered device.
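Two derived quantities make this comparison concrete: the relative increase in tracking error, and the energy spent per control update (power divided by update rate). The sketch assumes the quoted power figures hold at the quoted update rates, pairing 1 W with 25 Hz as the Jetson's best case and 2 W with 14 Hz as its worst.

```python
SNN_ERR_DEG, BASELINE_ERR_DEG = 3.0, 2.7
rel_increase = (SNN_ERR_DEG - BASELINE_ERR_DEG) / BASELINE_ERR_DEG

# Energy per update = power [W] / rate [Hz] = joules per control step.
snn_j = 0.007 / 500
jetson_j = [power / rate for power, rate in ((1.0, 25), (2.0, 14))]

print(f"tracking error increase: {rel_increase:.1%}")
print(f"SNN: {snn_j * 1e6:.0f} uJ per update")
print(f"Jetson Nano: {jetson_j[0] * 1e3:.0f}-{jetson_j[1] * 1e3:.0f} mJ per update")
print(f"energy ratio: {jetson_j[0] / snn_j:.0f}x to {jetson_j[1] / snn_j:.0f}x")
```

On these numbers the per-update energy gap (roughly 2,900-10,200x) is larger than the raw power gap, because the SNN also runs 20-36 times more often.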

The 3.0-degree vs. 2.7-degree accuracy comparison is honest and important. The neuromorphic autopilot is slightly worse than the tuned traditional stack. The paper does not claim equivalence — it claims that the accuracy tradeoff is acceptable for many applications given the power advantage. I agree with this framing.

Why This Matters

Autonomous drone control is one of the applications where neuromorphic computing's strengths align most directly with application requirements:

Power constraints — Drones are severely battery-constrained. Every milliwatt saved in the flight controller extends battery life. The difference between 7mW and 2000mW is directly expressed in flight time.

Latency requirements — Drone stability requires fast control loops. The physics of quadrotor aerodynamics demands updates in the 100-500Hz range to maintain stable flight. Neuromorphic processing with event-driven spike communication naturally supports high update rates.

Disturbance rejection — Drones operate in turbulent environments. Fast, event-driven processing of sensor spikes is better suited to detecting and responding to sudden disturbances than batch-processed updates.

Miniaturization — The smallest drones have gram-scale electronics. Neuromorphic chips are small; GPU-class inference hardware is not.
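The power-constraint point can be made concrete by dividing battery energy by total power draw. The sketch uses hypothetical numbers: a 250 mAh, 3.7 V cell (about 0.93 Wh) and 8 W of hover power. It also ignores the extra mass, and therefore extra hover power, that a Jetson-class board would add.

```python
def flight_time_min(battery_wh: float, hover_w: float, compute_w: float) -> float:
    """Endurance in minutes if the battery drains at a constant total power draw."""
    return battery_wh / (hover_w + compute_w) * 60.0


BATTERY_WH = 0.250 * 3.7  # hypothetical 250 mAh cell at 3.7 V nominal
HOVER_W = 8.0             # hypothetical motor power while hovering

times = {label: flight_time_min(BATTERY_WH, HOVER_W, compute_w)
         for label, compute_w in (("SNN", 0.007), ("Jetson low", 1.0), ("Jetson high", 2.0))}
for label, minutes in times.items():
    print(f"{label:>11}: {minutes:.2f} min")
```

With these assumptions the 7mW controller costs seconds of flight at most, while a 1-2W controller cuts endurance by roughly 11-20%.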

Beyond drones: the same argument applies to any autonomous system operating at the edge with strict power budgets — mobile robots, micro-satellites, underwater vehicles, implantable medical devices with closed-loop control.

My Take

This paper represents a watershed for the neuromorphic control systems field. "Fully neuromorphic end-to-end control" has been a stated goal for years; this paper delivers it for a real aerial vehicle with quantitative performance results.

The 3.0 vs. 2.7 degree accuracy gap is the number I keep coming back to. It tells me two things simultaneously. First, the gap is small enough that the neuromorphic autopilot is practically useful — a well-tuned PID can be 10-20% better than naive alternatives, so 11% worse than an optimized traditional stack is genuinely competitive. Second, the gap suggests there is headroom for improvement. The current SNN was trained by imitation learning from a traditional controller; training on a richer signal — or direct reinforcement learning on the drone — could close or eliminate this gap.

The decision to run on an STM32 microcontroller is pragmatic and smart. Demanding a dedicated neuromorphic chip would have added a hardware dependency that most robotics labs cannot satisfy. Running on standard microcontroller hardware makes the result immediately reproducible and deployable. When dedicated neuromorphic chips become more accessible, the power advantage will grow — the 7mW is already impressive for a software SNN simulation; purpose-built hardware would be significantly better.

The modular training approach — separate estimator and controller, then merge — should become the standard methodology for neuromorphic control systems. End-to-end SNN training for control is extremely difficult; modularity makes the problem tractable and the result interpretable.

If you work in autonomous systems, robotics, or embedded AI, this paper should be in your reading stack. Neuromorphic control is no longer theoretical.

Stein Stroobants, Christophe De Wagter, Guido C. H. E. de Croon. Neuromorphic Attitude Estimation and Control. arXiv:2411.13945, November 2024 (IEEE publication March 2025).