When I first started building autonomous systems in the early 2010s, "agent" was a term that belonged almost exclusively to reinforcement learning researchers and robotics engineers. The architectures were elegant in their simplicity: observe a state, select an action, receive a reward. Today, the word "agent" has been annexed by the LLM ecosystem, and for good reason — but the conceptual debt we carry from that earlier era is worth examining carefully before we start bolting together production systems.

The Reactive Baseline: Stimulus-Response All the Way Down

The simplest agent architecture is purely reactive. It has no internal model of the world, no memory beyond what is in its current context, and no planning horizon. It sees a stimulus and produces a response. This is not a dismissal: reactive systems are extraordinarily powerful in bounded, well-defined domains. The subsumption architecture that Rodney Brooks introduced in the mid-1980s showed that sophisticated robot behaviors could emerge from layered reactive rules without any explicit world model.

In the LLM world, the equivalent is a prompted language model that takes a user query, runs a single inference pass, and returns a response. It works remarkably well until the problem requires state, sequencing, or external interaction. That boundary is where agentic engineering begins.
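
To make the boundary concrete, here is a minimal sketch of a purely reactive agent. The `ModelFn` type and the `echo_model` stub are hypothetical stand-ins for a real inference call, not any provider's API; the point is only that each call is a single pass with no state carried between turns.

```python
from typing import Callable

# Hypothetical model interface: any function mapping a prompt string to a completion.
ModelFn = Callable[[str], str]


def make_reactive_agent(model: ModelFn, system_prompt: str) -> Callable[[str], str]:
    """Return a stateless agent: one inference pass per call, nothing remembered."""

    def respond(user_query: str) -> str:
        prompt = f"{system_prompt}\n\nUser: {user_query}\nAssistant:"
        return model(prompt)

    return respond


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call: upper-cases the user's line from the prompt."""
    user_lines = [line for line in prompt.splitlines() if line.startswith("User: ")]
    return user_lines[-1][len("User: "):].upper()


agent = make_reactive_agent(echo_model, "You are a terse assistant.")
first = agent("my name is Ada")
second = agent("what is my name?")  # the second call cannot see the first turn
```

Because `respond` builds its prompt from the current query alone, the second call has no way to recover the name given in the first. Anything that needs that continuity requires state held outside the model call.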

Deliberative Architectures: Planning Before Acting

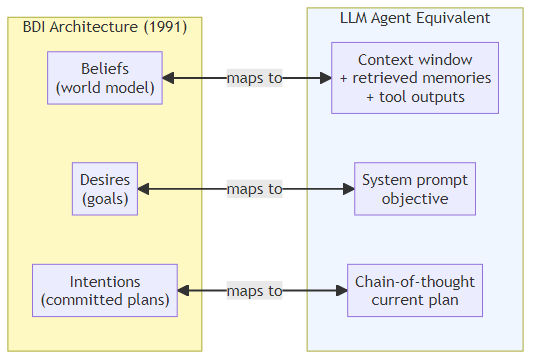

The next evolutionary step introduced an internal world model. Deliberative agents reason about their environment, construct a plan, and then execute it. The classic formalism here is the BDI (Belief-Desire-Intention) model, which grew out of Michael Bratman's philosophical work on practical reasoning in the 1980s and was formalized for software agents by Rao and Georgeff in the early 1990s. An agent maintains beliefs about the world, desires representing its goals, and intentions, which are the plans it has committed to executing.

BDI agents are still widely used in multi-agent simulation and autonomous systems research. What makes them relevant to modern LLM engineering is that the three-part structure maps surprisingly cleanly onto how we scaffold LLM reasoning today:

- Beliefs = the agent's context window, retrieved memories, and tool outputs

- Desires = the high-level objective specified in the system prompt or task decomposition

- Intentions = the current plan or chain-of-thought the model is executing

The translation is imperfect, but it is conceptually useful when you are designing an agent's prompting strategy.
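
One way to use this mapping when designing a prompt is sketched below. The `BDIState` class and its method names are illustrative assumptions, not part of any BDI framework or LLM library; it simply keeps the three components separate and renders them into one prompt string.

```python
from dataclasses import dataclass, field


@dataclass
class BDIState:
    """Hypothetical container mirroring the belief/desire/intention split."""

    desire: str = ""                                      # high-level objective
    beliefs: list[str] = field(default_factory=list)      # context, memories, tool outputs
    intentions: list[str] = field(default_factory=list)   # committed plan steps

    def observe(self, fact: str) -> None:
        """Add a new belief, e.g. a tool result or a retrieved memory."""
        self.beliefs.append(fact)

    def commit(self, steps: list[str]) -> None:
        """Replace the current plan with a newly committed one."""
        self.intentions = list(steps)

    def render_prompt(self) -> str:
        """Render the three components as labelled prompt sections."""
        beliefs = "\n".join(f"- {b}" for b in self.beliefs) or "- (none)"
        plan = "\n".join(
            f"{i}. {step}" for i, step in enumerate(self.intentions, start=1)
        ) or "(no plan yet)"
        return (
            f"Objective:\n{self.desire}\n\n"
            f"Known facts:\n{beliefs}\n\n"
            f"Current plan:\n{plan}"
        )


state = BDIState(desire="Book a flight to Lisbon")
state.observe("User prefers aisle seats")
state.commit(["Search flights", "Pick seat"])
prompt = state.render_prompt()
```

Keeping the sections separate makes it easier to see which part of the prompt changed when an agent's behavior shifts: a new belief, a revised plan, or a different objective.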

The Hybrid Middle Ground

Pure reactive systems fail on tasks requiring foresight. Pure deliberative systems are brittle when reality diverges from the internal world model. The practical solution — both in classical AI and in modern LLM agent design — is a hybrid: a fast reactive layer handles routine decisions while a slower deliberative layer handles planning and exception handling.

This mirrors the dual-process framing that Daniel Kahneman popularized as System 1 and System 2 thinking. In LLM agent terms:

- System 1: a lightweight model or a cached response handles common patterns quickly

- System 2: a more capable (and expensive) model engages for complex reasoning, ambiguous situations, or when the fast path fails

Frameworks like LangGraph make this explicit. You can define conditional edges in your agent graph that route to a more expensive reasoning node only when a confidence check or a classification step determines it is necessary.
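
The routing idea itself does not depend on any framework. The sketch below is plain Python and deliberately does not use LangGraph's API; `make_router`, `fast_stub`, `slow_stub`, and the 0.8 threshold are all assumptions made for illustration. It tries a cache first, then a cheap model that reports a confidence, and only then the expensive model.

```python
from typing import Callable, Dict, Tuple

FastFn = Callable[[str], Tuple[str, float]]  # returns (answer, confidence in [0, 1])
SlowFn = Callable[[str], str]


def make_router(fast: FastFn, slow: SlowFn, threshold: float = 0.8):
    """Build a router: cache first, then the fast model, then the slow model."""
    cache: Dict[str, str] = {}

    def route(query: str) -> Tuple[str, str]:
        key = query.strip().lower()
        if key in cache:                      # System 1: cached response
            return cache[key], "cache"
        answer, confidence = fast(query)      # System 1: lightweight model
        if confidence >= threshold:
            path = "fast"
        else:                                 # System 2: capable, expensive model
            answer, path = slow(query), "slow"
        cache[key] = answer
        return answer, path

    return route


def fast_stub(query: str) -> Tuple[str, float]:
    """Stand-in for a small model: pretends short queries are easy."""
    confidence = 0.9 if len(query.split()) <= 3 else 0.4
    return f"fast:{query}", confidence


def slow_stub(query: str) -> str:
    """Stand-in for a larger model."""
    return f"slow:{query}"


route = make_router(fast_stub, slow_stub)
r1 = route("hello there")
r2 = route("compare these two long contract clauses")
r3 = route("Hello there ")  # normalizes to the same cache key as the first call
```

In a real system the confidence would more likely come from a separate classifier or a validation step than from the fast model itself.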

The Cognitive Architecture Turn

The most ambitious end of the spectrum is cognitive architectures — systems that attempt to model the full range of human-like reasoning capabilities. ACT-R and SOAR are the canonical academic examples. They include dedicated modules for declarative memory, procedural memory, perception, and action, all interacting through a central production system.

For LLM agents, the analogous aspiration is a system that:

- Maintains structured long-term memory across sessions

- Can dynamically acquire new skills (via tool creation or fine-tuning)

- Monitors its own reasoning for consistency (metacognition)

- Adapts its strategy based on past performance

The most influential modern articulation of this vision is the Generative Agents paper by Park et al. (2023), which demonstrated that agents built on ChatGPT (gpt-3.5-turbo) and given memory, reflection, and planning capabilities exhibited believable, emergent social behavior in a simulated environment. The agents didn't just react: they formed opinions, made plans, and revised those plans based on new information.
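
The memory component is the easiest piece to sketch. The retrieval step in that paper scores memories by a combination of recency, importance, and relevance; the version below is only loosely inspired by it. The `Memory` class, the unweighted sum, the decay value, and the word-overlap `relevance` function (a crude stand-in for embedding similarity) are all simplifying assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    created_at: float   # hours since the start of the run
    importance: float   # 0.0 to 1.0, assigned by the caller


def relevance(query: str, text: str) -> float:
    """Jaccard overlap of lower-cased word sets (stand-in for embedding similarity)."""
    q, t = set(query.lower().split()), set(text.lower().split())
    union = q | t
    return len(q & t) / len(union) if union else 0.0


def retrieve(
    memories: List[Memory], query: str, now: float, k: int = 2, decay: float = 0.99
) -> List[Memory]:
    """Return the k memories with the highest recency + importance + relevance."""

    def score(m: Memory) -> float:
        recency = decay ** (now - m.created_at)  # exponential decay per hour
        return recency + m.importance + relevance(query, m.text)

    return sorted(memories, key=score, reverse=True)[:k]


memories = [
    Memory("Ben is writing a research paper", created_at=1.0, importance=0.6),
    Memory("ate breakfast at the cafe", created_at=9.0, importance=0.1),
    Memory("Ana asked Ben about his paper", created_at=8.0, importance=0.5),
]
top = retrieve(memories, "what is Ben paper about", now=10.0, k=2)
```

Here the two paper-related memories outrank the more recent but irrelevant breakfast entry. The retrieved items would then be placed in the context window before the next inference call.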

What This Means for Practical System Design

When you sit down to build an agentic system today, the architecture question is not abstract. It determines:

Latency: Reactive systems are fast. Every additional reasoning step adds latency. If your agent needs to plan a five-step tool call sequence before doing anything, you will feel that in user-facing response time.

Cost: Deliberative steps consume tokens. A cognitive loop that reflects on its own outputs re-reads those outputs as input on every pass, so it can easily double or triple your inference cost. Budget accordingly.

Reliability: More complex architectures have more failure modes. A reactive system has a single model call that can go wrong; a multi-step planner can fail at the planning stage, the execution stage, the reflection stage, or the synthesis stage.

Debuggability: Reactive systems are easy to trace. Cognitive architectures require structured logging at every node in the graph, or you will spend hours trying to understand why your agent made a particular decision.
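
As a rough illustration of what structured logging at every node can look like, the sketch below wraps each node function in a decorator that records its name, status, state keys, and latency. The `traced` decorator, the in-memory `TRACE` list, and the two stub nodes are hypothetical; a real system would send these records to a proper log sink.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

State = Dict[str, Any]
TRACE: List[Dict[str, Any]] = []  # in-memory stand-in for a log sink


def traced(node_name: str) -> Callable:
    """Decorator that appends one structured record per node invocation."""

    def decorator(fn: Callable[[State], State]) -> Callable[[State], State]:
        @functools.wraps(fn)
        def wrapper(state: State) -> State:
            start = time.perf_counter()
            record: Dict[str, Any] = {"node": node_name, "input_keys": sorted(state)}
            try:
                result = fn(state)
                record["status"] = "ok"
                record["output_keys"] = sorted(result)
                return result
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call leaves a record.
                record["latency_ms"] = round((time.perf_counter() - start) * 1000, 3)
                TRACE.append(record)

        return wrapper

    return decorator


@traced("planner")
def planner(state: State) -> State:
    return {**state, "plan": ["search", "summarize"]}


@traced("executor")
def executor(state: State) -> State:
    return {**state, "result": f"ran {len(state['plan'])} steps"}


final_state = executor(planner({"query": "latest release notes"}))
print(json.dumps(TRACE, indent=2))
```

Per-node latency in the same record also gives you a first-pass answer to where the response time is going.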

My practical recommendation: start reactive, add deliberation where the task demands it, and resist the urge to build the full cognitive architecture unless you have evidence that simpler approaches cannot solve your problem. The frameworks exist to support every point on this spectrum. LangGraph lets you compose arbitrary graphs. AutoGen provides a multi-agent conversation substrate. The Anthropic and OpenAI APIs give you tool use and structured outputs that make reactive-to-deliberative escalation clean to implement.

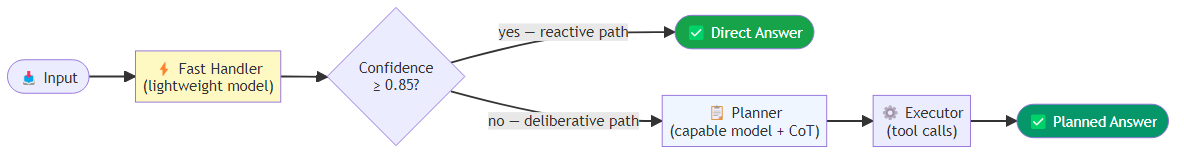

A Concrete Example: The Escalation Pattern

A pattern I use frequently is a reactive baseline with deliberative escalation. A fast handler node attempts a direct answer using a lightweight model and records a confidence score alongside it. If that score meets or exceeds a threshold (say, 0.85), the answer is returned immediately via the reactive path. Otherwise, the graph routes to a planner node that invokes a slower, more capable model with an explicit chain-of-thought prompt, producing a step-by-step plan, and an executor node then carries out that plan with tool calls. The routing is a single conditional edge that reads the score set by the fast handler. The score needs a source you trust, such as a classifier or a validation check, because a model's self-reported confidence is often poorly calibrated.
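
A framework-free sketch of this pattern follows. The three node functions are stubs, and the `NODES`/`EDGES` tables and the `run` loop are a hypothetical minimal graph runner rather than LangGraph's API; only the 0.85 threshold comes from the description above. The hard-coded confidence values stand in for whatever scoring method you actually use.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]
CONFIDENCE_THRESHOLD = 0.85  # example threshold from the text


def fast_handler(state: State) -> State:
    """Reactive path. Stub: a real node would call a lightweight model."""
    known = {"what is the capital of france?": "Paris"}
    answer = known.get(state["query"].lower())
    confidence = 0.95 if answer else 0.30  # placeholder scores, not calibrated
    return {**state, "answer": answer, "confidence": confidence}


def planner(state: State) -> State:
    """Deliberative path. Stub: a real node would prompt a stronger model for a plan."""
    return {**state, "plan": ["lookup:" + state["query"], "summarize"]}


def executor(state: State) -> State:
    """Carry out each plan step. Stub: tool calls are simulated as strings."""
    outputs = [f"done({step})" for step in state["plan"]]
    return {**state, "answer": "; ".join(outputs)}


def route_after_fast(state: State) -> Optional[str]:
    """Conditional edge: stop at or above the threshold, otherwise escalate."""
    return None if state["confidence"] >= CONFIDENCE_THRESHOLD else "planner"


NODES: Dict[str, Callable[[State], State]] = {
    "fast_handler": fast_handler,
    "planner": planner,
    "executor": executor,
}
EDGES: Dict[str, Callable[[State], Optional[str]]] = {
    "fast_handler": route_after_fast,
    "planner": lambda state: "executor",
    "executor": lambda state: None,  # None means the run terminates
}


def run(query: str) -> State:
    """Walk the graph from fast_handler until an edge returns None."""
    state: State = {"query": query, "path": []}
    node: Optional[str] = "fast_handler"
    while node is not None:
        state = NODES[node](state)
        state["path"] = state["path"] + [node]
        node = EDGES[node](state)
    return state


easy = run("What is the capital of France?")
hard = run("Plan a database migration")
```

The `path` field records which nodes ran, so `easy["path"]` contains only the fast handler while `hard["path"]` shows the full escalation through planner and executor.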

This is not exotic engineering. It is the application of a decades-old architectural insight, the reactive/deliberative hybrid, to the LLM context.

The Road Ahead

The architectures that will define the next few years are not yet settled. We are seeing serious research interest in agents that can modify their own prompts and tools (self-improving agents), agents that maintain persistent world models across very long time horizons, and multi-agent systems where specialization and communication protocols matter as much as individual model capability.

What I am confident about: the teams that understand the underlying architectural principles — not just the framework APIs — will build systems that are faster to debug, cheaper to operate, and more reliable in production. The history of AI is littered with frameworks that came and went. The ideas behind reactive, deliberative, and cognitive architectures have been around for decades for good reason.

Build on the ideas, not just the tools.