Every few years, a survey comes along that forces you to look at a field from altitude — to step back from the trees and see the forest. Mohamad Abou Ali and Fadi Dornaika's Agentic AI: A Comprehensive Survey does this with unusual sharpness. Through a PRISMA-based analysis of 90 studies from 2018 to 2025, the paper surfaces a fundamental divide in the field that most LLM-centric practitioners aren't adequately accounting for: the symbolic vs. neural paradigm split.

This matters not as academic categorization, but as a practical guide to when you should use what. The paper's central finding — "the choice of paradigm is strategic" — should inform how you design agentic systems for different deployment contexts.

Paper: Agentic AI: A Comprehensive Survey of Architectures, Applications, and Future Directions

Authors: Mohamad Abou Ali, Fadi Dornaika

Published: arXiv:2510.25445, October 2025

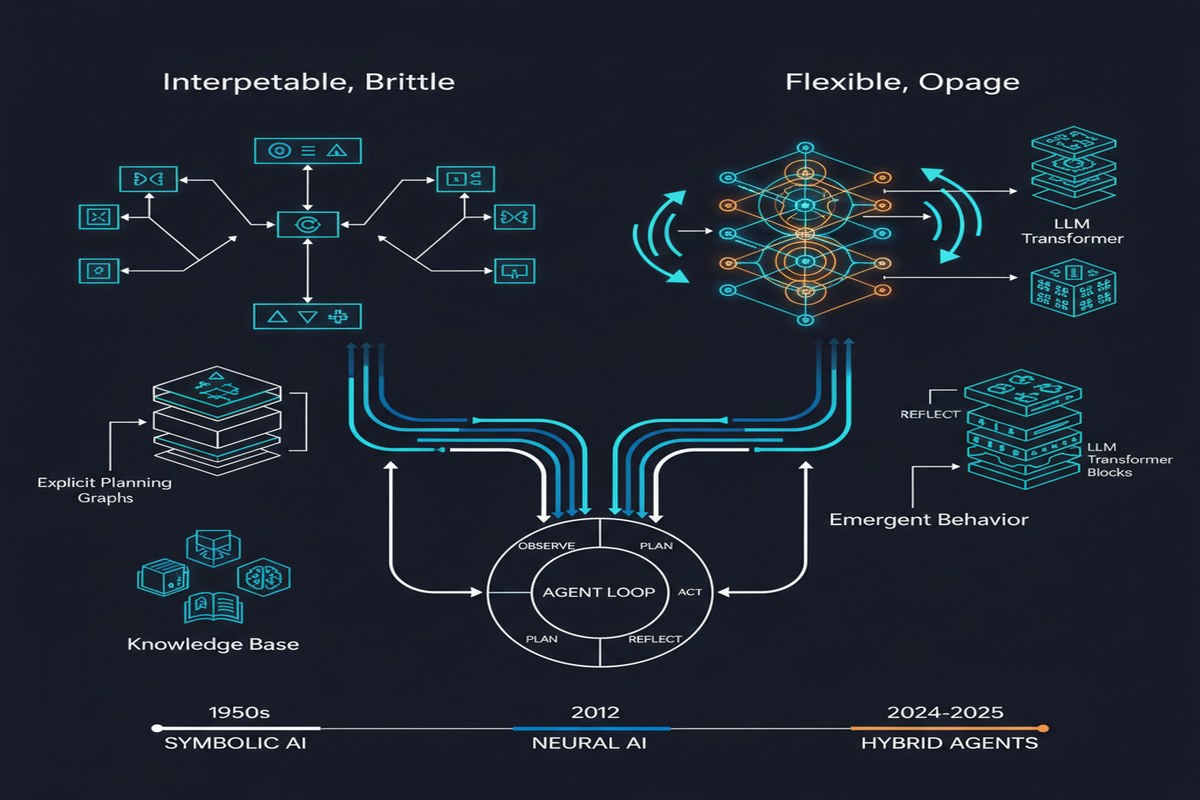

The Two Paradigms

The survey's analytical framework rests on a distinction that gets blurred in most contemporary discourse about AI agents:

Symbolic/Classical Agents operate through explicit rule systems, formal logic, deterministic planning algorithms, and structured knowledge representations. They descend from GOFAI: classical planning systems such as STRIPS, HTN planners, and answer set programming systems. Their behavior is interpretable, verifiable, and predictable. When they fail, they fail in understandable ways.
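To make "deterministic planning" concrete, here is a minimal sketch of a STRIPS-style planner. It is my own illustration, not code from the survey: the `Action` type, the `plan` function, and the toy door-opening domain are all assumptions chosen for brevity. States are sets of facts, actions have preconditions plus add and delete effects, and a breadth-first search over states returns a shortest plan.

```python
from collections import deque
from typing import FrozenSet, List, NamedTuple, Optional


class Action(NamedTuple):
    """A STRIPS-style operator (hypothetical toy representation)."""
    name: str
    preconditions: FrozenSet[str]
    add_effects: FrozenSet[str]
    delete_effects: FrozenSet[str]


def plan(initial: FrozenSet[str], goal: FrozenSet[str],
         actions: List[Action]) -> Optional[List[str]]:
    """Breadth-first forward search. Returns a shortest plan, or None."""
    frontier = deque([(initial, [])])
    visited = {initial}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:  # every goal fact holds in this state
            return steps
        for action in actions:
            if action.preconditions <= state:
                next_state = (state - action.delete_effects) | action.add_effects
                if next_state not in visited:
                    visited.add(next_state)
                    frontier.append((next_state, steps + [action.name]))
    return None  # goal is unreachable with the given actions


# Toy domain, invented for illustration only.
ACTIONS = [
    Action("pick_up_key",
           frozenset({"at_door", "key_on_floor"}),
           frozenset({"has_key"}),
           frozenset({"key_on_floor"})),
    Action("unlock_door",
           frozenset({"at_door", "has_key", "door_locked"}),
           frozenset({"door_unlocked"}),
           frozenset({"door_locked"})),
    Action("open_door",
           frozenset({"at_door", "door_unlocked"}),
           frozenset({"door_open"}),
           frozenset()),
]
INITIAL = frozenset({"at_door", "key_on_floor", "door_locked"})
GOAL = frozenset({"door_open"})

print(plan(INITIAL, GOAL, ACTIONS))
# ['pick_up_key', 'unlock_door', 'open_door']
```

The same inputs always produce the same plan, and every step can be traced back to a precondition that held. The cost is equally visible: any fact or action missing from the model simply does not exist for the planner.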

Neural/Generative Agents (the LLM-based agents that dominate current discourse) operate through learned representations and stochastic generation. Their behavior is flexible, adaptive, and context-sensitive. They handle ambiguity and novel situations gracefully. When they fail, they often fail in ways that are hard to predict or diagnose.

These aren't just different implementations — they have fundamentally different properties:

graph TD
  subgraph Symbolic Agents
    S1[Deterministic behavior]
    S2[Interpretable reasoning]
    S3[Verifiable guarantees]
    S4[Limited flexibility]
    S5[Brittle to novel situations]
  end
  subgraph Neural/Generative Agents
    N1[Stochastic behavior]
    N2[Opaque reasoning]
    N3[No formal guarantees]
    N4[High flexibility]
    N5[Handles novel situations]
  end
  subgraph Hybrid: Best of Both
    H1[Structured core logic\nwith neural flexibility]
    H2[Verifiable key decisions\nwith adaptive periphery]
    H3[Formal constraints\nwith learned heuristics]
  end
  S1 & S2 & S3 --> H1
  N4 & N5 --> H1

The Strategic Paradigm Choice

The survey's most useful finding is empirical: the choice of paradigm predicts deployment domain better than almost any other architectural feature.

Symbolic systems dominate safety-critical domains. Healthcare, aviation, nuclear systems, legal compliance, financial regulation. The reason is simple: these domains require verifiable guarantees that neural systems cannot currently provide. A hospital's medication dosing system needs to provably never exceed safe thresholds, regardless of what unexpected inputs it encounters. A neural agent operating stochastically is fundamentally incompatible with this requirement.

Neural systems prevail in adaptive, data-rich environments. Customer service, content generation, web navigation, personalization. These domains reward flexibility and tolerance for ambiguity. A slight inconsistency in a customer service response is annoying; a slight inconsistency in a medication dosing calculation is potentially fatal.

What's striking in the survey's data is how consistent this pattern is across 90 studies spanning eight years. The technology has changed dramatically — from rule-based chatbots to GPT-4-class agents — but the fundamental properties that determine fitness for safety-critical deployment have not.

Domain-Specific Findings

Three patterns from the paper's domain-specific section stand out:

Healthcare: Symbolic Dominance with Neural Augmentation

In healthcare, symbolic systems handle the core clinical logic (drug interaction checking, dosage calculation, diagnostic criteria), while neural systems handle the interfaces (natural language intake, report generation, patient communication). This isn't a theoretical recommendation — it's what deployed production systems actually look like when built correctly.
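A rough sketch of that split, under loud assumptions: the drug names and per-kilogram ceilings below are invented placeholders (not clinical values), and `parse_request` is a trivial stand-in for whatever neural component turns free text into structured fields. The point is only that the decision itself lives in a small deterministic function that can be reviewed and tested exhaustively.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical placeholder ceilings in mg per kg per day. NOT clinical values.
MAX_MG_PER_KG_PER_DAY = {"drug_a": 40.0, "drug_b": 5.0}


@dataclass(frozen=True)
class DoseRequest:
    drug: str
    weight_kg: float
    requested_mg_per_day: float


def parse_request(free_text: str) -> DoseRequest:
    """Stand-in for the neural interface: here just 'drug, weight, dose'."""
    drug, weight, dose = free_text.split(",")
    return DoseRequest(drug.strip(), float(weight), float(dose))


def check_dose(req: DoseRequest) -> Tuple[bool, str]:
    """Symbolic core: deterministic, refuses anything it cannot verify."""
    if req.drug not in MAX_MG_PER_KG_PER_DAY:
        return False, f"unknown drug {req.drug!r}: refused by default"
    values = (req.weight_kg, req.requested_mg_per_day)
    if not all(math.isfinite(v) and v > 0 for v in values):
        return False, "weight and dose must be finite and positive"
    ceiling = MAX_MG_PER_KG_PER_DAY[req.drug] * req.weight_kg
    if req.requested_mg_per_day > ceiling:
        return False, f"requested dose exceeds ceiling of {ceiling:.1f} mg/day"
    return True, "within configured ceiling"


approved, reason = check_dose(parse_request("drug_a, 70, 3000"))
print(approved, reason)
```

Note the default-deny branches: an unrecognized drug or a non-finite number is rejected rather than passed through. However the neural front end misreads the input, the worst it can do is trigger a refusal.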

The inverse pattern — neural systems handling core clinical logic with symbolic systems providing interfaces — is both rarer and more dangerous. The paper identifies several case studies where neural-dominant healthcare systems were deployed and then quietly rolled back after reliability concerns emerged.

Finance: Neural Dominance with Symbolic Guardrails

Finance shows the inverse pattern. The data-rich, adaptation-requiring core of financial analysis and trading is handled by neural systems, while symbolic guardrails enforce regulatory compliance, position limits, and risk constraints. The symbolic layer doesn't need to understand the neural layer's reasoning — it just needs to detect and prevent constraint violations.
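Here is a minimal sketch of that guardrail pattern. Everything in it is hypothetical: the `Order` type, the two limits, and the `propose` callable standing in for a neural model are my own assumptions, not anything specified by the survey. The symbolic layer treats the proposer as a black box and only inspects the order it emits.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Order:
    symbol: str
    quantity: int   # positive = buy, negative = sell
    price: float


# Hypothetical limits, chosen only for illustration.
POSITION_LIMIT = 1_000          # max absolute shares held per symbol
MAX_ORDER_NOTIONAL = 50_000.0   # max value of a single order


def violations(order: Order, positions: Dict[str, int]) -> List[str]:
    """Return the name of every constraint the order would break."""
    problems = []
    new_position = positions.get(order.symbol, 0) + order.quantity
    if abs(new_position) > POSITION_LIMIT:
        problems.append("position_limit")
    if abs(order.quantity) * order.price > MAX_ORDER_NOTIONAL:
        problems.append("order_notional")
    return problems


def guarded_execute(propose: Callable[[], Order],
                    positions: Dict[str, int]) -> Optional[Order]:
    """Run the opaque proposer, then veto or apply its order."""
    order = propose()
    if violations(order, positions):
        return None  # blocked: positions are left untouched
    positions[order.symbol] = positions.get(order.symbol, 0) + order.quantity
    return order


book = {"ABC": 900}
print(guarded_execute(lambda: Order("ABC", 200, 10.0), book))  # None (blocked)
print(guarded_execute(lambda: Order("ABC", 50, 10.0), book))   # the order, applied
```

The guardrail never asks why the model wanted the trade. It only needs the constraints to be expressible over the proposed action and the current state, which is exactly why this layering works with an opaque core.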

Robotics: Hybrid as Default

Robotics has been doing hybrid neuro-symbolic by necessity for longer than the LLM world has been thinking about it. The physics and safety constraints of operating in the physical world require symbolic guarantees (a robot arm that might stochastically move into a worker isn't deployable). The perception and planning challenges require neural flexibility (pre-programmed motion paths can't handle unstructured environments). The field has converged on hybrid architectures as the default, not the exception.

Why the AI Agent Community Has a Paradigm Blind Spot

I want to name something directly: the contemporary AI agent discourse is dominated by LLM practitioners who came up through the neural paradigm and have a corresponding blind spot around symbolic approaches.

This shows up in several ways:

- Treating LLM-based planning as "agent planning" when classical symbolic planners have more mature theory and stronger guarantees

- Assuming interpretability problems are fundamental when many applications could use symbolic cores with narrow neural components

- Building healthcare and legal compliance tools on neural foundations that aren't appropriate for those domains

The survey helps correct this. By analyzing 90 studies including pre-LLM work, it shows that the current LLM agent wave is one chapter in a longer story, and that the classical approaches solved real problems that the neural approaches are now rediscovering through worse methods.

The Hybrid Imperative

The paper's conclusion, that the future lies in "intentional integration" of symbolic and neural approaches, is correct but underspecified.

What does intentional integration actually look like? The paper gives high-level direction (neural for adaptability, symbolic for guarantees) but doesn't provide a design methodology for deciding where each paradigm applies within a given system.

My own view, developed from working on deployed agentic systems: the decomposition should happen along decision criticality:

flowchart TD
  A[Agent Decision Point] --> B{Decision Criticality?}
  B -- "Low: flexible, reversible,\nlow stakes" --> C["Neural/LLM\nHandle adaptively"]
  B -- "Medium: consequential\nbut recoverable" --> D["Neural + Symbolic guardrails\nFlexible with safety nets"]
  B -- "High: irreversible,\nhigh stakes, regulated" --> E["Symbolic core\nVerifiable guarantees required"]
  C --> F[Monitor outputs,\nlog for learning]
  D --> G[Symbolic layer checks\nbefore execution]
  E --> H["Formal verification\nor human approval"]

This isn't the survey's framework — it's the practical extension of it. The survey establishes the need for the hybrid approach; practitioners need to build the design methodology.
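As a starting point, the criticality routing can be sketched in a few lines. This is one possible encoding, not a validated methodology: the three boolean attributes on `Decision` and the thresholds inside `classify` are my assumptions about how "reversible", "consequential", and "regulated" might be operationalized.

```python
from dataclasses import dataclass
from enum import Enum


class Criticality(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class Decision:
    description: str
    reversible: bool
    consequential: bool
    regulated: bool


# Which paradigm handles each tier (labels are illustrative).
ROUTES = {
    Criticality.LOW: "neural",
    Criticality.MEDIUM: "neural_with_symbolic_guardrails",
    Criticality.HIGH: "symbolic_core",
}


def classify(decision: Decision) -> Criticality:
    """Deterministic tiering: regulated or irreversible means HIGH."""
    if decision.regulated or not decision.reversible:
        return Criticality.HIGH
    if decision.consequential:
        return Criticality.MEDIUM
    return Criticality.LOW


def route(decision: Decision) -> str:
    return ROUTES[classify(decision)]


for d in [
    Decision("draft a reply to a customer", True, False, False),
    Decision("issue a refundable credit", True, True, False),
    Decision("submit a regulatory filing", False, True, True),
]:
    print(f"{d.description}: {route(d)}")
```

The router itself is symbolic on purpose. If a model decided its own criticality tier, the guarantees of the high tier would only be as strong as that model's judgment.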

My Take

This survey is valuable precisely because it's not LLM-centric. It takes seriously the full history of agent AI, from STRIPS to GPT-5, and draws genuine theoretical distinctions that the hype-driven discourse obscures.

The PRISMA methodology is appropriate for a survey of this scope: systematic, reproducible, and explicit about inclusion/exclusion criteria. Ninety studies is enough to reveal consistent patterns across domains while remaining small enough to analyze carefully.

My criticisms: The paper's coverage of the 2024-2025 period is thinner than earlier years, which makes sense given publication latency, but it means the survey doesn't engage deeply with the most recent LLM agent architectures. The framework established for earlier work would be more useful if applied to current systems.

Also, "intentional integration" as a conclusion is correct but unsatisfying. The field needs design patterns and decision frameworks for when to use what, not just the observation that both paradigms have complementary strengths. This survey maps the terrain well. The next work in this lineage should provide the navigation instructions.

For practitioners: use this paper when you're making domain-level architecture decisions. The domain-paradigm mapping is genuinely useful for understanding what constraints and capabilities you need, and the domain-specific sections will save you from mistakes that others have made and documented.

Further Reading

- arXiv: 2510.25445

- Related: Agentic AI Architectures survey (2601.12560) for a January 2026 follow-on

- Related: AGENTSAFE (2512.03180) for practical safety governance

- Classic: STRIPS, HTN planning papers for the symbolic foundations this survey draws on