When 47 researchers decide to collaborate on a survey, it's either a sign that a field is mature enough to need systematization, or that it's fragmented enough that everyone has a different view and they decided to argue it out on paper. In the case of Memory in the Age of AI Agents, it's both. The field of agent memory has been developing organically — RAG here, episodic memory there, scratchpads and working memories everywhere — without a shared vocabulary or a clear theoretical foundation. This survey tries to fix that.

Paper: Memory in the Age of AI Agents

Authors: Yuyang Hu and 46 co-authors (including Wangchunshu Zhou, Yixin Liu, Dawei Cheng, Qi Zhang, and others)

Published: arXiv:2512.13564, December 2025 (revised January 2026)

Why We Needed This Survey

The memory problem in AI agents is easy to underestimate. On the surface, it seems solved: use a vector database, embed your documents, retrieve relevant chunks. But this confuses the storage problem with the memory problem.

Memory, as humans understand it, is not just storage and retrieval. It involves:

- Encoding: deciding what's worth remembering from a stream of experience

- Consolidation: organizing memories into structures that support future use

- Retrieval: finding the right memory at the right time with the right cue

- Forgetting: discarding or deprioritizing memories that are no longer relevant

- Updating: revising memories when new information contradicts old ones

- Metacognition: knowing what you know and knowing what you don't

Current agent memory research tends to solve one of these problems while ignoring the others. The survey's contribution is to map the full landscape and show where each piece of research sits.
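To make the list above concrete, here is a minimal sketch of a store that touches most of those operations at once. It is a toy under stated assumptions, not anything taken from the survey: `MemoryStore`, the `salience` score, the threshold values, and the substring-based retrieval are all hypothetical, and consolidation is left out entirely.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    key: str          # what the memory is about, e.g. "user.timezone"
    value: str        # the remembered content
    salience: float   # hypothetical importance score in [0, 1]
    last_used: int    # logical time of the last write or read


@dataclass
class MemoryStore:
    min_salience: float = 0.3   # encoding threshold (assumed value)
    max_idle: int = 5           # forget after this many idle ticks (assumed value)
    clock: int = 0
    items: dict[str, Memory] = field(default_factory=dict)

    def tick(self) -> None:
        """Advance logical time by one step."""
        self.clock += 1

    def encode(self, key: str, value: str, salience: float) -> bool:
        """Encoding and updating: keep salient observations, overwrite old values."""
        if salience < self.min_salience:
            return False
        self.items[key] = Memory(key, value, salience, self.clock)
        return True

    def retrieve(self, cue: str) -> list[str]:
        """Retrieval: values whose key contains the cue, most salient first."""
        hits = [m for m in self.items.values() if cue in m.key]
        for m in hits:
            m.last_used = self.clock  # reading a memory keeps it alive
        hits.sort(key=lambda m: m.salience, reverse=True)
        return [m.value for m in hits]

    def forget(self) -> list[str]:
        """Forgetting: drop memories idle for more than max_idle ticks."""
        stale = [k for k, m in self.items.items()
                 if self.clock - m.last_used > self.max_idle]
        for k in stale:
            del self.items[k]
        return stale

    def knows(self, cue: str) -> bool:
        """Metacognition, crudely: is anything stored for this cue?"""
        return any(cue in k for k in self.items)
```

Even in a toy like this, the operations interact: what `encode` rejects can never be retrieved, and what `retrieve` touches survives `forget` longer. That coupling is why solving one operation in isolation tends to fall short.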

The Three Lenses

The survey organizes agent memory through three analytical lenses:

1. Forms: How is memory physically instantiated?

graph TD
M[Agent Memory Forms] --> T[Token-level Memory]
M --> P[Parametric Memory]
M --> L[Latent Memory]
T --> T1[In-context: working\ncontent in active context]
T --> T2[External: vector DB,\nkey-value stores]
P --> P1[Pretrained: knowledge\nbaked into weights]
P --> P2[Fine-tuned: domain-specific\nweight updates]
L --> L1[Activation patterns\nacross layers]
L --> L2[Cached KV states\nfor efficiency]

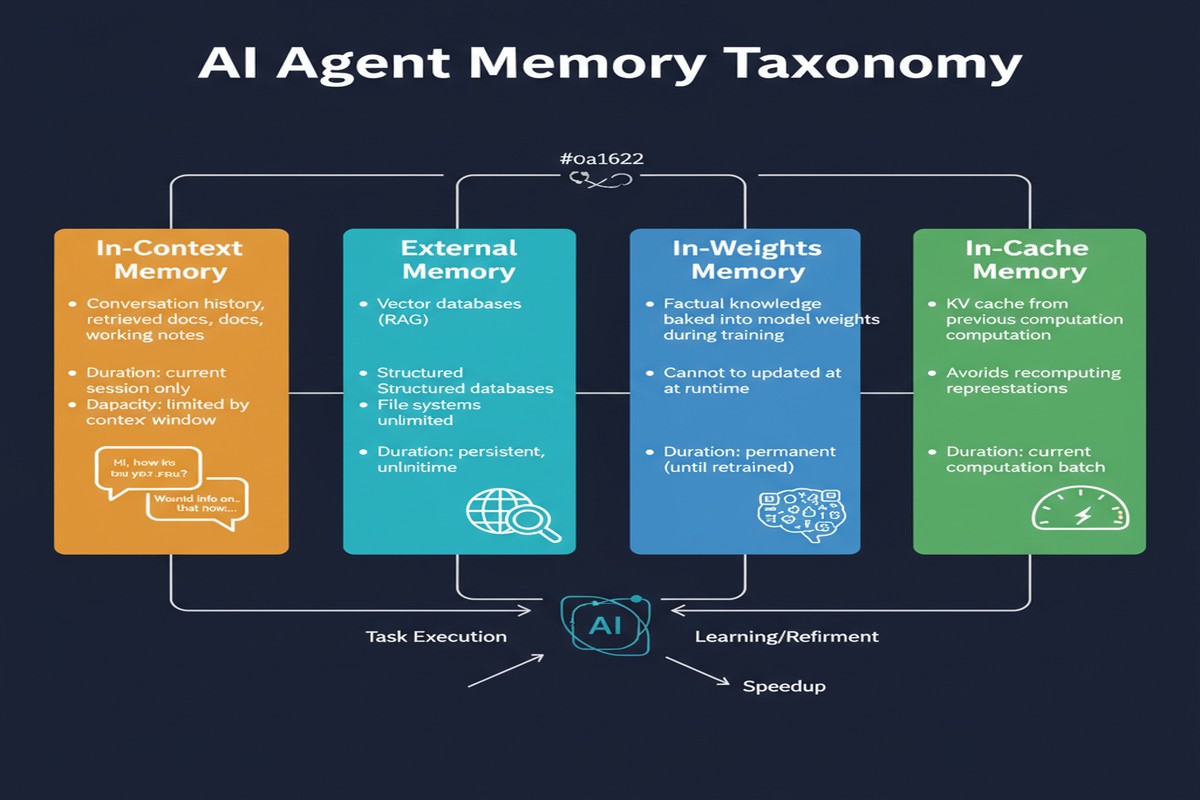

Token-level memory is the most familiar: information represented as tokens, either in the active context window (working memory) or in an external store. RAG systems are token-level external memory.

Parametric memory is knowledge encoded in model weights — both what the model learned in pretraining and what can be updated via fine-tuning. This is the most durable but least flexible form.

Latent memory refers to representations in activation space — patterns that exist across the model's internal layers without being explicitly tokenized. This is the least understood and most interesting frontier.

2. Functions: What cognitive role does memory play?

The survey distinguishes three functional categories:

- Factual memory: knowledge about the world, concepts, entities

- Experiential memory: records of past interactions, tasks, and their outcomes

- Working memory: the active information being processed right now

This maps loosely to semantic, episodic, and working memory from cognitive science — but the mapping isn't clean, and the paper is appropriately cautious about over-applying the analogy.

3. Dynamics: How does memory evolve?

The dynamics lens covers memory formation, evolution, and retrieval as processes rather than states. A key insight here is that the same information can be stored in multiple forms simultaneously, and transitions between forms (e.g., consolidating episodic experiences into factual knowledge) are important and understudied.
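One way to picture a transition between functions is a rule that promotes repeated experiential records into factual entries. The sketch below is illustrative only: the `Episode` shape, the `consolidate` function, and the `min_support` threshold are assumptions made for this example, not a method described in the survey.

```python
from collections import Counter
from typing import NamedTuple


class Episode(NamedTuple):
    """One experiential record: what was observed about a subject in one interaction."""
    subject: str
    attribute: str
    value: str


def consolidate(episodes: list[Episode], min_support: int = 3) -> dict[tuple[str, str], str]:
    """Promote an observation to a fact once it recurs in at least min_support episodes.

    If several values for the same (subject, attribute) reach the threshold,
    the most frequent one wins; ties go to the value seen first.
    """
    counts = Counter(episodes)  # identical episodes are counted together
    facts: dict[tuple[str, str], str] = {}
    best: dict[tuple[str, str], int] = {}
    for ep, n in counts.items():  # iterates in first-seen order
        slot = (ep.subject, ep.attribute)
        if n >= min_support and n > best.get(slot, 0):
            facts[slot] = ep.value
            best[slot] = n
    return facts
```

A frequency threshold is the crudest possible promotion rule. It says nothing about recency or contradiction, which is part of why these transitions deserve more study.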

The Conceptual Clarification That Matters

One of the most valuable contributions is clearly distinguishing "agent memory" from two concepts it's frequently confused with:

LLM memory ≠ agent memory. LLM memory refers to what's in the model's weights — things learned during training. Agent memory refers to information an agent accumulates and manages during operation. These interact but aren't the same thing.

RAG ≠ agent memory. RAG is a specific implementation of external token-level memory. It's one small corner of the memory landscape, not a general solution. The survey helps you see the other corners.
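To put a rough number on "one small corner", one can cross the survey's three forms with its three functions and see how much of the grid a typical RAG setup covers. This is a back-of-the-envelope sketch: the enum names mirror the survey's terms, but placing RAG in the factual column is an assumption of this example (the survey's claim is only that RAG is external token-level memory).

```python
from enum import Enum
from itertools import product


class Form(Enum):
    TOKEN_LEVEL = "token-level"
    PARAMETRIC = "parametric"
    LATENT = "latent"


class Function(Enum):
    FACTUAL = "factual"
    EXPERIENTIAL = "experiential"
    WORKING = "working"


# Every (form, function) combination the first two lenses allow.
GRID = list(product(Form, Function))

# Assumption: a typical document-retrieval RAG setup serves factual lookups
# from an external token-level store. The function label is this sketch's choice.
RAG_CELLS = {(Form.TOKEN_LEVEL, Function.FACTUAL)}


def coverage(cells: set[tuple[Form, Function]]) -> str:
    """Summarize how much of the form-by-function grid a design touches."""
    return f"{len(cells)} of {len(GRID)} cells"


print(coverage(RAG_CELLS))  # prints "1 of 9 cells"
```

The grid ignores the dynamics lens entirely, so if anything it overstates how much of the landscape RAG covers.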

The Emerging Research Areas

The survey identifies five areas where the field is actively developing but lacks mature solutions:

Memory automation: The goal of making memory management itself automatic — deciding what to store, when to update, when to forget. This is what papers like AgeMem (2601.01885) are attacking.

RL integration: Using reinforcement learning to train memory management policies, rather than engineering them manually. Strong overlap with AgeMem's approach.

Multimodal memory: Most current agent memory is text-based. Agents that operate in the real world need to remember images, audio, spatial layouts, and other non-textual information. This is relatively unexplored.

Multi-agent memory: When multiple agents share memory, or when one agent's memories need to be transferred to another, entirely new problems emerge around consistency, privacy, and coordination.

Trustworthiness: Can you trust what an agent claims to remember? Stored memories can be attacked or manipulated, and an agent can hallucinate memories it never formed. This connects to the broader agent safety space.

Why This Matters

The survey matters for a practical reason: it lets you diagnose which memory problem you have.

If your agent is failing because it can't maintain context over a long task, you have a working memory problem. If it's failing because it can't draw on past experience, you have an experiential (episodic) memory problem. If it's failing because it lacks domain knowledge, you have a factual memory problem, which you can address parametrically through fine-tuning or with external token-level retrieval. These require different solutions, and conflating them leads to wasted engineering effort.
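The triage described above can be written down as a small lookup. This is a sketch for illustration, labeled with the survey's functional categories: the symptom strings and the suggested remedies are hypothetical examples, not recommendations from the paper, and a real diagnosis would inspect agent traces rather than match strings.

```python
from enum import Enum


class MemoryProblem(Enum):
    WORKING = "working memory"
    EXPERIENTIAL = "experiential memory"
    FACTUAL = "factual memory"


# Hypothetical symptom labels for the three failure modes.
SYMPTOMS = {
    "loses context mid-task": MemoryProblem.WORKING,
    "repeats past mistakes": MemoryProblem.EXPERIENTIAL,
    "lacks domain knowledge": MemoryProblem.FACTUAL,
}

# Candidate remedies per category (assumed examples, not an exhaustive list).
REMEDIES = {
    MemoryProblem.WORKING: [
        "summarize or compress the active context",
        "move intermediate state to a scratchpad",
    ],
    MemoryProblem.EXPERIENTIAL: [
        "log task outcomes to an external store",
        "retrieve similar past episodes before acting",
    ],
    MemoryProblem.FACTUAL: [
        "add retrieval over domain documents",
        "fine-tune on domain data",
    ],
}


def diagnose(symptom: str) -> tuple[MemoryProblem, list[str]]:
    """Map an observed failure symptom to a memory category and candidate fixes."""
    try:
        problem = SYMPTOMS[symptom]
    except KeyError:
        raise ValueError(f"unknown symptom: {symptom!r}") from None
    return problem, REMEDIES[problem]
```

The point of separating `SYMPTOMS` from `REMEDIES` is the same as the point of the paragraph: name the category first, then pick the fix, rather than reaching for a vector database by default.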

The survey also highlights how far behind agent memory is compared to other aspects of agent systems. We have sophisticated reasoning models, powerful tool-use frameworks, and mature orchestration systems. Memory, by comparison, is still largely ad hoc. The long-context window race has provided a convenient excuse — "we'll just make the window bigger" — but that's not a memory architecture, it's a memory avoidance strategy.

flowchart LR
subgraph Memory Maturity Landscape
A["Tool Use\nFrameworks"] -->|"Very Mature"| L1[High]
B["Reasoning\nModels"] -->|"Mature"| L2[High]
C["Orchestration\nSystems"] -->|"Maturing"| L3[Medium]
D["Agent Memory\nSystems"] -->|"Early"| L4[Low]
E["Multimodal\nAgent Memory"] -->|"Very Early"| L5[Very Low]
end

My Take

47 authors is a lot of authors. Surveys with large author lists often suffer from design by committee: too cautious, too comprehensive at the expense of insight, optimized to offend nobody. Memory in the Age of AI Agents partially succumbs to this. There are sections that read like an exhaustive taxonomy when what you really want is a sharp argument about what matters most.

That said, I'm glad this exists. The lack of shared vocabulary has been a real problem in this space. Researchers publishing about "episodic memory" and "experience replay" and "experience-based learning" are often talking about closely related things without knowing it, because they haven't read each other's work. Surveys like this one create the common ground for genuine intellectual progress.

The three-lens framework (forms, functions, dynamics) is genuinely useful. I've started using it when evaluating agent memory designs, and it helps me ask better questions faster.

My strongest criticism: the paper is stronger on diagnosis than prescription. It maps the landscape well but doesn't give you a clear sense of which research directions are most likely to pay off. The "emerging areas" section points at the right problems but doesn't help you prioritize.

If you work in agentic AI — building systems, designing architectures, or doing research — this is required reading. Not because it gives you answers, but because it gives you the right questions.

Further Reading

- arXiv: 2512.13564

- Related: AgeMem (2601.01885) for RL-based memory management

- Related: Hindsight is 20/20: Building Agent Memory (2512.12818) for a practical perspective

- Related: Memoria framework (2512.12686) for a scalable implementation