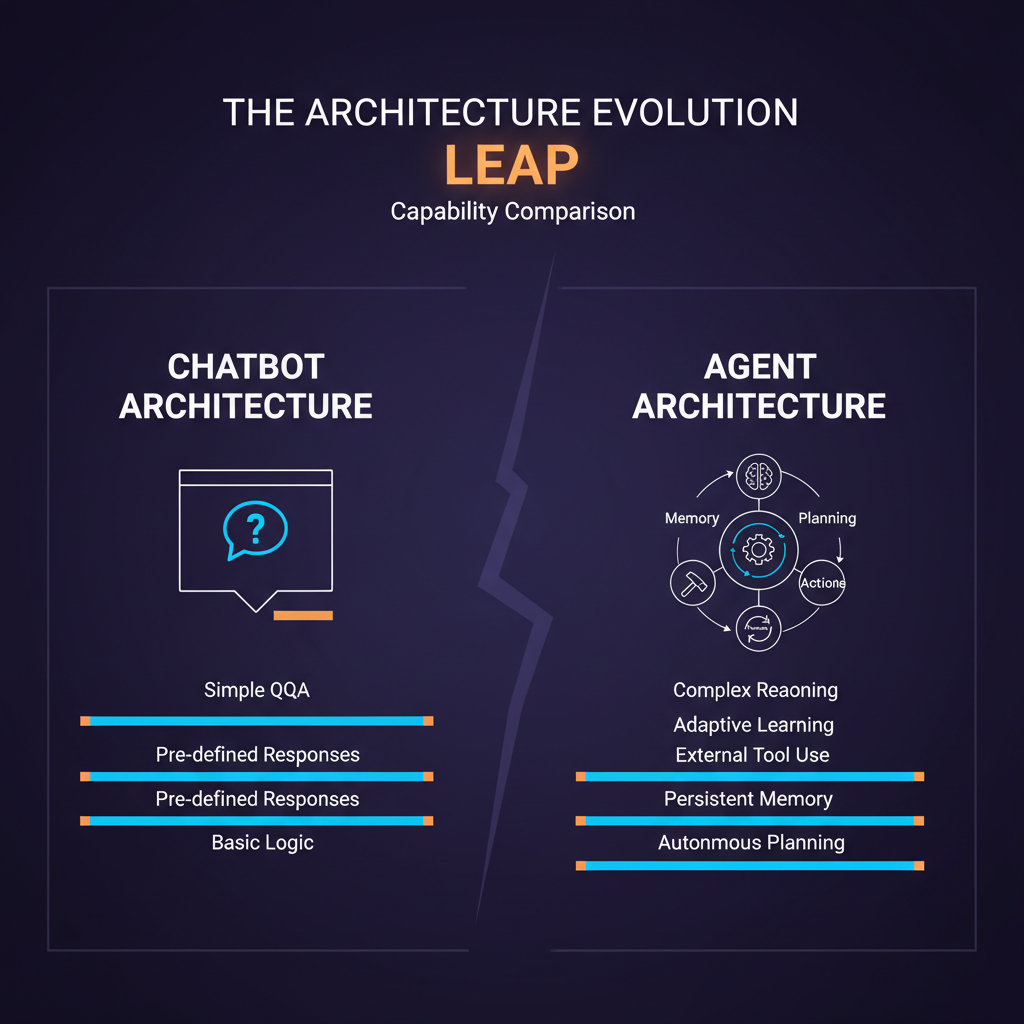

In 2022, the cutting edge of deployed AI was a chatbot that could answer questions, draft text, and engage in conversation. In 2026, the cutting edge is an agent that can write code, browse the web, manage files, call APIs, coordinate with other agents, and pursue multi-step goals across hours or days without human intervention at every step.

These are not the same technology scaled up. They are fundamentally different architectures with different properties, different failure modes, and different engineering requirements. Understanding the architectural leap — not just the marketing narrative around it — is essential for anyone building production AI systems.

The Chatbot Architecture

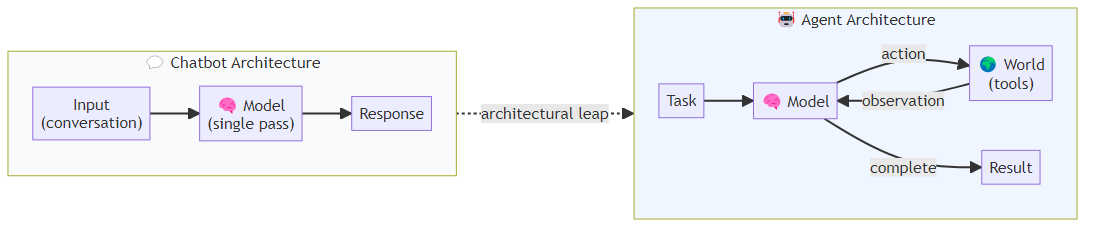

A chatbot, in the technical sense, is a function: it takes a conversation history as input and produces the next message as output. All of the system's "intelligence" happens inside a single model call. Within that call, the model attends to everything it has seen in the conversation, generates a token distribution, samples from it, and repeats token by token until it produces an end-of-sequence token. Input goes in, a response comes out; that is the full execution model.
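To make the "chatbot as a function" framing concrete, here is a minimal sketch. The names `chatbot_turn` and `echo_model` are illustrative assumptions, and `echo_model` is only a stand-in for a real model call, not any vendor's API.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]  # e.g. {"role": "user", "content": "hello"}


def chatbot_turn(
    history: List[Message],
    generate: Callable[[List[Message]], str],
) -> Message:
    """Map a conversation history to the next assistant message.

    Nothing outside the return value changes: no tools, no files, no state.
    """
    return {"role": "assistant", "content": generate(history)}


def echo_model(history: List[Message]) -> str:
    """Hypothetical stand-in for a language model call."""
    return "You said: " + history[-1]["content"]


history = [{"role": "user", "content": "hello"}]
reply = chatbot_turn(history, echo_model)
```

Because the function only reads its input and returns a value, any number of these calls can run in parallel without coordinating with each other.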

This architecture has a beautiful simplicity. Each call is stateless — you can run a million chatbot queries in parallel without any shared state. The model does not remember previous conversations (unless you add memory explicitly). It does not take actions in the world. It generates text, and that text is either helpful or not.

The failure modes are correspondingly simple: the model produces unhelpful text, incorrect text, or (with poorly aligned models) harmful text. These are bad, but they are bounded. A chatbot cannot accidentally delete your files, send an email you did not want sent, or commit code that breaks production. Its blast radius is limited to the words it produces.

What Changes in an Agent Architecture

An agent architecture wraps the same language model in a fundamentally different execution loop. The model is no longer generating a single response — it is generating actions that are executed in the world, observing the results, and generating the next action based on what it observed. At each iteration, the model decides what to do next based on its current understanding of the task and the accumulated observations from prior steps. The loop continues until the agent determines the task is complete or a hard limit is reached.
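The loop described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: `decide` stands in for the model, actions are plain `(tool_name, argument)` pairs, and the special tool name `"finish"` is a convention invented for this example.

```python
from typing import Callable, Dict, List, Optional, Tuple

Action = Tuple[str, str]  # (tool_name, argument); "finish" ends the loop


def run_agent(
    task: str,
    decide: Callable[[str, List[str]], Action],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> Tuple[Optional[str], List[str]]:
    """Act, observe, and decide again until done or the step limit is hit."""
    observations: List[str] = []
    for _ in range(max_steps):
        tool_name, arg = decide(task, observations)
        if tool_name == "finish":
            return arg, observations
        # Execute the action in the world and record what came back.
        observations.append(tools[tool_name](arg))
    return None, observations  # hard limit reached without finishing


def scripted_decide(task: str, observations: List[str]) -> Action:
    """Hypothetical policy standing in for the model's decision."""
    if not observations:
        return ("read_file", "config.yaml")
    return ("finish", "found: " + observations[-1])


tools = {"read_file": lambda path: f"contents of {path}"}
result, obs = run_agent("inspect config", scripted_decide, tools)
```

The only structural difference from a single chatbot call is the loop and the `tools` mapping, yet those two additions are where every new failure mode comes from.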

This is the ReAct pattern (Yao et al., 2022) — Reasoning and Acting — and it is the foundation of nearly every modern agent architecture. The key differences from the chatbot pattern:

Tools. The agent has a set of tools it can invoke: web search, code execution, file read/write, API calls, database queries, browser control. Each tool call is an action that affects the world. The tool returns an observation that updates the agent's context.

State persistence. The current state of the task — what has been done, what was discovered, what still needs to be done — persists across tool calls. The model is not answering a single question; it is maintaining working memory about an ongoing task.

Multi-step execution. The agent does not produce a single output and stop. It iterates until it determines the task is complete (or until a hard limit is reached). A complex coding task might require 20-30 tool calls across multiple files before the task is complete.

External side effects. This is the architectural difference with the largest implications. When an agent calls a tool, it causes things to happen outside the model. Files are modified. APIs are called. Code is executed. Emails might be sent. These effects exist independently of the language model and persist after the task completes.

The New Engineering Surface Area

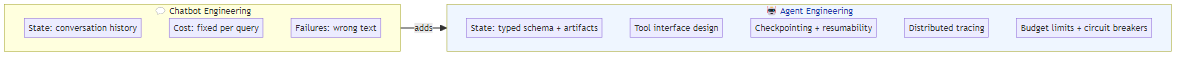

This architectural shift creates an entirely new engineering surface area that does not exist in chatbot systems.

State management. In a chatbot, state is just the conversation history: a list of messages. In an agent, state includes the current task, the results of every tool call, the intermediate artifacts produced, and metadata about the execution (elapsed time, error counts, budget remaining). This state must be designed, typed, and carefully managed. Poor state management is a frequent cause of agent failures in complex workflows.
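One way to make agent state "designed and typed" is a small dataclass. The field names below are assumptions chosen to mirror the list above, not a schema from any particular framework.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class ToolResult:
    tool: str
    ok: bool
    output: Any


@dataclass
class AgentState:
    task: str
    tool_results: List[ToolResult] = field(default_factory=list)
    artifacts: Dict[str, str] = field(default_factory=dict)  # name -> content
    error_count: int = 0
    budget_remaining: float = 1.0  # illustrative unit, e.g. dollars

    def record(self, result: ToolResult, cost: float) -> None:
        """Single entry point for updating state after a tool call."""
        self.tool_results.append(result)
        self.budget_remaining -= cost
        if not result.ok:
            self.error_count += 1


state = AgentState(task="summarize repo")
state.record(ToolResult("search", ok=False, output="timeout"), cost=0.25)
```

Routing every update through one method such as `record` keeps the bookkeeping (errors, budget) from drifting out of sync with the tool history.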

Tool interface design. The tools available to an agent determine what it can accomplish and what it can break. Each tool needs a clear schema, predictable error behavior, and appropriate permission scoping. A poorly designed tool interface — with ambiguous parameters, inconsistent error formats, or excessive permissions — creates a combinatorial surface for agent failures.
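A sketch of what a clear schema, predictable errors, and permission scoping might look like in practice. The `Tool` type, the scope string `"fs:read"`, and the in-memory `FAKE_FS` are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet


@dataclass(frozen=True)
class Tool:
    name: str
    params: Dict[str, type]      # required parameter names and their types
    permissions: FrozenSet[str]  # scopes the tool needs in order to run
    fn: Callable[..., Any]


def call_tool(tool: Tool, args: Dict[str, Any], granted: FrozenSet[str]) -> Dict[str, Any]:
    """Always return {"ok": bool, ...} so the agent sees one error format."""
    missing = tool.permissions - granted
    if missing:
        return {"ok": False, "error": "permission_denied", "detail": sorted(missing)}
    if set(args) != set(tool.params):
        return {"ok": False, "error": "bad_arguments", "detail": sorted(tool.params)}
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            return {"ok": False, "error": "bad_arguments", "detail": [key]}
    try:
        return {"ok": True, "result": tool.fn(**args)}
    except Exception as exc:  # convert any tool crash into the same shape
        return {"ok": False, "error": "tool_failed", "detail": [str(exc)]}


FAKE_FS = {"notes.txt": "hello"}  # stand-in for a real filesystem
read_file = Tool("read_file", {"path": str}, frozenset({"fs:read"}),
                 lambda path: FAKE_FS[path])

ok = call_tool(read_file, {"path": "notes.txt"}, frozenset({"fs:read"}))
denied = call_tool(read_file, {"path": "notes.txt"}, frozenset())
```

The design choice worth noting is that permission checks, argument validation, and exception handling all live in the wrapper, so individual tools cannot accidentally invent their own error format.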

Execution context and recovery. Chatbots are stateless; if a request fails, you retry from the beginning. Agents are stateful; if a long-running agent execution fails at step 15 of 20, you need to be able to resume from step 15 (if the previous steps were successful) or roll back and retry (if they were not). This requires checkpoint infrastructure that has no analog in chatbot systems.
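A minimal sketch of resume-from-checkpoint, assuming each step's output is JSON-serializable and that steps before the failure do not need to be rolled back. The function name and the file layout are illustrative assumptions; a production system would more likely use a database or a workflow engine.

```python
import json
import os
from typing import Callable, List


def run_with_checkpoints(steps: List[Callable[[], str]], path: str) -> List[str]:
    """Run steps in order, saving completed outputs so a rerun resumes."""
    done: List[str] = []
    if os.path.exists(path):
        with open(path) as f:
            done = json.load(f)  # outputs of steps that already succeeded

    for i in range(len(done), len(steps)):
        done.append(steps[i]())  # may raise; earlier outputs are already on disk
        tmp = path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(done, f)
        os.replace(tmp, path)    # atomic swap so a crash never leaves a torn file
    return done
```

If step 15 raises, calling `run_with_checkpoints` again with the same `path` skips steps 1 through 14 and retries from step 15. Rolling back steps whose side effects were wrong is a separate problem that this sketch does not address.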

Observability and tracing. In a chatbot, you log the input and output. In an agent, you need to trace every tool call, every state transition, and every intermediate observation. Without this, debugging agent failures is extremely difficult. Tools like LangSmith, Langfuse, and Weights & Biases Weave provide agent-specific tracing, but they require deliberate instrumentation.
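To show what "deliberate instrumentation" means at its simplest, here is a plain-Python decorator that records one span per tool call. It is an in-memory stand-in and deliberately does not use the LangSmith, Langfuse, or Weave APIs; `TRACE`, `traced`, and `search` are names invented for this sketch.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in a real system this would go to a tracing backend


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record name, inputs, outcome, and duration of every call to fn."""
    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        span: Dict[str, Any] = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            span["output"] = fn(*args, **kwargs)
            span["status"] = "ok"
            return span["output"]
        except Exception as exc:
            span["status"] = "error"
            span["error"] = repr(exc)
            raise  # tracing must not swallow the failure
        finally:
            span["duration_s"] = time.perf_counter() - start
            TRACE.append(span)
    return wrapper


@traced
def search(query: str) -> str:
    """Hypothetical tool."""
    return f"results for {query}"
```

Failed calls are recorded too, which matters because the failing step is usually the one you most need to see when reconstructing what an agent did.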

Budget and resource management. Chatbots have a bounded, predictable cost per query. Agents have variable, potentially unbounded costs: a poorly scoped task can result in an agent that calls expensive APIs indefinitely. Hard limits on iterations, tool calls, time, and cost are not optional in production agent systems.
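Hard limits are easiest to enforce when they live in one object that every tool call must pass through. The sketch below assumes the caller can estimate a per-call cost up front; the class and field names are illustrative.

```python
import time
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    pass


@dataclass
class Budget:
    max_tool_calls: int
    max_cost_usd: float
    max_seconds: float
    tool_calls: int = 0
    cost_usd: float = 0.0
    started_at: float = field(default_factory=time.monotonic)

    def charge(self, cost_usd: float) -> None:
        """Call before each tool call; raises instead of letting a limit be crossed."""
        if self.tool_calls + 1 > self.max_tool_calls:
            raise BudgetExceeded("tool call limit")
        if self.cost_usd + cost_usd > self.max_cost_usd:
            raise BudgetExceeded("cost limit")
        if time.monotonic() - self.started_at > self.max_seconds:
            raise BudgetExceeded("time limit")
        self.tool_calls += 1
        self.cost_usd += cost_usd


budget = Budget(max_tool_calls=2, max_cost_usd=1.0, max_seconds=60.0)
budget.charge(0.5)
budget.charge(0.25)
```

A third `budget.charge(...)` here would raise `BudgetExceeded`, which the agent loop can catch to stop cleanly and report what it finished.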

The Cognitive Architecture Shift

Beyond the engineering differences, there is a cognitive architecture shift that is easy to underestimate.

A chatbot generates text that represents the model's best single response to a prompt. That response is produced in a single pass, without the ability to course-correct based on feedback from the world.

An agent can generate a hypothesis, test it, observe the result, refine the hypothesis, and iterate. This is closer to how humans approach problem-solving: we rarely answer hard questions from pure reasoning alone; we interact with the world, gather information, update our understanding, and act again.

The ReAct pattern formalizes this as explicit reasoning traces in which the model articulates its current understanding, specifies an action to take, and then incorporates the observation back into its reasoning before deciding on the next step. A concrete example: an agent debugging a repository first reasons about where to look, reads the relevant configuration file, updates its understanding based on what it finds, then moves to the next file — and so on until it has gathered enough context to identify and fix the problem. Each step builds on the last in a traceable chain.

This explicit reasoning trace is not just a debugging artifact — it is a functional component of the agent's problem-solving. The model "reasons" by generating text about its reasoning, and that reasoning text influences subsequent tool calls. The quality of the reasoning trace is a significant driver of agent performance.

Implications for System Design

The architectural differences between chatbots and agents impose specific design requirements that practitioners need to internalize.

Design for partial failure. Every tool call can fail. Every action can produce an unexpected result. Agent system design requires thinking through partial failure modes: what happens if tool call 7 of 20 fails? Can you retry that tool call? Must you restart from the beginning? Can you skip it and continue? The answer to these questions should be encoded in your system design, not discovered at runtime.
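One way to encode those answers in the design rather than discover them at runtime is to attach an explicit failure policy to every step. The `OnFailure` policies and the `run_plan` helper below are assumptions made for this sketch, not a standard API.

```python
from enum import Enum
from typing import Callable, List, Optional, Tuple


class OnFailure(Enum):
    RETRY = "retry"  # try again up to max_retries, then give up
    SKIP = "skip"    # record the failure and continue with the next step
    ABORT = "abort"  # stop the whole plan immediately


Step = Tuple[str, Callable[[], str], OnFailure]


def run_plan(steps: List[Step], max_retries: int = 2) -> List[Tuple[str, Optional[str]]]:
    results: List[Tuple[str, Optional[str]]] = []
    for name, fn, policy in steps:
        attempts = 1 + (max_retries if policy is OnFailure.RETRY else 0)
        for attempt in range(attempts):
            try:
                results.append((name, fn()))
                break
            except Exception:
                if attempt + 1 < attempts:
                    continue  # retry budget left for this step
                if policy is OnFailure.SKIP:
                    results.append((name, None))  # None marks a skipped step
                    break
                raise  # ABORT, or RETRY with retries exhausted
    return results
```

With this shape, "what happens if tool call 7 of 20 fails?" is answered by reading the plan, since each step declares its own policy.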

Treat agents as distributed systems. An agent that coordinates multiple tool calls across multiple services is a distributed system in everything but name. The failure modes of distributed systems — partial failures, inconsistent state, timeouts, race conditions — all apply. Apply distributed systems engineering practices: idempotent operations, explicit retries with backoff, transactional semantics for operations that must succeed together.
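Idempotency and retries with backoff work as a pair: retrying is only safe when a repeated call cannot repeat the side effect. The sketch below uses a hypothetical `EmailService` that deduplicates on an idempotency key and simulates a lost acknowledgement. Jitter is omitted for brevity, and the key format is an assumption.

```python
import time
from typing import Callable, Dict


def retry_with_backoff(
    fn: Callable[[], str],
    attempts: int = 4,
    base_delay: float = 0.01,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Retry fn on ConnectionError, doubling the delay after each failure."""
    for attempt in range(attempts):
        try:
            return fn()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
    raise RuntimeError("attempts must be at least 1")


class EmailService:
    """Hypothetical side-effecting service that deduplicates by key."""

    def __init__(self) -> None:
        self.sent: Dict[str, str] = {}
        self._drop_next_ack = True

    def send(self, idempotency_key: str, body: str) -> str:
        if idempotency_key not in self.sent:
            self.sent[idempotency_key] = body  # the side effect happens once
        if self._drop_next_ack:
            self._drop_next_ack = False
            # The email went out, but the caller sees a failure and will retry.
            raise ConnectionError("ack lost")
        return "delivered"


svc = EmailService()
status = retry_with_backoff(lambda: svc.send("task-42-step-3", "hi"))
```

Without the idempotency key, the retry after the lost acknowledgement would send the email twice, which is exactly the kind of inconsistent state distributed systems practice exists to prevent.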

Scope tasks tightly. The single most effective practice for improving agent reliability is reducing the scope of what you ask any single agent to do. A monolithic agent tasked with "build this feature end to end" fails in more ways and more often than three coordinated agents each responsible for a well-defined slice of the work. Decompose aggressively.

The blast radius principle. Every agent deployment should have an explicit answer to: "What is the worst thing this agent can do if it malfunctions?" That answer should inform your permission scoping, your monitoring, and your human oversight strategy. If the blast radius is large, the autonomy should be small. This is not a temporary constraint — it is a design principle.
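The rule "if the blast radius is large, the autonomy should be small" can be expressed as a simple authorization gate. The three radius levels and the tool names here are illustrative assumptions; real deployments will need a finer-grained classification.

```python
from enum import IntEnum


class BlastRadius(IntEnum):
    READ_ONLY = 0     # cannot change anything
    REVERSIBLE = 1    # changes can be undone (e.g. a file under version control)
    IRREVERSIBLE = 2  # cannot be undone (e.g. an email that has been sent)


# Hypothetical classification of tools by worst-case effect.
TOOL_RADIUS = {
    "search_web": BlastRadius.READ_ONLY,
    "write_file": BlastRadius.REVERSIBLE,
    "send_email": BlastRadius.IRREVERSIBLE,
}


def authorize(tool: str, max_autonomous: BlastRadius, approved_by_human: bool = False) -> str:
    """Return "run" or "needs_approval" for a proposed tool call."""
    # Unknown tools are treated as worst case rather than waved through.
    radius = TOOL_RADIUS.get(tool, BlastRadius.IRREVERSIBLE)
    if radius <= max_autonomous:
        return "run"
    return "run" if approved_by_human else "needs_approval"


decision = authorize("send_email", max_autonomous=BlastRadius.REVERSIBLE)
```

Defaulting unclassified tools to the highest radius means a newly added tool requires human approval until someone deliberately decides otherwise.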

What This Architecture Makes Possible

Despite the engineering complexity, the agent architecture enables something that chatbots fundamentally cannot: genuine task completion in the world.

A chatbot can tell you how to fix a bug. An agent can find the bug, write the fix, run the tests to verify it works, and submit a pull request. A chatbot can describe a research methodology. An agent can execute that methodology — searching sources, extracting data, running analyses, and producing a structured report.

This is not a marginal improvement in capability. It is a categorical shift from advisory to executive function. And it is why the transition from chatbots to agents represents, in my view, the most significant architectural development in applied AI since the transformer.

The engineering investment required to do it well — the state management, the tool design, the observability, the safety engineering — is substantial. But it is not optional. The practitioners who master it now will have a head start that compounds over time, because the systems they build will not just answer questions about the world. They will change it.
