The past two years have produced an avalanche of agent demos. A model books a flight. Another debugs a thousand-line codebase. A third orchestrates a multi-step research synthesis that would take a PhD student a week. Watching these demonstrations, it is tempting to conclude that autonomous agents have arrived — that the remaining work is just engineering polish.

It has not. And I want to be precise about why.

What Agents Actually Are

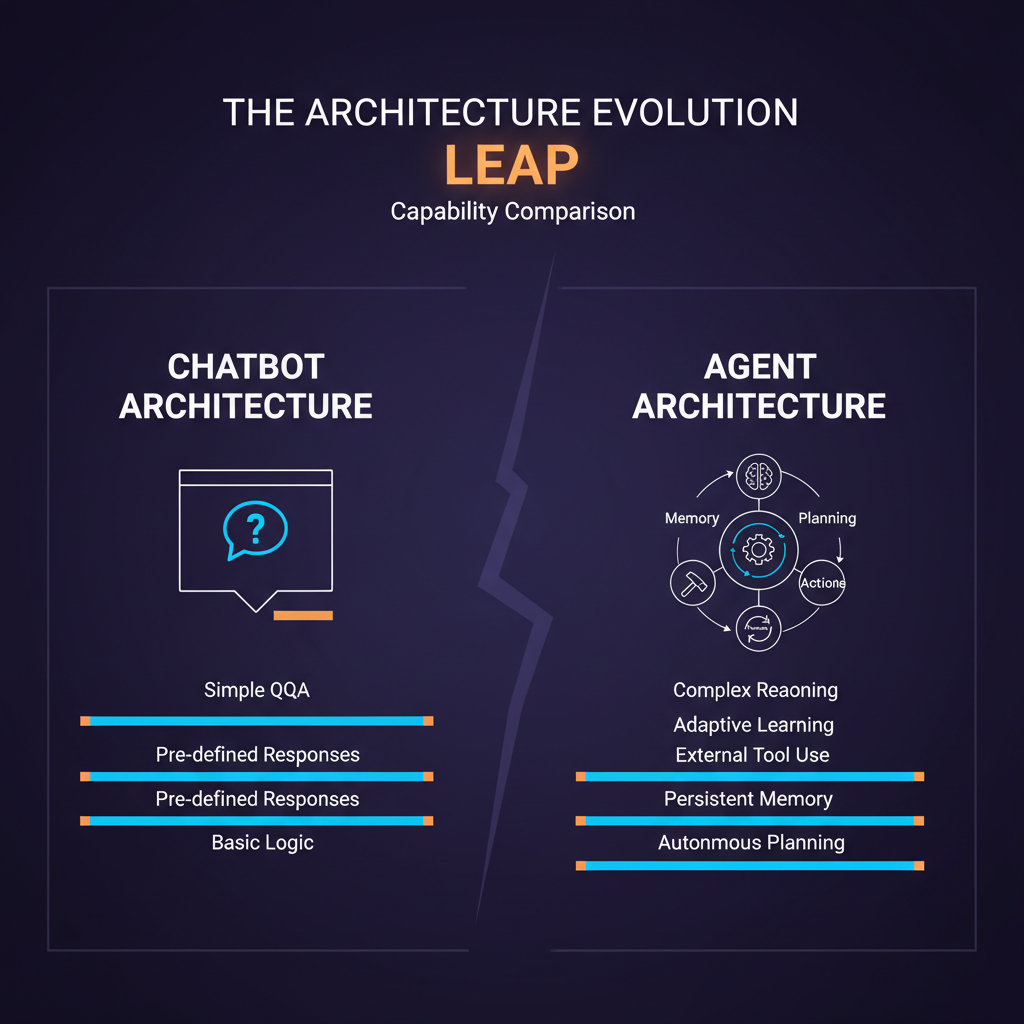

Before evaluating capability, we need a crisp definition. An autonomous agent, in the technical sense, is a system that perceives its environment, maintains some form of state or memory, selects actions from a set of available tools, and pursues a goal across multiple steps without requiring human intervention at each step.

The key word is autonomous. A chatbot that answers a single question is not an agent. A pipeline that calls three APIs in a fixed sequence is not an agent. What makes a system an agent is the ability to decide which actions to take, in which order, based on what it observes — and to adapt that plan when things go wrong.

Modern LLM-based agents combine several components: a foundation model as the reasoning core, a set of tools (web search, code execution, file I/O, API calls), a memory system (in-context, episodic, or vector-based), and an orchestration layer that manages the action-observation loop. Frameworks like LangGraph, CrewAI, and AutoGen have made assembling these components substantially easier, which is why agent deployments have accelerated.

What Agents Do Well

The honest list of genuine agent capabilities is actually impressive.

Code generation and modification. On SWE-bench Verified — a benchmark of real GitHub issues requiring multi-file code changes — top agents now resolve more than 50% of issues that stumped the original developers. Systems like SWE-agent, Devin, and Claude's agentic coding mode demonstrate that agents can navigate real codebases, understand failure modes from test output, and iterate toward working solutions.

Information synthesis. Agents with access to web search and document retrieval can synthesize across dozens of sources in minutes, producing structured outputs (comparative tables, literature reviews, decision memos) that would take humans hours. The quality is uneven, but the throughput is real.

Workflow automation. Repetitive multi-step tasks — extracting data from PDFs, reformatting outputs for downstream systems, monitoring conditions and triggering responses — are where agents deliver the most reliable value today. The task graph is known, the tools are stable, and the failure modes are bounded.

Tool use and API orchestration. Given a well-documented API and a clear goal, modern agents can compose multi-step API calls, handle pagination, retry on errors, and extract relevant fields from complex response structures. This is genuinely useful for integration tasks.

Where Agents Consistently Fail

The failures are as instructive as the successes, and they cluster in predictable places.

Long-horizon planning. As task length increases, error accumulates. An agent might make a subtly wrong assumption at step 3 of a 20-step task, and by step 15 it is pursuing a coherent but incorrect goal. This is the compounding error problem. Benchmarks like AgentBench and GAIA show steep performance drops as task complexity grows. Current best systems solve roughly 75% of easy GAIA questions but drop to under 40% on hard ones.

Reliable tool use under adversarial conditions. When APIs return unexpected formats, when web pages change structure, when file systems have unexpected permissions — agents fail in ways that are hard to predict and harder to diagnose. The brittleness is real and it scales with the number of tools and the heterogeneity of the environment.

Self-correction without human feedback. Agents can self-critique, but they are not reliably good at knowing when to self-critique. A model that is confidently wrong will often generate a confident self-critique that arrives at the same wrong conclusion. This is a fundamental limitation of using the same reasoning system for generation and evaluation.

World models and physical constraints. Agents operating in domains with physical or regulatory constraints — medical, legal, financial — tend to violate constraints in subtle ways. They optimize for the stated goal without fully representing the constraint space. This is not a data problem; it is an architecture problem.

Memory and context management. Long-running agents that accumulate context eventually hit limits — either literal context window limits or the more insidious problem of earlier information being down-weighted in attention. Episodic memory systems help, but they introduce their own retrieval failures.

The Evaluation Problem

One reason capability assessments vary so widely is that evaluation is hard. SWE-bench is the gold standard for software engineering tasks, but it has known distribution issues — the test set skews toward certain types of bugs and certain codebases. AgentBench covers a broader range of environments (web browsing, database, OS) but scores on AgentBench have not correlated cleanly with real-world performance.

GAIA, introduced by researchers at Meta and HuggingFace, uses tasks that require genuine multi-step reasoning with tool use, verified by humans. It is harder to game than benchmarks with fixed answer keys, which makes it a better signal — but even GAIA does not capture the distribution of tasks you actually care about for your specific deployment.

The deeper problem: current benchmarks measure success on complete tasks. They do not measure the probability that an agent takes a catastrophic action on the way to a failed task. For production deployment, that second number matters at least as much as the first.

A Framework for Honest Capability Assessment

When I evaluate whether an agent system is ready for a use case, I ask five questions:

- Is the task graph bounded? Tasks with a known, finite set of possible action sequences are far more tractable than open-ended exploration tasks.

- Are the failure modes recoverable? Sending a malformed API request is recoverable. Deleting production data is not. The reversibility of actions should determine how much autonomy you grant.

- Is there a ground truth signal? Code either passes tests or it does not. Research summaries do not have a pass/fail criterion. Ground truth signals enable reliable self-correction.

- What is the cost of a confident wrong answer? Agents fail confidently. If a confident wrong answer is catastrophic, you need human checkpoints regardless of agent capability.

- How stable is the environment? Agents trained on today's web structure degrade as websites change. Stable, versioned APIs are more tractable than the open web.

What the Next 12 Months Will Bring

Based on current research trajectories, I expect meaningful progress on: better uncertainty quantification (agents that know when they are guessing), improved long-context memory via external store architectures, and more reliable tool use through formal tool schemas and verification. I do not expect near-term breakthroughs on genuine world modeling or robust self-correction without ground truth signals.

The practical implication: the agents that will deliver value in 2026 are narrowly scoped, well-monitored, operating in reversible environments, and augmenting human decision-making rather than replacing it. That is not a pessimistic conclusion — it is a design principle.

Build agents that are useful given their current capabilities, not agents that would be useful if the capabilities were better. The gap between those two is where most agent projects fail.

Explore more from Dr. Jyothi