The pitch is always the same: "Our AI agent handles 80% of customer queries automatically." "Our agent processes invoices 10x faster than human teams." "Our agent writes code that passes review 60% of the time."

The reality is often different. Not because the vendors are lying — but because AI agent ROI is genuinely hard to achieve, and the path from demo to production is full of failure modes that silently erode value.

Here's the honest framework.

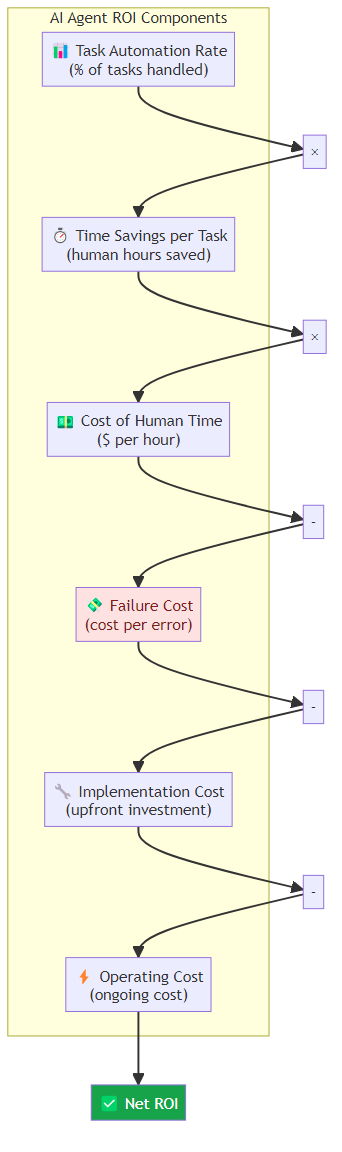

The ROI Equation for AI Agents

AI agent value = (Task volume × Task automation rate × Time savings per task × Cost of time) - (Implementation cost + Operating cost + Failure cost)

The three variables that drive ROI:

- Task automation rate: What percentage of target tasks does the agent handle successfully?

- Time savings per task: How much human time does the agent save per automated task?

- Failure cost: What's the cost when the agent gets something wrong?

Every vendor emphasizes the first two. Almost none discuss the third honestly.
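To make the equation concrete, here is a minimal Python sketch. The field names and the sample numbers are hypothetical, chosen only for illustration. Task volume is spelled out as its own term so the benefit side and the cost side are in the same units, and failure cost is modeled as a failure count times an average cost per failure, which is one reasonable assumption among several.

```python
from dataclasses import dataclass


@dataclass
class AgentRoiInputs:
    # All fields are illustrative names, not terms defined by any vendor.
    tasks_per_period: int        # target tasks arriving in the period
    automation_rate: float       # share handled successfully by the agent (0 to 1)
    hours_saved_per_task: float  # human time saved per automated task
    hourly_cost: float           # cost of one hour of that human time
    implementation_cost: float   # build/buy and integration cost for the period
    operating_cost: float        # inference, hosting, maintenance for the period
    failures_per_period: int     # agent errors that had to be repaired
    cost_per_failure: float      # average cost to detect and repair one failure


def agent_value(x: AgentRoiInputs) -> float:
    """Gross benefit minus implementation, operating, and failure costs."""
    benefit = (x.tasks_per_period * x.automation_rate
               * x.hours_saved_per_task * x.hourly_cost)
    failure_cost = x.failures_per_period * x.cost_per_failure
    return benefit - (x.implementation_cost + x.operating_cost + failure_cost)


# Hypothetical inputs: 10,000 tasks, 80% automated, 6 minutes saved per task.
example = AgentRoiInputs(
    tasks_per_period=10_000, automation_rate=0.8,
    hours_saved_per_task=0.1, hourly_cost=40.0,
    implementation_cost=10_000.0, operating_cost=5_000.0,
    failures_per_period=160, cost_per_failure=50.0,
)
value = agent_value(example)
```

With these made-up inputs the value comes out to roughly 9,000. Raise `cost_per_failure` from 50 to 200 and the same deployment loses about 15,000, even though the automation rate and time savings have not moved.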

Where the ROI Is Real

High-Precision, High-Volume Tasks

The clearest ROI cases are tasks that are:

- High volume (thousands of executions per day)

- High precision (right/wrong is clearly measurable)

- Low variation (the same pattern repeated with minor variations)

Examples:

- Invoice processing: extract structured data from unstructured documents, validate against rules, route for approval. At scale, the time savings are substantial.

- Customer intent classification: route support tickets to the right team, classify urgency, surface relevant context. The agent doesn't answer — it prepares humans to answer faster.

- Data entry and migration: move data between systems, standardize formats, validate consistency. Boring work that humans hate and execute inconsistently.

For these tasks, automation rates of 70-85% are achievable. Time savings of 60-80% per task are real. ROI is positive within 3-6 months.

Research and Synthesis at Scale

AI agents can synthesize information from dozens of sources in minutes — a task that takes human researchers hours. For market research, competitive intelligence, and technical due diligence, the time savings compound.

The key: the agent isn't replacing the researcher. It's handling the data gathering and initial synthesis, letting the researcher focus on interpretation and judgment. The ROI is in researcher leverage — one researcher doing the work of three.

Code Review and Testing

Agents that review code for bugs, security issues, and style violations can process every PR automatically, flagging issues for human review. Developers spend less time on repetitive review comments.

Agents that generate tests from code coverage requirements can substantially reduce the manual test-writing burden. The agent doesn't replace the test engineer — it handles the boilerplate so the engineer focuses on edge cases.

Where the ROI Is Overhyped

Open-Ended Customer Service

"80% automation rate" for customer service is a dangerous claim. Customer queries are diverse, emotionally charged, and frequently involve edge cases that agents handle poorly.

The automation rate might be 80% by volume, but that 80% is the easy queries. The remaining 20% (the complex disputes, the emotionally distressed customers, the novel situations) often require 5x more human effort to resolve than they would have without the agent, because the agent has already created expectations or made partial commitments.

True ROI in customer service requires careful segmentation: which query types does the agent handle well, and which require escalation? Automate the clear wins, hand off the rest cleanly.
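A rough worked example shows why the handoff matters so much. This is a sketch under simplifying assumptions: every query is given the same hypothetical baseline handling time (in practice the hard ones cost more), and the 5x figure is treated as a multiplier on that baseline for escalated queries. The function name and all numbers are illustrative.

```python
def human_minutes(total_queries: int, automated_queries: int,
                  minutes_per_query: float,
                  escalation_multiplier: float = 1.0) -> float:
    """Human handling time for the queries the agent does not resolve."""
    escalated = total_queries - automated_queries
    return escalated * minutes_per_query * escalation_multiplier


# Hypothetical numbers: 1,000 queries at 6 minutes each with no agent.
baseline = human_minutes(1000, 0, 6.0)
# 80% automated, but each escalation costs 5x the baseline handling time.
messy_handoff = human_minutes(1000, 800, 6.0, escalation_multiplier=5.0)
# Same automation rate, with clean handoffs that keep escalations at 1.5x.
clean_handoff = human_minutes(1000, 800, 6.0, escalation_multiplier=1.5)

savings_messy = 1 - messy_handoff / baseline
savings_clean = 1 - clean_handoff / baseline
```

Under these assumptions the messy handoff saves nothing: 200 escalations at 30 minutes each cost the same 6,000 minutes as handling all 1,000 queries by hand. The clean handoff case saves about 70% at the identical automation rate.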

Autonomous Decision-Making

Agents that make financial decisions, approve transactions, or commit resources without human oversight have a different risk profile than automation-rate metrics suggest.

The failure cost isn't just the wrong decision — it's the erosion of trust, the regulatory exposure, and the operational complexity of managing exceptions. A 95% accuracy rate sounds great until you realize the 5% failures are disproportionately expensive.
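The asymmetry is easy to quantify with an expected-value sketch. The function names and dollar figures below are hypothetical, and the model assumes each decision is either fully right or fully wrong, which real decisions rarely are.

```python
def expected_value_per_decision(accuracy: float, gain_when_right: float,
                                loss_when_wrong: float) -> float:
    """Average value of one autonomous decision at a given accuracy."""
    return accuracy * gain_when_right - (1 - accuracy) * loss_when_wrong


def break_even_loss_ratio(accuracy: float) -> float:
    """How many times larger than the gain a failure can be before
    the expected value of a decision turns negative."""
    if not 0 <= accuracy < 1:
        raise ValueError("accuracy must be in [0, 1)")
    return accuracy / (1 - accuracy)


ratio_at_95 = break_even_loss_ratio(0.95)
# Hypothetical: each correct decision saves $10 of analyst time.
cheap_failures = expected_value_per_decision(0.95, 10.0, 150.0)
costly_failures = expected_value_per_decision(0.95, 10.0, 400.0)
```

At 95% accuracy the break-even ratio is about 19: once a failure costs more than roughly 19 times what a correct decision saves, every decision loses money on average. In the example, a $150 failure still leaves about $2 of value per decision, while a $400 failure turns it into a loss of about $10.50.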

For high-stakes decisions, the right pattern is augmentation, not autonomy: the agent prepares the analysis, surfaces the options, flags the risks. The human makes the call.

Creative Work

AI agents can generate marketing copy, product descriptions, and first-draft content. The time savings are real. But the quality variance is high, and every "automation" requires human review and editing.

The effective time savings are typically 30-40%, not 70-80%. The agent handles first drafts; humans handle refinement. This is still valuable — it reduces the cost of first drafts substantially — but it's not the 10x productivity claim that gets sold.

The Adoption Barriers That Kill ROI

ROI projections almost always assume successful adoption. In practice, adoption is where enterprise AI agents fail.

Change Management Friction

Introducing AI agents into workflows requires changing how people work. Not just "use this new tool" — changing established processes, rethinking how humans and AI collaborate, developing new trust patterns.

Change management takes months. It's expensive. And it often fails when middle management isn't bought in.

The failure mode: the technology works, the pilot shows promise, but adoption outside the pilot team stalls at 15%. The agent handles a small fraction of the intended volume, so ROI never materializes.

Integration Complexity

AI agents don't exist in isolation. They need to connect to existing systems — CRM, ERP, communication tools, knowledge bases. Integration is underestimated in every initial ROI projection.

A realistic timeline: 3 months to integrate a simple agent, 6-12 months for complex integrations. During the integration period, the agent is partially operational at best, and ROI is negative.
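A simple cash-flow sketch shows how integration time and adoption together move the break-even point. Everything here is an assumption made for illustration: the function name, the cost figures, and the simplification that the agent delivers no benefit at all until integration finishes.

```python
from typing import Optional


def break_even_month(upfront_cost: float, monthly_operating_cost: float,
                     monthly_benefit_at_full_adoption: float,
                     integration_months: int, adoption_rate: float = 1.0,
                     horizon_months: int = 36) -> Optional[int]:
    """First month where cumulative cash flow is non-negative, else None."""
    cumulative = -upfront_cost
    for month in range(1, horizon_months + 1):
        if month <= integration_months:
            benefit = 0.0  # assumption: no value delivered during integration
        else:
            benefit = monthly_benefit_at_full_adoption * adoption_rate
        cumulative += benefit - monthly_operating_cost
        if cumulative >= 0:
            return month
    return None


# Hypothetical deployment: $60k upfront, $5k/month to run, $25k/month benefit.
simple = break_even_month(60_000, 5_000, 25_000, integration_months=3)
complex_case = break_even_month(60_000, 5_000, 25_000, integration_months=9)
stalled = break_even_month(60_000, 5_000, 25_000, integration_months=3,
                           adoption_rate=0.15)
```

With these numbers the 3-month integration breaks even in month 7 and the 9-month integration in month 15. If adoption stalls at 15%, the monthly benefit no longer covers operating cost and the function returns `None`: the deployment never breaks even within the horizon.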

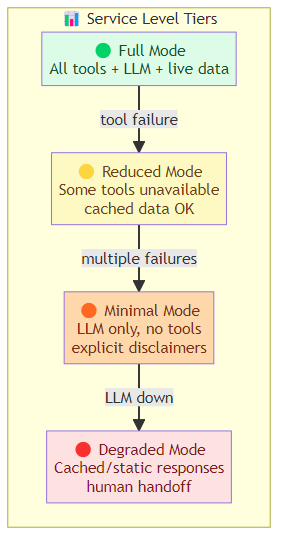

Quality Variance

Agents don't perform consistently. Performance varies by:

- Time of day (load-dependent latency)

- User query complexity

- Upstream system availability

- Model version changes

Users who experience a bad interaction often stop using the system entirely, even if it works well 90% of the time. Managing quality variance is as important as raising the average quality level.

The Framework for Honest ROI Assessment

Before You Buy/Build: Define the Automation Target Precisely

"Customer service" is not an automation target. "Tier-1 support tickets about billing questions with structured invoices and straightforward disputes" is an automation target.

The more precise the target, the more accurate the ROI projection.

During Pilot: Measure the Right Metrics

Track:

- Automation rate by query type: don't just measure overall automation, measure it by category

- Escalation quality: when the agent escalates, is the escalation appropriate (did it correctly identify that it couldn't handle it)?

- Resolution time for automated vs. escalated tasks: how long does the full lifecycle take?

- Human satisfaction scores: do the people who work alongside the agent say it makes them more effective?
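The first metric is the one most often skipped, so here is a minimal sketch of it. The ticket schema (`query_type`, `resolved_by_agent`) and the sample data are hypothetical. Map them onto whatever your ticketing system actually records.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def automation_rate_by_type(tickets: Iterable[Mapping]) -> Dict[str, float]:
    """Share of tickets the agent resolved, computed per query type."""
    totals: Dict[str, int] = defaultdict(int)
    automated: Dict[str, int] = defaultdict(int)
    for ticket in tickets:
        totals[ticket["query_type"]] += 1
        if ticket["resolved_by_agent"]:
            automated[ticket["query_type"]] += 1
    return {qt: automated[qt] / totals[qt] for qt in totals}


# Hypothetical pilot data.
pilot = [
    {"query_type": "billing", "resolved_by_agent": True},
    {"query_type": "billing", "resolved_by_agent": True},
    {"query_type": "billing", "resolved_by_agent": True},
    {"query_type": "billing", "resolved_by_agent": False},
    {"query_type": "dispute", "resolved_by_agent": False},
    {"query_type": "dispute", "resolved_by_agent": False},
]
rates = automation_rate_by_type(pilot)
overall = sum(t["resolved_by_agent"] for t in pilot) / len(pilot)
```

Here the overall rate is 50%, which hides the real picture: 75% on billing questions and 0% on disputes. The per-category view is what tells you where to automate and where to escalate.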

Before Full Rollout: Run a Shadow Period

Before going live, run the agent in shadow mode — it sees all the queries, makes recommendations, but doesn't act. Compare its recommendations against what actually happened.

This tells you: what would the automation rate actually be? What would the failure rate be? What's the risk profile of the agent's decisions?
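A shadow-period log can be scored with a few lines of Python. This sketch assumes a hypothetical record format where `agent_action` is `None` when the agent would have escalated, and it treats the human's action as ground truth, which is itself an assumption worth spot-checking.

```python
from typing import Dict, Optional, Sequence, TypedDict


class ShadowRecord(TypedDict):
    agent_action: Optional[str]  # None means the agent would have escalated
    human_action: str            # what the human actually did


def shadow_report(records: Sequence[ShadowRecord]) -> Dict[str, float]:
    """Would-be automation and failure rates from a shadow-mode log."""
    if not records:
        raise ValueError("no shadow records to score")
    attempted = [r for r in records if r["agent_action"] is not None]
    wrong = [r for r in attempted if r["agent_action"] != r["human_action"]]
    return {
        "automation_rate": len(attempted) / len(records),
        "failure_rate": len(wrong) / len(attempted) if attempted else 0.0,
    }


# Hypothetical log of five queries.
log: list[ShadowRecord] = [
    {"agent_action": "refund", "human_action": "refund"},
    {"agent_action": "refund", "human_action": "deny"},
    {"agent_action": "resend_invoice", "human_action": "resend_invoice"},
    {"agent_action": "update_address", "human_action": "update_address"},
    {"agent_action": None, "human_action": "escalate_to_legal"},
]
report = shadow_report(log)
```

In this toy log the agent would have acted on 4 of 5 queries (80%) and disagreed with the human on 1 of those 4 (25%). The disagreements are the records to read by hand, since they show what kind of failures you would be buying.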

At Scale: Continuously Monitor and Recalibrate

ROI isn't static. As the agent handles more volume, new failure modes emerge. As users find workarounds, effective utilization changes. As upstream systems change, quality degrades.

The teams that maintain positive ROI over time are the ones that continuously monitor and recalibrate — treating the agent as a product that needs ongoing investment, not a one-time deployment.
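One simple way to operationalize that monitoring is a drift check against the rate measured at launch. The sketch below is an illustration, not a prescribed method: the function name, the 4-week window, and the 5-point tolerance are all assumptions to tune.

```python
from typing import Sequence


def needs_recalibration(weekly_rates: Sequence[float], baseline: float,
                        tolerance: float = 0.05, window: int = 4) -> bool:
    """True when the recent average automation rate has dropped more
    than `tolerance` below the baseline measured at launch."""
    if len(weekly_rates) < window:
        return False  # not enough data to judge yet
    recent = weekly_rates[-window:]
    return sum(recent) / window < baseline - tolerance


# Hypothetical weekly automation rates after launch at an 80% baseline.
steady = [0.80, 0.81, 0.79, 0.80]
degrading = [0.80, 0.79, 0.78, 0.70, 0.69, 0.68, 0.66]

steady_flag = needs_recalibration(steady, baseline=0.80)
degrading_flag = needs_recalibration(degrading, baseline=0.80)
```

The steady series stays within tolerance, while the degrading one averages about 68% over its last four weeks and trips the check. The same pattern works for failure rate or escalation quality.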

What Good Looks Like

The companies with genuinely positive AI agent ROI share a common pattern: they start with a specific, measurable task, achieve high automation on that task, measure carefully, and expand deliberately.

They're not deploying agents to "transform customer service." They're deploying agents to handle invoice processing for non-PO invoices under $5,000, with clear escalation rules and measurable quality standards.
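A target that precise can be written down as an executable scope rule. This is a minimal sketch: the `Invoice` type, its fields, and the `route` function are hypothetical names, and real escalation rules would cover more than these two conditions.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    amount_usd: Decimal
    has_purchase_order: bool


def in_automation_scope(invoice: Invoice,
                        limit_usd: Decimal = Decimal("5000")) -> bool:
    """Non-PO invoices strictly under the limit are in scope for the agent."""
    return (not invoice.has_purchase_order) and invoice.amount_usd < limit_usd


def route(invoice: Invoice) -> str:
    """Send in-scope invoices to the agent and everything else to a human."""
    return "agent" if in_automation_scope(invoice) else "human"


small_non_po = route(Invoice(Decimal("1200.00"), has_purchase_order=False))
at_limit = route(Invoice(Decimal("5000.00"), has_purchase_order=False))
with_po = route(Invoice(Decimal("1200.00"), has_purchase_order=True))
```

Writing the scope as code forces the boundary questions early. Here an invoice of exactly $5,000 goes to a human because the rule says "under", and that is a decision someone should make on purpose.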

The transformation comes from the accumulation of dozens of these well-defined deployments, not from a single grand initiative.

The Bottom Line

AI agents can deliver genuine ROI in the enterprise. The key is precision:

- Define automation targets precisely, not as broad categories

- Account for failure cost, not just automation rate

- Budget for integration time and change management

- Measure the right metrics, not just the ones vendors highlight

- Treat deployment as an ongoing product investment, not a one-time project

The teams that do this well see 2-4x ROI within 12 months. The teams that buy the 10x productivity claim and deploy broadly see negative ROI as failure costs accumulate.

Precision is the difference.
