Meta buys a robotics startup to bolster its humanoid AI ambitions. Anthropic is reportedly raising at a $900B+ valuation. Sierra raised $950M. OpenAI and Anthropic both launched enterprise joint ventures.

The enterprise AI market is booming — and the buyers are starting to look past the capabilities pitch and ask the harder question: "What does this actually cost us per task, and is that price justified by the value?"

That's the agent cost optimization question. And it's where agentic engineering gets financial.

Where the Money Goes

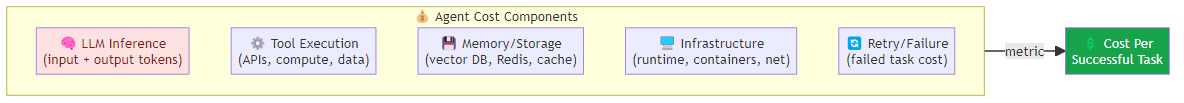

The cost of an agentic workflow isn't just the LLM API bill. It's a multi-component stack:

LLM inference cost: the largest component, driven by input tokens (context loading + prompt) and output tokens (reasoning + responses). Billed per 1M tokens.

Tool execution cost: API calls to external services (web search, code execution, database queries). Can exceed LLM cost for data-intensive tasks.

Memory storage cost: vector store queries, Redis session storage, knowledge graph maintenance. Often underestimated.

Infrastructure cost: compute for the agent runtime, container orchestration, networking. Typically 10-20% of total but scales with concurrency.

Retry and failure cost: failed tasks that get retried multiply the base cost. High failure rates can double or triple effective cost per successful task.

The metric that matters: cost per successful task, not cost per LLM call. A task that succeeds on the first attempt at $0.08 is cheaper than one that fails twice and succeeds on the third, for a total of $0.24 (three attempts at $0.08 each).
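The arithmetic behind that comparison can be sketched in a few lines. The `TaskRun` record and its field names are illustrative assumptions, not part of any particular framework.

```python
from dataclasses import dataclass

@dataclass
class TaskRun:
    """One task, possibly attempted several times (hypothetical record)."""
    attempt_costs: list[float]  # dollars spent on each attempt, in order
    succeeded: bool             # whether the task eventually succeeded

def cost_per_successful_task(runs: list[TaskRun]) -> float:
    """Total spend, including failed attempts, divided by successful tasks."""
    total_spend = sum(sum(r.attempt_costs) for r in runs)
    successes = sum(1 for r in runs if r.succeeded)
    if successes == 0:
        raise ValueError("no successful tasks to amortize cost over")
    return total_spend / successes

first_try = [TaskRun([0.08], True)]
third_try = [TaskRun([0.08, 0.08, 0.08], True)]
```

Both runs end in one success, but the second one carries the spend of two failed attempts, so its cost per successful task is three times higher.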

The Token Efficiency Problem

Most agentic workflows are token-inefficient in ways that are fixable but rarely fixed.

Context Window Waste

The average agentic workflow loads 3-5x more context than necessary. The agent receives:

- Full conversation history (when only last N turns are relevant)

- All tool outputs from the session (when only the final result matters)

- System prompts that are 5x longer than needed (bloated with boilerplate)

Fix: Implement context compression before every LLM call. Summarize old conversation turns, truncate intermediate tool outputs to key facts, trim system prompts to the minimum viable set of instructions.

Redundant Reasoning

Agents often re-reason about information they already know. "Let me check the customer history... [already retrieved 3 steps ago]"

Fix: Maintain a working memory cache of "already known" facts. Before reasoning about a question, check if the answer is in the cache. Only call the LLM if the information isn't cached.
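A minimal sketch of such a cache, assuming facts can be addressed by a string key. The `WorkingMemory` class and the `fetch` callable (standing in for an LLM or tool call) are hypothetical names chosen for illustration.

```python
from typing import Callable

class WorkingMemory:
    """Remembers facts the agent has already established in this session."""

    def __init__(self) -> None:
        self._facts: dict[str, str] = {}
        self.fetches = 0  # how many times we actually paid for a lookup

    def lookup(self, key: str, fetch: Callable[[str], str]) -> str:
        """Return a cached fact, or call `fetch` once and remember the result."""
        if key not in self._facts:
            self.fetches += 1
            self._facts[key] = fetch(key)
        return self._facts[key]

memory = WorkingMemory()
history = memory.lookup("customer_history:42", lambda k: f"fetched {k}")
again = memory.lookup("customer_history:42", lambda k: f"fetched {k}")
```

The second lookup is served from memory, so `memory.fetches` stays at 1.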

Over-Generation

Agents produce verbose responses when concise ones would suffice. A 500-token response where 80 tokens would answer the question costs roughly 6x more in output tokens.

Fix: Add a token budget constraint to the system prompt, such as "Answer in 100 tokens or fewer." Use output validation to reject overly verbose responses and request a rewrite, keeping in mind that each rewrite is itself a paid call.
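One way to wire the budget prompt and the validation step together is sketched below. The `generate` callable is a placeholder for whatever LLM client is in use, and `approx_tokens` is a deliberately crude word count; both are assumptions, and a real tokenizer should replace the estimate.

```python
from typing import Callable

def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words."""
    return len(text.split())

def generate_within_budget(
    generate: Callable[[str], str],  # hypothetical LLM call: prompt -> text
    prompt: str,
    budget: int = 100,
    max_rewrites: int = 1,
) -> str:
    """Ask for a bounded answer and request a rewrite if it runs over."""
    response = generate(f"Answer in {budget} tokens or fewer.\n\n{prompt}")
    for _ in range(max_rewrites):
        if approx_tokens(response) <= budget:
            break
        # Each rewrite is another paid call, so the number of attempts is capped.
        response = generate(
            f"Rewrite the following in {budget} tokens or fewer:\n\n{response}"
        )
    return response
```

Capping `max_rewrites` matters: an unbounded rewrite loop can cost more than the verbose answer it was meant to avoid.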

Model Routing: The Highest-Leverage Optimization

Not every task needs the most capable model. Model routing — directing tasks to the appropriate model based on complexity — is the single highest-leverage cost optimization available.

Task complexity assessment → Route to appropriate model

├── Simple (lookup, classification, formatting) → small/fast model

│ └── Cost: $0.10/1M tokens, latency: 200ms

├── Moderate (tool orchestration, multi-step reasoning) → medium model

│ └── Cost: $2.00/1M tokens, latency: 800ms

└── Complex (novel problem-solving, high-stakes decisions) → large model

  └── Cost: $15.00/1M tokens, latency: 2000ms

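The routing logic can be heuristic (rule-based on task type) or learned (a classifier trained on task outcomes). Both approaches work. The key is measuring the cost of routing errors, because they are asymmetric: a complex task sent to a small model tends to fail and then gets retried or escalated, so you pay more than once and risk a wrong answer, while a simple task sent to a large model only overpays for that one call.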

Benchmark: a well-routed multi-model system typically achieves 60-80% cost reduction vs. a single-model baseline, with <5% quality degradation.
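To see how a figure in that range can arise, here is a back-of-the-envelope calculation using the per-token prices from the tiers above. The traffic mix is an illustrative assumption, not a measurement, and the calculation ignores retries and quality differences.

```python
# Per-1M-token prices from the routing tiers above.
PRICE_PER_M = {"small": 0.10, "medium": 2.00, "large": 15.00}

# Assumed share of tokens handled by each tier (illustrative only).
TRAFFIC_MIX = {"small": 0.50, "medium": 0.30, "large": 0.20}

def blended_price(prices: dict[str, float], mix: dict[str, float]) -> float:
    """Weighted average price per 1M tokens for a routed system."""
    return sum(prices[tier] * share for tier, share in mix.items())

routed = blended_price(PRICE_PER_M, TRAFFIC_MIX)   # about $3.65 per 1M tokens
baseline = PRICE_PER_M["large"]                    # everything on the large model
reduction = 1 - routed / baseline                  # about 0.76
```

With this mix the routed system comes out roughly 76% cheaper than the large-model baseline. Shifting more traffic to the large tier shrinks the saving quickly, which is why the mix is worth measuring rather than assuming.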

Routing Criteria

What makes a task "simple" vs "complex"? The heuristics:

Simple (route to small model):

- Request is < 50 tokens

- Task type is in {lookup, format, classify, summarize}

- No tool calls required

- No multi-step reasoning needed

- Error cost is low (retry is cheap)

Complex (route to large model):

- Request is > 500 tokens or references complex state

- Task type is in {reason, plan, debug, negotiate}

- Requires 3+ tool calls

- Novel domain or edge case

- Error cost is high (wrong answer has real consequences)
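The criteria above translate fairly directly into a rule-based router. In this sketch, any single "complex" signal sends the task to the large model and a task must meet all the "simple" conditions to reach the small one; that precedence is an assumption, as are the `Task` fields and tier names.

```python
from dataclasses import dataclass

SIMPLE_TYPES = {"lookup", "format", "classify", "summarize"}
COMPLEX_TYPES = {"reason", "plan", "debug", "negotiate"}

@dataclass
class Task:
    task_type: str
    request_tokens: int
    expected_tool_calls: int = 0
    high_error_cost: bool = False

def route(task: Task) -> str:
    """Return 'small', 'medium', or 'large' for a task."""
    # Check complex signals first: under-routing is the costlier mistake.
    if (
        task.high_error_cost
        or task.task_type in COMPLEX_TYPES
        or task.request_tokens > 500
        or task.expected_tool_calls >= 3
    ):
        return "large"
    if (
        task.task_type in SIMPLE_TYPES
        and task.request_tokens < 50
        and task.expected_tool_calls == 0
    ):
        return "small"
    # Everything in between goes to the medium tier.
    return "medium"
```

For example, `route(Task("lookup", 20))` returns `"small"`, while the same lookup flagged with `high_error_cost=True` is sent to `"large"`.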

Context Window Optimization

Context is the largest driver of input token cost. Every token in the context window is charged as input.

Progressive Context Loading

Don't load all context upfront. Load the minimum context needed for the current step, then load more as the task progresses.

Step 1: Load user query + recent history (500 tokens) → LLM decides what it needs

Step 2: Load retrieved documents for that decision (2000 tokens) → LLM acts

Step 3: Load tool results (500 tokens) → LLM synthesizes

Compare this with the naive approach of loading everything upfront: 10,000+ tokens on every step, most of which are never used.
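The difference is easy to quantify. The sketch below uses the step sizes listed above and assumes, conservatively, that context loaded in earlier steps stays in the window and is billed again on each later step.

```python
from itertools import accumulate

progressive_loads = [500, 2000, 500]  # new tokens loaded at each step
naive_per_step = 10_000               # everything loaded upfront, resent every step

# Assumption: context accumulates, so each step is billed for all tokens so far.
progressive_billed = sum(accumulate(progressive_loads))  # 500 + 2500 + 3000
naive_billed = naive_per_step * len(progressive_loads)

savings = 1 - progressive_billed / naive_billed
```

Under these assumptions the progressive approach bills 6,000 input tokens against 30,000 for the naive one, an 80% reduction over the three steps.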

Semantic Chunking for Retrieval

When loading from vector stores, chunk size matters. Too large → includes irrelevant context. Too small → loses coherence.

A good starting point: 512-1024 tokens per chunk, with a 50-100 token overlap between chunks. This balances precision with coherence; tune it against your own retrieval quality.
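The size-and-overlap part of that strategy can be sketched as a sliding window over an already-tokenized document. This is plain fixed-size windowing; it does not attempt to detect semantic boundaries, and the default of 64 overlapping tokens is just one value within the range above.

```python
def chunk_tokens(
    tokens: list[str], chunk_size: int = 512, overlap: int = 64
) -> list[list[str]]:
    """Split a token list into fixed-size windows that share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks

tokens = [str(i) for i in range(1000)]
chunks = chunk_tokens(tokens)
```

A 1,000-token document yields three chunks here, with the last 64 tokens of each chunk repeated at the start of the next.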

Summary-First Architecture

For long conversations, implement a summary layer:

Conversation (200 turns, 50k tokens) → Summarizer → Summary (500 tokens)

Summary (500 tokens) + Recent turns (2k tokens) → LLM context (~3k tokens)

This reduces context cost by 90%+ for long sessions, with minimal quality impact if the summarizer is well-designed.
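A minimal sketch of the summary layer follows. The `summarize` and `count_tokens` callables are placeholders (assumptions) for a real summarizer model and tokenizer; the function only decides which turns stay verbatim and which get folded into the summary.

```python
from typing import Callable

def build_context(
    turns: list[str],
    summarize: Callable[[list[str]], str],  # hypothetical summarizer
    count_tokens: Callable[[str], int],     # hypothetical tokenizer
    recent_budget: int = 2000,
) -> list[str]:
    """Keep the newest turns that fit in `recent_budget`; summarize the rest."""
    recent: list[str] = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > recent_budget:
            break
        recent.append(turn)
        used += cost
    recent.reverse()  # restore chronological order
    older = turns[: len(turns) - len(recent)]
    summary = [summarize(older)] if older else []
    return summary + recent
```

With 200 turns of 250 tokens each and a 2,000-token budget, the last 8 turns are kept verbatim and the other 192 are replaced by a single summary entry.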

Tool Call Optimization

Tool calls are often the most expensive part of an agentic workflow. The tool itself is rarely the costly part; the expense comes from the tokens the LLM burns deciding which tools to call and reasoning over their results.

Batch Tool Calls

When multiple independent tool calls are needed, request them in one reasoning step and execute them in parallel rather than sequentially. This reduces:

- LLM call count (one reasoning step instead of N)

- Total wall-clock time

- Token burn on intermediate reasoning
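On the execution side, independent calls can be run concurrently with `asyncio.gather`. The two tool functions below are stand-ins (assumptions) that simulate latency with a short sleep; real tools would make network or database calls.

```python
import asyncio

async def web_search(query: str) -> str:
    await asyncio.sleep(0.05)  # stands in for network latency
    return f"results for {query!r}"

async def lookup_order(order_id: str) -> str:
    await asyncio.sleep(0.05)  # stands in for a database round trip
    return f"order {order_id}: shipped"

async def run_batch() -> list[str]:
    # Independent calls run concurrently; results come back in call order.
    return await asyncio.gather(
        web_search("return policy"),
        lookup_order("A-1001"),
    )

results = asyncio.run(run_batch())
```

Both results can then be handed to the LLM in a single follow-up call instead of one call per tool result.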

Tool Result Truncation

Tool outputs are often 10-100x larger than what the LLM actually needs. A web search returns 5,000 tokens; the LLM uses 200.

Fix: Truncate tool outputs before returning them to the LLM. Keep the top-N relevant results, or extract only the key facts. This requires custom truncation logic per tool type.
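For a search tool, the truncation logic might look like the sketch below. The result fields (`title`, `snippet`, `score`) are assumed for illustration; actual search APIs use their own schemas.

```python
def truncate_search_results(
    results: list[dict], top_n: int = 3, max_chars: int = 300
) -> str:
    """Keep the top-N results by score and clip each snippet before it reaches the LLM."""
    ranked = sorted(results, key=lambda r: r["score"], reverse=True)[:top_n]
    lines = []
    for r in ranked:
        snippet = r["snippet"][:max_chars]  # hard clip; a smarter version would extract key facts
        lines.append(f"- {r['title']}: {snippet}")
    return "\n".join(lines)
```

Clipping by characters is the bluntest option. Other tool types, such as database queries or code execution, would need their own rules, which is why this step takes custom work per tool.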

Caching Tool Results

Repeated tool calls for the same query waste money and latency.

- Web search: cache results for 1-24 hours (depending on topic freshness)

- Database queries: cache reads for session duration

- Code execution: cache results for identical inputs

A simple in-memory cache with a TTL can eliminate 20-40% of redundant tool calls.
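A minimal version of such a cache is sketched here. The `TTLCache` class is illustrative, and the injectable `clock` parameter is an assumption added to make expiry easy to test.

```python
import time
from typing import Any, Callable

class TTLCache:
    """In-memory cache whose entries expire after `ttl_seconds`."""

    def __init__(
        self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[Any, tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def get_or_call(self, key: Any, fn: Callable[[], Any]) -> Any:
        """Return a fresh cached value for `key`, or call `fn` and cache its result."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        value = fn()
        self._store[key] = (now, value)
        return value
```

The `hits` and `misses` counters give the cache hit rate directly, which is useful for checking whether the cache is earning its keep.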

Building a Cost Dashboard

If you're not measuring cost per task, you're flying blind. Build a dashboard that tracks:

Per-task metrics:

- Total cost per task (LLM + tools + infra)

- Cost breakdown by component

- Cost vs. outcome quality (did expensive tasks produce better results?)

Aggregate metrics:

- Average cost per task by type

- Cost distribution (P50, P90, P99)

- Cost trend over time (are optimization efforts reducing costs?)

- Failure cost rate (what percentage of total spend is on failed/retry tasks?)

Optimization metrics:

- Model routing accuracy (correct routing %)

- Cache hit rate (share of calls served from cache)

- Context compression ratio (original vs. loaded tokens)
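Several of the aggregate metrics above can be computed from a flat list of task records. The sketch below assumes each record has a `cost` in dollars and a `succeeded` flag (field names are illustrative) and uses a simple nearest-rank percentile.

```python
import math

def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile (pct in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def summarize_costs(tasks: list[dict]) -> dict:
    """Aggregate cost metrics; each task needs 'cost' and 'succeeded' keys."""
    costs = [t["cost"] for t in tasks]
    total = sum(costs)
    failed_spend = sum(t["cost"] for t in tasks if not t["succeeded"])
    successes = sum(1 for t in tasks if t["succeeded"])
    return {
        "p50": percentile(costs, 50),
        "p90": percentile(costs, 90),
        "p99": percentile(costs, 99),
        # Share of total spend that went to tasks that did not succeed.
        "failure_cost_rate": failed_spend / total if total else 0.0,
        "cost_per_successful_task": total / successes if successes else float("inf"),
    }
```

Grouping the records by task type before calling `summarize_costs` gives the per-type averages and distributions.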

The teams that get costs under control are the ones that instrument everything and make cost visible at the task level. Teams that don't instrument discover their costs only when the monthly bill arrives.

The Bottom Line

The median production agentic workflow costs 10-50x more than a well-optimized version. The optimization levers are known:

- Model routing: 60-80% cost reduction by matching task complexity to model capability

- Context compression: 50-70% input token reduction via summarization and progressive loading

- Tool call optimization: 20-40% reduction via batching, truncation, and caching

- Token budget constraints: 30-50% output token reduction via explicit budget prompts

Start with model routing — it's the highest-leverage change and the fastest to implement. Then layer on context optimization. Tool call optimization comes last, because it requires custom logic per tool type.

Measure cost per successful task, not cost per API call: a task that succeeds on the first attempt at $0.08 is cheaper than one that reaches $0.24 because it only succeeded after two failures.
