A customer service agent that can only look up one piece of information at a time is painfully slow. A coding agent that doesn't know how to recover from a failed test is useless in production. An analytics agent that can't compose multiple tools into a single workflow is limited to toy demonstrations.

The difference between a demo agent and a production agent is tool use sophistication. Not just whether the agent can call tools, but how it orchestrates them, recovers from failures, and composes them into meaningful workflows.

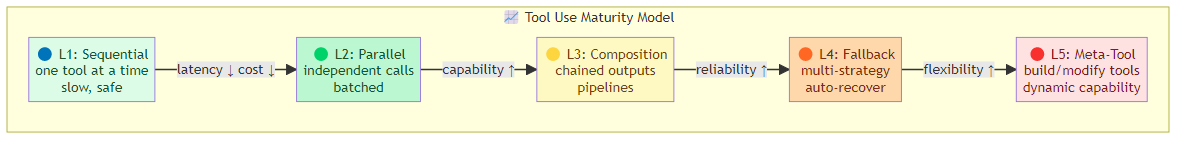

The Tool Use Maturity Model

Agents move through distinct levels of tool use sophistication:

Level 1: Single Tool, Sequential. The agent calls one tool, processes the result, then calls another. Simple but slow: every tool call waits for the previous one to complete.

Level 2: Parallel Tool Calls. The agent identifies independent tool calls and executes them simultaneously. Much faster for workflows that fan out into several calls with no data dependencies between them.

Level 3: Tool Composition. The agent chains tools so that the output of one becomes the input of another, constructing pipelines dynamically based on task requirements.

Level 4: Tool Orchestration with Fallback. The agent manages multiple tool strategies for the same goal, falling back to alternatives when primary tools fail or return suboptimal results.

Level 5: Meta-Tool Use. The agent reasons about which tools to build, modify, or combine. Tools become first-class objects in the agent's planning process.

Most production agents operate at Levels 2 and 3. The competitive advantage comes from Level 4 and beyond.

Parallel Tool Execution

The single biggest latency optimization in multi-tool workflows: execute independent tool calls in parallel.

Sequential (slow):

Tool A (200ms) → Tool B (300ms) → Tool C (150ms) = 650ms total

Parallel (fast):

Tool A ─┐

Tool B ─┼─→ 300ms total (limited by slowest)

Tool C ─┘

The agent's planner must identify which tool calls are independent (don't depend on each other's output) and group them into parallel batches.

When parallel execution matters most: workflows where the agent needs multiple pieces of information from different sources before proceeding. A research task that needs market data, competitor info, and regulatory status — all independent queries — should execute in parallel, not sequentially.
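As a minimal sketch of the parallel case, assuming the tools are exposed as async functions, `asyncio.gather` runs independent calls concurrently. The `fake_tool` helper and the tool names are hypothetical stand-ins that only sleep to simulate latency.

```python
import asyncio
import time


async def fake_tool(name: str, latency_s: float) -> str:
    """Hypothetical tool: sleeps to simulate network latency, then returns."""
    await asyncio.sleep(latency_s)
    return f"{name}: done"


async def run_parallel() -> tuple[list[str], float]:
    start = time.perf_counter()
    # The three calls share no inputs or outputs, so they can run concurrently.
    results = await asyncio.gather(
        fake_tool("market_data", 0.20),
        fake_tool("competitor_info", 0.30),
        fake_tool("regulatory_status", 0.15),
    )
    return results, time.perf_counter() - start


results, elapsed = asyncio.run(run_parallel())
print(results)
print(f"elapsed: {elapsed:.2f}s")  # close to the slowest call, not the sum
```

`asyncio.gather` returns results in the order the calls were passed in, so the agent can match each result to its request without extra bookkeeping.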

The dependency graph problem: determining which tool calls are truly independent requires understanding the data flow. The agent needs to know whether Tool B's output depends on Tool A's output. This requires either:

- Explicit dependency declarations in the tool schema

- LLM-based dependency analysis (slower but more flexible)

- Static analysis of the tool's input/output shapes
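The first option, explicit dependency declarations, can be sketched with the standard library's `graphlib`. The tool names and the `depends_on` mapping below are illustrative assumptions; each batch contains calls that could safely run in parallel.

```python
from graphlib import TopologicalSorter


def parallel_batches(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Group tool calls into batches whose members do not depend on each other."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose inputs are available
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock dependents
    return batches


# Hypothetical plan: each call maps to the calls whose output it needs.
plan = {
    "fetch_prices": set(),
    "fetch_news": set(),
    "compute_average": {"fetch_prices"},
    "write_report": {"compute_average", "fetch_news"},
}
print(parallel_batches(plan))
# [['fetch_news', 'fetch_prices'], ['compute_average'], ['write_report']]
```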

Tool Chaining and Composition

When tool outputs feed into other tools, the agent needs to construct chains dynamically:

Task: "Get the stock price of the top 5 tech companies, calculate the average, and write it to a spreadsheet"

Chain construction:

1. web_search × 5 → ["AAPL: $189", "MSFT: $412", "GOOGL: $172", "AMZN: $198", "META: $512"]

2. calculate(average, [189, 412, 172, 198, 512]) → 296.6

3. spreadsheet_write("Average top 5 tech stock price: $296.60")

The agent constructs this chain dynamically, routing outputs to inputs.

Tool chaining requires:

- Output type awareness: the agent must know what each tool outputs to route it correctly

- Type compatibility: input/output types must align for chaining to work

- Error propagation: if any step in the chain fails, the agent must decide whether to retry, skip, or abort
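One way to sketch these requirements is a small chain runner that routes each output into the next tool and reports which step failed. `run_chain`, `ChainError`, and the stand-in tools are hypothetical names, and the prices are the ones from the example above rather than live data.

```python
from statistics import mean
from typing import Any, Callable


class ChainError(Exception):
    """Records the failing step so the caller can retry, skip, or abort."""

    def __init__(self, step: str, cause: Exception):
        super().__init__(f"step '{step}' failed: {cause}")
        self.step = step
        self.cause = cause


def run_chain(steps: list[tuple[str, Callable[[Any], Any]]], initial: Any) -> Any:
    """Feed each step's output into the next step."""
    value = initial
    for name, tool in steps:
        try:
            value = tool(value)
        except Exception as exc:
            raise ChainError(name, exc) from exc
    return value


def lookup_prices(tickers: list[str]) -> list[int]:
    # Stand-in for the five web_search calls; values copied from the example.
    quotes = {"AAPL": 189, "MSFT": 412, "GOOGL": 172, "AMZN": 198, "META": 512}
    return [quotes[t] for t in tickers]


def format_row(average: float) -> str:
    return f"Average top 5 tech stock price: ${average:.2f}"


steps = [("lookup_prices", lookup_prices), ("average", mean), ("format_row", format_row)]
print(run_chain(steps, ["AAPL", "MSFT", "GOOGL", "AMZN", "META"]))
# Average top 5 tech stock price: $296.60
```

Because `ChainError` carries the step name, the retry, skip, or abort decision can live in the caller instead of being hard-coded in the runner.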

The Tool Fallback Pattern

No tool is reliable 100% of the time. Web search might hit rate limits. An API might be down. A code execution environment might time out.

The fallback pattern: when a primary tool fails, automatically try alternatives:

Primary: web_search(query)

↓ failed (rate limit)

Fallback 1: cached_search(query) # our cached results

↓ not cached

Fallback 2: api_search_v2(query) # alternative search API

↓ failed

Fallback 3: report_failure() # gracefully degrade

Designing fallback chains:

- Order fallbacks by cost/reliability: try most reliable first, most expensive last

- Each fallback should be semantically equivalent (different implementation, same capability)

- Include a final graceful degradation that returns partial results rather than failing completely
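A fallback chain like the one above can be sketched as a loop over semantically equivalent tools. The three search functions here are hypothetical stubs that reproduce the failure sequence in the diagram; a real implementation would call actual services.

```python
from typing import Callable


class ToolError(Exception):
    """Raised by a tool when it cannot produce a result."""


def web_search(query: str) -> list[str]:
    raise ToolError("rate limit")


def cached_search(query: str) -> list[str]:
    raise ToolError("not cached")


def api_search_v2(query: str) -> list[str]:
    return [f"result for {query!r}"]


def search_with_fallback(query: str, tools: list[Callable[[str], list[str]]]) -> dict:
    """Try each tool in order; return the first success plus earlier failures."""
    errors: list[str] = []
    for tool in tools:
        try:
            results = tool(query)
        except ToolError as exc:
            errors.append(f"{tool.__name__}: {exc}")
            continue
        return {"ok": True, "source": tool.__name__, "results": results, "errors": errors}
    # Every option failed: degrade gracefully instead of raising.
    return {"ok": False, "source": None, "results": [], "errors": errors}


outcome = search_with_fallback("acme earnings", [web_search, cached_search, api_search_v2])
print(outcome["source"], outcome["errors"])
# api_search_v2 ['web_search: rate limit', 'cached_search: not cached']
```

Returning the error log alongside the result lets the agent see that it is working from a fallback source and weigh the answer accordingly.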

Tool Result Processing

Tool outputs are often noisy. A web search returns 5,000 tokens of HTML and metadata when the agent only needs the core facts. A database query returns 100 columns when only 3 are relevant.

Result truncation: before returning tool results to the agent, truncate to the most relevant subset. This reduces context cost and improves agent performance by reducing noise.

Result transformation: convert tool outputs into formats the agent expects. A raw API response becomes a clean JSON object. A verbose HTML page becomes structured text.

Result validation: verify that the tool output is well-formed before returning it to the agent. Malformed results cause cascading failures.
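The three steps can be combined in a single post-processing function. This is a sketch that assumes the tool returns a JSON object as a string; `process_result`, `keep_fields`, and the 200-character cap are illustrative choices.

```python
import json


def process_result(raw: str, keep_fields: list[str], max_chars: int = 200) -> dict:
    """Validate, transform, and truncate a raw JSON tool response."""
    # Validation: reject malformed output before it reaches the agent.
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"malformed tool output: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object at the top level")

    def shorten(value):
        # Truncation: cap long strings; other types pass through unchanged.
        if isinstance(value, str) and len(value) > max_chars:
            return value[:max_chars] + "..."
        return value

    # Transformation: keep only the fields the agent needs.
    return {key: shorten(payload[key]) for key in keep_fields if key in payload}


raw = json.dumps({"title": "Q3 results", "snippet": "x" * 500, "html": "<div>...</div>", "rank": 1})
clean = process_result(raw, keep_fields=["title", "snippet"])
print(list(clean), len(clean["snippet"]))
# ['title', 'snippet'] 203
```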

Tool State and Idempotency

Production tools must be safe to retry. An idempotent tool has the same effect whether it is called once or many times with the same inputs.

Why idempotency matters: agents retry. Network errors, timeouts, and ambiguous results all trigger retries. A non-idempotent tool (e.g., "send email") will send duplicate emails on retry.

Achieving idempotency:

- Use idempotency keys: send_email(to, body, idempotency_key=hash(request))

- Check before write: "has this operation already been performed?"

- Use transactional semantics: wrap multi-step operations in transactions
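A minimal sketch of the idempotency-key approach, assuming an in-memory store: `EmailTool` and `request_key` are hypothetical, and a production version would persist the keys. The key is derived with `hashlib` because Python's built-in `hash()` is randomized per process for strings, so it is not stable across restarts.

```python
import hashlib
import json


def request_key(to: str, body: str) -> str:
    """Derive a stable key from the request contents."""
    canonical = json.dumps({"to": to, "body": body}, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class EmailTool:
    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []  # stands in for the mail provider
        self._completed: dict[str, dict] = {}  # idempotency key -> stored result

    def send_email(self, to: str, body: str, idempotency_key: str) -> dict:
        # Check before write: replay the stored result instead of sending again.
        if idempotency_key in self._completed:
            return self._completed[idempotency_key]
        self.sent.append((to, body))
        result = {"status": "sent", "message_id": len(self.sent)}
        self._completed[idempotency_key] = result
        return result


tool = EmailTool()
key = request_key("ops@example.com", "Deploy finished")
first = tool.send_email("ops@example.com", "Deploy finished", key)
retry = tool.send_email("ops@example.com", "Deploy finished", key)  # simulated retry
print(len(tool.sent), first == retry)
# 1 True
```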

Multi-Agent Tool Delegation

When an agent needs a capability it doesn't have, it can delegate the work to another agent that does:

The Research Agent needs to write and execute test code, but it doesn't have a code execution tool:

1. Research Agent delegates to Code Agent

2. Code Agent executes tests, returns results

3. Research Agent synthesizes results into findings

This is different from a tool call: it is delegation to an agent with different capabilities. It requires:

- Capability discovery: how does the delegating agent know which agent to use?

- Result serialization: how does the delegating agent interpret the results?

- Trust and authorization: which agents can delegate to which other agents?
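The three requirements can be sketched with an in-process registry. Everything here (`Agent`, `Registry`, the `run_tests` capability, the result envelope) is a hypothetical illustration, not a reference to any particular multi-agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    # Capability name -> handler that takes a payload and returns a result dict.
    capabilities: dict[str, Callable[[str], dict]] = field(default_factory=dict)


class Registry:
    def __init__(self) -> None:
        self._agents: dict[str, Agent] = {}
        self._allowed: set[tuple[str, str]] = set()  # (delegator, delegate) pairs

    def register(self, agent: Agent) -> None:
        self._agents[agent.name] = agent

    def allow(self, delegator: str, delegate: str) -> None:
        self._allowed.add((delegator, delegate))

    def delegate(self, delegator: str, capability: str, payload: str) -> dict:
        # Capability discovery: find an agent that advertises the capability.
        for agent in self._agents.values():
            if capability in agent.capabilities:
                # Trust and authorization: check the pair is permitted.
                if (delegator, agent.name) not in self._allowed:
                    raise PermissionError(f"{delegator} may not delegate to {agent.name}")
                # Result serialization: wrap the output in a fixed envelope.
                result = agent.capabilities[capability](payload)
                return {"agent": agent.name, "capability": capability, "result": result}
        raise LookupError(f"no agent offers '{capability}'")


registry = Registry()
registry.register(Agent("code_agent", {"run_tests": lambda src: {"passed": 3, "failed": 0}}))
registry.allow("research_agent", "code_agent")
print(registry.delegate("research_agent", "run_tests", "def test_x(): ..."))
```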

The Tool Schema Design Problem

The interface between the agent and its tools is the tool schema. Bad schemas produce bad tool use.

What a good tool schema includes:

- Name: clear, action-oriented, consistent verb tense

- Description: what the tool does, in plain language the LLM can reason about

- Input schema: every parameter with type, constraints, and purpose

- Output schema: what the tool returns, including error cases

- Constraints: timeouts, rate limits, cost, what the tool cannot do

The description is the prompt: the tool description is the most important part. It should tell the LLM not just what the tool does, but when to use it and when not to use it.

Bad: "Search the web"

Good: "Search the web for current factual information (stock prices, weather, news, sports scores). NOT for opinion, analysis, or historical research. Returns top 10 results with title, URL, and snippet. Rate limit: 10 calls/minute."
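Put together, a schema covering all five parts might look like the sketch below. The input and output sections loosely follow JSON Schema conventions; the top-level field names, the `max_results` parameter, and the `missing_sections` check are assumptions for illustration and do not match any specific vendor's format.

```python
REQUIRED_SECTIONS = ("name", "description", "input_schema", "output_schema", "constraints")

web_search_schema = {
    "name": "search_web",
    "description": (
        "Search the web for current factual information (stock prices, weather, "
        "news, sports scores). NOT for opinion, analysis, or historical research."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Plain-language search query"},
            "max_results": {"type": "integer", "minimum": 1, "maximum": 10},
        },
        "required": ["query"],
    },
    "output_schema": {
        "type": "array",
        "items": {
            "type": "object",
            "properties": {
                "title": {"type": "string"},
                "url": {"type": "string"},
                "snippet": {"type": "string"},
            },
        },
    },
    "constraints": {"rate_limit_per_minute": 10},
}


def missing_sections(schema: dict) -> list[str]:
    """Return the required sections that are absent or empty."""
    return [section for section in REQUIRED_SECTIONS if not schema.get(section)]


print(missing_sections(web_search_schema))            # []
print(missing_sections({"name": "search_web"}))
# ['description', 'input_schema', 'output_schema', 'constraints']
```

A check like `missing_sections` can run when tools are registered, so an incomplete schema is caught before the agent ever sees it.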

The Bottom Line

Basic tool calling is table stakes. The agents that work reliably in production use:

- Parallel execution for independent tool calls (total latency drops from the sum of the calls to the slowest call: 650ms to 300ms in the example above)

- Fallback chains so tool failures degrade gracefully

- Result processing to reduce noise before the agent sees it

- Idempotent design so retries are safe

- Rich schemas that tell the agent when and how to use each tool

Build tools as if the agent is a junior colleague: explain clearly what each tool does, what it can't do, and what the outputs mean. The better the interface, the better the tool use.
