Last week was extraordinary, even by the standards of an industry accustomed to extraordinary things.

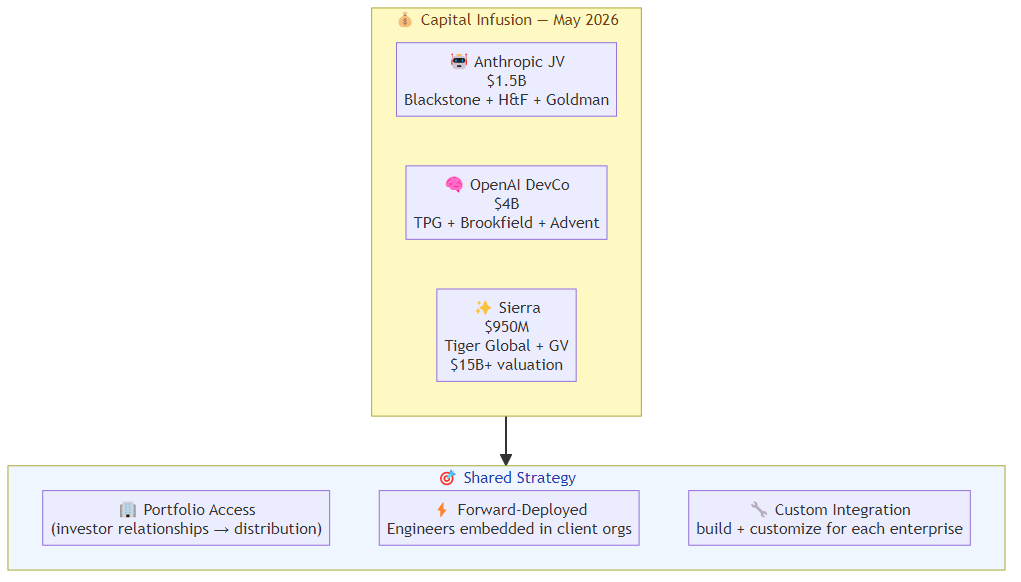

Anthropic announced a $1.5 billion enterprise joint venture backed by Blackstone, Hellman & Friedman, and Goldman Sachs. OpenAI launched "The Development Company" with $4 billion from TPG, Brookfield, Advent, and Bain Capital. Sierra — the AI customer experience company led by Bret Taylor, who also chairs OpenAI — raised $950 million at a valuation above $15 billion.

That's $5.5 billion across the two lab ventures alone, and roughly $6.45 billion once Sierra's raise is counted, all moving toward enterprise AI in a single news cycle. The question isn't whether enterprise AI is serious. It's what this capital is actually buying, and what the competitive landscape looks like on the other side of the infusion.

What the Money Is Buying

The two AI lab JVs (Anthropic and OpenAI) are structurally similar: large asset management firms providing capital, plus access to their portfolio companies as distribution channels. The AI lab provides the model capability and engineering talent; the financial partner provides enterprise relationships and deal flow.

The pattern is not incidental. It mirrors how enterprise software companies have historically scaled — not through product-led growth alone, but through systems integrator relationships that provide implementation capacity and enterprise trust simultaneously.

For Anthropic and OpenAI, these JVs solve a problem that pure API sales never could: the enterprise implementation gap. Every AI lab has the same complaint from enterprise customers — "the model is impressive but we don't know how to build this into our workflows." The JV structure puts forward-deployed engineers inside client organizations, customizing and integrating rather than waiting for enterprises to figure it out themselves.

Sierra's raise is different in character. Sierra is not an AI lab — it's an application company built on top of AI labs. Their $950M is buying product velocity, go-to-market expansion, and the race to become the default AI customer experience platform before Salesforce or ServiceNow builds something competitive.

The Forward-Deployed Engineering Model

The JV structure reveals something important about how enterprise AI is actually going to be delivered: the bottleneck is not model quality. It's integration.

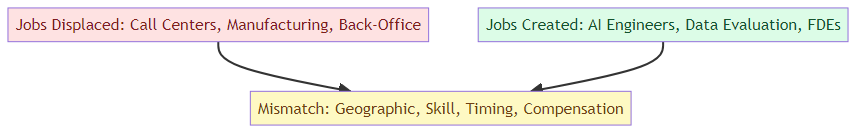

Forward-deployed engineering — the practice of embedding engineers directly in client organizations to build and customize AI solutions — is the core mechanism of both Anthropic's and OpenAI's new ventures. These engineers don't just integrate models. They sit with clinical staff and IT teams to understand workflows, they build custom tooling on top of base models, and they maintain ongoing relationships with enterprise accounts.

This is expensive, slow, and relationship-dependent. It's also the only way to close the gap between "impressive demo" and "production deployment" that has blocked enterprise AI for two years.

The implication: the competitive moat in enterprise AI isn't just the model — it's the implementation capacity. Teams that can deploy fast, integrate deeply, and maintain enterprise relationships at scale will capture more value than teams with better models but no implementation infrastructure.

Why the Capital Is Concentrating at the Infrastructure Layer

The distribution of capital is revealing: roughly $6.45B in total ($5.5B to the two lab ventures plus Sierra's $950M) going to AI labs and an AI application platform, not to AI-adjacent tooling, evaluation infrastructure, or agent orchestration platforms. This mirrors the pattern from previous infrastructure cycles: in cloud, the early capital went to AWS and Azure, not to the ecosystem tooling.

In the AI cycle, the capital is going to the models (Anthropic, OpenAI) and the application platforms (Sierra) because those are the points of maximum leverage. Every enterprise that wants to deploy AI needs a model provider and a solution layer. The tooling in between (agent orchestration, evaluation, deployment) will grow, but the capital is not going there first.

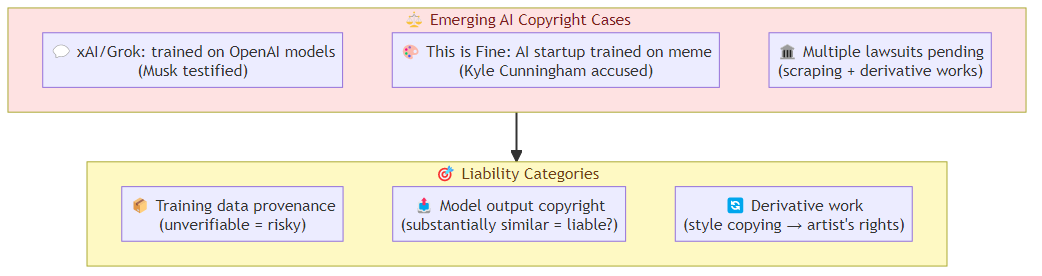

This concentration creates a risk for enterprise buyers: when the dominant AI infrastructure is funded by Blackstone, Goldman Sachs, Brookfield, and TPG, the pricing and relationship dynamics will reflect those investors' interests. Enterprise AI is becoming a financial sector play in a way that cloud infrastructure never was.

What Sierra's Position Tells Us

Sierra's raise is the most interesting data point in the bunch, for a specific reason: Bret Taylor chairs OpenAI while running Sierra, yet Sierra builds its customer experience AI agents on top of multiple model providers, not exclusively OpenAI.

This positioning — a platform that sits above the model layer, routing to whichever model is best for each use case — is the architecture that wins if the model layer commoditizes. If Anthropic and OpenAI continue to compete on model quality and price, the value accrues to whoever sits above them and orchestrates the selection.
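To make the "sits above the model layer" idea concrete, here is a minimal sketch of per-use-case model routing. It is not Sierra's implementation, which the sources here don't describe. The provider labels, cost units, quality scores, and use-case names are all hypothetical, and the selection rule (cheapest model that clears a quality bar) is just one plausible policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    """One candidate model behind the platform (all values are illustrative)."""
    provider: str         # hypothetical label, not a real product name
    cost_per_call: float  # made-up cost units, not real pricing
    quality: float        # made-up 0..1 score, e.g. from in-house evals


def pick_route(routes, min_quality):
    """Return the cheapest route whose quality meets the use case's bar."""
    eligible = [r for r in routes if r.quality >= min_quality]
    if not eligible:
        raise ValueError("no route meets the quality bar")
    return min(eligible, key=lambda r: r.cost_per_call)


# Hypothetical catalog: two interchangeable providers at different price points.
ROUTES = [
    ModelRoute("provider_a", cost_per_call=1.0, quality=0.90),
    ModelRoute("provider_b", cost_per_call=0.4, quality=0.75),
]

# Hypothetical per-use-case quality bars, set by the platform rather than the labs.
QUALITY_BAR = {"order_status": 0.70, "refund_dispute": 0.85}


def route_for(use_case):
    """Look up the quality bar for a use case and choose a provider."""
    return pick_route(ROUTES, QUALITY_BAR[use_case])
```

With these made-up numbers, `route_for("order_status")` picks `provider_b` and `route_for("refund_dispute")` picks `provider_a`. The point of the sketch is that the selection logic and the quality bars live in the platform, so swapping or repricing a provider changes one catalog entry rather than the customer-facing product.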

Sierra's claim that 40% of the Fortune 50 (that is, 20 companies) are customers after two years is aggressive. If accurate, it's the fastest enterprise AI adoption curve I've seen outside of internal tooling at large tech companies. The Ghostwriter product, an "agent as a service" that lets users create specialized agents via natural language, is the key product bet: not just AI for customer service, but AI for any structured enterprise workflow.

The Competitive Dynamics This Creates

The capital infusion accelerates three competitive dynamics:

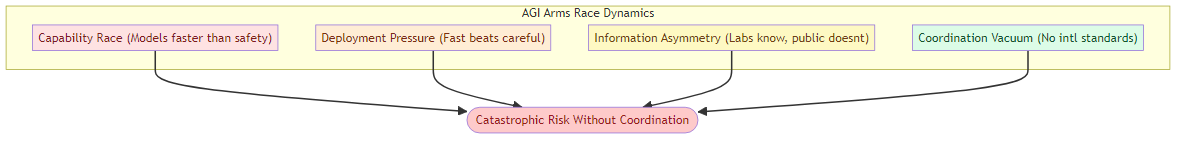

Model lab consolidation: With billions of dollars flowing to the top two labs (Anthropic and OpenAI), the gap between "funded AI labs" and "unfunded AI labs" widens. Smaller labs that can't raise matching capital will be pushed toward niche specialization or acquisition.

Application layer competition: Sierra at $15B+ valuation sets a high bar for customer experience AI agents. Every CRM, service management, and enterprise software company is now racing to embed equivalent capability before Sierra becomes the default.

Enterprise AI services war: The JV structure means Anthropic and OpenAI are now competing not just as model providers but as enterprise system integrators. This puts them in direct competition with Accenture, Deloitte, and the other major systems integrators, which have been building AI practices on top of those same labs' models.

What This Means for Teams Building on This Infrastructure

If you're building AI applications on top of Anthropic or OpenAI, the JV structure changes the competitive dynamics in two ways that matter:

First, your model provider is now also your competitor in enterprise services. Anthropic's Blackstone-backed JV means Anthropic-backed engineers are customizing solutions for enterprises, including enterprises that might be your potential customers.

Second, the forward-deployed engineering model means enterprises that go through the JV channel are likely to get deeper, more tailored implementations than enterprises that buy through standard API channels. That service quality gap could make it harder for application builders to win customers who adopt AI on a self-serve basis.

The counterargument: the enterprise market is large enough that no single provider or JV can capture all of it. The model labs will serve the largest accounts through JVs; the long tail of mid-market enterprises will continue to be served by application builders and SI partners.

The Bottom Line

More than $5.5 billion in a single week does not signal a market that is still theoretical. It signals that the infrastructure layer is being locked in: by Anthropic and OpenAI at the model layer, by Sierra at the application layer, and by major asset managers at the capital layer.

The competitive landscape for enterprise AI in 2027 is being decided right now, in boardrooms where AI labs and financial institutions are structuring deals that determine which companies get deployment capacity and which don't.

For teams building in this space: the window for independent positioning is narrowing. The model labs are being capitalized at levels that make independent challenger labs economically difficult. The application platforms are being funded at levels that make organic growth hard to compete with. The integrator layer is being built by the AI labs themselves.

The best strategic positioning right now is depth — being so good at a specific domain, workflow, or vertical that the capital infusion at the infrastructure layer doesn't dislodge you. The commodity layer is getting crowded. The depth layer is still open.
