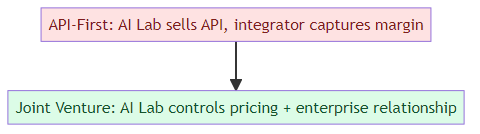

Anthropic's $1.5 billion joint venture and OpenAI's $4 billion Development Company are both built on the same hidden investment: forward-deployed AI engineers embedded inside enterprise organizations.

This is not a new role. McKinsey and BCG have had "embedded consultants" for decades. Systems integrators like Accenture and Deloitte have had delivery engineers who work on-site for years at a time. What's new is the combination of three things: the engineering depth required (you need to actually build AI systems, not just configure them), the pace of change (models and APIs change constantly), and the cultural challenge (enterprise organizations don't yet know how to work with AI engineers who are both more technical and more product-aware than typical consultants).

The forward-deployed AI engineer is emerging as the most important, and scarcest, role in enterprise AI. Here's what the job actually looks like.

What "Forward-Deployed" Means in Practice

The term comes from Palantir, which made forward-deployed engineering a core part of its go-to-market model. The idea: send engineers to the client site not to build custom software for the client, but to build the product while embedded in the client's workflows. The engineer becomes a proxy for the company's product capabilities inside the client's organization.

Applied to AI, forward-deployed AI engineering means something specific: you work at an enterprise for weeks or months at a time, understanding their data, their workflows, their constraints, and their trust patterns. Then you build AI solutions on top of whatever model infrastructure your company provides — customizing, integrating, and iterating until the solution works in their environment.

The difference from traditional consulting: you're not implementing a pre-built product. You're building a product that is parameterized by the client's specific context. The model is shared infrastructure; the interface is custom.

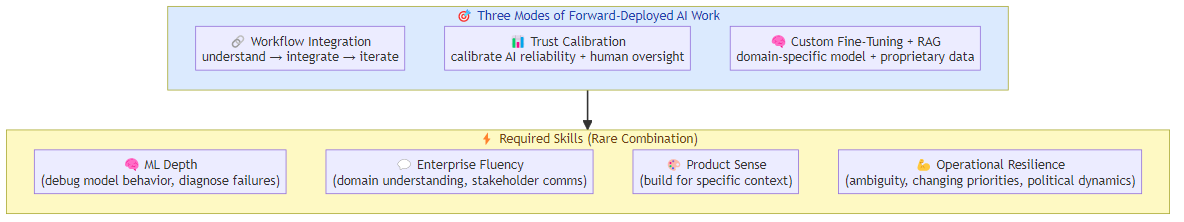

The Three Modes of Forward-Deployed AI Work

Mode 1: Workflow Integration

The most common mode. The enterprise has a process — claims processing, customer onboarding, document review, inventory management — and the forward-deployed AI engineer builds an AI solution that handles part of it.

This work is unglamorous and critical. You spend weeks understanding the actual workflow (not the documented workflow, which is different), identifying where AI adds value without disrupting the parts that work, and building the integration layer that connects the AI to the enterprise's existing systems.

The hardest part: enterprise workflows are full of edge cases that don't appear in documentation. The forward-deployed engineer discovers them by watching the actual work, not by reading process maps.

Mode 2: Trust Calibration

Enterprise AI often fails not because the model is wrong but because the enterprise's trust in it is miscalibrated. Either the enterprise trusts it too much (deploying without adequate review layers) or too little (ignoring AI recommendations that would improve outcomes).

The forward-deployed AI engineer spends significant time on trust calibration — helping the enterprise understand where the AI is reliable, where it needs human review, and how to design workflows that exploit the AI's strengths while containing its failure modes.

This is a communication and change management skill as much as a technical one. The engineer who can't explain confidence calibration to a business stakeholder won't succeed in this role.
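One way to make that conversation concrete is to bucket past predictions by the model's reported confidence, compare each bucket's observed accuracy against an agreed bar, and send everything below the qualifying range to human review. The sketch below is illustrative only: the `Prediction` record, the five equal-width buckets, and the 0.9 accuracy bar are assumptions, not details from any specific deployment.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    confidence: float  # model-reported confidence in [0, 1] (hypothetical field)
    correct: bool      # whether a human reviewer agreed with the output


def accuracy_by_bucket(history, n_buckets=5):
    """Group past predictions into equal-width confidence buckets.

    Returns {bucket_index: (observed_accuracy, sample_count)} for
    buckets that contain at least one prediction.
    """
    hits = defaultdict(int)
    counts = defaultdict(int)
    for p in history:
        # Clamp so that confidence == 1.0 lands in the top bucket.
        idx = min(int(p.confidence * n_buckets), n_buckets - 1)
        counts[idx] += 1
        hits[idx] += int(p.correct)
    return {i: (hits[i] / counts[i], counts[i]) for i in counts}


def auto_approve_threshold(history, min_accuracy=0.9, n_buckets=5):
    """Walk down from the top bucket and stop at the first bucket that
    is empty or misses the accuracy bar.

    Returns the lower edge of the lowest qualifying bucket, or None if
    even the top bucket does not qualify.
    """
    stats = accuracy_by_bucket(history, n_buckets)
    threshold = None
    for idx in range(n_buckets - 1, -1, -1):
        if idx not in stats or stats[idx][0] < min_accuracy:
            break
        threshold = idx / n_buckets
    return threshold


def route(confidence, threshold):
    """Decide whether an output can skip human review."""
    if threshold is not None and confidence >= threshold:
        return "auto_approve"
    return "human_review"
```

A table of per-bucket accuracy is also something a business stakeholder can read directly, which makes it a useful artifact for the change-management side of the work. In practice the sample count per bucket matters as much as the accuracy, since a bucket with a handful of examples is weak evidence either way.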

Mode 3: Custom Fine-Tuning and RAG

For enterprises with proprietary data and specific domain requirements, the forward-deployed AI engineer is often responsible for building custom fine-tuned models or RAG pipelines.

This is the most technically complex mode: handling the enterprise's data (often subject to confidentiality or regulatory constraints), building evaluation datasets for domain-specific quality, fine-tuning or selecting from available model options, and deploying the solution with appropriate security controls.
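A domain-specific evaluation dataset can start small: a list of prompts, each paired with the facts a correct answer must mention, scored against whatever candidate system is under test. The following is a minimal sketch under stated assumptions. `EvalCase`, the `must_contain` check, and the per-tag grouping are hypothetical names chosen for illustration, and a real evaluation would likely need richer scoring than case-insensitive substring matching.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    must_contain: Tuple[str, ...]  # facts a correct answer must mention
    tag: str = "general"           # workflow or domain area, for reporting


def run_eval(system: Callable[[str], str], cases: List[EvalCase]) -> Dict[str, float]:
    """Run every case through `system` and return the pass rate per tag.

    `system` is any callable that maps a prompt to an answer string,
    for example a wrapper around a RAG pipeline or a fine-tuned model.
    """
    passed: Dict[str, int] = {}
    total: Dict[str, int] = {}
    for case in cases:
        answer = system(case.prompt).lower()
        ok = all(term.lower() in answer for term in case.must_contain)
        total[case.tag] = total.get(case.tag, 0) + 1
        passed[case.tag] = passed.get(case.tag, 0) + int(ok)
    return {tag: passed[tag] / total[tag] for tag in total}
```

Because `run_eval` only needs a callable, the same cases can be replayed against a prompt-only baseline, a RAG pipeline, and a fine-tuned model, which gives the fine-tune-versus-RAG decision an empirical footing.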

The technical infrastructure for this work is maturing rapidly — Anthropic and OpenAI both have enterprise offerings with fine-tuning and dedicated deployment options. But the expertise to use these tools for domain-specific applications is still scarce.

Why It's Hard to Staff

The forward-deployed AI engineer needs a combination of skills that rarely co-occur:

ML depth sufficient to debug model behavior: not just prompt engineering, but understanding why the model produces certain outputs, how to diagnose failures, and when to fine-tune versus when to use RAG.

Enterprise fluency: the ability to understand a business domain quickly, communicate with business stakeholders without excessive jargon, and navigate enterprise decision-making processes.

Product sense: forward-deployed engineers aren't implementing fixed solutions — they're building solutions that need to work in a specific context, which requires genuine product thinking about what "working" means in this environment.

Operational resilience: working embedded in a client organization means dealing with unclear requirements, changing priorities, political dynamics, and the constant temptation to over-promise on AI capabilities.

Compensation for a strong forward-deployed AI engineer is now comparable to that of senior software engineers at top tech companies, which creates a talent market problem. The enterprises that need these engineers most (traditional industries, not tech) often can't compete on compensation with the tech companies building the AI infrastructure itself.

The Career Path

Forward-deployed AI engineering is becoming a destination role, not a stepping stone. The practitioners who do it well are building careers in a space that didn't exist five years ago.

The progression looks like this: junior forward-deployed engineer (implementing well-defined solutions with supervision) → senior forward-deployed engineer (leading workflow integrations and trust calibration independently) → principal engineer (designing enterprise AI architecture and mentoring teams) → AI solutions architect (designing the overall engagement model and connecting technical work to business outcomes).

The depth of enterprise context that forward-deployed engineers accumulate — seeing how AI works (and fails) across multiple industries and organizations — is genuinely unique knowledge. The engineers who have been doing this for two years have seen more production AI deployments than almost anyone else in the industry.

Where This Role Is Heading

The forward-deployed AI engineering function is at an inflection point. Right now, the AI labs and large application platforms are building this capacity internally. Anthropic's JV explicitly mentions forward-deployed engineers. OpenAI's Development Company is structured around it.

Over the next two years, this will professionalize further. The major management consultancies (McKinsey, BCG, Bain) are all building forward-deployed AI practices, often in partnership with AI labs. Systems integrators (Accenture, Deloitte, KPMG) are converting their implementation practices.

The result will be a genuine profession — not quite consulting, not quite engineering, not quite product management, but something that borrows from all three. The AI labs need this function to close the enterprise implementation gap. The enterprises need it because they can't build it themselves. The talent market is responding, but the supply is not yet close to meeting the demand.

For engineers deciding where to build their careers: forward-deployed AI is one of the highest-leverage places to work right now. The problems are hard, the context is rich, the feedback loop is fast (you see if your solution works within weeks, not years), and the domain knowledge you accumulate is durable.

The models will get better. The infrastructure will improve. The scarce resource that the next phase of enterprise AI depends on is the forward-deployed engineers who know how to apply both in enterprise contexts.
