Two announcements, one week, one direction.

Anthropic and OpenAI both revealed they're creating joint ventures to deliver enterprise AI services. Anthropic is partnering with consulting firms and system integrators to embed Claude into enterprise workflows. OpenAI is doing something similar — structuring partnerships that give enterprises access to its models through managed services rather than raw API calls.

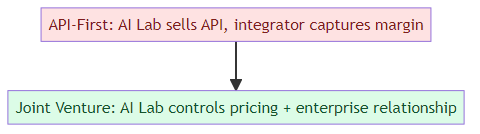

This looks like a coincidence. It's not. It's a strategic realization arriving at the same moment across the industry: the API-first model for enterprise AI has hit a ceiling, and the real value is in the services layer above it.

Why the API Model Hit Its Ceiling

The original AI lab go-to-market strategy was simple: build the best models, sell API access, let the market figure out the applications. This worked well for developers and startups, but it hit a wall with enterprise adoption.

Enterprise AI adoption requires more than API access. It requires:

- Integration with existing enterprise systems (ERP, CRM, HRIS, legacy databases)

- Change management and workforce training

- Governance frameworks for AI usage policies

- Compliance with industry regulations (HIPAA, SOC 2, GDPR)

- Custom fine-tuning on proprietary enterprise data

- Ongoing monitoring and optimization

None of this is in the API. It's in the services layer — the consulting, integration, and management work that wraps around the model. The AI labs discovered that enterprises will pay enormous amounts for someone to do this work correctly. But if you just sell API access, that money goes to system integrators and consulting firms who capture the margin while you get commodity pricing.

The joint venture model is the AI labs' attempt to recapture that services margin by becoming the integrator themselves — or at least controlling the integrators through structured partnerships.

What the Joint Ventures Actually Look Like

The Anthropic model reportedly involves partnerships with major consulting firms — the kind that already have enterprise relationships and implementation capacity. Anthropic provides the model and technical guidance; the partner provides the enterprise sales force, implementation engineers, and ongoing client relationships.

The OpenAI model is similar but reportedly more direct — structured joint ventures that give OpenAI more control over the enterprise relationship, including pricing, implementation timelines, and ongoing account management.

Both approaches share a common logic: control the enterprise implementation, not just the model access.

The Competitive Calculus

Why are both labs moving at the same time? Because the enterprise AI services market is becoming real, and neither wants to be left holding the commodity position while a competitor owns the margin.

Sierra just raised $950M specifically to own enterprise AI relationships. Salesforce has Agentforce. Microsoft has Copilot. The services layer is getting crowded with players outside the AI labs who are more than happy to resell model access while capturing the implementation margin.

If Anthropic and OpenAI don't structure their own enterprise service capabilities, they risk becoming the "electricity" of the enterprise AI market — essential, but priced like a commodity with thin margins, while the actual money goes to the companies that package and deliver it.

The Risks of the Joint Venture Approach

Moving into enterprise services isn't without risk for the AI labs.

Channel conflict: The consulting firms and system integrators that are natural partners for enterprise AI services are also selling competing capabilities. A firm that's implementing Anthropic-powered solutions is also selling Microsoft Copilot, Google Gemini, and homegrown AI. The joint venture creates incentives that may conflict with existing partner relationships.

Capacity constraints: Enterprise AI implementation is labor-intensive. Building the consulting capacity to deliver enterprise implementations at scale requires a different skill set from building frontier models. The labs are trying to partner their way around this constraint, but the quality control and scalability of that approach are uncertain.

Customer concentration: The enterprises that can actually absorb significant AI implementation spend are the largest companies, the Global 2000. Those customers have enormous leverage, so even when a joint venture locks in a major enterprise relationship, the pricing and terms may not be as favorable as the AI labs would like.

Conflict with model improvement: The labs' core competency is building better models. As they spend more time structuring enterprise deals and managing partner relationships, the question is whether organizational focus and capital allocation move away from frontier research toward enterprise sales and services.

Why This Matters for Enterprise AI Buyers

For enterprises evaluating these joint ventures, the key question is: who controls the relationship, and what happens when things go wrong?

In a traditional API model, the enterprise has direct access to the AI lab, can negotiate pricing and terms, and has a clear accountability structure. In a joint venture model, the accountability structure is more complex — the AI lab provides the model, the partner provides the implementation, and the enterprise has to navigate both when issues arise.

The joint venture approach does offer real advantages for enterprises that lack internal AI implementation capacity: they get access to the AI lab's technical guidance and the partner's implementation capacity in a structured relationship. But the trade-off is less direct accountability and potentially less flexibility.

The enterprises that will benefit most are those that are clear about what they're buying: if you want access to frontier AI capabilities wrapped in enterprise implementation support, the joint venture model makes sense. If you want direct control over your AI infrastructure with maximum flexibility, the API model or a cloud provider relationship may be better.

What Comes Next

The joint venture announcements are the opening move in what will become a fundamental restructuring of the enterprise AI go-to-market. The AI labs have recognized that the services layer is where enterprise AI value accumulates, and they're positioning to capture that value rather than cede it to integrators and cloud providers.

Expect more announcements along these lines: Anthropic, OpenAI, Google, and Meta will all develop more structured approaches to enterprise AI services, whether through partnerships, joint ventures, or in-house capabilities. The API-first model isn't going away, but it will increasingly be the "base layer" while the value migrates upward to implementation, governance, and ongoing management.

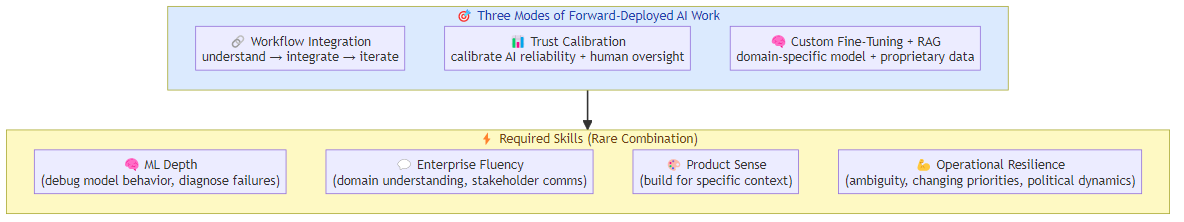

For AI engineers and technical decision-makers: the enterprise AI services layer is becoming a distinct career and business category. The forward-deployed AI engineering skills I wrote about last week are exactly the capabilities that these joint ventures will need. The integration work — making AI actually work inside enterprise environments — is where the next wave of value creation happens.

Related posts:

- Enterprise AI's $5.5B Week: the capital dynamics reshaping enterprise AI.

- Forward-Deployed AI Engineering: the new career category emerging from enterprise AI deployment.

- AI Agents That Spend Money: the infrastructure for autonomous AI in enterprise contexts.

- Sierra's $950M Raise: the pure-play enterprise AI agent company that's validating the market at $15B.