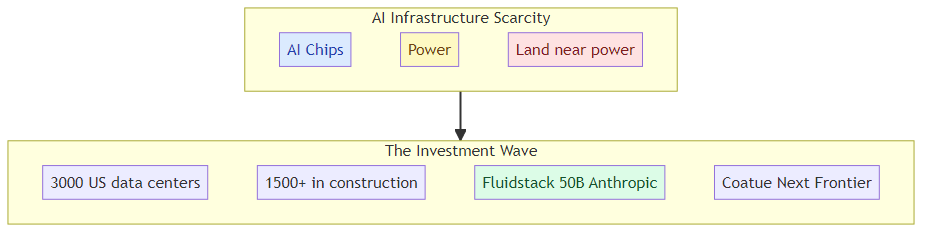

The AI chip shortage was the first infrastructure crisis. The power shortage is the second.

Coatue just launched a venture called "Next Frontier" to acquire land near large power sources and convert it into data centers. They already signed a joint venture with Fluidstack — a cloud infrastructure startup that penned a $50 billion deal to build data centers for Anthropic.

This isn't just Coatue being creative with their AI portfolio. It's a signal that the bottleneck in AI infrastructure has shifted from chips to real estate — specifically, land that's near enough to power sources to be economically viable for data center construction.

Why Land Became the Scarce Resource

Data centers need power. Massive amounts of it — each large facility draws as much electricity as a small town. The constraint isn't just having power available; it's having power available at a location where you can build a data center economically.

Land near existing power infrastructure — substations, transmission lines, renewable energy installations — is finite. The hyperscalers and AI labs have spent the last two years frantically securing power agreements, but the real estate underneath those power connections is now its own asset class.

The numbers are striking: the US has approximately 3,000 data centers today, with over 1,500 more in various stages of construction. If all of those are completed, the facility count grows by roughly half. That's a construction wave unlike anything in the industry's history, and it's happening because AI training and inference demand has created genuine power hunger that traditional data center capacity can't satisfy.

The Coatue Play: Land as Infrastructure Moat

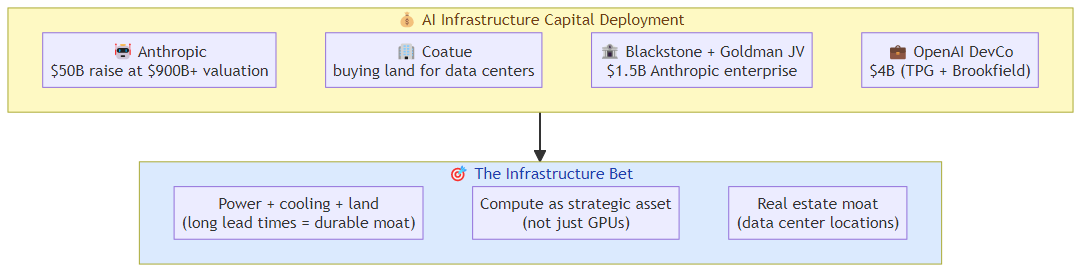

Coatue already has equity stakes in Anthropic, OpenAI, xAI, and data center companies like DayOne and CoreWeave. They understand the AI infrastructure landscape from the capital side. Now they're making a physical real estate bet that extends beyond traditional venture capital.

Next Frontier's strategy is straightforward: acquire land near power sources before the AI companies that need those locations do. Then develop the land into data centers, either for their portfolio companies or for the highest bidder.

This is the venture capital equivalent of buying property in a boomtown before the population arrives. Fluidstack's $50B deal to build data centers for Anthropic suggests there's demand for exactly this kind of capacity: demand so large and so urgent that companies are willing to sign long-term, multi-year deals for data center construction.

What $50B for Anthropic Data Centers Means

Fluidstack's deal with Anthropic, separate from its joint venture with Coatue, is among the largest data center commitments in AI infrastructure so far. $50B isn't a typo. It's a multi-year construction and operations commitment that reflects how seriously Anthropic (and its investors) are taking the infrastructure buildout.

To put that number in context: $50B is more than the GDP of many small countries. It's only a small fraction of what the US government spends on defense in a year (well above $800 billion), but it's an enormous sum for one company's infrastructure. And it's being committed to building physical infrastructure for AI inference.

Why does Anthropic need $50B in data center capacity? Because inference at scale is expensive in ways that training isn't. A training run is a bounded cost: it runs hot for a few months and then you have a model. Inference runs continuously, for every user query, for the life of the model. And as AI adoption grows, total inference load can grow faster than efficiency improvements bring down the cost of each query.

If the AI labs are right about the trajectory of AI usage, $50B in data center capacity for a single AI company is actually conservative. The question isn't whether the capacity will be used — it's whether the deals being signed now will be profitable when the infrastructure comes online.

The Blackstone and Kevin O'Leary Signal

The fact that Blackstone is involved in data center financing and Kevin O'Leary is buying land for data centers tells you this has moved beyond tech industry speculation into institutional real estate territory.

When traditional real estate investors start treating AI infrastructure land as a distinct asset class, you're in a different phase of the market. The "AI is a bubble" argument used to focus on model companies with no revenue. Now it needs to account for companies that are building genuine physical infrastructure — just at an unprecedented scale.

For AI Builders

If you're building AI systems that depend on cloud infrastructure, the data center land grab has direct implications for your planning:

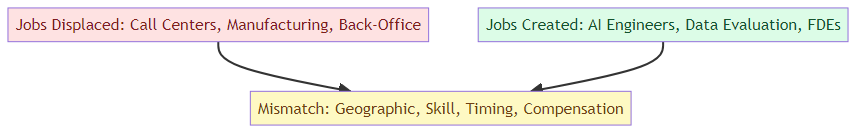

Availability risk: As large AI companies lock up data center capacity through long-term deals, available infrastructure for smaller players becomes scarcer. Your ability to get GPU time at competitive prices depends partly on whether the major providers have reserved their capacity for anchor tenants.

Geographic constraints: The power-limited locations aren't evenly distributed. If the data center buildout concentrates in specific regions (rural areas with renewable power, or near existing grid infrastructure), the geographic distribution of AI infrastructure will be uneven. This affects latency and availability depending on where your users are.

Contract structures: The $50B Anthropic deal signals that AI labs are willing to sign multi-year infrastructure commitments. This means the economics of AI infrastructure are being locked in for years, which could make it harder for new players to enter or existing players to renegotiate.

The land beneath the AI boom is becoming as valuable as the chips that run on it. The infrastructure race has a new most-scarce resource.
