The most interesting thing about the OpenAI trial isn't the corporate drama. It's Stuart Russell — one of the most respected AI researchers in the world, author of the standard AI textbook, professor at UC Berkeley — testifying that he's concerned about an AGI arms race.

This matters because Russell isn't a sensationalist. He's a careful scientist who has spent decades thinking about AI risk from a technical perspective. When he says he's worried about an AGI arms race, it's worth understanding exactly what he means and why it matters.

Who Stuart Russell Is and Why His Views Matter

Russell co-authored "Artificial Intelligence: A Modern Approach" with Peter Norvig. It is the most widely used AI textbook in the world and a standard text in university AI courses. He is a technical researcher who has spent his career building formal frameworks for understanding AI systems, which is why his warnings are hard to dismiss as alarmism.

His concern about AGI arms races isn't based on science fiction. It's based on a technical analysis of what happens when multiple organizations are racing to build increasingly capable AI systems without adequate coordination on safety protocols.

The core of his argument: if multiple actors are simultaneously pushing toward AGI — OpenAI, Anthropic, Google DeepMind, xAI, Meta AI, and others — and each is racing to be first, the safety investments that should accompany frontier AI development get compressed or skipped. The incentive structure of competition creates pressure to cut corners on safety verification.

What an AGI Arms Race Actually Looks Like

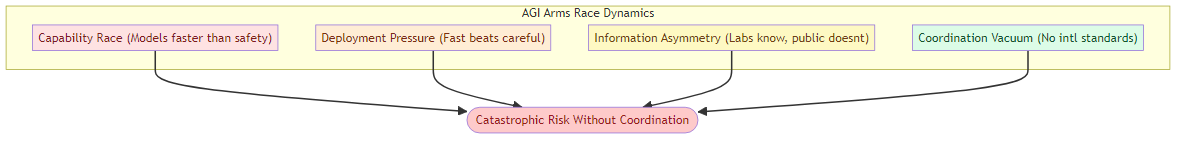

The term "AGI arms race" gets thrown around loosely. Russell's version of it isn't about killer robots — it's more subtle and more concerning:

Capability race without safety equivalence: each frontier AI lab is pushing to build more capable systems. The investment in safety evaluation — understanding what a system can and can't do before deployment — doesn't scale with capability investment. As labs compete to build bigger, more capable models faster, the safety verification gap widens.

Deployment pressure from competition: if Lab A deploys a frontier model, Lab B feels pressure to deploy their frontier model before the competitive window closes. This creates deployment pressure that can override safety review processes.

Information asymmetry on risks: the safety risks of frontier AI aren't publicly verifiable in real time. Labs have information about their systems' capabilities and limitations that external observers don't have. Because disclosing a risk can invite scrutiny or delay a launch, a lab that withholds safety information gains a strategic advantage, which gives every lab an incentive not to disclose.

International coordination vacuum: nuclear weapons eventually came under arms control treaties such as the Non-Proliferation Treaty, though only after several states had already built them. Frontier AI has no comparable framework. There are no effective international agreements on AI safety or capability limits, and multiple countries are developing frontier AI simultaneously without coordination.
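The deployment-pressure dynamic above has the shape of a classic prisoner's dilemma, and a toy model makes that concrete. The sketch below is an illustration only, not Russell's own formalism: the two labs, the "review" and "rush" actions, and every payoff number are hypothetical, chosen so that rushing is individually tempting while mutual review is collectively better.

```python
# Toy two-lab deployment game. All names and payoff numbers are hypothetical,
# chosen only to illustrate the incentive structure; they estimate nothing.
from itertools import product

ACTIONS = ("review", "rush")

# PAYOFFS[(action_a, action_b)] = (payoff to Lab A, payoff to Lab B)
PAYOFFS = {
    ("review", "review"): (3, 3),  # both complete safety review
    ("review", "rush"): (0, 4),    # B ships first and takes the market
    ("rush", "review"): (4, 0),    # A ships first and takes the market
    ("rush", "rush"): (1, 1),      # both skip review, shared risk
}


def best_response(player, other_action):
    """Return the action maximizing `player`'s payoff given the other lab's action."""
    def payoff(action):
        profile = (action, other_action) if player == 0 else (other_action, action)
        return PAYOFFS[profile][player]
    return max(ACTIONS, key=payoff)


def nash_equilibria():
    """Return every action pair where neither lab gains by deviating alone."""
    return [
        (a, b)
        for a, b in product(ACTIONS, repeat=2)
        if best_response(0, b) == a and best_response(1, a) == b
    ]


if __name__ == "__main__":
    print(nash_equilibria())  # [('rush', 'rush')]
```

Under these assumed payoffs, rushing is each lab's best response no matter what the other does, so the only equilibrium is mutual rushing, even though both labs would be better off if both completed review. Changing the numbers changes the outcome, which is the point of coordination: it alters the payoffs rather than relying on individual restraint.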

Why the Arms Race Analogy Is Apt and Important

The nuclear arms race analogy is precise in one important way: the risks of the technology are genuinely catastrophic at sufficient scale, and the competitive dynamics create pressure to develop and deploy faster than safety measures can keep pace.

But there's also a critical difference: nuclear weapons are inherently military technologies. AGI capabilities, even if they originate in military contexts, have broad civilian applications. This means the arms race dynamics are complicated by commercial incentives — every major AI lab is both racing for competitive advantage and developing technology with potential military implications.

The combination of commercial competition and strategic competition (between nations) creates dynamics that are harder to manage than either alone.

What Russell Is Actually Proposing

Russell has argued consistently for years that the path forward requires:

Alignment research as a prerequisite: before building AGI-level systems, we need to have solved the alignment problem — how to ensure that a system more intelligent than humans is actually pursuing human-intended goals. We don't have a solution to this problem yet.

Coordination on safety standards: international frameworks for AI safety, similar to nuclear safety agreements, that create common standards for frontier AI development and deployment.

Verification mechanisms: ways to verify that frontier AI systems have been safety-tested before deployment, similar to nuclear test ban treaties.

None of these are currently in place. And the current competitive dynamics are moving faster than the coordination frameworks are developing.

The Industry Response

The AI labs have mostly responded to arms race concerns by arguing that competitive pressure actually improves safety: more people working on AI means more people thinking about safety, and market forces reward safe products.

This argument has some merit in the middle of the capability development curve. But it breaks down at the frontier, where the safety questions are genuinely novel and the competitive pressure is highest. At the frontier, you can't learn from everyone else's safety failures because you're the first to encounter the failure mode.

What This Means for AI Builders

For people building AI systems, Russell's testimony is a reminder that the competitive dynamics of the AI industry are not aligned with the safety dynamics of frontier AI development. This doesn't mean don't build — it means build with awareness of the systemic pressures that are pushing the industry faster than safety frameworks can keep pace.

The practical implication: scale safety investment at least in proportion to capability investment. If verification gets harder faster than capability grows, as the widening safety verification gap suggests, then a fixed safety budget falls further behind with each more capable system.

The arms race isn't inevitable. But avoiding it requires choices that the current competitive dynamics don't incentivize. That's the gap Russell is pointing at — and it's a gap that matters for everyone building at the frontier.

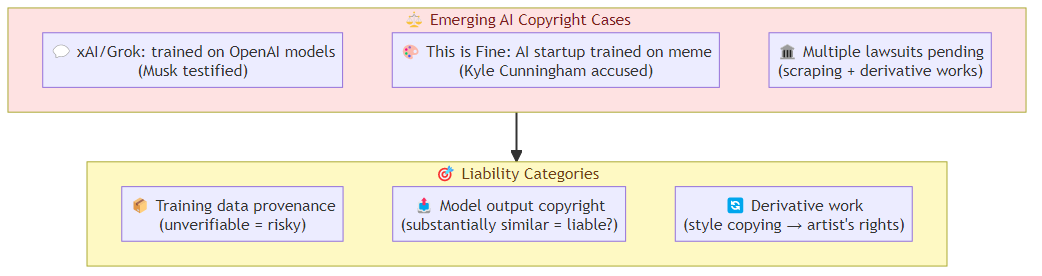
