The Pentagon's reported deals with Nvidia, Microsoft, and AWS to deploy AI on classified networks represent a fundamental shift in how the US government approaches AI infrastructure. The significance goes beyond military applications: the government is establishing security and deployment standards that are likely to ripple into the commercial sector.

The pattern from previous technology cycles (cloud computing, mobile devices, encryption) is consistent: the government sets requirements, the prime contractors build to meet them, and the commercial sector adopts the resulting practices as de facto standards. AI infrastructure is on the same path.

What the Contracts Actually Cover

The reported deals involve AI deployment on classified networks — infrastructure that can handle sensitive government data, integrate with existing classified systems, and meet the security requirements that government procurement mandates. This is a different class of AI infrastructure than what most commercial enterprises use.

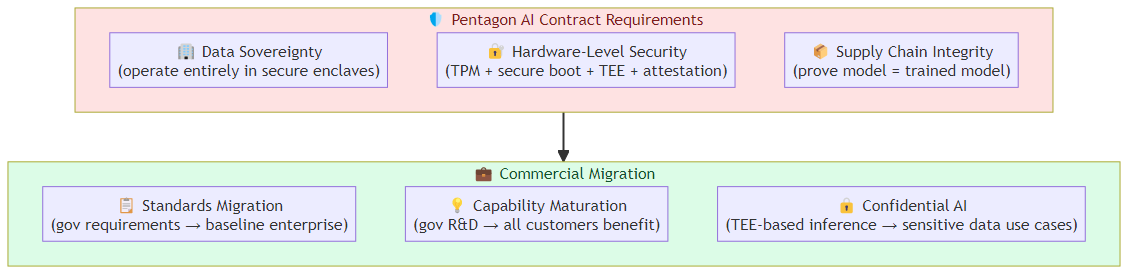

The key requirements these contracts impose:

Data sovereignty and residency: Classified data can't leave controlled environments. AI systems that process classified data must run entirely within accredited secure enclaves and cannot send data to external cloud infrastructure. This drives investment in on-premises and hybrid AI deployment architectures, the same architectures commercial enterprises are increasingly adopting for sensitive data.

Hardware-level security: The Pentagon's AI contracts require hardware that meets specific security standards, including TPMs (Trusted Platform Modules), secure boot chains, hardware attestation, and, increasingly, TEE (Trusted Execution Environment) capabilities such as Intel TDX and AMD SEV-SNP. These hardware security requirements were once largely unique to government procurement; they now appear in commercial enterprise security requirements as well.

Supply chain integrity: The contracts include requirements for AI model supply chain verification, meaning proof that the model being deployed is the model that was trained, without unauthorized modifications. This is harder than it sounds, because a model's provenance spans training data, training code, the resulting weights, and every packaging and transfer step before deployment. The solutions developed for government contracts are likely to migrate to commercial practice.
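Supply chain verification can take many forms. The sketch below shows one common building block and assumes the model artifacts live in a local directory. At training time, the pipeline records a SHA-256 digest of every file and signs that manifest. At deployment time, it refuses the model if the signature or any digest does not match. The names `build_manifest`, `verify_model`, and `SIGNING_KEY` are hypothetical. HMAC-SHA256 stands in for the asymmetric signatures a production pipeline would use, so the example needs only the Python standard library.

```python
import hashlib
import hmac
import json
import tempfile
from pathlib import Path

# HYPOTHETICAL shared key. Production pipelines would use asymmetric signatures
# so that verifiers cannot forge manifests; HMAC keeps this sketch stdlib-only.
SIGNING_KEY = b"hypothetical-build-pipeline-key"


def file_digest(path: Path) -> str:
    """SHA-256 of one file, read in 1 MiB chunks so large weight files stream."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def digest_tree(model_dir: Path) -> dict[str, str]:
    """Map every file under model_dir (relative POSIX path) to its digest."""
    return {
        p.relative_to(model_dir).as_posix(): file_digest(p)
        for p in sorted(model_dir.rglob("*"))
        if p.is_file()
    }


def sign(files: dict[str, str]) -> str:
    """Sign a canonical (sorted-key) JSON encoding of the digest map."""
    payload = json.dumps(files, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def build_manifest(model_dir: Path) -> dict:
    """Training side: digest every artifact and sign the result."""
    files = digest_tree(model_dir)
    return {"files": files, "signature": sign(files)}


def verify_model(model_dir: Path, manifest: dict) -> bool:
    """Deployment side: reject a forged manifest, then any changed artifact."""
    if not hmac.compare_digest(sign(manifest["files"]), manifest["signature"]):
        return False
    return digest_tree(model_dir) == manifest["files"]


def demo() -> tuple[bool, bool]:
    """Verify an untouched model directory, then the same directory after tampering."""
    with tempfile.TemporaryDirectory() as d:
        model_dir = Path(d)
        (model_dir / "weights.bin").write_bytes(b"\x00\x01\x02")
        (model_dir / "config.json").write_text('{"layers": 2}')
        manifest = build_manifest(model_dir)
        before = verify_model(model_dir, manifest)
        (model_dir / "weights.bin").write_bytes(b"\x00\x01\x03")  # simulated tampering
        after = verify_model(model_dir, manifest)
    return before, after
```

The manifest covers every file. Adding, removing, or modifying any artifact therefore changes the recomputed digest map and fails verification. The shared HMAC key is the weak point in this sketch, because anyone who can verify can also sign. That is why real deployments prefer public-key signatures.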

Why the Government AI Bar Matters for Commercial Enterprise

The government doesn't set technology requirements gently. FedRAMP (for cloud services), CMMC (for defense contractors), and sector-specific regulations impose requirements that are specific, auditable, and enforced through authorizations, contract eligibility, and penalties. When the government signs contracts with Nvidia, Microsoft, and AWS for classified AI, those vendors must meet requirements that far exceed what most commercial customers ask for.

The commercial sector benefits from this in two ways:

Standards migration: Requirements developed for government procurement become baseline expectations for sensitive commercial deployments. Healthcare organizations processing PHI (protected health information), financial institutions handling customer data, and law firms managing privileged information all face regulatory requirements similar in structure to government requirements. The practices developed for government AI contracts provide a template.

Capability maturation: The investment that prime contractors make to meet government requirements benefits all of their customers. Microsoft's investment in secure AI deployment infrastructure for the Pentagon can benefit its Azure OpenAI commercial customers, and AWS's investment in confidential computing for government contracts can benefit its enterprise cloud customers. Government procurement partially subsidizes the R&D, while the resulting capabilities flow to every customer.

The Confidential AI Implications

The classified network requirement drives investment in confidential AI — AI systems where data and inference operations are protected at the hardware level, not just the software level. This is relevant to enterprise AI because confidential AI addresses one of the primary barriers to AI adoption in regulated industries: the concern that sensitive data used in AI inference could be exposed or misused.

The hardware security mechanisms required for classified AI deployments (TEE-based inference, encrypted memory, attestation chains) are the same mechanisms that let enterprises use AI safely with sensitive data. A healthcare system that wants AI to assist with patient diagnosis needs to know that the AI system can't access or expose patient data beyond what the specific inference requires. Hardware-level confidential computing provides a key part of that guarantee. Data stays encrypted in memory and isolated from the host operating system, hypervisor, and cloud operator, and attestation lets the data owner verify exactly what code is running before releasing any data to it.
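Attestation is what lets a data owner trust an enclave it cannot inspect. The sketch below simulates that pattern under stated assumptions. Each boot component is hashed into a TPM-style register as `SHA256(old || SHA256(component))`. The enclave's report is bound to a fresh nonce. A key-release check hands over the data key only if the report is genuine, fresh, and matches the expected measurement. The shared `HARDWARE_KEY` and all function names are hypothetical. Real TDX and SEV-SNP reports are signed with hardware-rooted asymmetric keys and verified against vendor certificate chains.

```python
import hashlib
import hmac
import secrets

# HYPOTHETICAL stand-in for a hardware-rooted signing key. Real TEEs sign
# attestation reports with asymmetric keys chained to the CPU vendor.
HARDWARE_KEY = b"hypothetical-hardware-root-key"


def extend(register: bytes, component: bytes) -> bytes:
    """TPM-style extend: new = SHA256(old || SHA256(component)); order matters."""
    return hashlib.sha256(register + hashlib.sha256(component).digest()).digest()


def measure(boot_chain: list[bytes]) -> bytes:
    """Fold every component into a register that starts zeroed at reset."""
    register = bytes(32)
    for component in boot_chain:
        register = extend(register, component)
    return register


def make_report(boot_chain: list[bytes], nonce: bytes) -> dict:
    """Enclave side: sign the measurement together with the verifier's nonce."""
    measurement = measure(boot_chain)
    signature = hmac.new(HARDWARE_KEY, measurement + nonce, hashlib.sha256).digest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def release_key_if_trusted(report: dict, nonce: bytes, expected: bytes, data_key: bytes):
    """Data-owner side: return the data key only for a genuine, fresh, expected enclave."""
    signed = report["measurement"] + report["nonce"]
    genuine = hmac.compare_digest(
        hmac.new(HARDWARE_KEY, signed, hashlib.sha256).digest(), report["signature"]
    )
    fresh = hmac.compare_digest(report["nonce"], nonce)  # blocks replayed reports
    expected_code = hmac.compare_digest(report["measurement"], expected)
    return data_key if (genuine and fresh and expected_code) else None


def demo() -> tuple[bool, bool, bool]:
    """Trusted enclave gets the key; patched kernel and replayed report do not."""
    golden = [b"firmware-v1", b"bootloader-v1", b"kernel-v1", b"inference-server-v1"]
    expected = measure(golden)
    data_key = secrets.token_bytes(32)
    nonce = secrets.token_bytes(16)

    good = release_key_if_trusted(make_report(golden, nonce), nonce, expected, data_key)

    patched = golden[:2] + [b"kernel-v1-patched"] + golden[3:]
    tampered = release_key_if_trusted(make_report(patched, nonce), nonce, expected, data_key)

    old_report = make_report(golden, secrets.token_bytes(16))  # bound to a stale nonce
    replayed = release_key_if_trusted(old_report, nonce, expected, data_key)

    return good == data_key, tampered is None, replayed is None
```

Extension is order-dependent and one-way. Changing any component, or the order in which components load, therefore produces a different final measurement. A patched kernel or a swapped model server fails the check even though its report carries a valid signature.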

The commercial availability of confidential AI capabilities has improved dramatically in the past 18 months. AWS Nitro Enclaves, Azure Confidential Computing, and Google Cloud Confidential Computing all offer production-grade confidential computing infrastructure for AI workloads. These capabilities were developed or matured in part to meet government requirements and are now available commercially.

What the Contract Structure Tells Us

The structure of these contracts, with multiple primes awarded the same work and each bringing a different specialization, signals how the government is responding to a consolidating AI infrastructure market. The Pentagon's strategy appears to be deliberate diversification. Rather than depending on a single vendor for AI infrastructure, it is building relationships with multiple primes that can provide different capabilities.

For commercial enterprises, the lesson is different but related. The AI infrastructure market is consolidating around a small number of very large vendors, and the government is actively working to preserve competitive alternatives. Commercial enterprises should do the same and avoid single-vendor dependency for critical AI infrastructure, even as consolidation pressures increase.
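In practice, avoiding single-vendor dependency usually starts with an abstraction layer that the organization owns. The sketch below assumes a simple text-completion workload. Application code depends only on a small `InferenceProvider` interface, and a router tries providers in preference order. `InferenceProvider`, `FailoverRouter`, and `FakeProvider` are hypothetical names, not any vendor's SDK. In a real system, each provider would be an adapter around a specific vendor's client library.

```python
from dataclasses import dataclass
from typing import Protocol


class ProviderError(Exception):
    """Raised by a provider adapter when a request cannot be served."""


class InferenceProvider(Protocol):
    """The only surface application code is allowed to depend on."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class FakeProvider:
    """Stand-in for a vendor adapter; `healthy` simulates an outage."""

    name: str
    healthy: bool = True

    def complete(self, prompt: str) -> str:
        if not self.healthy:
            raise ProviderError("service unavailable")
        return f"[{self.name}] {prompt}"


@dataclass
class FailoverRouter:
    """Tries providers in preference order and returns the first success."""

    providers: list[InferenceProvider]

    def complete(self, prompt: str) -> tuple[str, str]:
        errors: list[str] = []
        for provider in self.providers:
            try:
                return provider.name, provider.complete(prompt)
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    router = FailoverRouter([FakeProvider("vendor-a", healthy=False), FakeProvider("vendor-b")])
    print(router.complete("summarize the report"))
    # prints ('vendor-b', '[vendor-b] summarize the report')
```

Failover is the most visible benefit, but portability matters more. Adding or replacing a vendor means writing one adapter rather than rewriting every caller.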

The Broader Signal

The Pentagon's AI bet is part of a broader pattern in which AI is treated as critical national infrastructure. Just as the electrical grid, telecommunications, and financial systems are treated as strategic assets requiring government attention, AI infrastructure is now receiving similar treatment.

This has implications for the AI industry that go beyond the specific contracts:

Export controls on AI: The government already restricts exports of the most advanced AI chips and has also pursued controls on frontier model weights. These controls shape how AI infrastructure is built and deployed globally.

Antitrust considerations: As AI infrastructure consolidates around a small number of very large players, antitrust concerns are likely to grow. The government has shown willingness to intervene in technology market consolidation, as the recent antitrust cases against major tech platforms show, and AI infrastructure could be the next focus.

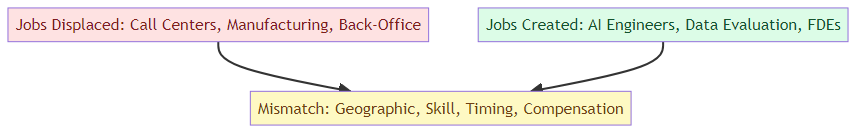

Workforce and talent: The government's AI ambitions are constrained by the same talent shortage affecting the commercial sector. The competition for AI talent between government and commercial sectors will intensify, with implications for both compensation and workforce availability.

The Bottom Line

The Pentagon's AI contracts with Nvidia, Microsoft, and AWS are significant not because of the specific applications (classified networks are not relevant to most commercial enterprises) but because of the standards they establish.

The security requirements, the confidential AI infrastructure, and the supply chain verification practices are all likely to migrate into commercial best practice. In effect, the government is funding AI infrastructure R&D that the commercial sector will use.

For enterprise AI teams, the standards being set in government AI contracts preview where regulatory and compliance requirements are heading. Investing now in compliance-ready AI infrastructure (confidential computing, hardware attestation, and supply chain verification) positions you well for when those requirements become mandatory.

The government bet on AI is setting the bar. The commercial sector will follow.

Related posts: Confidential AI for Agentic Systems — the TEE-based confidential AI architecture. AI Infrastructure Readiness — the infrastructure stack for production AI agents. Agent Security Hardening — the four-layer defense architecture.