The conversation about AI governance has shifted.

A year ago, governance was mostly about policy documents and ethical frameworks — important but abstract. Today, it's about production systems that make decisions, take actions, and move money without humans watching every step. The shift from "AI should be governed" to "AI must be governed in production" is where the hard work is happening.

The context is becoming more urgent. The Pentagon's AI contracts for classified networks, enterprise AI deployments reaching scale, and the EU AI Act coming into enforcement all point to the same realization: governance frameworks that look good on paper must be translated into technical enforcement mechanisms that hold up while the system is actually running.

I've written about agentic AI threat models, security hardening, and enterprise deployment. This post is about the governance layer that sits above all of that — the frameworks that determine what agents are allowed to do, how compliance is verified, and what happens when things go wrong.

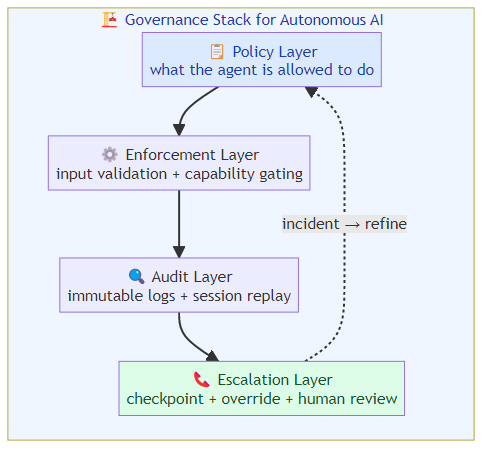

The Governance Stack for Autonomous AI

Governance for autonomous AI isn't one thing — it's a stack of mechanisms at different layers:

Policy layer: What is the agent allowed to do? This is the specification of boundaries, typically written as rules, role definitions, and capability constraints. The policy layer is where human judgment about acceptable risk gets encoded.

Technical enforcement layer: How are the policy rules enforced in the running system? This includes input validation, capability gating, output filtering, and behavioral monitoring. The enforcement layer is where policy meets implementation.

Audit layer: What did the agent do, and was it within policy? This requires immutable logging, session replay capability, and compliance reporting. The audit layer is where accountability is established after the fact.

Escalation layer: What happens when the agent approaches a boundary? This includes human-in-the-loop checkpoints, confidence threshold alerts, and override mechanisms. The escalation layer is where governance catches problems before they become incidents.
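The policy and enforcement layers can be sketched together in a few lines of Python. This is a minimal sketch under stated assumptions, not a reference implementation. It assumes a hypothetical `RolePolicy` schema made up of a role name, an allow-list of capabilities, and optional numeric limits. It also assumes a hypothetical `enforce` decorator that checks the policy before a tool runs and redacts card-number-like strings from the tool's output. The tools, role, and limits are illustrative and are not drawn from any particular framework.

```python
import functools
import re
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class RolePolicy:
    """Policy layer: declarative boundaries for one agent role (hypothetical schema)."""
    role: str
    allowed_capabilities: frozenset[str]
    # Optional per-capability ceiling on an `amount` argument, e.g. USD.
    limits: dict[str, float] = field(default_factory=dict)


class PolicyViolation(Exception):
    """Raised when a tool call falls outside the role's policy."""


# Illustrative output filter: mask anything that looks like a 16-digit card number.
CARD_NUMBER = re.compile(r"\b\d{16}\b")


def enforce(policy: RolePolicy, capability: str) -> Callable:
    """Enforcement layer: check the policy before the tool runs, filter output after."""
    def decorator(tool: Callable[..., str]) -> Callable[..., str]:
        @functools.wraps(tool)
        def gated(*args, **kwargs) -> str:
            # Deny by default: capabilities not on the allow-list never run.
            if capability not in policy.allowed_capabilities:
                raise PolicyViolation(f"{policy.role} may not use {capability!r}")
            # Only an `amount` keyword argument is checked in this sketch.
            limit = policy.limits.get(capability)
            amount = kwargs.get("amount", 0.0)
            if limit is not None and amount > limit:
                raise PolicyViolation(f"{capability!r} amount {amount} exceeds limit {limit}")
            return CARD_NUMBER.sub("[REDACTED]", tool(*args, **kwargs))
        return gated
    return decorator


# Usage with hypothetical tools for a customer-support agent.
support = RolePolicy(
    role="support-agent",
    allowed_capabilities=frozenset({"lookup_customer", "issue_refund"}),
    limits={"issue_refund": 500.0},
)


@enforce(support, "lookup_customer")
def lookup_customer(customer_id: str) -> str:
    return f"customer {customer_id}, card 4111111111111111"


@enforce(support, "issue_refund")
def issue_refund(customer_id: str, amount: float) -> str:
    return f"refunded {amount} to {customer_id}"


@enforce(support, "modify_config")
def modify_config(key: str, value: str) -> str:
    return f"{key}={value}"


print(lookup_customer("c-42"))             # customer c-42, card [REDACTED]
print(issue_refund("c-42", amount=120.0))  # refunded 120.0 to c-42
# issue_refund("c-42", amount=5000.0) and modify_config("k", "v") raise PolicyViolation.
```

Keeping the policy as data lets it be reviewed and versioned like any other code. The decorator ensures that a tool wrapped this way cannot run outside the policy.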

Most governance failures I see in enterprise AI deployments happen because organizations invest in the policy layer and underinvest in the other three. A policy document that says "the agent must escalate high-stakes decisions to human review" is meaningless if the technical implementation doesn't actually enforce escalation.
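To make "must escalate" enforceable rather than aspirational, the decision can be routed through a function that has no path to execution without approval. This is a minimal sketch. It assumes hypothetical thresholds (`HIGH_STAKES_USD`, `MIN_CONFIDENCE`) and an agent-reported confidence score. It also uses a boolean `human_approved` flag as a stand-in for what would really be a recorded human approval.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate"


@dataclass
class ProposedAction:
    name: str
    amount_usd: float
    confidence: float  # agent-reported confidence in [0, 1] (assumed available)


# Illustrative thresholds; in a real system these would come from the policy layer.
HIGH_STAKES_USD = 5_000.0
MIN_CONFIDENCE = 0.8


def route(action: ProposedAction, human_approved: bool = False) -> Route:
    """High-stakes or low-confidence actions cannot reach EXECUTE without approval."""
    needs_review = action.amount_usd >= HIGH_STAKES_USD or action.confidence < MIN_CONFIDENCE
    if needs_review and not human_approved:
        return Route.ESCALATE
    return Route.EXECUTE


print(route(ProposedAction("pay_invoice", 12_000, 0.95)))                       # Route.ESCALATE
print(route(ProposedAction("pay_invoice", 12_000, 0.95), human_approved=True))  # Route.EXECUTE
print(route(ProposedAction("pay_invoice", 200, 0.5)))                           # Route.ESCALATE
print(route(ProposedAction("pay_invoice", 200, 0.9)))                           # Route.EXECUTE
```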

What Enterprise Governance Actually Looks Like

In my work with enterprise AI deployments, the governance patterns that actually hold up in production have been more specific than the policy frameworks suggest.

Capability gating by risk class: Rather than a single governance policy applied to all agent tasks, the most effective approach is risk-stratified capability gating. Low-risk tasks (querying a knowledge base, summarizing a document) can proceed with minimal oversight. High-risk tasks (executing financial transactions, modifying system configurations, generating external communications) require explicit authorization and post-action review.

The implementation: the orchestration layer assigns a risk class to each task before dispatch, and routes accordingly. High-risk tasks hit a human approval queue. Medium-risk tasks proceed with enhanced logging. Low-risk tasks run autonomously with standard logging.
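The routing step can be sketched as follows. The sketch assumes a hypothetical `TASK_RISK` table that maps task types to risk classes. The medium-risk example task and the fail-closed default for unknown task types are illustrative choices. They are not part of any specific orchestration framework.

```python
from enum import Enum


class RiskClass(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical task-type table; anything not listed is treated as HIGH (fail closed).
TASK_RISK = {
    "query_knowledge_base": RiskClass.LOW,
    "summarize_document": RiskClass.LOW,
    "draft_internal_note": RiskClass.MEDIUM,
    "execute_payment": RiskClass.HIGH,
    "modify_system_config": RiskClass.HIGH,
    "send_external_email": RiskClass.HIGH,
}


def dispatch(task_type: str, approval_queue: list[str], log: list[tuple[str, str]]) -> str:
    """Assign a risk class before dispatch and route the task accordingly."""
    risk = TASK_RISK.get(task_type, RiskClass.HIGH)
    if risk is RiskClass.HIGH:
        approval_queue.append(task_type)  # waits for a human decision
        return "queued_for_approval"
    logging_level = "enhanced" if risk is RiskClass.MEDIUM else "standard"
    log.append((task_type, logging_level))
    return "dispatched"


queue: list[str] = []
log: list[tuple[str, str]] = []
for task in ["summarize_document", "draft_internal_note", "execute_payment", "unknown_tool"]:
    print(task, "->", dispatch(task, queue, log))
print(queue)  # ['execute_payment', 'unknown_tool']
print(log)    # [('summarize_document', 'standard'), ('draft_internal_note', 'enhanced')]
```

Defaulting unknown task types to the highest risk class means that a newly added tool gets human review until someone deliberately classifies it.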

Behavioral baselines with anomaly detection: The most effective governance mechanism I've seen in production isn't preventing specific actions — it's establishing behavioral baselines and alerting when the agent's behavior deviates. An agent that suddenly starts making more tool calls, accessing different data sources, or generating longer outputs than its baseline is flagged for review, even if none of the individual actions violate policy.

The intuition: agents rarely fail all at once. One wrong tool call is a mistake; two wrong tool calls in the same session are a pattern. Agents that have been compromised or are experiencing unexpected context drift tend to show behavioral signatures before they cause harm.
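One simple way to implement behavioral baselines is to compute a per-metric z-score against past sessions. This sketch reduces each session to a few hypothetical numeric metrics: tool calls, a count of distinct data sources, and output tokens. Counting data sources is a simplification, since it does not detect a switch to a *different* source. The sketch flags any metric more than `z_threshold` standard deviations from its historical mean. Production systems would likely need richer features and more robust statistics.

```python
import statistics


def fit_baseline(history: list[dict[str, float]]) -> dict[str, tuple[float, float]]:
    """Mean and sample standard deviation of each metric (needs at least two sessions)."""
    baseline = {}
    for metric in history[0]:
        values = [session[metric] for session in history]
        baseline[metric] = (statistics.mean(values), statistics.stdev(values))
    return baseline


def anomalies(session: dict[str, float],
              baseline: dict[str, tuple[float, float]],
              z_threshold: float = 3.0) -> list[str]:
    """Metrics in this session that deviate from the baseline by more than z_threshold sigmas."""
    flagged = []
    for metric, (mean, stdev) in baseline.items():
        value = session[metric]
        if stdev == 0:
            # No historical variation: any change at all counts as a deviation.
            if value != mean:
                flagged.append(metric)
        elif abs(value - mean) / stdev > z_threshold:
            flagged.append(metric)
    return flagged


history = [
    {"tool_calls": 4, "data_sources": 2, "output_tokens": 800},
    {"tool_calls": 5, "data_sources": 2, "output_tokens": 900},
    {"tool_calls": 6, "data_sources": 2, "output_tokens": 850},
]
baseline = fit_baseline(history)
current = {"tool_calls": 14, "data_sources": 4, "output_tokens": 870}
print(anomalies(current, baseline))  # ['tool_calls', 'data_sources']
```

None of the flagged values would break a policy rule on its own. The alert fires only because the session looks unlike the agent's own history.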

Immutable audit logs with cryptographic integrity: Every agent action is logged in a hash chain, so audit logs can't be modified retroactively without the change being detected. The cryptographic integrity requirement sounds extreme, but tamper-evident audit trails are often needed to satisfy financial regulations, healthcare privacy rules, and government contracting requirements.

The implementation doesn't need to be complex — a simple hash chain where each log entry includes the hash of the previous entry provides tamper-evident logging that's sufficient for most compliance purposes.
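Here is a sketch of such a hash chain using Python's standard `hashlib` and `json` modules. The entry format and the all-zero genesis hash are assumptions made for illustration. Each entry's hash covers both its action and the previous entry's hash. As a result, editing any past entry breaks verification from that point on.

```python
import copy
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(action: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    payload = json.dumps({"action": action, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], action: dict) -> dict:
    """Append an action whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    action = copy.deepcopy(action)  # later edits to the caller's dict must not leak in
    entry = {"action": action, "prev_hash": prev_hash, "hash": _entry_hash(action, prev_hash)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute the chain; edited or reordered entries, or gaps in the middle, break it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(entry["action"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"agent": "billing-agent", "tool": "issue_refund", "amount": 120})
append_entry(audit_log, {"agent": "billing-agent", "tool": "send_receipt", "to": "c-42"})
print(verify(audit_log))               # True
audit_log[0]["action"]["amount"] = 12  # retroactive edit
print(verify(audit_log))               # False
```

The chain is tamper-evident, not tamper-proof. Someone who can rewrite the entire log can recompute every hash, and dropping entries from the end still leaves a valid, shorter chain. Periodically recording the latest hash in separate storage closes both gaps.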

Where Governance Frameworks Still Have Gaps

Despite progress, the governance frameworks for autonomous AI have specific gaps that current approaches don't address well:

Cross-agent accountability: When multiple agents coordinate to complete a task, which agent is accountable for the outcome? The current frameworks assume single-agent accountability, which breaks down in multi-agent workflows where responsibility is distributed.

The gap matters because multi-agent orchestration is the dominant pattern for complex enterprise AI tasks. A financial analyst agent and a market research agent coordinate to produce an investment memo. The memo contains a material error. Who is accountable? The orchestration framework needs a way to assign and track cross-agent accountability.
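One possible approach is sketched below. Its design assumptions are illustrative and not an established standard: each task has a single accountable `owner`, and every agent's contribution is recorded together with the earlier steps it consumed. Tracing the lineage of a flawed output then identifies every agent that fed into it. `TaskLedger`, `Contribution`, and the agent names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Contribution:
    agent_id: str
    step: str           # the artifact this agent produced
    inputs: list[str]   # earlier steps it consumed


@dataclass
class TaskLedger:
    task_id: str
    owner: str          # single accountable human or team for the overall outcome
    contributions: list[Contribution] = field(default_factory=list)

    def record(self, agent_id: str, step: str, inputs: tuple[str, ...] = ()) -> None:
        self.contributions.append(Contribution(agent_id, step, list(inputs)))

    def lineage(self, step: str) -> list[str]:
        """Agents whose work fed into `step`, directly or transitively."""
        by_step = {c.step: c for c in self.contributions}
        agents: list[str] = []
        pending, seen = [step], set()
        while pending:
            current = pending.pop()
            if current in seen or current not in by_step:
                continue
            seen.add(current)
            contribution = by_step[current]
            if contribution.agent_id not in agents:
                agents.append(contribution.agent_id)
            pending.extend(contribution.inputs)
        return agents


ledger = TaskLedger("memo-001", owner="investment-research-team")
ledger.record("market-research-agent", "market_sizing")
ledger.record("financial-analyst-agent", "valuation", inputs=("market_sizing",))
ledger.record("financial-analyst-agent", "investment_memo", inputs=("valuation", "market_sizing"))
print(ledger.owner, ledger.lineage("investment_memo"))
# investment-research-team ['financial-analyst-agent', 'market-research-agent']
```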

Temporal context in governance rules: Governance policies written at one point in time may be inappropriate at another. A rule authorizing an agent to spend up to $10,000 on software subscriptions was calibrated to last year's budgets and prices, which may no longer hold today. Governance rules that don't account for temporal context can authorize decisions that were reasonable when written but are unreasonable now.
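One way to encode temporal context is to give each rule a review date, after which the rule stops granting authority. In this sketch, the `review_by` field and the fail-closed behavior are assumptions: once a rule lapses, its limit drops to zero, so any spend needs escalation. The dates are arbitrary examples.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SpendingRule:
    capability: str
    limit_usd: float
    approved_on: date  # kept for the audit trail
    review_by: date    # hypothetical: authority lapses unless re-approved by this date


def effective_limit(rule: SpendingRule, today: date) -> float:
    """The rule's limit while it is current; 0.0 once it is past review (fail closed)."""
    if today > rule.review_by:
        return 0.0  # a stale rule grants no authority, so any spend needs escalation
    return rule.limit_usd


rule = SpendingRule("software_subscriptions", 10_000.0,
                    approved_on=date(2024, 1, 15), review_by=date(2025, 1, 15))
print(effective_limit(rule, date(2024, 6, 1)))  # 10000.0
print(effective_limit(rule, date(2025, 3, 1)))  # 0.0
```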

Third-party model governance: Enterprise agents often call third-party APIs (OpenAI, Anthropic, data providers). When a third-party model produces a harmful or incorrect output that propagates through the enterprise agent's workflow, the accountability question is genuinely unclear. The enterprise agent didn't generate the output, but it used the output to make decisions.

The Regulatory Pressure Coming

The EU AI Act's enforcement timeline means that within the next 18 months, enterprise AI deployments in Europe will face mandatory conformity assessments for high-risk AI systems. The definition of "high-risk" includes AI systems that make decisions about employment, credit, education, and several other domains — categories that cover a significant fraction of enterprise AI deployments.

For enterprise AI teams: the governance frameworks you build now will become compliance requirements in 12-18 months. The investment in rigorous governance today is also an investment in regulatory readiness.

The US government is moving in a similar direction through sector-specific regulation. Financial services regulators (OCC, CFPB, SEC) have all signaled increased scrutiny of AI-assisted decision-making, and government contractors delivering AI through cloud services face FedRAMP authorization requirements. The pattern is consistent: AI governance is becoming a compliance requirement across sectors.

Building Governance That Scales

The governance frameworks that work at scale share specific characteristics:

Governance as code, not governance as documentation: The governance rules that matter are encoded in the system's technical implementation, not in documents that humans review periodically. Policy documents are necessary but insufficient.

Graduated controls by risk: Not all agent actions need the same level of governance oversight. The most efficient approach is graduated controls where governance intensity matches risk level.

Continuous monitoring over periodic review: Governance that depends on humans periodically reviewing agent behavior is governance that fails. Continuous automated monitoring with human review triggered by anomalies is more reliable than periodic human review.

Incident-driven refinement: Every governance incident — every time an agent does something it shouldn't, every time a human overrides an agent decision, every time an audit reveals a compliance gap — is a governance refinement opportunity. Systems designed to capture these incidents and feed them back into governance refinement improve over time.
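A feedback loop of this kind can start as simply as counting human overrides per capability. The sketch below assumes a hypothetical `OverrideTracker` class with an illustrative 10% override-rate threshold. It also requires at least 20 reviews before a capability is flagged for tighter controls, such as moving it to a higher risk class.

```python
from collections import defaultdict


class OverrideTracker:
    """Counts human reviews and overrides per capability (hypothetical helper)."""

    def __init__(self, max_override_rate: float = 0.10, min_reviews: int = 20):
        self.max_override_rate = max_override_rate
        self.min_reviews = min_reviews  # avoid reacting to a handful of reviews
        self.reviews: defaultdict[str, int] = defaultdict(int)
        self.overrides: defaultdict[str, int] = defaultdict(int)

    def record(self, capability: str, overridden: bool) -> None:
        self.reviews[capability] += 1
        if overridden:
            self.overrides[capability] += 1

    def needs_tightening(self) -> list[str]:
        """Capabilities whose override rate exceeds the threshold, given enough data."""
        return sorted(
            cap for cap, n in self.reviews.items()
            if n >= self.min_reviews and self.overrides[cap] / n > self.max_override_rate
        )


tracker = OverrideTracker()
for i in range(30):
    tracker.record("send_external_email", overridden=(i % 5 == 0))  # 6 of 30 overridden
    tracker.record("summarize_document", overridden=(i == 0))       # 1 of 30 overridden
for _ in range(5):
    tracker.record("execute_payment", overridden=True)               # too few reviews yet
print(tracker.needs_tightening())  # ['send_external_email']
```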

The governance frameworks for autonomous AI are still immature. The organizations that invest now in building rigorous, technically enforced governance will have a significant advantage as regulatory requirements tighten and as AI agents take on more consequential tasks.

The question isn't whether to govern autonomous AI. It's whether to govern it rigorously or superficially. The consequences of the latter are becoming clearer every week.

Related posts: Agent Security Hardening — the four-layer defense architecture for agentic systems. Enterprise Agent Deployment — governance and rollout playbook for enterprise deployment. AI Agent Threat Models — threat modeling for production AI agents. Stuart Russell's AGI Arms Race Warning — why competitive dynamics at the frontier are creating safety coordination problems.