This week, OPAQUE Technology acquired cryptographic AI technology from the Technology Innovation Institute, specifically targeting confidential AI capabilities. The move signals what forward-thinking companies have understood for months: standard AI inference infrastructure isn't designed for workloads where data sensitivity is non-negotiable.

Agentic AI systems raise the stakes further. Unlike chatbots that process queries and forget, agents maintain state, accumulate context, access tools, and operate on behalf of users over extended sessions. The attack surface for a production AI agent handling sensitive data is qualitatively different from a stateless API call.

Securing that larger attack surface is the missing piece in the confidential AI puzzle.

Why Agentic Systems Break Confidential Computing Assumptions

Traditional confidential computing, built on TEEs (Trusted Execution Environments) such as Intel SGX, AMD SEV, and ARM TrustZone, was designed for relatively simple workloads: run this computation on this sensitive data, return the result, done. The security model assumes:

- Isolated execution: The workload runs in an enclave with no external dependencies

- Short-lived operations: Cryptographic operations, database queries, short computations

- Clear data boundaries: Input goes in, output goes out

Agentic systems violate all three assumptions:

Agents need tools: An agent that searches the web, reads files, calls APIs, or executes code can't run entirely inside a TEE. The moment an agent calls an external tool, you've left the enclave.

Agents are long-running: A customer service agent handling a complex dispute might run for 30 minutes across dozens of LLM calls and tool executions. Maintaining TEE attestation across a 30-minute distributed workflow is unsolved at scale.

Agents accumulate state: The context that makes an agent useful — conversation history, retrieved documents, intermediate results — is exactly the data that needs protection. But that data is being passed to multiple LLM calls, stored in vector databases, cached in memory, and written to logs.

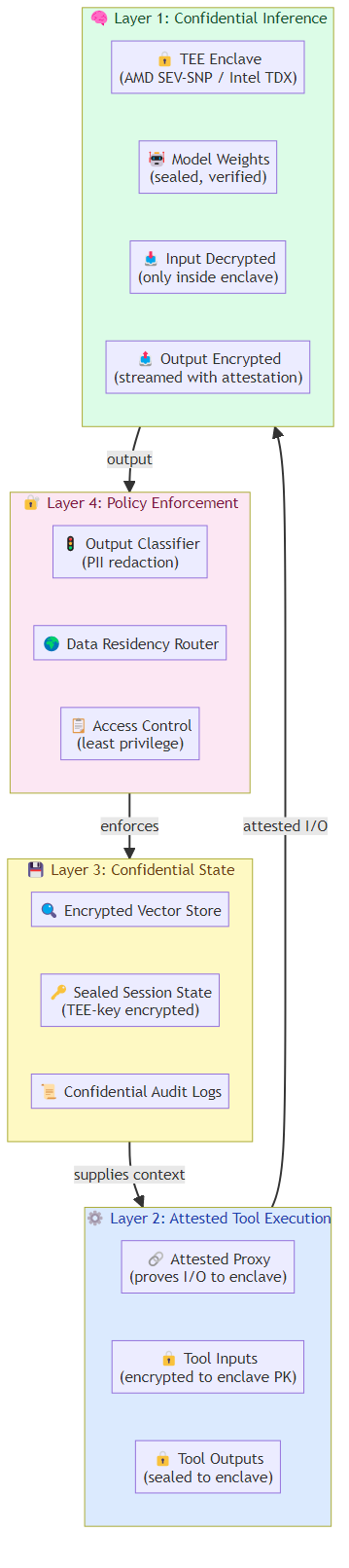

What's Emerging: Layered Confidential AI for Agents

The solution isn't to run everything in a TEE. It's to build a layered architecture where the most sensitive operations get the strongest guarantees, and less sensitive operations run with appropriate, lower-cost protection.

Layer 1: Confidential Inference at the Model Boundary

The foundation: running LLM inference inside a TEE. This ensures:

- Input privacy: User prompts never leave the enclave in plaintext

- Output privacy: Model responses are decrypted only within the enclave

- Model integrity: The model weights can't be tampered with or exfiltrated

AMD SEV-SNP and Intel TDX have matured significantly in 2026, with cloud providers offering confidential GPU instances that can run inference with reasonable performance overhead (typically 10-30% for the inference pass).

The key advance: confidential streaming. Early TEE implementations required full request/response cycles, making real-time agentic interactions sluggish. New attestation protocols support streaming tokens out of the enclave with cryptographic integrity verification, enabling interactive agentic experiences.
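
To make the idea of integrity-verified streaming concrete, here is a minimal sketch. It does not reproduce any particular attestation protocol. It assumes the client and the enclave already share a session key (for example, one established after a successful attestation handshake), and the names `sign_stream` and `verify_stream` are hypothetical. Each streamed token carries an HMAC tag that chains over the previous tag, so modified, reordered, or dropped chunks are detected as they arrive.

```python
import hashlib
import hmac

ZERO_TAG = b"\x00" * 32  # starting value for the tag chain


def _chunk_tag(session_key: bytes, prev_tag: bytes, index: int, token: str) -> bytes:
    # Bind each token to its position and to everything streamed before it.
    msg = prev_tag + index.to_bytes(8, "big") + token.encode("utf-8")
    return hmac.new(session_key, msg, hashlib.sha256).digest()


def sign_stream(session_key: bytes, tokens):
    """Enclave side (assumed): yield (index, token, tag) for each token."""
    prev_tag = ZERO_TAG
    for index, token in enumerate(tokens):
        tag = _chunk_tag(session_key, prev_tag, index, token)
        yield index, token, tag
        prev_tag = tag


def verify_stream(session_key: bytes, chunks):
    """Client side: return the tokens, or raise ValueError on any mismatch."""
    prev_tag = ZERO_TAG
    tokens = []
    for expected_index, (index, token, tag) in enumerate(chunks):
        expected = _chunk_tag(session_key, prev_tag, expected_index, token)
        if index != expected_index or not hmac.compare_digest(tag, expected):
            raise ValueError(f"integrity check failed at chunk {expected_index}")
        tokens.append(token)
        prev_tag = tag
    return tokens
```

A real protocol would also need an authenticated end-of-stream marker, since this sketch cannot tell a finished response from one that was cut short.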

Layer 2: Attested Tool Execution

The harder problem: tools that run outside the TEE but whose inputs and outputs need to be protected. The emerging pattern:

- The agent's reasoning engine runs inside a TEE

- Tool calls are made through an attested proxy — a service that can prove to the enclave what data it transmitted and received

- Tool inputs are encrypted to the enclave's public key

- Tool outputs are similarly sealed, only decryptable within the enclave

This creates a "confidential chain" from the user's original query through all intermediate tool calls, even when those tools run on untrusted infrastructure.
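
The proxy pattern above can be sketched in a few lines. This is an illustration under loud assumptions, not a real attestation protocol: `ToolProxy`, `verify_receipt`, and the `lookup_order` tool are hypothetical, an HMAC over a shared key stands in for a signature made with an attested key, and the encryption of tool inputs and outputs is left out because the Python standard library has no public-key encryption primitive.

```python
import hashlib
import hmac
import json


def canonical(obj) -> bytes:
    # Stable serialization so both sides hash identical bytes.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_record(tool_name: str, args: dict, result) -> dict:
    return {
        "tool": tool_name,
        "request_sha256": hashlib.sha256(canonical(args)).hexdigest(),
        "response_sha256": hashlib.sha256(canonical(result)).hexdigest(),
    }


class ToolProxy:
    """Hypothetical proxy: forwards a tool call and returns a receipt for it."""

    def __init__(self, receipt_key: bytes, tools: dict):
        self._key = receipt_key
        self._tools = tools

    def call(self, tool_name: str, args: dict):
        result = self._tools[tool_name](**args)
        record = build_record(tool_name, args, result)
        # Stand-in for a signature produced with an attested key.
        tag = hmac.new(self._key, canonical(record), hashlib.sha256).hexdigest()
        return result, {**record, "tag": tag}


def verify_receipt(receipt_key: bytes, tool_name: str, args: dict, result, receipt: dict) -> bool:
    """Enclave side: recompute the record from what was sent and received."""
    record = build_record(tool_name, args, result)
    expected = hmac.new(receipt_key, canonical(record), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, receipt.get("tag", ""))


# Usage with a made-up tool.
key = b"r" * 32
proxy = ToolProxy(key, {"lookup_order": lambda order_id: {"order_id": order_id, "status": "shipped"}})
result, receipt = proxy.call("lookup_order", {"order_id": "A-17"})
print(verify_receipt(key, "lookup_order", {"order_id": "A-17"}, result, receipt))
```

With a shared key, either side could forge a receipt, so a production design would use an asymmetric signature whose public key is bound to the proxy's attestation evidence.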

Layer 3: Confidential Memory and State

Agentic systems accumulate state — conversation history, retrieved documents, intermediate reasoning. Protecting this state at rest and in transit requires:

- Encrypted vector stores: Embeddings stored in vector databases are encrypted, with search performed on encrypted vectors using techniques like confidential nearest-neighbor search

- Sealed session state: Agent session data is encrypted with keys derived from the TEE's measurement, so only an enclave running the same measured code can decrypt it

- Confidential logging: Audit logs and traces are encrypted and can only be read by authorized parties with TEE-based key release
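
As a rough illustration of the sealed session state item, the sketch below derives a per-session key that is bound to an enclave measurement using HKDF (RFC 5869) built from the standard library. In a real TEE the hardware performs this kind of derivation and the root secret never leaves the chip; the `root_secret`, `measurement`, and `session_sealing_key` names here are assumptions for the example only.

```python
import hashlib
import hmac


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256: extract a pseudorandom key, then expand it."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def session_sealing_key(root_secret: bytes, measurement: bytes, session_id: str) -> bytes:
    # The measurement is mixed in as the salt, so different enclave code
    # yields an unrelated key even with the same root secret.
    info = b"agent-session:" + session_id.encode("utf-8")
    return hkdf_sha256(ikm=root_secret, salt=measurement, info=info)


# Hypothetical values standing in for hardware-provided ones.
root_secret = b"\x01" * 32
measurement_v1 = hashlib.sha256(b"agent-enclave build 1").digest()
measurement_v2 = hashlib.sha256(b"agent-enclave build 2").digest()

key_a = session_sealing_key(root_secret, measurement_v1, "session-42")
key_b = session_sealing_key(root_secret, measurement_v2, "session-42")
print(key_a != key_b)
```

The derived key would then feed an authenticated cipher such as AES-GCM, which is omitted here because it needs a third-party library.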

Layer 4: Policy Enforcement at the Agent Boundary

The outermost layer: access control and data governance policies enforced at the point where the agent interacts with external systems:

- Output classification: Agent outputs are automatically scanned for sensitive content before leaving the enclave

- PII redaction: Personally identifiable information in tool results is redacted before entering agent context

- Data residency: Agent operations are routed to geographically appropriate infrastructure based on data governance requirements
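
Two of these policies are simple enough to sketch. The example below is a deliberately small illustration: the regular expressions cover only email addresses and US Social Security number formats, and the region names in `REGION_BY_RESIDENCY` are invented. A production system would rely on a dedicated PII detection service and a real infrastructure catalog.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical mapping from a data residency label to a deployment region.
REGION_BY_RESIDENCY = {"EU": "eu-central", "US": "us-east"}


def redact_pii(text: str) -> str:
    """Replace each matched value with a bracketed label before it enters agent context."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def route_region(residency: str) -> str:
    """Fail closed: refuse to route when no compliant region is configured."""
    try:
        return REGION_BY_RESIDENCY[residency]
    except KeyError:
        raise ValueError(f"no compliant region configured for {residency!r}") from None


print(redact_pii("Contact jane.doe@example.com, SSN 123-45-6789."))
print(route_region("EU"))
```

Failing closed in `route_region` is the important design choice: an unknown residency label should stop the operation and never fall back to a default region.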

The Enterprise Use Cases Driving This

Three categories of agentic workloads are the primary drivers of confidential AI investment:

Healthcare Agents

A clinical decision support agent needs access to patient records, medical literature, and diagnostic imaging. The patient records and imaging are PHI (Protected Health Information). A hospital deploying such an agent needs guarantees that:

- The patient's data is only visible to the AI model inside a TEE

- No data is logged or cached outside the enclave

- Audit logs prove compliance with HIPAA requirements

- The agent's reasoning can be independently verified for medical malpractice purposes

A layered confidential AI stack is designed to provide each of these guarantees.

Financial Agents

Investment research agents, fraud detection agents, and compliance monitoring agents all handle material non-public information (MNPI) and personally identifiable financial data. Regulators are increasingly explicit about data residency and processing requirements. A hedge fund running an AI agent that processes MNPI needs to demonstrate:

- Data never left a compliant infrastructure boundary

- All processing happened in audited, attested environments

- Access logs are cryptographically verifiable
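
One common way to make access logs verifiable is a hash chain, where each entry commits to the one before it. The sketch below shows the idea with hypothetical helper names (`append_entry`, `verify_log`). It provides tamper evidence only; a real deployment would also sign or externally anchor the latest hash, for instance with a key released to an attested enclave.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)})


def verify_log(log: list) -> bool:
    """Recompute the chain from the start; any edit or removal breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"actor": "research-agent", "action": "read", "resource": "filing-001"})
append_entry(log, {"actor": "research-agent", "action": "summarize", "resource": "filing-001"})
print(verify_log(log))

log[0]["event"]["resource"] = "filing-999"  # simulate tampering
print(verify_log(log))
```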

Legal and IP-Sensitive Agents

Law firms and corporations using AI agents to analyze contracts, litigation strategy, or intellectual property face the highest stakes. Attorney-client privilege can be waived by disclosure to outside parties, and trade secret protection depends on keeping the information secret, so there's no "close enough." Confidential AI for legal agents ensures:

- Confidential documents are processed inside a TEE

- The AI's analysis can't be exfiltrated

- Third-party AI providers can't use submitted documents for training

The Open Problems

Despite significant progress, several challenges remain unsolved:

Attestation scalability: TEE attestation is expensive. Verifying the integrity of a TEE for every tool call in a long-running agentic workflow adds meaningful latency. Protocols for batch attestation and cached attestation need standardization.
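
Cached attestation is easy to picture even without a standard. The following sketch assumes a hypothetical `verify` callable that performs the expensive full check for an endpoint, and caches a positive result for a fixed time window. The class name, the TTL policy, and the injected clock are all illustrative choices, not part of any existing protocol.

```python
import time
from typing import Callable, Dict


class AttestationCache:
    """Reuse a successful attestation result until it expires."""

    def __init__(
        self,
        verify: Callable[[str], bool],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._verify = verify
        self._ttl = ttl_seconds
        self._clock = clock
        self._valid_until: Dict[str, float] = {}
        self.full_verifications = 0  # counts expensive checks, for illustration

    def is_trusted(self, endpoint_id: str) -> bool:
        now = self._clock()
        expiry = self._valid_until.get(endpoint_id)
        if expiry is not None and now < expiry:
            return True  # cached result is still fresh
        self.full_verifications += 1
        if self._verify(endpoint_id):
            self._valid_until[endpoint_id] = now + self._ttl
            return True
        self._valid_until.pop(endpoint_id, None)  # never cache a failure as trusted
        return False


# Usage with a fake clock and a verifier that always succeeds.
fake_now = [0.0]
cache = AttestationCache(lambda endpoint: True, ttl_seconds=60, clock=lambda: fake_now[0])
for _ in range(3):
    cache.is_trusted("tool-proxy-1")
print(cache.full_verifications)
```

The trade-off is the usual one for caches: a longer TTL saves latency but widens the window in which a compromised endpoint is still treated as trusted.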

Confidential multi-party agents: What happens when two organizations want to run a joint agentic analysis on their combined data, without either party being able to see the other's raw data? Secure multi-party computation approaches exist but are currently too slow for practical agentic use.

Performance overhead: Confidential GPU instances carry a 10-30% cost and latency premium. For high-volume, latency-sensitive agentic applications, this overhead is non-trivial.
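
A quick back-of-the-envelope calculation shows how that range adds up over a session. The workload figures below (24 LLM calls at 2 seconds each) are invented for illustration; only the 10-30% overhead range comes from the discussion above.

```python
def added_latency_seconds(
    num_llm_calls: int,
    baseline_seconds_per_call: float,
    overhead_fraction: float,
) -> float:
    """Extra inference time per session attributable to TEE overhead."""
    return num_llm_calls * baseline_seconds_per_call * overhead_fraction


calls, baseline = 24, 2.0  # hypothetical session: 24 LLM calls, 2 s each
low = added_latency_seconds(calls, baseline, 0.10)
high = added_latency_seconds(calls, baseline, 0.30)
print(f"added inference latency: {low:.1f} s to {high:.1f} s per session")
```

For this made-up session the premium works out to roughly 4.8 to 14.4 seconds in total, which is minor for a 30-minute workflow but significant if the same ratio applies to an interactive agent that must respond in a few seconds.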

Tool ecosystem compatibility: Most production tools weren't designed for confidential execution. Database drivers, API clients, and code execution sandboxes all need modification to work within the confidential AI stack.

What to Evaluate

If you're building a confidential AI agent system, here's what to assess:

What data does your agent handle, and what's the regulatory classification? (PHI, PII, MNPI, trade secrets, etc.)

What are your TEE-supported inference options? Major cloud providers (AWS Nitro, Azure Confidential Computing, GCP Confidential Computing) all offer confidential GPU instances as of 2026.

Can your tools support attested execution? Code execution (Docker/Linux containers with measured boot), database queries (encrypted-in-transit with attestation), and API calls (mTLS with enclave verification) all need evaluation.

What's your key management architecture? Who holds the keys that seal agent state? Hardware security modules (HSMs) are the gold standard — see our HSM for AI Key Management post for details.

Do you need end-to-end confidential traces for compliance? If regulators can demand your AI's reasoning process, you need cryptographically attested logs.

The Direction of Travel

Confidential AI for agents is moving from "interesting research" to "production necessity" in 2026. Four trends are converging:

- Mature TEE hardware (AMD SEV-SNP, Intel TDX)

- Cloud provider support for confidential GPU instances

- Emerging attested tool execution protocols

- Regulatory pressure around AI data handling

Together, these mean that within 18-24 months, confidential execution will be a baseline expectation for any agentic system handling sensitive data, not a premium feature.

The companies building that capability now — whether through in-house development or partners like OPAQUE + TII — are positioning for a world where the privacy guarantees around AI agents are as important as the intelligence.

Related posts: Confidential Computing for AI Inference — TEE implementations for AI workloads. Hardware Root-of-Trust in Cloud AI — attestation mechanisms. AI Agent Security Chaos — the broader agentic security landscape.