The numbers in AI infrastructure are reaching a scale that strains comprehension.

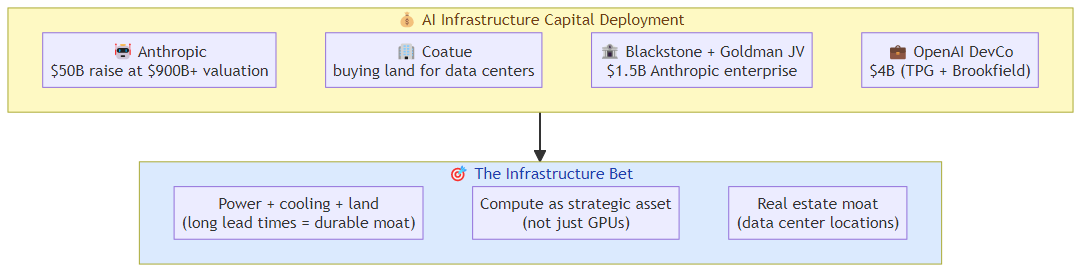

Anthropic is reportedly raising a $50 billion round at a valuation above $900 billion, within weeks of closing a $1.5 billion enterprise joint venture (JV) backed by Blackstone, Goldman Sachs, and Hellman & Friedman. Coatue Management, one of the most influential AI-focused investors, is reportedly acquiring land for data centers, potentially for Anthropic's infrastructure needs. Alongside that JV, OpenAI's $4 billion Development Company, Sierra's $950 million raise, and Cerebras's IPO pipeline all point the same way: capital is being deployed into AI infrastructure at a pace with little historical precedent.

The infrastructure bet is on. Here's what it means for the competitive landscape and for anyone building on top of it.

The Scale of the Bet

$50 billion is not a venture round in any conventional sense. It's a strategic capital deployment that reshapes the entire competitive landscape of the AI industry.

To put it in perspective: $50B would be among the largest capital raises in corporate history. It is on the order of the annual GDP of a small country, and it exceeds the market capitalization of most publicly traded technology companies. And it's being deployed into AI infrastructure (data centers, GPU clusters, networking, power) by a company that was founded in 2021.

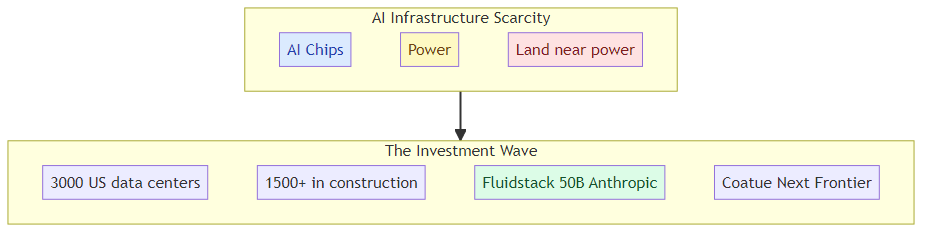

The scale implies a specific bet: that the compute infrastructure required for frontier AI models will be so valuable, and so scarce relative to demand, that whoever controls it will have a durable competitive advantage that justifies this level of investment.

Why Land and Data Centers, Not Just GPUs

The Coatue land acquisition for potential Anthropic data centers is a specific signal that's easy to overlook. Infrastructure at this scale isn't just about GPUs — it's about power, cooling, and physical space. The data center needs to be located near power generation, needs robust networking connectivity, and needs physical security.

Buying land means Anthropic (or its investors) is betting that the constraint on AI compute won't be GPUs, which can be purchased, but physical infrastructure, which has long lead times. If you don't own the land, you don't control when the data center gets built.

This is a different kind of moat: a real estate moat. The company that controls the physical locations where AI models run has a durable advantage that isn't replicable by simply spending more on GPUs.

The Infrastructure Moat Question

The central question in AI infrastructure investment is: does scale create a durable moat, or does it create a commodity?

The optimistic case: whoever builds the most capable infrastructure can offer the best model serving, the lowest latency, the highest throughput, and the most reliable availability. These advantages compound — more users generate more revenue, which funds more infrastructure, which serves more users.

The skeptical case: compute is a commodity. Nvidia sells GPUs to everyone. Data center construction is not that hard if you have the capital. The advantage of scale evaporates as competitors catch up.

My view: the moat is real but not permanent. The infrastructure advantage is durable for 2-3 years, after which competitive pressure and technology commoditization erode it. The window of maximum advantage is now, which is why investors are deploying capital at this pace — they're buying into the peak of the infrastructure moat.

What This Means for Independent AI Builders

If you're building AI applications on top of Anthropic, OpenAI, or any AI infrastructure provider, the infrastructure race has specific implications:

Your provider is making a very long-term bet: a $50B raise, if it closes as reported, commits Anthropic to a multi-year infrastructure buildout that will define the competitive landscape. The AI labs aren't just competing for current market share; they're building for a future where AI compute is as fundamental as electricity.

Switching costs are rising: as Anthropic builds proprietary infrastructure (custom silicon, dedicated data centers, specialized networking), the differentiation between using Anthropic's API and using a competitor's API grows. The services become more differentiated, not more commoditized.

The JV structure changes competitive dynamics: Anthropic's Blackstone-backed JV means Anthropic has enterprise customers who are also equity investors. That's a different kind of customer relationship — one where the customer has financial incentive to see Anthropic succeed. This could create channel advantages that independent application builders don't have access to.

The compute cost curve is the critical variable: if infrastructure scale drives down the cost of AI inference faster than the market expects, the economics of AI applications improve dramatically. If infrastructure costs stay high (power constraints, GPU shortages, real estate competition), the economics stay challenging. The $50B bet is partially a bet on which direction the cost curve goes.
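To make the cost-curve point concrete, here is a rough sketch of how an application's gross margin moves with the price of inference. Every number in it (the price charged per request, the tokens used per request, and the cost per million tokens in each scenario) is a hypothetical placeholder chosen for illustration, not a figure from any provider's price list, and the calculation ignores every cost other than inference.

```python
def gross_margin(price_per_request: float,
                 tokens_per_request: int,
                 cost_per_million_tokens: float) -> float:
    """Fraction of revenue left after paying for inference only."""
    inference_cost = tokens_per_request / 1_000_000 * cost_per_million_tokens
    return (price_per_request - inference_cost) / price_per_request


# Hypothetical application: charges $0.05 per request and
# consumes 4,000 tokens per request.
PRICE_PER_REQUEST = 0.05
TOKENS_PER_REQUEST = 4_000

# Hypothetical scenarios for the blended cost of one million tokens (USD).
scenarios = {
    "costs stay high": 10.0,
    "costs halve": 5.0,
    "costs fall 10x": 1.0,
}

for label, cost in scenarios.items():
    margin = gross_margin(PRICE_PER_REQUEST, TOKENS_PER_REQUEST, cost)
    print(f"{label:>16}: ${cost:>5.2f} per 1M tokens -> gross margin {margin:.0%}")
```

Under these made-up inputs, gross margin moves from 20% to 60% to 92% as the token cost falls from $10 to $5 to $1 per million. The same product is marginal in one scenario and comfortable in another, with nothing changing except the provider's cost curve.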

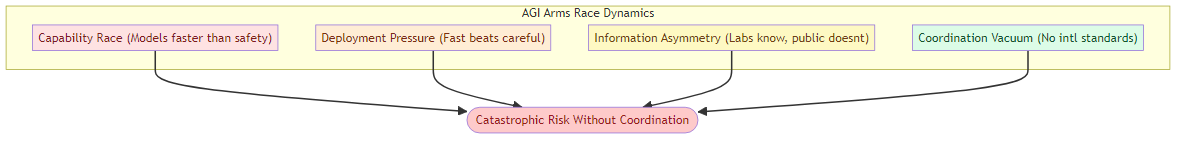

The Geopolitical Dimension

AI infrastructure at this scale has a geopolitical dimension that can't be ignored. Data centers require power. Power generation requires resources. Resources require supply chains that run through geopolitically complex regions.

The US government's involvement in AI infrastructure (the Pentagon's deals with Nvidia, Microsoft, and AWS for classified AI networks) and the regulatory scrutiny around AI model exports signal that AI infrastructure is being treated as a strategic national asset.

This has implications for international AI application builders: the infrastructure they'll be building on is increasingly a national strategic asset, which means regulatory uncertainty, export controls, and national security considerations become material factors in infrastructure decisions.

The Bottom Line

The reported $50B Anthropic round, combined with the enterprise JV wave and Coatue's reported land acquisition for data centers, represents a bet on AI infrastructure as a durable, strategic asset rather than a commodity.

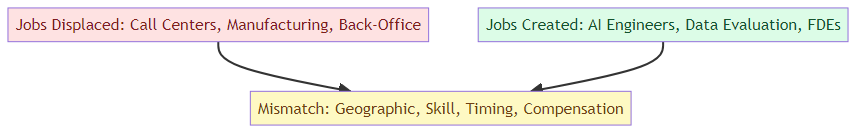

For the AI industry: we're entering a phase where the infrastructure layer is consolidating around a small number of very well-capitalized players. The application layer will grow on top of this infrastructure, but the value capture will be uneven between the infrastructure layer and the application layer.

For independent builders: the window for positioning is now. As infrastructure moats solidify, application-layer builders lose leverage against their infrastructure providers. A depth strategy (specialized domain expertise, workflow integration, enterprise relationships) becomes more important as the infrastructure layer becomes more concentrated.

The bet is enormous. The implications for the competitive landscape are just beginning to unfold.

Related posts: Enterprise AI's $5.5B Week — the enterprise JV wave that precedes this infrastructure scale. AI Chip Infrastructure — the chip layer that powers the infrastructure buildout. Cerebras IPO and Frontier AI Infrastructure — what the Cerebras IPO filing reveals about AI compute economics.