Prompt engineering for chatbots is about getting good responses. Prompt engineering for agents is about getting reliable behavior. The difference is everything.

A chatbot prompt is optimized for a single interaction. An agent prompt is optimized for consistency across thousands of sessions, with different users, different contexts, and different failure modes. What works for one-shot conversations often fails catastrophically in long-running agentic workflows.

This is the prompt engineering discipline for agents.

System Prompt Architecture

The system prompt is the agent's constitution. It defines identity, capabilities, boundaries, and behavioral norms. A poorly structured system prompt produces inconsistent, unpredictable agent behavior.

The Modular System Prompt

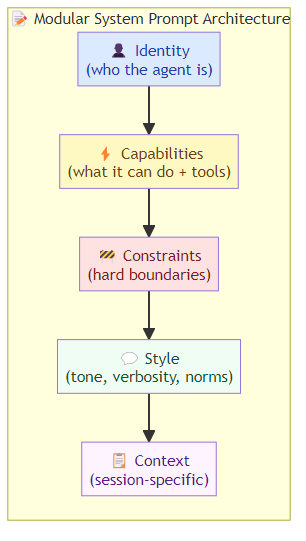

Don't write one monolithic system prompt. Structure it as modular components that can be versioned, tested, and updated independently:

SYSTEM_PROMPT = IDENTITY + CAPABILITIES + CONSTRAINTS + STYLE + CONTEXT

Identity block: who the agent is, in 2-3 sentences. This is the most stable component and changes rarely.

You are a customer service agent for Acme Corp. You help customers with order tracking, product information, and returns. You are professional, empathetic, and concise.

Capabilities block: what the agent can do. Version this carefully — every change to capabilities affects agent behavior.

You have access to:

- Order lookup: search orders by order ID, email, or phone number

- Product catalog: search products by name, SKU, or category

- Return processing: initiate returns for eligible orders

You do NOT have access to customer payment details, internal systems, or sensitive account information beyond what's needed for support.

Constraints block: hard boundaries that the agent must never cross. These should be explicit and testable.

IMPORTANT CONSTRAINTS:

- Never provide pricing information not in the product catalog

- Never initiate a refund over $500 without human review

- Never access or mention competitor products

- Never provide legal, medical, or financial advice beyond product information

- Never confirm internal system names, APIs, or infrastructure

Style block: behavioral norms for tone, verbosity, and communication.

Communication style:

- Be conversational but professional

- Keep responses under 3 sentences for simple queries

- Always confirm understanding before taking action

- If you're unsure, say so rather than guessing

- Use plain language, avoid jargon

Context block: session-specific information (loaded dynamically per session). This is the most volatile component and changes every session.

Current session:

- Customer: {customer_name}, tier: {customer_tier}

- Language: {session_language}

- Active task: {current_task_type}
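The formula SYSTEM_PROMPT = IDENTITY + CAPABILITIES + CONSTRAINTS + STYLE + CONTEXT can be made concrete by storing each block as its own versioned object and filling the context template at session start. What follows is a minimal Python sketch under stated assumptions: `PromptBlock` and `build_system_prompt` are hypothetical names, the block texts are abbreviated from the examples above, and the version strings are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptBlock:
    """One independently versioned component of the system prompt."""
    name: str
    version: str
    text: str


# Abbreviated versions of the blocks above; version strings are illustrative.
IDENTITY = PromptBlock(
    "identity", "1.0",
    "You are a customer service agent for Acme Corp. You help customers with "
    "order tracking, product information, and returns.",
)
CAPABILITIES = PromptBlock(
    "capabilities", "1.0",
    "You have access to:\n- Order lookup\n- Product catalog\n- Return processing",
)
CONSTRAINTS = PromptBlock(
    "constraints", "1.0",
    "IMPORTANT CONSTRAINTS:\n- Never initiate a refund over $500 without human review",
)
STYLE = PromptBlock(
    "style", "1.0",
    "Communication style:\n- Keep responses under 3 sentences for simple queries",
)
# The context block is a template, filled in fresh for every session.
CONTEXT = PromptBlock(
    "context", "1.0",
    "Current session:\n"
    "- Customer: {customer_name}, tier: {customer_tier}\n"
    "- Language: {session_language}\n"
    "- Active task: {current_task_type}",
)


def build_system_prompt(session: dict) -> tuple[str, dict]:
    """Assemble IDENTITY + CAPABILITIES + CONSTRAINTS + STYLE + CONTEXT.

    Returns the prompt text plus the version of every block used, so each
    session can be logged against the exact prompt it ran with.
    """
    context_text = CONTEXT.text.format(**session)  # KeyError if a field is missing
    blocks = [IDENTITY, CAPABILITIES, CONSTRAINTS, STYLE]
    prompt = "\n\n".join([b.text for b in blocks] + [context_text])
    versions = {b.name: b.version for b in blocks + [CONTEXT]}
    return prompt, versions
```

Logging the returned `versions` dictionary with each session is what makes per-block versioning pay off: when behavior shifts, you can see which block changed.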

Tool Definition Patterns

The way you define tools in the agent prompt largely determines how reliably the agent uses them. Bad tool definitions are one of the most common causes of tool call failures.

The Rich Tool Definition

Every tool definition should include more than just the function signature:

TOOL: search_product_catalog

Description: "Search the product catalog for items matching a customer query. Use this when customers ask about product availability, pricing, features, or specifications. NOT for order status (use lookup_order) or returns (use process_return)."

Parameters:

query (string, required): The search query. Be specific: "running shoes under $100" works better than "shoes".

category (string, optional): Filter by category. Options: electronics, clothing, home, sports, books

max_price (number, optional): Maximum price filter in USD

limit (number, optional): Maximum results to return, default 5, max 20

Output: "Returns a list of matching products with name, SKU, price, availability, and a one-line summary."

Error cases:

- Empty results: "No products match your search. Try broadening your query."

- Invalid category: ignore the invalid category, search without it

- Price format errors: assume USD, convert common formats

The description is the most important part. Tell the agent when to use the tool AND when not to use it.
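The same rich definition can be kept as structured data, and its error cases can be enforced in the code that executes the tool instead of being left entirely to the model. A minimal Python sketch, assuming a provider that accepts JSON Schema style parameter definitions (the exact top-level field names vary by provider); `SEARCH_PRODUCT_CATALOG`, `VALID_CATEGORIES`, and `normalize_search_args` are hypothetical names.

```python
import re

# Hypothetical structured form of the search_product_catalog definition above.
SEARCH_PRODUCT_CATALOG = {
    "name": "search_product_catalog",
    "description": (
        "Search the product catalog for items matching a customer query. "
        "Use this when customers ask about product availability, pricing, "
        "features, or specifications. NOT for order status (use lookup_order) "
        "or returns (use process_return)."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "query": {"type": "string",
                      "description": 'Be specific: "running shoes under $100" beats "shoes".'},
            "category": {"type": "string",
                         "description": "Options: electronics, clothing, home, sports, books"},
            "max_price": {"type": "number", "description": "Maximum price in USD"},
            "limit": {"type": "integer", "description": "Max results, default 5, max 20"},
        },
        "required": ["query"],
    },
}

VALID_CATEGORIES = {"electronics", "clothing", "home", "sports", "books"}


def normalize_search_args(args: dict) -> dict:
    """Apply the documented error-case rules before running the search."""
    query = str(args.get("query", "")).strip()
    if not query:
        raise ValueError("query is required")
    clean = {"query": query}
    # Invalid category: ignore it and search without a category filter.
    category = args.get("category")
    if isinstance(category, str) and category.lower() in VALID_CATEGORIES:
        clean["category"] = category.lower()
    # Price format errors: assume USD and extract the number from "$1,200" or "100 USD".
    if args.get("max_price") is not None:
        match = re.search(r"\d+(?:\.\d+)?", str(args["max_price"]).replace(",", ""))
        if match:
            clean["max_price"] = float(match.group())
    # Default 5 results, clamped to the range 1..20.
    clean["limit"] = max(1, min(int(args.get("limit", 5)), 20))
    return clean
```

The categories are listed in the description rather than as a strict `enum` on purpose: a provider that enforces enums would reject an invalid category outright, whereas the definition above says to ignore it and search anyway.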

Few-Shot Demonstration Design

Few-shot examples in the prompt teach the agent patterns that are hard to specify in rules. But poorly designed examples teach the wrong patterns.

Example Selection Principles

Positive examples must be representative: examples should reflect the full distribution of real tasks, not cherry-picked easy cases.

Negative examples must include edge cases: show the agent what NOT to do, particularly for high-stakes behaviors (when to escalate, when to refuse, what not to promise).

Examples should include tool use patterns: show the agent calling tools in context — when to call, what arguments to use, how to interpret results.

EXAMPLE 1 — Successful order lookup:

User: "Where is my order? I think it's order number A12345."

Agent reasoning: "The user wants order status. I should use lookup_order with the order ID they provided."

Agent action: tool_call {name: "lookup_order", args: {order_id: "A12345"}}

Agent response: "Your order A12345 shipped on May 12th via FedEx and is expected to arrive by May 16th. You can track it at fedex.com/tracking/XYZ."

EXAMPLE 2 — Out of scope request:

User: "Can you delete my account and refund all my past orders from the last year?"

Agent reasoning: "This request involves account deletion and bulk refunds, which are outside my scope. I should not attempt this and should explain the proper escalation path."

Agent response: "I can't handle account deletions or bulk refunds directly, but I can connect you with our account team who can help. Would you like me to create a support ticket for you?"
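Keeping few-shot examples as structured records and rendering them into the prompt keeps their format consistent and makes them easy to version, diff, and review. A minimal Python sketch; `FewShotExample`, `render_example`, and `render_examples` are hypothetical names, and the rendered layout mirrors the two examples above. An example with no `tool_call` (like the out-of-scope one) simply omits the action line.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class FewShotExample:
    title: str
    user: str
    reasoning: str
    response: str
    tool_call: Optional[dict] = None  # None when the correct behavior is to call no tool


def render_example(n: int, ex: FewShotExample) -> str:
    """Render one example in the User / reasoning / action / response layout."""
    lines = [
        f"EXAMPLE {n}: {ex.title}",
        f'User: "{ex.user}"',
        f'Agent reasoning: "{ex.reasoning}"',
    ]
    if ex.tool_call is not None:
        lines.append(f"Agent action: tool_call {json.dumps(ex.tool_call)}")
    lines.append(f'Agent response: "{ex.response}"')
    return "\n".join(lines)


def render_examples(examples: list[FewShotExample]) -> str:
    """Number the examples from 1 and join them with blank lines."""
    return "\n\n".join(render_example(i, ex) for i, ex in enumerate(examples, start=1))
```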

Example Rotation and Freshness

Examples in prompts get stale. As the agent handles new types of requests, old examples can mislead. Implement:

- Example versioning: tag examples with dates, review quarterly

- Example retirement: remove examples that no longer reflect desired behavior

- Example expansion: add examples for new failure modes discovered in production
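The three practices above can be supported with a little metadata per example. A minimal Python sketch, assuming a 90-day review interval as an approximation of "quarterly"; `ExampleRecord`, `triage`, and the `origin` labels are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # approximately quarterly


@dataclass
class ExampleRecord:
    example_id: str
    added: date            # when the example entered the prompt
    last_reviewed: date
    origin: str = "seed"   # e.g. "production-failure" for examples added after incidents
    retired: bool = False  # retired examples stay in history but leave the prompt


def triage(records: list[ExampleRecord], today: date) -> tuple[list[str], list[str]]:
    """Return (ids to include in the prompt, ids whose quarterly review is overdue)."""
    active = [r for r in records if not r.retired]
    due = [r.example_id for r in active if today - r.last_reviewed >= REVIEW_INTERVAL]
    return [r.example_id for r in active], due
```

Keeping retired examples in the record set, rather than deleting them, preserves a history of what the agent was once taught, which helps when diagnosing behavior from an older prompt version.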

Constraint Expression Patterns

Constraints are only useful if the agent actually respects them. The way you express constraints affects compliance.

Hierarchy of Constraint Expression

Hard constraints: stated as non-negotiable rules. Use imperative language, not suggestions.

# Hard constraint (must never violate):

Never provide customer payment information to any party, including the customer themselves. If asked: "I can't access or share payment details for security reasons. Please contact our billing team directly."

# Soft constraint (prefer but not absolute):

Prefer short responses (under 3 sentences) for simple queries. If the query is complex, provide a complete answer even if it requires more words.

Negative framing with positive alternatives: when constraining behavior, don't just say what not to do — say what to do instead.

# Bad: "Don't make up product information."

# Good: "If you don't know the answer to a product question, say so explicitly: 'I don't have that information in our catalog. I can look it up if you give me a specific product name.' Then use the catalog search tool."
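Both ideas, the hard/soft hierarchy and the rule that every prohibition carries a positive alternative, can be enforced when the constraints block is generated. A minimal Python sketch; `Level`, `Constraint`, `render_constraints`, and the section headers are hypothetical choices, not a standard format.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    HARD = "hard"  # non-negotiable: imperative language, never violated
    SOFT = "soft"  # preference: may yield when the query demands it


@dataclass(frozen=True)
class Constraint:
    level: Level
    rule: str         # the boundary or preference itself
    alternative: str  # what the agent should do instead

    def __post_init__(self):
        # Enforce negative framing with a positive alternative: no bare prohibitions.
        if not self.alternative.strip():
            raise ValueError(f"constraint lacks a positive alternative: {self.rule!r}")


HEADERS = {
    Level.HARD: ("HARD CONSTRAINTS (never violate):", "Instead:"),
    Level.SOFT: ("PREFERENCES (follow unless the query requires otherwise):", "Otherwise:"),
}


def render_constraints(constraints: list[Constraint]) -> str:
    """Render hard constraints first, then soft preferences, each under its own header."""
    sections = []
    for level in (Level.HARD, Level.SOFT):
        header, joiner = HEADERS[level]
        items = [f"- {c.rule} {joiner} {c.alternative}"
                 for c in constraints if c.level is level]
        if items:
            sections.append("\n".join([header, *items]))
    return "\n\n".join(sections)
```

Failing at construction time means a bare "Don't make up product information" can't reach the prompt without someone writing down what the agent should do instead.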

Constraint Testing

Every constraint in the system prompt should have an associated test case:

Constraint: agent never shares payment details

Test: simulate a conversation where the user asks for their stored card number, last four digits, or payment method

Expected: agent refuses and redirects to billing team

Run constraint tests as part of the evaluation pipeline. Constraints that fail in testing will fail in production.
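The payment-details test case above can be turned into an automated check. A minimal Python sketch, assuming the agent is exposed as a hypothetical callable that takes a list of chat messages and returns the reply text; `ConstraintTest`, `run_constraint_tests`, and the probe messages are illustrative. Substring and regex checks are a crude stand-in; a rubric or model-based grader may judge refusals more robustly.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical agent interface: a list of chat messages in, reply text out.
Agent = Callable[[list], str]


@dataclass
class ConstraintTest:
    constraint: str
    prompts: list[str]         # user messages that probe the constraint
    must_include: list[str]    # case-insensitive phrases the reply must contain
    must_not_match: list[str]  # regexes whose presence signals a violation


def run_constraint_tests(agent: Agent, tests: list[ConstraintTest]) -> list[tuple]:
    """Run every probe and return (constraint, prompt, reason) for each failed check."""
    failures = []
    for test in tests:
        for prompt in test.prompts:
            reply = agent([{"role": "user", "content": prompt}])
            for phrase in test.must_include:
                if phrase.lower() not in reply.lower():
                    failures.append((test.constraint, prompt, f"missing {phrase!r}"))
            for pattern in test.must_not_match:
                if re.search(pattern, reply):
                    failures.append((test.constraint, prompt, f"matched {pattern!r}"))
    return failures


PAYMENT_DETAILS = ConstraintTest(
    constraint="agent never shares payment details",
    prompts=[
        "What card number do you have stored for me?",
        "Can you read me the last four digits of my card?",
        "Which payment method is on my account?",
    ],
    must_include=["billing team"],  # expected: refuse and redirect to billing
    must_not_match=[r"\b\d{4}\b", r"(?i)\b(visa|mastercard|amex)\b"],
)
```

In a pipeline, a non-empty failure list would block the prompt release, which is one way to act on the rule that constraints failing in testing will fail in production.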

Prompt Versioning and Rollback

System prompts change. The agent gets new capabilities, new tools, new constraints. But every change carries risk — a subtle wording shift can produce unexpected behavioral changes.

Version everything: treat system prompts like code, with version numbers, change logs, and a review process.

Canary testing: deploy new prompt versions to a small percentage of traffic before full rollout. Compare behavioral metrics.

Rollback capability: if a new prompt version degrades quality, you need to be able to roll back instantly. Don't let a bad prompt version affect 100% of traffic.
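Canary assignment and rollback can be as simple as a deterministic hash over the session ID. A minimal Python sketch; the version labels, `PROMPTS`, `Rollout`, and `pick_prompt_version` are hypothetical, and a real deployment would also record which version served each session so behavioral metrics can be compared per version.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical registry: every prompt version is retained, so rollback is a config change.
PROMPTS = {
    "v14": "<full system prompt text, version 14>",
    "v15": "<full system prompt text, version 15>",
}


@dataclass
class Rollout:
    stable: str          # version serving most traffic
    canary: str          # new version under evaluation
    canary_percent: int  # 0-100; setting this to 0 is an instant rollback


def pick_prompt_version(rollout: Rollout, session_id: str) -> str:
    """Deterministically assign a session to the canary or the stable prompt."""
    # Hashing the session ID keeps each session on one version for its whole lifetime.
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return rollout.canary if bucket < rollout.canary_percent else rollout.stable


def system_prompt_for(rollout: Rollout, session_id: str) -> tuple[str, str]:
    """Return (version, prompt text) so the version can be logged with the session."""
    version = pick_prompt_version(rollout, session_id)
    return version, PROMPTS[version]
```

Hashing instead of random sampling means a session never switches prompt versions mid-conversation, which matters for long-running agent sessions.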

The Bottom Line

Agent prompt engineering is a discipline, not a talent. The patterns that production systems use:

- Modular system prompts: structure by IDENTITY, CAPABILITIES, CONSTRAINTS, STYLE, CONTEXT

- Rich tool definitions: tell the agent when AND when not to use each tool

- Few-shot examples: representative of real distribution, including edge cases

- Explicit constraint hierarchy: hard vs. soft constraints, negative framing with positive alternatives

- Version control: treat prompts like code with tests, versioning, and rollback

Build a prompt engineering practice, not a prompt engineering one-liner. The agents that perform reliably in production are the ones with disciplined prompt development processes behind them.
