Last week, OpenAI and Anthropic both announced enterprise joint ventures for AI services. The market is maturing — and the buyers are asking harder questions than "can it do the task?" They're asking "how does it decide to do the task, and can we audit that decision?"

That question is about planning and reasoning. And it's where agentic engineering gets genuinely interesting.

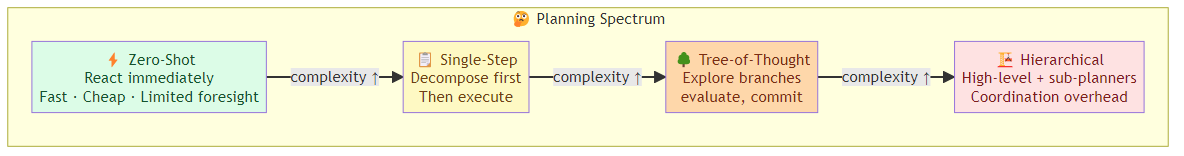

The Planning Spectrum

Agentic systems don't all plan the same way. The architecture you choose determines latency, cost, reliability, and explainability, and improving one of these usually costs you another.

Zero-Shot (Reactive) Planning

The agent receives a task and generates a response immediately, reasoning through the problem in a single pass. No explicit plan is constructed; whatever reasoning happens is implicit in the model's generation of that one response.

When to use: simple tasks, time-critical responses, tasks where the LLM has strong prior knowledge.

Tradeoffs: fast and cheap, but lacks foresight. With no explicit plan to check progress against, the agent has no point at which to course-correct. If the first approach is wrong, it won't know until it has already acted on it.

Single-Step Decomposition

The agent breaks the task into 2-3 high-level steps before executing. More structured than zero-shot, but the plan is static: it is made once at the start and not revisited.

When to use: multi-step tasks with clear subgoals, moderate complexity.

Tradeoffs: better structure than zero-shot, but no built-in mid-course correction. If step 1 reveals information that invalidates the plan, the agent must either replan from scratch (costly) or ignore the new information (risky).
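A minimal sketch of the plan-once-then-execute pattern described above. The names `plan_fn` and `execute_fn` are hypothetical stand-ins for an LLM planning call and a step executor; they are not part of any real library.

```python
from typing import Callable, List


def run_single_step_decomposition(
    task: str,
    plan_fn: Callable[[str], List[str]],  # hypothetical: wraps an LLM planning call
    execute_fn: Callable[[str], str],     # hypothetical: runs one step, returns its result
) -> List[str]:
    """Plan once at the start, then execute the steps in order without replanning."""
    steps = plan_fn(task)  # the only planning call
    results = []
    for step in steps:
        # Results are collected but never fed back into the plan.
        results.append(execute_fn(step))
    return results


# Stand-ins so the sketch runs without a model.
def fake_plan(task: str) -> List[str]:
    return [f"gather inputs for: {task}", f"draft: {task}", f"review: {task}"]


def fake_execute(step: str) -> str:
    return f"done({step})"


results = run_single_step_decomposition("quarterly report", fake_plan, fake_execute)
```

The loop makes the limitation visible: nothing a step returns can change the steps that follow.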

Recursive (Tree-of-Thought) Planning

The agent explores multiple possible plan branches, evaluating each before committing. Like a chess player considering several moves ahead — but inside the LLM's context.

When to use: complex tasks with uncertain paths, high-stakes decisions where the cost of a wrong approach is high.

Tradeoffs: produces better plans but burns significant tokens (and latency) on the planning process itself. Not appropriate for tasks where speed matters more than optimality.
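One way to picture branch exploration is a small beam search over partial plans. This is a sketch under assumptions, not a reference implementation: `expand` and `score` are hypothetical callables that would each be backed by a model call in practice, and the toy weights exist only so the example runs.

```python
from typing import Callable, List, Tuple

Plan = Tuple[str, ...]


def tree_of_thought_plan(
    expand: Callable[[Plan], List[str]],  # hypothetical: proposes next steps for a partial plan
    score: Callable[[Plan], float],       # hypothetical: rates a partial plan, higher is better
    depth: int,
    beam_width: int,
) -> Plan:
    """Keep the best `beam_width` partial plans at each depth and return the top one."""
    frontier: List[Plan] = [()]
    for _ in range(depth):
        candidates = [plan + (step,) for plan in frontier for step in expand(plan)]
        if not candidates:
            break
        # Every candidate is scored; in a real agent this is where the tokens go.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=score)


# Toy stand-ins: each distinct step contributes a fixed value, repeats add nothing.
WEIGHTS = {"search": 2.0, "scrape": 1.0, "ask_user": 0.5}


def toy_expand(plan: Plan) -> List[str]:
    return list(WEIGHTS)


def toy_score(plan: Plan) -> float:
    return sum(WEIGHTS[step] for step in set(plan))


best = tree_of_thought_plan(toy_expand, toy_score, depth=2, beam_width=2)
```

With three options per level and a beam of two, the second level already scores six candidates, which illustrates why the planning cost grows quickly with depth and width.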

Hierarchical Planning

A high-level planner decomposes the task into subgoals. Specialized sub-planners handle each subgoal independently. Results are synthesized at the end.

When to use: tasks with distinct phases that require different reasoning strategies (e.g., research → analysis → synthesis).

Tradeoffs: mirrors how organizations actually work — specialists handle sub-problems, a coordinator integrates results. But the coordination overhead can dominate for simpler tasks.
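The coordinator-and-specialists shape can be sketched in a few lines. Everything here is illustrative: `decompose`, `sub_planners`, and `synthesize` are hypothetical hooks, and the toy specialists simply label their input.

```python
from typing import Callable, Dict, List, Tuple


def hierarchical_run(
    task: str,
    decompose: Callable[[str], List[Tuple[str, str]]],  # hypothetical: returns (phase, subgoal) pairs
    sub_planners: Dict[str, Callable[[str], str]],      # hypothetical: one specialist per phase
    synthesize: Callable[[List[str]], str],             # hypothetical: merges specialist outputs
) -> str:
    """Decompose the task, hand each subgoal to its specialist, then merge the results."""
    outputs = []
    for phase, subgoal in decompose(task):
        specialist = sub_planners[phase]
        # Each subgoal is handled independently of the others.
        outputs.append(specialist(subgoal))
    return synthesize(outputs)


# Toy stand-ins so the sketch runs without a model.
def toy_decompose(task: str) -> List[Tuple[str, str]]:
    return [
        ("research", f"find sources on {task}"),
        ("analysis", f"compare findings on {task}"),
    ]


TOY_SPECIALISTS = {
    "research": lambda goal: f"[research] {goal}",
    "analysis": lambda goal: f"[analysis] {goal}",
}

report = hierarchical_run("EV battery costs", toy_decompose, TOY_SPECIALISTS, " | ".join)
```

Even in this toy form there are three extra hooks around the actual work, which is the coordination overhead that can dominate on simple tasks.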

The Reasoning Mechanisms

Planning requires reasoning — and the reasoning mechanism you choose has downstream effects on everything else.

Chain-of-Thought (CoT)

The agent writes out its reasoning step by step before producing a final answer. The reasoning chain is visible (in the context) and auditable.

Why it works: externalizing reasoning forces the LLM to commit to a logical chain. Errors in the chain are more visible than errors in a final answer.

When it matters most: tasks where reasoning errors are costly (legal analysis, code generation, complex data interpretation). The visible chain also helps with debugging and user trust.
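To make the chain auditable in practice, it helps to ask for a fixed output shape and parse it. The sketch below assumes a "Step N: ..." / "Answer: ..." convention, which is an illustrative choice rather than a standard; `COT_PROMPT` and `parse_cot` are hypothetical names.

```python
import re
from typing import List, Tuple

# Assumed prompt convention: numbered steps, then a single answer line.
COT_PROMPT = (
    "Solve the task below. Write each reasoning step on its own line as "
    "'Step N: ...', then give the result on a final line as 'Answer: ...'.\n\n"
    "Task: {task}"
)


def parse_cot(response: str) -> Tuple[List[str], str]:
    """Split a model response into its reasoning steps and final answer."""
    steps = re.findall(r"^Step \d+:\s*(.+)$", response, flags=re.MULTILINE)
    match = re.search(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    if match is None:
        raise ValueError("response has no 'Answer:' line")
    return steps, match.group(1).strip()


# A hand-written response standing in for model output.
sample_response = (
    "Step 1: The clause caps liability at fees paid.\n"
    "Step 2: Fees paid are 10,000 USD.\n"
    "Answer: Liability is capped at 10,000 USD."
)
steps, answer = parse_cot(sample_response)
```

Storing `steps` alongside `answer` gives a reviewer something concrete to check when the answer looks wrong.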

Tree-of-Thought (ToT)

The agent explores multiple reasoning paths, evaluating each branch. Unlike CoT's linear progression, ToT maintains a tree of possible reasoning trajectories.

Why it works: for problems with multiple viable approaches, branching exploration finds solutions that linear reasoning misses. Particularly effective when the right approach isn't obvious from the start.

When it matters most: complex problem-solving where the first approach often fails. Examples include planning, negotiation, and multi-constraint optimization.

ReAct (Reasoning + Acting)

The agent interleaves reasoning with tool use. After each tool execution, the agent pauses to reason about the observation before deciding the next action. Cycle: think → act → observe → think.

Why it works: closes the loop between reasoning and reality. The agent can course-correct based on actual tool outputs, not just predicted ones.

When it matters most: tasks that require external data or computation. The agent's reasoning must be grounded in real observations, not just the model's internal knowledge.
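The think → act → observe cycle can be sketched as a bounded loop. The `think` callable is a hypothetical stand-in for an LLM call, the decision tuple format is an assumption made for this example, and `lookup_price` is a toy tool.

```python
from typing import Callable, Dict, List, Tuple

# Assumed decision format: ("act", tool_name, tool_input) or ("finish", answer, "").
Decision = Tuple[str, str, str]


def react_loop(
    task: str,
    think: Callable[[str, List[str]], Decision],  # hypothetical: LLM call over task + observations
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Alternate reasoning and tool use until the agent finishes or hits the step limit."""
    observations: List[str] = []
    for _ in range(max_steps):
        kind, name, arg = think(task, observations)  # think
        if kind == "finish":
            return name
        try:
            result = tools[name](arg)                # act
        except Exception as exc:
            # Unknown tools and tool failures become observations the agent can reason about.
            result = f"error: {exc}"
        observations.append(f"{name}({arg}) -> {result}")  # observe
    return "stopped: step limit reached"


# Scripted stand-in for the model: look up the price once, then answer.
def scripted_think(task: str, observations: List[str]) -> Decision:
    if not observations:
        return ("act", "lookup_price", "widget")
    price = observations[-1].split("-> ")[1]
    return ("finish", f"Price is {price}", "")


answer = react_loop("How much is a widget?", scripted_think, {"lookup_price": lambda item: "19.99"})
```

The final answer is built from the tool's actual output rather than from anything the scripted "model" knew in advance, which is the grounding property described above.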

Reflexion

After completing a task, the agent reflects on the outcome, identifies failure modes, and updates its strategy for next time. The reflection is stored in memory for future reference.

Why it works: agents that learn from their mistakes improve over time. The reflection step converts failures into institutional knowledge.

When it matters most: long-running agents that handle many instances of the same task type. The marginal benefit of reflection increases with repetition.
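A minimal sketch of the reflect-and-store loop, assuming an in-memory store keyed by task type. `ReflectionMemory`, `attempt`, and `reflect` are hypothetical names; in a real agent the last two would be model calls and the store would be persistent.

```python
from typing import Callable, Dict, List, Tuple


class ReflectionMemory:
    """Stores lessons per task type (an in-memory stand-in for a real store)."""

    def __init__(self) -> None:
        self._lessons: Dict[str, List[str]] = {}

    def add(self, task_type: str, lesson: str) -> None:
        self._lessons.setdefault(task_type, []).append(lesson)

    def recall(self, task_type: str) -> List[str]:
        return list(self._lessons.get(task_type, []))


def run_with_reflection(
    task_type: str,
    task: str,
    attempt: Callable[[str, List[str]], Tuple[bool, str]],  # hypothetical: returns (success, trace)
    reflect: Callable[[str, str], str],                     # hypothetical: turns a trace into a lesson
    memory: ReflectionMemory,
) -> bool:
    """Attempt the task with past lessons in hand; on failure, store a new lesson."""
    lessons = memory.recall(task_type)
    success, trace = attempt(task, lessons)
    if not success:
        memory.add(task_type, reflect(task, trace))
    return success


# Toy stand-ins: the attempt only succeeds once the right lesson has been learned.
def toy_attempt(task: str, lessons: List[str]) -> Tuple[bool, str]:
    if "use ISO dates" in lessons:
        return True, "parsed all rows"
    return False, "failed on row 3: ambiguous date 03/04/2024"


def toy_reflect(task: str, trace: str) -> str:
    return "use ISO dates"


memory = ReflectionMemory()
first = run_with_reflection("csv_import", "load sales.csv", toy_attempt, toy_reflect, memory)
second = run_with_reflection("csv_import", "load returns.csv", toy_attempt, toy_reflect, memory)
```

The second run succeeds on a different file of the same task type, which is the repetition benefit in miniature.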

Planning Under Uncertainty

The hardest planning problem: when the agent doesn't have enough information to plan optimally. In real-world deployments, this is the common case.

Information Gathering as Planning

When an agent lacks sufficient information to plan, the optimal strategy is often to plan for information gathering first. The agent builds a skeleton plan, identifies the information gaps, acquires that information (via tool calls, web search, database queries), then completes the plan.

Task: "Help me restructure my investment portfolio"

→ Gap: what are the current holdings, risk tolerance, financial goals?

→ Plan: 1) Query investment accounts 2) Assess risk profile 3) Generate restructuring options

→ Execute plan with full information

This is fundamentally different from the "reason then act" model. Here, the agent plans to plan — it constructs the meta-plan first, identifies what it needs to know, acquires that knowledge, then commits to a real plan.
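The portfolio example can be sketched as gap detection followed by planning. The function and parameter names are hypothetical, and the gatherers return canned strings where a real agent would make tool calls.

```python
from typing import Callable, Dict, List


def plan_with_information_gathering(
    required: List[str],
    known: Dict[str, str],
    gatherers: Dict[str, Callable[[], str]],            # hypothetical: one tool call per missing fact
    make_plan: Callable[[Dict[str, str]], List[str]],   # hypothetical: builds the real plan
) -> List[str]:
    """Find the information gaps, fill them, then commit to a plan."""
    gaps = [key for key in required if key not in known]
    facts = dict(known)
    for key in gaps:
        if key not in gatherers:
            raise LookupError(f"no way to obtain required information: {key}")
        facts[key] = gatherers[key]()
    return make_plan(facts)


# Toy stand-ins for account queries and a risk questionnaire.
TOY_GATHERERS = {
    "holdings": lambda: "60% equities, 40% bonds",
    "risk_tolerance": lambda: "moderate",
}


def toy_make_plan(facts: Dict[str, str]) -> List[str]:
    return [
        f"review holdings: {facts['holdings']}",
        f"target risk level: {facts['risk_tolerance']}",
        f"propose options for goal: {facts['goals']}",
    ]


plan = plan_with_information_gathering(
    required=["holdings", "risk_tolerance", "goals"],
    known={"goals": "retire at 60"},
    gatherers=TOY_GATHERERS,
    make_plan=toy_make_plan,
)
```

Raising when a gap cannot be filled is a design choice in this sketch; asking the user is an equally reasonable fallback.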

Contingency Planning

For tasks with high uncertainty, build contingencies into the plan. Instead of "A → B → C", plan "A → B → C; but if X fails, fall back to A' → B'".

Task: "Research competitor pricing and generate a pricing recommendation"

→ Primary plan: scrape competitor websites → analyze pricing patterns → generate recommendation

→ Contingency: if scraping fails (CAPTCHA, blocking), fall back to web search + analyst reports

→ Contingency: if pricing data is sparse, fall back to qualitative analysis + historical trends

Contingency planning adds complexity but prevents catastrophic failure when the primary plan collapses.
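A simple way to express fallbacks is an ordered list of strategies tried in turn. This is a sketch: `run_with_contingencies` is a hypothetical helper, and the toy strategies simulate the scraping failure from the example.

```python
from typing import Callable, List, Tuple


def run_with_contingencies(
    strategies: List[Tuple[str, Callable[[], str]]],
) -> Tuple[str, str]:
    """Try each strategy in order; return (name, result) for the first that succeeds."""
    errors = []
    for name, strategy in strategies:
        try:
            return name, strategy()
        except Exception as exc:
            # Record why this branch failed, then fall through to the next one.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all strategies failed: " + "; ".join(errors))


# Toy stand-ins for the primary plan and its first fallback.
def scrape_competitor_sites() -> str:
    raise RuntimeError("blocked by CAPTCHA")


def search_and_analyst_reports() -> str:
    return "pricing data from search results and analyst reports"


used, data = run_with_contingencies(
    [
        ("scrape competitor websites", scrape_competitor_sites),
        ("web search + analyst reports", search_and_analyst_reports),
    ]
)
```

Returning the strategy name alongside the result lets downstream steps and logs record which path actually produced the data.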

Budget-Aware Planning

Agents operating under resource constraints (token budget, time budget, API call limits) must plan with those constraints in mind. Budget-aware planning tracks remaining resources and adapts the plan to fit within them.

Task: "Analyze a 50-page contract for legal risks"

→ Budget: 50,000 tokens max, 5 tool calls max

→ Plan: pre-scan document (tool call 1) → identify high-risk sections → deep-dive on risk areas (tool calls 2-4) → synthesize findings (tool call 5)

→ If document exceeds estimated size: reduce deep-dive scope

→ If tool calls exhausted: return partial analysis with explicit "incomplete" flag

Budget-aware planning prevents the scenario where an agent is 80% through a task and runs out of resources — the task fails at the worst possible moment.
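The contract example can be sketched as a loop that checks the budget before each step and returns a flagged partial result when it runs out. The `Budget` class, the flat per-section cost, and the section names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Budget:
    tokens: int
    tool_calls: int

    def can_afford(self, token_cost: int) -> bool:
        return self.tool_calls >= 1 and self.tokens >= token_cost

    def charge(self, token_cost: int) -> None:
        self.tokens -= token_cost
        self.tool_calls -= 1


def analyze_with_budget(
    sections: List[str],
    cost_of: Callable[[str], int],   # hypothetical: estimated token cost of one section
    analyze: Callable[[str], str],   # hypothetical: one tool call that analyzes a section
    budget: Budget,
) -> Dict[str, object]:
    """Analyze sections in order, stopping with an explicit 'incomplete' flag if the budget runs out."""
    findings: List[str] = []
    for i, section in enumerate(sections):
        cost = cost_of(section)
        if not budget.can_afford(cost):
            return {"findings": findings, "complete": False, "skipped": sections[i:]}
        budget.charge(cost)
        findings.append(analyze(section))
    return {"findings": findings, "complete": True, "skipped": []}


# Toy run: three sections at an assumed 20,000 tokens each against a 50,000-token budget.
budget = Budget(tokens=50_000, tool_calls=5)
result = analyze_with_budget(
    ["indemnity", "liability", "termination"],
    cost_of=lambda section: 20_000,
    analyze=lambda section: f"risk notes for {section}",
    budget=budget,
)
```

Here the third section does not fit, so the caller receives two findings, a `complete` flag set to `False`, and the list of skipped sections instead of a silent failure.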

The Planning Architecture Decision

The choice between planning architectures isn't binary — production systems typically combine approaches:

- Fast path (reactive): simple tasks → zero-shot or single-step decomposition → low latency

- Slow path (deliberative): complex tasks → tree-of-thought or hierarchical → higher latency but better outcomes

- Escalation boundary: when task complexity exceeds threshold → escalate from fast to slow path

The key is making the decision explicitly and consistently:

complexity_score = f(token_estimate, tool_call_count, reasoning_depth_required)

if complexity_score < threshold:

→ fast path (reactive planning)

else:

→ slow path (deliberative planning with explicit plan construction)

The threshold is domain-specific. A task that's "complex" in one context might be routine in another. Calibrate based on observed failure rates in your specific domain.
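The routing rule above can be made concrete with a small scoring function. The weights and the default threshold below are placeholders chosen for illustration, not recommended values, and `TaskEstimate`, `complexity_score`, and `choose_path` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class TaskEstimate:
    token_estimate: int
    tool_call_count: int
    reasoning_depth_required: int  # assumed scale, e.g. 1 = lookup, 5 = multi-stage analysis


def complexity_score(task: TaskEstimate) -> float:
    """Combine the three signals into one number. The weights are assumptions to calibrate."""
    return (
        task.token_estimate / 1000
        + 2.0 * task.tool_call_count
        + 3.0 * task.reasoning_depth_required
    )


def choose_path(task: TaskEstimate, threshold: float = 20.0) -> str:
    """Route below-threshold tasks to the fast path and everything else to the slow path."""
    return "fast" if complexity_score(task) < threshold else "slow"


simple_task = TaskEstimate(token_estimate=500, tool_call_count=1, reasoning_depth_required=1)
complex_task = TaskEstimate(token_estimate=8000, tool_call_count=4, reasoning_depth_required=4)

simple_route = choose_path(simple_task)    # score 5.5
complex_route = choose_path(complex_task)  # score 28.0
```

Keeping the score and threshold in one place makes the routing decision explicit and easy to log, which is what calibration against observed failure rates needs.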

The Bottom Line

Planning in AI agents isn't a solved problem — it's an evolving discipline with real tradeoffs. Zero-shot reasoning is fast and cheap; deliberative planning is slower but produces better outcomes on complex tasks.

The teams building reliable agentic systems aren't choosing one approach — they're building routing systems that match task complexity to planning depth, with explicit escalation paths when tasks exceed their expected complexity.

Build budget-aware planning into your agents from the start. Monitor how often plans fail due to insufficient information gathering. Instrument the planning process so you can see when the agent is planning well vs. when it's guessing.

Because an agent that plans explicitly is far easier to make reliable than one that's guessing its way through every task.
