Three years ago, every enterprise I spoke with had a "GenAI strategy." In practice, most had a ChatGPT wrapper and a PowerPoint deck. Fast forward to early 2026 and the picture looks dramatically different — not uniformly better, but far more honest. Organizations have burned through their pilot budgets, learned hard lessons about data quality and change management, and are now making genuinely strategic decisions about where AI creates durable value and where it doesn't.

This post is a synthesis of what I've observed across industries, from conversations with CIOs and AI leads at large enterprises to the patterns visible in public disclosures and vendor announcements. My goal is to give you a grounded view — not a press release.

The Adoption Curve Has Finally Separated Winners from Waiters

The early adopters who experimented aggressively in 2023–2024 have now split into two camps. The first group, which I'd call the builders, treated early experiments as genuine learning investments. They accepted failed pilots as tuition, built internal capability, and are now running AI at meaningful scale in production. The second group, the waiters, ran pilots under executive pressure, celebrated modest wins internally, and then quietly deprioritized AI when the ROI math didn't pencil out cleanly.

The difference isn't industry or budget size. I've seen mid-market professional services firms outperform Fortune 500 companies. The differentiating factors are simpler and more cultural: tolerance for ambiguity during the learning phase, executive sponsorship that doesn't require quarterly ROI, and a willingness to let domain experts drive AI initiatives rather than IT alone.

What's Actually Working: The Three Durable Use Cases

Across sectors, three categories of GenAI application have demonstrated consistent, measurable value in enterprise settings.

1. Knowledge work augmentation in bounded domains

Legal document review, financial report summarization, contract analysis, and medical coding share a critical characteristic: the output can be verified by a human expert in a fraction of the time it takes to produce it from scratch. This asymmetry is the key. When a lawyer can review a summary of a 50-page contract in 10 minutes rather than spending 3 hours reading the full document, the productivity gain is real and measurable. Thomson Reuters' CoCounsel, Harvey's legal AI platform, and similar products have proven this model works at scale.

The lesson: GenAI works best when it accelerates expert review, not when it replaces expert judgment entirely.

2. Internal knowledge retrieval and synthesis

The "enterprise search" problem is genuinely hard. Most large organizations have years of institutional knowledge buried in Confluence pages, SharePoint folders, Slack archives, and email threads that are functionally inaccessible. Retrieval-augmented generation (RAG) systems built on internal corpora have shown strong adoption and high employee satisfaction when implemented well. Microsoft 365 Copilot has become a significant reference implementation, not because it's perfect, but because it meets employees where they already work.

The failure mode here is treating RAG as a plug-and-play solution. The organizations getting real value have invested heavily in data governance, chunking strategies, metadata tagging, and continuous evaluation pipelines. RAG is an engineering discipline, not a product feature.
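
To make "engineering discipline" concrete, here is a minimal sketch of two of those pieces: chunking with metadata tagging, and one simple evaluation metric. The function names, the metadata fields (`source`, `owner`, `start_word`), and the window sizes are illustrative assumptions of mine, not a reference to any particular product or framework.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_document(text, source, owner, max_words=200, overlap=40):
    """Split text into overlapping word windows, tagging each with metadata."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + max_words]
        # Hypothetical metadata fields; real systems often add dates, ACLs, etc.
        meta = {"source": source, "owner": owner, "start_word": start}
        chunks.append(Chunk(" ".join(window), meta))
        if start + max_words >= len(words):
            break  # this window already reached the end of the document
    return chunks


def hit_rate_at_k(retrieved, relevant, k=5):
    """Share of queries whose top-k retrieved sources include a known-relevant one."""
    if not retrieved:
        return 0.0
    hits = sum(
        1 for query, sources in retrieved.items()
        if set(sources[:k]) & relevant.get(query, set())
    )
    return hits / len(retrieved)
```

Tracking a metric like `hit_rate_at_k` on a fixed set of labeled queries every time the chunking parameters or the corpus change is one modest way to turn "continuous evaluation" from a slogan into a regression test.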

3. Customer-facing automation with defined scope

Narrowly scoped customer service automation — handling password resets, order status queries, returns processing, tier-1 technical support — is working well when companies are disciplined about scope boundaries. The trap is scope creep: a bot that handles 80% of queries confidently and 20% poorly is worse than a bot that handles 50% confidently and escalates the rest gracefully.

Salesforce's Agentforce platform, ServiceNow's Now Assist, and Zendesk's AI features have all matured significantly. The common thread in successful deployments is a clear escalation path and ongoing human-in-the-loop monitoring — not full automation.
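
A scope boundary with an escalation path can be surprisingly small in code. The sketch below assumes an upstream intent classifier that returns an intent label and a confidence score; the intent names, the `Classification` type, and the 0.8 threshold are hypothetical and do not reflect how any of the platforms above work internally.

```python
from dataclasses import dataclass

# Hypothetical allowlist mirroring the narrow scope described above.
IN_SCOPE_INTENTS = {"password_reset", "order_status", "returns", "tier1_support"}


@dataclass
class Classification:
    intent: str
    confidence: float


def route(classification, threshold=0.8):
    """Let the bot answer only in-scope, high-confidence queries; escalate the rest."""
    if classification.intent not in IN_SCOPE_INTENTS:
        return "escalate_to_human"  # out of scope, regardless of confidence
    if classification.confidence < threshold:
        return "escalate_to_human"  # in scope, but the classifier is unsure
    return "handle_with_bot"
```

The design choice worth noticing is that the default is escalation: the bot has to earn the right to answer, rather than the human having to catch its mistakes.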

The Pitfalls That Keep Catching Organizations

Data quality is the irreducible foundation. I cannot say this loudly enough. Every enterprise GenAI failure I've examined at close range has had poor data quality as either the primary cause or a significant contributing factor. Models don't compensate for garbage data; they confidently synthesize it into convincing-sounding garbage. Organizations that skipped the unglamorous work of data governance are paying for it now.

Hallucination is a product design problem, not just a model problem. Yes, frontier models from Anthropic, OpenAI, and Google have improved significantly on factual grounding. But hallucination risk in production systems is largely a function of how applications are designed — prompt engineering, retrieval quality, confidence thresholding, user interface design that communicates uncertainty. Enterprises that treat hallucination as someone else's problem (the model vendor's) rather than their own engineering responsibility are building on sand.
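
As one illustration of treating hallucination as an application design problem, the sketch below gates the response on retrieval quality and tells the interface how much uncertainty to show. It assumes the retriever returns relevance scores between 0 and 1; the thresholds and the three mode names are my own placeholders and would need tuning against real evaluation data.

```python
def decide_response_mode(passage_scores, answer_threshold=0.75,
                         caveat_threshold=0.5, min_passages=2):
    """Choose how to respond based on how well retrieval supports an answer."""
    strong = [s for s in passage_scores if s >= answer_threshold]
    usable = [s for s in passage_scores if s >= caveat_threshold]
    if len(strong) >= min_passages:
        return "answer"  # well supported: answer and cite sources
    if usable:
        return "answer_with_caveat"  # partial support: answer, flag uncertainty in the UI
    return "abstain"  # no support: say so instead of guessing
```

None of this depends on the model vendor. It is ordinary application logic that the deploying team owns.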

Change management is chronically underinvested. The ratio of technology spend to change management spend in most GenAI programs I've reviewed is roughly 10:1. It should be closer to 3:1. Deploying an AI tool to knowledge workers who haven't been trained on how to use it effectively, who don't trust its outputs, and who aren't given time to adapt their workflows is not a technology problem; it's a leadership failure. The best tools in the world deliver little value if adoption stalls at 15%.
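
To see what the two ratios mean in budget terms, here is a small worked example. The $4.4M program budget is a made-up figure chosen so the arithmetic comes out round; only the 10:1 and 3:1 ratios come from the observation above.

```python
def change_management_share(total_budget, tech_to_change_ratio):
    """Change management budget implied by a technology:change spend ratio."""
    # A ratio of r:1 splits the budget into r + 1 equal parts, one of which
    # goes to change management.
    return total_budget / (tech_to_change_ratio + 1)


budget = 4_400_000  # hypothetical total program budget
at_10_to_1 = change_management_share(budget, 10)  # 400,000
at_3_to_1 = change_management_share(budget, 3)    # 1,100,000
```

On the same total budget, moving from 10:1 to 3:1 nearly triples the money available for training, workflow redesign, and adoption support.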

Build vs. buy decisions are being made without full cost accounting. The "build on foundation models" path has hidden costs that aren't always visible upfront: inference costs at scale, fine-tuning compute, evaluation infrastructure, prompt management, safety testing, and ongoing model maintenance as base models update. Many organizations have discovered that what looked like a $2M build project has a $1.5M annual run cost they didn't budget for.
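
The arithmetic is simple, which is what makes skipping it so costly. A minimal sketch using the figures from the example above, with a three-year planning horizon as my own assumption:

```python
def total_cost_of_ownership(build_cost, annual_run_cost, years):
    """Build cost plus cumulative run cost over a planning horizon."""
    return build_cost + annual_run_cost * years


build = 2_000_000  # one-time build cost from the example above
run = 1_500_000    # unbudgeted annual run cost from the example above

three_year_tco = total_cost_of_ownership(build, run, years=3)  # 6,500,000
```

Over three years the "$2M project" costs $6.5M, and cumulative run cost overtakes the build cost during the second year. A buy option should be compared against that number, not against the build estimate alone.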

Emerging Patterns for 2026

Looking at where enterprise AI is headed this year, a few patterns stand out.

AI agents are moving from demo to deployment. The conversation has shifted from RAG chatbots to agentic systems capable of multi-step reasoning and tool use. Early enterprise agent deployments — orchestrating workflows across CRM, ERP, and communication tools — are showing real promise in organizations with well-defined business processes and clean system integrations. The risk is complexity: agentic systems fail in more interesting and harder-to-debug ways than retrieval systems.
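
Much of the debuggability problem comes down to whether each step leaves a record. The sketch below is a deliberately simplified stand-in: a real agent would have a model choosing each next step, whereas here the plan is a fixed list so the guardrails (a step budget, a tool allowlist, and a trace) are easy to see. All names are hypothetical.

```python
def run_workflow(plan, tools, max_steps=5):
    """Execute (tool_name, argument) steps, stopping at the first failure.

    Returns a trace with one entry per attempted step, so a failed run can be
    inspected after the fact.
    """
    trace = []
    for i, (tool_name, arg) in enumerate(plan):
        if i >= max_steps:
            trace.append({"step": i, "status": "aborted",
                          "reason": "step budget exceeded"})
            break
        tool = tools.get(tool_name)
        if tool is None:
            trace.append({"step": i, "tool": tool_name, "status": "error",
                          "reason": "unknown tool"})
            break
        try:
            result = tool(arg)
        except Exception as exc:  # record the failure instead of losing it
            trace.append({"step": i, "tool": tool_name, "status": "error",
                          "reason": str(exc)})
            break
        trace.append({"step": i, "tool": tool_name, "status": "ok",
                      "result": result})
    return trace
```

Even this toy version makes the point: a retrieval system has one place to fail, while a multi-step workflow has one per step, and without a trace there is no way to tell which one it was.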

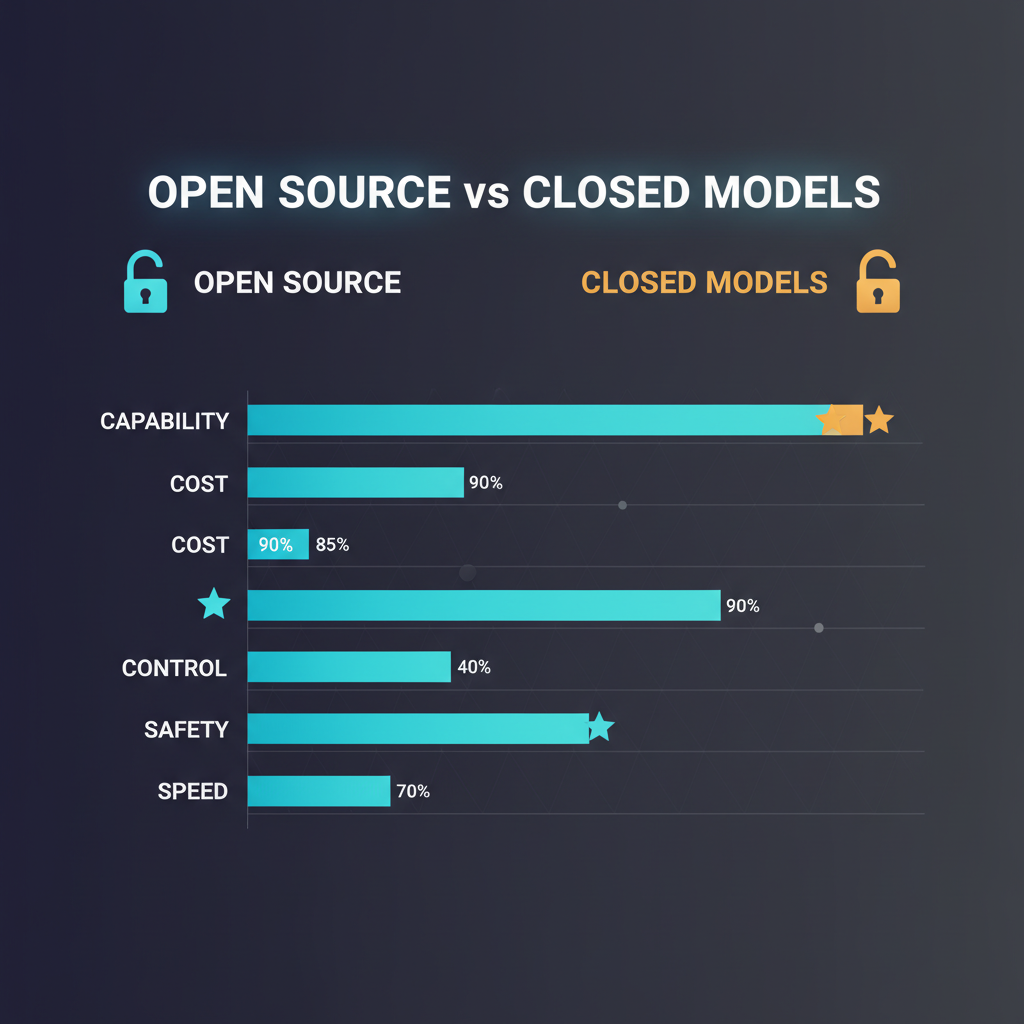

Model selection is becoming a portfolio decision. The era of "we're an OpenAI shop" or "we're all-in on Azure AI" is giving way to more sophisticated model routing strategies. The frontier model families from OpenAI (GPT), Anthropic (Claude), and Google (Gemini) each have distinct strength profiles. Enterprises are increasingly running different models for different task types, using smaller, faster, cheaper models for high-volume classification tasks and frontier models for complex synthesis and generation.
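
In its simplest form, model routing is a lookup table keyed by task type. The sketch below uses placeholder model names (`small-fast-model`, `frontier-model`) rather than real product identifiers, and the task categories are my own assumption about how a team might partition its workload.

```python
# Hypothetical routing table: cheap models for high-volume, well-defined tasks,
# frontier models for open-ended synthesis and generation.
ROUTES = {
    "classification": "small-fast-model",
    "extraction": "small-fast-model",
    "synthesis": "frontier-model",
    "generation": "frontier-model",
}


def pick_model(task_type, default="frontier-model"):
    """Return the model tier for a task type, falling back to the default."""
    return ROUTES.get(task_type, default)
```

Defaulting unknown task types to the more capable tier trades cost for safety. A team under heavy cost pressure might reasonably choose the opposite default and monitor quality instead.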

Governance infrastructure is finally being built. After two years of ad hoc GenAI deployments that bypassed IT and legal review, enterprise governance frameworks are materializing. This is partly regulatory pressure (the EU AI Act's provisions for high-risk AI systems are driving formal risk assessment processes) and partly hard experience. AI governance tooling from vendors like Credo AI, Arthur, and Weights & Biases is seeing enterprise adoption that would have been unimaginable 18 months ago.

The Bottom Line

Enterprise GenAI in 2026 is a discipline, not a product category. The organizations generating real value have treated it as such — investing in capability building, data infrastructure, governance, and the unglamorous work of change management alongside the exciting work of building AI applications.

The optimism I feel about enterprise AI is grounded in the specifics: I can point to law firms processing three times the document volume with the same headcount, healthcare systems reducing clinical documentation burden by 40%, and software teams shipping 30% faster. These numbers are real, and they're getting better as the technology matures and organizations accumulate operational experience.

But the hype merchants who promised that GenAI would transform every business process in 18 months were wrong, and the cynics who declared it all hallucination and hot air were also wrong. The truth — as is usually the case with genuinely transformative technology — is more interesting, more contingent, and more demanding than either camp wants to admit.

The enterprises that treat 2026 as year one of a decade-long capability-building journey will look back on this period the way successful internet-era companies look at 1999: as a foundational moment that rewarded disciplined investment, not speculation.
